Original Link: https://www.anandtech.com/show/1595

NVIDIA nForce Professional Brings Huge I/O to Opteron

by Derek Wilson on January 24, 2005 9:00 AM EST

Introduction

AMD is not going to offer a PCI Express-based chipset for its Opteron line. Instead, it is relying on third-party partners to provide core logic for motherboards.

Stepping back to look at the professional space, it seems quite odd that NVIDIA has taken so long to provide a PCI Express-based Opteron chipset, given that its flagship Quadro FX 4400 graphics card is PCI Express. That is exactly the kind of card that would pair naturally with dual Opterons and an NVIDIA chipset. Until now, anyone who wanted more than a desktop board with PCI Express was forced onto an Intel platform, where NVIDIA had not previously been invited.

Of course, all that will change very soon, now that NVIDIA has launched its nForce Professional line of core logic chipsets. These single-chip core logic solutions for AMD Opteron-based servers and workstations bring the professional-level support that NVIDIA offers with its professional nForce 3 line to a PCI Express-based platform.

A whole host of other features is offered as well, including an implementation of the SATA II spec that supports SATA 3Gb/s devices, support for 10 USB 2.0 ports, and much more. Soon we will also see NVIDIA-based Intel motherboards, but without HyperTransport, it will be hard for NVIDIA to match what the nForce Professional line offers.

The New nForce Professional

The nForce Professional marks the fifth core logic offering from NVIDIA, which dubs its motherboard chipsets MCPs (for Media and Communications Processors). Never has the MCP moniker been more fitting than this time around.

Like the Quadro and GeForce lines, the nForce line is supported by NVIDIA's Unified Driver Architecture (UDA). This means that no matter which NVIDIA hardware you are running, any driver release, whether past, present, or future, will work. Since NVIDIA brings its UDA to both Windows and Linux, broad corporate support will be available for nForce Pro at launch.

NVIDIA also told us that it has been validating AMD's dual-core processors on nForce Professional ahead of launch. NVIDIA wants its customers to know that it is looking to the future, but news of dual-core validation only heightens our anticipation for dual core in the meantime. Of course, with dual core coming down the pipe later this year, the rest of the system can't lag behind.

We've seen several good steps in the connectivity department. At the same time, performance and scalability have become more dependent on core logic as, for example, more functionality moves onto the PCI Express bus, more storage needs SATA connections, and more devices are plugged into USB ports. NVIDIA's unique solution is the combination of single-chip core logic with the ability to drop multiple MCPs (of lesser function) onto a motherboard for expanded I/O capabilities.

The nForce Professional 2200 MCP

One of the big questions that we first wanted answered was whether or not nForce Pro and nForce 4 Ultra/SLI were the same silicon with different parts turned on/off. NVIDIA maintains that they are different silicon, and it is entirely possible that they are. They did, in fact, give us transistor counts for nForce 4 and nForce Pro:

- nForce 4: 22 million transistors

- nForce Pro: 24 million transistors

Economically, it still doesn't make sense to run two different batches of silicon when functionality is so nearly identical, especially when features could simply be disabled after the fact. Pro chips don't sell in the same volume as desktop chips, and desktop chips don't carry the same margins as pro silicon. Combining the two lets a company produce more volume of a single IC (which lowers the cost per part) that feeds both high-volume and high-margin SKUs. That said, as we saw in our recent article on modding nForce 4 Ultra to SLI, running all your chips from the same silicon has its issues. The fact that potential Quadro users have been buying and modding GeForce cards for years speaks to the issue as well. Of course, there's more to a Quadro than professional performance (build quality and support/service come to mind).

But just because something doesn't make economic sense doesn't mean we don't want to see it happen. Something doesn't sit right about charging a thousand dollars more for a card that merely has a few extra features enabled. We would rather see professional parts be worth their price, and part of that equation is running separate silicon for parts with pro features. We're glad to hear NVIDIA say that this is what it is doing here.

For now, let's get on to what we do know about the nForce Pro.

NVIDIA nForce Pro 2200 MCP and 2050 MCP

There will be two MCPs in the nForce Professional lineup: the nForce Pro 2200 and the nForce Pro 2050. The 2200 is a full-featured MCP, and while the 2050 doesn't have all the functionality of the 2200, the two are based on the same silicon. The feature set of the NVIDIA nForce Pro 2200 MCP is nearly identical to that of the nForce 4 SLI:

- One 1GHz 16x16 HyperTransport link

- 20 PCI Express lanes configurable over 4 physical connections

- Gigabit Ethernet with TCP/IP Offload Engine (TOE)

- 4 SATA 3Gb/s

- 2 ATA-133 channels

- RAID and NCQ support (RAID can span SATA and PATA)

- 10 USB 2.0

- PCI 2.3

The 20 PCI Express lanes can be spread over 4 controllers at the motherboard vendor's discretion via NVIDIA's internal crossbar. For instance, a board based on the 2200 could offer 1 x16 slot and 1 x4 slot, or 1 x16 and 3 x1 slots. It cannot host more than 4 physical connections or 20 total lanes. Technically, NVIDIA could support configurations like x6, which don't match the PCI Express spec. This may prove interesting if vendors decide to bend the rules, but server and workstation products will likely stick to the guidelines.
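To make those lane rules concrete, here is a minimal Python sketch that checks whether a proposed slot layout fits on one MCP. The 20-lane and 4-connection limits come from the text above; the set of spec-compliant link widths and the `fits_one_mcp` helper are our own illustrative assumptions, not anything NVIDIA publishes.

```python
# Limits stated for a single nForce Pro MCP.
MAX_LANES = 20
MAX_CONNECTIONS = 4
# Assumption: the link widths defined by the PCI Express spec. NVIDIA's
# crossbar could technically drive off-spec widths such as x6.
SPEC_WIDTHS = {1, 2, 4, 8, 12, 16, 32}


def fits_one_mcp(widths, allow_off_spec=False):
    """Return True if the given slot widths fit within a single MCP."""
    if len(widths) > MAX_CONNECTIONS:
        return False  # not enough physical connections
    if sum(widths) > MAX_LANES:
        return False  # not enough lanes
    if not allow_off_spec and any(w not in SPEC_WIDTHS for w in widths):
        return False  # e.g. x6 is possible in silicon but not in the spec
    return all(w > 0 for w in widths)


print(fits_one_mcp([16, 4]))                      # True:  1 x16 + 1 x4
print(fits_one_mcp([16, 1, 1, 1]))                # True:  1 x16 + 3 x1
print(fits_one_mcp([16, 8]))                      # False: 24 lanes
print(fits_one_mcp([4, 4, 4, 4, 1]))              # False: 5 connections
print(fits_one_mcp([6, 6]))                       # False: x6 is off-spec
print(fits_one_mcp([6, 6], allow_off_spec=True))  # True
```

A vendor bending the rules would correspond to `allow_off_spec=True`; shipping server and workstation boards would presumably stay on the default.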

Maintaining SATA and PATA support is a good thing, especially with 4 SATA 3Gb/s channels, 2 PATA channels (for 4 devices), and support for RAID on both. Even better, NVIDIA's RAID solution can be applied across a mixed SATA/PATA environment. Our initial investigation of NCQ wasn't all that impressive, but hardware is always improving, and professional applications are a good fit for NCQ features.

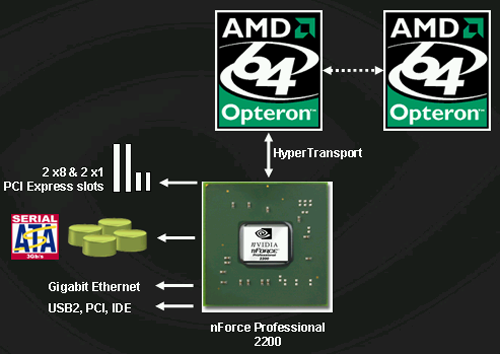

This is the layout of a typical system with the nForce Pro 2200 MCP.

The nForce Pro 2050 MCP, the cut-down version of the 2200 that will be used as an I/O add-on, supports these features:

- One 1GHz 16x16 HyperTransport link

- 20 PCI Express lanes configurable over 4 physical connections

- Gigabit Ethernet with TCP/IP Offload Engine (TOE)

- 4 SATA 3Gb/s

Again, the PCI Express controllers and lanes are configurable. Dropping a 2050 onto a board adds those 20 lanes plus another GbE port and 4 more SATA connections, an obvious advantage, but there is more.

As far as we can tell from this list, the only feature new since nForce 4 is the TCP/IP Offload Engine in the GbE controller. Current nForce 4 SLI chipsets are capable of all the other functionality listed for the NFPro 2200 MCP, although the Professional series may have some server-level error reporting built into its core logic that we are not aware of. After all, those extra two million transistors had to go somewhere.

But that is definitely not all there is to the story. In fact, the best part is yet to come.

The Kicker(s)

On server setups with free HyperTransport links on the processors, up to 3 nForce Pro 2050 MCPs can be added alongside a 2200 MCP.

As each MCP makes an HT connection to a processor, a dual or quad processor setup is required for a server to take advantage of 3 2050 MCPs (in addition to the 2200), but where that much I/O is needed, the processing power would be an obvious match anyway. A single Opteron can connect to one 2200 and 2 2050 MCPs for slightly less than the maximum I/O, as any available HyperTransport link can be used to connect to one of NVIDIA's MCPs. Certainly, the flexibility that AMD and NVIDIA now offer third-party vendors has increased enormously.

So, what does a maximum of 4 MCPs in one system get us? Here's the list:

- 80 PCI Express lanes across 16 physical connections

- 16 SATA 3Gb/s channels

- 4 GbE interfaces

Keep in mind that these features all reside on MCPs connected directly to physical processors over 1GHz HyperTransport links. Other onboard solutions with massive I/O have provided multiple GbE, SATA, and PCIe lanes through a south bridge, or even over the PCI bus. The potential for onboard scalability with zero performance hit is staggering. We wonder whether spreading all of this I/O across multiple HyperTransport links and processors might even improve performance over the traditional AMD I/O topology.
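The maximum totals above, and the smaller dual-MCP workstation configuration, follow from simple per-MCP arithmetic. This Python sketch assumes the per-MCP resources listed earlier (20 lanes on 4 connections, 4 SATA ports, 1 GbE port, with USB only on the 2200) and one 1GHz, 16-bit HyperTransport link per MCP, using the standard double-data-rate bandwidth calculation. `SystemIO` and `total_io` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SystemIO:
    pcie_lanes: int
    pcie_connections: int
    sata_ports: int
    gbe_ports: int
    usb_ports: int
    ht_gb_per_s_each_way: float


def ht_link_bandwidth_gb_s(clock_ghz: float = 1.0, width_bits: int = 16) -> float:
    # HyperTransport transfers data on both clock edges, so a 1GHz, 16-bit
    # link moves 2 GT/s * 2 bytes = 4 GB/s in each direction.
    return clock_ghz * 2 * width_bits / 8


def total_io(num_2050: int) -> SystemIO:
    """I/O for one 2200 MCP plus `num_2050` 2050 MCPs (0 to 3 per the article)."""
    if not 0 <= num_2050 <= 3:
        raise ValueError("nForce Pro supports one 2200 plus up to three 2050s")
    mcps = 1 + num_2050
    return SystemIO(
        pcie_lanes=20 * mcps,       # lanes are per MCP and never shared
        pcie_connections=4 * mcps,  # 4 physical connections per MCP
        sata_ports=4 * mcps,        # 4 SATA 3Gb/s ports per MCP
        gbe_ports=mcps,             # one GbE MAC with TOE per MCP
        usb_ports=10,               # USB comes only from the 2200
        # Each MCP hangs off its own HT link, so link capacity adds up.
        ht_gb_per_s_each_way=ht_link_bandwidth_gb_s() * mcps,
    )


print(total_io(1))  # dual-processor workstation: 2200 + one 2050
print(total_io(3))  # maximum server configuration: 2200 + three 2050s
```

The last field is aggregate link capacity, not measured throughput; whether real workloads spread evenly across the links is exactly what hands-on testing would need to show.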

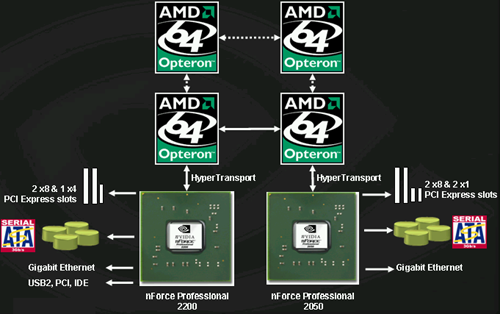

Server configurations can drop an MCP off of each processor in an SMP system.

The fact that NVIDIA's RAID can span all of the drives in the system (all 16 SATA 3Gb/s devices plus the 4 PATA devices on the 2200) is quite interesting as well. Mixing PATA and SATA devices will degrade performance (the system will have to wait longer on the PATA drives), so most users will likely want to keep RAID arrays limited to SATA. We are excited to see what kind of performance we can get from this kind of system.
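Why a mixed array waits on its slowest members can be seen with a simplified RAID 0 model. This Python sketch assumes equal-sized stripe chunks and uses made-up sustained transfer rates; it ignores controller and bus limits and does not describe how NVIDIA's RAID works internally.

```python
def raid0_throughput_mb_s(drive_rates_mb_s):
    """Estimate sequential RAID 0 throughput under a simplified model.

    Assumes equal-sized stripe chunks: each full stripe finishes only when
    its slowest member finishes, so every drive effectively runs at the
    slowest drive's rate. Controller and bus limits are ignored.
    """
    if not drive_rates_mb_s:
        raise ValueError("a RAID 0 array needs at least one drive")
    return len(drive_rates_mb_s) * min(drive_rates_mb_s)


# Hypothetical sustained transfer rates in MB/s, for illustration only.
six_sata = [70.0] * 6
four_sata_two_pata = [70.0] * 4 + [50.0] * 2

print(raid0_throughput_mb_s(six_sata))            # 420.0
print(raid0_throughput_mb_s(four_sata_two_pata))  # 300.0
```

Under this model, the two slower drives pull every SATA drive in the array down to their rate, which is why keeping arrays SATA-only makes sense.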

So, after everyone catches their breath from the initial "wow" factor of the maximum nForce Pro configuration, keep in mind that it will only be used in very high-end servers with InfiniBand connections, 10 GbE, and other huge I/O interfaces. Boards will be configured with multiple x8 and x4 PCIe slots. This will be a huge boon to the high-end server market. We've already shown the scalability of the Opteron at the quad level to be significantly higher than that of Intel's Xeon (single front side bus limitations hurt large I/O), and unless Intel has some hidden card up its sleeve, this new boost will put AMD-based high-end servers well beyond the reach of anything Intel could put out in the near term.

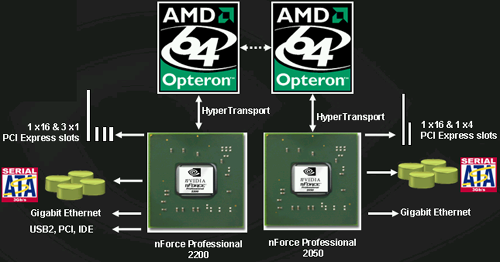

On the workstation side, NVIDIA will be pushing dual processor platforms with one 2200 and one 2050 MCP. This will allow the following:

- 40 PCI Express lanes across 8 physical connections

- 8 SATA 3Gb/s channels

- 2 GbE interfaces

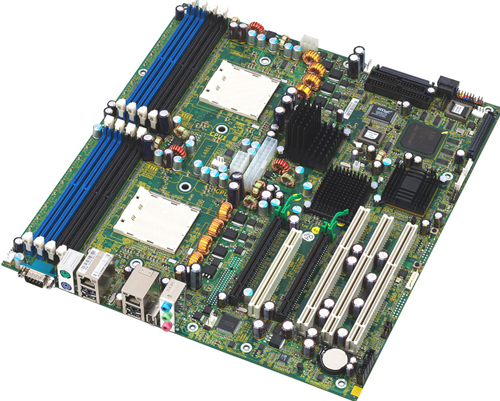

This is the workstation layout for a DP NFPro system.

Again, it may be interesting to see if a vendor will come out with a single processor workstation board requiring an Opteron 2xx to enable a 2200 and 2050. This way, a single processor workstation could be had with all the advantages of the system.

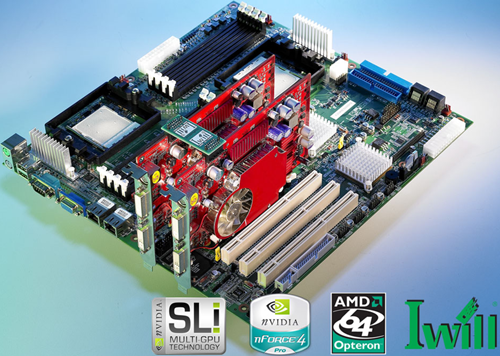

IWill is shipping their DK8ES as we speak and should have availability soon.

While NVIDIA hasn't announced SLI versions of Quadro or support for them, boards based on the nForce Pro will support SLI versions of Quadro when available. And the most attractive feature of the nForce Pro when it comes to SLI is this: 2 full x16 PCI Express slots. Not only do both physical x16 slots have all 16 lanes wired electrically, but NVIDIA has enough headroom left for an x4 slot and some x1 slots as well. In fact, this feature would be a boon even without the promise of SLI. The nForce Pro offers full bandwidth and support for multiple PCI Express cards, meaning that multimonitor support on PCI Express just got a shot in the arm.

One thing that's been missing since the move away from PCI graphics cards is support for multiple cards in a system. Because AGP provides only a single slot, a second card has to be a PCI card running alongside the AGP card, and PCI cards offer much lower performance. Finally, those who need multiple graphics cards will have a solution that supports more than one of the latest and greatest graphics cards. This means two 9MP monitors for some, and more than two monitors for others. And each graphics card has a full x16 connection back to the rest of the system.

Tyan motherboards based on nForce Pro are also shipping soon according to NVIDIA.

The two x16 connections will also become important for workstation and server applications that wish to take advantage of GPUs for scientific, engineering, or other vector-based applications that lend themselves to huge arrays of parallel floating point hardware. We've seen plenty of interest in doing everything from fluid flow analysis to audio editing on graphics cards. It's the large bi-directional bandwidth of PCI Express that enables this, and now that we'll have two PCI Express graphics cards with full bandwidth, workstations have even more potential.

Again, workstations will be able to run RAID across all 8 SATA devices and 4 PATA devices if desired. We expect mid-range and lower-end workstations to carry only a single nForce Pro 2200 MCP; NVIDIA sees only the very high end of the workstation market pairing the 2200 with a 2050.

Perhaps the only downside to the huge number of potential PCI Express lanes is that NVIDIA's MCPs cannot share lanes with one another, combined with the limited number of physical PCI Express connections. In other words, it will not be possible to build a motherboard with 5 x16 PCI Express slots or 10 x8 PCI Express slots, even though there are 80 lanes available, because no single MCP can host two x16 slots or three x8 slots. Of course, having 4 x16 slots is nothing to sneeze at. On the flip side, it's not possible to put 1 x16, 2 x4, and 6 x1 PCIe slots on a dual processor workstation. Even though those slots need only 30 of the 40 available lanes, they require 9 physical connections, and there are only 8 to be had. Again, this is an extreme example and not likely to be a real-world problem, but it's worth noting nonetheless.
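These slot limits amount to a small packing problem: every slot must land on exactly one MCP, and no MCP can exceed 20 lanes or 4 physical connections. The Python sketch below checks a layout with a backtracking search. `can_build` is a hypothetical helper, and the model covers only those two constraints (no spec-width or board-routing rules).

```python
LANES_PER_MCP = 20
CONNECTIONS_PER_MCP = 4


def can_build(slot_widths, num_mcps):
    """Return True if every slot can be placed on some MCP without sharing lanes."""
    lanes_free = [LANES_PER_MCP] * num_mcps
    conns_free = [CONNECTIONS_PER_MCP] * num_mcps
    # Place the widest slots first, which tends to prune the search early.
    slots = sorted(slot_widths, reverse=True)

    def place(i):
        if i == len(slots):
            return True
        width = slots[i]
        tried = set()
        for m in range(num_mcps):
            state = (lanes_free[m], conns_free[m])
            # MCPs with identical remaining capacity are interchangeable,
            # so only try one of them.
            if state in tried or width > lanes_free[m] or conns_free[m] == 0:
                continue
            tried.add(state)
            lanes_free[m] -= width
            conns_free[m] -= 1
            if place(i + 1):
                return True
            lanes_free[m] += width  # undo and try the next MCP
            conns_free[m] += 1
        return False

    return place(0)


print(can_build([16] * 4, num_mcps=4))               # True:  4 x16 on four MCPs
print(can_build([16] * 5, num_mcps=4))               # False: no MCP fits two x16
print(can_build([8] * 10, num_mcps=4))               # False: at most 2 x8 per MCP
print(can_build([16, 4, 4] + [1] * 6, num_mcps=2))   # False: 9 slots, 8 connections
print(can_build([16, 4, 4, 1, 1, 1], num_mcps=2))    # True
```

The last two calls mirror the dual processor example: lanes are plentiful in both cases, but the first layout runs out of physical connections.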

Final Words

We can't wait to get our hands on a board. Now that NVIDIA has announced this kind of I/O scaling over HyperTransport, we wonder why we haven't all been pushing it all along. In hindsight, it seems rather obvious that using the processors' extra HT links would be advantageous and relatively simple in an Opteron environment, especially when all the core logic fits on a single chip. Kudos to NVIDIA for bringing the 2200 and 2050 combination to market.

Although much of the nForce Professional series is very similar to the nForce 4, NVIDIA has likely made good use of those two million extra transistors. We can't be sure exactly what went in there; it's likely the TCP/IP Offload Engine, and possibly some server-level error reporting routines. Apart from that, the nForce Pro's feature set is the same as the nForce 4's.

The room for creativity that the nForce Pro 2050 MCP gives vendors is enormous. We've already seen what everyone has tried with the NF4 Ultra and SLI chipsets, and now that we have something built for scalability and multiple configurations, we are sure to see some ingenious designs spring forth.

NVIDIA mentioned that many of their partners wanted a launch in December. NVIDIA also told us that IWill and Tyan are already shipping boards, but we aren't sure how widespread availability is yet. We will have to speak with IWill and Tyan about these matters. As far as we are concerned, the faster that NVIDIA can get nForce Professional out the door, the better.

The last thing to look at is how the new NVIDIA solution compares to its competition from Intel. Well, here's a handy comparison chart for those who wish to know what they can get in terms of I/O from NVIDIA and from Intel on their server and workstation boards.

Server/Workstation Platform Comparison

| | NVIDIA nForce Pro (single MCP) | NVIDIA nForce Pro (quad MCP) | Intel E7525/E7520 |
|---|---|---|---|
| PCI Express lanes | 20 | 80 | 24 |
| SATA | 4 SATA II | 16 SATA II | 2 SATA 1.0 |
| Gigabit Ethernet MAC | 1 | 4 | 1 |
| USB 2.0 | 10 | 10 | 4 |
| PCI-X support | No | No | Yes |
| Memory type | DDR | DDR | DDR2 |

Opteron boards with NFPro can have PCI-X support when combined with the proper AMD-8000 series chips, but NVIDIA didn't build PCI-X support into its MCPs. It's clear how far beyond Lindenhurst and Tumwater (E7520 and E7525) the nForce Pro will scale in dual and quad Opteron solutions. Even in a single MCP configuration, NVIDIA has a lot of flexibility with its configurable PCI Express controller. Intel's solutions are locked into either 1 x16 + 1 x8 (E7525) or 3 x8 (E7520), although each x8 connection to the MCH can instead drive 2 physical devices (2 x4). Also, if the motherboard vendor includes Intel's additional PCI hub for more PCI-X slots, either 4 or 8 of those PCI Express lanes go away.

Unfortunately, there isn't a whole lot more that we can say until we get our hands on it for testing. Professional series products can take longer to get into our lab, so it may be some time before we can get a review out, but we will try our best to get product as soon as possible. Of course, boards will cost a lot, and the more exciting the board, the less affordable it will be. But that won't stop us from reviewing them. On paper, this is definitely one of the most intriguing advancements that we've seen in AMD-centered core logic, and could be one of the best things ever to happen to high end AMD servers.

On the workstation side, we are very interested in testing a full 2 x16 PCI Express SLI setup, as well as the multiple display possibilities of such a system. It's an exciting time for the AMD workstation market, and we're really looking forward to getting our hands on systems.