Micron M600 (128GB, 256GB & 1TB) SSD Review

by Kristian Vättö on September 29, 2014 8:00 AM EST

Random Read/Write Speed

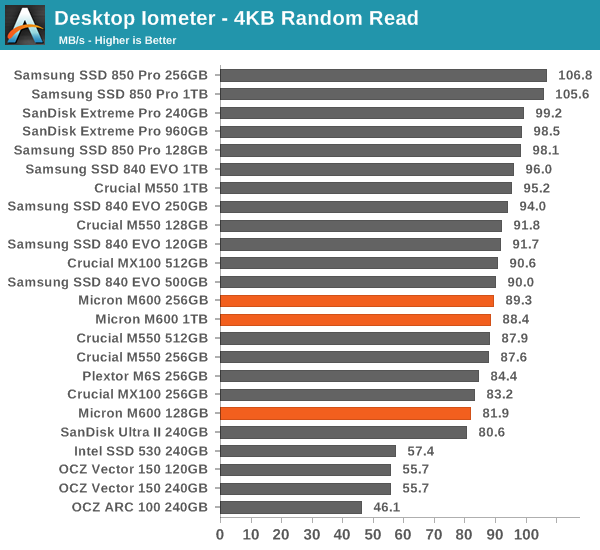

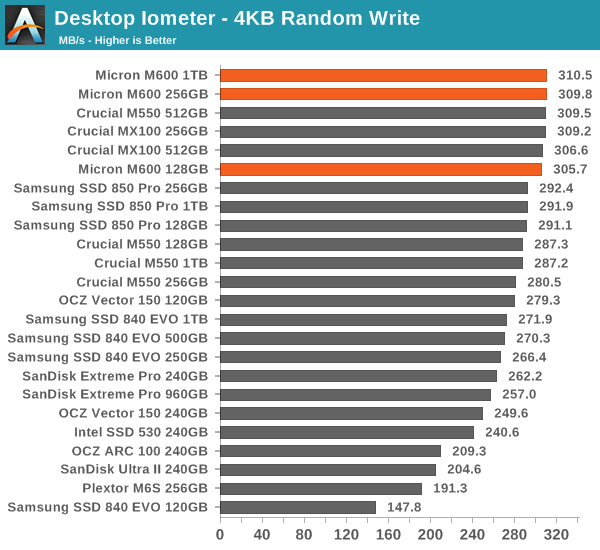

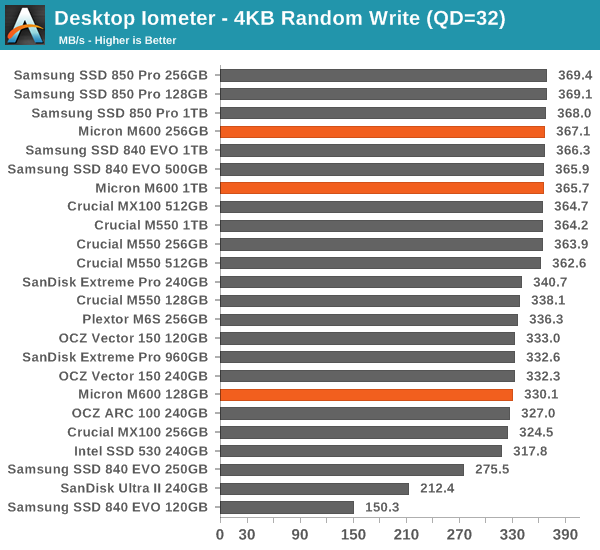

The four corners of SSD performance are as follows: random read, random write, sequential read and sequential write speed. Random accesses are generally small in size, while sequential accesses tend to be larger, which is why we run the same four Iometer tests in all of our reviews.

Our first test writes 4KB in a completely random pattern over an 8GB space of the drive to simulate the sort of random access that you'd see on an OS drive (even this is more stressful than a normal desktop user would see). We perform three concurrent IOs and run the test for 3 minutes. The results reported are in average MB/s over the entire time.
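As a rough illustration of the access pattern described above, the sketch below picks 4KB-aligned offsets at random within an 8GB span. It is not how Iometer is implemented; the function name, the fixed seed and the use of binary units for "4KB" and "8GB" are assumptions made for the example.

```python
import random

IO_SIZE = 4 * 1024      # 4KB transfers, as in the test description
SPAN = 8 * 1024**3      # 8GB region of the drive (binary units assumed)

def random_offsets(count, io_size=IO_SIZE, span=SPAN, seed=0):
    """Return `count` byte offsets, each aligned to io_size and inside span."""
    rng = random.Random(seed)    # fixed seed so repeated runs match (assumption)
    blocks = span // io_size     # number of io_size-sized slots in the span
    return [rng.randrange(blocks) * io_size for _ in range(count)]

# A handful of offsets a random-write test could target.
offsets = random_offsets(5)
```

Because every offset is a multiple of the transfer size, no write straddles two 4KB slots, which keeps the pattern small-block and fully random.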

Random performance remains more or less unchanged from the MX100 and M550. Micron has always done well in random performance as long as the IOs are bursty in nature, but Micron's performance consistency under sustained workloads has never been top notch.

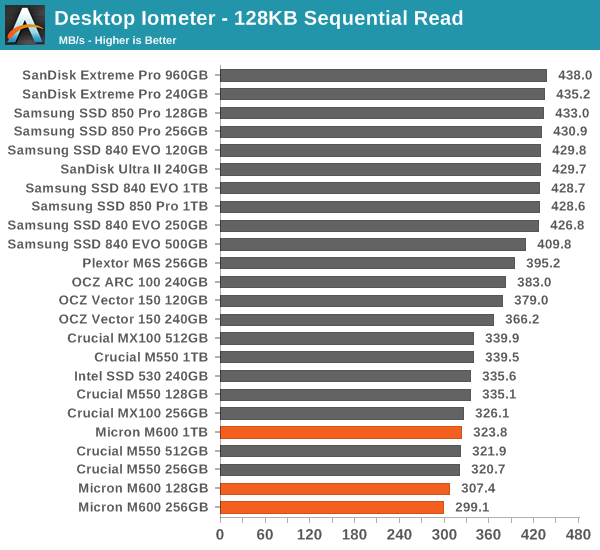

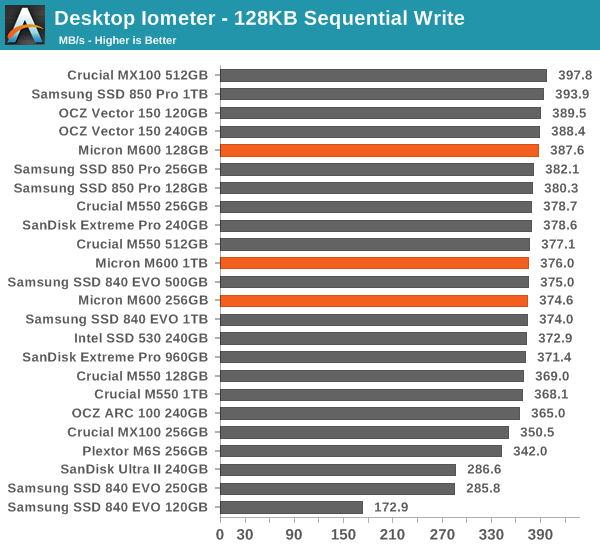

Sequential Read/Write Speed

To measure sequential performance we run a 1 minute long 128KB sequential test over the entire span of the drive at a queue depth of 1. The results reported are in average MB/s over the entire test length.
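For readers who want to see how an average MB/s figure follows from a transfer count, here is a minimal sketch. The helper name is hypothetical, the example numbers are invented rather than measured, and decimal megabytes (1 MB = 10^6 bytes) are an assumption, since the review does not say which convention it uses.

```python
def average_mb_per_s(completed_ios, io_size_bytes, duration_s):
    """Average throughput in MB/s (decimal megabytes assumed: 1 MB = 10**6 bytes)."""
    if duration_s <= 0:
        raise ValueError("duration_s must be positive")
    return completed_ios * io_size_bytes / duration_s / 1e6

# Hypothetical example, not a measured result:
# 200,000 transfers of 128KB completed during a 60 second run.
example = average_mb_per_s(200_000, 128 * 1024, 60)
```

The same helper applies to the 4KB random tests; only the transfer size and run length change.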

Sequential write performance sees a minor increase at smaller capacities thanks to Dynamic Write Acceleration, but aside from that there is nothing surprising in sequential performance.

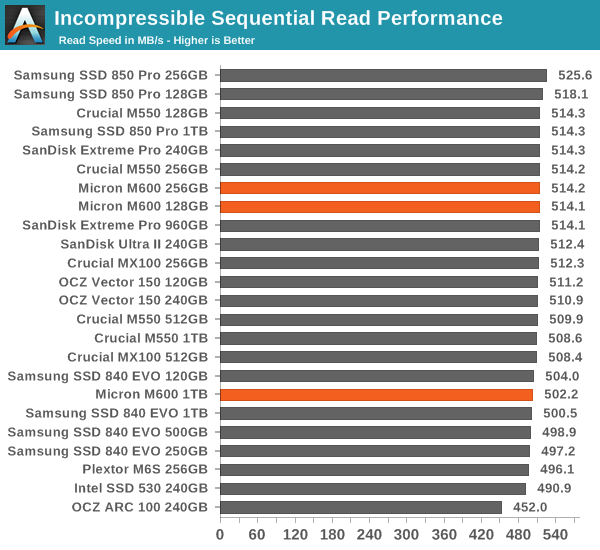

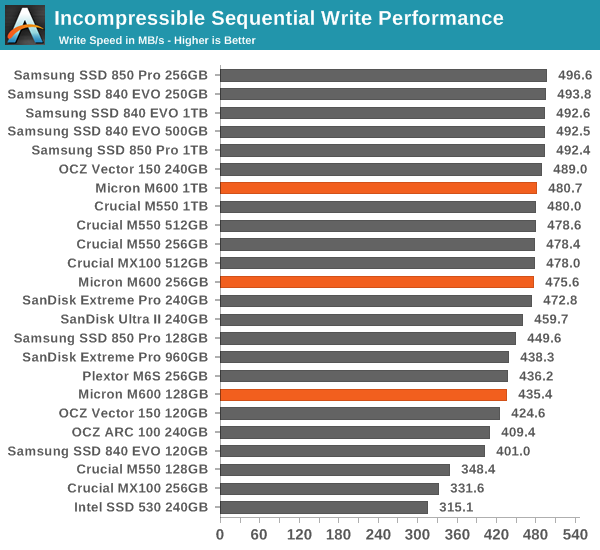

AS-SSD Incompressible Sequential Read/Write Performance

The AS-SSD sequential benchmark uses incompressible data for all of its transfers. The result is a pretty big reduction in sequential write speed on SandForce based controllers, but most other controllers are unaffected.
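To make "incompressible" concrete, the sketch below compares how well a buffer of zeros and a buffer of random bytes compress. Python's `zlib` is used purely as a stand-in; it is not the compression scheme used by SandForce controllers, and this is not how AS-SSD generates its data.

```python
import os
import zlib

SIZE = 1024 * 1024  # 1 MiB sample buffers

def compression_ratio(data):
    """Compressed size divided by original size (lower means more compressible)."""
    return len(zlib.compress(data)) / len(data)

compressible = bytes(SIZE)           # all zeros: highly compressible
incompressible = os.urandom(SIZE)    # random bytes: effectively incompressible

ratio_zeros = compression_ratio(compressible)
ratio_random = compression_ratio(incompressible)
```

A controller that relies on compressing data before writing gains little when the ratio is close to 1, which is consistent with the drop in sequential write speed described above.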

56 Comments


Kristian Vättö - Tuesday, September 30, 2014 - link

We used to do that a couple of years ago but then we reached a point where SSDs became practically indistinguishable. The truth is that for light workloads what matters is that you have an SSD, not what model the SSD actually is. That is why we are recommending the MX100 for the majority of users as it provides the best value.

I think our Light suite already does a good job at characterizing performance under typical consumer workloads. The differences between drives are small, which reflects the minimal difference one would notice in real world with light usage. It's not overly promoting high-end drives like purely synthetic tests do.

Then again, that applies to all components. It's not like we test CPUs and GPUs under typical usage -- it's just the heavy use cases. I mean, we could test the application launch speed in our CPU reviews, but it's common knowledge that CPUs today are all so fast that the difference is negligible. Or we could test GPUs for how smoothly they can run Windows Aero, but again it's widely known that any modern GPU can handle that just fine.

The issue with testing heavy usage scenarios in real world is the number of variables I mentioned earlier. There tends to be a lot of multitasking involved, so creating a reliable test is extremely hard. One huge problem is the variability of user input speed (i.e. how quickly you click things etc -- this varies from round to round during testing). That can be fixed with excellent scripting skills, but unfortunately I have a total lack of those.

FYI, I spent a lot of time playing around with real world tests about a year ago, but I was never able to create something that met my criteria. Either the test was too basic (like installing an app) that showed no difference between drives, or the results wouldn't be consistent when adding more variables. I'm not trying to avoid real world tests, not at all, it's just that I haven't been able to create a suite that would be relevant and accurate at the same time.

Also, once we get some NVMe drives in for review, I plan to revisit my real world testing since that presents a chance for greater difference between drives. Right now AHCI and SATA 6Gbps limit the performance because they account for the largest share in latency, which is why you don't really see differences between drives under light workloads as the AHCI and SATA latency absorb any latency advantage that a particular drive provides.

AnnonymousCoward - Tuesday, September 30, 2014 - link

Thanks for explaining The State of SSDs.

I suspect a lot of people don't realize there's negligible performance difference across SSDs. And I think lots of people put SSDs in RAID0! Reviews I've seen show zero real-world benefit.

This isn't a criticism, but it's practically misleading for a review to only include graphs with a wide range of performance. What a real-world test does is get us back to reality. I think ideally a review should start with real-world, and all the other stuff almost belongs in an appendix.

Users should prioritize SSDs with:

1. Good enough (excellent) performance.

2. High reliability and data protection.

3. Low cost.

If #1 is too easy, then #2 and #3 should get more attention. I generally recommend Intel SSDs because I suspect they have the best reliability standards, but I really don't know, and most people probably also don't. OCZ wouldn't have shipped as many as they did if people were aware of their reliability.

leexgx - Saturday, November 1, 2014 - link

Nowadays you can't buy a bad SSD (unless it's Phison based; they normally make cheap USB flash pen drives). Even JMicron based SSDs are OK now.

It's only compatibility problems that make an SSD bad with some setups.

The JMicron JMF602 was a very, very, very bad SSD controller when they made their first two (did I say that too many times?) http://www.anandtech.com/show/2614/8 (1 second write delay)

Impulses - Monday, September 29, 2014 - link

Probably because top tier SSD reached a point a while ago where the differences in performing basic tasks like that is basically milliseconds, which would tell the reader even less.

For large transfers the sequential tests are wholly representative of the task.

I think Anand used to have a test in the early days of SSD reviews where he'd time opening five apps right after boot, but it'd basically be a dead heat with any decent drive these days.

Gigaplex - Monday, September 29, 2014 - link

It would tell the reader that any of the drives being tested would fit the bill. Currently, readers might see that drive A is 20% faster than drive B and think that will give 20% better real world performance.

Both types of tests are useful, doing strictly real-world tests would miss information too.

AnnonymousCoward - Tuesday, September 30, 2014 - link

> is basically milliseconds, which would tell the reader even less.

Wrong; that tells the reader MORE! If all modern video cards produced within 1fps of each other, would you rather see that, or solely relative performance graphs that show an apparent difference?

Wolfpup - Monday, September 29, 2014 - link

Darn, that's a shame these don't have full data loss protection. I assumed they did too! Still, Micron/Crucial and Intel are my top choices for drives :)

Wormstyle - Tuesday, September 30, 2014 - link

Thanks for posting the information here. I think you are a bit soft on them with the power failure protection marketing, but you did a good job explaining what they were doing and hopefully they will now accurately reflect the capability of the product in their marketing collateral. A lot of people have bought these products with the wrong expectations on power failure, although for most applications they are still very good drives. What is the source for the market data you posted in the article?

Kristian Vättö - Tuesday, September 30, 2014 - link

It's straight from the M500's product page.

http://www.micron.com/products/solid-state-storage...

Wormstyle - Tuesday, September 30, 2014 - link

The size of the SSD market by OEM, channel, industrial and OEM breakdown of notebook, tablet, and desktop? I'm not seeing it at that link.