Free Cooling: the Server Side of the Story

by Johan De Gelas on February 11, 2014 7:00 AM EST

Posted in: Cloud Computing, IT Computing, Intel, Xeon, Ivy Bridge EP, Supermicro

The Supermicro "PUE-Optimized" Server

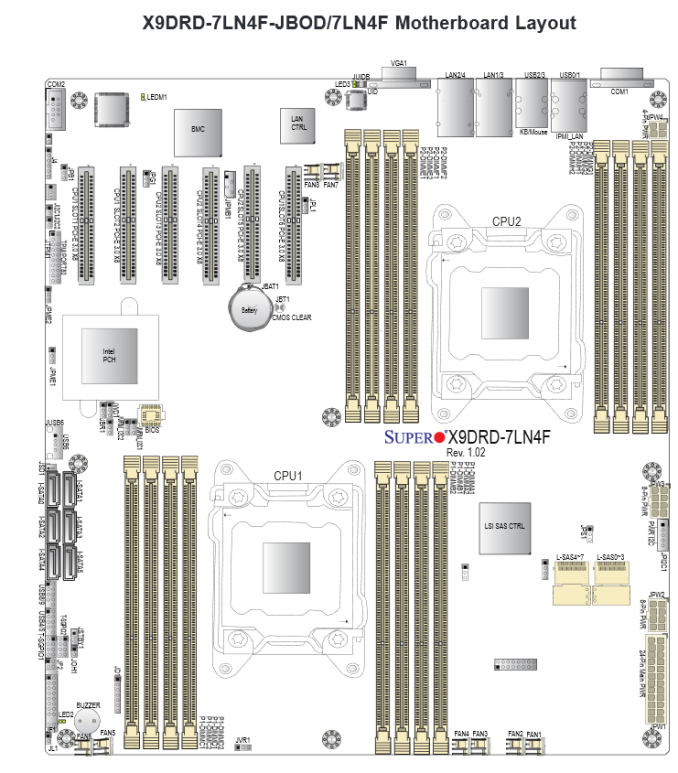

We tested the Supermicro SuperServer 6027R-73DARF. We chose this particular server for two main reasons: first, it is a 2U rackmount server (larger fans, better airflow), and second, it was the only PUE-optimized server with 16 DIMM slots. Many applications are limited more by memory capacity than by CPU power, so a 16-DIMM server is more desirable to most of our readers than an 8-DIMM server.

On the outside, it looks like most other Supermicro servers, except that the upper third of the front is left open for better airflow. This is in contrast with some Supermicro servers where the upper third is filled with disk bays.

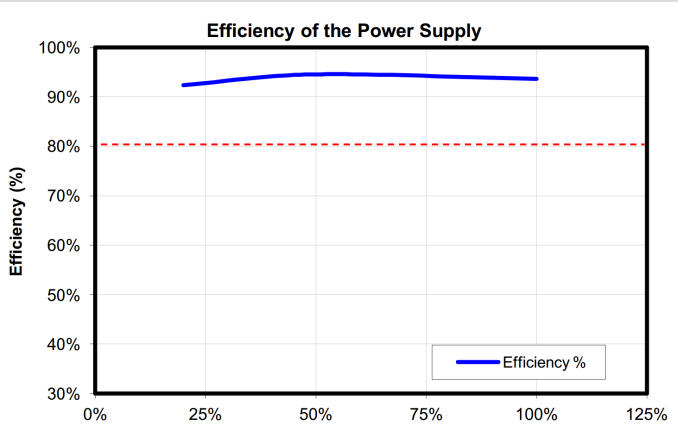

This SuperServer has a few features to ensure that it can cope with higher temperatures without a huge increase in energy consumption. First of all, it has an 80 Plus Platinum power supply. A Platinum PSU is not exceptional anymore: almost every server vendor offers at least the slightly less efficient 80 Plus Gold PSUs. Platinum PSUs are the standard for new servers, and Dell and Supermicro have even started offering 80 Plus Titanium PSUs (230V).

Nevertheless, these Platinum PSUs are pretty impressive: they offer better than 92% efficiency from 20% to 100% load.
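To put that efficiency figure in perspective, here is a minimal sketch of the arithmetic. The 300 W DC load is a hypothetical value chosen only for illustration, and the function names are ours. Because 92% is a lower bound on efficiency across the 20% to 100% load range, the computed loss is an upper bound.

```python
def psu_input_power_w(dc_load_w: float, efficiency: float) -> float:
    """AC power drawn from the wall for a given DC load and PSU efficiency."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in the range (0, 1]")
    return dc_load_w / efficiency


def psu_loss_w(dc_load_w: float, efficiency: float) -> float:
    """Power dissipated as heat inside the PSU itself."""
    return psu_input_power_w(dc_load_w, efficiency) - dc_load_w


if __name__ == "__main__":
    dc_load_w = 300.0   # hypothetical server DC load, not a measured value
    efficiency = 0.92   # lower bound quoted for the Platinum PSU

    wall_w = psu_input_power_w(dc_load_w, efficiency)
    loss_w = psu_loss_w(dc_load_w, efficiency)
    print(f"Wall power: {wall_w:.2f} W, PSU loss: {loss_w:.2f} W")
    # prints "Wall power: 326.09 W, PSU loss: 26.09 W"
```

Every watt lost in the PSU is also a watt of heat that the fans and the data center cooling have to remove, which is why PSU efficiency matters for a server meant to run at higher inlet temperatures.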

Secondly, it uses a spread-core design. Here, the CPU heatsinks do not obstruct each other: the airflow passes over them in parallel.

Three heavy-duty fans blow over a relatively simple motherboard design. Notice that even the heatsink on the 8W Intel PCH (C602J chipset) sits in parallel with the CPU heatsinks, so it too gets unhindered airflow. Last but not least, these servers come with specially designed air shrouds for maximum cooling.

There is some room for improvement though. It would be great to have a model with 2.5-inch drive bays. Supermicro offers a 2.5-inch HDD conversion tray (MCP-220-00043-0N), but a native 2.5-inch drive bay model would give even better airflow and serviceability.

We would also like an easier way to replace the CPUs. The screws of the heatsinks tend to wear out quickly. But that is mostly a problem for a lab that tests servers, and less of a problem for a real enterprise.

48 Comments


ShieTar - Tuesday, February 11, 2014 - link

I think you oversimplify if you just judge the efficiency of the cooling method by the heat capacity of the medium. The medium is not a heat-battery that only absorbs the heat, it is also moved in order to transport energy. And moving air is much easier and much more efficient than moving water.

So I think in the case of Finland the driving fact is that they will get air temperatures of up to 30°C in some summers, but the water temperature at the bottom regions of the Gulf of Finland stays below 4°C throughout the year. If you consider a data center near the river Nile, which is usually just 5°C below air temperature, and frequently warmer than the air at night, then your efficiency equation would look entirely different.

Naturally, building the center in Finland instead of Egypt in the first place is a pretty good decision considering cooling efficiency.

icrf - Tuesday, February 11, 2014 - link

Isn't moving water significantly more efficient than moving air because a significant amount of energy when trying to move air goes to compressing it rather than moving it, where water is largely incompressible?

ShieTar - Thursday, February 13, 2014 - link

For the initial acceleration this might be an effect, though energy used for compression isn't necessarily lost, as the pressure difference will decay via motion of the air again (but maybe not in the preferred direction). But if you look into the entire equation for a cooling system, the hard part is not getting the medium accelerated, but keeping it moving against the resistance of the coolers, tubes and radiators. And water has much stronger interactions with any reasonably used material (metal, mostly) than air. And you usually run water through smaller and longer tubes than air, which can quickly be moved from the electronics case to a large air vent. Also the viscosity of water itself is significantly higher than that of air, specifically if we are talking about cool water not too far above the freezing point of water, i.e. 5°C to 10°C.

easp - Saturday, February 15, 2014 - link

Below Mach 0.3, air flows can be treated as incompressible. I doubt bulk movement of air in datacenters hits 200+ mph.

juhatus - Tuesday, February 11, 2014 - link

Sir, I can assure you the Nordic Sea hits ~20°C in the summers. But still that temperature is good enough for cooling. In Helsinki they are now collecting the excess heat from data centers to warm up the houses in the city area. So that too should be considered. I think many countries could use some "free" heating.

Penti - Tuesday, February 11, 2014 - link

Surface temp does, but below the surface it's cooler. Even in small lakes and rivers, otherwise our drinking water would be unusable and 25°C out of the tap. You would get legionella and stuff then. In Sweden the water is not allowed to be, or is not considered usable, over 20 degrees at the inlet or out of the tap for that matter. Lakes, rivers and oceans could keep 2-15°C at the inlet year-round here in Scandinavia if the inlet is appropriately placed. Certainly good enough if you allow temps over the old 20-22°C.

Guspaz - Tuesday, February 11, 2014 - link

OVH's datacentre here in Montreal cools using a centralized watercooling system and relies on convection to remove the heat from the server stacks, IIRC. They claim a PUE of 1.09.

iwod - Tuesday, February 11, 2014 - link

Exactly what I was about to post. I wonder why Facebook, Microsoft and even Google didn't manage to outpace them. PUE 1.09 is still, as far as I know, an industry record. Correct me if I am wrong. I wonder if they could get it down to 1.05.

Flunk - Tuesday, February 11, 2014 - link

This entire idea seems so obvious it's surprising they haven't been doing this the whole time. Oh well, it's hard to beat an idea that cheap and efficient.

drexnx - Tuesday, February 11, 2014 - link

there's a lot of work being done on the UPS side of the power consumption coin too - FB uses both Delta DC UPSes, which power their equipment directly at DC from the batteries instead of the wasteful inversion to 480 VAC three-phase followed by rectification again at the server PSU level, and Eaton equipment with ESS, which bypasses the UPS until there's an actual power loss (for about a 10% efficiency pickup when running on mains power)