Free Cooling: the Server Side of the Story

by Johan De Gelas on February 11, 2014 7:00 AM EST

Posted in: Cloud Computing, IT Computing, Intel, Xeon, Ivy Bridge EP, Supermicro

The Supermicro "PUE-Optimized" Server

We tested the Supermicro Superserver 6027R-73DARF. We chose this particular server for two main reasons: first, it is a 2U rackmount server (larger fans, better airflow), and second, it was the only PUE-optimized server with 16 DIMM slots. Many applications are limited more by memory capacity than by CPU power, so a 16 DIMM server is more desirable to most of our readers than an 8 DIMM server.

On the outside, it looks like most other Supermicro servers, with the exception that the upper third of the front is left open for better airflow. This is in contrast with some Supermicro servers, where the upper third is filled with disk bays.

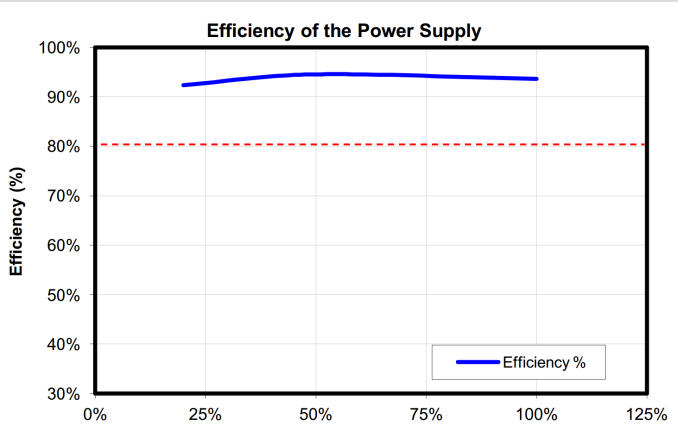

This Superserver has a few features to ensure that it can cope with higher temperatures without a huge increase in energy consumption. First of all, it has an 80 Plus Platinum power supply. A Platinum PSU is not exceptional anymore: almost every server vendor offers at least the slightly less efficient 80 Plus Gold PSUs. Platinum PSUs are the standard for new servers, and Dell and Supermicro have even started offering 80 Plus Titanium PSUs (230V).

Nevertheless, these Platinum PSUs are pretty impressive: they offer better than 92% efficiency from 20% to 100% load.
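To put the 92% figure in perspective, here is a minimal sketch of the arithmetic. The 300 W DC load and the 88% comparison point are hypothetical values chosen for illustration, not measurements from our testing; only the 92% floor comes from the text above.

```python
def wall_power(dc_load_w: float, efficiency: float) -> float:
    """AC input power (W) needed to deliver dc_load_w at a given PSU efficiency."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in the range (0, 1]")
    return dc_load_w / efficiency


def conversion_loss(dc_load_w: float, efficiency: float) -> float:
    """Power (W) lost as heat inside the PSU."""
    return wall_power(dc_load_w, efficiency) - dc_load_w


if __name__ == "__main__":
    dc_load_w = 300.0  # hypothetical DC load, not a measured value
    # 0.92 is the efficiency floor quoted above; 0.88 is a hypothetical comparison.
    for efficiency in (0.92, 0.88):
        print(
            f"{efficiency:.0%} efficient: "
            f"{wall_power(dc_load_w, efficiency):.1f} W at the wall, "
            f"{conversion_loss(dc_load_w, efficiency):.1f} W lost as heat"
        )
```

Every watt lost in the PSU is also a watt of heat that the fans have to move out of the chassis, which is why PSU efficiency matters more as inlet temperatures rise.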

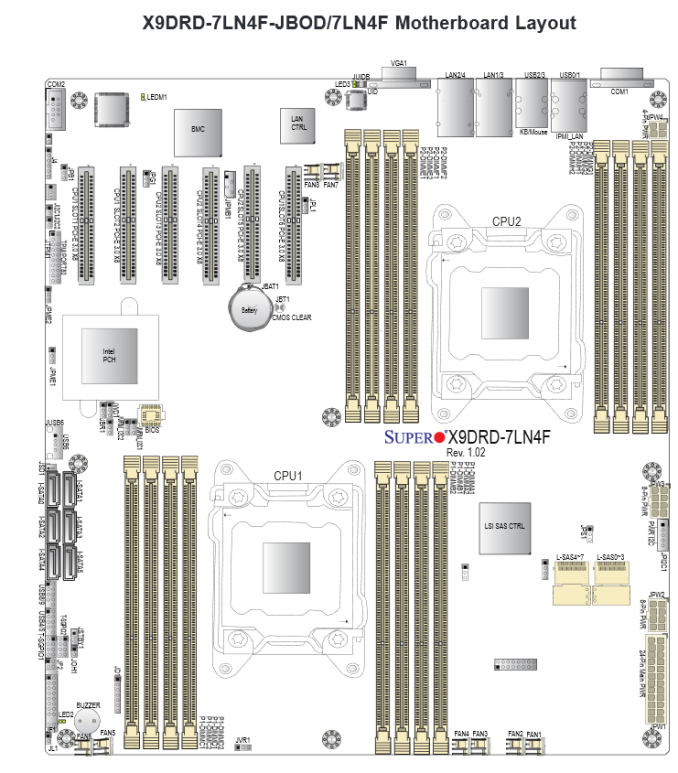

Secondly, it uses a spreadcore design. Here, the CPU heatsinks do not obstruct each other: the airflow goes over them in parallel.

Three heavy-duty fans blow over a relatively simple motherboard design. Notice that even the heatsink on the 8W Intel PCH (602J chipset) sits in parallel with the CPU heatsinks, so the PCH heatsink gets unhindered airflow as well. Last but not least, these servers come with specially designed air shrouds for maximum cooling.

There is some room for improvement though. It would be great to have a model with 2.5-inch drive bays. Supermicro offers a 2.5-inch HDD conversion tray (MCP-220-00043-0N), but a native 2.5-inch drive bay model would give even better airflow and serviceability.

We would also like an easier way to replace the CPUs. The heatsink screws tend to wear out quickly. That is mostly a problem for a lab that tests servers, though, and less of a problem for a real enterprise.

48 Comments

lwatcdr - Thursday, February 20, 2014

Here in South Florida it would probably be cheaper. The water table is very high and many wells are only 35 feet deep.

rrinker - Tuesday, February 11, 2014

It's been done already. I know I've seen it in an article on new data centers in one industry publication or another.

A museum near me recently drilled dozens of wells under their parking lot for geothermal cooling of the building. Being large with lots of glass area, it got unbearably hot during the summer months. Now, while it isn't as cool as you might set your home air conditioning, it is quite comfortable even on the hottest days, and the only energy is for the water pumps and fans. Plus it's better for the exhibits, reducing the yearly variation in temperature and humidity. Definitely a feasible approach for a data center.

noeldillabough - Tuesday, February 11, 2014

I was actually talking about this today; the big cost for our data centers is air conditioning. What if we had a building up north (arctic) where the ground is always frozen, even in summer? Geothermal cooling for free, by pumping water through your "radiator".

Not sure about the environmental impact this would have, but the emptiness that is the arctic might like a few data centers!

superflex - Wednesday, February 12, 2014

The enviroweenies would scream about you defrosting the permafrost.

Some slug or bacteria might become endangered.

evonitzer - Sunday, February 23, 2014

Unfortunately, the cold areas are also devoid of people and therefore internet connections. You'll have to figure in the cost of running fiber to your remote location, as well as how your distance might affect latency. If you go into a permafrost area, there are additional complications, as constructing on permafrost is a challenge. A datacenter high in the mountains but close to population centers would seem a good compromise.

fluxtatic - Wednesday, February 12, 2014

I proposed this at work, but management stopped listening somewhere between me saying we'd need to put a trench through the warehouse floor to outside the building, and that I'd need a large, deep hole dug right next to the building, where I would bury several hundred feet of copper pipe.

I also considered using the river that's 20' from the office, but I'm not sure the city would like me pumping warm water into their river.

Varno - Tuesday, February 11, 2014

You seem to be reporting on the junction temperature, which is reported by most measurement programs, rather than the cast temperature that is impossible to measure directly without interfering with the results. How have you accounted for this in your testing?

JohanAnandtech - Tuesday, February 11, 2014

Do you mean case temperature? We did measure the outlet temperature, but it was significantly lower than the junction temperature. For the Xeon 2697 v2, it was 39-40 °C at 35 °C inlet, 45 °C at 40 °C inlet.

Kristian Vättö - Tuesday, February 11, 2014

Google's usage of raw seawater for cooling of their data center in Hamina, Finland is pretty cool IMO. Given that the specific heat capacity of water is much higher than air's, it is more efficient for cooling, especially in our climate where seawater is always relatively cold.

JohanAnandtech - Tuesday, February 11, 2014

I admit, I somewhat ignored the Scandinavian datacenters as "free cooling" is a bit obvious there. :-)

I thought some readers would be surprised to find out that even in sunny California free cooling is available most of the year.