AMD Kaveri Review: A8-7600 and A10-7850K Tested

by Ian Cutress & Rahul Garg on January 14, 2014 8:00 AM EST

Kaveri and Counting Cores

With the move towards highly integrated SoCs we've seen a variety of approaches to core counts. Apple, Intel and Qualcomm still count CPU cores when advertising an SoC. For Apple and Qualcomm that's partially because neither company is particularly fond of disclosing the configuration of their GPUs. More recently, NVIDIA took the somewhat insane stance of counting GPU CUDA cores on its Tegra K1 SoC. Motorola on the other hand opted for the bizarre choice of aggregating CPU, GPU and off-die companion processors with the X8 platform in its Moto X smartphone. Eventually we will have to find a way to characterize these highly integrated SoCs, particularly when the majority of applications actually depend on/leverage both CPU and GPU cores.

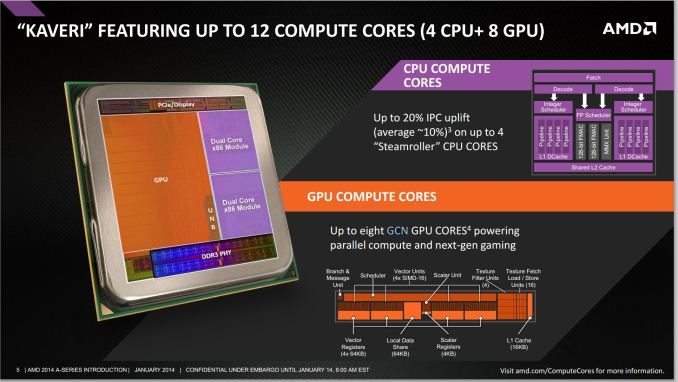

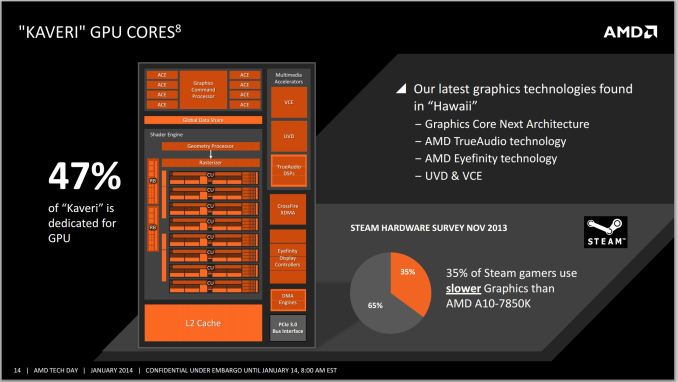

AMD finds itself in a unique position with Kaveri where it has a truly unified CPU/GPU architecture and needs to establish a new nomenclature for use in the future. With 47% of the Kaveri die dedicated to GPU use, and an architecture that treats both CPU and GPU as equals, I can understand AMD's desire to talk about the number of total cores on the APU.

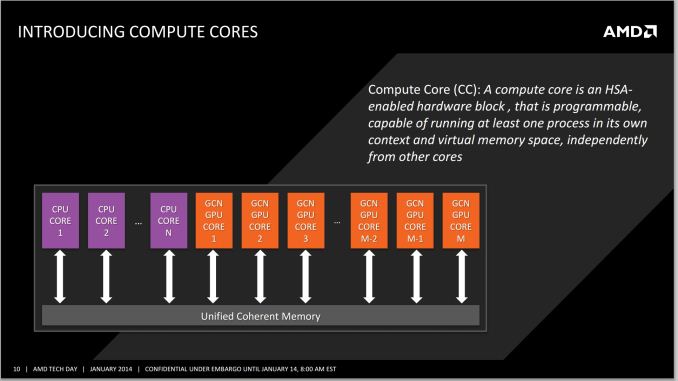

AMD settled on the term "Compute Core", which can refer to either an x86 (or maybe eventually ARM) CPU core or a GCN compute unit. The breakdown is as follows:

- Each thread on a CPU is a Compute Core

- Each Compute Unit on the IGP is a Compute Core

- Total Compute Cores = CPU Compute Cores + IGP Compute Cores

This means that the high end SKU, the A10-7850K will have a total of 12 compute cores: four from the CPU (two Steamroller modules supporting four threads) and eight from the IGP (due to eight compute units from the R7 graphics).

There are some qualifications to be made on this front. Technically, AMD is correct – each compute unit in the IGP and each thread on the CPU can run separate code. The Hawaii GCN architecture can spawn as many kernels as compute units, whereas a couple of generations ago we were restricted to one compute kernel on the GPU at once (merely with blocks of work being split across the CUs). However, clearly these 12 compute cores are not equivalent: a programmer will still have to write code for the CPU and GPU specifically in order to use all the processing power available.

Whenever AMD (or partners) promote the new APUs, AMD tells us clearly that two sets of numbers should be quoted in reference to the Compute Cores – the total, and the breakdown of CPU/GPU on the APU. This means that the A10-7850K APU would be marketed as a “12 Compute Core” device, with “(4 CPU + 8 GPU)” following immediately after. I applaud AMD's decision to not obfuscate the internal configuration of its APUs. This approach seems to be the most sensible if it wants to tout the total processing power of the APU as well as tell those users who understand a bit more what the actual configuration of the SoC is. The biggest issue is how to address the users who automatically assume that more cores == better. The root of this problem is very similar to the old PR-rating debates of the Athlon XP. Explaining to end users the intricacies of CPU/GPU programming is really no different than explaining why IPC * frequency matters more than absolute frequency.
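To make the IPC * frequency point concrete, here is a minimal sketch in Python. The two chips and their numbers are entirely hypothetical, chosen only to show that a lower-clocked part can come out ahead; they are not measurements of any real processor.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chip:
    """Hypothetical single-core model: throughput = IPC * frequency."""
    name: str
    ipc: float        # average instructions retired per clock (made-up value)
    clock_ghz: float  # clock frequency in GHz (made-up value)

    def instructions_per_ns(self) -> float:
        # 1 GHz is one clock per nanosecond, so IPC * GHz = instructions per ns.
        return self.ipc * self.clock_ghz


wide_core = Chip("wide core", ipc=2.0, clock_ghz=3.0)
fast_clock = Chip("fast clock", ipc=1.2, clock_ghz=4.0)

for chip in (wide_core, fast_clock):
    print(f"{chip.name}: {chip.clock_ghz} GHz, "
          f"{chip.instructions_per_ns():.1f} instructions/ns")
```

In this made-up case the 3.0 GHz part outruns the 4.0 GHz part. A headline number, whether clock speed or core count, only tells part of the story in the same way.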

When a programmer obtains an APU, the OpenCL profiler should locate the eight compute units of the GPU and display them to the user as targets for offloading compute work; at the same time it is up to the programmer to use the CPU threads as effectively as possible, even with AMD’s 3rd generation Bulldozer modules implementing a dual-INT + single-FP design.

At launch, AMD will offer the following configurations:

- A10-7850K: 12 Compute Cores (4 CPU + 8 GPU)

- A10-7700K: 10 Compute Cores (4 CPU + 6 GPU)

- A8-7600: 10 Compute Cores (4 CPU + 6 GPU)
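As a rough sketch of AMD's counting rule, the Python snippet below rebuilds the launch list above from CPU thread and GPU compute unit counts. The `Apu` class and its field names are hypothetical, introduced only for illustration; the counts themselves come from the list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Apu:
    """Hypothetical container for the two numbers AMD's rule needs."""
    name: str
    cpu_threads: int        # each CPU thread counts as one Compute Core
    gpu_compute_units: int  # each GCN compute unit counts as one Compute Core

    @property
    def compute_cores(self) -> int:
        # Total Compute Cores = CPU Compute Cores + IGP Compute Cores
        return self.cpu_threads + self.gpu_compute_units

    def label(self) -> str:
        # Total first, then the CPU/GPU breakdown.
        return (f"{self.name}: {self.compute_cores} Compute Cores "
                f"({self.cpu_threads} CPU + {self.gpu_compute_units} GPU)")


LAUNCH_LINEUP = [
    Apu("A10-7850K", cpu_threads=4, gpu_compute_units=8),
    Apu("A10-7700K", cpu_threads=4, gpu_compute_units=6),
    Apu("A8-7600", cpu_threads=4, gpu_compute_units=6),
]

for apu in LAUNCH_LINEUP:
    print(apu.label())
```

Note that the A10-7700K and A8-7600 produce identical counts. Nothing in the rule captures clock speed or TDP.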

The problem with distilling the processing power of the APU into a number of compute cores is primarily on the CPU side. AMD will be keeping the GPU at approximately the same clock (720 MHz for these three) but the CPU frequency will differ greatly, especially with the A8-7600, which has a configurable TDP and in 45W mode will run 300-400 MHz slower.

Steamroller Architecture Redux

We've already documented much of what Steamroller brings to the table based on AMD's Hot Chips presentation last year, but I'll offer a quick recap here.

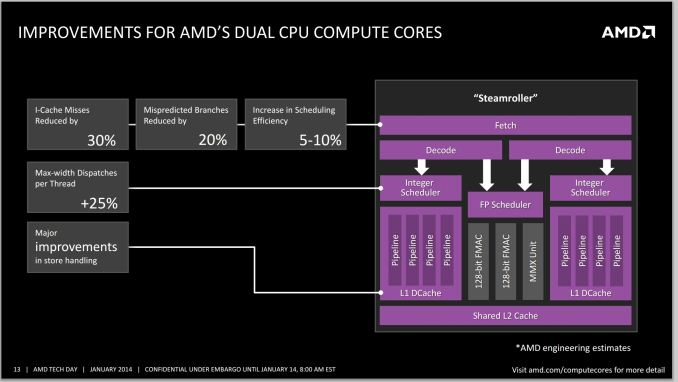

Steamroller brings forward AMD's Bulldozer architecture, largely unchanged. We're still talking about a dual-core module featuring two independent integer execution cores with a single shared floating point execution core capable of executing instructions from two threads in parallel. A single module still appears as two cores/threads to the OS.

In Bulldozer and Piledriver, each integer core had its own independent scheduler but the two cores shared a single fetch and decode unit. Instructions would come in and decoded operations would be fed to each integer pipe on alternating clock cycles. In Steamroller the decode hardware is duplicated in each module, so now each integer core gets its own decode unit. The two decode units are shared by the one FP unit.
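The effect of duplicating the decoder can be illustrated with a deliberately simplified toy model in Python. It only counts how many clock cycles a single integer core is fed by a decoder; the function name and the cycle count are assumptions made for illustration. It ignores fetch, decode width, stalls and the shared FP unit, so it says nothing about real-world performance.

```python
def decode_slots_for_core(cycles: int, shared_decoder: bool, core_id: int = 0) -> int:
    """Count the cycles in which one integer core receives decoded operations.

    Toy model only: every cycle is assumed to be a useful decode cycle.
    """
    if shared_decoder:
        # Bulldozer/Piledriver style: one decoder feeds the two cores on
        # alternating clocks, so core 0 is served on even cycles and core 1 on odd.
        return sum(1 for cycle in range(cycles) if cycle % 2 == core_id)
    # Steamroller style: each core has its own decoder and is served every cycle.
    return cycles


CYCLES = 1000  # arbitrary window length for the illustration
shared = decode_slots_for_core(CYCLES, shared_decoder=True)
dedicated = decode_slots_for_core(CYCLES, shared_decoder=False)
print(f"shared decoder:    {shared} decode slots per core")
print(f"dedicated decoder: {dedicated} decode slots per core")
```

In this model a core behind a shared decoder gets half the decode opportunities of a core with a dedicated one. That is an upper bound on front-end supply, not a prediction of the actual speedup.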

L1 instruction caches increase in size from 64KB to 96KB per module, which AMD claims reduces misses by up to 30%. There's an updated branch predictor which is responsible for reducing mispredicted branches by up to 20%. Both integer and FP register files grow in size, as does the scheduling window; together these increase the number of dispatches per thread by up to 25%.

There are huge improvements on the store side. Steamroller can now issue up to 2 stores at the same time compared to 1 in Bulldozer/Piledriver. The load/store queue sizes go up by around 20% as well. It's remarkable just how much low hanging fruit there was in the Bulldozer design.

GCN in an APU

The integrated graphics solution on Trinity/Richland launched with a Cayman-derived VLIW4 architecture, which unfortunately came just shortly after the desktop side of the equation finished moving from VLIW5/VLIW4 to GCN. Having a product stack with largely different GPU architectures doesn't help anyone, particularly on the developer front. Looking forward, sticking with GCN was the appropriate thing to do, as now Kaveri is using GCN, the same architecture found in AMD’s high end R9-290X GPU, based on the Hawaii platform.

This enabled AMD to add in all the features they currently have on Hawaii with little to no effort – the TrueAudio DSP, the upgraded Video Coding Engine and Unified Video Decoder are such examples. Whether or not AMD decides to develop an APU with more than 8 GCN CUs is another matter. This is a point we've brought up with AMD internally and one that I'm curious about - do any AnandTech readers have an interest in an even higher end APU with substantially more graphics horsepower? Memory bandwidth obviously becomes an issue, but the real question is how valuable an Xbox One/PS4-like APU would be to the community.

380 Comments


dbcoopernz - Tuesday, January 14, 2014 - link

I'd like an APU with enough GPU power to run all the high quality options in MadVR. Would make a very nice HTPC chip.

thomascheng - Tuesday, January 14, 2014 - link

I think Mantle can make that happen, but will see how much support they get.

MrSpadge - Tuesday, January 14, 2014 - link

Mantle has nothing to do with GP-GPU, that's not using DirectX anyway.

JDG1980 - Tuesday, January 14, 2014 - link

My discrete 7750 couldn't handle Jinc scaling in MadVR (at least not without dropping frames on some 1080i test clips), so this is going to be another generation or two in the future.

The PS4 APU could probably do it, if that was available in a generic PC form factor.

MrSpadge - Tuesday, January 14, 2014 - link

Add to that DDR4 and/or 4 memory channels, or at least a large on-package buffer like Crystal Well.

yankeeDDL - Wednesday, January 15, 2014 - link

But the whole point of HSA is to get the GPU to do CPU work that it could do better (like FP).

So you wouldn't need more CPU cores at all.

Look at it this way: AMD's CPU is less efficient than Intel's, while the GPU is more efficient.

Having a CPU-imbalanced APU, would put it in a tough(er) spot to compete against Intel. A GPU imbalanced, as Kaveri is, would improve the lead than it already has on the GPU side.

Now imagine that HSA kicks in, and the GPU lead translates directly in CPU lead ...

mikato - Wednesday, January 15, 2014 - link

This is true. I hope we see more articles as adoption of HSA starts to take hold.

It is too bad they are far behind in CPU power, but AMD has the right strategy. Either way, some things are better done on the GPU. AMD just has more benefit than Intel to get things moving that way sooner with their GPU advantage and CPU disadvantage. Intel will have no choice but to follow that lead.

nissangtr786 - Tuesday, January 14, 2014 - link

http://www.extremetech.com/computing/174632-amd-ka...

hsa does well on amd main thing they marketed in libreoffice.

Ryan Smith - Tuesday, January 14, 2014 - link

I'm normally not one to speak about other articles, but those are all OpenCL benchmarks. The OpenCL HSA driver won't be released for another quarter. And the HSA SDK is similarly far off.

http://www.anandtech.com/show/7677/amd-kaveri-revi...

krumme - Tuesday, January 14, 2014 - link

What a benchmark of a review. I learned a lot. Great. Thanx.