Intel SSD 530 (240GB) Review

by Kristian Vättö on November 15, 2013 1:45 PM EST

Posted in: Storage, SSDs, Intel, Intel SSD 530

The consumer SSD market is currently at a turning point. SATA 6Gbps is starting to be a bit long in the tooth but SATA Express support is still very limited. There is currently only one motherboard (ASUS Maximus VI Extreme) that has an M.2 slot and even that is limited to PCIe x1, making M.2 quite redundant at this point. On top of the lack of motherboard support, all M.2 SSDs are OEM only at the moment, which makes sense because it's pointless to sell a product that can't be used anywhere yet. The only way to get the full M.2 PCIe experience right now is to buy one of the few laptops with M.2 PCIe SSDs, such as the new Sony VAIO Pro 13 we just reviewed and the 2013 MacBook Air (though it has a proprietary connector).

The wait for moving to PCIe has put manufacturers in an odd situation. Ever since SSDs started gaining popularity there's always been a ton of room for improvement. Fundamentally the SSD market has gone through three different stages. First everyone focused on fast sequential speeds because that was the most important benchmark with hard drives. Shortly after it was understood that it's not the sequential speeds that make SSDs fast but the small random transfers that make hard drives crawl. IOPS quickly became a word that every manufacturer was shouting. Getting the IOPS as high as possible for marketing reasons was an important goal for many but in the process many bypassed another very important metric: Performance consistency. In the last year or so we've finally seen manufacturers paying attention to making performance more consistent instead of just focusing on the peak numbers.

The problem now is that every significant segment from a performance angle has been covered. Almost every SSD in the market is able to saturate the SATA 6Gbps bus. Random IO has also more or less stayed the same for the last year, which suggests that we've hit a hardware bottleneck. IO consistency is the only aspect that still requires some tweaking but most of the latest SSDs do a pretty good job at it too. There's nothing that manufacturers can do with SATA 6Gbps to really take performance to the next level. What makes things even more complex is the physics of NAND because read and program times increase as we move to smaller lithographies.
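To put a rough number on the SATA 6Gbps ceiling, here is a minimal sketch. It assumes the bus's 8b/10b line encoding (10 bits on the wire per 8 bits of data) and ignores all other protocol overhead, so real-world throughput will be somewhat lower. The 540MB/s figure is Intel's rated sequential read for the SSD 530; the function and constant names are just illustrative.

```python
# Rough payload ceiling of a SATA 6Gbps link.
# Assumption: only 8b/10b encoding overhead is modeled; framing and
# command overhead are ignored, so this is an upper bound.
LINE_RATE_BPS = 6_000_000_000  # 6 gigabits per second on the wire
DATA_BITS = 8                  # payload bits per encoded symbol
LINE_BITS = 10                 # transmitted bits per encoded symbol


def sata_payload_mb_per_s(line_rate_bps=LINE_RATE_BPS):
    """Return the payload ceiling in MB/s (1 MB = 10**6 bytes)."""
    payload_bits_per_s = line_rate_bps * DATA_BITS / LINE_BITS
    return payload_bits_per_s / 8 / 1_000_000


ceiling = sata_payload_mb_per_s()
rated_read = 540  # MB/s, Intel's rated sequential read for the SSD 530

print(f"SATA 6Gbps payload ceiling: {ceiling:.0f} MB/s")
print(f"Rated sequential read is {rated_read / ceiling:.0%} of that ceiling")
```

With only about a tenth of the theoretical ceiling left on the table, there is little headroom for a faster controller or faster NAND to show up in sequential numbers.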

Since driving performance up is getting harder and harder, manufacturers need to rely on other methods to improve their products. Transitioning to smaller lithography NAND is one of the most common ways because it helps to reduce cost. Even though smaller lithography NAND is actually a step back when it comes to NAND endurance and potentially performance, it's still a powerful marketing tool because consumers tend to think that smaller equals better performance and lower power (we can thank CPUs and GPUs for that mindset). Power consumption has also become a growing focus, and DevSleep support in Windows 8 has been a big part of that.

With an overview of the state of the SSD market out of the way, let's focus on the actual Intel SSD 530. The above probably would have been a good tease for the first consumer M.2 drive or a revolutionary SATA 6Gbps drive but unfortunately that's not the case. Intel didn't make much noise when they released the SSD 530 in August and there's a reason for that. Like its predecessor, the SSD 520, the SSD 530 is still SF-2281 based but unlike the SSD 335, the SSD 530 uses a newer silicon revision of the SF-2281. The new B02 stepping doesn't change performance in any way but it lowers power consumption especially when the drive is idling.

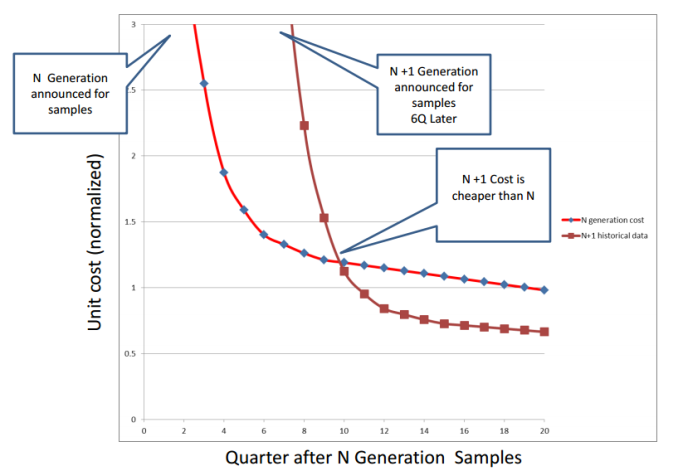

Similar to the SSD 335, the SSD 530 moves from 25nm IMFT NAND to 20nm IMFT NAND. I went through the major differences in the SSD 335 review; in short, Intel's 20nm NAND has slightly slower erase times and is otherwise comparable to their 25nm NAND. Some of you probably would have preferred that Intel had stuck with 25nm NAND in their high-end consumer offering but the fact is that 25nm NAND is no longer cost effective and hasn't been for over a year. Let me show you a cool graph of NAND price scaling:

Courtesy of Mark Webb, taken from his 2013 Flash Memory Summit presentation

Now let's apply that graph to the case of Intel's 20nm NAND. "N generation" in the graph means 25nm NAND and "N+1 generation" stands for 20nm NAND. In April 2011 IMFT announced that they had started sampling 20nm MLC NAND (you should now be looking at the "N+1 Generation announced for samples" part, which is at about quarter 7). The Intel SSD 335 was released six quarters later in October 2012. As you can see in the graph, that's the exact spot where 20nm NAND prices started to settle down, at about 30-40% below 25nm NAND.
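The "six quarters later" figure is easy to check with a little calendar arithmetic. The sketch below is only illustrative: the helper names are made up, and the day of the month is a placeholder since only the month and year matter here.

```python
from datetime import date


def quarter_index(d):
    """Map a date to a running count of calendar quarters."""
    return d.year * 4 + (d.month - 1) // 3


def quarters_between(start, end):
    """Number of calendar quarters from start to end."""
    return quarter_index(end) - quarter_index(start)


sampling = date(2011, 4, 1)   # IMFT announces 20nm MLC sampling (day is a placeholder)
release = date(2012, 10, 1)   # Intel SSD 335 launch (day is a placeholder)

elapsed = quarters_between(sampling, release)
print(f"Quarters from sampling to SSD 335 launch: {elapsed}")
# If sampling sits at about quarter 7 on the graph, the launch lands at about:
print(f"Approximate position on the graph: quarter {7 + elapsed}")
```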

Since 25nm NAND was more mature and had higher performance and endurance, Intel kept using it in the SSD 520 while the mainstream SSD 300-series switched to 20nm NAND. However, the 20nm process has now matured and both performance and endurance are close to what the 25nm process offered, making it viable for Intel's high-end consumer SSD as well. I'd like to remind you that while the graph above is based on historical data, each generation from every manufacturer has its own challenges. Delays and unexpected wafer cost increases can shift the N+1 graph to the right, meaning smaller price benefits and a longer period for the new generation to become cost efficient.

Unlike Intel's previous SSDs, the SSD 530 is available in three different form factors: 2.5", mSATA and M.2 (80mm). The capacities for the 2.5" version range from 80GB to 480GB, whereas the mSATA is limited to 240GB and M.2 to 360GB (those are the highest capacities you can get with 64Gbit NAND; mSATA only has room for four packages and 80mm M.2 for six NAND packages). The 2.5" version has also gone through a facelift. Aluminum has remained as the building material of the chassis but the Intel logo is now bigger and more centered and there's a sticker representing a die shot in the upper right corner.
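The capacity limits for the smaller form factors follow from simple package math. The sketch below assumes eight 64Gbit dies per NAND package, which is not stated above but is what the 240GB and 360GB ceilings imply; it also treats 64Gbit as 8GB per die and ignores the decimal/binary gigabyte distinction for simplicity.

```python
DIE_GBIT = 64          # die density, from the article
DIES_PER_PACKAGE = 8   # assumption: eight dies stacked per package


def raw_capacity_gb(packages, dies_per_package=DIES_PER_PACKAGE, die_gbit=DIE_GBIT):
    """Raw NAND capacity in GB for a given number of packages."""
    return packages * dies_per_package * die_gbit // 8


# (form factor, NAND packages that fit, highest advertised capacity in GB)
form_factors = [("mSATA", 4, 240), ("M.2 (80mm)", 6, 360)]

for name, packages, advertised in form_factors:
    raw = raw_capacity_gb(packages)
    print(f"{name}: {packages} packages -> {raw}GB raw NAND, "
          f"{advertised}GB advertised ({advertised / raw:.1%} of raw)")
```

Under these assumptions both form factors expose the same share of their raw NAND to the user, with the remainder set aside as spare area.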

Intel SSD 530 2.5" Specifications

| Specification | Value |
| --- | --- |
| Capacity (GB) | 80 / 120 / 180 / 240 / 360 / 480 |
| Controller | SandForce SF-2281 |
| NAND | Intel 20nm MLC |
| Sequential Read | 540MB/s |
| Sequential Write | 480MB/s or 490MB/s, depending on capacity |
| 4KB Random Read | 24K / 41K / 45K / 48K IOPS, depending on capacity |
| 4KB Random Write | 80K IOPS |
| Endurance | 20GB/day for 5 years |
| Warranty | 5 years |

Intel rates the SSD 530 at 20GB of host writes per day for five years. This is a typical rating for high-end consumer SSDs with a 5-year warranty and for heavier workloads Intel advises you to get an enterprise-grade drive. For the record, the Intel SSD 520 was also rated at 20GB/day for five years so there's been no degradation in that sense.
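For a sense of scale, the rating can be converted into total host writes over the warranty period. This is a back-of-the-envelope sketch that assumes 365-day years and 1TB = 1000GB; it says nothing about NAND program/erase cycles, since write amplification is not part of Intel's rating.

```python
def lifetime_host_writes_tb(gb_per_day=20, years=5, days_per_year=365):
    """Total rated host writes in TB (1TB = 1000GB, leap days ignored)."""
    return gb_per_day * days_per_year * years / 1000


total_tb = lifetime_host_writes_tb()
capacity_gb = 240  # the model reviewed here
full_drive_writes = total_tb * 1000 / capacity_gb

print(f"Rated host writes over five years: {total_tb:.1f}TB")
print(f"Equivalent to writing the {capacity_gb}GB drive about "
      f"{full_drive_writes:.0f} times over")
```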

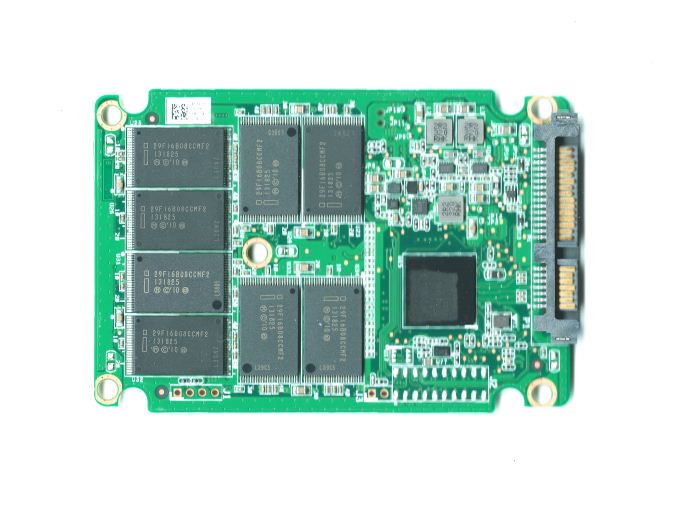

The PCB itself looks similar to the SSD 520. There are a total of 16 NAND packages (eight on each side of the PCB) and no DRAM, as with every other SandForce-based drive. However, what's interesting is that the actual controller is Intel branded. I'm still waiting for Intel to clarify the reason but this isn't the first time we've seen a manufacturer-branded SandForce controller (Toshiba has been doing this for a while).

Test System

| Component | Configuration |
| --- | --- |
| CPU | Intel Core i5-2500K running at 3.3GHz (Turbo and EIST enabled) |
| Motherboard | AsRock Z68 Pro3 |
| Chipset | Intel Z68 |
| Chipset Drivers | Intel 9.1.1.1015 + Intel RST 10.2 |
| Memory | G.Skill RipjawsX DDR3-1600 4 x 8GB (9-9-9-24) |
| Video Card | XFX AMD Radeon HD 6850 XXX (800MHz core clock; 4.2GHz GDDR5 effective) |
| Video Drivers | AMD Catalyst 10.1 |
| Desktop Resolution | 1920 x 1080 |
| OS | Windows 7 x64 |

Thanks to G.Skill for the RipjawsX 32GB DDR3 DRAM kit

60 Comments


dynamited - Friday, November 15, 2013

I count seven asus motherboards with mPCIE, not one, at newegg. Regarding 6bps sata saturated, just run with RAID 0, is that hard to figure out how to do?

ExodusC - Friday, November 15, 2013

I don't think TRIM commands can be passed through to SSDs running in RAID 0. At one point the Intel storage drivers supported this, but I heard that this had been pulled. I can't find any documentation on this.

Additionally, even though SSDs are fairly reliable, adding another drive simply adds another point for failure.

Wetworkz - Friday, November 15, 2013

You CAN pass TRIM commands through to SSDs running in Raid 0 on Intel hardware. It has NOT been pulled. You need to have the latest Intel Toolbox in combination with the latest RST drivers installed. I just TRIMMED both my arrays a couple days ago.

ExodusC - Friday, November 15, 2013

Out of curiosity, is that an automated process, or does it require manual TRIM through the Intel SSD Toolbox? What RAID levels are supported?

I also wonder about the compressibility of striped data and if there is any effect there.

Samus - Friday, November 15, 2013

I pass TRIM to my RAID 0 Samsung 840 RAID through the Windows 8 defrag on my H87 chipset. Performance tests prove it works. Unfortunately if I have the IRST software installed the drives are downgraded to SATA 3Gbps. I tried different cables and everything. Uninstalling the IRST software after making the RAID 0 restores them to 6Gbps...

DMCalloway - Friday, November 15, 2013

It was my understanding that TRIM worked in RAID 0 with the newer RST drivers, but only on Intel 7 series chipsets and newer. I do like Intel products but this is one thing they shafted us on.

Wetworkz - Saturday, November 16, 2013

I just TRIMMED both my arrays a few days ago and one array was on an Intel 6 series chipset. I know that series 6 was not previously supported but I was able to initiate TRIM on the array with the newest Intel Toolbox and the newest RST drivers for the first time the other day. I cannot confirm this is officially supported behavior but I was able to do it with the newest drivers. I would give it a try if you have a series 6 board.

'nar - Monday, November 18, 2013

I don't really care about TRIM. Garbage Collection works better anyway, especially on SandForce drives.

extide - Friday, November 15, 2013

mPCIE is not the same as M.2

dynamited - Friday, November 15, 2013

I believe they are calling it "mPCIe Combo Card" which actually has two connects to one on the motherboard.