NVIDIA Montreal Event - Live Blog

by Anand Lal Shimpi on October 18, 2013 9:49 AM EST

11:41AM EDT - And we're done! Thanks for following!

11:39AM EDT - G-Sync will be available in Q1-2014

11:35AM EDT - Wrapping up now

11:34AM EDT - "the best GPU that's ever been built"

11:34AM EDT - Performance is "outstanding", power is "low"

11:33AM EDT - On shelves in mid-November

11:33AM EDT - New high-end GPU, reviewers getting this soon

11:33AM EDT - NVIDIA GTX 780 Ti

11:33AM EDT - "Today we're announcing our new baby here"

11:32AM EDT - "You can't really do an NVIDIA press conference without graphics cards"

11:31AM EDT - I was told no multi-million dollar checks were exchanged to make this happen

11:30AM EDT - These three guys flew in just to give their thoughts about G-Sync

11:30AM EDT - We're skipping lunch

11:30AM EDT - Then we'll do a Q&A with the superstars

11:30AM EDT - One more announcement to make apparently

11:29AM EDT - "once we start going above 90, 95, 100 fps, there are additional benefits that we're not even talking about here"

11:29AM EDT - "going above 60 fps brings in additional benefits"

11:28AM EDT - "almost every single game can benefit from this"

11:27AM EDT - Now Carmack is talking

11:26AM EDT - "With this we don't really need to do things in the same way, probably don't want to jump too much in frame rate though"

11:26AM EDT - "We design games for a specific budget, games now are these massive experiences of different types of environments (cut scenes, star fields, massive #s of explosions) - impossible to design a game for a fixed frame rate today - have to make massive compromises"

11:25AM EDT - "I was actually surprised at how my mind interpreted it"

11:25AM EDT - "you see a continuous stream of what your mind interprets as a moving picture"

11:25AM EDT - Johan on G-Sync "it's pretty incredible"

11:24AM EDT - "With G-Sync you have a lot more wiggle room in terms of frame rate"

11:24AM EDT - "It frees up the game developers to make a much more immersive experience"

11:24AM EDT - Tim Sweeney on G-Sync - "it's like using an iPhone for the first time"

11:21AM EDT - John, Johan and Tim

11:21AM EDT - Yep here we go

11:20AM EDT - These folks happen to be some of the most important contributors to modern computer graphics

11:19AM EDT - Johan, Sweeney and Carmack is my guess

11:19AM EDT - NVIDIA invited three game developers to come and talk about their thoughts on G-Sync

11:18AM EDT - It's seriously a huge difference

11:18AM EDT - The one on the right had G-Sync on, and they ran the same content to demonstrate the differences between traditional gaming PC monitors and G-Sync displays

11:17AM EDT - I'm going to write up all of this in greater detail, but they had two identical systems/displays set up side by side

11:15AM EDT - The difference is pretty dramatic, seriously it has a tremendous impact on game smoothness

11:15AM EDT - Wow that was pretty awesome

10:53AM EDT - Brb clustering by demo stations

10:52AM EDT - We're about to go see a demo of this

10:52AM EDT - In normal mode, G-Sync monitors have to behave normally, in 3D mode they will flip into G-Sync mode

10:51AM EDT - "The wow is going to come in just a moment when we show you G-Sync in operation"

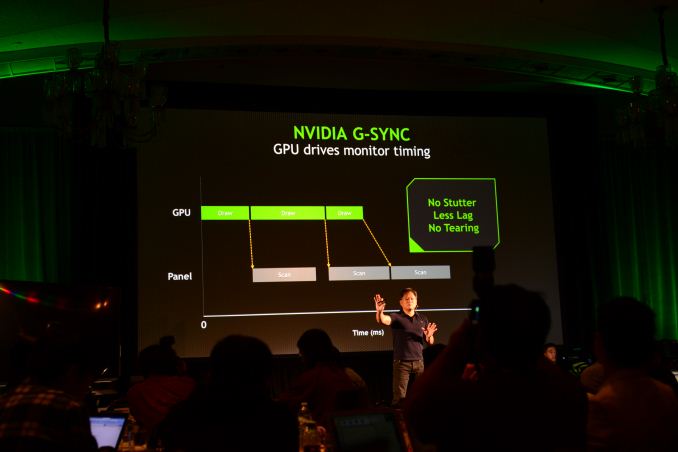

10:51AM EDT - No stutter, no tearing, less lag

10:51AM EDT - Even if you're getting more than 144fps, your lag will still be much lower since we're talking about higher refresh rates

10:50AM EDT - Now the refresh rate in the examples we'll show you is 144Hz

10:50AM EDT - We'll drive the screen as fast as it can be driven

10:50AM EDT - If we have frame rates that are incredibly high, we wait until the max refresh rate for the update (still variable)

10:50AM EDT - That art has been captured into our G-Sync module

10:49AM EDT - Over the years our engineers have figured out how to not only transfer the control to the GPU/software, but also to drive the monitors such that the color continues to be vibrant and beautiful without decay, that gamma is correct

10:49AM EDT - These liquid crystals don't like being driven with sporadic timing

10:49AM EDT - And at the molecular level

10:49AM EDT - LCD displays are complicated things at the electronic level

10:48AM EDT - The logic is so fundamentally simple, implementation is incredibly complicated

10:48AM EDT - As soon as the frame is done drawing, we should update the monitor as soon as we can

10:48AM EDT - We shouldn't update the monitor until the frame is done drawing

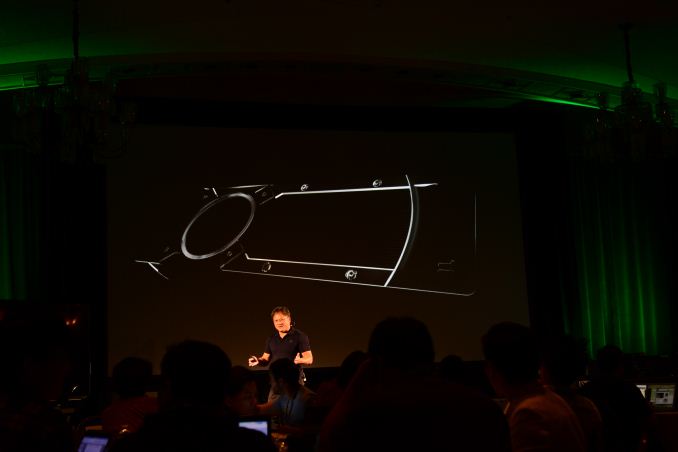

10:48AM EDT - Instead of the monitor driving the timing (at 60Hz there's a scan every 16.7 ms), we transfer the timing to the GPU
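
A rough sketch of the refresh rule described in the entries above (update as soon as a frame is done, but never faster than the panel's maximum refresh rate). This is only an illustration of the logic as stated on stage, not NVIDIA's implementation: the function name and render times are made up, and it assumes the GPU starts its next frame the moment the previous one is handed to the display.

```python
MAX_REFRESH_HZ = 144                         # refresh rate quoted for the demo monitors
MIN_INTERVAL_MS = 1000.0 / MAX_REFRESH_HZ    # about 6.9 ms between refreshes


def variable_refresh_times(render_ms, min_interval_ms=MIN_INTERVAL_MS):
    """Return the time (ms) at which each frame reaches the screen.

    Hypothetical model: the display refreshes as soon as a frame is done,
    unless that would exceed the panel's maximum refresh rate, in which
    case the update waits for the minimum interval to elapse.
    """
    shown = []
    start = 0.0   # when the GPU begins drawing the current frame
    last = None   # time of the previous refresh
    for cost in render_ms:
        done = start + cost
        if last is None:
            refresh = done
        else:
            refresh = max(done, last + min_interval_ms)
        shown.append(refresh)
        last = refresh
        start = refresh   # assumption: next frame starts at the refresh
    return shown


if __name__ == "__main__":
    times = variable_refresh_times([10.0, 20.0, 5.0, 25.0])
    gaps = [b - a for a, b in zip(times, times[1:])]
    print([round(t, 2) for t in times])
    print([round(g, 2) for g in gaps])
```

In this model a 20 ms frame is shown 20 ms after the previous one rather than being held for the next fixed scan, and only the 5 ms frame is delayed, to respect the 144Hz ceiling.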

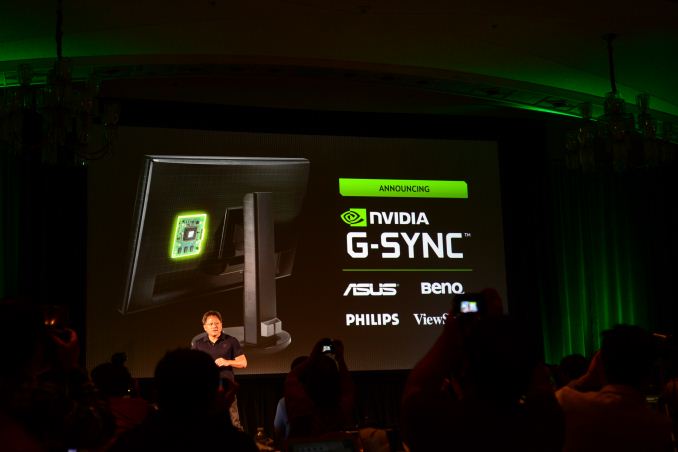

10:47AM EDT - ASUS, BenQ, Philips, ViewSonic have already signed up

10:47AM EDT - G-Sync Module will be integrated into the top gaming monitors around the world

10:47AM EDT - Results can't be projected onto the screen since the projector doesn't have G-Sync

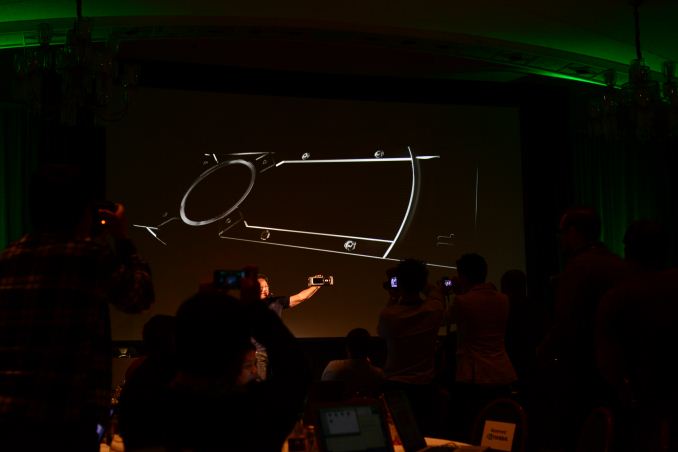

10:46AM EDT - G-Sync module goes into a gaming monitor, replaces the video scaler

10:46AM EDT - End to end architecture that starts with Kepler GPU, ends with a brand new technology - G-Sync Module

10:46AM EDT - NVIDIA G-Sync

10:46AM EDT - "One of my proudest contributions as a company to computer graphics"

10:45AM EDT - Announcement imminent

10:45AM EDT - They went off to study the problem and came back with a solution

10:45AM EDT - With a fantastic team of engineers, we went off on the side to solve this problem

10:45AM EDT - You simply have to take a step back and ask yourself, where are these artifacts coming from, why do they happen and how do I eliminate the bane of our existence

10:44AM EDT - "New GPU, new monitor technology and software that integrates the two"

10:44AM EDT - "I've described the fundamental problem, so the fundamental solution will be a part of that"

10:44AM EDT - Sounds like we're heading towards NVIDIA doing some sort of a new display tech

10:44AM EDT - Reiterating that the GPU and monitor are autonomous, creating this problem of stutter/lag/tearing

10:43AM EDT - This seems to be how NVIDIA does its major announcements, fully expecting things to get crazy very soon

10:43AM EDT - I will say that I like Jen-Hsun presenting, but we're ~50 minutes in with nothing substantially new now

10:41AM EDT - Artifact called tearing

10:41AM EDT - By not waiting for vsync, the monitor could be in the middle of a frame update, as a result you'll end up scanning part of the last frame and part of the new frame

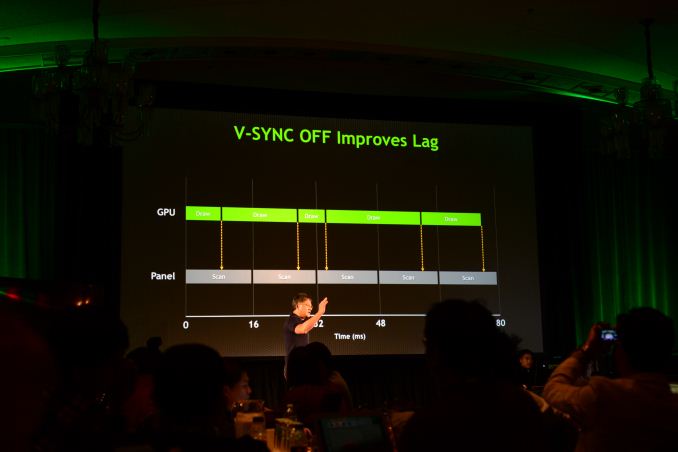

10:41AM EDT - Turning off vsync lets us update the moment the frame buffer is complete
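
To make the tearing description above concrete, here is a small sketch that estimates which row the scan has reached when a buffer flip lands mid-refresh with vsync off. It is a simplification for illustration only: the function name and the 1080-row panel are assumptions, and vertical blanking is ignored.

```python
def tear_row(flip_ms, refresh_hz=60, rows=1080):
    """Estimate the row where the tear appears for a flip at flip_ms.

    Hypothetical model: the monitor scans rows top to bottom at a constant
    rate over one refresh interval, with no vertical blanking. Rows above
    the returned index come from the old frame, rows below from the new one.
    """
    refresh_ms = 1000.0 / refresh_hz
    phase = (flip_ms % refresh_ms) / refresh_ms   # fraction of the scan completed
    return min(int(phase * rows), rows - 1)


if __name__ == "__main__":
    # A flip 25 ms in lands about halfway through the second 60Hz scan.
    print(tear_row(25.0))
```

A flip that happens to land exactly on a refresh boundary gives row 0, which is the no-tearing case that vsync enforces.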

10:40AM EDT - We want games to play well but also have higher production quality

10:40AM EDT - That's unlikely/undesirable, because we want content to get better, not just higher frame rate

10:39AM EDT - The only option is to make sure you're rendering at some insane frame rate so the minimum frame rate stays above 60 fps

10:39AM EDT - Something you can't avoid

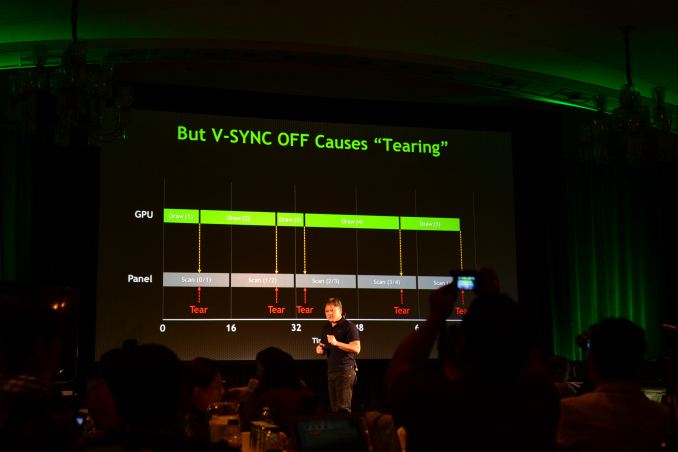

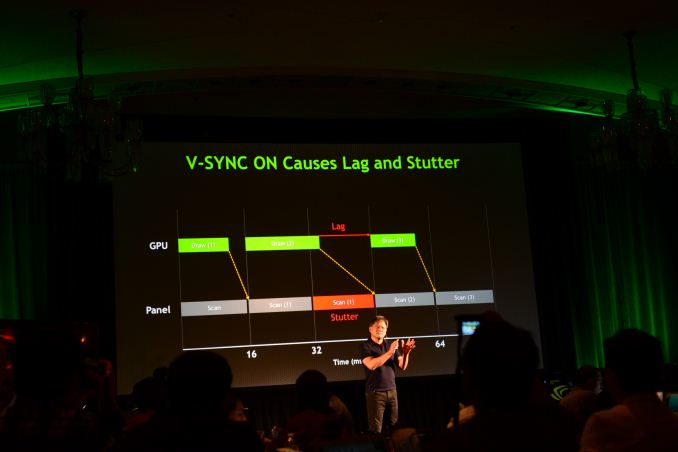

10:39AM EDT - Stutter and lag are two fundamental experiences of having vsync on, independent of GPU, monitor or PC

10:39AM EDT - You can improve this scenario with triple buffering, but you just have another buffer to deal with - still deal with the same fundamental issue of draw rate vs. refresh rate imbalance

10:38AM EDT - Similar in nature to the stutter effect you get from playing a 24 fps source on a 60Hz TV, you get doubling of frames

10:38AM EDT - The problem shows up whenever the scan rate of the monitor differs from the frame rate of the GPU

10:37AM EDT - Because you can't flip to the other buffer until you're done drawing, and once you're done drawing you have to wait until the next vertical sync, there are times when you effectively scan the same frame to the monitor twice

10:37AM EDT - Not only does it have to complete its own frame but it has to wait for vertical sync

10:37AM EDT - GPU has to wait until the monitor is done scanning all of its vertical lines from the last buffer before it flips
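
A rough way to see the doubled frames described in the entries above: the sketch below models strict double buffering with vsync on at 60Hz. It is an illustration, not anything shown at the event; the function names and per-frame render times are made up, and it assumes the GPU cannot start drawing the next frame until the flip has happened.

```python
import math

REFRESH_HZ = 60
REFRESH_MS = 1000.0 / REFRESH_HZ   # about 16.7 ms per scan


def vsync_flip_ticks(render_ms, refresh_ms=REFRESH_MS):
    """Return the refresh index at which each frame is first scanned out.

    Hypothetical model: a finished frame waits for the next vertical sync
    before the buffers flip, and the GPU starts the following frame only
    after that flip. Render times are assumed to be positive.
    """
    ticks = []
    start = 0.0   # time (ms) at which the GPU begins the current frame
    for cost in render_ms:
        done = start + cost
        # First vertical sync at or after the frame is done
        # (small epsilon guards against float rounding).
        tick = math.ceil(done / refresh_ms - 1e-9)
        ticks.append(tick)
        start = tick * refresh_ms
    return ticks


def scans_per_frame(ticks):
    """Number of refreshes each frame stays on screen (last frame omitted)."""
    return [b - a for a, b in zip(ticks, ticks[1:])]


if __name__ == "__main__":
    ticks = vsync_flip_ticks([10, 10, 20, 10, 20, 10])
    print(ticks)
    print(scans_per_frame(ticks))
```

Whenever a frame takes 20 ms it misses a 16.7 ms scan, so the frame already on screen gets scanned a second time; the uneven mix of one-scan and two-scan frames is the stutter.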

10:36AM EDT - Vsync on looks like this

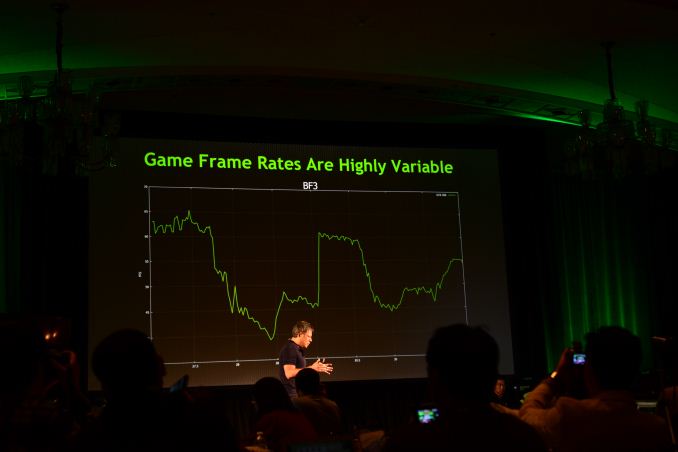

10:36AM EDT - Example: BF3 frame rate varies between 60 fps all the way down to upper 30s

10:36AM EDT - Multiplayer games, big explosions, etc... can cause big variances in frame rates

10:36AM EDT - Game frame rates are, instead, highly variable

10:35AM EDT - That's the way it was architected, how it was intended and what comp graphics was hoped to be

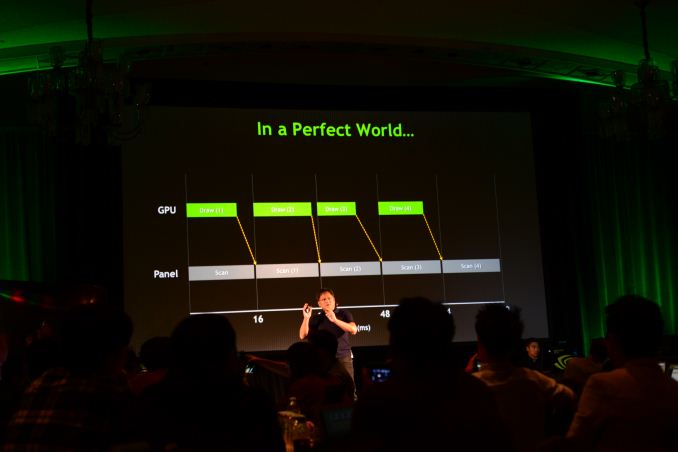

10:35AM EDT - Or you could have so much performance that every single frame is faster than scan rate, as a result you have perfectly smooth 60Hz

10:35AM EDT - Games could decide to just stop drawing, that's one technique

10:35AM EDT - This is your flight simulation experience, this is your game console experience for driving games, because they can control how much they draw

10:34AM EDT - In a perfect world we're drawing faster than we're scanning out, always ready for scanning out, as a result every single 16 ms we see a new frame

10:34AM EDT - In this example we're drawing faster than the refresh rate (60Hz), every 16ms we complete the scanning of one frame, flip the buffer, the other buffer is now completed drawing, we scan out of that while we draw into the other

10:34AM EDT - When that scan is complete it flips, scans out of the other buffer - double buffering

10:33AM EDT - When the scan is complete, it flips, scans out of the other (completed) frame buffer

10:33AM EDT - In a perfect world, the way computer graphics was intended to work, the GPU would render into one buffer while the monitor is scanning out of another

10:33AM EDT - if you try to overcome it, you will see tearing

10:33AM EDT - and lag

10:33AM EDT - Because the sample rate is different from the source rate, you have no choice but to experience stutter

10:33AM EDT - Monitor is sampling what's being drawn

10:32AM EDT - GPU renders to frame buffer, monitor scans out from another autonomous frame buffer

10:32AM EDT - The problem is fundamental in the sense that your monitor and GPU rendering update independently of one another

10:32AM EDT - "More brain cells have been dedicated to the elimination of these three PC gaming conditions than just about any other"

10:32AM EDT - Applause for these problems

10:31AM EDT - "These things stutter, lag, tearing - has been the bane of our existence for as long as we remember"

10:31AM EDT - "You're asking yourself, but he did say that this is fundamental to modern computer graphics"

10:31AM EDT - "It doesn't kill you, but nothing would bring you more joy than if these three conditions were to disappear"

10:31AM EDT - "You can survive it, but you like it to go away"

10:31AM EDT - "probably acne"

10:30AM EDT - "Stutter, lag and tearing - that is as frustrating, as undesirable for a PC gamer as foot fungus"

10:30AM EDT - This is the bane of existence of PC gamers

10:30AM EDT - "All of the work that we do to optimize for frame rate and experience, in the final analysis the gamer and their experience comes down to stutter, lag and tearing"

10:29AM EDT - Now talking about 4K Surround support, challenges of synchronization/latency when doing SLI to drive these insane surround setups

10:28AM EDT - Sorry for the delay there, NVIDIA killed the WiFi in order to test Twitch streaming

10:28AM EDT - Now talking about 4K

10:27AM EDT - Demonstrating Twitch streaming via GFE/ShadowPlay

10:24AM EDT - Jen-Hsun is getting very friendly on stage

10:23AM EDT - One click broadcast using ShadowPlay directly to Twitch

10:22AM EDT - GFE streams directly to Twitch

10:22AM EDT - Available in beta on October 28th as well

10:22AM EDT - ShadowPlay was originally delayed because it used to generate M2TS files but there was a desire to shift over to MP4 instead for broader playback

10:21AM EDT - Recap: ShadowPlay uses Kepler's video encode engine to capture and save the last 20 minutes of PC gameplay

10:20AM EDT - http://www.anandtech.com/show/6973/nvidia-geforce-gtx-780-review/5

10:20AM EDT - NVIDIA has already announced ShadowPlay, so this is a re-announcement I guess

10:20AM EDT - Second announcement: NVIDIA ShadowPlay

10:19AM EDT - Launches on October 28th, same time when Shield's game console mode goes live via OTA update

10:19AM EDT - These bundles are pretty cool

10:19AM EDT - If you buy a GTX 660 or 760 you get Splinter Cell + Assassin's Creed and $50 off of Shield

10:18AM EDT - If you have a GTX 770, 780 or Titan, you get Batman, Splinter Cell, Assassin's Creed Black Flag and $100 off Shield

10:18AM EDT - Announcing Holiday Bundle

10:17AM EDT - Ok Gamestream PC to Shield demo is done, back to the presentation

10:15AM EDT - Showing off Batman Arkham Origins

10:14AM EDT - This seems like an overly complex solution to this problem

10:14AM EDT - GeForce PC renders, Shield streams, external BT controller controls

10:13AM EDT - Using a wireless Bluetooth controller to control all of this

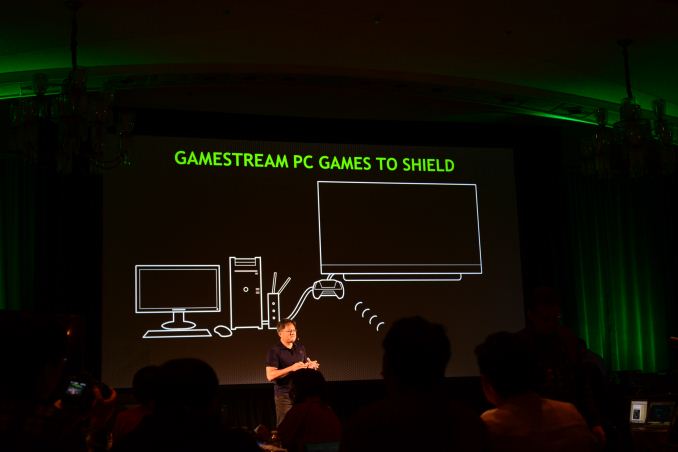

10:12AM EDT - Close the lid on Shield, puts it in "game console mode", transmits controls to your PC, receives the compressed video stream from your PC

10:11AM EDT - Demo time, they're about to demonstrate streaming PC games to Shield - we've seen this before, nothing new yet

10:10AM EDT - Now talking about Gamestreaming PC games to Shield

10:09AM EDT - All of those examples use Gamestream

10:09AM EDT - Gamestream has already been demonstrated, parts of it at least, in streaming from Grid to Shield, streaming from GeForce to Shield

10:08AM EDT - Created a fundamental new technology for remote graphics, remotely processed, compressed and streamed back quickly

10:08AM EDT - Natively on a device, streaming to another device, streaming from the cloud...

10:08AM EDT - "You should be able to enjoy video games the way you enjoy digital video today"

10:07AM EDT - First one is called Gamestream

10:07AM EDT - These are all examples of game technologies NV has created over the years - "today I've got some really really special new game technologies to reveal to you"

10:06AM EDT - GFE downloads optimal playable settings, based on system specs and other data points in the cloud

10:06AM EDT - "We've collected a massive database of the best way to play your games and store it in the cloud"

10:05AM EDT - Recapping what GFE does.

10:04AM EDT - Now talking about GeForce Experience, another game tech innovation from NV

10:04AM EDT - "Because of 3D vision we have a much deeper understanding of monitor technology and your visual systems"

10:03AM EDT - Next example of NV's investment in game tech: 3D Vision

10:03AM EDT - Calling SLI a "time machine" to see what PCs would perform like in the future

10:03AM EDT - If you wanted to develop for a PC system 2 - 3 years out, using SLI can get you there

10:03AM EDT - Talking about SLI as one of the first examples of NVIDIA's investment in game tech

10:02AM EDT - Third pillar is game tech, actual technology incorporated into PCs

10:02AM EDT - "We want to make that fundamental contribution to real time games"

10:02AM EDT - "In Game Works our vision is to be to games what ILM is to movies"

10:02AM EDT - "Today I want to talk about the third pillar of our strategy"

10:01AM EDT - (Side note, I'm still testing video playback battery life on the ASUS T100 during the press conference)

10:01AM EDT - All of this is a part of Game Works

10:00AM EDT - Flame Works - world's first, real time volumetric fire and smoke rendering system. Simulated, different every time, interacts with physical scene objects, casts shadows

10:00AM EDT - GI Works is a scalable global illumination system that game devs can use instead of attempting to simulate global lighting through tons of local light sources

09:59AM EDT - Talking about NV's Global Illumination lighting system

09:59AM EDT - NVIDIA's Game Works is the second pillar, a bunch of tools/modules to help game developers implement physics and other effects into their games

09:58AM EDT - Jen-Hsun is talking about NVIDIA's gaming strategy, the first pillar is the computing platform (NV wants to span Android, PC, Cloud)

09:57AM EDT - Jen-Hsun took the stage earlier than expected, we're on!

24 Comments

jjj - Friday, October 18, 2013 - link

Any chance you can ask if they plan to integrate G-Sync into mobile devices (make the display driver if they have to) or suggest that they should try to?

DukeN - Friday, October 18, 2013 - link

Finally, G-Unit and N-Sync merged. It's a great day for the world..

Kiste - Friday, October 18, 2013 - link

For some reason I have the strong suspicion that the G-Sync module will go into TN-Panel "gaming monitors", which are useless to those of us who actually care about image quality.

Sabresiberian - Friday, October 18, 2013 - link

My thoughts, too. None of the so-called gaming monitors have received good reviews for their screen quality. 120Hz gets raves, but the TN panels used, not so much.

repoman27 - Friday, October 18, 2013 - link

So the G-Sync chip is either replacing or directly driving the T-Con in the display... And we've totally thrown CVT out the window, so what protocol are the GPUs even using to connect to the display? Something proprietary over a DisplayPort/HDMI physical link?

Ikas - Friday, October 18, 2013 - link

Would be great to see G-Sync available on laptops as well. Any word if it's coming for laptops or is it PC monitors only?

dew111 - Friday, October 18, 2013 - link

I am very skeptical about this implementation. If you know anything about how an LCD display works, you know that all the pixel data is swept out to the panel, kind of (but not really) like on a CRT. And you have to keep sweeping, because if you stop, the pixels start to wander back to a neutral position. Like JHH said, it would be immensely complicated to get around this. Interrupt the sweep, and some pixels may start to wander too far. Wait for the sweep to finish, and you have stutter.

So the big questions are: how expensive is it? Is a new kind of LCD needed? Will there be versions on quality monitor types, or just TN panels (not interested)?

And of course, an open standard would be nice, if it truly is as revolutionary as this press release makes it sound. Then again, they said the same thing about 3D Vision, and look where that is. At least on the face, it smells like another vendor lock-in scheme (although maybe it is also a really good technology).

dew111 - Friday, October 18, 2013 - link

Also, comments like, "I was actually surprised at how my mind interpreted it," do not inspire much confidence. If you are conscious of how your mind interprets it, that seems like a bad thing. Maybe you just had your reviewer hat on at the time.

mdrejhon - Friday, October 18, 2013 - link

Actually, LCDs today are now able to sync to any refresh rate (60Hz through 144Hz). My existing 144Hz monitor can use any refresh rate, as long as it's unchanging. The monitor maker has successfully been able to drive pixels at any refresh rate as long as the refresh rate is constant.

What appears to be happening now is that the monitor is always scanned at a speed of 1/144sec, but asynchronously of the 144Hz intervals. Modern LCD pixels are now stable, as LCD pixel transitions on modern 120Hz monitors are now slowly starting to resemble square waves (small ripples during the first few milliseconds, then a flat plateau until the end of a refresh). Basically a 1ms monitor (usually ~4-5ms actual) leaves plenty of time (16.7ms) between refreshes at 60Hz. Because the transition curves are becoming cliffs now on faster LCD monitors compared to the large length of a refresh (16.7ms), LCD pixels are now stable enough to support asynchronous refresh rate operation.

An excellent animation of LCD pixel transitions slowly starting to resemble square-waves:

http://www.testufo.com/#test=eyetracking&patte...

View this on a modern 120Hz or 144Hz monitor.

This is proof that modern LCD panels are now ready for asynchronous refresh rates. They might need a "re-refresh" after maybe a tenth of a second or so, but GPU game frame rates are much higher than that.

drinkperrier - Friday, October 18, 2013 - link

montréal power!