The iPhone 5s Review

by Anand Lal Shimpi on September 17, 2013 9:01 PM EST

Display

The iPhone 5s, like the iPhone 5c, retains the same 4-inch Retina Display that was first introduced with the iPhone 5. The 4-inch 16:9 LCD has a 1136 x 640 resolution, which puts it at the low end among flagship smartphones these days. It was clear from the get-go that a larger display wouldn’t be in the cards for the iPhone 5s. Apple has stuck to its two-generation design cadence since the iPhone 3G/3GS days, and it gave no indication of breaking that trend now, especially with concerns about the mobile upgrade cycle slowing. Recouping the investment in platform and industrial design is a very important part of making the business work.
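For reference, the panel's pixel density follows from its resolution and diagonal: divide the diagonal length in pixels by the diagonal length in inches. Here is a minimal sketch of that arithmetic. It assumes the nominal 4.0-inch diagonal, since exact panel dimensions aren't given here:

```python
import math


def pixels_per_inch(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixel density: diagonal length in pixels divided by diagonal length in inches."""
    diagonal_px = math.hypot(width_px, height_px)  # Pythagoras on the pixel grid
    return diagonal_px / diagonal_in


# 1136 x 640 on a nominal 4.0-inch diagonal
ppi = pixels_per_inch(1136, 640, 4.0)
print(f"{ppi:.0f} ppi")  # prints "326 ppi"
```

That matches the 326 ppi figure Apple quotes for its 4-inch Retina displays.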

Apple is quick to point out that iOS 7 does attempt to make better use of display real estate, but I can’t shake the feeling of being cramped on the 5s. I’m not advocating that Apple go the route of some of the insanely large displays out there, but after using the Moto X for the past month I believe there’s a good optimization point somewhere around 4.6 to 4.7 inches. I firmly believe that Apple will embrace a larger display and branch the iPhone once more, but that time is just not now.

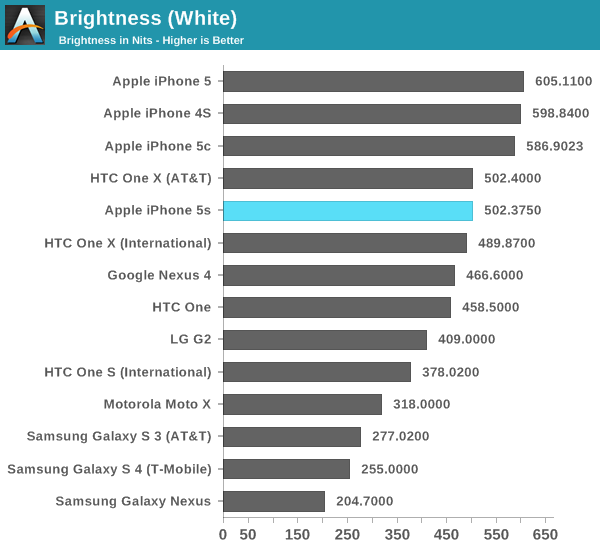

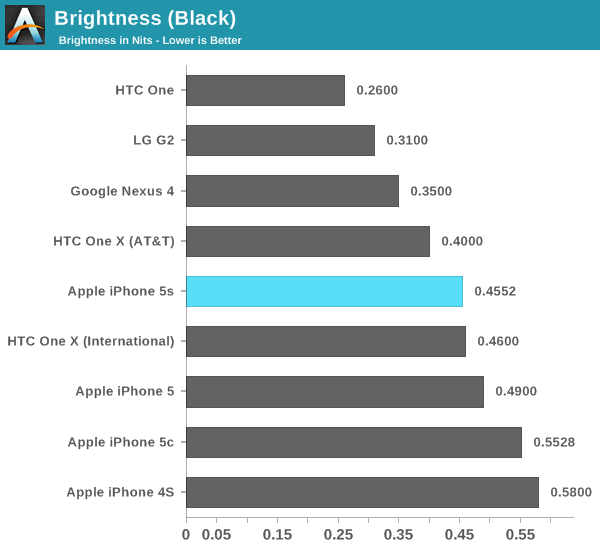

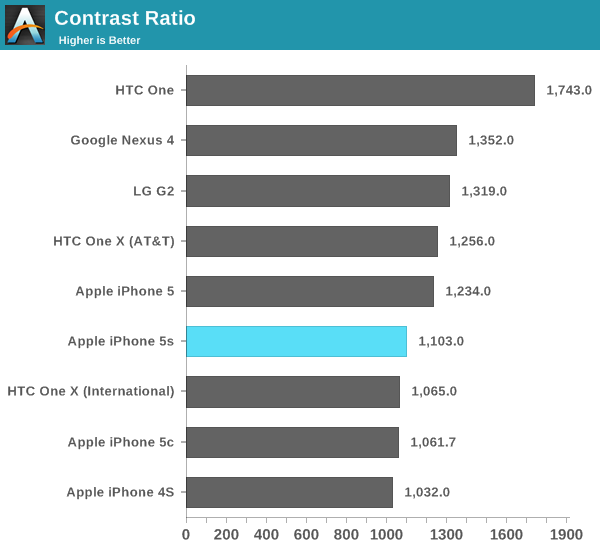

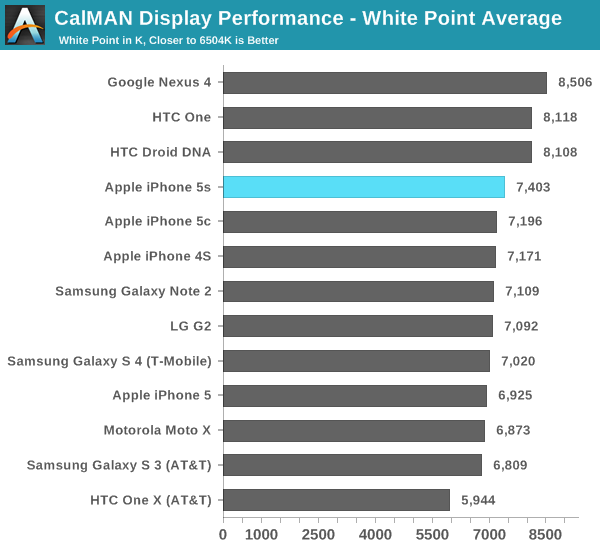

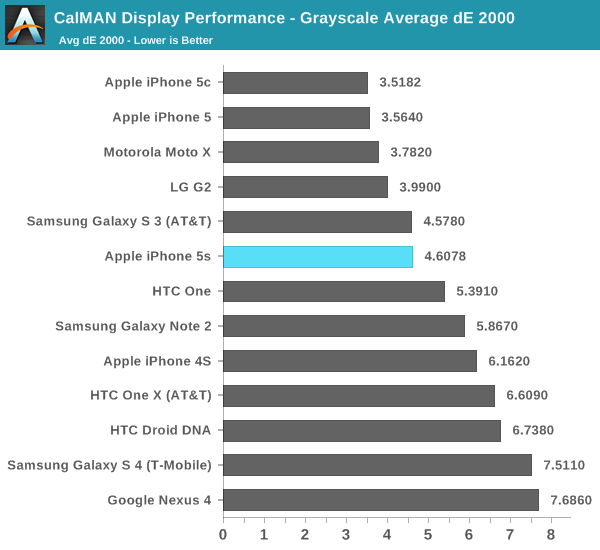

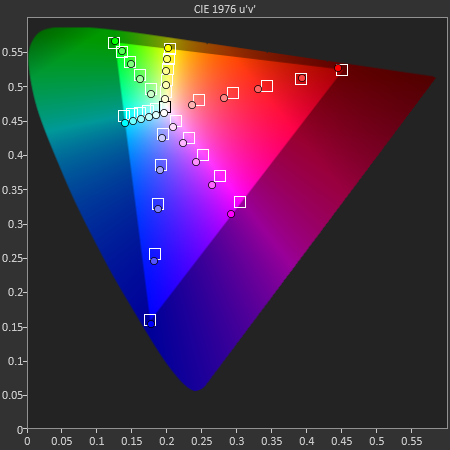

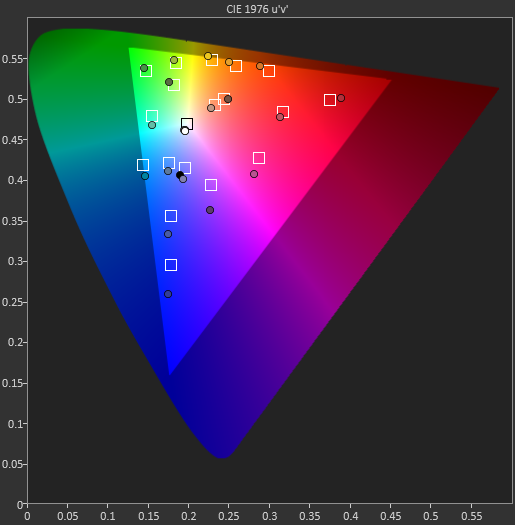

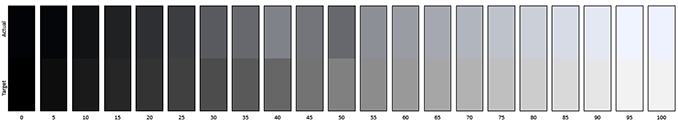

The 5s’ display remains excellent and well calibrated from the factory. In an unusual turn of events, my iPhone 5c sample came with an even better calibrated display than my 5s sample. It's a tradeoff: the 5c panel I had could go much brighter than the 5s panel, but its black levels were also higher. As a result, the contrast ratio ended up being very similar between the two devices. I've covered the panel lottery in relation to the MacBook Air, but it's good to remember that the same sort of multi-sourced components exist in mobile as well.
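Why a brighter panel with higher black levels can land at the same contrast ratio: static contrast is simply white luminance divided by black luminance. Both terms rising in proportion leaves the ratio unchanged. The panel numbers below are made up for illustration and are not measurements from this review:

```python
def contrast_ratio(white_nits: float, black_nits: float) -> float:
    """Static contrast ratio: full-white luminance over full-black luminance."""
    if black_nits <= 0:
        raise ValueError("black level must be positive")
    return white_nits / black_nits


# Hypothetical panels: A is brighter but has a proportionally higher black level
panel_a = contrast_ratio(white_nits=550.0, black_nits=0.44)
panel_b = contrast_ratio(white_nits=450.0, black_nits=0.36)
print(f"A: {panel_a:.0f}:1, B: {panel_b:.0f}:1")  # A: 1250:1, B: 1250:1
```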

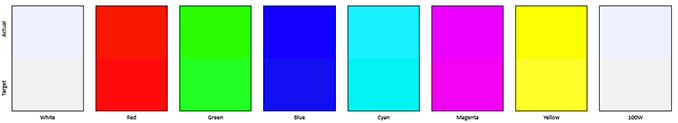

Color accuracy remains excellent right out of the box; only my iPhone 5c sample did better than the 5s in our color accuracy tests. Grayscale accuracy wasn't as good on my 5s sample, however.
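Color accuracy results like these are usually reported as a color error (Delta E) between what the panel displays and the target value, and lower is better. This section doesn't state which Delta E formula the charts use. The sketch below therefore uses the simplest one, CIE76, which is plain Euclidean distance in CIELAB. The sample Lab values are made up for illustration:

```python
import math
from typing import Sequence, Tuple

Lab = Tuple[float, float, float]  # (L*, a*, b*)


def delta_e_76(measured: Lab, target: Lab) -> float:
    """CIE76 color difference: Euclidean distance between two CIELAB colors."""
    return math.dist(measured, target)


def mean_delta_e(measured: Sequence[Lab], targets: Sequence[Lab]) -> float:
    """Average CIE76 error over a set of test patches (e.g. a grayscale ramp)."""
    if len(measured) != len(targets) or not measured:
        raise ValueError("need equal, non-empty lists of measured and target colors")
    return sum(delta_e_76(m, t) for m, t in zip(measured, targets)) / len(measured)


# Hypothetical patches: one off-target, one perfect
measured = [(52.0, 13.0, 16.0), (0.0, 0.0, 0.0)]
targets = [(50.0, 10.0, 10.0), (0.0, 0.0, 0.0)]
print(mean_delta_e(measured, targets))  # 3.5
```

For a grayscale ramp, every target has a* = b* = 0. Any color tint in the panel's grays therefore shows up directly as error.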

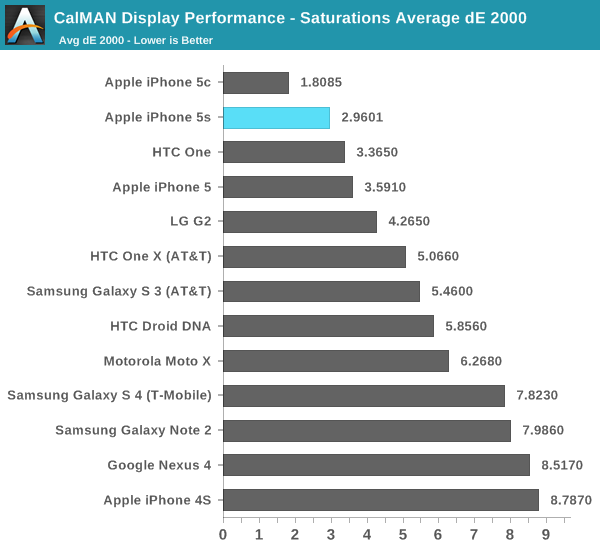

Saturations:

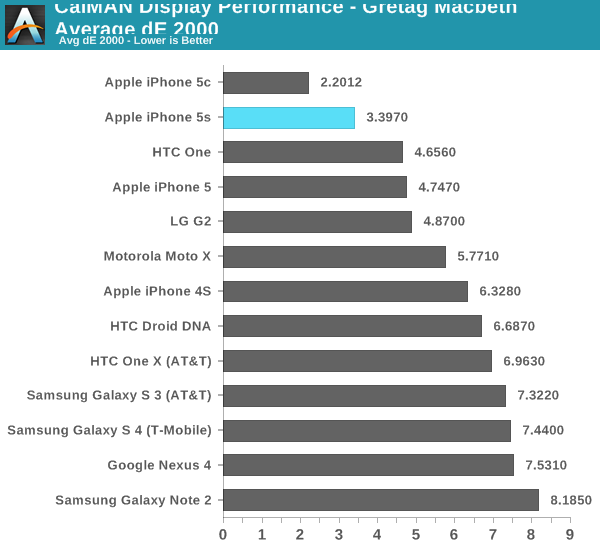

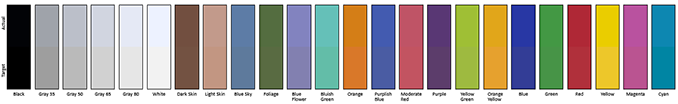

GMB Color Checker:

Cellular

When early PCB shots of the 5s leaked, I remember Brian counting solder pads on the board to figure out whether Apple had moved to a new Qualcomm baseband. Unfortunately, his count matched the existing MDM9x15-based designs, which is what ended up launching. It’s unclear whether MDM9x25 simply wasn’t ready in time to be integrated into the iPhone 5s design, or whether there was some other reason Apple chose not to implement it here. Regardless of the why, the result is effectively the same cellular capabilities as the iPhone 5.

Apple tells us that the wireless stack in the 5c and 5s is all new, but the lack of LTE-Advanced features like carrier aggregation and Category 4 150Mbps downlink makes it likely that we’re looking at an MDM9x15 derivative at best. LTE-A support isn’t an issue at launch; however, as Brian mentioned on our mobile show, it’s quickly going to become a much-needed feature for making efficient use of spectrum and delivering data in the most power-efficient way.

The first part is relatively easy to understand. Carrier aggregation gives mobile network operators the ability to combine spectrum across non-contiguous frequency bands to service an area. The resulting increase in spectrum can be used to improve performance and/or support more LTE customers in areas with limited present-day LTE spectrum.
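Here is a back-of-the-envelope sketch of why aggregation helps. LTE peak downlink scales roughly with channel bandwidth, and Category 4's 150Mbps corresponds to 20MHz of spectrum with 2x2 MIMO. The sketch makes several simplifying choices:

- It assumes that linear rule of thumb (7.5Mbps per MHz).
- It ignores real-world overhead and scheduling.
- It models a hypothetical operator holding two separate 10MHz blocks.
- A 100Mbps Category 3 device without aggregation serves as the comparison.

```python
from typing import List

# Rule-of-thumb assumption: with 2x2 MIMO, LTE peak downlink scales roughly
# linearly with bandwidth, about 150 Mbps per 20 MHz (7.5 Mbps per MHz).
MBPS_PER_MHZ_2X2 = 150 / 20


def peak_downlink_mbps(carriers_mhz: List[int], device_cap_mbps: float,
                       supports_ca: bool) -> float:
    """Idealized peak rate: without carrier aggregation the device can only use
    one carrier (the widest); with it, the component carriers' bandwidth adds up."""
    usable_mhz = sum(carriers_mhz) if supports_ca else max(carriers_mhz)
    return min(usable_mhz * MBPS_PER_MHZ_2X2, device_cap_mbps)


# Hypothetical operator with two non-contiguous 10 MHz LTE blocks
blocks = [10, 10]
print(peak_downlink_mbps(blocks, device_cap_mbps=100, supports_ca=False))  # 75.0
print(peak_downlink_mbps(blocks, device_cap_mbps=150, supports_ca=True))   # 150.0
```

In this idealized model, the same spectrum delivers twice the peak rate when the device can aggregate it. The operator could equally spend that extra capacity on serving more users.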

The second part, improving power efficiency, has to do with the same race-to-sleep principle we’ve talked about for years: the faster your network connection, the sooner your modem can finish transacting data and fall back into a lower-power sleep state.
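The race-to-sleep argument is easy to put into numbers. This minimal sketch makes two simplifications. First, it assumes (unrealistically) that the modem draws the same power while active regardless of link speed. Second, it uses made-up power figures and a fixed 10-second window:

```python
def window_energy_mj(payload_mbit: float, link_mbps: float,
                     active_mw: float, sleep_mw: float, window_s: float) -> float:
    """Energy (millijoules) over a fixed window: the modem is active while the
    payload transfers, then sleeps for the rest of the window. mW x s = mJ."""
    active_s = payload_mbit / link_mbps
    if active_s > window_s:
        raise ValueError("transfer does not fit in the window")
    return active_mw * active_s + sleep_mw * (window_s - active_s)


# Hypothetical: 40 Mbit of data, 1000 mW active, 10 mW asleep, 10 s window
slow = window_energy_mj(40, link_mbps=10, active_mw=1000, sleep_mw=10, window_s=10)
fast = window_energy_mj(40, link_mbps=40, active_mw=1000, sleep_mw=10, window_s=10)
print(slow, fast)  # 4060.0 1090.0
```

In practice a faster link draws more power while active, so the real gap is smaller than this. The faster connection still comes out ahead as long as the drop in transfer time outweighs the rise in active power.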

The 5s’ omission of LTE-A likely doesn’t have immediate implications, but those who hold onto their devices for a long time will have to deal with the fact that they’re buying at the tail end of a transition to a new group of technologies.

In practice I didn’t notice substantial speed differences between the iPhone 5s, 5c and the original iPhone 5. My testing period was a bit too brief to characterize the device adequately, but I didn’t have any complaints. The 5s retains the same antenna configuration as the iPhone 5, complete with receive diversity. As Brian discovered after the launch, the Verizon iPhone 5s doesn’t introduce another transmit chain, so simultaneous voice and LTE still isn’t possible on that device.

Apple is proud of its support for up to 13 LTE bands on some SKUs. Despite the increased LTE band support, there are still a lot of iPhone 5s SKUs that will ship worldwide:

Apple iPhone 5S and 5C Banding

| iPhone Model | GSM / EDGE Bands | WCDMA Bands | FDD-LTE Bands | TDD-LTE Bands | CDMA 1x / EVDO Rev A/B Bands |
|---|---|---|---|---|---|
| 5S A1533 (GSM) | 850, 900, 1800, 1900 MHz | 850, 900, 1700/2100, 1900, 2100 MHz | 1, 2, 3, 4, 5, 8, 13, 17, 19, 20, 25 | N/A | N/A |
| 5S A1533 (CDMA) | 850, 900, 1800, 1900 MHz | 850, 900, 1700/2100, 1900, 2100 MHz | 1, 2, 3, 4, 5, 8, 13, 17, 19, 20, 25 | N/A | 800, 1700/2100, 1900, 2100 MHz |
| 5S A1453 | 850, 900, 1800, 1900 MHz | 850, 900, 1700/2100, 1900, 2100 MHz | 1, 2, 3, 4, 5, 8, 13, 17, 18, 19, 20, 25, 26 | N/A | 800, 1700/2100, 1900, 2100 MHz |
| 5S A1457, 5C A1507 | 850, 900, 1800, 1900 MHz | 850, 900, 1900, 2100 MHz | 1, 2, 3, 5, 7, 8, 20 | N/A | N/A |
| 5S A1530, 5C A1529 | 850, 900, 1800, 1900 MHz | 850, 900, 1900, 2100 MHz | 1, 2, 3, 5, 7, 8, 20 | 38, 39, 40 | N/A |

Apple iPhone 5S/5C FCC IDs and Models

| FCC ID | Model |
|---|---|
| BCG-E2642A | A1453 (5S), A1533 (5S) |
| BCG-E2644A | A1456 (5C), A1532 (5C) |
| BCG-E2643A | A1530 (5S) |
| BCG-E2643B | A1457 (5S) |
| BCG-E2694A | A1529 (5C) |
| BCG-E2694B | A1507 (5C) |

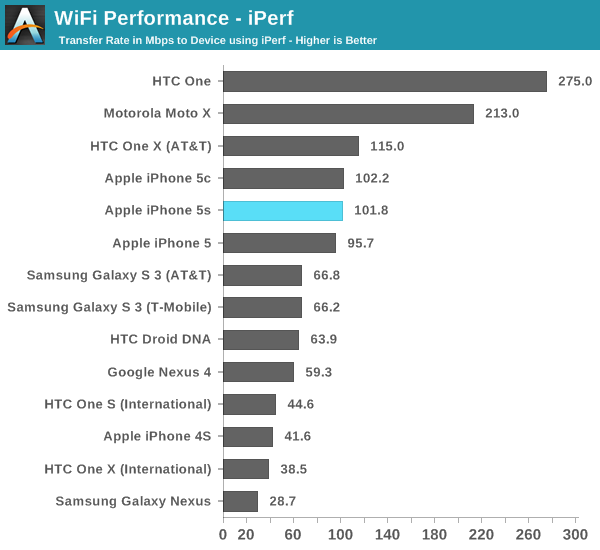

WiFi

WiFi connectivity also remains unchanged on the iPhone 5s. Dual-band (2.4/5GHz) 802.11n (up to 150Mbps) is the best you’ll get out of the 5s. We expected Apple to move to 802.11ac like some of the other flagship devices we’ve seen in the Android camp, but it looks like you’ll have to wait another year for that.

I don’t believe you’re missing out on much without 802.11ac support today, but over the life of the iPhone 5s I do expect greater deployment of 802.11ac networks (which can bring either performance or power benefits to a mobile platform).
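Here is where the 150Mbps ceiling comes from. A Wi-Fi link rate is the number of data bits carried per OFDM symbol divided by the symbol time. The sketch rests on these assumptions:

- A single spatial stream, which is what the 150Mbps figure implies.
- A 40MHz channel at the top 64-QAM, rate-5/6 modulation.
- The short 0.4µs guard interval.

For comparison, the 802.11ac line uses an 80MHz channel with 256-QAM. Both are PHY link rates, not real-world throughput:

```python
def phy_rate_mbps(data_subcarriers: int, bits_per_subcarrier: int,
                  coding_rate: float, streams: int = 1,
                  symbol_us: float = 3.6) -> float:
    """OFDM PHY rate: data bits per symbol divided by symbol duration.
    3.6 us = 3.2 us symbol + 0.4 us short guard interval. Bits/us == Mbps."""
    bits_per_symbol = streams * data_subcarriers * bits_per_subcarrier * coding_rate
    return bits_per_symbol / symbol_us


# 802.11n: 40 MHz channel (108 data subcarriers), 64-QAM (6 bits), rate 5/6
n_rate = phy_rate_mbps(108, 6, 5 / 6)
# 802.11ac: 80 MHz channel (234 data subcarriers), 256-QAM (8 bits), rate 5/6
ac_rate = phy_rate_mbps(234, 8, 5 / 6)
print(f"{n_rate:.1f} Mbps vs {ac_rate:.1f} Mbps")  # 150.0 Mbps vs 433.3 Mbps
```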

WiFi performance seems pretty comparable to the iPhone 5. The HTC One and Moto X pull ahead here as they both have 802.11ac support.

464 Comments


MatthiasP - Tuesday, September 17, 2013

Wow, first real review on the web AND deep as always, a very nice job from Anand. :)

sfaerew - Wednesday, September 18, 2013

Are the benchmarks (GFXBench 2.7, 3DMark, Basemark X, etc.) AArch64 versions? There is a 30~40% performance gap between the 32-bit and 64-bit versions of Geekbench:

INT (ST): 1471 vs 1065

FP (ST): 1339 vs 983
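The 30~40% figure is consistent with the single-threaded scores quoted in that comment. A quick check, taking the higher number in each pair as the 64-bit result:

```python
def pct_gain(new: float, old: float) -> float:
    """Percentage improvement of `new` over `old`."""
    return (new / old - 1) * 100


# Single-threaded Geekbench scores from the comment: 64-bit vs 32-bit build
int_gain = pct_gain(1471, 1065)
fp_gain = pct_gain(1339, 983)
print(f"INT: +{int_gain:.0f}%, FP: +{fp_gain:.0f}%")  # INT: +38%, FP: +36%
```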

Wilco1 - Wednesday, September 18, 2013

And Bay Trail's Geekbench scores at 2.4GHz: 1063 (INT), 866 (FP). So the A7 has already beaten BT by a huge margin, despite BT not even being for sale yet...

TraderHorn - Wednesday, September 18, 2013

You're comparing the 64-bit A7 vs 32-bit BT. The 32-bit numbers are dead even. It'll be interesting to see if BT gets a similar performance boost when 64-bit versions of Win8 are released in 1H 2014.

Wilco1 - Wednesday, September 18, 2013

BT's 32-bit result includes hardware-accelerated AES, which skews its score (without it, its score is ~936). The 64-bit A7 result also uses hardware acceleration, so it is more comparable.

Yes, BT will get a speedup from 64-bit as well, but not nearly as much as the A7 gets: its 32-bit result already includes the AES acceleration, and x64 isn't nearly as different from x86 as A64 is from A32.

However, the interesting thing is not that the A7 wins by a good margin even in 32-bit, but that it wins despite running at almost half the frequency of Bay Trail... Forget about Bay Trail, this is Haswell territory: the MacBook Air with the 15W 3.3GHz i7-4650U scores 3024 INT and 3003 FP.

Now imagine a quad-core tablet/laptop version of the A7 running at 2GHz on TSMC 20nm next year.
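The per-clock point can be checked against the 32-bit integer scores quoted in this thread. The sketch makes three assumptions. The A7 runs at 1.3GHz, the figure cited further down the thread. Bay Trail holds its 2.4GHz clock for the whole run. Geekbench scores scale linearly with clock, which is only a rough approximation:

```python
def score_per_ghz(score: float, clock_ghz: float) -> float:
    """Naive per-clock throughput: benchmark score divided by clock speed."""
    return score / clock_ghz


# 32-bit single-threaded Geekbench INT scores quoted in the comments above
a7 = score_per_ghz(1065, 1.3)   # A7 at an assumed 1.3 GHz
bt = score_per_ghz(1063, 2.4)   # Bay Trail at 2.4 GHz
print(f"A7: {a7:.0f} per GHz, Bay Trail: {bt:.0f} per GHz, ratio {a7 / bt:.2f}x")
```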

smartypnt4 - Wednesday, September 18, 2013

Why does the frequency matter? If the TDPs of the chips are similar (Bay Trail was tested and verified by Anand as using 2.5W at the SoC level under load), who gives a flip about the frequency?

If Apple wanted to double the frequency of the chip, they'd need something on the order of 4x the power it already consumes (assuming a back-of-the-napkin quadratic relationship, which is approximately correct), putting it at ~6-8W or so at full load. That's assuming such scaling could even be done, which is unlikely given that Apple built the thing to run at 1.3GHz max. You can't just say "oh, I want these to switch faster, so let's up the voltage." There's more that goes into the ability to scale voltage than just the process node you're on.

Now, I will agree that this does prove that if Apple really wanted to, they could build something to compete with Haswell in terms of raw throughput. Next year's A8 or whatever probably will compete directly with Haswell in raw theoretical integer and FP throughput, if Apple manages to double performance again. That's not a given since they had to use ~50% more transistors to get a performance doubling from the A6 to the A7, and building a 1.5B transistor chip is nontrivial since yields are inversely proportional to the number of transistors you're using.

Next year will be really interesting, though, what with Apple's next stuff, Broadwell, the first A57 designs, Airmont, and whatever Qualcomm puts out (haven't seen anything on that, which is odd for Qualcomm).
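The power-scaling argument in this comment rests on the first-order CMOS dynamic power model, P ≈ C·V²·f. Here is a minimal sketch of how the multiplier depends on how much voltage has to rise. It assumes switched capacitance stays fixed (same design, same process) and ignores leakage:

```python
def relative_dynamic_power(freq_ratio: float, voltage_ratio: float) -> float:
    """First-order dynamic power P ~ C * V^2 * f relative to a baseline,
    with capacitance C held constant and leakage ignored."""
    return voltage_ratio ** 2 * freq_ratio


same_voltage = relative_dynamic_power(2.0, 1.0)       # 2x: clock alone
quadratic = relative_dynamic_power(2.0, 2.0 ** 0.5)   # ~4x: the comment's estimate
voltage_tracks_f = relative_dynamic_power(2.0, 2.0)   # 8x: V scaled with f
print(same_voltage, round(quadratic, 6), voltage_tracks_f)  # 2.0 4.0 8.0
```

The comment's 4x figure corresponds to roughly a 41% voltage increase. If voltage instead had to rise in proportion to frequency, the same model gives 8x, which would only strengthen the comment's point.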

Wilco1 - Wednesday, September 18, 2013

Frequency & process matter. Current phones use about 2W at max load without the screen (see the recent Nexus 7 test), so the claimed 2.5W just for BT is way too much for a phone. That means (as you explained) it must run at a lower frequency and voltage to get into phones; my guess is we won't see anything faster than the Z3740 with a max clock of 1.8GHz. Therefore the A7 will extend its lead even further.

According to TSMC, 20nm will give a 30% frequency boost at the same power. So I'd expect that a 2GHz A7 would be possible on 20nm using only 35% more power. That means the A7 would get 75% more performance at a small cost in power consumption. This is without adding any extra transistors.

Add some tweaks (like faster memory) and such a 2GHz A7 would be similar in performance to the 15W Haswell in the MacBook Air. So my point is that with a die shrink and a slight increase in power, they already have a Haswell competitor.

smartypnt4 - Wednesday, September 18, 2013

Frequency and process matter in that they affect power consumption. If Intel can get Bay Trail to do 2.4GHz on something like 1.0V, then the power should be fine. Current Haswell stuff tops out its voltage around 1.1V or so in laptops (if memory serves), so that's not unreasonable.

All of this assumes Geekbench is valid for comparing HSW on Win8 to ARMv8/Cyclone on iOS, which I have serious reservations about attempting to do.

The other issue I have is this: you're talking about a 50% clock boost giving a 100% increase in performance if we look at the Geekbench scores. That's simply not possible. Had you said "raise the clock to 1.6-1.7GHz and give it 4 cores," I'd be right behind you in a 2x theoretical performance increase. But a 50% clock boost will never yield a 100% increase with the same core, even if you change the memory controller.

Also, somehow your math doesn't add up for power... Are you hypothesizing that a 2GHz A7 (with 75% of the performance of the 15W Haswell as per Geekbench, not the same) can pull 2.6W while Haswell needs 15W to run that test? Granted, Haswell integrates things that the A7 doesn't. Namely, more advanced I/O (PCIe, SATA, USB, etc.), and the PCH. Using very fuzzy math, you can claim all of that uses 1/2 the power of the chip.

That brings Haswell's power for compute down to 7-8W, more or less. And you're going to tell me that Apple has figured out how to get 75% of the performance of a 7W part in 2.6W, and Intel hasn't? Both companies have ~100k employees. One is working on a ton of different stuff, and one makes processors basically exclusively (SSDs and WiFi stuff too, but processors are their main focus). You're telling me that a (relatively) small cadre of guys at Apple have figured out how to do it, and Intel hasn't done it yet on a part that costs ~6x as much after trying to get deep into the mobile space for years. I find that very hard to believe.

Even with the 14nm shrink next year, you're talking about a 30% power savings for Intel's stuff. That brings the 15W total down to 10.5W, and the (again, super, ridiculously fuzzy) computing power to ~5-6W. On a full node smaller than what Apple has access to. And you're saying they'd hypothetically compete in throughput with a 2.6W part. I'm not sure I believe that.

Then again, I suppose theoretical bandwidth could be competitive. That's simply a factor of your peak IPC, not your average IPC while the device is running. I don't know enough about the low level architecture of the A7 (no one does), so I'll just leave it here I guess.

I'm gonna go now... I'm starting to reason in circles.

Wilco1 - Wednesday, September 18, 2013

The sort of "simple" tweaks I was thinking of are: an improved memory controller and prefetcher, doubling of L2, larger branch predictor tables. Assuming a 30% gain due to those tweaks, the result is a 100% speedup at 2GHz (1.3 to 2.0 GHz is a 54% speedup, so you get 1.54 * 1.3 = 2.0x perf). The 30% gain due to tweaks is pure speculation of course, however NVidia claims 15-30% IPC gain for similar tweaks in Tegra 4i, so it's not entirely implausible. As you say, a much simpler alternative would be just to double the cores, but then your single-threaded performance is still well below that of Haswell.

You can certainly argue some reduction in the 15W TDP of Haswell due to IO, however with Turbo it will try to use most of that 15W if it can (the Air goes up to 3.3GHz after all).

Yes, I am saying that a relative newcomer like Apple can compete with Intel. Intel may be large, but they are not infallible; after all, they made the P4, Itanium and Atom. A key reason AMD cited for moving into ARM servers was that designing an ARM CPU takes far less effort than designing an equivalently performing x86 one. So the ISA does still matter, despite some claiming it no longer does.
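Written out, the speedup arithmetic in this comment is straightforward. The clock increase alone gives about 1.54x, and that is the figure an earlier comment in the thread objected to. The 2x result rests entirely on the speculative 30% IPC gain: because that factor happens to equal the 1.3 in the base clock, the product comes out at exactly 2.0.

```python
base_clock_ghz = 1.3     # A7 clock cited in the thread
target_clock_ghz = 2.0   # hypothetical 20nm clock from the comment
ipc_gain = 1.30          # speculative 30% from "tweaks" (pure assumption, per the comment)

clock_gain = target_clock_ghz / base_clock_ghz
total_gain = clock_gain * ipc_gain
print(f"clock alone: {clock_gain:.2f}x, with tweaks: {total_gain:.2f}x")  # 1.54x, 2.00x
```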

smartypnt4 - Wednesday, September 18, 2013

My point wasn't that Apple can't compete; far from it. If anything, the A7 shows they can compete for the most part. However, what you suggest is that Apple could theoretically match Intel's performance on a process a full node larger, at half the power. I have no illusions that Intel is infallible. Stuff like Larrabee and the underwhelming GPU in Bay Trail prove that they aren't. I just seriously doubt that Apple could beat Intel at its own game. Specifically, in CPU performance, which is an area it's dominated for years. It's possible, but I find it relatively unlikely, especially this early in Apple's lifetime as a chip designer.

On a different note, after looking at the Geekbench results more, I feel like it's improperly weighted. The massive performance improvement in AES and SHA encryption may be skewing the overall result... I need to dig more into Geekbench before coming to an actual conclusion. I'm also still not convinced that comparing cross-platform results is actually valid. I'd like to believe it is, but I've always had reservations about it.