The 2013 MacBook Air Review (13-inch)

by Anand Lal Shimpi on June 24, 2013 12:01 AM EST

Real World 802.11ac Performance Under OS X

A good friend of mine recently bought an older house and had been contemplating running a bunch of Cat6 through the crawlspace to get good, high-speed connectivity throughout his home. Pretty stoked about what I found with 802.11ac performance on the MacBook Air, I thought I had come across a much easier solution to his problem. I shared my iPerf data with him, but he responded with a totally valid question: was I seeing those transfer rates in real-world file copies?

I have an iMac running Mountain Lion connected over Gigabit Ethernet to my network. I mounted an AFP share on the MacBook Air connected over 802.11ac and copied a movie over.

21.2MB/s or 169.6Mbps is the fastest I saw.

Hmm. I connected the iMac to the same ASUS RT-AC66U router as the MacBook Air. Still 21.2MB/s.

I disabled all other wireless devices in my office. Still no difference. I switched Ethernet cables, tried different Macs, tried copying from a PC, and even tried copying smaller files; none of these changes did anything. At most, I saw 21.2MB/s over 802.11ac.

I double checked my iPerf data. 533Mbps. Something weird was going on.

I plugged in Apple’s Thunderbolt Gigabit Ethernet adaptor and saw 906Mbps; clearly the source and the MacBook Air were both capable of high-speed transfers.

What I tried next gave me some insight into what was going on. I set up web and FTP servers on the MacBook Air and transferred files that way. I didn’t get 533Mbps, but I broke 300Mbps. For some reason, copying over AFP or SMB shares was limited to much lower performance. This was a protocol issue.

Digging Deeper, Finding the Culprit

A major component of TCP networking, and what guarantees reliable data transmission, is the fact that all transfers are acknowledged and retransmitted if necessary. How frequently transfers are acknowledged has big implications for performance. Acknowledge (ACK) too frequently and you’ll get terrible throughput, as the sender has to stop and wait for however long an ACK takes to travel across the network. Acknowledge too rarely, on the other hand, and you run the risk of doing a lot of wasted work in suboptimal network conditions. The TCP window size is the variable that defines this balance.
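To make the cost of waiting on ACKs concrete, here is a minimal Python sketch of the extreme case: a stop-and-wait sender that transmits one segment and then idles for a full round trip before sending the next. The 1460-byte segment size (a typical Ethernet MSS) is an assumption; the 2.8ms round trip and 533Mbps link rate are the figures measured later in this article. The function name is illustrative.

```python
def stop_and_wait_mbps(segment_bytes: int, rtt_s: float, link_mbps: float) -> float:
    """Throughput when every segment must be ACKed before the next one is sent."""
    # Time needed to put one segment on the wire at the link rate.
    serialization_s = segment_bytes * 8 / (link_mbps * 1e6)
    # Exactly one segment is delivered per (serialization + round trip) interval.
    return segment_bytes * 8 / (serialization_s + rtt_s) / 1e6

# Assumed 1460-byte MSS; 2.8ms RTT and 533Mbps link from the article's measurements.
print(f"{stop_and_wait_mbps(1460, 2.8e-3, 533.0):.2f}Mbps")
```

Even on a 533Mbps link, waiting a full round trip per segment caps throughput at roughly 4Mbps, which is why TCP keeps a window of unacknowledged data in flight instead.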

TCP window size defines the maximum amount of unacknowledged data that can be in flight before the sender has to wait for an acknowledgement. Modern TCP implementations support dynamic scaling of the TCP window in order to optimize for higher-bandwidth interfaces.
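The 64KB figure that comes up later has a protocol-level origin: the window field in the TCP header is only 16 bits wide, so without the window scale option (RFC 7323) a receiver can advertise at most 65,535 bytes. The option negotiates a shift count (at most 14) during the handshake, and the advertised value is multiplied by 2^shift. A minimal Python sketch of that arithmetic; the function names are illustrative.

```python
MAX_RAW_WINDOW = 0xFFFF  # the TCP header's window field is 16 bits
MAX_SHIFT = 14           # largest shift count RFC 7323 allows

def effective_window(raw_window: int, shift: int) -> int:
    """Receive window in bytes after applying the negotiated scale factor."""
    if not 0 <= raw_window <= MAX_RAW_WINDOW:
        raise ValueError("window field is 16 bits")
    if not 0 <= shift <= MAX_SHIFT:
        raise ValueError("shift count must be 0..14")
    return raw_window << shift

def min_shift_for(window_bytes: int) -> int:
    """Smallest shift count that lets the 16-bit field express window_bytes."""
    shift = 0
    while (MAX_RAW_WINDOW << shift) < window_bytes:
        shift += 1
        if shift > MAX_SHIFT:
            raise ValueError("window too large even with maximum scaling")
    return shift

print(effective_window(0xFFFF, 0), min_shift_for(256 * 1024))
```

With no scaling the ceiling is 65,535 bytes, just under 64KB; advertising a 256KB window needs a shift count of at least 3.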

If you know the round-trip latency of a network, the TCP window size, and the maximum bandwidth that can be delivered over the connection, you can actually calculate the maximum usable bandwidth on the network.

The ratio of the TCP window size to the network’s bandwidth-delay product (link bandwidth × round-trip time), multiplied by the link bandwidth and capped at 100%, gives us that max bandwidth number.
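Written out, with link bandwidth $B$, round-trip time $RTT$ and TCP window $W$ (symbols introduced here for convenience), the window-limited maximum throughput $T_{\max}$ is:

```latex
\begin{aligned}
\text{BDP} &= B \times RTT \\
T_{\max} &= B \times \min\!\left(1, \frac{W}{\text{BDP}}\right) = \min\!\left(B, \frac{W}{RTT}\right)
\end{aligned}
```

The second form shows that once the window is smaller than the BDP, throughput depends only on the window and the round trip, not on the link rate.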

The 2-stream 802.11ac in the new MacBook Air supports link rates of up to 867Mbps. My iPerf data showed ~533Mbps of usable bandwidth in the best conditions. Round-trip latency over 50 ping requests between the MBA client and an iMac host wired over Gigabit Ethernet averaged 2.8ms. The bandwidth-delay product is 533Mbps × 2.8ms, or 186,550 bytes. Now let’s look at the maximum usable bandwidth as a function of TCP window size:

Impact of TCP Window Size on 802.11ac Transfer Rates, 533Mbps Link, 2.8ms Latency

| Window Size | Bandwidth-Delay Product | TCP Window/BDP | Percentage | Link Bandwidth | Max Realized Bandwidth |
|---|---|---|---|---|---|
| 32KB | 186,550B | 32,768B/186,550B | 17.6% | 533Mbps | 93.6Mbps |
| 64KB | 186,550B | 65,536B/186,550B | 35.1% | 533Mbps | 187.2Mbps |
| 128KB | 186,550B | 131,072B/186,550B | 70.3% | 533Mbps | 374.5Mbps |
| 256KB | 186,550B | 262,144B/186,550B | 140.5% | 533Mbps | 533Mbps |
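The table’s arithmetic can be reproduced in a few lines of Python. This is a sketch that simply applies the window/BDP ratio and caps the result at the link rate; the function and constant names are illustrative.

```python
LINK_MBPS = 533.0   # best-case iPerf throughput over 802.11ac
RTT_S = 2.8e-3      # average round-trip time over 50 pings

def bdp_bytes(link_mbps: float, rtt_s: float) -> float:
    """Bandwidth-delay product in bytes."""
    return link_mbps * 1e6 * rtt_s / 8

def max_realized_mbps(window_bytes: int, link_mbps: float, rtt_s: float) -> float:
    """Window-limited throughput, capped at the link bandwidth."""
    ratio = window_bytes / bdp_bytes(link_mbps, rtt_s)
    return link_mbps * min(1.0, ratio)

if __name__ == "__main__":
    bdp = bdp_bytes(LINK_MBPS, RTT_S)
    for kib in (32, 64, 128, 256):
        window = kib * 1024
        pct = 100 * window / bdp
        mbps = max_realized_mbps(window, LINK_MBPS, RTT_S)
        print(f"{kib:>4}KB  {pct:6.1f}%  {mbps:6.1f}Mbps")
```

For the 64KB row it gives 65,536 / 186,550 ≈ 35.1% of the link, or about 187.2Mbps.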

Of the window sizes listed, only 256KB exceeds the 186,550-byte bandwidth-delay product, so it’s the only one that can deliver the full 533Mbps.
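Strictly speaking, the window only has to cover the bandwidth-delay product, about 182KB here. A small Python sketch that computes that minimum and rounds it up to the next power-of-two window size; the helper name is illustrative, and real stacks are not required to use power-of-two windows.

```python
import math

def smallest_pow2_window(link_mbps: float, rtt_s: float) -> int:
    """Smallest power-of-two window (in bytes) that is at least the BDP."""
    bdp = link_mbps * 1e6 * rtt_s / 8          # bandwidth-delay product in bytes
    return 1 << math.ceil(math.log2(bdp))

bdp = 533e6 * 2.8e-3 / 8
window = smallest_pow2_window(533.0, 2.8e-3)
print(f"BDP = {bdp / 1024:.1f}KB, smallest power-of-two window = {window // 1024}KB")
```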

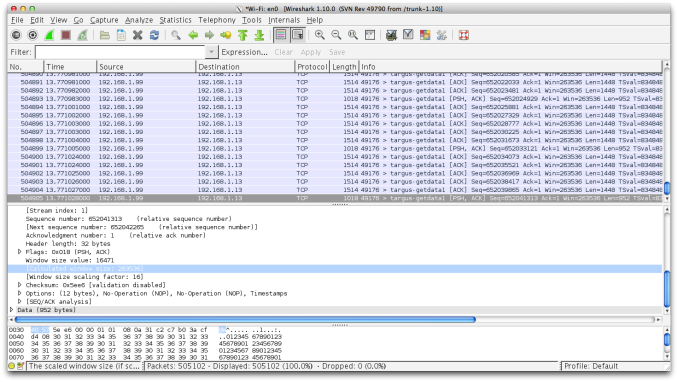

I re-ran my iPerf test and sniffed the packets that went by to confirm the TCP window size during the test. The results came back as expected. OS X properly scaled up the TCP window to 256KB, which enabled me to get the 533Mbps result:

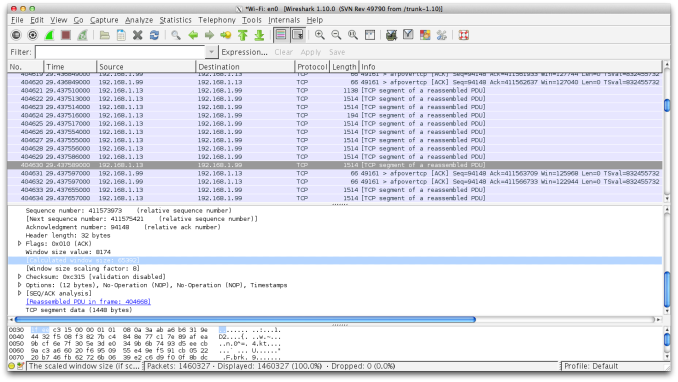
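What a packet sniffer reports as the window is the 16-bit field at byte offset 14 of the TCP header, multiplied by the scale factor negotiated during the SYN handshake. A minimal Python sketch of that decoding using only the standard library; the header below is a hypothetical one built by hand for illustration, not a packet from the author’s capture.

```python
import struct

# First 20 bytes of a TCP header: src port, dst port, seq, ack,
# data offset/reserved/flags (16 bits), window, checksum, urgent pointer.
TCP_HEADER = struct.Struct("!HHIIHHHH")

def scaled_window(tcp_header: bytes, shift: int) -> int:
    """Advertised receive window in bytes, applying the window scale shift."""
    fields = TCP_HEADER.unpack_from(tcp_header)
    raw_window = fields[5]  # bytes 14-15 of the header
    return raw_window << shift

# Hypothetical ACK from a client port (50000) to AFP's TCP port 548:
# data offset 5 words, ACK flag set, raw window 32768, negotiated shift 3.
offset_flags = (5 << 12) | 0x010
hdr = TCP_HEADER.pack(50000, 548, 1, 1, offset_flags, 32768, 0, 0)
print(scaled_window(hdr, 3))
```

Note that the raw field alone can never show more than 65,535; the shift count comes from the handshake, so a capture has to include the SYN packets to report the true window.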

I then monitored packets going by while copying files over an AFP share and found my culprit:

OS X didn’t scale the TCP window size beyond 64KB, which limits performance to a bit above what I could get over 5GHz 802.11n on the MacBook Air. Interestingly enough, you can get better performance over HTTP or FTP, but in none of those cases would OS X scale the TCP window size to 256KB, thus artificially limiting 802.11ac.
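A quick sanity check on that explanation, using only numbers from this article: a 64KB window over a 2.8ms round trip caps throughput at about 187Mbps, and the fastest AFP copy (21.2MB/s) works out to 169.6Mbps, just under that ceiling. The Python sketch below assumes decimal megabytes, matching the article’s own 21.2MB/s = 169.6Mbps conversion; function names are illustrative.

```python
def mb_per_s_to_mbps(mb_per_s: float) -> float:
    """Convert megabytes per second to megabits per second (decimal units)."""
    return mb_per_s * 8

def window_ceiling_mbps(window_bytes: int, rtt_s: float) -> float:
    """Upper bound on TCP throughput imposed by the window: W / RTT."""
    return window_bytes * 8 / rtt_s / 1e6

observed = mb_per_s_to_mbps(21.2)                  # fastest AFP copy seen
ceiling = window_ceiling_mbps(64 * 1024, 2.8e-3)   # 64KB window, 2.8ms RTT
print(f"observed {observed:.1f}Mbps vs 64KB ceiling {ceiling:.1f}Mbps")
```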

I spent a good amount of time trying to work around this issue, even manually setting the TCP window size in OS X, but came up empty-handed. I’m not overly familiar with the networking stack in OS X, so it’s very possible that I missed something, but I’m confident in saying that there’s an issue here. At the risk of oversimplifying, it looks like the TCP window scaling algorithm in OS X’s WiFi networking stack has a hard limit that was tuned for 802.11n and is unaware of 802.11ac’s higher bandwidth capabilities. I should also add that the current developer preview of OS X Mavericks doesn’t fix the issue, nor does using an Apple 802.11ac router.
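For readers who want to experiment, one standard way for an application to ask for a larger TCP window is to enlarge its socket receive buffer before the connection is set up, since the window scale factor is negotiated in the SYN. A Python sketch using the standard socket module; this is not necessarily what the author tried, and the kernel is free to round, double or cap the request (on OS X the ceiling is governed by system limits such as kern.ipc.maxsockbuf). The helper name is illustrative.

```python
import socket

REQUESTED_RCVBUF = 256 * 1024  # ask for a 256KB receive buffer

def make_socket_with_rcvbuf(size: int) -> socket.socket:
    """Create a TCP socket and request a receive buffer of `size` bytes.

    Call this before connect()/listen() so the larger buffer can influence
    the window scale factor advertised in the SYN.
    """
    sock = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_RCVBUF, size)
    return sock

sock = make_socket_with_rcvbuf(REQUESTED_RCVBUF)
# Read back what the kernel actually granted; it may differ from the request.
granted = sock.getsockopt(socket.SOL_SOCKET, socket.SO_RCVBUF)
print(f"requested {REQUESTED_RCVBUF} bytes, kernel reports {granted}")
sock.close()
```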

The bad news is that, in its shipping configuration, the new MacBook Air is capable of some amazing transfer rates over 802.11ac, but you won’t see them when copying files between Macs or PCs. The good news is that the issue seems entirely confined to software. I’ve already passed along my findings to Apple. If I had to guess, I’d expect that we’ll see a software update addressing this.

233 Comments


seapeople - Tuesday, June 25, 2013

Brightness is pretty much the number one power consumer in a laptop like this (which is actually mentioned in the review). If you expect to run anything at 100% brightness and get anywhere near ideal battery life, then you are bound to be disappointed.

name99 - Monday, June 24, 2013

"802.11ac ... better spatial efficiency within those channels (256QAM vs. 64QAM in 802.11n). Today, that means a doubling of channel bandwidth and a 4x increase in data encoded on a carrier"

This is a deeply flawed statement in two ways.

(a) The modulation form describes (essentially) how many bits can be packed into a single up/down segment of a sinusoidal waveform, i.e. how many bits/Hz. It is constrained by the amount of noise in the channel (i.e. the signal-to-noise ratio), which smears different amplitudes together so that you can't tell them apart.

It can be improved somewhat over 802.11n by using a better error-correcting code, which essentially distributes the random noise over a number of bits: rather than a single large burst of noise destroying one bit's information, it gets spread out as a smaller amount of noise over multiple bits.

802.11ac uses LDPC, a better error-correcting code, which allows it to use more aggressive modulation.
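LDPC itself is too involved for a short example, but the basic idea of trading extra transmitted bits for resilience to noise can be shown with the simplest possible code, a 3x repetition code decoded by majority vote. This is a deliberately swapped-in toy, not LDPC and not anything 802.11 uses; function names are illustrative.

```python
def encode(bits: list[int]) -> list[int]:
    """Repeat every data bit three times."""
    return [b for b in bits for _ in range(3)]

def decode(coded: list[int]) -> list[int]:
    """Majority vote over each group of three received bits."""
    return [1 if sum(coded[i:i + 3]) >= 2 else 0 for i in range(0, len(coded), 3)]

data = [1, 0, 1, 1]
sent = encode(data)
received = sent.copy()
received[4] ^= 1            # noise flips one bit inside the second group
assert decode(received) == data
```

The cost here is two-thirds of the raw bit rate; stronger codes such as LDPC get far more protection per redundant bit, which, as the comment notes, is what allows more aggressive modulation.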

Point is, in all this the improved modulation has nothing to do with spatial encoding and spatial efficiency.
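The signal-to-noise constraint in (a) can be put in numbers with the Shannon-Hartley limit, C = B·log2(1 + SNR): reaching a spectral efficiency of η bits/s/Hz needs an SNR of at least 2^η − 1. A Python sketch, treating 64-QAM and 256-QAM as 6 and 8 bits/s/Hz as the comment does (an approximation that ignores coding overhead); this is a theoretical floor, and real receivers need noticeably more SNR than this.

```python
import math

def min_snr_db(bits_per_hz: float) -> float:
    """Shannon-Hartley lower bound on SNR (in dB) for a given spectral efficiency."""
    return 10 * math.log10(2 ** bits_per_hz - 1)

for name, eta in (("64-QAM", 6), ("256-QAM", 8)):
    print(f"{name}: at least {min_snr_db(eta):.1f} dB")
```

The roughly 6dB gap between the two is one reason 256-QAM is typically usable only with a strong, clean signal.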

(b) The 64 and 256 in QAM64 and QAM256 refer to the number of possible states per symbol, not in any way to the number of bits encoded. So QAM64 encodes 6 bits per symbol (log2 64) and QAM256 encodes 8 bits per symbol (log2 256), roughly 6 and 8 bits per Hz. The improvement is 8/6 ≈ 1.33, which is nice, but is not "a 4x increase in data encoded on a carrier".
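The arithmetic in (b), as a Python sketch: a constellation with M points carries log2(M) bits per symbol, so going from 64 to 256 points adds two bits, not a factor of four. The function name is illustrative.

```python
import math

def bits_per_symbol(constellation_points: int) -> int:
    """Bits carried by one QAM symbol with the given number of constellation points."""
    bits = math.log2(constellation_points)
    if not bits.is_integer():
        raise ValueError("constellation size must be a power of two")
    return int(bits)

gain = bits_per_symbol(256) / bits_per_symbol(64)
print(f"64-QAM: {bits_per_symbol(64)} bits, 256-QAM: {bits_per_symbol(256)} bits, gain {gain:.2f}x")
```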

We are close to the end of the line with fancy modulation. From here on out, pretty much all the heavy lifting comes from

(1) wider spectrum (see the 80 and 160MHz of 802.11ac) and

(2) smaller, more densely distributed base stations.
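The relative weight of wider spectrum versus denser modulation can be checked with the standard 802.11 OFDM PHY-rate formula: data subcarriers × bits per subcarrier × coding rate × spatial streams ÷ symbol time. The Python sketch below uses published 802.11n/ac parameters (108 data subcarriers at 40MHz, 234 at 80MHz, 5/6 coding, 3.6µs symbols with the short guard interval); for two streams at 80MHz it lands on the 867Mbps link rate the review quotes for the MacBook Air. The function name is illustrative.

```python
from fractions import Fraction

SYMBOL_TIME_SGI_S = 3.6e-6  # 3.2us OFDM symbol + 0.4us short guard interval

def phy_rate_mbps(data_subcarriers: int, bits_per_subcarrier: int,
                  coding_rate: Fraction, streams: int,
                  symbol_time_s: float = SYMBOL_TIME_SGI_S) -> float:
    """Peak PHY data rate for one OFDM configuration, in Mbps."""
    bits_per_ofdm_symbol = data_subcarriers * bits_per_subcarrier * coding_rate * streams
    return float(bits_per_ofdm_symbol) / symbol_time_s / 1e6

# 802.11n: 40MHz, 64-QAM, rate 5/6, 2 spatial streams
n_rate = phy_rate_mbps(108, 6, Fraction(5, 6), 2)
# 802.11ac: 80MHz, 256-QAM, rate 5/6, 2 spatial streams
ac_rate = phy_rate_mbps(234, 8, Fraction(5, 6), 2)
print(f"11n: {n_rate:.0f}Mbps, 11ac: {ac_rate:.0f}Mbps, ratio {ac_rate / n_rate:.2f}x")
```

The ratio decomposes as 234/108 ≈ 2.17x from the wider channel and 8/6 ≈ 1.33x from the denser modulation.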

We could move from 3 up to 4 spatial streams (perhaps using polarization to help out) but that's tough to push further without much larger antennas (and a rapidly growing computational budget).

There is one BIG opportunity for a one-time 2x improvement, namely tossing the 802.11 distributed MAC, which wastes half the time waiting randomly for one party or another to talk, and switching to a centrally controlled MAC (like the telcos) along with a very narrow RACH (random access channel) for lightweight tasks like paging and joining.

My guess/hope is that the successor to 802.11ac will consist primarily of the two issues I've described above (and so will look a lot more like new SW than new DSP algorithms), namely a central arbiter for a network along with the idea that, from the start, the network will consist of multiple small low-power cells working together, about one per room, rather than a single base station trying to reach out to 100 yards or more.

bittwiddler - Monday, June 24, 2013

• The keyboard key size and spacing are the same on the 11" and 13" MBAs.

• The 11" MBA is exempt from being removed from luggage during TSA screenings, unlike the 13".

• The 11" screen is lower height than most and doesn't get caught by the clip for the airplane seat tray table.

• When it comes to business travel computing, I'm not interested in a race to the bottom.

Sabresiberian - Monday, June 24, 2013

One thing I would NOT like is for Apple to make a move to a 16:9 screen. I'd certainly rather have 1440x900 on a 13" screen than anything denser that was 16:9. I mean, I'm one of the guys that has been harping on pixel density and refresh rates since before we had modern smart phones (the move to LCDs set us back a decade or more in that regard), but on a screen smaller than 27", 16:9 is just bad. In my not-so-humble opinion. 4:3 is better for something smaller than 17", but I can live with 16:10. :)

Kevin G - Monday, June 24, 2013

Re-reading through the review, I have a question about the display: does it use panel self refresh? I recall Intel hyping up this technology several years ago, and the Haswell slides in this review indicate support for it. The question is, does Apple take advantage of it?

Kevin G - Monday, June 24, 2013

I think that I can answer my own question. I couldn't find the data sheet for the review panel LSN133BT01A02, but references on the web point towards an early 2012 release for it. Thus it looks like it appeared on the market before panel self refresh was slated for widespread introduction alongside Haswell.

hobagman - Monday, June 24, 2013

Hi Anand & all -- could I ask a more CPU-related question I've been wondering about a lot: how come the die shots always look so colorful and diverse? Isn't the top layer all just interconnects? Or are the die shots actually taken before they add the interconnects, which make up the top 10-15 layers? Would really appreciate an explanation of this ...

hobagman - Monday, June 24, 2013

I mean, what are we actually seeing when we look at the die shot? Are those all different transistor regions? If so, we must be looking at the bottom layers. Or is it that the interconnects in the different regions look different ... or ... ?

SkylerSaleh - Tuesday, June 25, 2013

When making the ASIC, thin layers of glass are grown on the silicon, etched, and filled with metal to build the interconnects. This leaves small sharp geometric shapes in the glass, which reacts with the light similarly to how a prism would, causing the wafer to appear colorful.

cbrownx88 - Monday, June 24, 2013

Please please please revisit with the i7 config - been wanting to make a purchase but have been waiting for this review (and now waiting on the update lol).