A Look at Intel HD 5000 GPU Performance Compared to HD 4000

by Anand Lal Shimpi on June 24, 2013 6:02 PM EST

When I got my hands on a Haswell-based Ultrabook, Acer's recently announced S7, I was somewhat disappointed to learn that Acer had chosen to integrate Intel's HD 4400 (Haswell GT2) instead of the full-blown HD 5000 (Haswell GT3) option. I published some performance data comparing HD 4400 to the previous generation HD 4000 (Ivy Bridge GT2), but added that at some point I'd like to take a look at HD 5000 to see how much more performance it gets you. It turns out that Apple's entire 2013 MacBook Air lineup features Haswell GT3 (via the standard Core i5-4250U or the optional Core i7-4650U). Earlier today I published our review of the 2013 MBA. For those not interested in the MBA itself but curious about how Haswell GT3 stacks up in a very thermally limited configuration, I thought I'd do a separate post breaking out the findings.

In mobile, Haswell is presently available in five different graphics configurations:

Intel 4th Generation Core (Haswell) Mobile GPU Configurations

| | Intel Iris Pro 5200 | Intel Iris 5100 | Intel HD 5000 | Intel HD 4400 | Intel HD 4200 |
|---|---|---|---|---|---|
| Codename | GT3e | GT3 | GT3 | GT2 | GT2 |
| EUs | 40 | 40 | 40 | 20 | 20 |
| Max Frequency | 1.3GHz | 1.2GHz | 1.1GHz | 1.1GHz | 850MHz |
| eDRAM | 128MB | - | - | - | - |
| TDP | 47W/55W | 28W | 15W | 15W | 15W |

The top three configurations use a GPU with 40 EUs, while the HD 4400 and HD 4200 feature half that. Intel will eventually introduce Haswell SKUs with vanilla Intel HD Graphics, which will only feature 10 EUs. We know how the Iris Pro 5200 performs, but that's with a bunch of eDRAM and a very high TDP. Iris 5100 is likely going to be used in Apple's 13-inch MacBook Pro with Retina Display as well as ASUS' Zenbook Infinity, neither of which is out yet. The third GT3 configuration, HD 5000, operates at less than a third of the TDP of Iris Pro 5200. With such low thermal limits, just how fast can this GPU actually be?
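To put the table in perspective, here is a minimal Python sketch that computes a naive peak-throughput proxy (EUs multiplied by max frequency) for each configuration, relative to HD 4400. The proxy and the names `configs`, `peak_proxy`, `relative_peak` and `tdp_fraction` are illustrative assumptions, not anything Intel publishes. It ignores power limits, memory bandwidth and eDRAM entirely, so treat it as a paper upper bound rather than a performance prediction.

```python
# Values copied from the configuration table above.
# Assumption: Iris Pro 5200 is entered at the lower of its two TDPs (47W).
configs = {
    "Iris Pro 5200": {"eus": 40, "max_ghz": 1.3, "tdp_w": 47},
    "Iris 5100": {"eus": 40, "max_ghz": 1.2, "tdp_w": 28},
    "HD 5000": {"eus": 40, "max_ghz": 1.1, "tdp_w": 15},
    "HD 4400": {"eus": 20, "max_ghz": 1.1, "tdp_w": 15},
    "HD 4200": {"eus": 20, "max_ghz": 0.85, "tdp_w": 15},
}


def peak_proxy(cfg):
    """Naive paper peak: EU count times max clock (hypothetical metric)."""
    return cfg["eus"] * cfg["max_ghz"]


baseline = peak_proxy(configs["HD 4400"])
relative_peak = {
    name: peak_proxy(cfg) / baseline for name, cfg in configs.items()
}

# HD 5000's TDP as a fraction of Iris Pro 5200's (47W) TDP.
tdp_fraction = configs["HD 5000"]["tdp_w"] / configs["Iris Pro 5200"]["tdp_w"]

for name, ratio in relative_peak.items():
    print(f"{name}: {ratio:.2f}x HD 4400 (paper peak), {configs[name]['tdp_w']}W TDP")
print(f"HD 5000 TDP / Iris Pro 5200 TDP = {tdp_fraction:.2f}")
```

On paper, HD 5000 has twice the EU-clock product of HD 4400 in the same 15W envelope, which is why sustained clocks, rather than EU count, end up deciding real performance.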

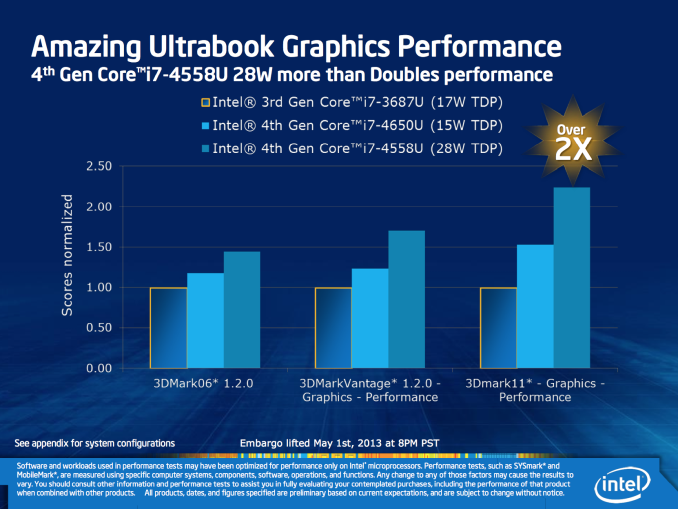

First, let's look at what Intel told us earlier this year:

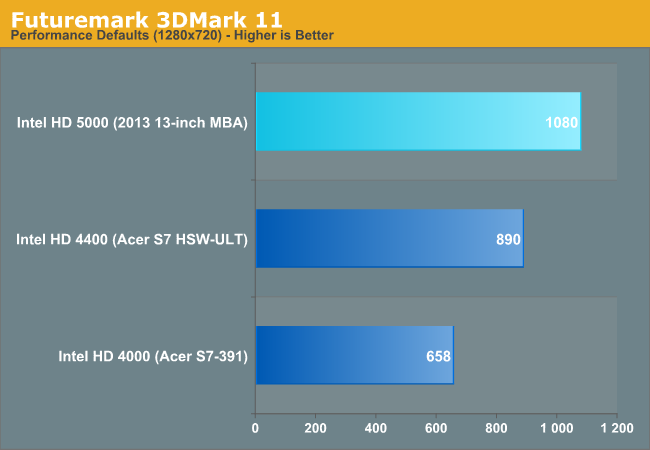

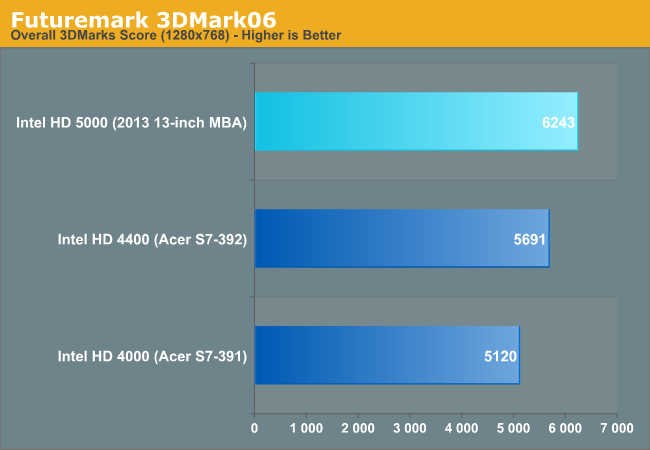

Compared to Intel's HD 4000 (Ivy Bridge/dark blue bar), Intel claimed roughly a 25% increase in performance with HD 5000 in 3DMark06 and a 50% increase in performance in 3DMark11. We now have the systems to validate Intel's claims, so how did they do?

In 3DMark11 we're showing a 64% increase in performance if we compare Intel's HD 5000 (15W) to Intel's HD 4000 (17W). The 3DMark06 comparison yields a 21% increase in performance compared to Ivy Bridge ULV. In both cases we've basically validated Intel's claims. But neither of these benchmarks tells us much about actual 3D gaming performance. In our 2013 MBA review we ran a total of eight 3D games. I've summarized the performance advantages in the table below:

Intel HD 5000 (Haswell ULT GT3) vs. Intel HD 4000 (Ivy Bridge ULV GT2)

| | GRID 2 | Super Street Fighter IV: AE | Minecraft | Borderlands 2 | Tomb Raider (2013) | Sleeping Dogs | Metro: LL | BioShock 2 |
|---|---|---|---|---|---|---|---|---|
| HD 5000 Advantage | 16.2% | 12.4% | 16.9% | 3.0% | 40.8% | 6.5% | 2.3% | 24.4% |

The data ranges from a meager 2.3% advantage over Ivy Bridge ULV to as much as 40.8%. On average, Intel's HD 5000 offered a 15.3% performance advantage over Intel's HD 4000 graphics. Whether or not that's impressive really depends on your perspective. Given the sheer increase in transistor count, a 15% gain on average seems a bit underwhelming. To understand why, you have to keep in mind that the performance gains come on the same 22nm node, with a lower overall TDP. Haswell ULT GT3 has to be faster, with less thermal headroom than Ivy Bridge ULV GT2.
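The average quoted above is a plain arithmetic mean of the eight per-game advantages. A minimal Python sketch that reproduces it from the table values (the variable names are illustrative):

```python
from statistics import mean

# Per-game HD 5000 advantage over HD 4000, in percent, from the table above.
advantage_pct = {
    "GRID 2": 16.2,
    "Super Street Fighter IV: AE": 12.4,
    "Minecraft": 16.9,
    "Borderlands 2": 3.0,
    "Tomb Raider (2013)": 40.8,
    "Sleeping Dogs": 6.5,
    "Metro: LL": 2.3,
    "BioShock 2": 24.4,
}

average = mean(list(advantage_pct.values()))
smallest = min(advantage_pct, key=advantage_pct.get)
largest = max(advantage_pct, key=advantage_pct.get)

print(f"Average advantage: {average:.1f}%")
print(f"Smallest: {smallest} ({advantage_pct[smallest]}%)")
print(f"Largest: {largest} ({advantage_pct[largest]}%)")
```

Note that a simple mean is pulled up by the Tomb Raider outlier; half of the titles sit below 15%.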

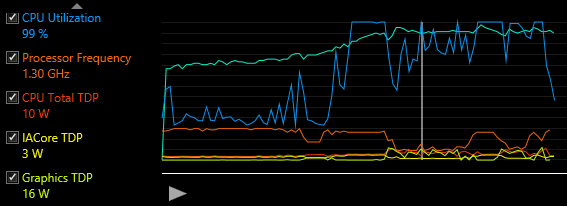

The range of performance improvement really depends on turbo residency. With only a 15W TDP (inclusive of the CPU and PCH), games that have more CPU activity or the right combination of GPU activity will see lower GPU clocks. In Borderlands 2, for example, I confirmed that the GT3 GPU alone was using up all of the package TDP, thus forcing lower clocks:

All of this just brings us to the conclusion that increasing processor graphics performance in thermally limited conditions is very tough, particularly without a process shrink. The fact that Intel even spent as many transistors as it did just to improve GPU performance tells us a lot about Intel's thinking these days. Given how thermally limited Haswell GT3 is at 15W, it seems like Broadwell can't come soon enough for another set of big gains in GPU performance.

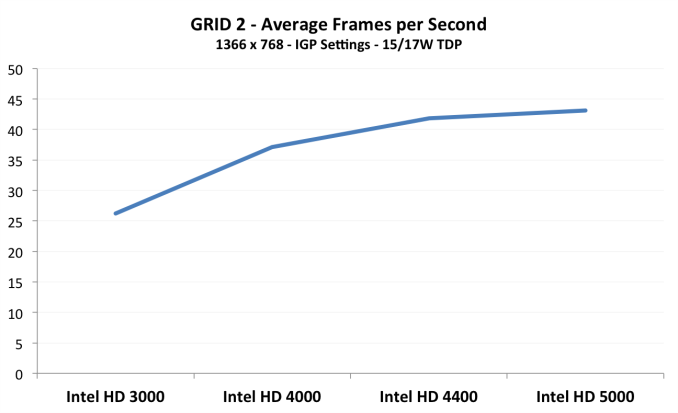

I also put together a little graph showing the progression of low TDP Intel GPU performance since Sandy Bridge. I used GRID 2 as it seemed to scale the most reasonably across all GPUs:

Note how the single largest gain happens with the move from 32nm to 22nm (there was also a big architectural improvement with HD 4000 so it's not all process). There's definite tapering that happens as the last three GPUs are on 22nm. The move to 14nm should help the performance curve keep its enthusiasm.

If you want more details and Intel HD 5000 numbers, feel free to check out the GPU sections of our 2013 MacBook Air review.

55 Comments

IntelUser2000 - Tuesday, June 25, 2013 - link

It won't work with GPU-Z, you need to use HWINFO. GPU-Z is pretty crap for Intel iGPUs.

MrSpadge - Tuesday, June 25, 2013 - link

In the article you're often referring to these chips having "less thermal headroom". I'd rather say they are power constrained: attach a better cooler (not easy in an Ultrabook, but possible) and these chips won't perform any better, just because they're already using their full 15 W under load. If the chips were thermally limited you should hear the fan screaming.. which you didn't, as I understand from the article.

BTW: it would be really nice to see measured power draw while running these benchmarks as well. This would make Haswell look even better compared to Ivy. And average clock speeds could also reveal some more.. maybe HD5000 has to clock so low in that 15 W config that the voltage already hits the absolute minimum and further scaling down couldn't improve efficiency over HD4400. For this one would need to read out the voltages or at least know the frequency-voltage curves of these GPUs. Would be nice if you could do either of these :)

Shadowmaster625 - Tuesday, June 25, 2013 - link

Wow what a waste it is to use HD5000. It is only fractionally better than HD4400. All those transistors... wasted.

tipoo - Tuesday, June 25, 2013 - link

It's primarily for the lower power required. With more EUs, they can run at lower clock speed and voltages to perform as well with less power used. In the 28W versions (presumably headed for the 13" MBP) we'll see how the GT3 can really perform when power is less of a consideration.

Penti - Tuesday, June 25, 2013 - link

MacBook Pros, at least the 15 inch, will use non-single-chip quad-core processors; also the 13.3 inch version uses 35W chips today. They could bump that one to the 47W GT3e part if they wanted performance, as that would put it slightly under the old 15.6 inch Pros in graphics performance. You just have to wait and see what the refreshes and new models bring when it comes to Haswell, Apple or not Apple for that matter. Lots of PCs simply use the dual-core GT2 part, for example. The single-chip ULT parts don't have any external PCIe links for any GPU. Don't think HD 5000 and Iris 5100 graphics really matter that much either. 28W parts are just about CPU performance. All depends on where they want to take it.

IntelUser2000 - Tuesday, June 25, 2013 - link

The 28W Iris Graphics 5100 is 30% faster in Bioshock 2 and Tomb Raider.

IntelUser2000 - Tuesday, June 25, 2013 - link

Compared to the HD 5000 I mean. :P

Penti - Wednesday, June 26, 2013 - link

Sounds reasonable when you factor in much faster cpu, and slightly faster (clocks) gpu.

icrf - Tuesday, June 25, 2013 - link

Honest question, not trying to troll: is there a purpose to better graphics outside of gaming or professional applications? Have we already reached a baseline of UI acceleration for common office / browsing / content consumption tasks? Basically, if I'm not running Crysis or Photoshop, should I care? Will I notice anything?

hova - Tuesday, June 25, 2013 - link

You will notice it when playing high res videos on the web and also when scrolling through heavy websites (if the browser makes good use of the GPU).

By far the biggest purpose is for higher resolution "retina like" screens. And all this is just for the "regular" office/web user. Like you said, gamers and professionals will also like having more graphics performance in a more portable form factor. It's a great win/win for everyone.