The Crucial/Micron M500 Review (960GB, 480GB, 240GB, 120GB)

by Anand Lal Shimpi on April 9, 2013 9:59 AM EST

AnandTech Storage Bench 2011

Two years ago we introduced our AnandTech Storage Bench, a suite of benchmarks that took traces of real OS/application usage and played them back in a repeatable manner. I assembled the traces myself out of frustration with most of the SSD benchmarks available today.

Although the AnandTech Storage Bench tests did a good job of characterizing SSD performance, they weren't stressful enough. All of the tests performed less than 10GB of reads/writes and typically involved only 4GB of writes specifically. That's not even enough to exceed the spare area on most SSDs. Most canned SSD benchmarks don't even come close to writing a single gigabyte of data, but that doesn't mean that simply writing 4GB is acceptable.

Originally I kept the benchmarks short enough that they wouldn't be a burden to run (~30 minutes) but long enough that they were representative of what a power user might do with their system.

Not too long ago I tweeted that I had created what I referred to as the Mother of All SSD Benchmarks (MOASB). Rather than only writing 4GB of data to the drive, this benchmark writes 106.32GB. It's the load you'd put on a drive after nearly two weeks of constant usage. And it takes a *long* time to run.

1) The MOASB, officially called AnandTech Storage Bench 2011 - Heavy Workload, mainly focuses on the times when your I/O activity is the highest. There is a lot of downloading and application installing that happens during the course of this test. My thinking was that it's during application installs, file copies, downloading and multitasking with all of this that you can really notice performance differences between drives.

2) I tried to cover as many bases as possible with the software I incorporated into this test. There's a lot of photo editing in Photoshop, HTML editing in Dreamweaver, web browsing, game playing/level loading (Starcraft II & WoW are both a part of the test) as well as general use stuff (application installing, virus scanning). I included a large amount of email downloading, document creation and editing as well. To top it all off I even use Visual Studio 2008 to build Chromium during the test.

The test has 2,168,893 read operations and 1,783,447 write operations. The IO breakdown is as follows:

AnandTech Storage Bench 2011 - Heavy Workload IO Breakdown

| IO Size | % of Total |
|---|---|
| 4KB | 28% |
| 16KB | 10% |
| 32KB | 10% |
| 64KB | 4% |

Only 42% of all operations are sequential, the rest range from pseudo to fully random (with most falling in the pseudo-random category). Average queue depth is 4.625 IOs, with 59% of operations taking place in an IO queue of 1.
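As a quick sanity check on the figures above, the read/write mix and the share of operations not covered by the IO size table can be worked out directly. This is a minimal sketch using only the numbers quoted in this section; the function name `op_mix` and the dictionary layout are illustrative choices, not part of the benchmark itself.

```python
# Operation counts quoted in the article for the Heavy Workload trace.
READ_OPS = 2_168_893
WRITE_OPS = 1_783_447

# IO size shares as listed in the breakdown table (percent of all operations).
LISTED_SIZE_SHARES = {"4KB": 28, "16KB": 10, "32KB": 10, "64KB": 4}


def op_mix(reads: int, writes: int) -> dict:
    """Return total operations plus the read and write fractions."""
    total = reads + writes
    return {
        "total": total,
        "read_share": reads / total,
        "write_share": writes / total,
    }


mix = op_mix(READ_OPS, WRITE_OPS)

# The table lists only four sizes; the remainder belongs to sizes it omits.
listed_percent = sum(LISTED_SIZE_SHARES.values())
unlisted_percent = 100 - listed_percent

print(f"Total operations: {mix['total']:,}")
print(f"Reads:  {mix['read_share']:.1%}")
print(f"Writes: {mix['write_share']:.1%}")
print(f"IO sizes listed in table: {listed_percent}% (other sizes: {unlisted_percent}%)")
```

By operation count the trace is roughly 55% reads and 45% writes, and the four listed IO sizes account for a little over half of all operations.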

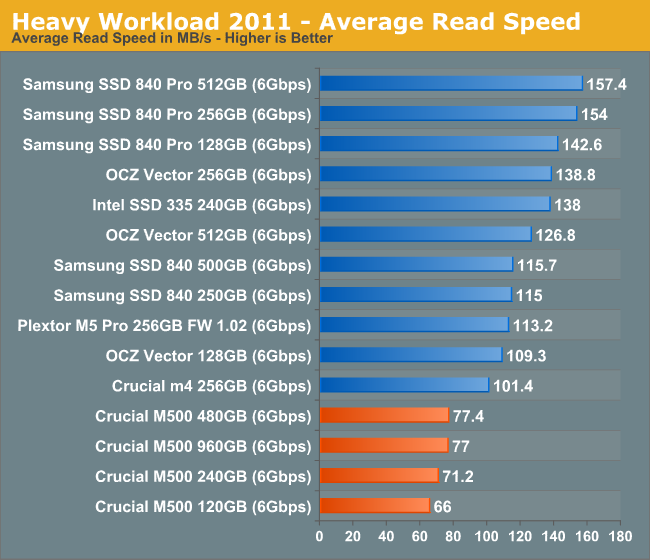

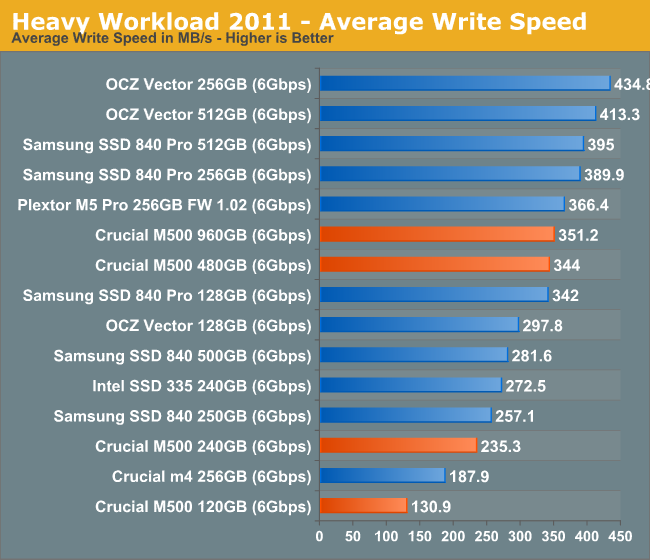

Many of you have asked for a better way to really characterize performance. Simply looking at IOPS doesn't really say much. As a result I'm going to be presenting Storage Bench 2011 data in a slightly different way. We'll have performance represented as Average MB/s, with higher numbers being better. At the same time I'll be reporting how long the SSD was busy while running this test. These disk busy graphs will show you exactly how much time was shaved off by using a faster drive vs. a slower one during the course of this test. Finally, I will also break out performance into reads, writes and combined. The reason I do this is to help balance out the fact that this test is unusually write intensive, which can often hide the benefits of a drive with good read performance.
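To make the relationship between the two metrics concrete, here is a minimal sketch that assumes average data rate is simply bytes transferred divided by disk busy time. That formula is an assumption for illustration, as are the function names and the sample numbers; none of them are taken from the actual test harness or results.

```python
def average_mb_per_s(bytes_transferred: int, busy_seconds: float) -> float:
    """Average data rate in MB/s over the time the drive was busy (assumed formula)."""
    if busy_seconds <= 0:
        raise ValueError("busy_seconds must be positive")
    return bytes_transferred / 1_000_000 / busy_seconds


def busy_time_saved(slower_busy_seconds: float, faster_busy_seconds: float) -> float:
    """Seconds of disk busy time saved by the faster of two drives."""
    return slower_busy_seconds - faster_busy_seconds


# Hypothetical example: two drives replay the same 100 GB trace.
TRACE_BYTES = 100_000_000_000
slow_busy, fast_busy = 500.0, 400.0  # hypothetical busy times in seconds

print(average_mb_per_s(TRACE_BYTES, slow_busy))  # slower drive
print(average_mb_per_s(TRACE_BYTES, fast_busy))  # faster drive
print(busy_time_saved(slow_busy, fast_busy))     # seconds saved
```

Under this assumption the two views carry the same information: for a fixed trace, a higher average data rate is exactly a shorter busy time. The same calculation can be repeated for reads and writes separately to get the per-category breakdown.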

There's also a new light workload for 2011. This is a far more reasonable, typical every day use case benchmark. Lots of web browsing, photo editing (but with a greater focus on photo consumption), video playback as well as some application installs and gaming. This test isn't nearly as write intensive as the MOASB but it's still multiple times more write intensive than what we were running in 2010.

As always I don't believe that these two benchmarks alone are enough to characterize the performance of a drive, but hopefully along with the rest of our tests they will help provide a better idea.

The testbed for Storage Bench 2011 has changed as well. We're now using a Sandy Bridge platform with full 6Gbps support for these tests.

AnandTech Storage Bench 2011 - Heavy Workload

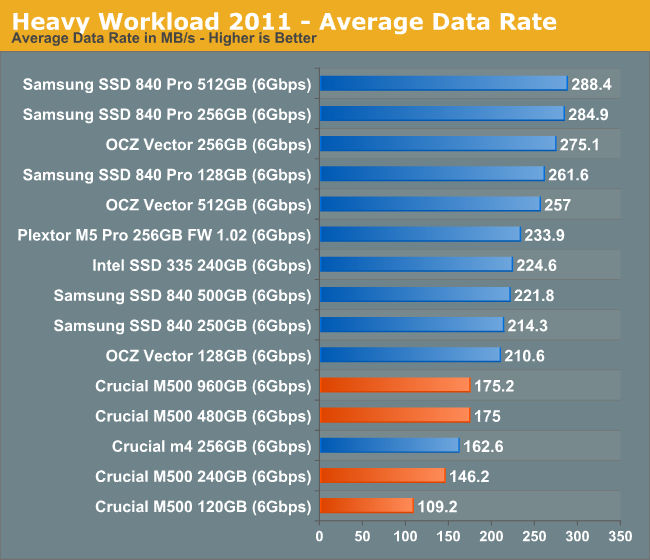

We'll start out by looking at average data rate throughout our new heavy workload test:

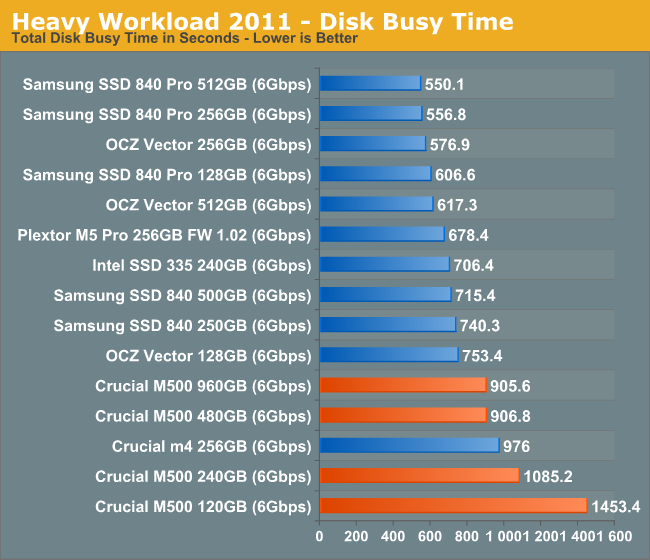

Our heavy workload from 2011 illustrates the culmination of everything we've shown thus far: the M500 can even be slower than the outgoing m4. There's no doubt in my mind that this is a result of the tradeoffs associated with moving to 128Gbit NAND die. The M500's performance is by no means bad, but it's definitely below what we've come to expect from Intel and Samsung flagships.

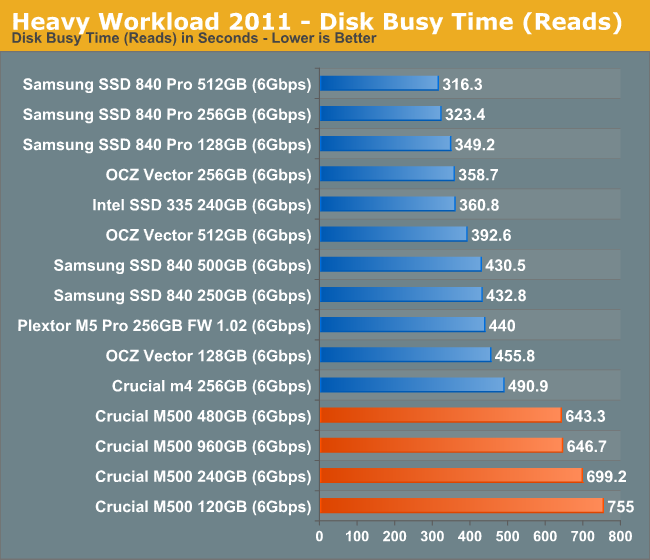

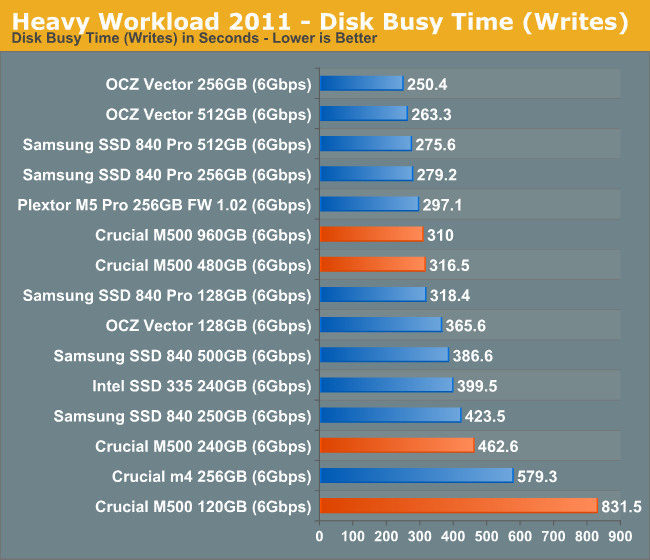

The next three charts just represent the same data, but in a different manner. Instead of looking at average data rate, we're looking at how long the disk was busy during this entire test. Note that disk busy time excludes any and all idles; this is just how long the SSD was busy doing something:

111 Comments

Solid State Brain - Saturday, April 13, 2013 - link

In theory, the spare area can be only configured on a clean drive, which means one would have to secure erase it (and therefore lose all data) and then create a partition smaller than the drive's maximum user capacity. The remaining unused (raw, unpartitioned) capacity should then be used by the drive as spare area for wear leveling operations, in addition to the factory OP area (usually derived from the GiB->GB capacity difference). In practice it *should* be sufficient to notify the drive that the empty space is actually empty with a TRIM command before resizing the partition.

In your case the Samsung Magician software allows to double the drive's factory spare area (no other adjustment possible, at least in version 4). It doesn't perform a secure erase, so perhaps it isn't really necessary after all.

I don't know however if the Samsung 840 controller actually actively detects when a certain portion of the drive is "raw/unpartitioned". Theory dictates that it shouldn't be able to discern that without the OS somehow telling it so.

If a partition-wide TRIM operation is enough, then one can increase overprovisioning manually on a live/used system by:

1) Performing a full-system TRIM command with the Windows 8 integrated "drive defrag/optimization" tool (or with the "fstrim" command line tool in Linux, although this works only on ext4 partitions), or with dedicated third party utilities (some commercial defragmentation software performs a system-wide trim on SSDs instead of regular defrag).

2) Resize the last partition manually with Computer Management>Disk Management>Shrink Partition.

Anyway, in practice all this hassle is going to benefit you only if you routinely perform dozens of gigabytes of sustained writes per day in a possibly trim-less environment. I doubt very much that most users would be able to feel any difference with their workloads.
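The arithmetic behind the GiB->GB difference and the effect of shrinking a partition can be sketched as follows. This assumes spare area is expressed as a fraction of user-visible capacity and that the drive really does treat trimmed, unpartitioned space as spare, which is uncertain as noted above. The 256GB drive and the 20GB shrink are hypothetical example values.

```python
GIB = 2**30   # bytes in a binary gigabyte (how NAND capacity is counted)
GB = 10**9    # bytes in a decimal gigabyte (how user capacity is advertised)


def spare_fraction(raw_nand_bytes: int, user_bytes: int) -> float:
    """Spare area as a fraction of user-visible capacity (assumed convention)."""
    return (raw_nand_bytes - user_bytes) / user_bytes


# Hypothetical drive: 256 GiB of NAND sold as 256 GB of user capacity.
raw_nand = 256 * GIB
factory_user = 256 * GB
factory_op = spare_fraction(raw_nand, factory_user)

# Hypothetical tweak: leave 20 GB at the end of the drive unpartitioned.
shrunk_user = factory_user - 20 * GB
manual_op = spare_fraction(raw_nand, shrunk_user)

print(f"Factory spare area:       {factory_op:.1%}")
print(f"After shrinking by 20 GB: {manual_op:.1%}")
```

For this example the factory spare area comes out at about 7.4%, which is simply the ratio between a GiB and a GB, and leaving space unpartitioned raises it from there.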

AlB80 - Saturday, April 13, 2013 - link

"Total NAND on-board" and "DRAM" values are specified in "GB" and "MB", but it should be "GiB" and "MiB".

JellyRoll - Saturday, April 13, 2013 - link

Shut up JohnW lol

JellyRoll - Saturday, April 13, 2013 - link

There is a huge misstatement in the article... "I introduced a new method of characterizing performance: looking at the latency of individual operations over time."

First: it isn't individual operations, several thousand are taking place per one second interval.

Second: Anand did not introduce this type of testing, it was a blatant copying of another tech website's testing.

twtech - Sunday, April 14, 2013 - link

I think it's kind of interesting in the comments, people are looking at the performance figures and saying, "Oh, it doesn't perform as well as a Samsung 840 Pro, so I'm disappointed."

I have a couple computers booting off an M4 (slower than the M500), and one that has a Samsung 830 as the boot drive. The Samsung is quite a bit faster in benchmarks, but do I notice? Nope, not really. The jump to having any SSD at all is significant. The jump from one SSD to another - provided neither have something like firmware issues causing stuttering as some old models did - is negligible.

I think the more important factor here is that we have a nearly 1TB SSD for $600 - less than what 512GB drives were selling for 1 year ago. That's big enough that many users may not even need a separate mechanical storage drive.

JellyRoll - Sunday, April 14, 2013 - link

Part of the issue is the unrealistic test parameters. Testing with such ridiculously severe workloads is not representative of real-world use.

Wolfpup - Monday, April 15, 2013 - link

Unfortunately I couldn't wait for the launch of the M500...had to "make do" with a 512GB M4. Oh well, it's still a great drive!

random2 - Monday, April 15, 2013 - link

I cannot imagine anyone who doesn't have some sort of tech background, trying to read these articles. Granted I am no certificated IT professional, I have been very interested in hardware and software for over a decade, and have been a reader of Anandtech for almost as long. Which brings me to this. Can we not have some of the terms abbreviated or otherwise, hyper-linked at least to an article providing further explanation?

Case in point; ONFI 3.0

af3 - Tuesday, April 16, 2013 - link

I was thinking of ordering a $350 256G Lacie Thunderbolt Rugged external SSD for the purposes of booting another OS without needing to use space on my internal/main (SSD) drive.

Can anyone tell me whether there might be a superior (in terms of performance and cost) alternative that might utilize something like one of these new Micron drives?

Does anyone know whether or not the Lacie is fast and whether or not I might have something better by getting another external Thunderbolt device and installing one of these Micron drives?