Intel SSD 525 Review (240GB)

by Anand Lal Shimpi on January 30, 2013 1:42 AM EST

AnandTech Storage Bench 2011

Two years ago we introduced our AnandTech Storage Bench, a suite of benchmarks that took traces of real OS/application usage and played them back in a repeatable manner. I assembled the traces myself out of frustration with the majority of what we have today in terms of SSD benchmarks.

Although the AnandTech Storage Bench tests did a good job of characterizing SSD performance, they weren't stressful enough. All of the tests performed less than 10GB of reads/writes and typically involved only 4GB of writes specifically. That's not even enough to exceed the spare area on most SSDs. Most canned SSD benchmarks don't even come close to writing a single gigabyte of data, but that doesn't mean that simply writing 4GB is acceptable.

Originally I kept the benchmarks short enough that they wouldn't be a burden to run (~30 minutes) but long enough that they were representative of what a power user might do with their system.

Not too long ago I tweeted that I had created what I referred to as the Mother of All SSD Benchmarks (MOASB). Rather than only writing 4GB of data to the drive, this benchmark writes 106.32GB. It's the load you'd put on a drive after nearly two weeks of constant usage. And it takes a *long* time to run.

1) The MOASB, officially called AnandTech Storage Bench 2011 - Heavy Workload, mainly focuses on the times when your I/O activity is the highest. There is a lot of downloading and application installing that happens during the course of this test. My thinking was that it's during application installs, file copies, downloading and multitasking with all of this that you can really notice performance differences between drives.

2) I tried to cover as many bases as possible with the software I incorporated into this test. There's a lot of photo editing in Photoshop, HTML editing in Dreamweaver, web browsing, game playing/level loading (Starcraft II & WoW are both a part of the test) as well as general use stuff (application installing, virus scanning). I included a large amount of email downloading, document creation and editing as well. To top it all off I even use Visual Studio 2008 to build Chromium during the test.

The test has 2,168,893 read operations and 1,783,447 write operations. The IO breakdown is as follows:

AnandTech Storage Bench 2011 - Heavy Workload IO Breakdown

| IO Size | % of Total |
|---|---|
| 4KB | 28% |
| 16KB | 10% |
| 32KB | 10% |
| 64KB | 4% |
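As a quick sanity check on the figures above, the read/write split and the share of operations covered by the listed IO sizes can be worked out directly. The sketch below is illustrative only: the variable names are my own, and the only inputs are the operation counts and table percentages quoted in this section.

```python
# Operation counts quoted in the text for the Heavy Workload trace.
READ_OPS = 2_168_893
WRITE_OPS = 1_783_447

# IO size breakdown from the table (percent of total operations).
IO_SIZE_PCT = {"4KB": 28, "16KB": 10, "32KB": 10, "64KB": 4}

total_ops = READ_OPS + WRITE_OPS
read_share = READ_OPS / total_ops
write_share = WRITE_OPS / total_ops
listed_pct = sum(IO_SIZE_PCT.values())

print(f"Total operations: {total_ops:,}")
print(f"Reads: {read_share:.1%}, writes: {write_share:.1%}")
print(f"Listed IO sizes cover {listed_pct}% of operations")
```

By operation count the trace works out to roughly 55% reads and 45% writes, and the four listed sizes account for 52% of operations. The table does not say how the remainder is distributed.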

Only 42% of all operations are sequential; the rest range from pseudo-random to fully random (with most falling in the pseudo-random category). Average queue depth is 4.625 IOs, with 59% of operations taking place in an IO queue of 1.
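To get a feel for what those queue depth figures imply, here is a rough back-of-the-envelope calculation. It assumes the 4.625 average is a simple per-operation mean and that "an IO queue of 1" means a queue depth of exactly 1. Neither assumption is spelled out above, so treat the result as an estimate rather than a measured value.

```python
AVG_QUEUE_DEPTH = 4.625   # average queue depth quoted in the text
SHARE_AT_QD1 = 0.59       # fraction of operations issued at queue depth 1


def implied_mean_depth_of_rest(avg_depth: float, share_at_qd1: float) -> float:
    """Mean queue depth of the operations not at QD1.

    Assumes (my assumption, not stated in the text) that
    avg_depth = share_at_qd1 * 1 + (1 - share_at_qd1) * rest_depth.
    """
    if not 0 <= share_at_qd1 < 1:
        raise ValueError("share_at_qd1 must be in [0, 1)")
    return (avg_depth - share_at_qd1 * 1) / (1 - share_at_qd1)


rest = implied_mean_depth_of_rest(AVG_QUEUE_DEPTH, SHARE_AT_QD1)
print(f"Implied mean queue depth for the other 41% of operations: {rest:.1f}")
```

Under those assumptions, the operations that are not at queue depth 1 would have to average a queue depth of a little under 10, which fits the description of a workload with bursts of heavy multitasking.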

Many of you have asked for a better way to really characterize performance. Simply looking at IOPS doesn't really say much. As a result I'm going to be presenting Storage Bench 2011 data in a slightly different way. We'll have performance represented as Average MB/s, with higher numbers being better. At the same time I'll be reporting how long the SSD was busy while running this test. These disk busy graphs will show you exactly how much time was shaved off by using a faster drive vs. a slower one during the course of this test. Finally, I will also break out performance into reads, writes and combined. The reason I do this is to help balance out the fact that this test is unusually write intensive, which can often hide the benefits of a drive with good read performance.
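The two metrics are two views of the same measurement. A minimal sketch of that relationship follows, assuming average data rate is simply the data transferred divided by the time the drive was busy (idle time excluded). The function names and all numbers are hypothetical and for illustration only; they are not results from this review, and the exact method used to produce the charts is not described here.

```python
def average_mb_per_s(megabytes_transferred: float, busy_seconds: float) -> float:
    """Average data rate over the time the drive was busy (idle time excluded)."""
    if busy_seconds <= 0:
        raise ValueError("busy_seconds must be positive")
    return megabytes_transferred / busy_seconds


def busy_seconds_for(megabytes_transferred: float, avg_mb_per_s: float) -> float:
    """Disk busy time implied by a given average data rate."""
    if avg_mb_per_s <= 0:
        raise ValueError("avg_mb_per_s must be positive")
    return megabytes_transferred / avg_mb_per_s


# Hypothetical workload and drive speeds, NOT measurements from this review.
DATA_MB = 100_000.0
slow_busy = busy_seconds_for(DATA_MB, 200.0)  # hypothetical slower drive
fast_busy = busy_seconds_for(DATA_MB, 250.0)  # hypothetical faster drive
saved = slow_busy - fast_busy

print(f"Slower drive busy for {slow_busy:.0f} s, faster drive for {fast_busy:.0f} s")
print(f"Busy time saved: {saved:.0f} s")
```

Because the amount of data in the trace is fixed, a higher average data rate and a shorter disk busy time are the same result expressed in different units.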

There's also a new light workload for 2011. This is a far more reasonable, typical everyday use case benchmark. Lots of web browsing, photo editing (but with a greater focus on photo consumption), video playback as well as some application installs and gaming. This test isn't nearly as write intensive as the MOASB but it's still multiple times more write intensive than what we were running in 2010.

As always I don't believe that these two benchmarks alone are enough to characterize the performance of a drive, but hopefully along with the rest of our tests they will help provide a better idea.

The testbed for Storage Bench 2011 has changed as well. We're now using a Sandy Bridge platform with full 6Gbps support for these tests.

AnandTech Storage Bench 2011 - Heavy Workload

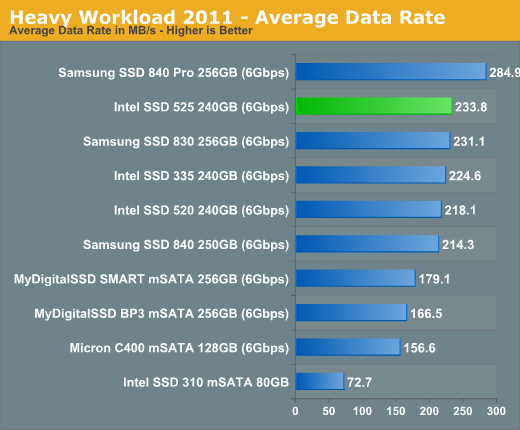

We'll start out by looking at average data rate throughout our new heavy workload test:

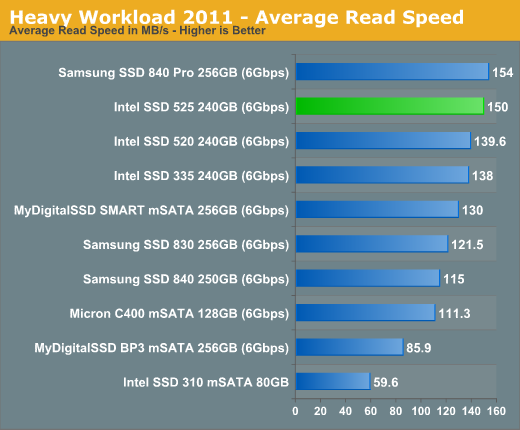

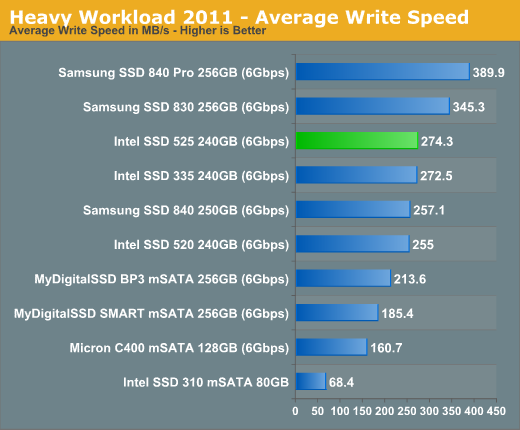

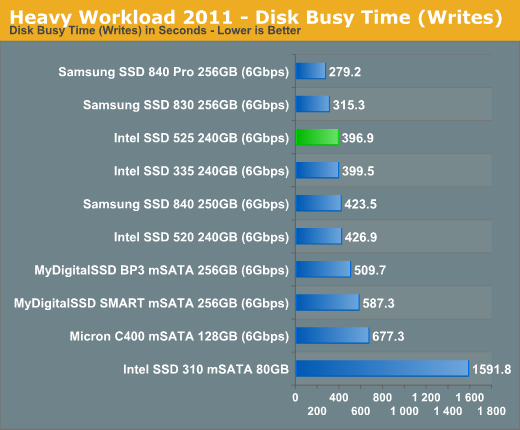

The 525 does manage to pull a small but tangible advantage over the 520 in our heavy workload test. The performance advantage seems to be largely due to improvement in write speed if we look at the breakdown:

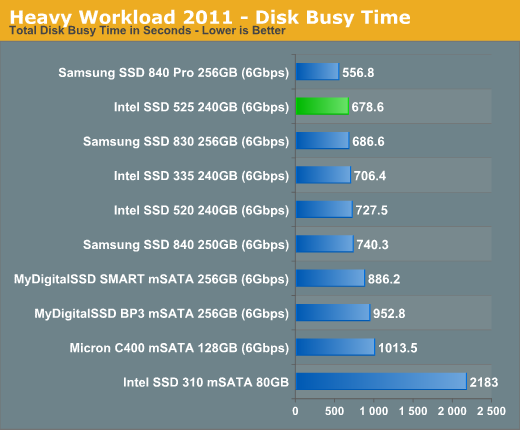

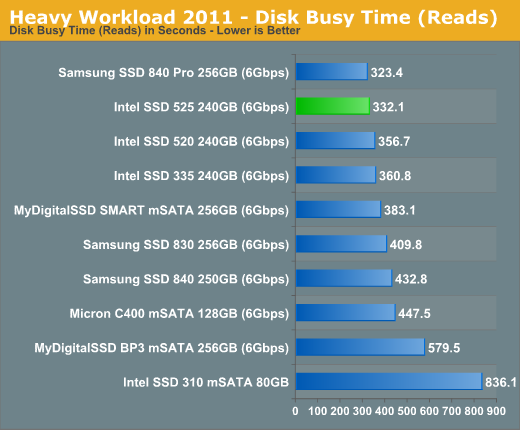

The next three charts represent the same data in a different manner. Instead of looking at average data rate, we're looking at how long the disk was busy during the entire test. Note that disk busy time excludes any and all idle time; this is just how long the SSD was busy doing something:

21 Comments

philipma1957 - Wednesday, January 30, 2013 - link

meaker10 have you used a msi gt60?

critical_ - Wednesday, January 30, 2013 - link

I'm using Startech's SAT2MSAT25. It is a "passthrough" design so it'll work at 6Gbps if your controller supports it.

HyperJuni - Wednesday, January 30, 2013 - link

I was hoping for a comparison with the m4/C400 mSATA 256GB, since it seems to differ a bit in performance from the 128GB model, and would be better suited as a "direct competitor" to the 240GB 525 for the sake of comparison IMHO. Too bad you didn't include it in the charts, Anand.

nathanddrews - Wednesday, January 30, 2013 - link

Since IOPS consistency improves significantly when setting aside 25% spare area, what is the practical effect in real world? Has this been documented using the AT Storage Bench? Under default conditions, the 840 Pro dominates the top of the charts, but does it still retain the crown after being "stroked"? Just curious...

SanX - Wednesday, January 30, 2013 - link

Is it burning hot for its size? Will it fry your eggs?

SanX - Wednesday, January 30, 2013 - link

damn cellphone spell-correction typo and lack of edit option like on cheap websites: ***incompressible***

dealcorn - Wednesday, January 30, 2013 - link

The prospect of the S3700 technology in a consumer drive has appeal except that the S3700 uses too much power. Is Intel's approach inherently inefficient or is it reasonable that Intel can tune the technology differently for the consumer market to achieve competitive efficiency?

name99 - Wednesday, January 30, 2013 - link

"Why does Intel continue to use a third party SATA controller in many of its flagship drives? Although I once believed this was an issue with Intel having issues on the design front, I now believe that a big part of it has to do with the Intel SSD group being more resource constrained than other groups within the company.

"

This seems strangely short sighted. How is flash controlled on mobile devices?

Obviously performance is substantially lower. It's not clear to me how that lowering is split between

- cheaper, lower-end flash

- only one rank (or whatever flash calls its equivalent), i.e. limited parallelism

- a dumb controller.

However there doesn't seem to be any aspect of the problem that is inherently power limited.

Which implies that if Intel wanted a way to make their perpetually lagging Atom SOCs a little more noteworthy, one way to do so would be to work on them having flash support that was substantially faster than what's available on ARM today.

emvonline - Thursday, January 31, 2013 - link

Sandforce controllers are high performance, have no DRAM need, and allow both SF standard and custom firmware. Until SF drops the ball on performance or support I would look for more SSDs to be based on the SF design, not less. Enterprise is different ball game where ASPs/margins are much higher so custom controller might make sense (volumes are much lower though). If other 3rd party controllers mature, I expect them to gain market share as well.