Dragging Core2Duo into 2013: Time for an Upgrade?

by Ian Cutress on January 15, 2013 12:30 PM EST - Posted in CPUs

As any ‘family source of computer information’ will testify, every so often a family member will want an upgrade. Over the final few months of 2012, I did this with my brother’s machine, fitting him out with a Sandy Bridge CPU, an SSD and a good GPU to tackle the newly released Borderlands 2, all for free. The only problem he really had up until that point was a dismal frame rate in RuneScape.

The system he had been using for the previous two years was an old hand-me-down I had sold him: a Core2Duo E6400 with 2x2 GB of DDR2-800 and a pair of Radeon HD 4670s in CrossFire. While he loves his new system with double the cores, a better GPU and an SSD, I wondered how much of an upgrade it had really been.

I have gone through many upgrade philosophies over the last decade. My current advice to friends and family who ask about upgrades is that, if they are happy installing new components, they should upgrade one component at a time to one of the best in its class, as far as the budget allows, rather than settling for an overall mediocre setup. This tends towards outfitting a system with a great SSD, then a GPU, then a PSU, and finally a motherboard/CPU/memory upgrade with one of those three being great. Over time the other two of that trio also get upgraded, and the cycle repeats. Old parts are sold and some cost is recouped in the process, but at least some of the hardware is always on the cutting edge, rather than the whole machine being a middling off-the-shelf system from a computer shop that could be full of bloatware and dust.

As a result of upgrading my brother's computer, I ended up with his old CPU/motherboard/memory combo, full of dust, sitting on top of one of my many piles of boxes. I decided to pick it up, pair it with a top-range GPU and an SSD, and run it through my normal benchmarking suite to see how it fared against the likes of the latest FM2 Trinity and Intel offerings, both at stock and with a reasonable overclock. Certain results piqued my interest, but for normal web browsing and such it still feels as tight as a drum.

The test setup is as follows:

- Core2Duo E6400 – 2 cores, 2.13 GHz stock
- 2x2 GB OCZ DDR2 PC8500 5-6-6
- MSI i975X Platinum PowerUp Edition (supports up to PCIe 1.1)
- Windows 7 64-bit
- AMD Catalyst 12.3 + NVIDIA 296.10 WHQL (for consistency with older results)

My recent testing procedure in motherboard reviews pairs the motherboard with an SSD and an HD 7970/GTX 580, and given my upgrading philosophy above, I used the same components here for comparable results. The other systems in the results used DDR3 memory ranging from DDR3-1600 C9 for the i3-3225 to DDR3-2400 C9 for the i7-3770K.

The Core2Duo system was tested at stock (2.13 GHz and DDR2-533 5-5-5) and with a mild overclock (2.8 GHz and DDR2-700 5-5-6).
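The two configurations are consistent with a simple front-side-bus overclock. As a sketch, assuming the E6400's 8x multiplier and a 1:1 FSB:DRAM ratio (the article does not say how the overclock was applied, so the 350 MHz base clock below is inferred from 2.8 GHz ÷ 8), both the core clock and the DDR2 data rate follow from the FSB base clock. The helper name `core2_clocks` is hypothetical.

```python
# Hypothetical helper: Core 2 clocks derived from the FSB base clock.
# Assumes the E6400's 8x multiplier and a 1:1 FSB:DRAM ratio.
E6400_MULTIPLIER = 8


def core2_clocks(fsb_base_mhz: float, multiplier: int = E6400_MULTIPLIER) -> dict:
    """Return core clock, quad-pumped FSB rating and DDR2 data rate (1:1 ratio)."""
    return {
        "core_mhz": fsb_base_mhz * multiplier,
        "fsb_mt_s": fsb_base_mhz * 4,   # Core 2 FSB transfers four times per clock
        "ddr2_mt_s": fsb_base_mhz * 2,  # DDR2 transfers twice per clock
    }


stock = core2_clocks(800 / 3)  # 266.67 MHz base clock, the "1066 MHz" FSB
oc = core2_clocks(350)         # assumed base clock for the 2.8 GHz overclock

print(f"stock: {stock['core_mhz']:.0f} MHz core, DDR2-{stock['ddr2_mt_s']:.0f}")
# prints "stock: 2133 MHz core, DDR2-533"
print(f"oc:    {oc['core_mhz']:.0f} MHz core, DDR2-{oc['ddr2_mt_s']:.0f}")
# prints "oc:    2800 MHz core, DDR2-700"
```

Because the multiplier cannot be raised on this chip, the base clock is the only lever, which is why the memory speed rises in step with the core clock in this sketch.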

Gaming Benchmarks

Games were tested at 2560x1440 (another ‘throw money at a single upgrade at a time’ possibility) with all the eye candy turned up, and results were taken as the average of four runs.

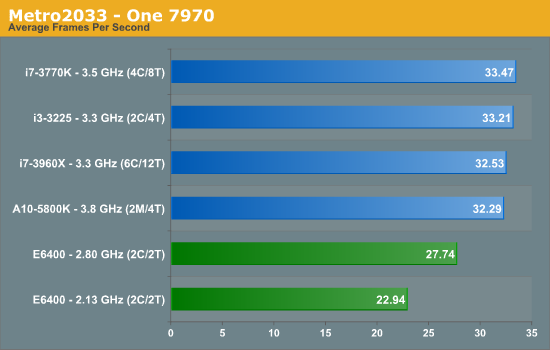

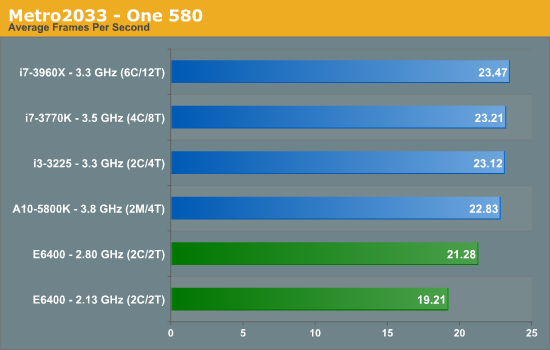

Metro2033

The E6400 makes an admirable effort, and overclocking helps a little, but the newer systems have the edge. Interestingly, the difference is not large: the overclocked E6400 comes within 1 FPS of an A10-5800K at this resolution and these settings when paired with a GTX 580.

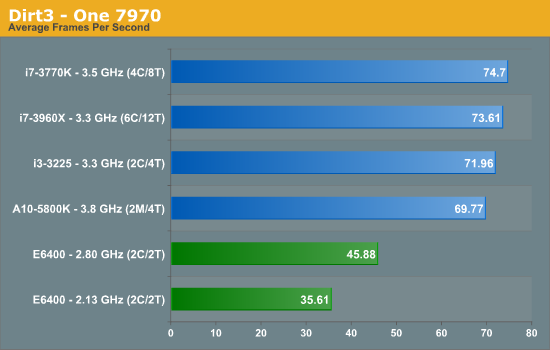

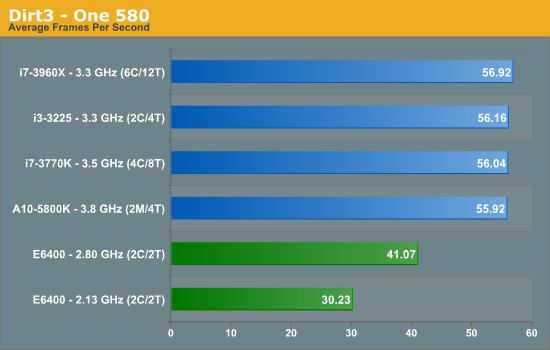

Dirt3

The bump from the overclock makes Dirt3 more playable, but it still lags behind the newer systems.

Computational Benchmarks

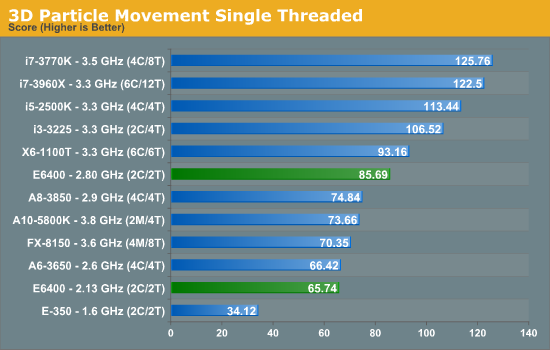

3D Movement Algorithm Test

This is where it starts to get interesting. At stock the E6400 sits at the bottom, but within reach of an FX-8150 at 4.2 GHz, and with an overclock the E6400 at 2.8 GHz easily beats the Trinity-based A10-5800K at 4.2 GHz. Part of this can be attributed to the way the Bulldozer/Piledriver CPUs handle floating point calculations, but it is incredible that a July 2006 processor can beat an October 2012 model. One could argue that a mild bump on the A10-5800K would put it over the edge, but in our overclocking of that chip anything above 4.5 GHz was quite tough (perhaps we got a poor overclocking sample).
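A rough way to read this result, assuming single-threaded 3DPM throughput scales as IPC multiplied by clock speed (an approximation that ignores memory and turbo behaviour): if the 2.8 GHz E6400 outscores the 4.2 GHz A10-5800K, its per-clock throughput in this test must be more than 1.5 times higher.

$$
\text{IPC}_{\text{E6400}} \cdot f_{\text{E6400}} > \text{IPC}_{\text{A10}} \cdot f_{\text{A10}}
\;\Rightarrow\;
\frac{\text{IPC}_{\text{E6400}}}{\text{IPC}_{\text{A10}}} > \frac{f_{\text{A10}}}{f_{\text{E6400}}} = \frac{4.2\ \text{GHz}}{2.8\ \text{GHz}} = 1.5
$$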

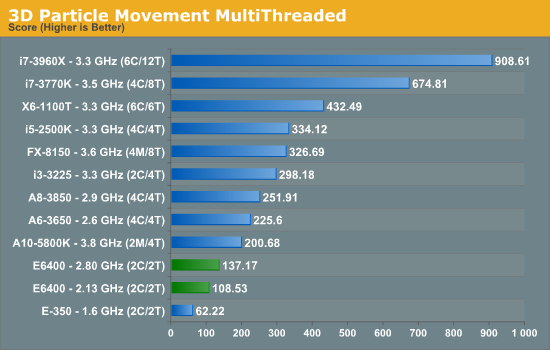

Of course the situation changes when we hit the multithreaded benchmark, where the two cores of the E6400 hold it back. However, with a quad-core Q6600, stock CPU performance would be on par with the A10-5800K in an FP workload, although the Q6600 would have four FP units to calculate with while the A10-5800K has only two (as well as its iGPU).

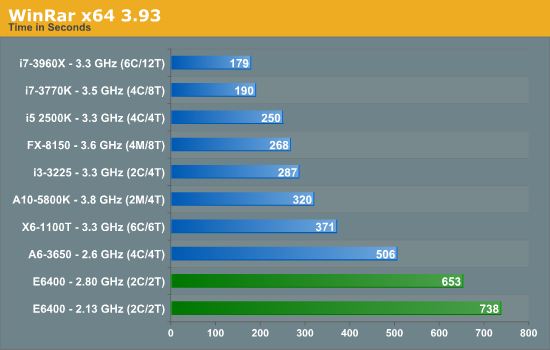

WinRAR x64 3.93

In a variably threaded workload, the DDR2-equipped E6400 is easily outpaced by any modern processor using DDR3.

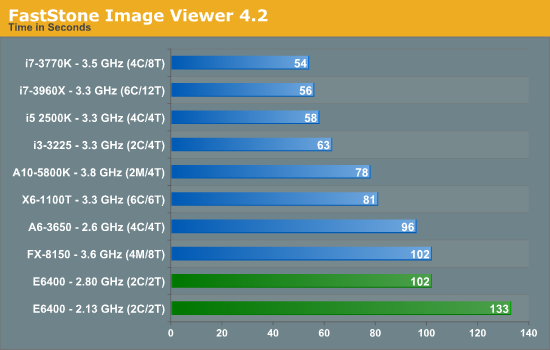

FastStone Image Viewer 4.2

Even though FastStone is single-threaded, the increased IPC of the later generations usually brings home the bacon; the only exception is the Bulldozer-based FX-8150, which is on par with the E6400.

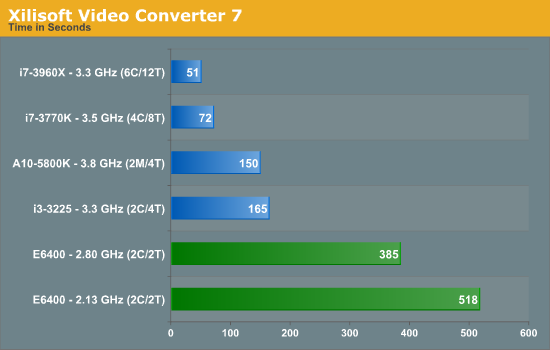

Xilisoft Video Converter

Similarly with XVC, an integer workload, more threads win the day.

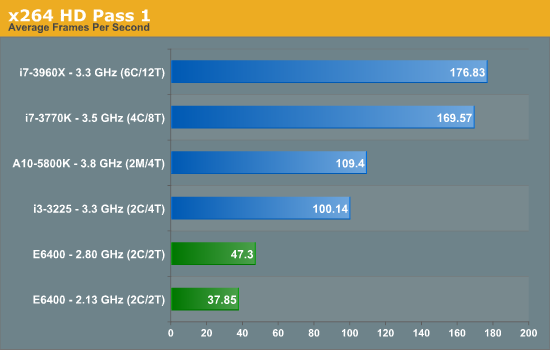

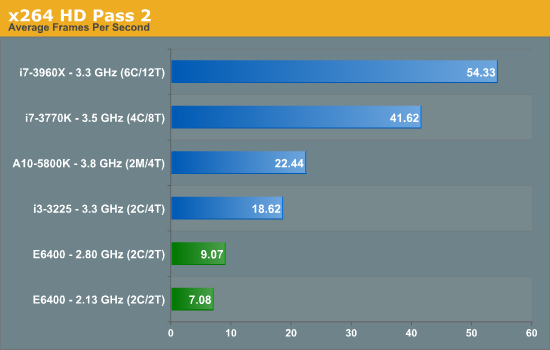

x264 HD Benchmark

Conclusions

When I start a test session like this, my first test is usually 3DPM in single-thread mode. When I got that startling result, I clearly had to dig deeper, but the conclusion produced by the rest of the results is clear. In terms of actual throughput benchmarks, the E6400 is slow compared to all the modern home computer processors, limited either by its core count or by its memory.

This was going to be obvious from the start.

In the sole benchmark that does not rely on memory or thread scheduling and is purely floating-point based, the E6400 gives a surprise result, but nothing more. In our limited gaming tests the E6400 copes well at 2560x1440, with that slight overclock making Dirt3 more playable.

But the end result is that if everything else is upgraded, and the performance boost is cost-effective, even a move to an i3-3225 or A10-5800K will yield tangible real-world benefits, alongside all the modern advances in motherboard features (USB 3.0, SATA 6 Gbps, mSATA, Thunderbolt, UEFI, PCIe 2.0/3.0, audio, networking). There are also significant power savings to be had with modern architectures.

My brother enjoys playing his games at a more reasonable frame rate now, and he says normal usage has sped up a bit, with video streams playing a little more smoothly if anything. The only question is where Haswell will fit into this, and that is a question I look forward to answering.

136 Comments

Peanutsrevenge - Tuesday, January 15, 2013

Your brother's old hardware's not much different to mine (E5400 @ 3.3 (used to be 3.5 but it's getting old), 4GB @ 800, dual GTS250s, 120GB HyperX 3K SSD). While it certainly can be frustratingly slow when it comes to computation, I can still run most of the newest games well enough @ >= medium settings.

While I recently had enough cash to upgrade to an i3, SLI mobo and 8GB, I still couldn't justify it due to the lack of a major step in performance, or rather, due to the continued stubbornness of the old 775.

Hopefully I'll actually get a full time permanent job soon so it'll be easier to stomach a decent upgrade (K series and xxx(x)GPU).

themossie - Tuesday, January 15, 2013

The 'newer' E7200 (2.53 GHz, 45nm) continues to serve me well. The E7200 still makes an awesome home server, with exceptionally low power consumption (even decent by today's standards!) and more horsepower than any 3 Atoms ever built. Until this week, I ran my home server (with 2 VMs on top of Windows) off of it. This week, it's my desktop again...

My Phenom II motherboard went kaput last week, so I swapped back to the old Core 2 Duo+motherboard as my desktop until a replacement arrives. With RAID SSDs and a good graphics card, I have no complaints except the 4 GB of RAM, which is why I upgraded in the first place; at the time, 8 GB of DDR2 cost as much as the Microcenter Phenom II CPU+mobo deal and 8 gigs of DDR3.

For those who aren't power users or serious gamers, any 45nm Core 2 Duo should last at least a couple more years with an SSD and enough RAM. Any upgrade less than a Sandy Bridge or Ivy Bridge i5 isn't worth it.

benamoo - Saturday, January 19, 2013

I have a very similar system (E7300 with 4GB of DDR2-800 + an HD4670 GPU) which I mainly use as an HTPC with occasional gaming at 1366x768. Overall I'm very satisfied with it. My main concern is the power consumption. I know it's based on a newer 45nm architecture (the reason I chose this particular CPU was its power efficiency back in 2008).

I just want to know how much I would benefit, from a power consumption standpoint, from using a modern Core i3, say Ivy Bridge, instead of my current rig. Since I can't build a new PC right now, would it be better to just upgrade what I currently have? Maybe add an SSD and a new GPU.

Since you mentioned you used yours as a server which might have been on 24/7 I thought you'd know the estimated power consumption?

Any ideas on that?

Thanks in advance.

themossie - Sunday, January 20, 2013

I don't have any way to measure power consumption, but TomsHardware (http://www.tomshardware.com/reviews/intel-e7200-g3...) shows an E7200+G31 idling at 31 watts with integrated graphics and an efficient, low-output power supply. The G31 is dated even by Socket 775 standards, so with underclocking/undervolting and a better motherboard, you can probably drop that. Quick and dirty: either use SpeedStep to lower the multiplier or drop the FSB from 1066 to 800 MHz, then reduce voltage until it starts crashing :-)

I know the Radeon 4670 was very efficient for its day, but not sure what might be a good upgrade. First idea that comes to mind is G45+integrated graphics?

benamoo - Sunday, March 10, 2013

Thanks for the info, and sorry for the super-late reply! About the GPU upgrade, the best option seems to be the AMD Radeon HD 7750. It's really power-efficient and is the fastest graphics card right now that doesn't require an auxiliary power input.

tech.noob.fella - Wednesday, January 16, 2013

how much of a difference will Haswell make to graphics performance if my computer already has a discrete graphics unit?

themossie - Wednesday, January 16, 2013

From Core 2 Duo to Haswell, or something else? What kind of programs do you run? You could be CPU, GPU, IO or RAM limited depending on the workload.

tech.noob.fella - Wednesday, January 16, 2013

Ivy Bridge... I don't actually have one, just wanted to know if I should grab the currently shown Series 7 Chronos/Ultra or wait for Haswell-equipped ones... how much will the difference be?

themossie - Wednesday, January 16, 2013

Since the Series 7 Chronos and Ultra both have a discrete GPU, the difference between the Ivy Bridge and Haswell integrated graphics won't matter at all.

lukarak - Wednesday, January 16, 2013

I'm using a 4-year-old X58 i7-920 system. It has since been upgraded with 24 GB of the cheapest RAM and a new graphics card. Aside from USB 3, I don't see any reason to upgrade in the next 4 years. Long gone are the days when you couldn't run MP3s on a 486, a DivX on a Pentium II 266, or a 1080p x264 on a C2D in a laptop.