Corsair Neutron & Neutron GTX: All Capacities Tested

by Kristian Vättö on December 19, 2012 1:10 PM EST

AnandTech Storage Bench 2011

Last year we introduced our AnandTech Storage Bench, a suite of benchmarks that took traces of real OS/application usage and played them back in a repeatable manner. Anand assembled the traces out of frustration with the majority of what we have today in terms of SSD benchmarks.

Although the AnandTech Storage Bench tests did a good job of characterizing SSD performance, they weren't stressful enough. All of the tests performed less than 10GB of reads/writes and typically involved only 4GB of writes specifically. That's not even enough to exceed the spare area on most SSDs. Most canned SSD benchmarks don't even come close to writing a single gigabyte of data, but that doesn't mean that simply writing 4GB is acceptable.

Originally we kept the benchmarks short enough that they wouldn't be a burden to run (~30 minutes) but long enough that they were representative of what a power user might do with their system. Later, however, we created what we refer to as the Mother of All SSD Benchmarks (MOASB). Rather than only writing 4GB of data to the drive, this benchmark writes 106.32GB. This represents the load you'd put on a drive after nearly two weeks of constant usage. And it takes a long time to run.

1) The MOASB, officially called AnandTech Storage Bench 2011—Heavy Workload, mainly focuses on the times when your I/O activity is the highest. There is a lot of downloading and application installing that happens during the course of this test. Our thinking was that it's during application installs, file copies, downloading, and multitasking with all of this that you can really notice performance differences between drives.

2) We tried to cover as many bases as possible with the software incorporated into this test. There's a lot of photo editing in Photoshop, HTML editing in Dreamweaver, web browsing, game playing/level loading (Starcraft II and WoW are both a part of the test), as well as general use stuff (application installing, virus scanning). We included a large amount of email downloading, document creation, and editing as well. To top it all off we even use Visual Studio 2008 to build Chromium during the test.

The test has 2,168,893 read operations and 1,783,447 write operations. The IO breakdown is as follows:

AnandTech Storage Bench 2011—Heavy Workload IO Breakdown

| IO Size | % of Total |
| --- | --- |
| 4KB | 28% |
| 16KB | 10% |
| 32KB | 10% |
| 64KB | 4% |

Only 42% of all operations are sequential; the rest ranges from pseudo to fully random (with most falling in the pseudo-random category). Average queue depth is 4.625 IOs, with 59% of operations taking place in an IO queue of 1.
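As a quick sanity check on the figures above, the read/write mix and the share of operations covered by the listed IO sizes can be worked out directly. This is only an illustrative sketch: the operation counts and percentages come from the text and table, while the names `READ_OPS`, `WRITE_OPS`, `IO_SIZE_SHARE`, and `op_mix` are made up for this example.

```python
# Operation counts quoted for the Heavy Workload trace.
READ_OPS = 2_168_893
WRITE_OPS = 1_783_447

# IO size shares exactly as listed in the breakdown table (percent of total).
IO_SIZE_SHARE = {"4KB": 28, "16KB": 10, "32KB": 10, "64KB": 4}


def op_mix(read_ops: int, write_ops: int) -> tuple[int, float, float]:
    """Return total operations plus the read and write fractions."""
    total = read_ops + write_ops
    return total, read_ops / total, write_ops / total


total, read_share, write_share = op_mix(READ_OPS, WRITE_OPS)
listed_share = sum(IO_SIZE_SHARE.values())

print(f"total operations: {total:,}")
print(f"reads:  {read_share:.1%}")
print(f"writes: {write_share:.1%}")
print(f"IO sizes listed in the table cover {listed_share}% of operations")
```

By operation count the trace is roughly 55% reads and 45% writes, and the four IO sizes shown account for 52% of all operations; the remaining sizes are not broken out in the table.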

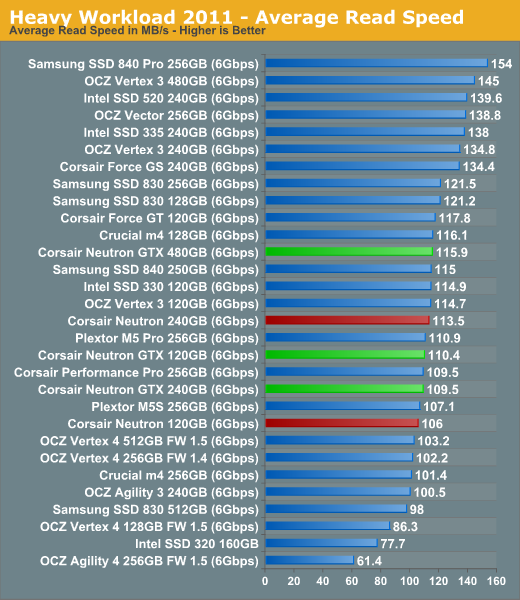

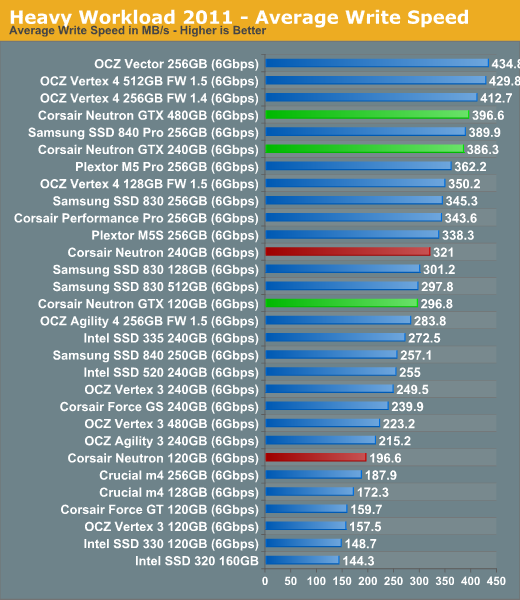

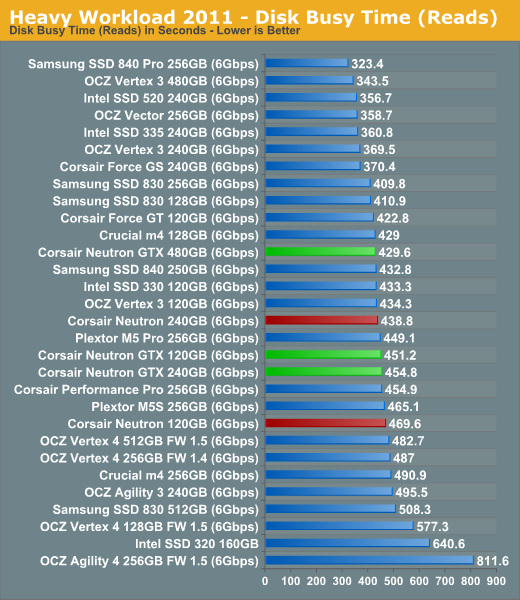

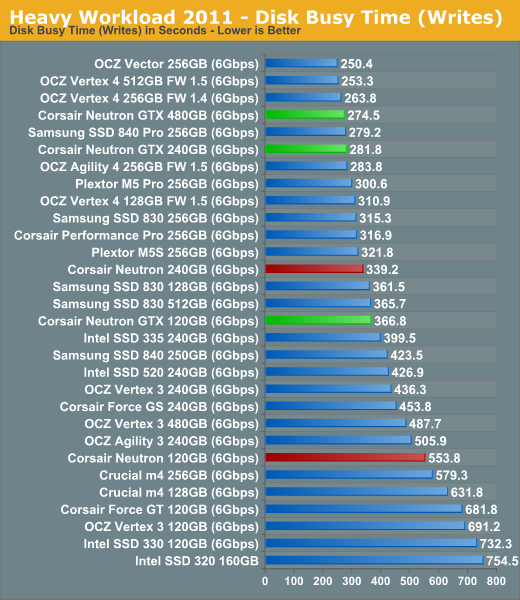

Many of you have asked for a better way to really characterize performance. Simply looking at IOPS doesn't really say much. As a result we're going to be presenting Storage Bench 2011 data in a slightly different way. We'll have performance represented as Average MB/s, with higher numbers being better. At the same time we'll be reporting how long the SSD was busy while running this test. These disk busy graphs will show you exactly how much time was shaved off by using a faster drive vs. a slower one during the course of this test. Finally, we will also break out performance into reads, writes, and combined. The reason we do this is to help balance out the fact that this test is unusually write intensive, which can often hide the benefits of a drive with good read performance.
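To make the link between the two metrics concrete, here is a minimal sketch that assumes the average data rate is simply the data moved divided by the time the disk was busy. That relationship is an assumption made for illustration, not a statement of how the benchmark tool computes its numbers, and the workload size and drive speeds below are hypothetical rather than measured results.

```python
def busy_time_s(data_mb: float, avg_rate_mb_s: float) -> float:
    """Disk busy time in seconds, assuming rate = data moved / busy time."""
    if avg_rate_mb_s <= 0:
        raise ValueError("average data rate must be positive")
    return data_mb / avg_rate_mb_s


def time_saved_s(data_mb: float, slow_rate: float, fast_rate: float) -> float:
    """Busy time shaved off by the faster drive on the same workload."""
    return busy_time_s(data_mb, slow_rate) - busy_time_s(data_mb, fast_rate)


# Hypothetical example values, not taken from the review's charts.
DATA_MB = 100_000.0
saved = time_saved_s(DATA_MB, slow_rate=200.0, fast_rate=250.0)
print(f"faster drive is busy {saved:.0f} s less on this workload")
```

Under that assumption a 25% higher average data rate cuts busy time by 20%, which is why the disk busy charts can make differences between drives easier to read than the MB/s charts alone.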

There's also a new light workload for 2011. This is a far more reasonable, typical everyday use case benchmark. It has lots of web browsing, photo editing (but with a greater focus on photo consumption), video playback, as well as some application installs and gaming. This test isn't nearly as write intensive as the MOASB, but it's still multiple times more write intensive than what we were running last year.

We don't believe that these two benchmarks alone are enough to characterize the performance of a drive, but hopefully along with the rest of our tests they will help provide a better idea. The testbed for Storage Bench 2011 has changed as well. We're now using a Sandy Bridge platform with full 6Gbps support for these tests.

AnandTech Storage Bench 2011—Heavy Workload

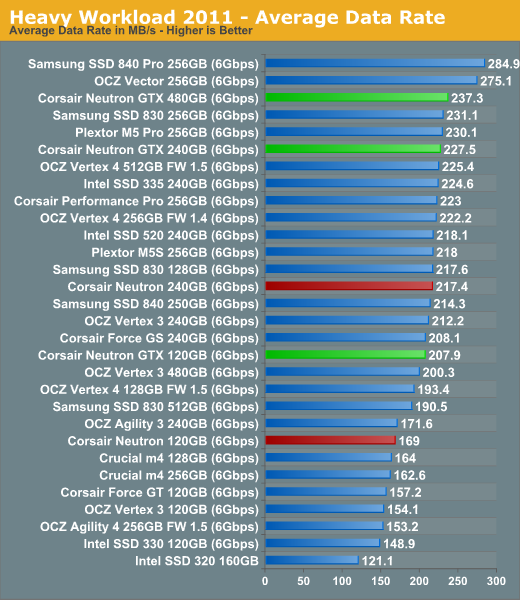

We'll start out by looking at average data rate throughout our new heavy workload test:

The 480GB Neutron GTX enjoys a small speed increase over the 240GB version, but in general the overall performance follows the same pattern as our synthetic tests. The only truly significant difference in performance is between the 120GB models, where the Neutron GTX beats the regular Neutron by 23%. The Neutron GTX is actually a very high performer at 120GB, although I have to note that we have not yet tested the 128GB versions of the Samsung SSD 840 Pro and OCZ Vector.

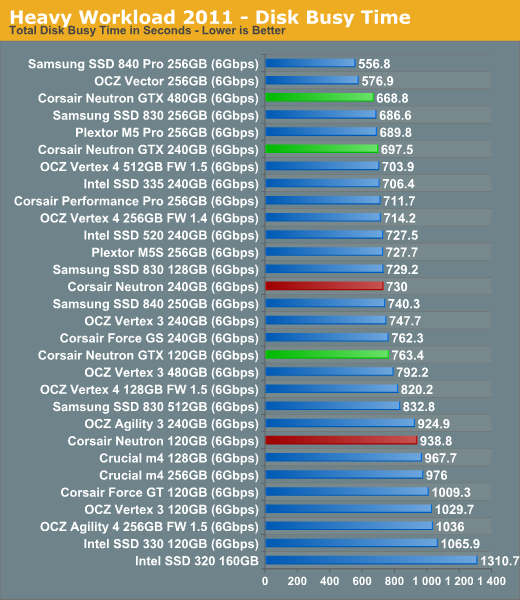

The next three charts just represent the same data, but in a different manner. Instead of looking at average data rate, we're looking at how long the disk was busy during this entire test. Note that disk busy time excludes any and all idles; this is just how long the SSD was busy doing something:

44 Comments


Oxford Guy - Sunday, December 23, 2012

Ad hominem much?

Plenty of buyers would be interested in knowing that the 830, for instance, tops the charts in terms of power usage under load, particularly given the fact that Samsung's "full specs" advertised number is impossibly low.

People have been tricked by this, which is exactly why Samsung publishes that low number.

Ever heard of laptop battery life? What about heat? I suppose not.

Kristian Vättö - Saturday, December 22, 2012

The figures Samsung reports are with Device Initiated Power Management (DIPM) enabled. That's a feature that is usually only found on laptops but it can be added to desktop systems as well.

With DIPM disabled, Samsung rates the idle power at 0.349W, which supports our figures (we got 0.31W).

The same goes for active power: Samsung rates it at 3.55W (sequential write) and 2.87W (4KB random write QD32). The 0.069W figure comes from the average power draw using Mobile Mark 2007, which is something we don't use.

Oxford Guy - Sunday, December 23, 2012

So, in a laptop, the load power for the 830 amazingly plummets from, what 5+ watts, to .13 watts?

That's really amazing. I guess the next thing to ask is why these amazing results aren't part of the published charts.

Cold Fussion - Saturday, December 22, 2012

I think the power consumption tests are particularly useless. How come you don't test power consumption under some typical workload and heavy workload so we can see how much energy they use?

Kristian Vättö - Sunday, December 23, 2012

Because we don't have the equipment for that. With a standard multimeter we can only record the average peak current, so we have to use an IOmeter test for each number (recording the peak while running e.g. Heavy suite would be useless).

Good power measurement equipment can cost thousands of dollars. Ultimately the decision is up to Anand but I don't think he is willing to spend that much money on just one test, especially when it can somewhat be tested with a standard multimeter. Besides, desktop users don't usually care about the power consumption at all, so that is another reason why such investment might not be the most worthwhile.

Oxford Guy - Sunday, December 23, 2012

And we know only desktop users buy SSDs. No one ever buys them for laptops.

lmcd - Monday, December 24, 2012

How about you buy the equipment for them, if it's such a great investment?

Cold Fussion - Tuesday, December 25, 2012

That line of thinking is flawed. If you're only catering to desktop users, why even present the power consumption figures at all? The 3-5W maximum power consumption of an SSD which will largely be idle is not at all significant compared to the 75 watts the CPU is pulling while gaming or the 150 watts the GPU is pulling.

The tests as they are serve no real purpose. It would be like trying to measure power-efficiency of a CPU purely by its maximum power consumption. I don't believe a basic datalogger is going to run into the 1000s.

Kristian Vättö - Tuesday, December 25, 2012

I didn't say we only cater to desktop users, but the fact is that some of our readers are desktop users and hence don't care about the power consumption tests. It's harder to justify buying expensive equipment when some will not be interested in the tests.

Don't get me wrong, I would buy the equipment in a heartbeat if someone gave me the money. However, I'm not the one pulling the strings on that. If you have suggestions on affordable dataloggers, feel free to post them. All I know is that the tool that was used in the Clover Trail efficiency article costs around $3000.

Cold Fussion - Tuesday, December 25, 2012

But it doesn't cater to mobile users because the data provided is simply not of any real use. I can go to my local retail electronics store and buy a data-logging multimeter for $150-$250 AUD. I am almost certain that you can purchase one cheaper than that in the US from a retail outlet or online.