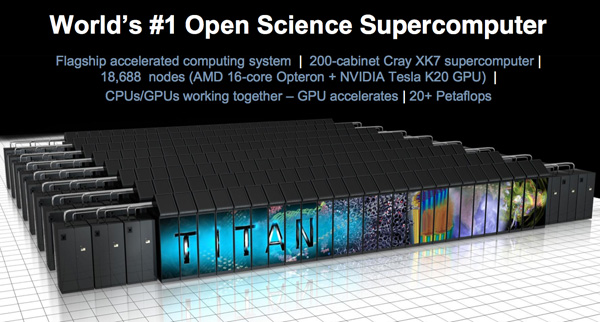

Inside the Titan Supercomputer: 299K AMD x86 Cores and 18.6K NVIDIA GPUs

by Anand Lal Shimpi on October 31, 2012 1:28 AM EST - Posted in CPUs, IT Computing, Cloud Computing, HPC, GPUs, NVIDIA

Applying for Time on Titan

The point of building supercomputers like Titan is to give scientists and researchers access to hardware they wouldn't otherwise have. In order to actually book time on Titan, you have to apply for it through a proposal process.

Time on Titan is allocated through an annual call for proposals. The machine is available to anyone who wants to use it, although the problem you're trying to solve needs to be approved by Oak Ridge.

If you want time on Titan, you write a proposal through a program called INCITE. In the proposal you ask to use Titan, the supercomputer at Argonne National Lab, or both. You also outline the problem you're trying to solve and why it's important. Researchers have to describe their process and algorithms as well as their readiness to use such a monster machine. Almost any program will run on an ordinary computer, but the bar for needing a supercomputer with hundreds of thousands of cores is very high. As part of the proposal process you have to show that you've already run your code on machines that are smaller but similar in nature (e.g. 1/3 the scale of Titan).

Your proposal would then be reviewed twice - once for computational readiness (can it run on Titan) and once for scientific peer review. The review boards rank all of the proposals received, and based on those rankings time is awarded on the supercomputers.

Requested time exceeds the available compute time by around 3x, so the proposal process is highly competitive. The call for proposals goes out once a year in April, with proposals due by the end of June. Time on the supercomputers is awarded at the end of October, and accounts go live on January 1. Proposals can cover 1 - 3 years, although multiyear proposals need to be renewed each year (proving the time has been useful, sharing results, etc.).

Programs that run on Titan are typically required to run on at least 1/5 of the machine. There are smaller supercomputers available that can be used for less demanding tasks. Given how competitive the proposal process is, ORNL wants to ensure that those using Titan actually have a need for it.
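The 1/5 guideline is easy to turn into a concrete job size. Below is a minimal sketch that assumes the fraction is counted in whole nodes and takes the 18,688-node figure quoted in the reader comments; both are assumptions, and ORNL's actual accounting may differ.

```python
# Hypothetical check for the "at least 1/5 of the machine" guideline.
# Assumptions: the fraction is measured in whole nodes, and Titan has 18,688 nodes.
TITAN_NODES = 18_688

def min_job_nodes(total_nodes: int = TITAN_NODES, num: int = 1, den: int = 5) -> int:
    """Smallest whole number of nodes that is at least num/den of the machine."""
    # Integer ceiling division avoids floating-point rounding surprises.
    return (total_nodes * num + den - 1) // den

def needs_titan(job_nodes: int, total_nodes: int = TITAN_NODES) -> bool:
    """True if the job is large enough to meet the 1/5 guideline."""
    return job_nodes >= min_job_nodes(total_nodes)

print(min_job_nodes())  # 3738
```

Under these assumptions a 3,737-node job falls just short, and anything smaller is a candidate for one of the smaller supercomputers mentioned above.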

Once time is booked, jobs are scheduled in batch and researchers get their results whenever their turn comes up.

The end user costs for using Titan depend on what you're going to do with the data. If you're a research scientist and will publish your findings, the time is awarded free of charge. All ORNL asks is that you provide quarterly updates and that you credit the lab and the Department of Energy for providing the resource.

If, on the other hand, you're a private company doing proprietary work, you have to pay for your time on the machine. On Jaguar the rate was $0.05 per core-hour; with Titan, ORNL is moving to a node-hour billing rate, since the addition of GPUs throws a wrench into core-hour billing.
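To see why GPUs complicate core-hour billing, here is a rough sketch that prices the same job at Jaguar's $0.05 per core-hour. It assumes one 16-core Opteron and one K20X-class GPU (2,688 CUDA cores) per node. The 80-cent node-hour rate is purely hypothetical (one node's CPU cores at the old core-hour rate), not ORNL's actual Titan price.

```python
# Rough billing comparison. Only the $0.05/core-hour Jaguar rate comes from the article;
# the per-node core counts and the derived node-hour rate are illustrative assumptions.
RATE_CENTS_PER_CORE_HOUR = 5   # $0.05 per core-hour (Jaguar)
CPU_CORES_PER_NODE = 16        # one 16-core Opteron per Titan node
CUDA_CORES_PER_NODE = 2_688    # assumption: one K20X-class GPU per node

def core_hour_cents(nodes: int, hours: int, cores_per_node: int) -> int:
    """Bill every counted core on every node at the Jaguar core-hour rate."""
    return nodes * hours * cores_per_node * RATE_CENTS_PER_CORE_HOUR

def node_hour_cents(nodes: int, hours: int, cents_per_node_hour: int) -> int:
    """Bill a flat rate per node-hour, regardless of what is inside the node."""
    return nodes * hours * cents_per_node_hour

# Hypothetical node-hour rate: the CPU cores of one node at the old core-hour rate.
NODE_HOUR_CENTS = CPU_CORES_PER_NODE * RATE_CENTS_PER_CORE_HOUR  # 80 cents

job = dict(nodes=1_000, hours=10)
cpu_only = core_hour_cents(cores_per_node=CPU_CORES_PER_NODE, **job)
with_gpu = core_hour_cents(cores_per_node=CPU_CORES_PER_NODE + CUDA_CORES_PER_NODE, **job)
flat = node_hour_cents(cents_per_node_hour=NODE_HOUR_CENTS, **job)
print(cpu_only / 100, with_gpu / 100, flat / 100)  # 8000.0 1352000.0 8000.0
```

Counting CUDA cores as "cores" inflates the bill about 169x for the same node-hours, while ignoring them prices the GPU at zero. A per-node rate sidesteps the question of what a "core" is.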

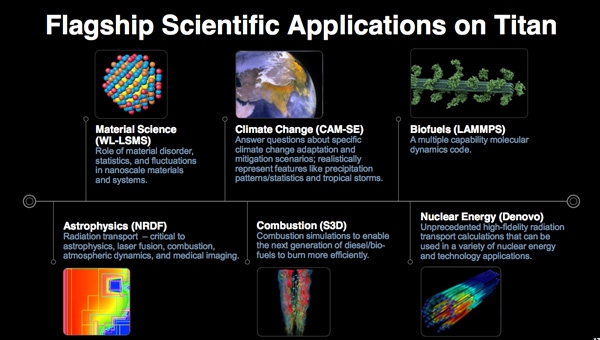

Supercomputing Applications

In the gaming space we use additional compute to model more accurate physics and graphics. In supercomputing, the situation isn't very different. Many of ORNL's supercomputing projects model the physically untestable (either for scale or safety reasons). Instead of more accurately modeling the impact of an explosion on an enemy, the workloads run at ORNL use advances in compute to better model the atmosphere, a nuclear reactor or a decaying star.

I never really had a good idea of what sort of research was done on supercomputers. Luckily I had the opportunity to sit down with Dr. Bronson Messer, an astrophysicist looking forward to spending some time on Titan. Dr. Messer's work focuses specifically on stellar decay, or what happens immediately following a supernova. His work is particularly important because many of the elements we take for granted weren't present in the early universe; they were forged in stars, including in supernova explosions. Understanding supernova explosions gives us unique insight into where we came from.

Dr. Messer's studies make use of CUDA Fortran, although the total amount of code that runs on GPUs is pretty small. A codebase may be 20K - 1M lines, but within it you're only looking at tens of lines of CUDA code for GPU acceleration. Porting those small segments to run on GPUs yields huge speedups (the GPU code is short because it is typically the body of a loop whose iterations are pushed out in parallel to the GPU instead of executing serially). Dr. Messer tells me that actually porting his code to CUDA isn't all that difficult; after all, there aren't that many lines to worry about. What is time intensive is rearranging all of the data to make the code more GPU friendly, and that's also easy to get wrong. Interestingly enough, many of the changes that made his code more GPU friendly also improved CPU performance thanks to better cache locality. Dr. Messer saw a 2x improvement in his CPU code simply by making data structures more GPU friendly.
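A common form of this kind of data restructuring is converting an array of structures (AoS) into a structure of arrays (SoA); the article doesn't say exactly which changes Dr. Messer made, so treat this as an illustration of the general technique. The sketch uses Python's standard `array` module as a stand-in for Fortran arrays, and the zone field names are hypothetical.

```python
from array import array

# Hypothetical per-zone fields; these names are illustrative, not from Dr. Messer's code.
FIELDS = ("density", "temperature", "electron_fraction")

def aos_to_soa(aos: array) -> dict:
    """Split an interleaved array-of-structures buffer into one contiguous array per field."""
    n = len(FIELDS)
    if len(aos) % n:
        raise ValueError("buffer length is not a multiple of the field count")
    # aos[i::n] copies field i from every record into its own contiguous array.
    return {name: aos[i::n] for i, name in enumerate(FIELDS)}

def scale_temperature(soa: dict, factor: float) -> None:
    """Update one field in place. Each iteration is independent of the others,
    which is the kind of loop that can be handed to a GPU one element per thread."""
    temps = soa["temperature"]
    for i in range(len(temps)):
        temps[i] *= factor

# Two zones stored record by record: (density, temperature, electron_fraction), ...
aos = array("d", [1.0, 10.0, 0.5, 2.0, 20.0, 0.4])
soa = aos_to_soa(aos)
scale_temperature(soa, 2.0)
print(list(soa["temperature"]))  # [20.0, 40.0]
```

In the SoA layout, a loop over one field reads consecutive memory instead of striding past the other fields. On a GPU that lets neighboring threads read neighboring elements; on a CPU it uses each cache line fully, which is one plausible reason the same changes sped up the CPU code.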

Many of the applications that will run on Titan are similar in nature to Dr. Messer's work. At ORNL, what the researchers really care about are covers of Nature and Science. There are researchers focused on how different types of fuels combust at a molecular level. I met another group focused on extracting more efficiency out of nuclear reactors. These are all extremely complex problems that can't easily be experimented on (e.g. hey, let's just try not replacing uranium rods for a little while longer and see what happens to our nuclear reactor). Scientists at ORNL and around the world working on Titan are fundamentally looking to model reality as accurately as possible so that they can experiment on it. If you think about simulating every quark, atom and molecule in whatever system you're trying to model (e.g. fuel in a combustion engine), there's a ton of data to keep track of. You have to look at how each of these elementary constituents affects the others when exposed to whatever is happening in the system at the time. It's these large-scale problems that are fundamentally driving supercomputer performance forward, and there's simply no letting up. Even with two orders of magnitude more performance than Titan delivers with ~300K CPU cores and 50M+ GPU cores, there wouldn't be enough compute power to simulate most of the applications that run on Titan in their entirety. Researchers are still limited by the systems they run on and thus have to limit the scope of their simulations. Maybe they only look at one slice of a star, or one slice of the Earth's atmosphere, and simulate that fraction of the whole. Go too narrow and you lose important understanding of the system as a whole. Go too broad and you lose the fidelity that gives you accurate results.
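The headline core counts follow directly from the per-node configuration. A quick check, assuming 18,688 nodes (the figure quoted in the reader comments), each with one 16-core Opteron and one GPU. The "50M+" figure matches a 2,688-core Tesla K20X, while the 46.6M total debated in the comments corresponds to a 2,496-core Tesla K20.

```python
# Core-count arithmetic for Titan. Node count and per-node parts are assumptions
# taken from the reader comments and NVIDIA's published Tesla K20/K20X core counts.
NODES = 18_688
OPTERON_CORES_PER_NODE = 16
CUDA_CORES_PER_GPU = {"K20": 2_496, "K20X": 2_688}

def machine_cores(gpu_model: str, nodes: int = NODES) -> tuple:
    """Return (total x86 cores, total CUDA cores) assuming one GPU per node."""
    return nodes * OPTERON_CORES_PER_NODE, nodes * CUDA_CORES_PER_GPU[gpu_model]

print(machine_cores("K20X"))  # (299008, 50233344)
print(machine_cores("K20"))   # (299008, 46645248)
```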

Given infinite time you could run anything regardless of hardware, but for researchers (who happen to be human) time isn't infinite. Faster hardware can shorten run times to more manageable amounts. For example, reducing a 6 month runtime (not unheard of for many of these projects) to something that completes in a single month can have a dramatic impact on productivity. Dr. Messer put it best when he told me that keeping human beings engaged for a month is a much different proposition than keeping human beings engaged for half a year.

Other types of applications that will run on Titan don't need enormous runtimes; instead, they need lots of repetitions. Hurricane simulation is one of those types of problems. ORNL was in between generations of supercomputers at one point and donated some compute time to the National Tornado Center in Oklahoma during that transition. During the time they had access to the ORNL supercomputer, their forecasts improved tremendously.

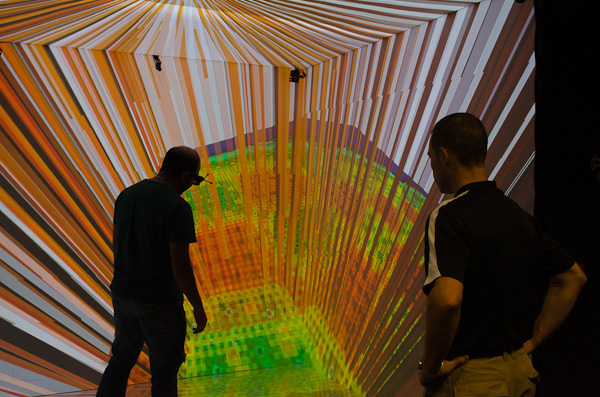

ORNL also has a neat visualization room where you can plot, in 3D, the output from work you've run on Titan. The problem with running workloads on a supercomputer is the output can be terabytes of data - which tends to be difficult to analyze in a spreadsheet. Through 3D visualization you're able to get a better idea of general trends. It's similar to the motivation behind us making lots of bar charts in our reviews vs. just publishing a giant spreadsheet, but on a much, much, much larger scale.

The image above is actually showing some data run on Titan simulating a pressurized water nuclear reactor. The video below explains a bit more about the data and what it means.

130 Comments


Ryan Smith - Wednesday, October 31, 2012

We have other reasons to back our numbers, though I can't get into them. Suffice it to say, if we didn't have 100% confidence we would not have used it.

RussianSensation - Wednesday, October 31, 2012

Hey Ryan, what about this? http://www.brightsideofnews.com/news/2012/10/29/ti...

The Jaguar is thus renamed Titan, and the sheer numbers are quite impressive:

- 46,645,248 CUDA Cores (yes, that's 46 million)
- 299,008 x86 cores
- 91.25 TB ECC GDDR5 memory
- 584 TB Registered ECC DDR3 memory
- Each x86 core has 2GB of memory

1 node of the new Cray XK7 system consists of one 16-core AMD Opteron CPU and one Nvidia Tesla K20 compute card.

The Titan supercomputer has 18,688 nodes.

46,645,248 CUDA Cores / 18,688 Nodes = 2,496 CUDA cores per Tesla K20 card.

Ryan Smith - Thursday, November 1, 2012

Among other things: note that Titan has 6GB of memory per K20 (and this is published information). http://nvidianews.nvidia.com/Releases/NVIDIA-Power...

"The upgrade includes the Tesla K20 GPU accelerators, a replacement of the compute modules to convert the system’s 200 cabinets to a Cray XK7 supercomputer, and 710 terabytes of memory."

18,688 nodes, each with 32GB of RAM + 6GB of VRAM = 710,144 GB

(Press agencies are bad about using powers of 10, hence "710" TB.)
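The memory total above is easy to reproduce, and the same arithmetic reconciles the Bright Side of News figures quoted earlier in the thread. A sketch, assuming 32 GB of DDR3 per node and reading "GB" as 2^30 bytes when converting to binary terabytes (TiB); the 5 GB and 6 GB values are the board memory of the Tesla K20 and K20X respectively.

```python
# Memory arithmetic for Titan. Per-node figures are assumptions taken from this thread.
NODES = 18_688
HOST_GB_PER_NODE = 32             # DDR3 per node, per the comment above
GPU_GB = {"K20": 5, "K20X": 6}    # GDDR5 per card for each Tesla model

def total_gb(gpu_model: str, nodes: int = NODES) -> int:
    """Host plus GPU memory across the whole machine, in GB."""
    return nodes * (HOST_GB_PER_NODE + GPU_GB[gpu_model])

def gb_to_tib(gb: int) -> float:
    """Convert GB (read as GiB) to binary terabytes by dividing by 1024."""
    return gb / 1024

print(total_gb("K20X"))                      # 710144, i.e. "710 TB" in powers of 10
print(gb_to_tib(NODES * HOST_GB_PER_NODE))   # 584.0, the quoted DDR3 total
print(gb_to_tib(NODES * GPU_GB["K20"]))      # 91.25, the quoted GDDR5 total
```

In other words, the 584 TB and 91.25 TB figures work out as binary-terabyte totals that assume a 5 GB K20, consistent with that site's 2,496 cores per GPU; with 6 GB per card, the GPU total would be 109.5 TiB.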

Ryan Smith - Thursday, November 1, 2012

The 6GB number is also in the slide deck: http://images.anandtech.com/reviews/video/NVIDIA/T...

RussianSensation - Wednesday, October 31, 2012

Tom's Hardware reported that "Titan Supercomputer Packs 46,645,248 Nvidia CUDA Cores": http://www.tomshardware.com/news/oak-ridge-ORNL-nv...

46,645,248 CUDA Cores / 18,688 Tesla K20s also gives 2,496 CUDA cores per GPU, instead of 2,688.

ypsylon - Wednesday, October 31, 2012

Great article. Fantastic way of showing to us tiny PC users what really big stuff looks like. Data center is one thing, but my word this stuff is, is... well that is Ultimate Computing Pr0n. For people who will never ever have a chance to visit one of the super computer centers it is quite something. Enjoyed that very much!

@Guspaz

If we get that kind of performance in phones then it is really scary prospect. :D

twotwotwo - Wednesday, October 31, 2012

We currently have 1-billion-transistor chips. We'd get from there to 128 trillion, or Titan-magnitude computers, after 17 iterations of Moore's Law, or about 25 years. If you go 25 years back, it's definitely enough of a gap that today's technology looks like flying cars to folks of olden times. So even if 128-trillion-transistor devices aren't exactly what happens, we'll have *something* plenty exciting on the other end.

*Something*, but that may or may not be huge computers. It may not be an easy exponential curve all the way. We'll almost certainly put some efficiency gains towards saving cost and energy rather than increasing power, as we already are now. And maybe something crazy like quantum computers, rather than big conventional computers, will be the coolest new thing.

I don't imagine those powerful computers, whatever they are, will all be doing simulations of physics and weather. One of the things that made some of today's everyday tech hard to imagine was that the inputs involved (social graphs, all the contents of the Web, phones' networks and sensors) just weren't available--would have been hard, before 1980, to imagine trivially having a metric of your connectedness to an acquaintance (like Facebook's 'mutual friends') or having ads matching your interest.

I'm gonna say that 25 years out the data, power, and algorithms will be available to everyone to make things that look like Strong AI to anyone today. Oh, and the video games will be friggin awesome. If we don't all blow each other up in the next couple-and-a-half decades, of course. Any other takers? Whoever predicts it best gets a beer (or soda) in 25 years, if practical.

JAH - Wednesday, October 31, 2012

Must've been a fun trip for a geek/nerd. I'm jealous!

Question, what do they do with the old CPUs that got replaced? Resale, recycled, donation?

silverblue - Wednesday, October 31, 2012

I'd wondering which model Opterons they threw in there. The Interlagos chips were barely faster and used more power than the Magny-Cours CPUs they were destined to replace, though I'm sure these are so heavily taxed that the Bulldozer architecture would shine through in the end.

Okay, I've checked - these are 6274s, which are Interlagos and clocked at 2.2GHz base with an ACP of 80W and a TDP of 115W apiece. This must be the CPU purchase mentioned prior to Bulldozer's launch.

silverblue - Wednesday, October 31, 2012

I WAS wondering, rather. Too early for posting, it seems.