Intel's Haswell Architecture Analyzed: Building a New PC and a New Intel

by Anand Lal Shimpi on October 5, 2012 2:45 AM EST

TSX

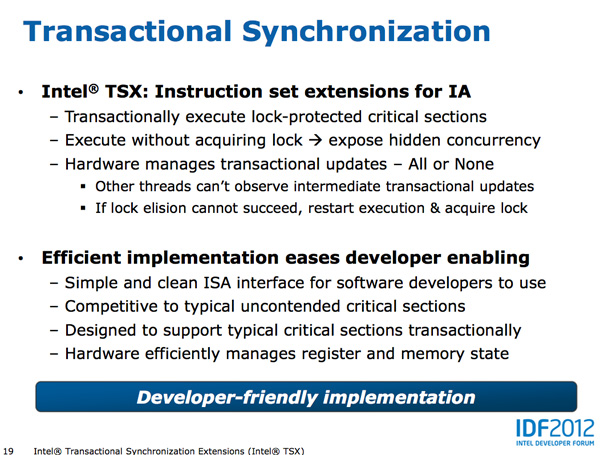

Johan did a great job explaining Haswell's Transactional Synchronization eXtensions (TSX), so I won't go into as much depth here. The basic premise is simple, although the implementation is quite complex.

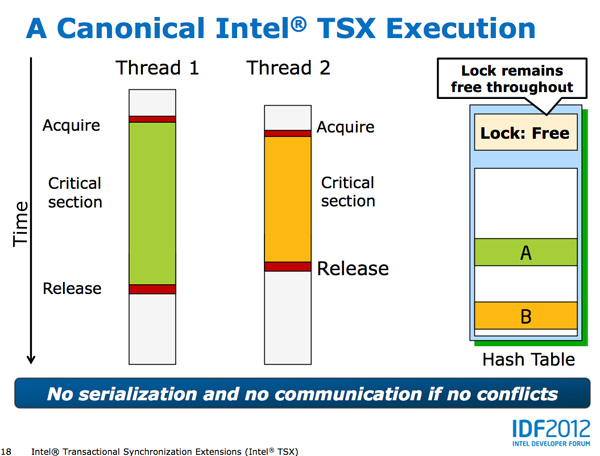

It's easy to demand well-threaded applications from software vendors, but actually writing code that scales well across many threads isn't easy. Parallelizing truly independent tasks is the low-hanging fruit; the trouble comes from tasks that all access the same data structure. With multiple cores accessing that structure independently of one another, there's a risk of two different cores writing to the same part of it at the same time. Only one of those writes can win, so an update can be lost or the structure left inconsistent, and dealing with this concurrent access problem can get hairy.

The simplest way to deal with it is to lock the entire data structure as soon as one core starts accessing it and give only that core write access until it's done. Other cores still get access to the data structure, but serially rather than in parallel, which avoids any data integrity issues.
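As a rough illustration of this coarse-grained approach, here is a minimal C sketch using POSIX threads. The shared "data structure" is a hypothetical table of counters, and the names `table_add`, `coarse_worker` and `run_coarse` are invented for the example. One mutex guards the whole table, so every update is serialized even when two threads touch completely different slots.

```c
#include <assert.h>
#include <pthread.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical shared structure: a table of counters updated by every
 * thread. A single mutex guards the whole table (coarse-grained locking). */
#define NUM_SLOTS 64
#define ITERS 10000
#define MAX_THREADS 16

static long table[NUM_SLOTS];
static pthread_mutex_t table_lock = PTHREAD_MUTEX_INITIALIZER;

/* Every update takes the one global lock, so threads are serialized even
 * when they touch different slots. */
static void table_add(size_t slot, long delta)
{
    pthread_mutex_lock(&table_lock);
    table[slot % NUM_SLOTS] += delta;
    pthread_mutex_unlock(&table_lock);
}

static void *coarse_worker(void *arg)
{
    size_t id = (size_t)(uintptr_t)arg;
    for (size_t i = 0; i < ITERS; i++)
        table_add(id + i, 1);          /* spread updates across slots */
    return NULL;
}

/* Runs nthreads workers concurrently and returns the sum of all slots. */
static long run_coarse(size_t nthreads)
{
    pthread_t tids[MAX_THREADS];
    assert(nthreads <= MAX_THREADS);

    for (size_t i = 0; i < NUM_SLOTS; i++)
        table[i] = 0;
    for (size_t t = 0; t < nthreads; t++) {
        int rc = pthread_create(&tids[t], NULL, coarse_worker,
                                (void *)(uintptr_t)t);
        assert(rc == 0);
        (void)rc;
    }
    for (size_t t = 0; t < nthreads; t++)
        pthread_join(tids[t], NULL);   /* join makes the updates visible */

    long sum = 0;
    for (size_t i = 0; i < NUM_SLOTS; i++)
        sum += table[i];
    return sum;
}
```

`run_coarse(4)` should always return `4 * ITERS`, because no update is ever lost; the cost is that the four threads spend most of their time waiting on `table_lock` instead of running in parallel.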

This is by far the easiest way to deal with the problem of multiple threads accessing the same data structure, but it also prevents performance from scaling across multiple threads/cores. As focused as Intel is on increasing single-threaded performance, a lot of die area goes to waste if applications don't scale well with more cores.

Software developers can instead implement finer-grained locking of data structures, for example locking only the part of the structure being modified, but doing so obviously increases the complexity of their code.
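A minimal sketch of the finer-grained alternative, again in C with POSIX threads and again on a hypothetical counter table (`bucket_add`, `fine_worker` and `run_fine` are illustrative names, not from any real codebase). Each bucket carries its own mutex, so threads only wait on each other when they hit the same bucket.

```c
#include <assert.h>
#include <pthread.h>
#include <stddef.h>
#include <stdint.h>

#define NUM_BUCKETS 64
#define ITERS 10000
#define MAX_THREADS 16

/* Hypothetical structure: each bucket has its own lock (fine-grained). */
struct bucket {
    pthread_mutex_t lock;
    long value;
};

static struct bucket buckets[NUM_BUCKETS];

/* Only the bucket being modified is locked; other buckets stay available. */
static void bucket_add(size_t key, long delta)
{
    struct bucket *b = &buckets[key % NUM_BUCKETS];
    pthread_mutex_lock(&b->lock);
    b->value += delta;
    pthread_mutex_unlock(&b->lock);
}

static void *fine_worker(void *arg)
{
    size_t id = (size_t)(uintptr_t)arg;
    for (size_t i = 0; i < ITERS; i++)
        bucket_add(id + i, 1);
    return NULL;
}

/* Initializes the per-bucket locks, runs nthreads workers, returns the
 * total, and destroys the locks so the function can be called again. */
static long run_fine(size_t nthreads)
{
    pthread_t tids[MAX_THREADS];
    assert(nthreads <= MAX_THREADS);

    for (size_t i = 0; i < NUM_BUCKETS; i++) {
        pthread_mutex_init(&buckets[i].lock, NULL);
        buckets[i].value = 0;
    }
    for (size_t t = 0; t < nthreads; t++) {
        int rc = pthread_create(&tids[t], NULL, fine_worker,
                                (void *)(uintptr_t)t);
        assert(rc == 0);
        (void)rc;
    }
    for (size_t t = 0; t < nthreads; t++)
        pthread_join(tids[t], NULL);

    long sum = 0;
    for (size_t i = 0; i < NUM_BUCKETS; i++) {
        sum += buckets[i].value;
        pthread_mutex_destroy(&buckets[i].lock);
    }
    return sum;
}
```

The extra complexity shows up as soon as a single operation has to touch two buckets (moving an entry between them, say): it must take both locks, always in a consistent order, or two threads can deadlock. That bookkeeping is the burden lock elision tries to move into hardware.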

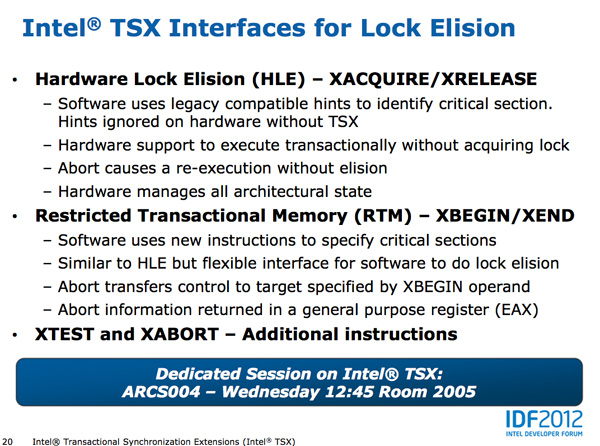

Haswell's TSX instructions allow the developer to shift much of the complexity of managing locks to the CPU. Using the new Hardware Lock Elision (HLE) and its XACQUIRE/XRELEASE prefixes, developers can mark a lock-protected section of code for transactional execution. Haswell will then execute the code as if the lock had not been taken, and if it completes without issues the CPU commits all writes to memory and the code enjoys the performance benefits. If another thread conflicts with the transaction, for example by writing to memory the transaction is using, the transaction is aborted and the code is re-executed traditionally, actually acquiring the lock. XACQUIRE/XRELEASE are instruction prefixes that earlier architectures simply ignore, so the same code runs as an ordinary lock on older CPUs and backwards compatibility isn't a problem.
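To make the HLE idea concrete, here is a minimal spinlock sketch in C modeled on the pattern in GCC's documentation, which exposes HLE through the `__ATOMIC_HLE_ACQUIRE`/`__ATOMIC_HLE_RELEASE` flags on its `__atomic` builtins for x86 (GCC also has an `-mhle` option for HLE support). The `#ifdef` fallback, the `cpu_relax` helper and the names `elided_lock`, `elided_unlock` and `run_elided` are assumptions made for this example. On a CPU without HLE, or a compiler without those flags, it is simply an ordinary spinlock, which mirrors the backwards-compatibility point above.

```c
#include <assert.h>
#include <pthread.h>
#include <stddef.h>

/* Use GCC's x86 HLE flags when available; otherwise fall back to plain
 * acquire/release ordering (an ordinary spinlock). */
#if defined(__ATOMIC_HLE_ACQUIRE) && defined(__ATOMIC_HLE_RELEASE)
#define LOCK_ORDER   (__ATOMIC_ACQUIRE | __ATOMIC_HLE_ACQUIRE)
#define UNLOCK_ORDER (__ATOMIC_RELEASE | __ATOMIC_HLE_RELEASE)
#else
#define LOCK_ORDER   __ATOMIC_ACQUIRE
#define UNLOCK_ORDER __ATOMIC_RELEASE
#endif

#define ITERS 10000
#define MAX_THREADS 16

static int lockvar;   /* 0 = free, 1 = held */
static long counter;  /* data protected by lockvar */

static void cpu_relax(void)
{
#if defined(__x86_64__) || defined(__i386__)
    __builtin_ia32_pause();            /* x86 PAUSE hint for spin loops */
#endif
}

static void elided_lock(int *lock)
{
    /* With the XACQUIRE prefix an HLE-capable core may elide the write to
     * *lock and run the critical section transactionally; otherwise this
     * is a normal atomic exchange. */
    while (__atomic_exchange_n(lock, 1, LOCK_ORDER)) {
        while (__atomic_load_n(lock, __ATOMIC_RELAXED))
            cpu_relax();               /* wait until the lock looks free */
    }
}

static void elided_unlock(int *lock)
{
    /* XRELEASE-prefixed store ends the elided region (commit). */
    __atomic_store_n(lock, 0, UNLOCK_ORDER);
}

static void *elided_worker(void *arg)
{
    (void)arg;
    for (int i = 0; i < ITERS; i++) {
        elided_lock(&lockvar);
        counter++;                     /* critical section */
        elided_unlock(&lockvar);
    }
    return NULL;
}

/* Runs nthreads workers and returns the final counter value. */
static long run_elided(size_t nthreads)
{
    pthread_t tids[MAX_THREADS];
    assert(nthreads <= MAX_THREADS);

    counter = 0;
    for (size_t t = 0; t < nthreads; t++) {
        int rc = pthread_create(&tids[t], NULL, elided_worker, NULL);
        assert(rc == 0);
        (void)rc;
    }
    for (size_t t = 0; t < nthreads; t++)
        pthread_join(tids[t], NULL);
    return counter;
}
```

Correctness is the same with or without elision: `run_elided(4)` should return `4 * ITERS`. Note that a single shared counter is a worst case for HLE, since every thread writes the same location and the transactions keep conflicting and falling back to the real lock; the gains would come from critical sections where threads usually touch different data.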

Like most new instructions, TSX is going to take a while to take off, as we'll need to see significant adoption of Haswell platforms as well as developers embracing the new instructions. If it is implemented, however, TSX stands to improve performance in everything from client to server workloads. This is definitely one to watch for and be excited about.

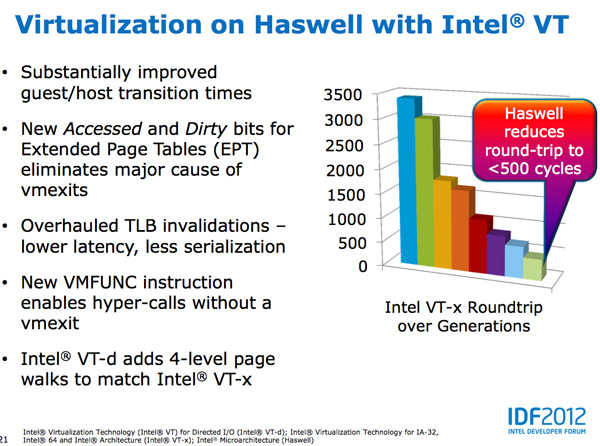

Haswell also continues improvements in virtualization performance, including big decreases to guest/host transition times.

245 Comments

Penti - Saturday, October 6, 2012

Also, FPU/SIMD has been a large part of later ARM designs and implementations. It's a really big deal, as we saw with the chips lacking some of those parts. You shouldn't forget how important those bits are. Others have failed because they didn't take it seriously, and that was 15-20 years ago. That doesn't mean they are fighting x86-64 chips in high-end servers and workstations yet, though. We will certainly see them entering that market by 2015.

Arbee - Friday, October 5, 2012

Cortex A9's big IPC improvement came from going out-of-order, which kind of ruins your argument.

Similarly, the X360/PS3 PowerPC chips are strictly in-order and super ultra slow as a result: at 3.2 GHz they can't match an out-of-order PowerMac G5 at 2.2 GHz. But I suspect that wasn't the point. Sony and MS can claim the eye-popping (in 2006) 3.2 GHz figure, and the heat production is certainly lower than a PPC G5's.

wumpus - Friday, October 5, 2012

Has anyone seen an A9 in the wild? I don't doubt huge IPC improvements (back when O-O-O was new, it tended to double performance). My point is that it will kill GIPS/W, and that Intel can much more easily design a chip that beats it in both raw performance and GIPS/W (note that your mention of heat production agrees with me).

Also note that I suspect the goal of the A9 is to keep power low enough to stay out of where Intel wants to go. A rough guess is that ARM might have a chance with dual-issue O-O-O, but past that (roughly where the Pentium Pro was designed) they can't really go.

ElvenLemming - Friday, October 5, 2012

The Cortex A9 has been in most major phone/tablet SoCs for the past two or so years: Apple's A5, A5X; Samsung's Exynos 4210, 4212, 4412; TI's OMAP 4 series; Nvidia's Tegra 2 and 3.

Cortex A15 is probably what you were thinking of that we've yet to see out in the wild. It's out-of-order like the A9, but with a great deal of other improvements.

ericore - Friday, October 5, 2012

Currently AMD has the upper hand in the notebook segment on battery life. Haswell changes that, but as is always the case with Intel, they will be pricey. And that's why AMD will still have 50% of the market: vendors are cheap.

Power savings are much less relevant on the desktop front; I don't care so much about power as I do about heat. The AMD X4 700 is an awesome 4-core CPU for $75. Technically, it has all that you need from a CPU. Add a Radeon 7770 (again cheap) and you're golden. Yeah, Intel is faster, but both Intel and Nvidia have shitty low-end products, and that's even more true when you think of Atom. 5-15% more single-threaded performance is not going to bury AMD, lol.

On top of that, AMD has an Atom KILLER and contracts with all the major console vendors.

Haswell will have surprisingly little impact on AMD. What I am saying is that if you look at your own expectations, you'll realize they were highly inflated, and you'll wonder why it didn't do more damage to AMD; I've explained why. Nevertheless, Broadwell is a significant threat, and we'll probably see AMD start to lose market share (much more than with Haswell) unless AMD can fight back. It will, but nobody knows if it will be enough.

A5 - Friday, October 5, 2012

Uh, wow.

Zink - Saturday, October 6, 2012

http://www.tomshardware.com/reviews/gaming-cpu-rev...

tipoo - Friday, October 5, 2012

"Overall performance gains should be about 2x for GT3 (presumably with eDRAM) over HD 4000 in a high TDP part."

Does this mean the regular GT3 without the eDRAM cache will have twice the performance of the HD 4000, and the one with the cache 4x? Or that the one with the cache will be 2x? In that case, how would the one without the cache perform? With so many more EUs, the first reading is probably correct, right?

tipoo - Friday, October 5, 2012

"presumably with eDRAM"... So the GT3 in Haswell has more than double the EUs of Ivy Bridge, but without the cache it doesn't even reach 2x the performance? That seems off to me. Wouldn't the GT3 on its own be 2x the performance, with the eDRAM cache adding another 2x?

DanNeely - Saturday, October 6, 2012

It probably means that, like AMD, Intel is hitting the wall on memory bandwidth for IGPs. When it finally arrives, DDR4 will shake things up a bit; but DDR3 just isn't fast enough.