AMD A10-5800K & A8-5600K Review: Trinity on the Desktop, Part 1

by Anand Lal Shimpi on September 27, 2012 12:00 AM EST

Starcraft 2

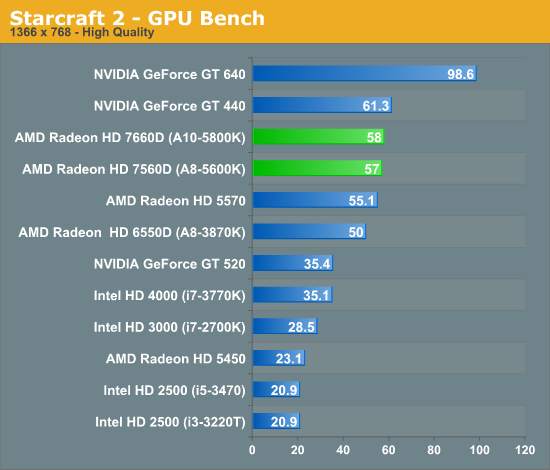

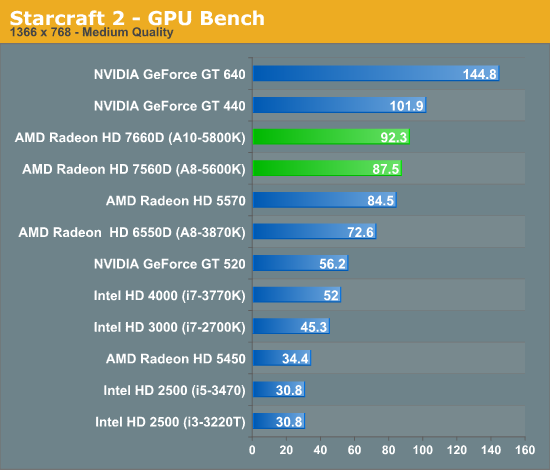

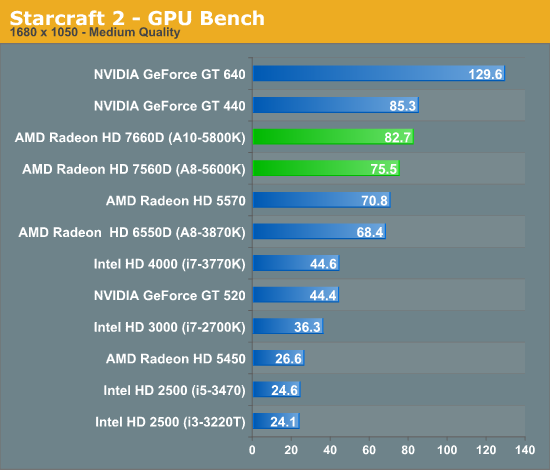

Our next game is Starcraft II, Blizzard's 2010 RTS megahit. Starcraft II is a DX9 game that is designed to run on a wide range of hardware, and given the growth in GPU performance over the years it's often CPU limited before it's GPU limited on higher-end cards.

Despite being heavily influenced by CPU performance, Starcraft 2 shows big gains when moving to Trinity. The improvement over Llano ranges from 16% to 27% in our tests. The performance advantage over Ivy Bridge is huge.

The Elder Scrolls V: Skyrim

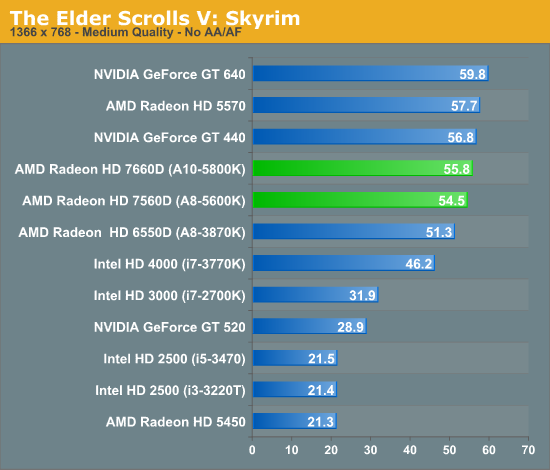

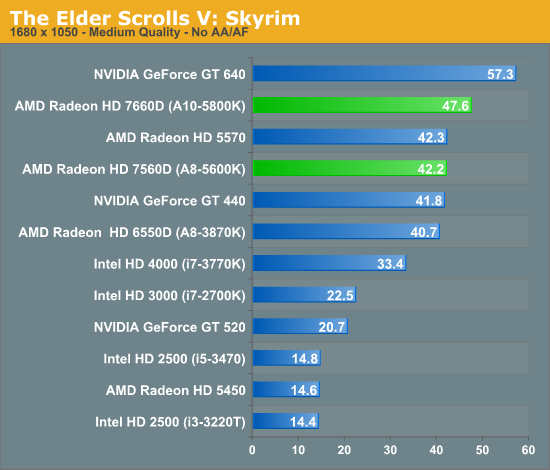

Bethesda's epic sword & magic game The Elder Scrolls V: Skyrim is our RPG of choice for benchmarking. It's altogether a good CPU benchmark thanks to its complex scripting and AI, but it also can end up pushing a large number of fairly complex models and effects at once. This is a DX9 game so it isn't utilizing any new DX11 functionality, but it can still be a demanding game.

We see some mild improvements over Llano in our Skyrim tests, and even Intel is able to catch up a bit. Trinity still does quite well; only NVIDIA's GeForce GT 640 can really deliver better performance than the top-end A10-5800K SKU.

139 Comments

Kougar - Friday, September 28, 2012 - link

Given that no "preview" was mentioned in the title, it would have been nice if the Terms of Engagement section was at the very top of the "review" to be completely forthright with your readership. I read down to that section and stopped, then went looking through the review for CPU benchmarks, which didn't exist. I can thank The Tech Report for posting an editorial on AMD's "preview" clause before I realized what was going on.

Omkar Narkar - Friday, September 28, 2012 - link

Would you guys review the 5800K crossfired with an HD 6670? I ask because I've heard that when you pair it with a high-end GPU like the HD 7870, the integrated graphics cores don't work.

TheJian - Friday, September 28, 2012 - link

Why was this benchmark used in the two reviews before the 660 Ti launch, and here today, but not in the 660 Ti article Ryan Smith wrote? This is just more stuff showing bias. He could have easily run it with the same patch as the two reviews before the 660 Ti launch article. Is it because in both of those two articles the 600 series dominated the 7970 GHz Edition and the 7950 Boost? This is, at the very least, hard to explain.

plonk420 - Monday, October 1, 2012 - link

Are those discrete GPUs on the charts being run on the AMD board, or on a Sandy/Ivy?

seniordady - Monday, October 1, 2012 - link

Please, can you run some tests on the CPU vs... not only on the GPU?

ericore - Monday, October 1, 2012 - link

http://news.softpedia.com/news/GlobalFoundries-28n...

Power leakage reduced by up to 550%; wow.

What an unintended coup for AMD haha all because of Global Foundries.

Take that Intel.

AMD is also the first one working on Java GPU acceleration.

shin0bi272 - Tuesday, October 2, 2012 - link

This is cool if you want to game at 13x7 at low res... but who does that anymore? When you bump up games like BF3 or Crysis 2 (which you didn't test but Tom's did), the FPS falls into the single digits. This CPU is fine if you don't really play video games or have a 17" CRT monitor. The thing that I think is funny about this is that in all the games a 100 dollar NVIDIA GPU beat the living snot out of this APU. Other than HTPC people who want video output without having to buy a video card, or someone who doesn't play FPS games but wants to play Farmville or Minecraft, no one will buy this thing. Yet people are still trying to make this thing out to be a gaming CPU/GPU combo, and it's just not going to satisfy anyone who buys it to play games on, and that's disingenuous.

Shadowmaster625 - Tuesday, October 2, 2012 - link

When you tested your GT440, you didn't do it on this hardware, right? If you were to disable the Trinity GPU and put a GT640 in its place, do you think it would still do better? Or would its score be pretty close to that of the iGPU?

skgiven - Sunday, October 7, 2012 - link

No idea what the NVIDIA GT440 is doing there; where are the old AMD alternatives?

Given the all too limited review, I don't see the point in comparing this to NVIDIA's discrete GT640.

Firstly, it's not clear if you are comparing the APUs to a DDR3 GT640 version (of which there are two; April 797MHz and June 900MHz) or the GDDR5 version (all 65W TDP).

Secondly, the GT640 has largely been superseded by the GTX650 (64W TDP).

So was your comparison the 612GFlops model, the 691, or 729 GFlops version?

Anyway, the GTX650 is basically the same card but is rated at 812 GFlops (30% faster than the April DDR3 model). Who knows, maybe you intended to add these details along with the GTX650Ti in a couple of days?

If you are going to compare these APUs to discrete entry-level cards, you need to add a few more cards. Clearly the A10-5800K falls short against Intel's more recent processors for most things (nothing new there), but totally destroys anything Intel has when it comes to gaming, so there is no point in over-analysing that. It wins that battle hands down, so the real question is: how does it perform compared to other gaming APUs and discrete entry-level cards?

I'm not sure why you stuck to the same 1366 screen resolution? Can this card not operate at other frequencies, or can the opposition not compete at higher resolutions?

1366 is common for laptops. I don't think these 100W chips are really intended for that market. They are for small desktops, home theatre, entry level (inexpensive) gaming systems.

These look good for basic gaming systems and in terms of performance per $ and Watt, even for some office systems, but their niche is very limited. If you want a good home theatre/desktop/gaming system, throw in a proper discrete GPU and operate at a more sensible 1680 or 1920 for real HD quality.