The 2012 MacBook Air (11 & 13-inch) Review

by Anand Lal Shimpi on July 16, 2012 12:53 PM EST, posted in Apple, Mac, MacBook Air, Laptops, Notebooks

The More Complicated (yet predictable) SSD Lottery

Apple continues to use a custom form factor and interface for the SSDs in the MacBook Air. This generation, Apple opted for a new connector, so you can't swap drives between 2011 and 2012 models. I'd always heard reports of issues with the old connector from a manufacturing standpoint, so the change makes sense. The new SSD connector looks to be identical to the one used by the Retina Display equipped MacBook Pro, although the rest of the SSD PCB is different.

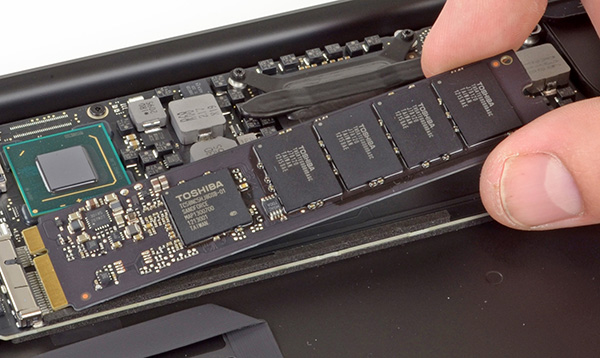

The Toshiba-branded SandForce SF-2200 controller in the 2012 MacBook Air (image: iFixit)

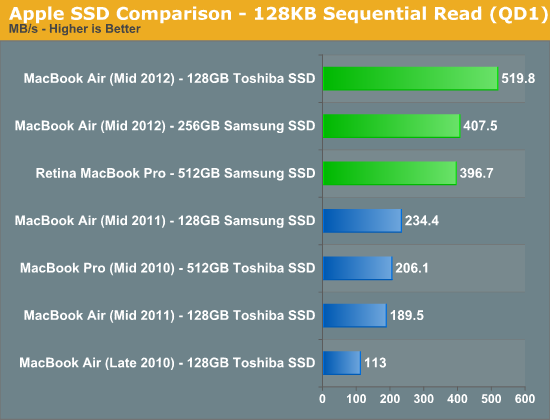

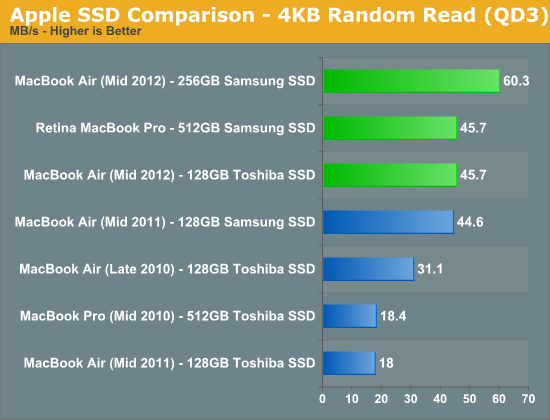

As always, there are two vendors supplying the SSD controllers in the new MacBook Air: Toshiba and Samsung. The Samsung drives use the same PM830 controller found in the 2012 MacBook Pro as well as the MacBook Pro with Retina Display. The Toshiba drives use a rebranded SandForce SF-2200 controller. Both solutions support 6Gbps SATA, and both are capable of reaching Apple's advertised 500MB/s sequential transfer rate.

While in the past we've recommended the Samsung over the Toshiba based drives, things are a bit more complicated this round because of the controller vendor Toshiba decided to partner with.

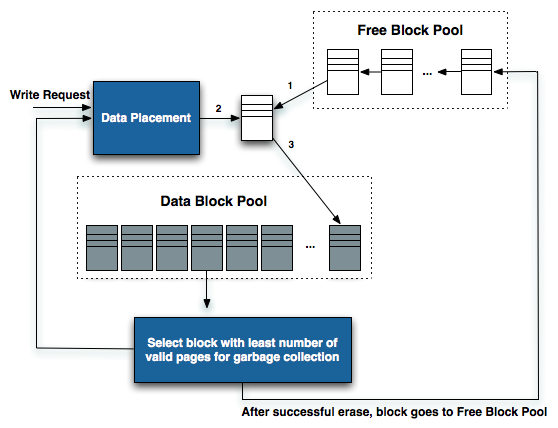

The write/recycle path in a NAND flash based SSD

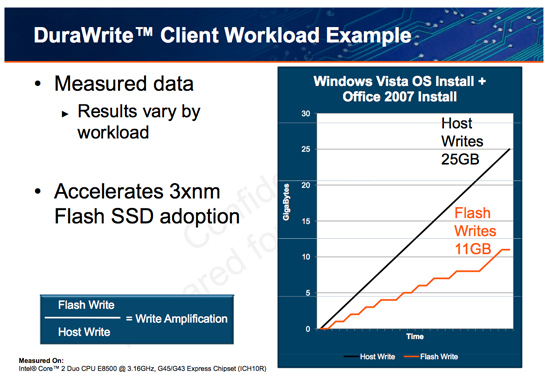

Samsung's PM830 works just like any other SSD controller. To the OS it presents itself as storage with logical block addresses starting from 0 all the way up to the full capacity of the drive. Reads and writes come in at specific addresses, and the controller maps those addresses to blocks and pages in its array of NAND flash. Every write that comes in results in data written to NAND. Those of you who have read our big SSD articles in the past know that NAND is written at the page level (these days pages are 8KB in size) but can only be erased at the block level (typically 512 pages, or 4MB). This write/erase mismatch, combined with the fact that each block has a finite number of program/erase cycles it can endure, is what makes building a good SSD controller so difficult. In the best-case scenario, the PM830 will maintain a 1:1 ratio between what the OS tells it to write to NAND and what it actually ends up writing. In the event that the controller needs to erase and re-write a block to optimally place data, it will actually end up writing more to NAND than the OS requested of it. This is referred to as write amplification, and it is responsible for the performance degradation over time that you may have heard of when it comes to SSDs.
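To make the page/block arithmetic concrete, here is a minimal Python sketch using the 8KB page and 512-page block figures above. The function names are hypothetical, and the in-place update case is a deliberately naive worst case (erase a block and reprogram everything in it to change a single page), which real controllers avoid by remapping writes to fresh pages.

```python
PAGE_KB = 8             # smallest unit NAND can program
PAGES_PER_BLOCK = 512   # NAND can only erase a whole block at a time
BLOCK_KB = PAGE_KB * PAGES_PER_BLOCK  # 4096 KB, i.e. 4MB


def write_amplification(nand_kb_written: float, host_kb_written: float) -> float:
    """Data actually programmed to NAND divided by data the OS asked to write."""
    return nand_kb_written / host_kb_written


def in_place_update_wa(valid_pages_in_block: int, pages_updated: int) -> float:
    """Naive worst case: to change some pages of a block in place, erase the whole
    block and reprogram every page that was valid in it (new data for the updated
    pages, old data for the rest)."""
    assert 0 < pages_updated <= valid_pages_in_block <= PAGES_PER_BLOCK
    nand_kb = valid_pages_in_block * PAGE_KB
    host_kb = pages_updated * PAGE_KB
    return write_amplification(nand_kb, host_kb)


if __name__ == "__main__":
    print(BLOCK_KB)                      # 4096
    print(in_place_update_wa(512, 1))    # 512.0: one 8KB write costs a full 4MB rewrite
    print(in_place_update_wa(512, 512))  # 1.0: rewriting the whole block is the 1:1 best case
```

The gap between 1.0 and 512.0 is why controllers write out of place and clean up blocks later instead of updating pages where they sit.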

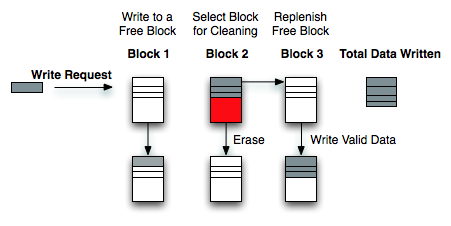

Write Amplification

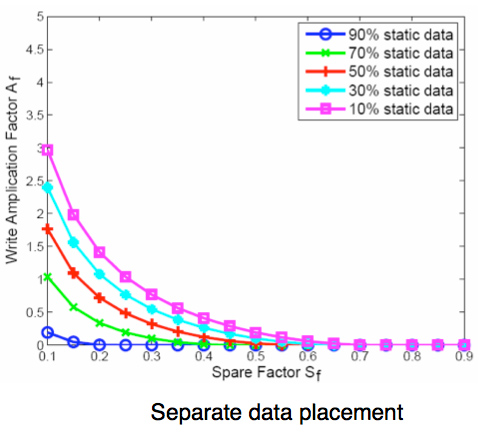

For most client workloads, with sufficient free space on your drive, Samsung's PM830 can keep write amplification reasonably low. If you fill the drive and/or throw a fragmented enough workload at it, the PM830 doesn't actually behave all that gracefully. Very few controllers do, but the PM830 isn't one of the best in this regard. My only advice is to try and keep around 20% of your drive free at all times. You can get by with less if you are mostly reading from your drive or if most of your writes are just big sequential blocks (e.g. copying big movies around). I explain the relationship between free space and write amplification here.

Write Amplification vs. Spare Area, courtesy of IBM Zurich Research Laboratory
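The link between free space and write amplification can be reproduced with a toy model. The sketch below is an assumption-laden simulation, not the PM830's (undisclosed) algorithm: a page-mapped controller that always writes out of place, reclaims space with a greedy garbage collector (relocate the live pages of the full block with the fewest of them, then erase it), and faces uniform random single-page overwrites. `spare_fraction` is the share of raw NAND hidden from the OS; the drive geometry is made up to keep it fast, and the output is not calibrated against the IBM data.

```python
import random


def simulate_write_amplification(spare_fraction: float, user_pages: int = 8192,
                                 pages_per_block: int = 64, random_writes: int = 50_000,
                                 seed: int = 1) -> float:
    """Toy page-mapped FTL with greedy garbage collection under uniform random
    single-page overwrites. Returns NAND page writes per host page write,
    measured after the drive has been filled once sequentially."""
    rng = random.Random(seed)
    num_blocks = int(user_pages / (1 - spare_fraction)) // pages_per_block
    if num_blocks * pages_per_block - user_pages <= 2 * pages_per_block:
        raise ValueError("need more than two blocks of spare area")

    valid = [set() for _ in range(num_blocks)]  # logical pages whose live copy is in each block
    written = [0] * num_blocks                  # pages programmed since the block's last erase
    where = {}                                  # logical page -> block holding its live copy
    free_blocks = list(range(num_blocks))
    active = free_blocks.pop()
    nand_writes = 0

    def program(lpn: int) -> None:
        # Out-of-place write: append to the active block, invalidate the old copy.
        nonlocal active, nand_writes
        if written[active] == pages_per_block:
            active = free_blocks.pop()
        old = where.get(lpn)
        if old is not None:
            valid[old].discard(lpn)
        valid[active].add(lpn)
        where[lpn] = active
        written[active] += 1
        nand_writes += 1

    def collect_garbage() -> None:
        # Greedy policy: reclaim the full, non-active block with the fewest live pages.
        victim = min((b for b in range(num_blocks)
                      if b != active and written[b] == pages_per_block),
                     key=lambda b: len(valid[b]))
        for lpn in list(valid[victim]):  # moving live data is the extra NAND traffic
            program(lpn)
        written[victim] = 0              # erase the whole block
        free_blocks.append(victim)

    def host_write(lpn: int) -> None:
        while len(free_blocks) < 2:      # keep headroom so relocation always fits
            collect_garbage()
        program(lpn)

    for lpn in range(user_pages):        # fill every logical page once
        host_write(lpn)
    before = nand_writes
    for _ in range(random_writes):       # then overwrite pages at random
        host_write(rng.randrange(user_pages))
    return (nand_writes - before) / random_writes


if __name__ == "__main__":
    for spare in (0.07, 0.28):
        print(spare, round(simulate_write_amplification(spare), 2))
```

With less spare area, the victim blocks the collector picks still hold more live pages, so every block it reclaims costs more relocation writes; that is the same trend the IBM curve shows.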

The Toshiba controller works a bit differently. As I already mentioned, Toshiba's controller is actually a rebranded SandForce controller. SandForce's claim to fame is the ability to commit less data to NAND than your OS writes to the drive. The controller achieves this by using a hardware-accelerated compression/data de-duplication engine that sees everything in the IO stream.

The drive still presents itself as traditional storage with an array of logical block addresses, and the controller still keeps track of mapping LBAs to NAND pages and blocks. However, because of the compression/dedupe engine, not all data that's written to the controller is actually written to NAND. Anything that's compressible is compressed before being written and decompressed on the fly when it's read back. All of the data is still tracked, and the drive's capacity is exactly what's advertised (you don't get any extra space); you just get extra performance. After all, writing nothing is always faster than writing something.
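A minimal sketch of the compress-before-write idea, assuming fixed 4KB logical blocks. `zlib` is only a stand-in (SandForce has not disclosed its compression or dedupe algorithm), and the `CompressingStore` class is hypothetical. Note that the logical capacity (`num_lbas`) never changes; only the bytes "programmed" to NAND shrink.

```python
import os
import zlib


class CompressingStore:
    """Toy compress-before-write drive: the host addresses fixed-size logical
    blocks, but only the compressed form of each block is 'programmed' to NAND."""

    def __init__(self, num_lbas: int, lba_size: int = 4096):
        self.num_lbas = num_lbas      # the advertised capacity does not change
        self.lba_size = lba_size
        self.table = {}               # LBA -> (is_compressed, stored bytes)
        self.host_bytes = 0
        self.nand_bytes = 0

    def write(self, lba: int, data: bytes) -> None:
        if not (0 <= lba < self.num_lbas and len(data) == self.lba_size):
            raise ValueError("bad LBA or transfer size")
        packed = zlib.compress(data)
        if len(packed) < len(data):
            self.table[lba] = (True, packed)
        else:                         # incompressible data is stored as-is
            self.table[lba] = (False, data)
        self.host_bytes += len(data)
        self.nand_bytes += len(self.table[lba][1])

    def read(self, lba: int) -> bytes:
        if lba not in self.table:     # never-written LBAs read back as zeros
            return bytes(self.lba_size)
        is_compressed, stored = self.table[lba]
        return zlib.decompress(stored) if is_compressed else stored  # decompress on the fly


if __name__ == "__main__":
    store = CompressingStore(num_lbas=1024)
    store.write(0, bytes(4096))       # highly compressible
    store.write(1, os.urandom(4096))  # incompressible, like video or encrypted data
    print(store.host_bytes, store.nand_bytes)
```

The zero-filled block costs only a few dozen bytes of NAND, while the random block costs the full 4KB, which is the whole story of this page in miniature.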

Writing less data to NAND can improve performance over time by keeping write amplification low. There are also impacts on NAND endurance, but as I've shown in the past, endurance isn't a concern for client drives and usage models. Writing less also results in a slight reduction in component count: there's no external DRAM found on SandForce based drives. The PM830 SSD features a 256MB DDR2 device on-board, while the Toshiba based drive has nothing - just NAND and the controller. This doesn't end up making the Toshiba drive substantially cheaper as SandForce instead charges a premium for its controller. In the case of the PM830, both user data and LBA-to-NAND mapping tables are cached in DRAM. In the case of the Toshiba drive, a smaller on-chip cache is used since there's typically less data being written to the NAND itself.
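For a sense of scale on the mapping tables, here is a back-of-the-envelope estimate. It assumes a flat table with one 4-byte entry per 8KB page of logical space; that layout is a common textbook assumption, not something Samsung or SandForce documents, and the sizes use binary GiB (the drives' decimal GB capacities are slightly smaller).

```python
KiB, MiB, GiB = 1024, 1024 ** 2, 1024 ** 3


def flat_page_map_bytes(capacity_bytes: int, page_bytes: int = 8 * KiB,
                        entry_bytes: int = 4) -> int:
    """Hypothetical flat map: one fixed-size entry per page-sized chunk of LBA space."""
    entries = capacity_bytes // page_bytes
    return entries * entry_bytes


if __name__ == "__main__":
    for capacity_gib in (64, 128, 256, 512):
        size = flat_page_map_bytes(capacity_gib * GiB)
        print(f"{capacity_gib} GiB drive -> {size // MiB} MiB of mapping table")
```

Under these assumptions the table alone comes to 32, 64, 128 and 256 MiB respectively, which shows why a controller that keeps its map in RAM wants a sizable DRAM chip next to it.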

SandForce's approach is also unique in that performance varies depending on the composition of the data written to the drive.

PC users should be well familiar with SandForce's limitations, but this is the first time that Apple has officially supported the controller under OS X. As such I thought I'd highlight some of the limitations so everyone knows exactly what they're getting into.

Any data that's random in composition, or already heavily compressed, isn't further reduced by Toshiba's SandForce controller. As SandForce's architecture is designed around the assumption that most of what we interact with is easily compressible, when a SF controller encounters data that can't be compressed it performs a lot slower.

Special thanks to AnandTech reader KPOM for providing the 256GB Samsung results

The performance impact is pretty much limited to writing. We typically use Iometer to measure IO performance as it's an incredibly powerful tool. You can define transfer size, transfer locality (from purely sequential all the way to purely random) and even limit your tests to specific portions of the drive, among other features. Later versions of Iometer introduced the ability to customize the composition of each IO transfer. By default, however (and for simplicity), whatever Iometer writes to disk is a series of repeating bytes (all 0s, all 1s, etc.). Prior to SandForce based SSDs this didn't really matter, but SandForce's engine reduces these IOs to their simplest form: a series of repeating bytes can easily be represented in a smaller form (one byte and a record of how many times it repeats). With Iometer left at its default settings, SandForce drives look amazing, even faster than the PM830 based Samsung drive that Apple uses. Even more impressive, since very little data is actually being written to the drive, you can run default Iometer workloads for hours (if not days) on end without any degradation in performance. Doing so only tells us part of the story, though: while frequently used OS and application files are easily compressed, most files aren't.
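The "one byte and a record of how many times it repeats" idea is run-length encoding. The sketch below applies it to an Iometer-style buffer of repeating bytes; it only illustrates why such data shrinks so dramatically, since SandForce has not disclosed what its engine actually does, and the function names are hypothetical.

```python
def run_length_encode(data: bytes) -> list[tuple[int, int]]:
    """Collapse runs of identical bytes into (byte value, repeat count) pairs."""
    runs: list[tuple[int, int]] = []
    for value in data:                   # iterating over bytes yields ints
        if runs and runs[-1][0] == value:
            runs[-1] = (value, runs[-1][1] + 1)
        else:
            runs.append((value, 1))
    return runs


def run_length_decode(runs: list[tuple[int, int]]) -> bytes:
    return b"".join(bytes([value]) * count for value, count in runs)


if __name__ == "__main__":
    iometer_style = bytes(4096)          # a 4KB transfer of all 0s
    print(run_length_encode(iometer_style))  # [(0, 4096)]
```

A 4KB transfer of zeros collapses to a single pair, while random data would produce roughly one pair per byte and gain nothing.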

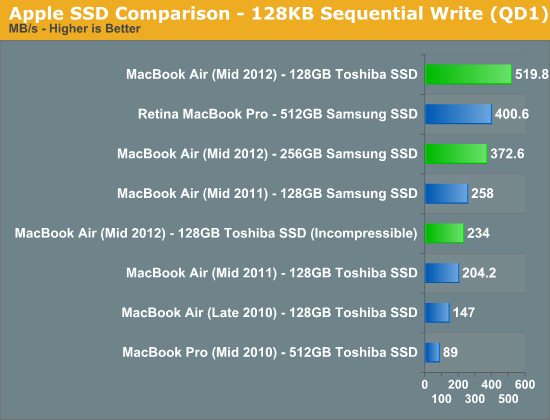

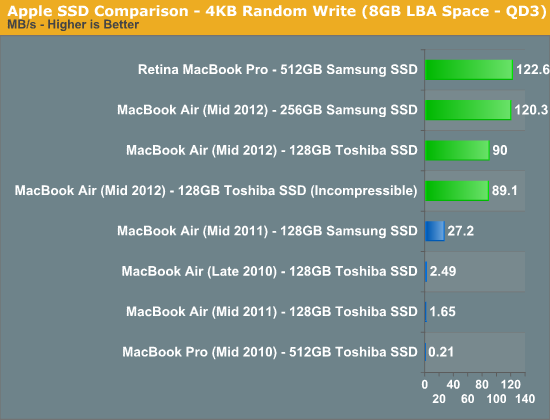

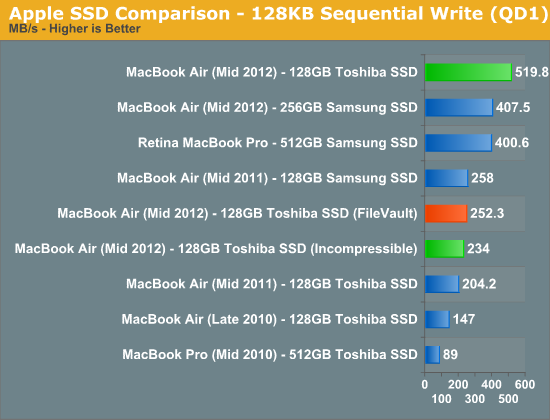

Thankfully, later versions of Iometer include the ability to use random data in each transfer. There's still room for some further compression or deduplication, but it's significantly reduced. In the write speed charts below you'll see two bars for the Toshiba based SSD: the one marked incompressible uses Iometer's random data setting, while the other uses the default write pattern.

When fed easily compressible data, the Toshiba/SandForce SSD performs insanely well. Even at low queue depths it's able to hit Apple's advertised "up-to" performance spec of 500MB/s. Random write performance isn't actually as good as Samsung's, but it's more likely to maintain these performance levels over time.

Therein lies the primary motivator behind SandForce's approach to flash controller architecture. Large sequential transfers are more likely to be already heavily compressed (e.g. movies, music, photos), while small, pseudo-random accesses are more likely to be easily compressible. The former are rather easy for an SSD controller to write at high speeds: break up the large transfer, stripe it across all available NAND die, and write as quickly as possible. The mapping from logical block addresses to pages in NAND flash is also incredibly simple. Fewer entries are needed in mapping tables, making the reads and writes of these large files incredibly easy to track and manage. It's the small, pseudo-random operations that cause problems. The controller has to combine a bunch of unrelated IOs in order to get good performance, which unfortunately leaves the array of flash in a highly fragmented state and brings performance down for future IO operations. If SandForce's compression can reduce the number of these small IOs (which it manages to do very well in practice), then the burden really shifts to dealing with large sequential transfers, something even the worst controllers can do well.
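Why large sequential transfers need fewer mapping entries can be shown with a small sketch. It assumes an append-only controller that places the i-th page write at physical page i and describes its map as extents (start LBA, start physical page, length); both are simplifying assumptions for illustration, and `extents_needed` is a hypothetical helper.

```python
def extents_needed(write_order: list[int]) -> int:
    """Place the i-th page write at physical page i (append-only), then count the
    (start LBA, start physical page, length) runs needed to describe the final map."""
    location = {}
    for physical, lba in enumerate(write_order):
        location[lba] = physical      # a rewrite supersedes the earlier copy
    extents = 0
    prev_lba = prev_phys = None
    for lba in sorted(location):
        phys = location[lba]
        if prev_lba is None or lba != prev_lba + 1 or phys != prev_phys + 1:
            extents += 1              # this page cannot extend the previous run
        prev_lba, prev_phys = lba, phys
    return extents


if __name__ == "__main__":
    sequential = list(range(4096))                              # one big file, in order
    scattered = [(i * 7919) % 1_000_000 for i in range(4096)]   # small writes all over the drive
    print(extents_needed(sequential), extents_needed(scattered))  # 1 4096
```

The same 4096 pages need one entry when written sequentially and 4096 entries when scattered, which is the bookkeeping burden the small, pseudo-random IOs create.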

It's really a very clever technology, one that has been unfortunately marred by a bunch of really bad firmware problems (mostly limited to PCs it seems).

The downside in practice is the performance when faced with these incompressible workloads. Our 4KB random write test doesn't actually drop in performance, but if we ran it for long enough you'd see a significant decrease. The sequential write test, however, shows an immediate reduction of more than half. If you've been wondering why your Toshiba SSD benchmarks slower than someone else's Samsung, check to see what sort of data the benchmark tool is writing to the drive. The good news is that even in this state the Toshiba drive is faster than the previous generation Apple SSDs; the bad news is that the new Samsung based drive is significantly quicker.

What about in the real world? I popped two SSDs into a Promise Pegasus R6, created a RAID-0 array, and placed a 1080p transcode of the Bad Boys Blu-ray disc on the array. I then timed how long it took to copy the movie from the array to the Toshiba and Samsung drives over Thunderbolt:

Real World SSD Performance with Incompressible Data

| Copy 13870MB H.264 Movie | 128GB Toshiba SSD | 512GB Samsung SSD |
|---|---|---|
| Transfer Time | 59.97 s | 31.59 s |
| Average Transfer Rate | 231.3 MB/s | 439.1 MB/s |

The results almost perfectly mirrored what Iometer's incompressible tests showed us (which is why I use those tests so often, they do a good job of modeling the real world). The Samsung based Apple SSD is able to complete the file copy in about half the time of the Toshiba drive. Pretty much any video you'd have on your machine will be heavily compressed, and as a result will deliver the worst case performance on the Toshiba drive.
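As a quick sanity check, the average rates in the table follow directly from the file size and the measured transfer times, and the time ratio backs up the "about half the time" observation.

```python
FILE_MB = 13870
TRANSFER_SECONDS = {"128GB Toshiba SSD": 59.97, "512GB Samsung SSD": 31.59}


def average_rate_mb_s(size_mb: float, seconds: float) -> float:
    return size_mb / seconds


if __name__ == "__main__":
    for drive, seconds in TRANSFER_SECONDS.items():
        print(f"{drive}: {average_rate_mb_s(FILE_MB, seconds):.1f} MB/s")
    ratio = TRANSFER_SECONDS["128GB Toshiba SSD"] / TRANSFER_SECONDS["512GB Samsung SSD"]
    print(f"Toshiba takes {ratio:.2f}x as long")
```

This reproduces the table's 231.3MB/s and 439.1MB/s and puts the Toshiba drive at roughly 1.90x the Samsung drive's copy time.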

Keep in mind that to really show this difference I had to have a very, very fast source for the transfer. Unless you've got a 6Gbps SSD over USB 3.0 or Thunderbolt, or a bunch of hard drives you're copying from, you won't see this gap. The difference is also less pronounced if you're copying from and to the same drive. Whether or not this matters to you really depends on how often you move these large compressed files around. If you do a lot of video and photo work with your Mac, it's something to pay attention to.
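The point about needing a very fast source is a bottleneck argument: a copy can go no faster than its slowest stage. The sketch below is a simplified model that ignores caching and overlap; the 120MB/s and 1000MB/s source speeds are hypothetical round numbers (not measurements), while the destination rates come from the table above.

```python
def copy_seconds(size_mb: float, source_mb_s: float, dest_mb_s: float,
                 link_mb_s: float = float("inf")) -> float:
    """Simplified model: a copy runs at the speed of its slowest stage
    (source read, interconnect, destination write)."""
    return size_mb / min(source_mb_s, link_mb_s, dest_mb_s)


if __name__ == "__main__":
    toshiba, samsung = 231.3, 439.1       # incompressible write rates from the table
    for source in (120.0, 1000.0):        # hypothetical: one hard drive vs. a fast RAID-0 array
        print(source, round(copy_seconds(13870, source, toshiba), 1),
              round(copy_seconds(13870, source, samsung), 1))
```

With the slow source both drives finish in the same time because the source is the limit; only when the source outruns both drives does the destination's write speed show up.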

There's another category of users who will want to be aware of what you're getting into with the Toshiba based drive: anyone who uses FileVault or other full disk encryption software.

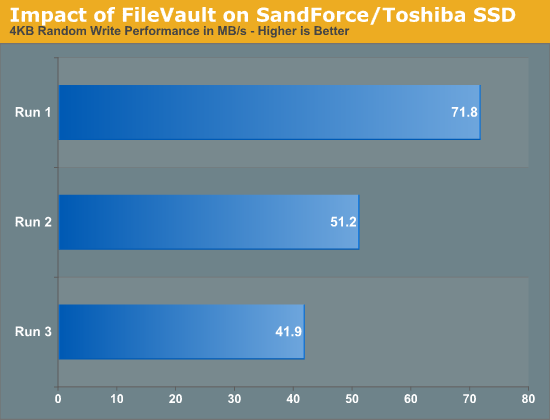

Remember, SandForce's technology only works on files that are easily compressed. Good encryption should make every location on your drive look like a random mess, which wreaks havoc on SandForce's technology. With FileVault enabled, all transfers look incompressible, even the small file writes that, as I mentioned earlier, are usually quite compressible.
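To see why encryption defeats compression, the sketch below "encrypts" a perfectly compressible buffer and compares compression ratios. The cipher here is NOT real encryption: it XORs the data with a SHA-256 counter-mode keystream purely to produce ciphertext-like output (FileVault 2 actually uses XTS-AES-128), and `zlib` again stands in for SandForce's undisclosed engine. Both function names are hypothetical.

```python
import hashlib
import zlib


def toy_encrypt(data: bytes, key: bytes) -> bytes:
    """Illustration only, not secure: XOR data with a SHA-256 counter keystream.
    Applying it twice with the same key returns the original data."""
    out = bytearray()
    for offset in range(0, len(data), 32):
        keystream = hashlib.sha256(key + offset.to_bytes(8, "little")).digest()
        chunk = data[offset:offset + 32]
        out.extend(b ^ k for b, k in zip(chunk, keystream))
    return bytes(out)


def compression_ratio(data: bytes) -> float:
    """Compressed size divided by original size (lower is more compressible)."""
    return len(zlib.compress(data)) / len(data)


if __name__ == "__main__":
    plaintext = bytes(4096)               # the kind of data compression loves
    ciphertext = toy_encrypt(plaintext, b"demo key")
    print(round(compression_ratio(plaintext), 3), round(compression_ratio(ciphertext), 3))
```

The plaintext shrinks to a tiny fraction of its size, while the ciphertext does not shrink at all, so every write reaches NAND at full size.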

After enabling FileVault I ran our Iometer write tests on the drives again; performance is understandably impacted:

Also look at what happens to our 4KB random write test if we repeat it a few times back to back:

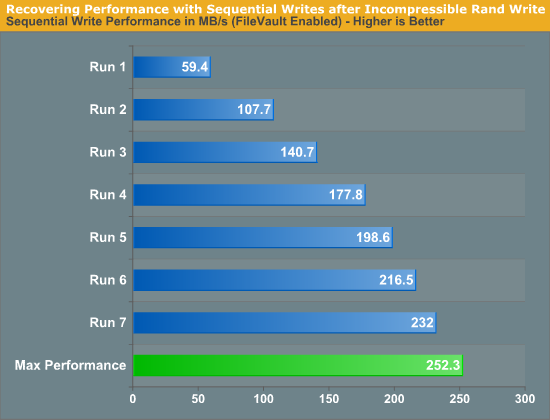

That trend will continue until the drive's random write performance is really bad. Sequential write passes will restore performance up to ~250MB/s, but it takes several passes to get it there:

If you're going to be using FileVault, stay away from the Toshiba drive.

This brings us to the next problem: how do you tell what drive you have?

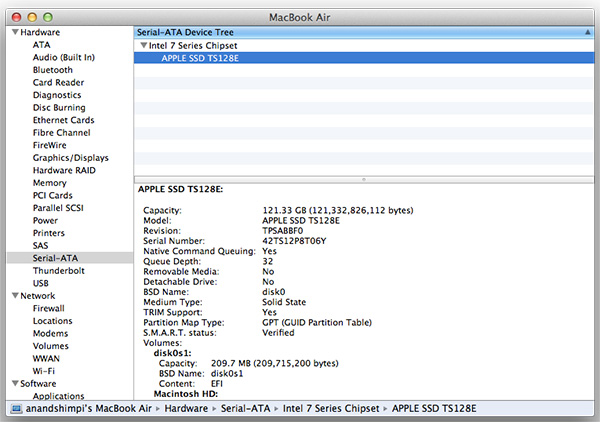

As of now Apple has two suppliers for the SSD controllers in all of its 2012 Macs: Toshiba and Samsung. If you run System Information (click the Apple icon in the upper left > About this Mac > System Report) and select Serial ATA, you'll see the model of your SSD. Drives that use Toshiba's 6Gbps controller are labeled Apple SSD TSxxxE (where xxx is your capacity, e.g. TS128E for a 128GB drive), while 6Gbps Samsung drives are labeled Apple SSD SMxxxE. Unfortunately, this requires you to have already purchased and opened up your system. It's a good thing that Apple stores are good about accepting returns.
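If you want to check the model string programmatically (the same Serial ATA report is available in Terminal via `system_profiler SPSerialATADataType`), a small parser is enough. This sketch only encodes the naming scheme described above (TS for Toshiba, SM for Samsung, followed by the capacity and an E); the exact formatting of the string, including its capitalization, is an assumption, so the match is case-insensitive.

```python
import re

# Naming scheme from the text: TSxxxE = Toshiba, SMxxxE = Samsung, xxx = capacity in GB.
_VENDORS = {"TS": "Toshiba", "SM": "Samsung"}
_MODEL = re.compile(r"Apple SSD (TS|SM)(\d+)E\b", re.IGNORECASE)


def identify_ssd(model: str):
    """Return (controller vendor, capacity in GB), or None if the string doesn't match."""
    match = _MODEL.search(model)
    if match is None:
        return None
    return _VENDORS[match.group(1).upper()], int(match.group(2))


if __name__ == "__main__":
    print(identify_ssd("Apple SSD TS128E"))   # ('Toshiba', 128)
    print(identify_ssd("Apple SSD SM512E"))   # ('Samsung', 512)
```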

There's another option that seems to work, for now at least. It seems as if all 256GB and 512GB Apple SSDs currently use Samsung controllers, while Toshiba is limited to the 64GB and 128GB capacities. There's no telling if this trend will hold indefinitely (even now it's not a guarantee) but if you want a better chance of ending up with a Samsung based drive, seek out a 256GB or larger capacity. Note that this also means that the rMBP exclusively uses Samsung controllers, at least for now.

I can't really blame Toshiba for this as even Intel has resorted to licensing SandForce's controllers for its highest performing drives. I will say that Apple doesn't seem to be fond of inconsistent user experiences across its lineup. I wouldn't be surprised if Apple sought out a third SSD vendor at some point.

190 Comments


name99 - Tuesday, July 17, 2012

The most likely reason for the USB3 problems is devices that are just slightly out of spec, demanding more power than USB3 can deliver. The larger rMBP is willing to give them this extra power, the smaller MBAs cannot.

This is a very common problem these days with flash storage. Look at a table of the power demands of various disks for either startup or sustained writes --- it is depressing how many are almost (but just over) the USB3 limit --- and there are plenty of manufacturers (yeah, OCZ, I'm looking at you) who are quite willing to sell you a "USB3" drive which kinda sorta appears to run until you generate a long series of sustained writes at which point it hangs.

So why does the failure for these drives appear at plugin time?

One possibility is that they need a burst of juice to start themselves going, another is that they negotiate with the host and can't negotiate as much power as they want. (I don't know the USB3 power negotiation protocol.)

It would be an interesting experiment, IMHO, for Anand to try the problematic devices again with a Y cable that could deliver extra USB power from a second port.

jospoortvliet - Wednesday, July 18, 2012

On a related note - neither can anyone sell the nice magsafe(2) adaptors, it seems. Quite annoying - I don't really get why it's legal that Apple can stop others from making those? I get they have patents on it, but wasn't the idea of the patent system to ensure inventors get decently remunerated, not to let them block others from using their inventions? I thought you HAVE to license your patents for a 'reasonable' fee... Still, all other laptops come without a magsafe-like plug...

Romberry - Tuesday, July 17, 2012

...or drowning in Kool-Aid. Apple cripples other OS's on their hardware (and does so for no good technical reason at all.) See my previous comment for more. Or take a look at Ed Bott's recent article on another site concerning battery life on a Mac running Windows. Or fire up Google.

KPOM - Tuesday, July 17, 2012

Here's what Ed Bott said: "I don’t blame Apple for this terrible performance. They’ve focused their engineering resources on their own hardware and their own operating system. For Apple, Boot Camp is a tool to use occasionally, when you need to run a Windows program without virtualization software getting in the way."

It's always been obvious that Boot Camp drivers are subpar, though it is getting better. However, most people aren't buying Macs to run Windows as the primary OS. They do enough to get Windows running occasionally, but they rightfully spend their time and effort optimizing their hardware for OS X. What's the issue there?

Incidentally, the new Boot Camp drivers do enable AHCI support, so TRIM, etc. will work in Windows 7 and Windows 8. They also generally deliver decent performance (the Core i7 bug notwithstanding). Apple doesn't need to make Boot Camp available at all.

Spunjji - Tuesday, July 17, 2012

No good technical reason, for sure. Business reasons, on the other hand... There are many people out there who don't get the technical side of it, they just get what works and what doesn't. They see Windows running poorly on the same hardware, so they assume that with all things being equal that Windows is the source of the problem. Makes a simple kind of sense unless you know that Apple are responsible for tying the 2 together. They could easily do it properly, but they never will. Because they're Capitalist Pigs! Yaaayyy! :D

Freakie - Tuesday, July 17, 2012

Wait wut... Are you being sarcastic? o_O It's not Apple's choice to allow other OS's on their laptop, it's the hard work of the programmers for the other OS's that allow their OS's to be compatible with an incredibly large range of hardware. Unlike OSX which can't even figure out its own small range of hardware. It's out of Microsoft's own decision that it can run on non-partnered computers that you build yourself, or buy from a company that doesn't make Windows computers. In fact. Apple puts specific features in OSX to make it not run on any hardware but theirs and users have to hack in order to get it to run on anything else.

Apple should be hanged for their nonexistent allowances of other OS's.

KPOM - Tuesday, July 17, 2012

Uh, without Boot Camp, it would be very difficult to run Windows, because Windows doesn't support Apple's implementation of EFI (which predates Windows' support for UEFI). Also, they do write drivers for their components. Microsoft's stock drivers don't support all the hardware used.

Freakie - Tuesday, July 17, 2012

Yes, Microsoft uses a new, more advanced, and completely 64bit compatible version of EFI. Just because Apple did it first, doesn't mean they somehow do it better now xP Though from what I've seen, the ability to put Windows on a Mac without any modifications isn't a fault of Windows, but differences between the two OS's as well as Apple's insistence of only allowing Windows on their system under their own terms. If Apple was more open about things, then hackers would have had to work less =P

I also never said they didn't write their own drivers? o_O I mean, they obviously don't write all of their own drivers 100%, as I am sure that the hardware manufacturers have to give them something to start with.

And Microsoft's stock drivers are actually quite impressive. Of course they can't support every little peripheral, but they do an amazing job of supporting a countless range of combinations of hardware. There is no doubt that Microsoft's view on how it develops drivers is much more open than Apple's. Microsoft support DIY builders and system integrators as well, while Apple does not.

KPOM - Tuesday, July 17, 2012

Boot Camp came out before Windows supported EFI at all. Apple has no real incentive to switch to UEFI because it doesn't really offer significant benefits, and they'd have to modify UEFI anyway to keep OS X proprietary.

My point wasn't about UEFI, though. It is about Apple enabling other operating systems to run on their computers. They actively added that capability when they didn't need to.

Your point about MS' openness vs. Apple is well known. Microsoft is primarily a software company. Up to now, they haven't cared whether you installed Windows 7 on an Apple, a Dell, HP, or something you built yourself. However, with Windows RT, they are going partly the Apple route, since they won't sell the OS separately, and will individually approve manufacturers and designs. So apparently even they recognize there are advantages to a closed model.

Spunjji - Tuesday, July 17, 2012

"When they didn't need to" <- What nonsense is this? A market requirement translates as a need. They would have lost all of the customers out there who require genuine Windows applications running under non-virtualised Windows. This is a non-trivial portion of the market.

Apple do not do anything out of the generosity of their hearts. What wealthy company does?