The next-gen MacBook Pro with Retina Display Review

by Anand Lal Shimpi on June 23, 2012 4:14 AM EST - Posted in Mac, Apple, MacBook Pro, Laptops, Notebooks

Driving the Retina Display: A Performance Discussion

As I mentioned earlier, there are quality implications of choosing the higher-than-best resolution options in OS X. At 1680 x 1050 and 1920 x 1200 the screen is drawn with 4x the number of pixels of the selected resolution (3360 x 2100 and 3840 x 2400, respectively), elements are scaled appropriately, and the result is downscaled to 2880 x 1800. The quality impact is negligible, however, especially if you actually need the added real estate. As you’d expect, there is also a performance penalty.
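As a rough illustration of the arithmetic behind these scaled modes, the sketch below computes the off-screen render size for each "looks like" setting and compares it with the panel's native pixel count. The helper names are hypothetical, and the 2x-per-axis rule is an assumption taken from the "4x the number of pixels" description above rather than from any Apple API.

```python
# Illustrative arithmetic only. The helper names are hypothetical and the
# 2x-per-axis rule is an assumption based on the description in the text.

PANEL = (2880, 1800)  # native panel resolution


def backing_store(width, height, scale=2):
    """Return the off-screen render size for a 'looks like' resolution."""
    return width * scale, height * scale


def megapixels(width, height):
    """Return the pixel count in millions of pixels."""
    return width * height / 1e6


for mode in [(1440, 900), (1680, 1050), (1920, 1200)]:
    rw, rh = backing_store(*mode)
    ratio = megapixels(rw, rh) / megapixels(*PANEL)
    print(f"{mode[0]}x{mode[1]}: rendered at {rw}x{rh} "
          f"({megapixels(rw, rh):.2f} MP, {ratio:.2f}x the panel)")
```

Under these assumptions the 1920 x 1200 setting is rendered at 3840 x 2400, or roughly 9.2 MP, which is about 1.78x the pixels of the panel itself.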

At the default setting, either Intel’s HD 4000 or NVIDIA’s GeForce GT 650M already has to render and display far more pixels than either GPU was ever intended to. At the 1680 and 1920 settings, however, the GPUs are doing more work than even their high-end desktop counterparts are used to. In writing this article it finally dawned on me exactly what has been happening at Intel over the past few years.

Steve Jobs set a path to bringing high resolution displays to all of Apple’s products, likely beginning several years ago. There was a period of time when Apple kept hiring ex-ATI/AMD Graphics CTOs, first Bob Drebin and then Raja Koduri (although less public, Apple also hired chief CPU architects from AMD and ARM among other companies - but that’s another story for another time). You typically hire smart GPU guys if you’re building a GPU; the alternative is to hire them if you need to be able to work with existing GPU vendors to deliver the performance necessary to fulfill your dreams of GPU dominance.

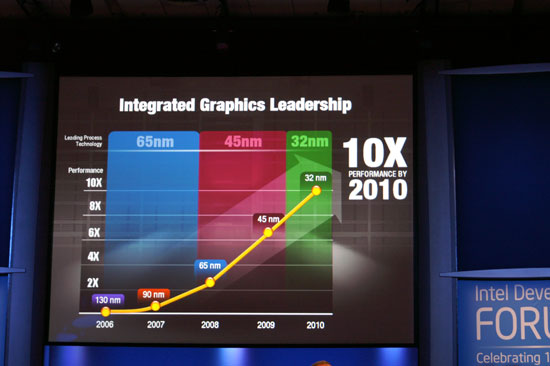

In 2007 Intel promised to deliver a 10x improvement in integrated graphics performance by 2010:

In 2009 Apple hired Drebin and Koduri.

In 2010 Intel announced that the curve had shifted. Instead of 10x by 2010 the number was now 25x. Intel’s ramp was accelerated, and it stopped providing updates on just how aggressive it would be in the future. Paul Otellini’s keynote from IDF 2010 gave us all a hint of what’s to come (emphasis mine):

But there has been a fundamental shift since 2007. Great graphics performance is required, but it isn't sufficient anymore. If you look at what users are demanding, they are demanding an increasingly good experience, robust experience, across the spectrum of visual computing. Users care about everything they see on the screen, not just 3D graphics. And so delivering a great visual experience requires media performance of all types: in games, in video playback, in video transcoding, in media editing, in 3D graphics, and in display. And Intel is committed to delivering leadership platforms in visual computing, not just in PCs, but across the continuum.

Otellini’s keynote would set the tone for the next few years of Intel’s evolution as a company. Even after this keynote Intel made a lot of adjustments to its roadmap, heavily influenced by Apple. Mobile SoCs got more aggressive on the graphics front as did their desktop/notebook counterparts.

At each IDF I kept hearing about how Apple was the biggest motivator behind Intel’s move into the GPU space, but I never really understood the connection until now. The driving factor wasn’t just the demands of current applications, but rather a dramatic increase in display resolution across the lineup. It’s why Apple has been at the forefront of GPU adoption in its iDevices, and it’s why Apple has been pushing Intel so very hard on the integrated graphics revolution. If there’s any one OEM we can thank for having a significant impact on Intel’s roadmap, it’s Apple. And it’s just getting started.

Sandy Bridge and Ivy Bridge were both good steps for Intel, but Haswell and Broadwell are the designs that Apple truly wanted. As fond as Apple has been of using discrete GPUs in notebooks, it would rather get rid of them if at all possible. For many SKUs Apple has already done so. Haswell and Broadwell will allow Apple to bring integration to even some of the Pro-level notebooks.

To be quite honest, the hardware in the rMBP isn’t enough to deliver a consistently smooth experience across all applications. At 2880 x 1800 most interactions are smooth, but things like zooming windows or scrolling on certain web pages are clearly sub-30fps. At the higher scaled resolutions, since the GPU has to render as much as 9.2MP, even UI performance can be sluggish. There’s simply nothing that can be done at this point - Apple is pushing the limits of the hardware we have available today, far beyond what any other OEM has done. Future iterations of the Retina Display MacBook Pro will have faster hardware with embedded DRAM that will help mitigate this problem. But there are other limitations: many elements of screen drawing are still done on the CPU, and because CPUs are largely serial architectures, their ability to scale performance with dramatically higher resolutions is limited.

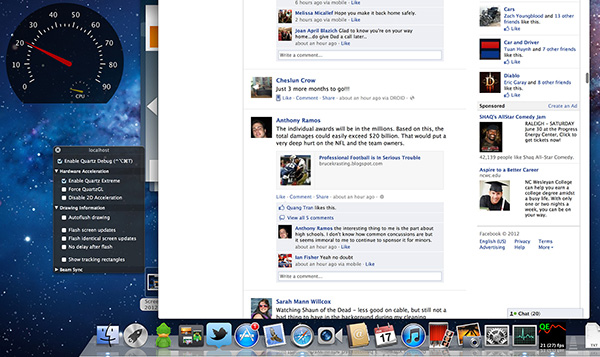

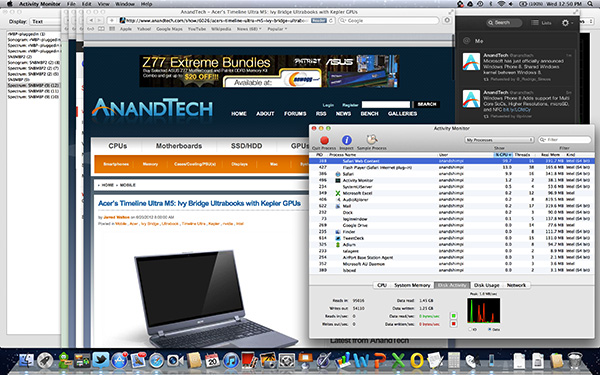

Some elements of drawing in Safari for example aren’t handled by the GPU. Quickly scrolling up and down on the AnandTech home page will peg one of the four IVB cores in the rMBP at 100%:

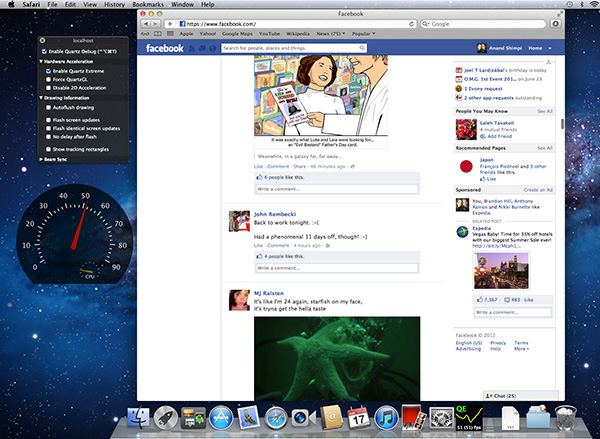

The GPU has an easy time with its part of the process but the CPU’s workload is borderline too much for a single core to handle. Throw a more complex website at it and things get bad quickly. Facebook combines a lot of compressed images with text - every single image is decompressed on the CPU before being handed off to the GPU. Combine that with other elements that are processed on the CPU and you get a recipe for choppy scrolling.

To quantify exactly what I was seeing I measured frame rate while scrolling as quickly as possible through my Facebook news feed in Safari on the rMBP as well as my 2011 15-inch High Res MacBook Pro. While last year’s MBP delivered anywhere from 46 - 60 fps during this test, the rMBP hovered around 20 fps (18 - 24 fps was the typical range).

Scrolling in Safari on a 2011, High Res MBP - 51 fps

Scrolling in Safari on the rMBP - 21 fps

Remember at 2880 x 1800 there are simply more pixels to push and more work to be done by both the CPU and the GPU. It’s even worse in those applications that have higher quality assets: the CPU now has to decode images at 4x the resolution of what it’s used to. Future CPUs will take this added workload into account, but it’ll take time to get there.
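To put those figures in perspective, here is a small back-of-the-envelope sketch that converts the measured frame rates into per-frame time and compares the pixel counts of the two panels. It assumes the 2011 "High Res" 15-inch panel is 1680 x 1050 (the text does not state its resolution), uses the 51 fps and 21 fps averages quoted above, and the helper names are purely illustrative.

```python
# Illustrative arithmetic only. 1680 x 1050 is an assumed resolution for the
# 2011 "High Res" 15-inch panel; the fps values are the averages quoted above.


def frame_time_ms(fps):
    """Return the time spent on each frame, in milliseconds."""
    return 1000.0 / fps


def pixels(width, height):
    """Return the total number of pixels for a given resolution."""
    return width * height


old_px = pixels(1680, 1050)  # assumed 2011 High Res MBP panel
new_px = pixels(2880, 1800)  # rMBP panel

print(f"pixel ratio: {new_px / old_px:.2f}x")
print(f"2011 MBP at 51 fps: {frame_time_ms(51):.1f} ms per frame")
print(f"rMBP at 21 fps: {frame_time_ms(21):.1f} ms per frame")
print(f"60 fps budget: {frame_time_ms(60):.1f} ms per frame")
```

On these numbers the rMBP is pushing roughly 2.9x the pixels and taking about 48 ms per frame, against a budget of about 16.7 ms for a steady 60 fps.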

The good news is Mountain Lion provides some relief. At WWDC Apple mentioned the next version of Safari is ridiculously fast, but it wasn’t specific about why. It turns out that Safari leverages Core Animation in Mountain Lion and is more GPU accelerated as a result. Facebook is still a challenge because of the mixture of CPU decoded images and a standard web page, but the experience is a bit better. Repeating the same test as above I measured anywhere from 20 - 30 fps while scrolling through Facebook on ML’s Safari.

Whereas I would consider the rMBP experience under Lion to be borderline unacceptable, everything is significantly better under Mountain Lion. Don’t expect buttery smoothness across the board, since you’re still asking a lot of the CPU and GPU, but it’s a lot better.

471 Comments

Mumrik - Monday, June 25, 2012

Anand, on page 4 you categorize the rMBP as a consumer device: "At 220 pixels per inch it’s easily the highest density consumer notebook panel shipping today.", but back on page 2 you made a deal of calling it a pro "appliance" and pointed out that it wasn't a consumer device.

Other than that - this DPI improvement really needs to get moving. It's been so many years and we've essentially been standing still since LCDs took over and the monitor business became a race towards the bottom. IPS, high DPI and native support for it in software PLEASE. 120hz would be nice too.

dwade123 - Monday, June 25, 2012

I don't understand why Apple doesn't take advantage of their lead in Thunderbolt. This machine screams for E-GPU with GTX 670!

Spunjji - Tuesday, June 26, 2012

Probably because right now the user experience would be poor. See Anand's comments about sound and USB cutting out when high-bandwidth transfers are occurring. That would be catastrophic mid-game and would definitely lead me to return the hardware as unfit for purpose. Apple have had their slip-ups but they rarely release hardware that is unfit for purpose.

inaphasia - Monday, June 25, 2012

Does Apple have some sort of exclusive deal (ie monopoly with an expiration date) on these displays, or can anybody (HP, Asus, Lenovo etc) use them if they want to?

wfolta - Monday, June 25, 2012

In recent years, Apple has been the King of the Supply Chain due to Tim Cook. He's now the CEO. I doubt that there will be many retina 15" screens available for Apple's competitors for a year or more.

Even if Apple didn't lock up the supply chain, Apple's competitors have been running towards lower resolutions, or the entertainment-oriented 16:9 1920x1080 (aka 1080p), so it will take them a while to pivot towards higher-density displays even if they were growing on trees.

Constructor - Thursday, June 28, 2012

Apple has been paying huge sums (in the Billions of Dollars!) to component manufacturers in advance to have them develop specific components such as this one, even paying for factories to be built for manufacturing exclusively for Apple for a certain time.

It is also possible that Apple has licensed certain patents from various (other) manufacturers for their exclusive use which might preclude open-market sales of the same components even after the exclusive deal with Apple is up, because the display manufacturer may not be able to keep using these same patents.

In short: The chances for PC manufacturers to get at them just by waiting for them to drop into the market eventually don't look too good.

After all, none of the other Retina displays have appeared in other products yet. And the iPhone 4 is already two years old.

So either the non-Apple-supplying component manufacturers or the PC builders will have to actually pay for their own development. And given their mostly dismal profit margins and relatively low volumes in the premium segment, I wouldn't hold my breath.

Shanmugam - Monday, June 25, 2012

Anand and Team,

Excellent review again.

When is the MacBook Air Mid 2012 review coming? I really want to see the battery life improvement; I can see that it almost tops out at 8 hours under a light workload for the 13" MBA.

Cannot wait!!!

smozes - Monday, June 25, 2012

Anand states: "[E]nough to make me actually want to use the Mac as a portable when at home rather than tethered to an external panel. The added portability of the chassis likely contributes to that fact though."

I work with an external display at home, and given that there are none yet at this caliber, I'm wondering about doing away with the external display and working only with the rMBP. In the past I've always needed external displays for viewing more info, and I'm curious if this is no longer necessary.

Has anyone tried doing away with an external display and just using the rMBP on a stand with a mouse and keyboard? Since the display includes more info than a cinema display, and given healthy eyesight, would this setup be as ergonomic and efficient?

boeush - Monday, June 25, 2012

For several years, I've been using 17'' notebooks with 1920x1200 displays. That resolution had been more than enough for the 17'' form factor; having even such a resolution on a 15'' screen is going overboard, and doing it on an 11'' tablet is just plain bonkers. I don't see the individual pixels on my laptop's screen, and I'd wager neither would most other people unless they use magnifying lenses.

I really don't get the point of wasting money on over-spec'ed hardware, and burning energy pushing all those invisible pixels.

I'd rather have reasonable display resolutions matched to the actual physiological capabilities of the human eye, and spend the rest of the cost and power budgets on either weight reductions, or better battery life, or higher computing performance, or more powerful 3G/4G/Wi-Fi radios, etc.

The marketing-hype idiocy of "retina displays" now appears to be driving the industry from one intolerable extreme (of crappy panels with sub-par resolutions) right into the diametrically opposite insanity -- that of ridiculously overbuilt hardware.

Why can't we just have cost-effective, performance-balanced, SANE designs anymore?

darkcrayon - Monday, June 25, 2012

Reminds me of comments when the 3rd gen iPad screen was introduced. You have a review which both subjectively (from an extremely experienced user) and objectively from tests shows this is the best display ever for a laptop. Yet people ignore all of that and say it's a waste... I think it would be a waste if it didn't actually... You know... Provide a visibly dramatic level of improvement. And it's better to make a large jump bordering on "overkill" than to make tiny incremental steps with something like display resolution- fragmentation/etc being what it is,