Intel Core i5 3470 Review: HD 2500 Graphics Tested

by Anand Lal Shimpi on May 31, 2012 12:00 AM EST

Posted in: CPUs, Intel, Ivy Bridge, GPUs

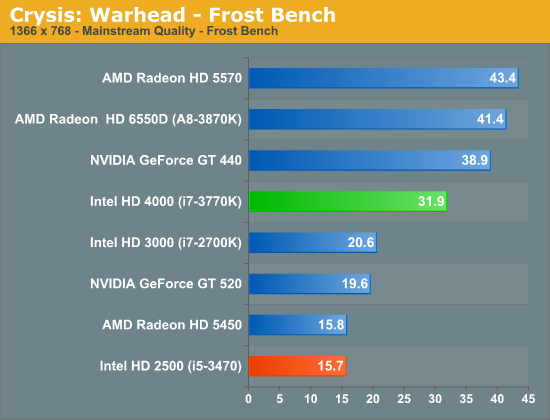

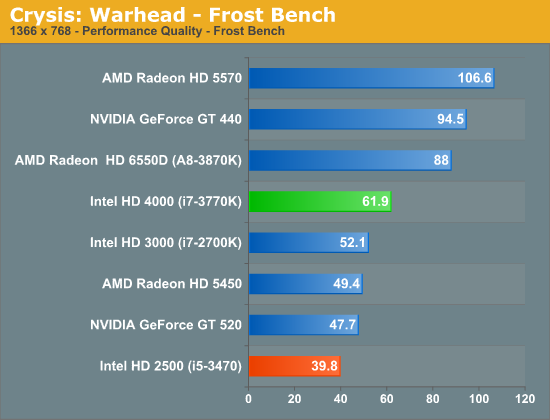

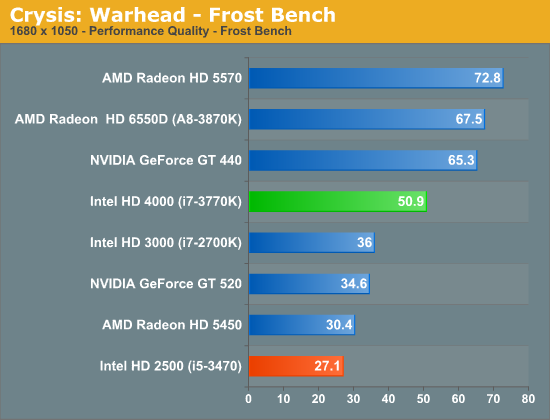

Crysis: Warhead

Our first graphics test is Crysis: Warhead, which in spite of its relatively high system requirements is the oldest game in our test suite. Crysis was the first game to really make use of DX10, and set a very high bar for modern games that still hasn't been completely cleared. And while its age means it's not heavily played these days, it's a great reference for how far GPU performance has come since 2008. For an iGPU to even run Crysis at a playable framerate is a significant accomplishment, and even more so if it can do so at better than performance (low) quality settings.

While Crysis on the HD 4000 was downright impressive, the HD 2500 is significantly slower.

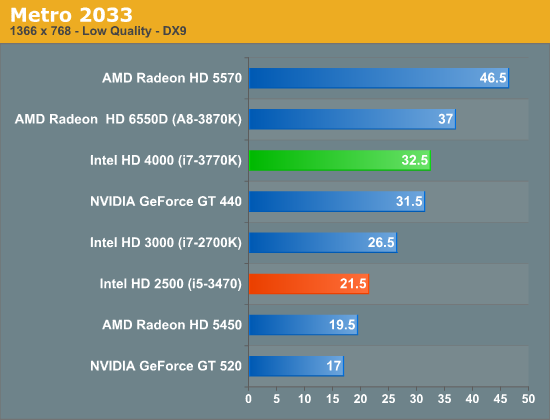

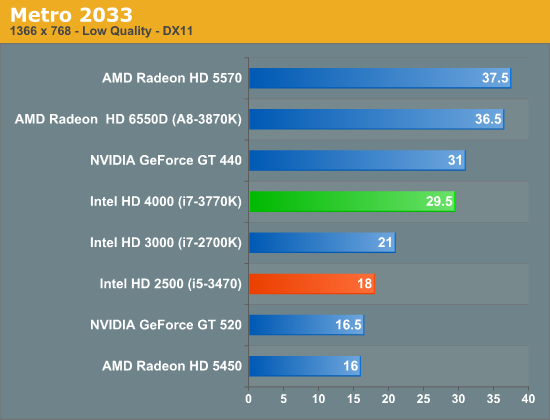

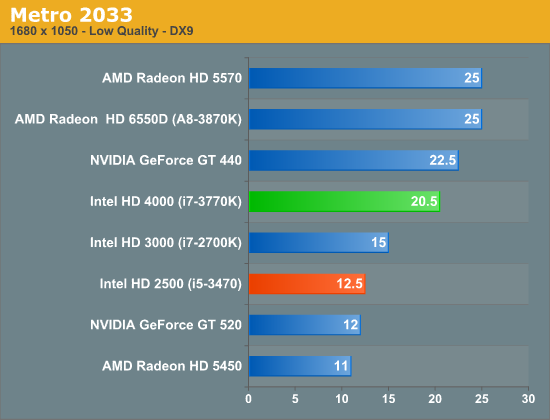

Metro 2033

Our next graphics test is Metro 2033, another graphically challenging game. Since IVB is the first Intel GPU to feature DX11 capabilities, this is the first time an Intel GPU has been able to run Metro in DX11 mode. Like Crysis this is a game that is traditionally unplayable on Intel iGPUs, even in DX9 mode.

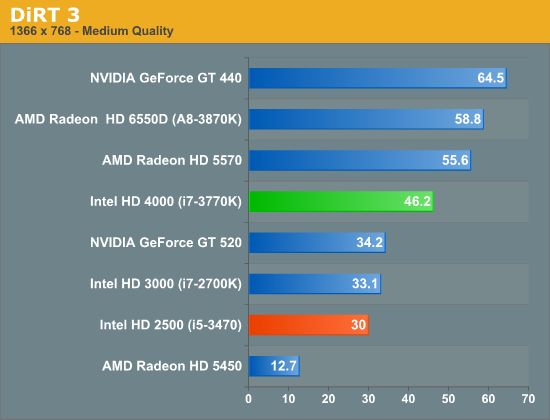

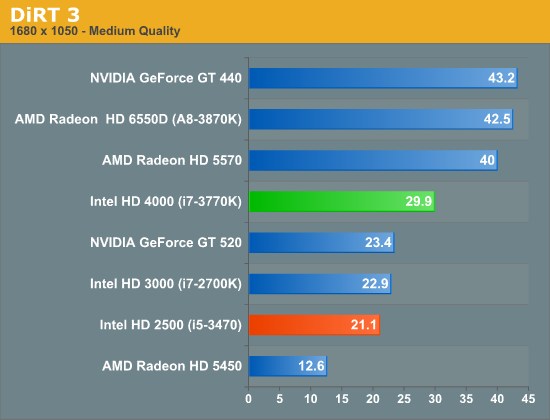

DiRT 3

DiRT 3 is our next DX11 game. Developer Codemasters Southam added DX11 functionality to their EGO 2.0 engine back in 2009 with DiRT 2, and while it doesn't make extensive use of DX11 it does use it to good effect in order to apply tessellation to certain environmental models along with utilizing a better ambient occlusion lighting model. As a result DX11 functionality is very cheap from a performance standpoint, meaning it doesn't require a GPU that excels at DX11 feature performance.

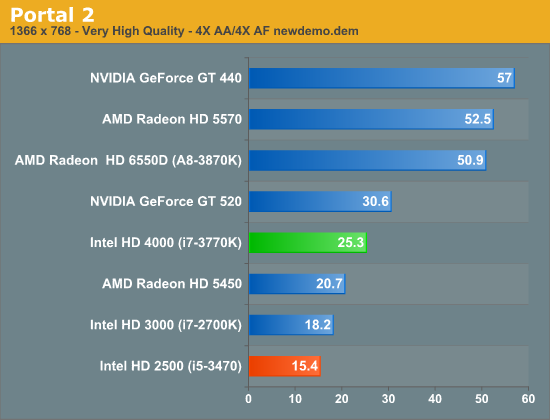

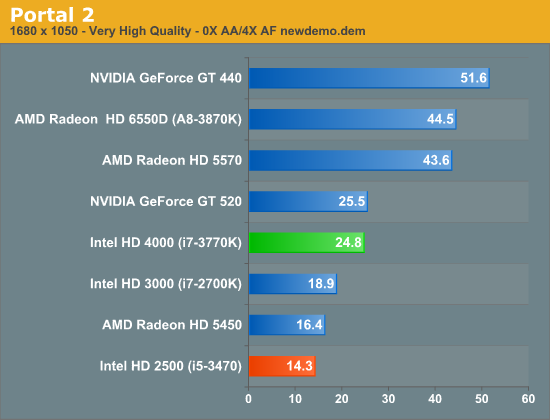

Portal 2

Portal 2 continues to be the latest and greatest Source engine game to come out of Valve's offices. While Source continues to be a DX9 engine, and hence is designed to allow games to be playable on a wide range of hardware, Valve has continued to upgrade it over the years to improve its quality, and combined with their choice of style you’d have a hard time telling it’s over 7 years old at this point. From a rendering standpoint Portal 2 isn't particularly geometry heavy, but it does make plenty of use of shaders.

It's worth noting however that this is the one game where we encountered something that may be a rendering error with Ivy Bridge. Based on our image quality screenshots Ivy Bridge renders a distinctly "busier" image than Llano or NVIDIA's GPUs. It's not clear whether this is causing an increased workload on Ivy Bridge, but it's worth considering.

Ivy Bridge's processor graphics struggles with Portal 2. A move to fewer EUs doesn't help things at all.

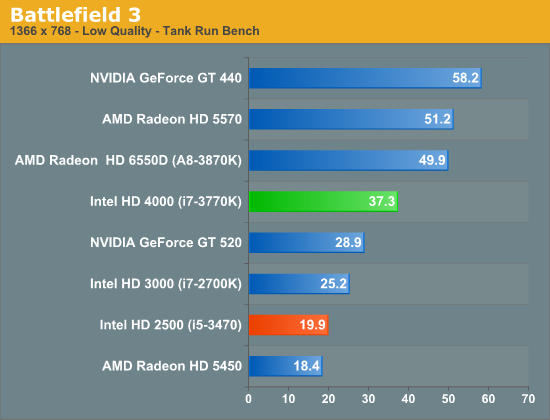

Battlefield 3

Its popularity aside, Battlefield 3 may be the most interesting game in our benchmark suite for a single reason: it was the first AAA DX10+ game. Consequently it makes no attempt to shy away from pushing the graphics envelope, and pushing GPUs to their limits at the same time. Even at low settings Battlefield 3 is a handful, and to be able to run it on an iGPU would no doubt make quite a few traveling gamers happy.

The HD 4000 delivered a nearly acceptable experience in single player Battlefield 3, but the HD 2500 falls well below that. At just under 20 fps, this isn't very good performance. It's clear the HD 2500 is not made for modern day gaming, never mind multiplayer Battlefield 3.

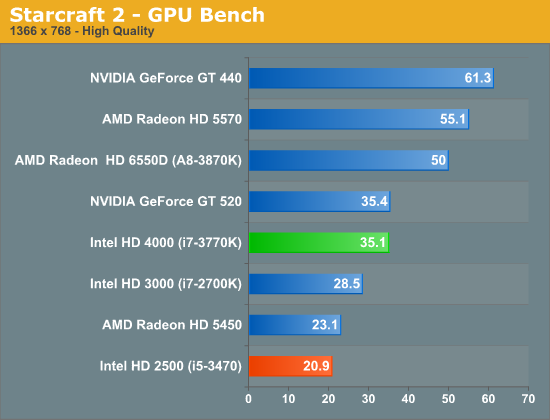

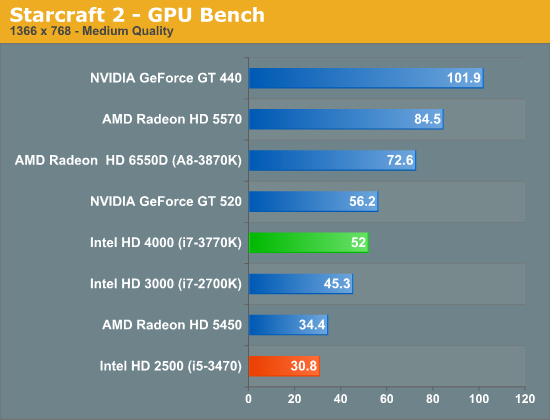

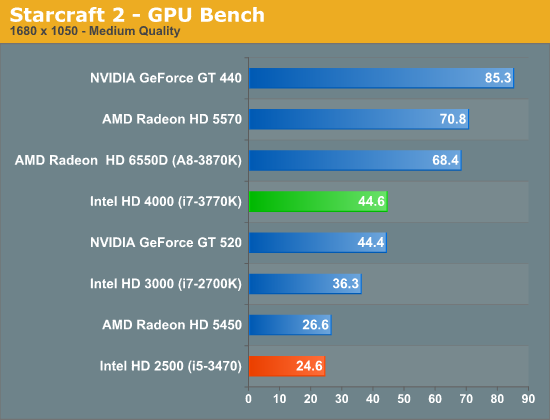

Starcraft 2

Our next game is Starcraft II, Blizzard’s 2010 RTS megahit. Starcraft II is a DX9 game that is designed to run on a wide range of hardware, and given the growth in GPU performance over the years it's often CPU limited before it's GPU limited on higher-end cards.

Starcraft 2 performance is borderline at best on the HD 2500. At low enough settings the HD 2500 can deliver an OK experience, but beyond that it's simply not fast enough.

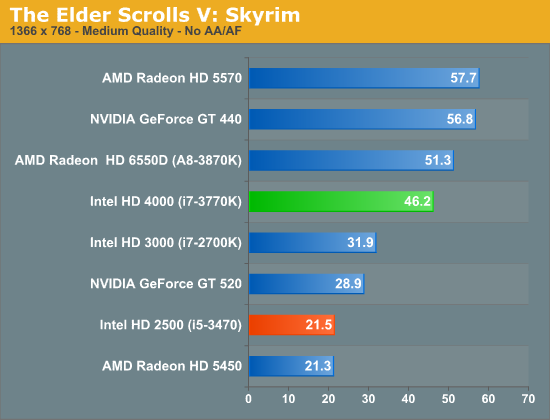

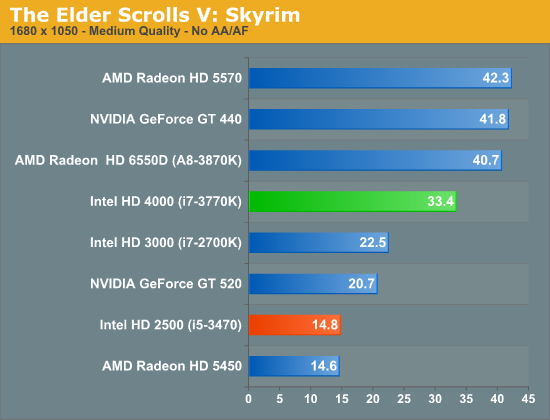

Skyrim

Bethesda's epic sword & magic game The Elder Scrolls V: Skyrim is our RPG of choice for benchmarking. It's altogether a good CPU benchmark thanks to its complex scripting and AI, but it also can end up pushing a large number of fairly complex models and effects at once. This is a DX9 game so it isn't utilizing any of IVB's new DX11 functionality, but it can still be a demanding game.

At lower quality settings, Intel's HD 4000 passed the threshold for playable in Skyrim on average. The HD 2500, however, is definitely not in the same league. At 21.5 fps, performance is marginal at best, and when you crank up the resolution to 1680 x 1050 the HD 2500 simply falls apart.

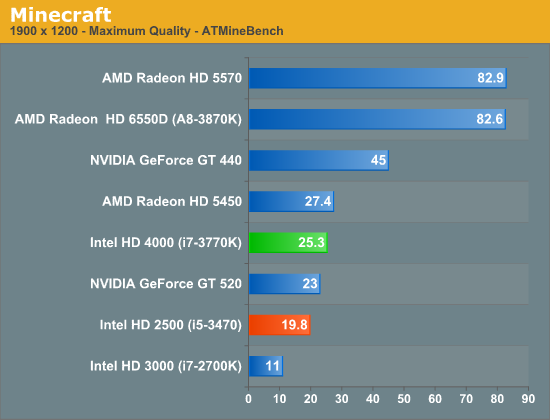

Minecraft

Switching gears for the moment we have Minecraft, our OpenGL title. It's no secret that OpenGL usage on the PC has fallen by the wayside in recent years, and as far as major games go Minecraft is one of but a few recently released titles using OpenGL. Minecraft is incredibly simple (not even utilizing pixel shaders, let alone more advanced hardware), but this doesn't mean it's easy to render. Its use of massive amounts of blocks (and the overdraw that creates) means you need solid hardware and an efficient OpenGL implementation if you want to hit playable framerates with a far render distance. Consequently, as the most successful OpenGL game in quite some number of years (at over 5.5 million copies sold), it's a good reminder for GPU manufacturers that OpenGL is not to be ignored.

Our test here is pretty simple: we're looking at a lush forest after the world finishes loading. Ivy Bridge's processor graphics maintains a significant performance advantage over the Sandy Bridge generation, making this one of the only situations where the HD 2500 is able to significantly outperform Intel's HD 3000. Minecraft is definitely the exception, however, as whatever advantage we see here is purely architectural.

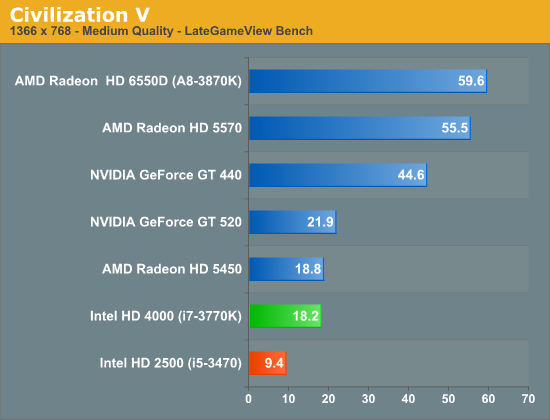

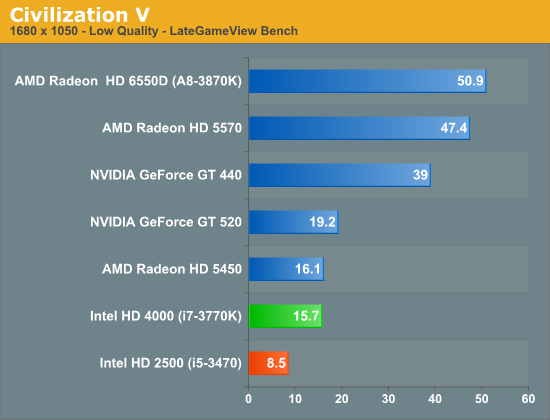

Civilization V

Our final game, Civilization V, gives us an interesting look at things that other strategy games cannot match, with a much weaker focus on shading in the game world, and a much greater focus on creating the geometry needed to bring such a world to life. In doing so it uses a slew of DirectX 11 technologies, including tessellation for said geometry, driver command lists for reducing CPU overhead, and compute shaders for on-the-fly texture decompression. There are other games that are more stressful overall, but this is likely the game most stressing of DX11 performance in particular.

Civilization V was an extremely weak showing on the HD 4000 when we looked at it last month, and it's even worse on the HD 2500. Civ players need not bother with Intel's processor graphics; go AMD or discrete.

67 Comments

Ryan Smith - Thursday, May 31, 2012

You're completely right. We were in a rush and copied that passage from our original IVB review, which is no longer applicable.

SteelCity1981 - Thursday, May 31, 2012

Intel could have at least called it a 3500 and slapped 2 more EUs onto it.

fic2 - Thursday, May 31, 2012

Agreed. I don't understand why Intel basically stood pat on the low end HD.

But then again like everyone else I never understood why the HD3000 was only in the K series and maybe 5% of K series users don't have a discrete GPU so the HD3000 isn't being used.

CeriseCogburn - Monday, June 11, 2012

Good point. Lucid logic tried to fix that some, and did a decent job, and don't forget quick sync, plus now with zero core amd cards, and even low idle power 670's and 680's, leaving on SB K chip hd3000 cores looks even better - who isn't trained in low power if they have a video card, after all it's almost all people rail about for the last 4 years.

So if any of that constant clamor for a few watts power savings has any teeth whatsoever, every person with an amd card before this last gen will be using the SB HD3000 and then switching on the fly to gaming with lucid logic.

n9ntje - Thursday, May 31, 2012

So this must be a midrange desktop chip? Horrendous performance on the graphics side from Intel again.

Very curious how AMD's Trinity desktop will perform, at the same price range it will be obvious it will obliterate Intel's offerings on the graphics side. What's more impressive AMD is still on 32nm..

7Enigma - Thursday, May 31, 2012

For me this IS the perfect chip. No use for the GPU so cheaper = better. I would need a K model though for OC'ing potential, but I'm glad to see that if I can't have my CPU-only (no GPU) chip, at least I can have a hacked down version that is more in line with a traditional CPU.

silverblue - Thursday, May 31, 2012

What Intel should really be doing here is offering the 4000 on all i3s and some i5s to offset the reduced CPU performance. If you want to give AMD something to think about, HD 4000 on an Ivy Bridge dual core is very much the right way of going about it.

CeriseCogburn - Friday, June 1, 2012

Then Intel has a lame trinity level cpu next to a losing gpu.

I think Intel will stick with its own implementations, don't expect to be hired as future product manager.

ShieTar - Thursday, May 31, 2012

Interestingly enough, Intel will also happily sell you what is basically the same chip, without any GPU, 100 MHz slower but with 2MB extra L3-Cache for the same price. They call that offer Xeon E3-1220V2. And it is 69W TDP, not 77W as the i5-3470.

Who knows, the bigger Cache might even make it the better CPU for a not-overclocking gamer. If normal boards support it.

Pazz - Thursday, May 31, 2012

Anand,

Following on from your closing statement with regards to the HD 4000 being the minimum, will you be doing a review of the 3570K? Surely with this model being the lowest budget Ivy HD4000 chip, it'll be a fairly popular option for many system builders and OEMs.