Intel SSD 520 Review: Cherryville Brings Reliability to SandForce

by Anand Lal Shimpi on February 6, 2012 11:00 AM EST

The Intel SSD 520

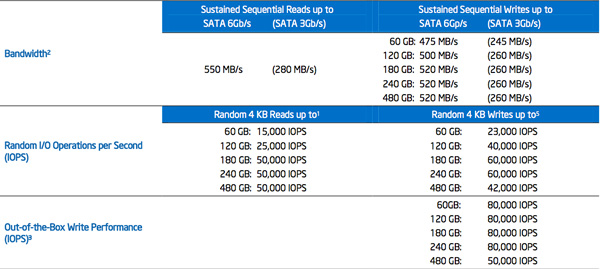

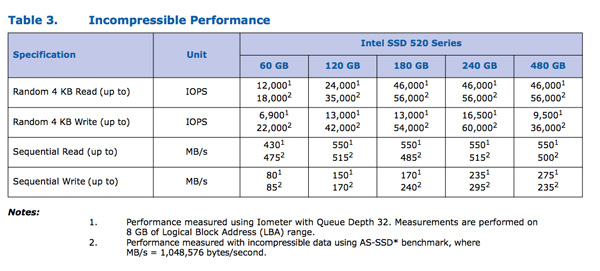

Intel sent us a 240GB and 60GB SSD 520 for review, but the performance specs of the entire family are in the table below:

The Intel SSD 520 is available in both 9.5mm and 7mm versions, with the exception of the 480GB flavor that only comes in a 9.5mm chassis. The 520 uses Intel's standard 7mm chassis with a 2.5mm removable plastic adapter that we've seen since the X25-M G2. The plastic adapter allows the drive to fit in bays designed for 9.5mm drives. Note that Intel doesn't ship shorter screws with the 9.5mm drives, so you can't just remove the plastic adapter and re-use the existing screws if your system only accepts a 7mm drive.

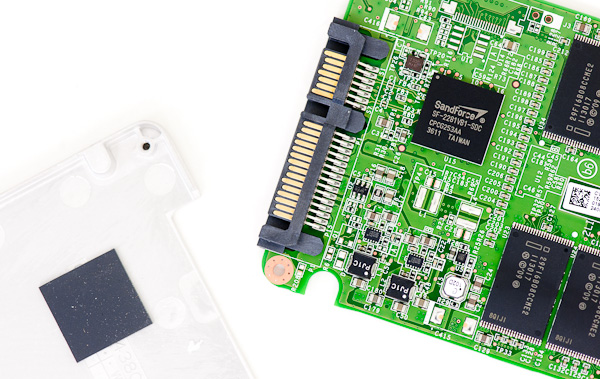

Inside the drive we see the oh-so-familiar SandForce SF-2281 controller and Intel 25nm MLC NAND. The controller revision appears unchanged from other SF drives we've seen over the past year. The PCB design is unique to the 520, making it and the custom Intel firmware the two noticeable differences between this and other SF-2281 drives.

Intel uses the metal drive chassis as a heatsink for the SF-2281 controller

The SF-2281 Controller

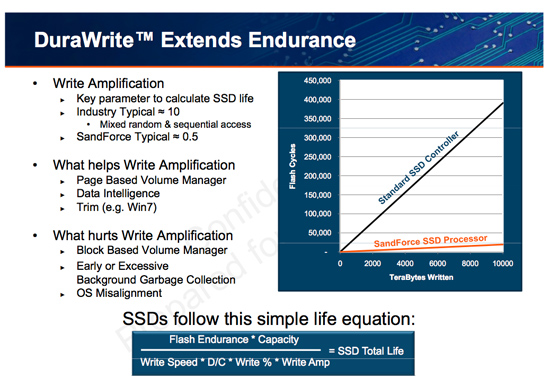

I've explained how the SF-2281 works in the past, but for those of you who aren't familiar with the technology I'll provide a quick recap. Tracking the location of data written to an SSD ends up being one of the most difficult things a controller has to do. There are a number of requirements that must be met. Data can't be written to the same NAND cells too frequently and it should be spread out across as many different NAND die as possible (to improve performance). For large sequential transfers, meeting these (and other) requirements isn't difficult. Problems arise when you've got short bursts of random data that can't be combined. The end result is leaving the drive in a highly fragmented state that is suboptimal for achieving good performance.

You can get around the issue of tracking tons of data by simply not allowing small groups of data to be written. Track data at the block level, always requiring large writes, and your controller has a much easier job. Unfortunately block mapping results in very poor small file random write performance as we've seen in earlier architectures so this approach isn't very useful for anything outside of CF/SD cards for use in cameras.

A controller can rise to the challenge by having large amounts of cache (on-die and external) to help deal with managing huge NAND mapping tables. Combine tons of fast storage with a fast controller and intelligent firmware and you've got a good chance of building a high performance SSD.

SandForce's solution leverages the "work smart, not hard" philosophy. SF controllers reduce the amount of data that has to be tracked on NAND by compressing any data the host asks to write to the drive. From the host's perspective, the drive wrote everything that was asked of it, but from the SSD's perspective only the simplest representation of the data is stored on the drive. Running real-time compression/de-duplication algorithms in hardware isn't very difficult and the result is great performance for a majority of workloads (you can't really write faster than a controller that doesn't actually write all of the data to NAND). The only limit to SandForce's technology is that any data that can't be compressed (highly random bits or data that's already compressed) isn't written nearly as quickly.
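The difference between compressible and incompressible data is easy to see with a small Python sketch. SandForce's actual algorithms aren't public, so zlib is used here purely as a stand-in, and the function names and the "store whichever form is smaller" rule are assumptions made for illustration.

```python
import os
import zlib


def stored_size(data: bytes) -> int:
    """Bytes that would reach NAND if a controller kept whichever is smaller:
    the raw data or its compressed form (zlib is only a stand-in here)."""
    compressed = zlib.compress(data, 6)
    return min(len(data), len(compressed))


def nand_write_ratio(data: bytes) -> float:
    """Fraction of the host's bytes that actually has to be written."""
    return stored_size(data) / len(data)


# Highly repetitive data, similar in spirit to easily compressed files.
compressible = b"The quick brown fox jumps over the lazy dog. " * 2000

# Random bytes behave like encrypted or already-compressed data.
incompressible = os.urandom(len(compressible))

if __name__ == "__main__":
    print("compressible ratio:  ", nand_write_ratio(compressible))
    print("incompressible ratio:", nand_write_ratio(incompressible))
```

The repetitive buffer shrinks to a small fraction of its size, while the random buffer doesn't shrink at all, which mirrors why a SandForce drive's write speed depends on what you feed it.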

Intel does a great job of spelling out the differences in performance depending on the type of data you write to the SSD 520, but it's something that customers of previous Intel SSDs haven't had to worry about. Most client users stand to benefit from SandForce's technology and it's actually very exciting for a lot of enterprise workloads as well, but you do need to pay attention to what you're going to be doing with the drive before deciding on it.

The Intel SSD Toolbox

The Intel SSD 520 works flawlessly with the latest version of Intel's SSD Toolbox. The toolbox allows you to secure erase the drive from within Windows, and it also allows you to perform firmware updates and pull SMART info from the drive. Unlike other SandForce toolboxes, Intel's software works fine with Intel's RST drivers installed.

The Test

| Component | Configuration |
|---|---|
| CPU | Intel Core i7 2600K running at 3.4GHz (Turbo & EIST Disabled) - for AT SB 2011, AS SSD & ATTO |
| Motherboard | Intel DH67BL Motherboard |
| Chipset | Intel H67 |
| Chipset Drivers | Intel 9.1.1.1015 + Intel RST 10.2 |
| Memory | Corsair Vengeance DDR3-1333 2 x 2GB (7-7-7-20) |
| Video Card | eVGA GeForce GTX 285 |
| Video Drivers | NVIDIA ForceWare 190.38 64-bit |
| Desktop Resolution | 1920 x 1200 |
| OS | Windows 7 x64 |

138 Comments

Lord 666 - Monday, February 6, 2012 - link

Is there a link or reference on compatibility for 320 drives in HP servers?

One element that was not mentioned was encryption of the 520 and use within servers.

Mr Perfect - Monday, February 6, 2012 - link

Intel is also quick to point out that while other SF-2281 manufacturers can purchase the same Intel 25nm MLC NAND used on the 520, only Intel's drives get the absolute highest quality bins and only Intel knows how best to manage/interact with the NAND on a firmware level.

Intel forgot to mention that they don't have to pay a markup on their own flash. Or do they intend to pass that on to the consumer? Maybe accounting steps in and makes the SSD department pay up so the flash division doesn't lose profit?

Jovec - Monday, February 6, 2012 - link

Actually, they probably do pay a markup. Often times with large companies a division actually "buys" resources/parts from another. Yes, it is mostly accounting, but the flash division can book flash sales to their SSD division as revenue. The SSD division cannot just factor in flash memory "at cost" when developing their SSD product lines.

NitroWare - Monday, February 6, 2012 - link

From what you can disclose, what has Intel actually been doing during development? Have they been debugging a branch of the firmware, or have they been doing accelerated lifetime testing as part of their QA?

Not much use debugging the firmware (although required for OEM) if the things don't last as long as others in real-world use, especially enterprise.

Intel is only offering 5yrs because they can, because they have tighter control over the project but not full control. It's a token gesture. I wonder if the terms of the warranty cover any applications or just typical desktop/notebook use.

Intel only 'covered' overclocking recently, so it would not be surprising if there are some clauses in the terms of use of Cherryville.

Most of the launch reviews have claimed the drive is 'more reliable'? The consumer is supposed to take their word for it? SSDs are not cheap and EVERY Intel product ever made has errata, many marked as nofix, many marked as fix in sw, many as fix in next hw.

The e-ink on the embargoed reviews isn't even dry and there are already claims of reliability.

P54C had errata, PIIX4 had errata, many Slot 1 desktop boards had errata, FWH had errata, ICH5 had errata, some early 775 boards needed a BOM update list to support Core 2 Duo, many GMA have errata, SNB-E has errata.

The firmware issue for other OEM customers can be seen as more critical than Intel getting their product right. Many users or resellers do not even know there is a firmware update for their product. Look what happened with Crucial, the fix for their problems only came out recently.

Kingston were even still shipping outdated specs on the boxes of their product and nowhere is there any mention that the user should even consider looking for firmware. As of November/December last year, that particular drive did not have 332 preloaded at the factory.

SSD vendors are still claiming the fix is 'optional and should only be applied if issues occur' to cover themselves. This drive being released is just more shiny or is a swiss army knife with more tools in it. It does not change the status quo with SF or any other third party controller.

Validation lists are lacking for many SSDs, as some are still seen as enthusiast toys, and it is very hard to answer someone when they ask if an enthusiast-grade SSD using SF is suitable for a server, especially if the server is to use a RAID HBA and Linux.

I will also note that the testbed used for this review is using an older version of the Intel RST driver. The changelog for each release is quite significant and there is a focus on improving SSD performance and reliability.

The latest BIOS for motherboards updates the Intel RST OROM, improving compatibility for AHCI, especially RAID. The latest stable Intel RST should be used by end users. Intel is churning out the updates more frequently than in the past, which is good to see.

Then there's third party controllers. AMD is really lax with SATA updates, let alone the third parties. How is a professional supposed to put trust in a third party SATA or USB controller when the shipping firmware is labeled 'version 0.9'?

I am even on the fence about reviewers using stable platforms for reviews. I like to update drivers whenever possible and recommend drivers be kept up to date, I can understand the need to keep a stable platform, but in the real world bugs get fixed, updates get released and people apply these updates.

I am really glad Intel took their time with this product, as there is a pointless marketing flood of identical SSDs. But what now? As we know, Intel take their time and do things right with forward-looking foresight, but in real-world use it will take the masses a long time to discover any flaws, especially if they are of a life-expectancy nature.

Anand Lal Shimpi - Monday, February 6, 2012 - link

It's far more complex than just accelerated lifetime testing. Intel's validation encompasses that as well as widespread compatibility testing, field testing and workload testing. As we've seen in the past, it's by no means perfectly complete but it's generally more robust than anything the competition is able to do on a regular basis.

In our review we presented a situation where the 520 was indeed more reliable than standard SF-2281 drives.

We use a stable platform for performance tests but we always do functional testing with the latest available drivers/BIOSes (this was the case in Brian's BSOD testbed).

Take care,

Anand

NitroWare - Monday, February 6, 2012 - link

Anand, I am with you on Intel's validation processes being 200% of what's needed and then some. Many forget where Intel products end up: they end up in places of serious use, enterprise desktops, developers, F1 teams, military, OEM and whitebox integrators.

There was even a quote from John Carmack in the PR...

One GPF or one BSOD that's driver related should not be tolerated, and I am of the belief that any error can be debugged, traced and fixed by any sw or hw vendor. It's more a question of whether they want to, for whatever reason, internal or external.

You are right in your latter definition of reliable and I at least know that if you say that word to your readers you can back it up, but everyone else took the bait on a general level which is why I waited for your personal overview as you would say more than just that 'it is fast'

You were one of the few who bothered to dive deeper to uncover the truth behind all SSDs in your vlog on YouTube and editorials.

The way I am defining reliable is over the life cycle of the drive though, that you can depend on the drive to always work and not die/lose data randomly.

Field testing - that worked great for the Vertex with ICH10R.. I think there are still contemporary boards out there that don't have patches for the early Vertexes.

It's worse with laptops, I am aware of Thinkpads with issues with third party SSDs.

Several server/hosting companies are interested in putting 520s in RAID 10 with an HBA and have told me they went with those simply because the 710 is too expensive; the 710 is A$700 for 100GB at the moment, whereas the 520 is several hundred less, let alone a 'generic' 240GB 2281 drive.

When you are quoting a client for a server, they may be picky on the final price and downgrade it.

The things better work. The irony is the HBAs in question are LSI, and IMO LSI do not care (yet) about SSD interop with their own companion products.

Morg. - Tuesday, February 7, 2012 - link

Intel's validation is great.

Some issues arise from the chipsets ... a spot where Intel (even when they're not doing shady stuff) can easily make important adjustments knowingly.

Overall I would still think the Intel drive will be more *reliable* but when you're talking raid arrays .. who cares ?

And putting two vertex3-s instead of an intel 710 is called upgrading : more resilience, more speed, more space for the same price.

(the minimal fine-tuning related to ssd power states is a mere detail on a server that's not even running windows in the first place)

Same goes for consumers imho .. if you're going to market a 3% reliability advantage at 45% price, I sure hope nobody buys it.

Morg. - Tuesday, February 7, 2012 - link

You presented nothing Anand, you just said what Intel paid you to say.

You cannot measure that this disk is more reliable because you have NOT tested a thousand drives in a thousand configs, i.e. the scenarios that actually caused so many vertex 3's to fail.

So the only conclusion one can draw from your review, you included, is that the computer that had a BSOD-ing SF-2281 did not BSOD with the intel 520 .

And that is not what I would call "indeed more reliable".

OTOH, gotta keep your sponsor money and I respect that - just cut the bullcrap ;)

seapeople - Tuesday, February 7, 2012 - link

Brilliant, you've figured it out! The reason Anand has been recommending SandForce drives for the last three years is because Intel told him they would eventually get on board with SandForce, and therefore paid him to preemptively pump up the SandForce drive reputation.

This is sheer genius.

NitroWare - Wednesday, February 8, 2012 - link

"Overall I would still think the Intel drive will be more *reliable* but when you're talking raid arrays .. who cares ?"

When the HBA vendor or even the motherboard SATA controller hasn't validated RAID SSDs properly, it does matter. Look at the lists for many popular RAID HBAs: not all mechanical HDDs are listed, even ones which are intended for RAID.

"And putting two vertex3-s instead of an intel 710 is called upgrading : more resilience, more speed, more space for the same price."

Two instead of 1 - fair enough, but RAID 1 will not eliminate any bugs in a drive, especially if the drives are identical and have logic flaws rather than part failure.

Say you had two M4s with the counter issue in RAID, RAID doesn't solve anything and server/data redundancy comes in. Drive RAID helps for when a drive may fail randomly. If all the drives in your array have the same flaw/known issue then you might be in trouble.

"the minimal fine-tuning related to ssd power states is a mere detail on a server that's not even running windows in the first place"

Firmware is firmware. AHCI is AHCI. I don't think anyone has done finite analysis on whether any issues can come up in Linux with the multitude of controller hw and firmware, driver and kernel combinations and so on.

Good RAID HBA are only validated for particular OSes. Just too many combinations.

"Same goes for consumers imho .. if you're going to market a 3% reliability advantage at 45% price, I sure hope nobody buys it. "

Consumers will never buy the more expensive part. Enthusiasts are cheapskates.

These drives aren't meant for the average consumer. They are meant for power users, enthusiasts, pros and entry enterprise. 3% matters. By that notion, you disapprove of ECC in workstations and servers as the spend is too much, even though this isn't for consumers.

"You cannot measure that this disk is more reliable because you have NOT tested a thousand drives in a thousand configs, i.e. the scenarios that actually caused so many vertex 3's to fail."

At a launch like this, the product is only available a short time before launch. In-depth projects such as long-term testing are something some vendors are not interested in with some media and some regions.

How many users are willing to wait, 1,2,3 months for the outcome of a long term test for something 'this hot' . They will go buy the Force 3 for $180 because they can't wait.

"So the only conclusion one can draw from your review, you included, is that the computer that had a BSOD-ing SF-2281 did not BSOD with the intel 520 ."

What did the other embargo reviews do? They all ran the standard suite and claimed it was faster and more reliable. How many did a plugtest with AMD, JMB, ASMedia, Marvell, Intel, Apple SATA, HBA, etc.?

"OTOH, gotta keep your sponsor money and I respect that - just cut the bullcrap "

I do not see anyone else in a similar position to him championing that SF improve their products. In all of his vlogs and editorials he explains the difference in operation between SandForce and Intel fairly objectively. Anything further is irrelevant.

I do not think the industry has been at the mercy of a third party vendor where there is a cloud over the reliability of their product for some time. We have to go back several years when certain video and audio vendors had poor or non existent support, but even those are accessories and did not affect data.