Intel's Medfield & Atom Z2460 Arrive for Smartphones: It's Finally Here

by Anand Lal Shimpi on January 10, 2012 8:00 PM EST

The CPU

Medfield is the platform, Penwell is the SoC and the CPU inside Penwell is codenamed Saltwell. It's honestly not much different than the Bonnell core used in the original Atom, although it does have some tweaks for both power and performance.

Almost five years ago I wrote a piece on the architecture of Intel's Atom. Luckily (for me, not Intel), Atom's architecture hasn't really changed over the years, so you can still look back at that article and have a good idea of what is at the core of Medfield/Penwell. Atom is still a dual-issue, in-order architecture with Hyper-Threading support. The integer pipeline is sixteen stages long, significantly deeper than the Cortex A9's. The longer pipeline was introduced to help reduce Atom's power consumption by lengthening some of the decode stages and increasing cache latency to avoid burning through the core's power budget. Atom's architects, like those who worked on Nehalem, had the same 2:1 mandate: every new feature added to the processor's design had to deliver at least a 2% increase in performance for every 1% increase in power consumption.
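
To make the 2:1 mandate concrete, here is a minimal sketch of the rule as a pass/fail check. The function name and the feature numbers are hypothetical and invented purely for illustration; they do not come from Intel.

```python
def passes_two_to_one(perf_gain_pct: float, power_cost_pct: float) -> bool:
    """Return True if a feature delivers at least 2% performance per 1% power."""
    if power_cost_pct <= 0:
        # Assumption: a feature that costs no extra power passes
        # as long as it does not reduce performance.
        return perf_gain_pct >= 0
    return perf_gain_pct >= 2.0 * power_cost_pct


# Hypothetical candidate features: (performance gain %, power increase %).
candidates = {
    "feature_a": (4.0, 2.0),  # exactly 2:1, so it passes
    "feature_b": (3.0, 2.0),  # only 1.5:1, so it is rejected
}

for name, (perf, power) in candidates.items():
    verdict = "keep" if passes_two_to_one(perf, power) else "reject"
    print(f"{name}: +{perf}% perf for +{power}% power -> {verdict}")
```

Under this rule a feature that looks attractive on performance alone can still be rejected if its power cost is too high, which is the point of the mandate.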

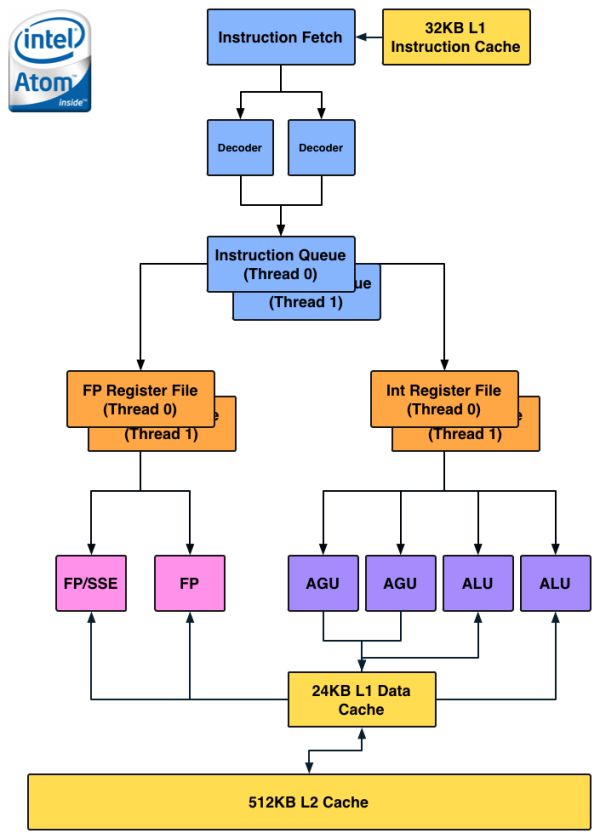

Atom is a very narrow core as the diagram below will show:

There are no dedicated integer multiply or divide units; that's all shared with the FP hardware. Intel duplicated some resources (e.g. register files, queues) to enable Hyper-Threading support, but stopped short of increasing execution hardware to drive up efficiency. The tradeoff seems to have worked because Intel is able to deliver performance better than a dual-core Cortex A9 from a single HT-enabled core. Intel also lucks out because while Android is very well threaded, not all tasks will continually peg both cores in a dual-core A9 machine. At higher clock speeds (1.5GHz+) and with heavy multi-threaded workloads, it's possible that a dual-core Cortex A9 could outperform (or at least equal) Medfield, but I don't believe that's a realistic scenario.

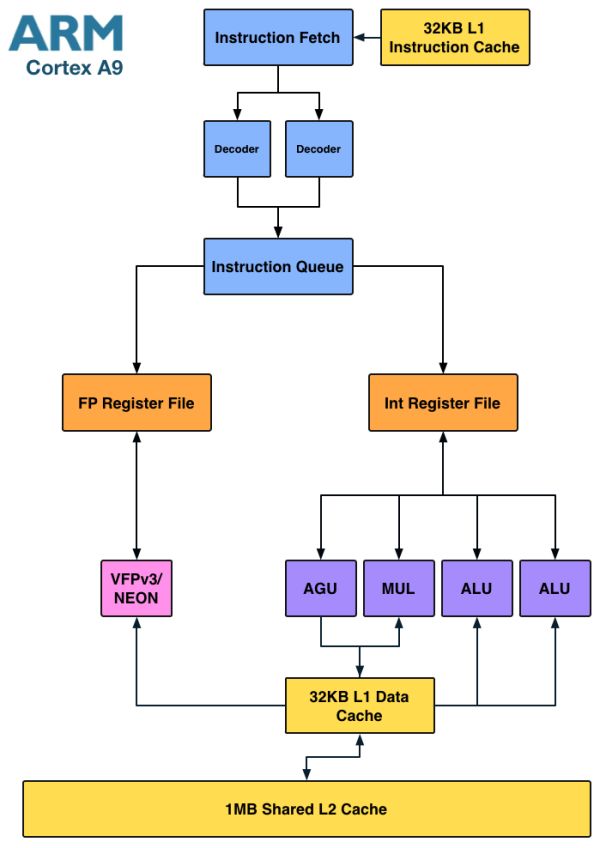

Architecturally the Cortex A9 doesn't look very different from Atom:

Here we see a dedicated integer multiply unit (shared with one of the ALU ports) but only a single port for FP/NEON. It's clear that the difference between Atom and the Cortex A9 isn't as obvious at the high level. Instead it's the lower level architectural decisions that give Intel a performance advantage.

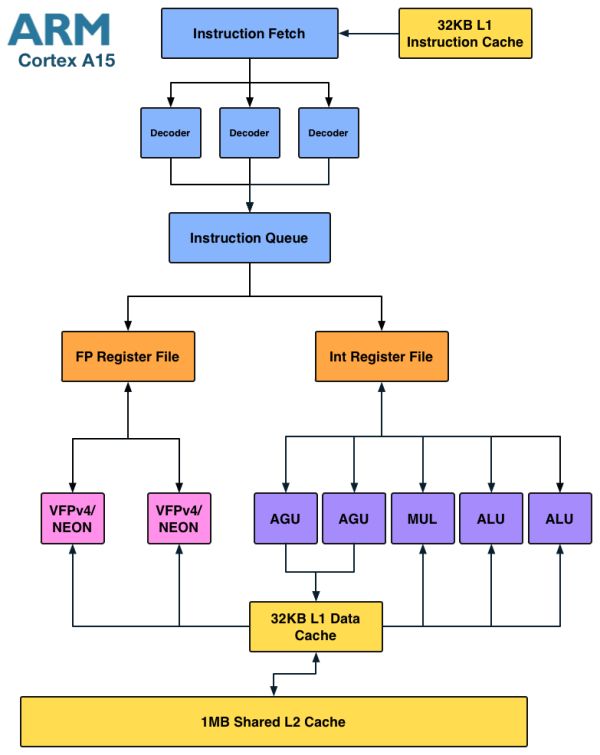

Where Intel is in trouble is if you look at the Cortex A15:

The A15 is a far more modern design, also out of order but much wider than A9. I fully expect that something A15-class can outperform Medfield, especially if the former is in a dual-core configuration. Krait falls under the A15-class umbrella so I believe Medfield has the potential to lose its CPU performance advantage within a couple of quarters.

Enhancements in Saltwell

Although the CPU core is mated to a 512KB L2 cache, there's a separate 256KB low power SRAM that runs on its own voltage plane. This ULP SRAM holds CPU state and data from the L2 cache when the CPU is power gated in the deepest sleep state. The reasoning for the separate voltage plane is simple. Intel's architects found that the minimum voltage for the core was limited by Vmin for the ULP SRAM. By putting the two on separate voltage planes it allowed Intel to bring the CPU core down to a lower minimum power state as Vmin for the L2 is higher than it is for the CPU core itself. The downside to multiple power islands is an increase in die area. Since Medfield is built on Intel's 32nm LP process while the company transitions to 22nm, spending a little more on die area to build more power efficient SoCs isn't such a big deal. Furthermore, Intel is used to building much larger chips, making Medfield's size a relative nonissue for the company.

The die size is actually very telling as it's a larger SoC than a Tegra 2 with two Cortex A9s despite only featuring a single core. Granted the rest of the blocks around the core are different, but it goes to show you that the CPU core itself (or number of cores) isn't the only determinant of the die size of an SoC.

The performance tweaks come from the usual learnings that take place over the course of any architecture's lifespan. Some instruction scheduling restrictions have been lifted, memory copy performance is up, branch predictor size increased and some microcode flows run faster on Saltwell now.

Clock Speeds & Turbo

Medfield's CPU core supports several different operating frequencies and power modes. At the lowest level is its C6 state. Here the core and L2 cache are both power gated, with their state saved off in a lower power on-die SRAM. Total power consumption in C6 of the processor island is effectively zero. This isn't anything new; Intel has implemented similar technologies in desktops since 2008 (Nehalem) and notebooks since 2010 (Arrandale).

When the CPU is actually awake and doing something, however, it has a range of available frequencies: 100MHz all the way up to 1.6GHz in 100MHz increments.

The 1.6GHz state is a burst state and shouldn't be sustained for long periods of time, similar to how Turbo Boost works on Sandy Bridge desktop/notebook CPUs. The default maximum clock speed is 1.3GHz, although just as is the case with Turbo enabled desktop chips, you can expect to see frequencies greater than 1.3GHz on a fairly regular basis.
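
As a rough sketch of what that frequency ladder looks like, the snippet below enumerates the operating points from 100MHz to 1.6GHz in 100MHz steps. Treating everything above the 1.3GHz default maximum as burst-only is an assumption for illustration (the text above only says the 1.6GHz state is a burst state), and all names are hypothetical.

```python
MIN_MHZ = 100           # lowest active frequency
STEP_MHZ = 100          # spacing between operating points
DEFAULT_MAX_MHZ = 1300  # default maximum clock
BURST_MAX_MHZ = 1600    # top burst frequency


def operating_points() -> list[int]:
    """All frequencies from 100MHz up to 1.6GHz in 100MHz increments."""
    return list(range(MIN_MHZ, BURST_MAX_MHZ + STEP_MHZ, STEP_MHZ))


def is_burst(freq_mhz: int) -> bool:
    # Assumption: any point above the default maximum counts as burst-only.
    return DEFAULT_MAX_MHZ < freq_mhz <= BURST_MAX_MHZ


points = operating_points()
sustained = [f for f in points if not is_burst(f)]
burst = [f for f in points if is_burst(f)]

print(f"{len(points)} operating points in total")
print("sustained:", sustained)
print("burst:", burst)
```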

Power consumption along the curve is all very reasonable:

| Medfield CPU Frequency vs. Power | 100MHz | 600MHz | 1.3GHz | 1.6GHz |
| --- | --- | --- | --- | --- |
| SoC Power Consumption | ~50mW | ~175mW | ~500mW | ~750mW |

Since most ARM based SoCs draw somewhere below 1W under full load, these numbers seem to put Medfield in line with its ARM competitors - at least on the CPU side.
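
One way to read the power figures is to divide each one by its frequency. The sketch below does that arithmetic using the approximate numbers from the table. Treating mW per MHz as a proxy for energy per unit of work assumes performance scales linearly with clock speed, which is a simplification and not something the figures themselves establish.

```python
# Approximate SoC power (mW) at each CPU frequency (MHz), from the table above.
power_mw = {100: 50, 600: 175, 1300: 500, 1600: 750}

# mW per MHz: a crude proxy for energy per unit of work, assuming
# work done scales linearly with frequency (a simplifying assumption).
mw_per_mhz = {freq: mw / freq for freq, mw in power_mw.items()}

for freq in sorted(mw_per_mhz):
    print(f"{freq:>5} MHz: {mw_per_mhz[freq]:.3f} mW/MHz")

# The operating point with the lowest mW/MHz under this simple metric.
most_efficient = min(mw_per_mhz, key=mw_per_mhz.get)
print("lowest mW/MHz at", most_efficient, "MHz")
```

By this crude measure the 600MHz point comes out ahead of both the 100MHz floor and the 1.6GHz burst state, though fixed platform power and real workload behavior would change the picture.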

It's important to pay attention to the fact that we're dealing with similar clock frequencies to what other Cortex A9 vendors are currently shipping. Any performance advantages will either be due to Medfield boosting up to 1.6GHz for short periods of time, inherently higher IPC and/or a superior cache/memory interface.

164 Comments


Griswold - Thursday, January 12, 2012

How is "too little too late" going to help Intel? By the time products with this trash flock to market, it'll be up against A15 and look like the thing from yesteryear it really is..

iwod - Tuesday, January 10, 2012

It was always only a matter of time before Intel get a x86 CPU with their superior manufacturing down to ARM SoC level. And since new science discovery is pushing Moores Law's Limit further and further away, intel has a much better fighting chance.

The problem is how much does an Intel SoC cost compare to a reference design ARM made SoC in TSMC?

Griswold - Thursday, January 12, 2012

Come back in a year and ask again... thats when this hits the shelves.

Computer Bottleneck - Tuesday, January 10, 2012

Thank you for the article!

Is there any chance we could see a teardown and analysis of the Intel Medfield reference design platform in the next 6 months?

I think it would be very interesting to compare Intel's progress in chip integration over the next few years. (ie, Compare Medfield reference platform to Silvermont reference platform to Airmont reference platform, etc)

jwcalla - Tuesday, January 10, 2012

I just got an ulcer thinking about how Android fragmentation is going to be taken to a whole new level.

"There's already support for targeting both ARM and x86 architectures in the Android NDK so anything developed going forward should be ok so long as the developer is aware of x86."

heh

right

That's sunshine and lollipops right there.

It isn't enough to worry about 4,000 different CPUs and five active versions of the OS, but now we have to worry about two completely incompatible instruction sets too. All for the glory of producing apps that make no money on this platform. Suddenly iOS seems even more attractive.

hechacker1 - Wednesday, January 11, 2012

It's only niche apps that require specific machine code that won't work. Otherwise the interpreted bytecode should just work.

It's similar to when Apple moved from PPC to x86. You just had to recompile the program with the new toolchain and it would create a universal binary. Except here, it isn't even necessary to recompile the majority of the time.

If anything, with the introduction of Android 4.0, we will finally have a common base for phones, tablets, and the one or two smart TVs. Sure it will require an upgrade for most users stuck on older unsupported Android versions, but that will come with time.

nofumble62 - Wednesday, January 11, 2012

the difference now is all about peripheral and IO design. The ARM advantage has shrinken to almost zero.

tipoo - Wednesday, January 11, 2012

Shrunken. Sorry.

dealcorn - Wednesday, January 11, 2012

ARM earned its dominance of the mobile space with affordable, superior power efficiency. Now, Intel waltzes in with a 5 year old design for a space it used to know nothing about and it has superior power efficiency. Is there some reason to think this is in any way not a replay of the old Intel vs RISC story?

It is hard to take ARM seriously when Intel's old design from a period when it was generally clueless is superior to what ARM markets today. However, we would not be here without ARM's historic contribution. Also, the market for garage door openers is not going away.

aury - Wednesday, January 11, 2012

"superior power efficiency"

how is this chip superior, it uses more power than Samsungs old A9 cortex, and Samsung's implementation isn't even the most power efficient, let alone that the A9 is an old chip to begin with