Why Ivy Bridge Is Still Quad-Core

by Kristian Vättö on December 5, 2011 1:35 PM EST - Posted in CPUs, Intel, Ivy Bridge

A couple of days ago, we published our Ivy Bridge Desktop Lineup Overview, in which we mentioned that Ivy Bridge will remain a quad-core solution. There are dozens of forum posts with people asking why there's no hex-core Ivy Bridge, so now seems like a good time to address the question. Fundamentally, Ivy Bridge is a die shrink of Sandy Bridge (a "tick" in Intel's world), and that usually means either the core count or frequency is increased thanks to the lower power consumption of the smaller process node. This time, however, instead of a hex-core part we get a chip that looks much the same as a year-old Sandy Bridge, only with improved efficiency and some other moderate tweaks to the design. Let's go through some of the elements that influence the design of a new processor, and when we're done we will hopefully have clarified why Ivy Bridge remains a quad-core solution.

Marketing

If we look at the situation from the marketing standpoint first, having a hex-core Ivy Bridge die would more or less kill the just released Sandy Bridge E. Sure, IVB is about five months away, but I doubt Intel wants to relive the Sandy Bridge vs. Nehalem (i7-9xx) situation--even Bloomfield vs. Lynnfield was quite bad. If Intel created a hex-core IVB die, they would also have to substantially cut the prices of SNB-E; otherwise very few SNB-E systems would be sold. The current cheapest hex-core SNB-E is $555, while a hex-core IVB would most likely be priced at $300 to $400 since it's aimed at the mainstream. Even with a price cut, most consumers would opt for the IVB platform due to cheaper motherboard costs and lower TDP. PCIe 3.0 should also make 16 lanes fine for dual-GPU setups, reducing the market for SNB-E even more.

Differentiating the lineup by keeping Ivy Bridge quad-core allows some market for SNB-E among enthusiast consumers. Ivy Bridge E isn't coming before H2 2012 anyway so SNB-E must please the high-end until IVB-E hits. In the end, we still recommend SNB-E primarily for servers and workstations where the extra memory channels, PCIe lanes, and dual-socket support are more important, but the lack of hex-core IVB parts at least gives the platform a bit more of an advantage.

Evolution from traditional CPU to SoC

There are more than just marketing reasons, though. If we look at the following die shots, we can see that CPUs are becoming increasingly similar to SoCs.

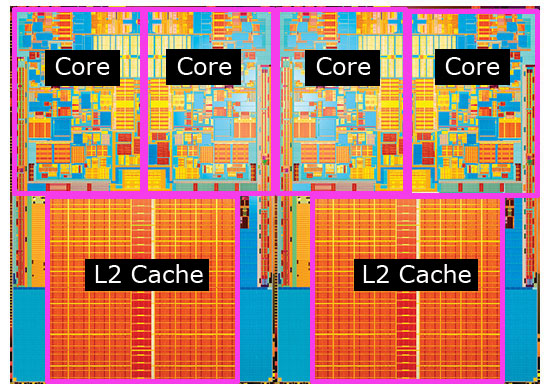

Quad-core Kentsfield package (2006)

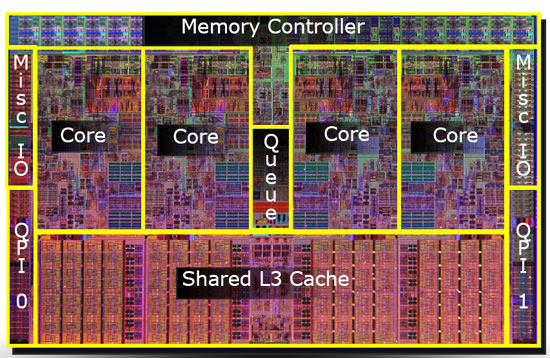

Quad-core Nehalem die (2008)

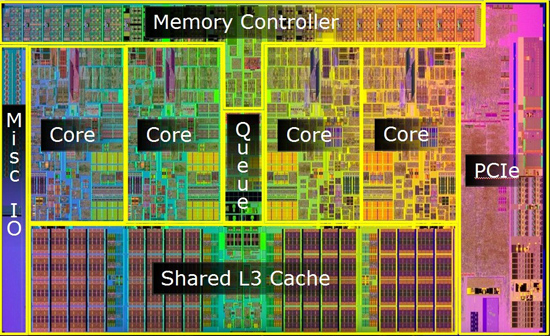

Quad-core Lynnfield die (2009)

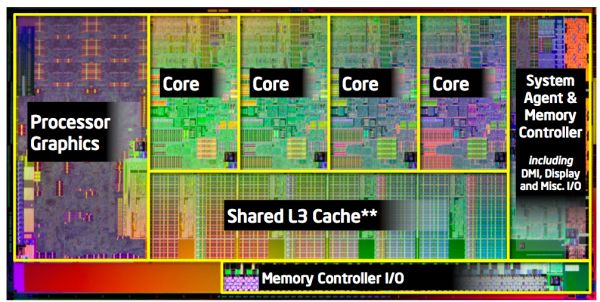

Quad-core Sandy Bridge die (2011)

These four (well, technically three because Kentsfield consists of two dual-core Conroe dies) chips are the only "real" quad-core CPUs from Intel. There are quad-core Gulftown Xeons, and there will soon be quad-core SNB-E CPUs, but they all have more cores on the actual die; some of them have just been disabled. Comparing the die shots, we notice that our definition of CPU has changed a lot in only five years or so. Kentsfield is a traditional CPU, consisting of processing cores and L2 cache. In 2008, Nehalem moved the memory controller onto the CPU die. In 2009, Lynnfield brought an on-die PCIe controller, which allowed Intel to get rid of the Northbridge-Southbridge combination and replace it with their Platform Controller Hub. A few months later, Westmere (e.g. Arrandale and Clarkdale) brought us on-package graphics--note that it was on-package, not on-die, as the GPU was on a separate die. It wasn't until Sandy Bridge that we got on-die graphics. The SNB graphics occupy roughly 25% of the total die area, or the space of three cores if you prefer to look at it that way, and IVB's graphics (a "tock" on the GPU side, as opposed to a "tick") will occupy even more space.

While we don't have a close-up die shot of Ivy Bridge (yet), we do know its approximate die size and the layout should be similar to the Sandy Bridge die as well. Anand estimated the die size to be around 162mm^2 for what appears to be the quad-core die (dual-core SNB with GT2 is 149mm^2, and even with the more complex IGP we wouldn't expect dual-core IVB to be larger). That's a 25% reduction in the die size when compared with quad-core SNB die (216mm^2). A 22nm quad-core SNB die would measure in at 102mm^2 with perfect scaling and assuming all the logic/architecture is the same; however, scaling is never perfect and we know there are a few new additions to IVB, so 162mm^2 for IVB die sounds right. Transistor wise, IVB counts in at around 1.4 billion, a 20.7% increase over quad-core SNB.
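The scaling arithmetic in the paragraph above is easy to reproduce. The following is a minimal sketch that uses only the figures quoted in the text; the function and variable names are our own, and "perfect scaling" is assumed to mean that both die dimensions shrink by the ratio of the process nodes.

```python
# Die-area arithmetic using the figures quoted in the article.
SNB_QUAD_AREA_MM2 = 216.0   # quad-core Sandy Bridge die
IVB_QUAD_AREA_MM2 = 162.0   # estimated quad-core Ivy Bridge die
OLD_NODE_NM = 32.0
NEW_NODE_NM = 22.0


def ideal_shrink_area(area_mm2, old_nm, new_nm):
    """Area after a perfect shrink: each linear dimension scales by new/old."""
    return area_mm2 * (new_nm / old_nm) ** 2


def percent_reduction(old, new):
    """How much smaller `new` is than `old`, in percent."""
    return (old - new) / old * 100.0


ideal_area = ideal_shrink_area(SNB_QUAD_AREA_MM2, OLD_NODE_NM, NEW_NODE_NM)
actual_reduction = percent_reduction(SNB_QUAD_AREA_MM2, IVB_QUAD_AREA_MM2)

print(f"Perfectly scaled 22nm SNB die: {ideal_area:.0f} mm^2")
print(f"Estimated IVB die vs. SNB die: {actual_reduction:.0f}% smaller")
```

The gap between the ideal figure and the 162mm^2 estimate is the room taken up by imperfect scaling plus the new additions to IVB.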

To the point, today's CPUs have much more than just CPU cores in them. We could easily have had a hex-core 32nm SNB die at the same die size if the graphics and memory controller were not on-die. We've actually got a pretty good reference point with SNB and Gulftown; accounting for the larger L3 cache and extra QPI link, Gulftown checks in at 240mm^2, though TDP is higher than SNB thanks to the extra cores. The same applies to Ivy Bridge. If Intel took away the graphics, or even kept the same die size as SNB, a hex-core would be more or less a given. Instead, Intel has chosen to boost the graphics and decrease the die size.
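As a rough illustration of that trade-off, here is a back-of-the-envelope area budget built from the article's own figures (a 216mm^2 quad-core SNB die, with graphics at roughly 25% of the area or "three cores"). It is only a sketch: the per-core figure is derived from that equivalence, it ignores the extra L3 cache a real hex-core would carry, and it removes only the graphics, not the memory controller.

```python
# Rough area budget derived from the article's figures; not measured data.
SNB_QUAD_AREA_MM2 = 216.0
GRAPHICS_SHARE = 0.25        # graphics are roughly 25% of the die
GRAPHICS_IN_CORES = 3        # "or the space of three cores"

graphics_area = SNB_QUAD_AREA_MM2 * GRAPHICS_SHARE
core_area = graphics_area / GRAPHICS_IN_CORES   # implied area of one core

# Two extra cores with the graphics removed, and with the graphics kept.
hex_without_igp = SNB_QUAD_AREA_MM2 - graphics_area + 2 * core_area
hex_with_igp = SNB_QUAD_AREA_MM2 + 2 * core_area

print(f"Graphics: {graphics_area:.0f} mm^2, one core: ~{core_area:.0f} mm^2")
print(f"Hex-core without graphics: ~{hex_without_igp:.0f} mm^2")
print(f"Hex-core with graphics:    ~{hex_with_igp:.0f} mm^2")
```

Under these assumptions a graphics-less hex-core fits inside the existing die area, while keeping the graphics pushes the die past the quad-core's size.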

Subjectively, this is not a bad decision. Intel needs to increase graphics performance, and will do just that in IVB. Intel's IGP solutions account for over 50% of the PC marketshare, yet the graphics are their Achilles' Heel. Most modern laptops have integrated graphics (though many still opt to go discrete-only or use switchable graphics), and having more CPU cores isn't that useful if your system will be severely handicapped by a weak GPU. We've also shown in numerous articles how hex-core scaling over quad-core is largely unnecessary on desktop workloads (more on this below). Increasing the graphics' EU count and complexity while also adding CPU cores would have led to a larger than ideal die, not to mention the increased complexity and cost. Remember, Moore's Law was more an observation of the ideal size/complexity relationship of microprocessors rather than pure transistor count, and smaller die sizes generally improve yields in addition to being less expensive.
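To see why a smaller die tends to be cheaper and yield better, consider the sketch below. It is not Intel's data: it assumes a 300mm wafer, a common dies-per-wafer approximation with an edge-loss term, a simple Poisson yield model, and a purely hypothetical defect density. Only the two die sizes come from the article.

```python
import math

WAFER_DIAMETER_MM = 300.0   # assumed wafer size
DEFECTS_PER_CM2 = 0.2       # hypothetical defect density, for illustration only


def gross_dies_per_wafer(die_area_mm2, wafer_diameter_mm=WAFER_DIAMETER_MM):
    """Approximate die count: wafer area / die area, minus an edge-loss term."""
    radius = wafer_diameter_mm / 2.0
    whole_wafer = math.pi * radius ** 2 / die_area_mm2
    edge_loss = math.pi * wafer_diameter_mm / math.sqrt(2.0 * die_area_mm2)
    return int(whole_wafer - edge_loss)


def poisson_yield(die_area_mm2, defects_per_cm2=DEFECTS_PER_CM2):
    """Fraction of dies with zero defects under a Poisson defect model."""
    die_area_cm2 = die_area_mm2 / 100.0
    return math.exp(-die_area_cm2 * defects_per_cm2)


def good_dies_per_wafer(die_area_mm2):
    """Expected number of defect-free dies per wafer."""
    return gross_dies_per_wafer(die_area_mm2) * poisson_yield(die_area_mm2)


for name, area in (("162 mm^2 (IVB estimate)", 162.0), ("216 mm^2 (SNB)", 216.0)):
    print(f"{name}: {gross_dies_per_wafer(area)} gross dies, "
          f"{poisson_yield(area):.0%} yield, "
          f"~{good_dies_per_wafer(area):.0f} good dies")
```

The smaller die wins twice: more candidates fit on each wafer, and each candidate is less likely to contain a defect. The absolute numbers depend entirely on the assumed defect density.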

Performance

While six cores is obviously 50% more than four cores, performance doesn't increase in proportion to the core count. More cores put out more heat and hence clock speeds must be lower, unless the TDP is increased. Intel couldn't have achieved the 77W TDP at reasonable clock speeds if Ivy Bridge were hex-core. On top of that, there is still plenty of software that is not fully multithreaded or fails to scale linearly with core count, so you would rarely be using all six cores (plus six more virtual cores thanks to Hyper-Threading). More cores will only help if you can actually use them, while higher frequencies universally improve performance (all other things being equal). We can give some clear examples of this with a few graphs from our Sandy Bridge E review.
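The cores-versus-clocks trade-off can be sketched with Amdahl's law, which is our choice of model here rather than anything taken from the benchmarks. The clock speeds below are hypothetical, picked only to represent a quad-core and a lower-clocked hex-core in the same power envelope, and the model ignores Turbo and Hyper-Threading.

```python
def amdahl_speedup(parallel_fraction, cores):
    """Speedup over one core when only `parallel_fraction` of the work scales."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


def relative_throughput(parallel_fraction, cores, clock_ghz):
    """Crude performance proxy: clock speed times the Amdahl speedup."""
    return clock_ghz * amdahl_speedup(parallel_fraction, cores)


# Hypothetical parts; the clock speeds are illustrative assumptions.
QUAD = {"cores": 4, "clock_ghz": 3.5}
HEX = {"cores": 6, "clock_ghz": 3.0}

for parallel_fraction in (0.5, 0.8, 0.95):
    quad = relative_throughput(parallel_fraction, **QUAD)
    hexa = relative_throughput(parallel_fraction, **HEX)
    winner = "quad-core" if quad > hexa else "hex-core"
    print(f"{parallel_fraction:.0%} parallel: quad {quad:.2f}, "
          f"hex {hexa:.2f} -> {winner} ahead")
```

With these assumed clocks, the quad-core comes out ahead when half the work is serial, and the hex-core only pulls clearly ahead once the workload is almost entirely parallel.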

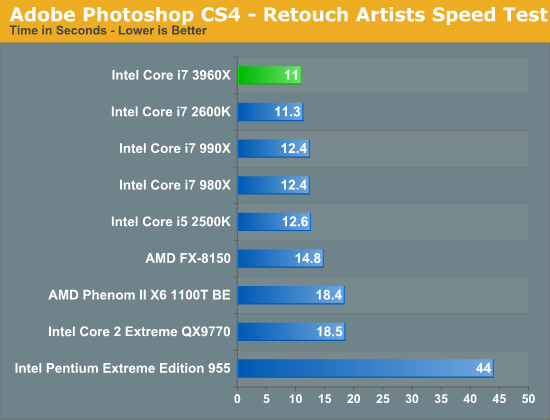

Photoshop is a prime example of software that has limited multithreading. We used the older CS4 in our tests, but CS5 isn't any better, unfortunately. Photoshop can actively take advantage of four threads, and thus the hex-core i7-3960X isn't really faster than quad-core i7-2600K. The slight difference is most likely due to the difference in Turbo (3.9GHz vs 3.8GHz) or the quad-channel vs. dual-channel memory configuration. There are also a few peaks where more than four threads are used, thus i7-2600K is faster than i5-2500K thanks to Hyper-Threading, on top of the extra cache and higher Turbo of course.

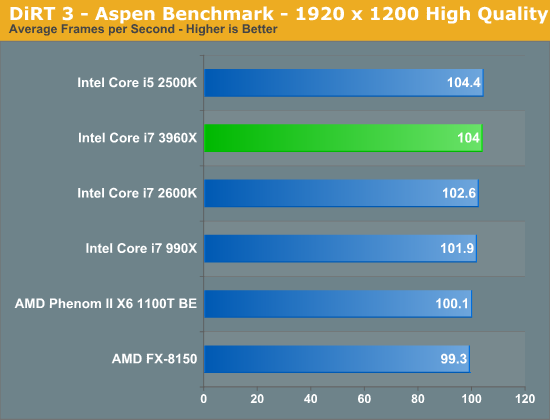

In general, games are poorly multithreaded. DiRT 3 is an example of a typical game engine, and adding more cores and enabling Hyper-Threading actually hurts the performance. There are only a handful of games that benefit from more cores, although there are still obstacles to overcome even then (see below).

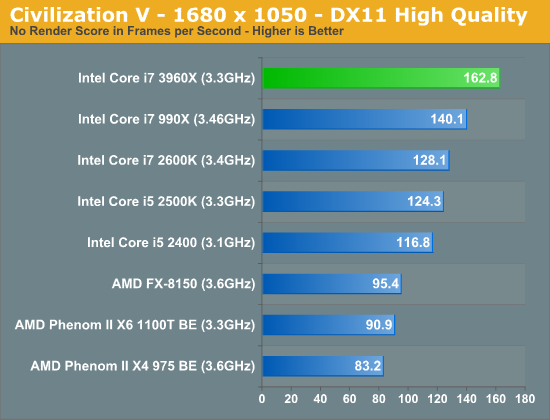

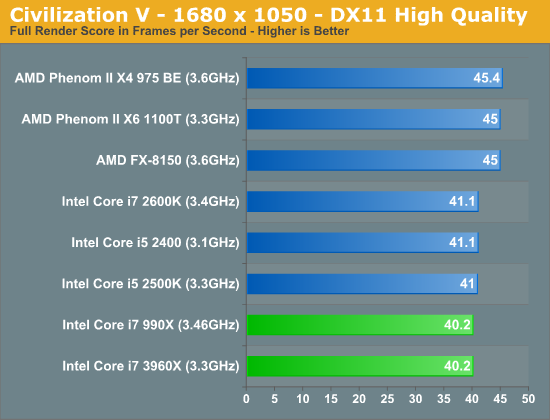

Civilization V fits in the handful of games that can scale across multiple cores. However, you will still be bottlenecked by your GPU in GPU bound scenarios (like in the second graph), which makes the usefulness of more cores questionable in this case. It's irrelevant whether you get 60 or 120 FPS in CPU bound scenarios if the real gaming performance is ultimately bound by your GPU speed.
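The bottleneck argument boils down to taking the minimum of two limits. The sketch below uses made-up frame rates purely for illustration; none of the numbers come from the benchmark graphs.

```python
def delivered_fps(cpu_limit_fps, gpu_limit_fps):
    """The slower of the two stages sets the frame rate you actually see."""
    return min(cpu_limit_fps, gpu_limit_fps)


GPU_LIMIT_FPS = 45.0  # hypothetical GPU-bound ceiling at high settings

# Doubling the CPU-side limit changes nothing while the GPU is the bottleneck.
slower_cpu = delivered_fps(cpu_limit_fps=60.0, gpu_limit_fps=GPU_LIMIT_FPS)
faster_cpu = delivered_fps(cpu_limit_fps=120.0, gpu_limit_fps=GPU_LIMIT_FPS)

print(f"CPU good for 60 FPS:  {slower_cpu:.0f} FPS delivered")
print(f"CPU good for 120 FPS: {faster_cpu:.0f} FPS delivered")
```

Extra cores only show up in the delivered frame rate once the CPU-side limit drops below the GPU-side limit.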

The above graphs are biased in the sense that they are for tests where SNB-E is roughly on-par with regular quad-core SNB. However, keep in mind that we are comparing 130W hex-core and 95W quad-core; a 77W hex-core part might need lower clock speeds and could perform worse in limited-threaded tasks (depending on the Turbo speeds of course). In general, tasks like video encoding, 3D rendering, and archiving scale well with additional cores, but how many consumers run these tasks on a day-to-day basis? If you know you will be doing a lot of CPU intensive work that can benefit from additional cores, SNB-E (and later IVB-E) will always be an option--though you'll give up Quick Sync and the integrated graphics in the process. For most consumers, higher frequencies will likely prove far more useful due to the limited multithreading of everyday applications.

There is also the AMD point of view. Bulldozer hasn't exactly been a success story and there is no real competition in the high-end CPU market because of that. Intel could skip Ivy Bridge altogether and their position at the top of the performance charts would still hold. With no real competition, there's no need to push the performance much higher. Four cores is enough to keep the performance higher than AMD's, and reducing the TDP as a side effect is a big plus, especially when thinking about the future and ARM. As another point of comparison with AMD, look at Llano: it's a quad-core CPU that focuses more on improved graphics. For example, the now rather "old" Lynnfield i5-750 (quad-core, no Hyper-Threading) is able to surpass the CPU performance of Llano, but that hasn't stopped plenty of people from picking up Llano as an inexpensive solution that provides all the performance needed for most tasks.

Wrap-Up

When looking at the big picture, there really aren't any compelling reasons why Intel should have gone with a hex-core design for Ivy Bridge. Just like the Sandy Bridge vs. Gulftown comparison, IVB vs. SNB-E looks like a good use of market segmentation. Sure, some enthusiasts will argue that having a quad-core CPU is so 2007, but don't let the number of cores fool you. The only thing that 2007 and 2012 quad-cores share is the core count; otherwise they are very different animals (see for example i7-2600K vs Q6600). It also appears that even without additional cores or clock speed improvements, Ivy Bridge will be around 15% faster clock for clock than Sandy Bridge (according to Intel's own tests; a deeper performance analysis will come soon).

Increasing the frequencies and boosting the clock for clock performance yields increased performance in every CPU bound task, and improving the quality of the on-die graphics helps in other areas. In contrast, increasing the core count only helps if the software has proper multithreading and can scale to additional cores--both of which are easier said than done. Given all of the possibilities, it would appear that Intel has done the right thing, and in the process there's no need to try to convince consumers that they need more cores than they actually do.

79 Comments

name99 - Tuesday, December 6, 2011

"Thus, instead of hex-core, we get a chip that looks much the same as a year-old Sandy Bridge, only with improved efficiency and some other moderate tweaks to the design. Let's go through some of the elements that influence the design of a new processor, and when we're done we will have hopefully clarified why Ivy Bridge remains a quad-core solution."

The answer to this question is trivial. More cores solves a problem that almost no-one has --- and a few enthusiasts screaming that their usage models can easily work out 10 or 20 or 100 cores is not going to change that. It was fairly easy for dual core CPUs to provide real value to most users --- with modern OSs, there's enough background work of one sort or another that this frequently pays off. Quad core is a much harder sell.

In spite of Intel's work, Apple's GCD work, etc, highly threaded (or even slightly threaded) "core" apps remain rare. The main browsers make little use of more than two cores --- and the only reason they give two cores a workout is through the OS shunting graphics, OS work and some UI (all low CPU load) onto a second core. Launching apps is still too slow (anything where I have to wait is "too slow") but, as far as I know, dyload fixups are single threaded. iTunes (which yes, I know, is the crappiest "major app" in existence) resolutely uses only one core, even though parts of it appear to be multi-threaded.

Given this situation, dual core with hyperthreading is good enough for almost every user. And it's going to remain that way until the major browsers become more threaded, iTunes becomes more threaded, Intel's supposed "run a separate thread to pre-warm caches" technology ever becomes real, etc etc.

This is a fact. I run Mathematica, so would consider myself a power user, and I'll be getting a hyperthreaded quadcore Ivy Bridge iMac next year, but I fully expect that 99% of the time it will have 2 threads or less active --- even Mathematica will only exercise eight threads for rare operations.

So it makes NO sense for Intel to spend effort on CPUs with more cores. Far more sensible is to concentrate on

- single threaded performance (tough, and you can't fault them for the work they have done so far)

- power usage (again tough, again they've been doing a really good job --- though one suspects there is room for a BIG.little strategy on their CPUs --- essentially the equivalent of turbo-ing down)

- special purpose hardware that can solve real problems. This is harder in their world than in the phone/tablet world because on phones/tablets one expects that only a single app will use this special hardware, that background apps will shut down, etc --- whatever is most convenient to get the feature to work. We have some of this with AES and QuickSync.

Even so --- if they had a low-power CPU on board that could run the background OS stuff, plus dedicated HW to play movies and music, would that help use cases like light web browsing or email while listening to music, or full screen movie viewing?

- I suspect there is scope for Intel to conserve power outside the CPU in RAM. One could imagine the memory controller (more or less in conjunction with the OS) being allowed to decide that only one (of two) or 1, 2, 3 (of 4) memory DIMMs really needed to be powered up, given the current active working set, and so shutting the other down.

The basic issue is --- expending transistors on what people can't use is foolish. People want

- single threaded performance

- low power

Spend transistors on THOSE.

hardrock_ram - Tuesday, December 6, 2011

This of course makes perfect sense. Besides, if Intel adds more cores to their desktop CPUs, they have to add even more in their Xeon lineup. Many people, including myself, build workstations with Xeon and Opteron partly to get cores. We pay a premium that Intel will lose otherwise. Xeons obviously have advantages beyond cores, but people like me, who use them like a generic network node in 3D rendering (not critical) might be inclined to buy desktop counterparts if they would offer the same amount of "power".

Just my 100 dollars ...

bigboxes - Tuesday, December 6, 2011

I've been waiting until Ivy Bridge to upgrade my Nehalem processor. I really wanted to go to a hex-core processor, but wanted to do so with Ivy Bridge. Man, I wish AMD was more competitive with Intel as they are with nVidia.

charleski - Tuesday, December 6, 2011

Everything I see about IVB makes me think that this design is really targeted at laptops. Lower TDP and improved IGP make it very desirable for power-constrained situations, but doesn't really offer anything for the desktop. The only non-mobile systems that would benefit at all would be HTPCs.

Laptops are, of course, a massive market that dwarfs the enthusiast power/speed-hungry segment, so I can't blame Intel for this, especially when SNB will be trouncing AMD for performance for the foreseeable future. But I think it's clear that if you're building a desktop there's very little point waiting around for IVB since Intel isn't planning any major jumps in performance for this sector over the next 18 months.

iollmann - Wednesday, January 4, 2012

Desktops are dead, man! They just don't know it yet. Desktops are being disrupted by portables which are in turn being disrupted by post-PC devices. Once the economies of scale drop off, it is good bye big iron.

It's probably for the best. Too much power is being wasted in our global obsession with computing. There is no reason to have a 500W space heater under your desk when a new 35W machine is just as good.

dealcorn - Wednesday, December 7, 2011

Prior to convicting Intel of some sort of core deficiency at 22nm, it may be helpful to see what MIC brings to the table. Is MIC helpful in video trans-coding workloads, for example?

Dribble - Wednesday, December 7, 2011

The IB quads look barely faster than the SB ones. The one reason for producing a hex core would be to give us SB owners a good reason to upgrade, and hence spend money on Intel products.

Death666Angel - Wednesday, December 7, 2011

Any idea how useful the IGP of IVB will be for desktop gamers? Will there finally be a reliable technology that will let us disable the dGPU in normal workloads or use the IGP for QuickSync....?

Wolfpup - Thursday, January 5, 2012

Ugh. Enough for at least another core and some cache. At best that sits idle. At worst you're stuck with "switchable" graphics nonsense.

*sigh*

It does give AMD a better shot-if Intel's wasting that much die area, AMD can sell something with the same useful number of transistors for less, or pocket more money, or put more transistors towards useful work, etc.

I mean geez... 16 PCIe lanes? All those transistors wasted on Intel video? I hate how they're trying to shove that down our throats.

Heck, my G74 notebook counts towards Intel's video sales figures, I suppose...