Intel Haswell Info: Single Chip for Ultrabooks, GT3 GPU for Mobile, LGA-1150 for Desktop

by Anand Lal Shimpi on November 9, 2011 5:37 PM EST

VR-Zone spotted a bunch of very interesting slides about Haswell over at Chiphell. The slides and information both look fairly believable, so let's get on with the analyzing, shall we?

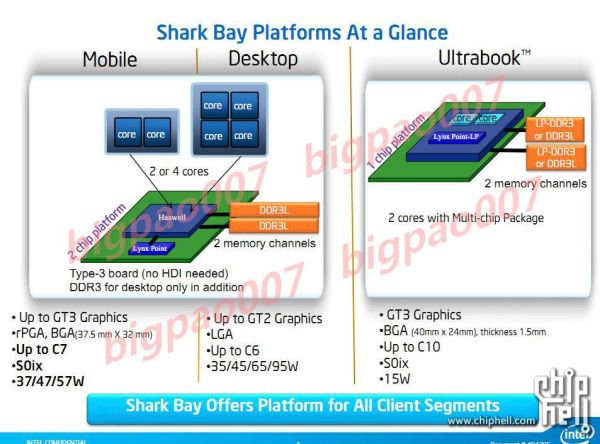

Haswell is Intel's next tock, meaning it's a brand new architecture. Haswell will debut sometime in 2013 on Intel's 22nm process, first introduced with Ivy Bridge at the beginning of 2012. The information on Haswell spans three major platforms: notebooks, desktops and Ultrabooks.

Integration

Haswell for Ultrabooks will be available in a 15W TDP, similar to where SNB based Ultrabooks are today. The big news here is Intel will move the PCH (Platform Controller Hub) onto the same package as the CPU, making the Ultrabook version of Haswell a single chip solution. With Sandy Bridge you needed two parts from Intel, the CPU and the PCH; with Haswell you only need the Haswell MCP (multi-chip package). That's two individual die on a single package, often the precursor to outright die integration (perhaps at 14nm?). The combined MCP should require a smaller footprint than the CPU + PCH arrangement we have today, allowing for less cramped (or smaller) motherboards and potentially even larger batteries in Ultrabooks. This is a huge move as it really starts to blur the line between Ultrabook and tablet hardware.

While Haswell for Ultrabooks tops out at two cores, you can get 2 or 4 core versions in notebooks and desktops.

Faster Graphics

Both the Ultrabook and notebook Haswell platforms will feature one of three different on-die GPU configurations: GT1, GT2 or GT3. Desktop Haswell will only be offered (as of now) in GT1 or GT2 configurations. No word on the differences between each configuration, but the fact that there are three in Haswell (vs 2 in SNB/IVB) indicates Intel may be exploring an ultra high performance GPU option to further encroach on discrete mobile GPU territory. An even higher performance GPU option for Haswell is something we hinted at in our Ivy Bridge architecture discussion.

Lower Power Memory & A New Socket

The list of memory support is also fairly power optimized. All three Haswell targets will support DDR3L, while the desktop version can use regular DDR3 and the Ultrabook version can use LPDDR3. All three implementations feature two memory channels.

It's important to note that despite Haswell's retarget to focus on 10 - 20W TDPs, the architecture appears to be capable of scaling nearly as high as Sandy Bridge (95W desktop parts will be available, although TDPs may not be directly comparable). This makes sense given that a single architecture can usually span an order of magnitude of TDPs without losing its edge.

Other Haswell features include integrated voltage regulators (should simplify things on the motherboard side), AVX 2.0 instruction support and of course things like AES-NI and Hyper Threading. Haswell will require a new socket: LGA-1150 for desktops.

Final Words

Nothing here is really all that surprising. We knew that faster integrated graphics was coming, but I am curious to see just how powerful this GT3 option will be. The true test is whether or not it will be enough to steer customers like Apple away from including a discrete GPU in their 15-inch MacBook Pro, for example. From what I've heard, much of Intel's integrated graphics roadmap has been strongly "encouraged" by Apple.

The move to a single-chip solution for a high-end x86 CPU is a pretty significant step. That line between tablets and notebooks is going to become mighty blurry come 2013.

36 Comments

GreenEnergy - Wednesday, November 9, 2011 - link

http://www.anandtech.com/show/1770

Kinda funny to see. With Haswell it all happened, and beyond with the mobile CPUs.

It's a huge amount of real estate on motherboards that is integrated and removed to reduce footprint and power consumption, while boosting performance.

Anand Lal Shimpi - Wednesday, November 9, 2011 - link

+1000 for remembering this :)

Filiprino - Wednesday, November 9, 2011 - link

FFFFFFUUUU Intel. 4 cores, 4 cores. 4 cores!!

We want moar cores. Me hungry. Cores come to me. In big quantities. Now.

At least, for the mainstream, they should up the number to 6 cores. For the enthusiast platform, 8 or 10 cores is out of question.

eddman - Wednesday, November 9, 2011 - link

Why? Developers can't even write proper programs that can take full advantage of current quads, or maybe they are lazy.

Besides, not every kind of software can benefit from more cores. Read this:

http://en.wikipedia.org/wiki/Amdahl's_law

I personally think more than 4 cores for desktops is just a waste of transistors.
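For reference, the law linked above can be sketched in a few lines of Python. This is only an illustration: the function name `amdahl_speedup` and the parallel fractions used (50% and 90%) are assumptions chosen for the example, not measurements of any real program or of Haswell.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Theoretical speedup from Amdahl's law.

    parallel_fraction: share of the runtime that can be parallelized (0.0 to 1.0).
    cores: number of cores working on the parallel part.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    # The serial part always takes the same time; only the parallel part shrinks.
    return 1.0 / (serial_fraction + parallel_fraction / cores)


if __name__ == "__main__":
    # Hypothetical workloads: half parallel vs. mostly parallel.
    for fraction in (0.5, 0.9):
        for cores in (2, 4, 6, 8):
            speedup = amdahl_speedup(fraction, cores)
            print(f"parallel={fraction:.0%} cores={cores}: {speedup:.2f}x")
```

Under these assumed fractions, a program that is only half parallel gains little from going beyond four cores, while one that is 90% parallel keeps scaling noticeably, which is roughly the disagreement in this thread.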

nofumble62 - Thursday, November 10, 2011 - link

otherwise they are just marketing number like you find in the Bulldozy

Filiprino - Thursday, November 10, 2011 - link

Of course, not every software can get benefits from parallelization, but I know how to get benefit out of it.

What Intel is doing now is a waste of silicon. Ask the people who have bought Core i7 2X00K. Wow, Integrated graphics on an overclockable processor is so useful. People who buy those procs have a discrete card.

Heavy multitasking, rendering, encoding, virtualization and games are software that gets or will get benefit from added cores.

Come on, we are talking about Haswell and its refresh (2013-2014). Today's DirectX 11 can do multithreaded rendering. Imagine what level of efficiencies and perfection DirectX 12 will get on that matter. In a time not so distant in the future we will start seeing games using multiple threads for rendering different parts of a scene. With one GPU it will be possible, and if you add more GPUs the possibilities or options increase, like you could have one GPU rendering some objects and the other GPU rendering other ones, having the load distributed with simple DirectX directives if you want, instead of the current model where the driver has to do all the work of taking load into account and splitting the frame in a not so granular way that also presents issues with games (scalability issues or erratic behaviour).

The drivers should provide unified virtual memory, something that's already being worked on and for example Radeon HD 7000 series will have a unified memory model, making it easier to share data between GPUs and main memory. In two years that will be improved to the right level.

The key is to get graphics multiGPU threading/processing in the same way today you get normal application multiprocessing and multithreading.

moozoo - Wednesday, November 9, 2011 - link

I used to think that way. The future was more big cores. No, the future is with custom accelerators (video encoding/decoding) and unified shader GPU "cores" that accelerate compute and graphics.

Filiprino - Thursday, November 10, 2011 - link

You also need more cores to control those accelerators.

Roland00Address - Wednesday, November 9, 2011 - link

The socket they are referring to is the mainstream socket with 1150 pins. It is only going to be 4 cores; who knows how many cores will be on the enthusiast platform.

frozentundra123456 - Thursday, November 10, 2011 - link

I agree. Even though not many applications now use more than 4 cores, we are talking almost 2 years before the platform comes out. Then if you expect the computer to last 2 to 4 years, we are looking at perhaps 6 years to the end of use of one of these chips. I can't believe that at least 6 cores won't be needed by then for the mainstream. When dual cores first came out, people said there was not enough software for them; now we are in the same place with 4 or 6 cores.

Maybe AMD's moar cores will allow them to catch up eventually. I don't think Intel should rely on superior architecture and IPC to the exclusion of increasing cores eventually.