Apple iPhone 4S: Thoroughly Reviewed

by Anand Lal Shimpi & Brian Klug on October 31, 2011 7:45 PM EST

Posted in: Smartphones, Apple, Mobile, iPhone, iPhone 4S

Final Words

Putting out a new chassis design, whether large or small, requires a ton of resources and effort. There are upfront design, tooling, prototyping and manufacturing costs that have to be recouped over the life of the product. The newer the product, the less likely Apple is to re-use its design. We saw this with the first generation iPhone and Apple TV, both of which saw completely new designs in their second incarnations. Have a look at Apple’s more mature product lines and you’ll see a much longer design lifespan. The MacBook Pro is going on three years since a major redesign and the Mac Pro is even longer at four (six if you count the Power Mac G5 as an early rev of the design). Apple uses design as a competitive advantage. In markets where it feels more confident or less driven to compete, designs are allowed to live on for longer, improving the bottom line but removing one reason to upgrade. In the most competitive markets, however, Apple definitely leans on a rapidly evolving design as a strength. The iPhone is no exception to this rule.

The evolution of iPhone (Left to right: iPhone 4S, iPhone 4, iPhone 3GS, iPhone 1)

Thus far Apple has shown that it’s willing to commit to a 2-year design cycle with the iPhone. I would go as far as to say that from a design standpoint, Apple isn’t terribly pressured to evolve any quicker. There are physical limits to device thickness if you’re concerned with increasing performance and functionality. Remember, the MacBook Air only happened once Moore’s Law gave us fast-enough CPUs at the high-end that we could begin to scale back TDP for the mainstream. Smartphones are nowhere near that point yet. The iPhone 4S, as a result, is another stop along the journey to greater performance. So how does it fare?

The original iPhone 4 design was flawed. Although Apple downplayed the issue publicly, it solved the deathgrip antenna problem with the CDMA iPhone 4. The iPhone 4S brings that fix to everyone. If you don’t remain stationary with your phone in an area with good coverage, the dual-chain antenna diversity introduced with the iPhone 4S is a tangible and significant improvement over the previous GSM iPhone 4. In North Raleigh, AT&T’s coverage is a bit on the sparse side. I get signal pretty much everywhere, but the quality of that signal isn’t all that great. The RSSI at my desk is never any better than -87dBm, and is more consistently around -94dBm. Go down to my basement and the best you’ll see is -112dBm, and you’re more likely to see numbers as low as -130dBm thanks to some concrete walls and iron beams. The iPhone 4’s more sensitive cellular stack made it possible to receive phone calls and text messages down there, although I couldn’t really carry on a conversation, particularly if I held the phone the wrong way. By comparison, the iPhone 3GS could not do any of that. The iPhone 4S’ antenna diversity makes it so that I can actually hold a conversation down there or pull ~1Mbps downstream despite the poor signal strength. This is a definite improvement in the one area that is rarely discussed in phone reviews: the ability to receive and transmit a cellular signal. The iPhone 4 already had one of the most sensitive cellular stacks of any smartphone we’d reviewed; the 4S simply makes it better.
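To put those RSSI readings in perspective: dBm is a logarithmic unit, so every 10 dB drop means a tenfold loss in received power. The Python sketch below converts the figures quoted above into power ratios. The function names are our own and purely illustrative, not part of any test tool used for this review.

```python
def dbm_to_milliwatts(dbm: float) -> float:
    """Convert a power level in dBm to milliwatts (0 dBm equals 1 mW)."""
    return 10 ** (dbm / 10)


def power_ratio(stronger_dbm: float, weaker_dbm: float) -> float:
    """Return how many times more power the stronger signal carries."""
    return 10 ** ((stronger_dbm - weaker_dbm) / 10)


# RSSI readings quoted in the review
desk_best_dbm = -87
desk_typical_dbm = -94
basement_best_dbm = -112

best_case_gap = power_ratio(desk_best_dbm, basement_best_dbm)
typical_gap = power_ratio(desk_typical_dbm, basement_best_dbm)

print(f"Best desk reading vs best basement reading: {best_case_gap:.0f}x the power")
print(f"Typical desk reading vs best basement reading: {typical_gap:.0f}x the power")
```

In other words, even the best basement reading carries only a few thousandths of the power available at the desk, which is why receiver sensitivity and antenna diversity matter so much at the edge of coverage.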

Performance at the edge of reception is not the only thing that’s improved. If you’re on an HSPA+ network (e.g. AT&T), overall data speeds have shifted upwards. As our Speedtest histograms showed, the iPhone 4S is about 20% faster than the 4 in downstream tests. Best case scenario performance went up significantly as a result of the move to support HSPA+ 14.4. While the iPhone 4 would top out at around 6Mbps, the 4S is good for nearly 10Mbps. We’re still not near LTE speeds, but the 4S does make things better across the spectrum regardless of cellular condition.

The improvements don’t stop at the radio: Apple significantly upgraded the camera on the 4S. It’s not just about pixel count, although the move to 8MP does bring Apple up to speed there; overall quality is improved. The auto white balance is much better than the 4’s, equalling the Samsung Galaxy S 2 and setting another benchmark for the rest of the competition to live up to. Sharpness remains unmatched by any of the other phones we’ve reviewed thus far, whether in the iOS or Android camp. Performance outside of image quality has also seen a boost. The camera launches and fires off shots much quicker than its predecessor.

Our only complaint about the camera has to do with video. Apple is using bitrate rather than more complex encoding schemes to deliver better overall image quality when it comes to video. The overall result is good, but file sizes are larger than they would have been had Apple implemented hardware support for High Profile H.264.

Then there’s the A5 SoC. When we first met the A5 in the iPad 2 it was almost impossible to imagine that level of performance, particularly on the GPU side, in a smartphone. As I hope we’ve proven through our analysis of both the solution and its lineage, Apple is very committed to the performance race in its iOS devices. Apple more than doubled the die size going from the A4 to the A5 (~53mm^2 to ~122mm^2) on the same manufacturing process. Note that in the process Apple didn’t integrate any new functionality onto the SoC; the additional transistors were purely for performance. To be honest, I don’t expect the pursuit to slow down anytime soon.
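As a quick sanity check on the "more than doubled" claim, the arithmetic can be sketched in a few lines of Python. The two die areas are the approximate figures quoted above; the variable names are ours.

```python
# Approximate die areas quoted in the review (same manufacturing process)
a4_area_mm2 = 53.0
a5_area_mm2 = 122.0

growth_factor = a5_area_mm2 / a4_area_mm2   # how many times larger the A5 is
added_area_mm2 = a5_area_mm2 - a4_area_mm2  # silicon spent purely on performance

print(f"A5 is about {growth_factor:.1f}x the size of the A4")
print(f"Roughly {added_area_mm2:.0f} mm^2 of additional die area")
```

Because both chips are built on the same process, the extra area translates fairly directly into extra transistors, which is what makes the comparison meaningful.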

The gains in CPU and GPU speed aren’t simply academic. The 4S is noticeably faster than its predecessor and finally comparable in its weakest areas to modern day Android smartphones. In the past, iOS could guarantee a smooth user experience but application response and web page loading times were quickly falling behind the latest wave of dual-core Android phones. The 4S brings the iPhone back up to speed.

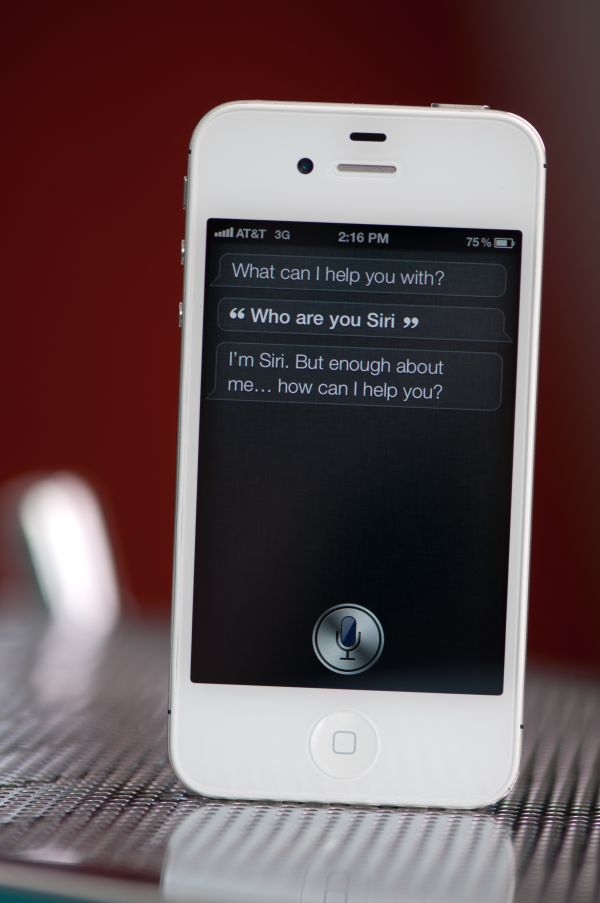

On the software side, there’s Siri. The technology is a nod to decades of science fiction where users talk to an omnipotent computer that carries out complex calculations and offers impartial, well educated advice when needed. In practice, Siri is nowhere close to that. Through an admittedly expansive database of patterns, Siri is able to give the appearance of understanding and depth. That alone is enough to convince many mainstream consumers. The abstraction of Wolfram Alpha alone is a significant feature, as I’m not sure how many out-of-the-loop smartphone users would begin to use it as a tool had it not been for Siri. But what about power users: is Siri a game changer?

There are a few areas where Siri does improve user experience. Making appointments and setting alarms are very natural and quite convenient thanks to Siri. There’s still the awkwardness of giving your phone verbal commands, but if no one is looking I find that it’s quicker to deal with calendar stuff via Siri than by manually typing it in. Setting alarms via Siri actually offers an accuracy benefit as well. Whereas I’ve all too frequently set an alarm for 7PM instead of 7AM because I didn’t definitively swipe the day/night roller, Siri doesn’t let me make that mistake. Searching for restaurants or figuring out how much to tip are nice additions as well.

Text dictation is a neat feature for sure, but to be honest I’m still not likely to rely on it for sending or replying to messages. It’s convenient while driving but the accuracy isn’t high enough to trust it with sending messages to important contacts.

Siri is a welcome addition, but not a life changer. As Apple continues to expand Siri’s database and throws more compute at the problem (both locally on the phone and remotely in iCloud), we’ll hopefully see the technology mature into something more like what years of science fiction movies have promised us.

From a hardware perspective, the iPhone 4S is a great upgrade to the iPhone 4. If the 4 was your daily driver, despite the lack of physical differences, the 4S is a noticeable upgrade. While not quite the speed improvement we saw when going from the iPhone 3G to the 3GS, the 4S addresses almost every weakness of the iPhone 4.

The biggest issue is timing one’s upgrade. History (and common sense) alone tell us that in about 12 months we’ll see another iPhone. If you own an iPhone 4 and typically upgrade yearly, the 4S is a no-brainer. If you want to keep your next phone for two years, I’d wait until next year when it’s possible you’ll see a Cortex A15 based iPhone from Apple with Qualcomm’s MDM9615 (or similar) LTE modem. The move to 28/32nm should keep power in check while allowing for much better performance.

If you own anything older than an iPhone 4 (e.g. 2G/3G/3GS), upgrading to the 4S today is a much more tempting option. The slower Cortex A8 is pretty long in the tooth by now and anything older than that is ARM11 based, which I was ready to abandon two years ago.

199 Comments

doobydoo - Friday, December 2, 2011 - link

It's still absolute nonsense to claim that the iPhone 4S can only use '2x' the power when it has 7x available.

Not only does the iPhone 4S support wireless streaming to TVs, making performance very important, there are also games ALREADY out which require this kind of GPU in order to run fast on the superior resolution of the iPhone 4S.

Not only that, but you failed to take into account the typical life-cycle of iPhones - this phone has to be capable of performing well for around a year.

The bottom line is that Apple really got one over all Android manufacturers with the GPU in the iPhone 4S - it's the best there is, in any phone, full stop. Trying to turn that into a criticism is outrageous.

PeteH - Tuesday, November 1, 2011 - link

Actually it is about the architecture. How GPU performance scales with size is in large part dictated by the GPU architecture, and Imagination's architecture scales better than the other solutions.

loganin - Tuesday, November 1, 2011 - link

And I showed above that Apple's chip isn't larger than Samsung's.

PeteH - Tuesday, November 1, 2011 - link

But chip size isn't relevant, only GPU size is.

All I'm pointing out is that not all GPU architectures scale equivalently with size.

loganin - Tuesday, November 1, 2011 - link

But you're comparing two different architectures here, not two carrying the same architecture, so the scalability doesn't really matter. Also, is Samsung's GPU significantly smaller than the A5's?

Now that we've gone back and forth about nothing, you can see the problem with Lucian's argument. It was simply an attempt to make Apple look bad and the technical correctness didn't really matter.

PeteH - Tuesday, November 1, 2011 - link

What I'm saying is that Lucian's assertion, that the A5's GPU is faster because it's bigger, ignores the fact that not all GPU architectures scale the same way with size. A GPU of the same size but with a different architecture would have worse performance because of this.

Put simply, architecture matters. You can't just throw silicon at a performance problem to fix it.

metafor - Tuesday, November 1, 2011 - link

Well, you can. But it might be more efficient not to. At least with GPUs, putting two in there will pretty much double your performance on GPU-limited tasks.

This is true of desktops (SLI) as well as mobile.

Certain architectures are more area-efficient. But the point is, if all you care about is performance and can eat the die-area, you can just shove another GPU in there.

The same can't be said of CPU tasks, for example.

PeteH - Tuesday, November 1, 2011 - link

I should have been clearer. You can always throw area at the problem, but the architecture dictates how much area is needed to add the desired performance, even on GPUs.

Compare the GeForce and the SGX architectures. The GeForce provides an equal number of vertex and pixel shader cores, and thus can only achieve theoretical maximum performance if it gets an even mix of vertex and pixel shader operations. The SGX on the other hand provides general purpose cores that can do either vertex or pixel shader operations.

This means that as the SGX adds cores its performance scales linearly under all scenarios, while the GeForce (which adds a vertex and a pixel shader core as a pair) gains only half the benefit under some conditions. Put simply, if a GeForce core is limited by the number of pixel shader cores available, the addition of a vertex shader core adds no benefit.

Throwing enough core pairs onto silicon will give you the performance you need, but not as efficiently as general purpose cores would. Of course a general purpose core architecture will be bigger, but that's a separate discussion.

metafor - Tuesday, November 1, 2011 - link

I think you need to check your math. If you double the number of cores in a GeForce, you'll still gain 2x the relative performance.

Double is a multiplier, not an adder.

If a task was vertex-shader bound before, doubling the number of vertex-shaders (which comes with doubling the number of cores) will improve performance by 100%.

Of course, in the case of 543MP2, we're not just talking about doubling computational cores.

It's literally 2 GPUs (I don't think much is shared, maybe the various caches).

Think SLI but on silicon.

If you put 2 GeForce GPUs on a single die, the effect will be the same: double the performance for double the area.

Architecture dictates the perf/GPU. That doesn't mean you can't simply double it at any time to get double the performance.

PeteH - Tuesday, November 1, 2011 - link

But I'm not talking about relative performance, I'm talking about performance per unit area added. When bound by one operation, adding a core that supports a different operation is wasted space.

So yes, doubling space always doubles relative performance, but adding 20 square millimeters means different things to the performance of different architectures.
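Both positions in this exchange can be illustrated with a toy throughput model. The Python sketch below is only that: it assumes vertex and pixel work overlap perfectly, that every core does one unit of work per unit time, and that a unified core is no slower than a dedicated one. None of those assumptions come from real GeForce or SGX specifications, and the function names are hypothetical.

```python
def fixed_frame_time(vertex_work, pixel_work, vertex_cores, pixel_cores):
    """Frame time with dedicated cores: the slower stage is the bottleneck."""
    return max(vertex_work / vertex_cores, pixel_work / pixel_cores)


def unified_frame_time(vertex_work, pixel_work, cores):
    """Frame time with general purpose cores: all work is shared evenly."""
    return (vertex_work + pixel_work) / cores


# A pixel-heavy workload in arbitrary units (illustrative numbers only)
vertex_work, pixel_work = 1.0, 3.0

base = fixed_frame_time(vertex_work, pixel_work, 2, 2)
doubled = fixed_frame_time(vertex_work, pixel_work, 4, 4)
extra_vertex = fixed_frame_time(vertex_work, pixel_work, 3, 2)
unified = unified_frame_time(vertex_work, pixel_work, 4)

print(f"Doubling every dedicated core: {base / doubled:.1f}x faster")
print(f"Adding one vertex core while pixel-bound: {base / extra_vertex:.1f}x faster")
print(f"Four unified cores vs 2+2 dedicated cores: {base / unified:.1f}x faster")
```

Under these assumptions, doubling the whole GPU does double performance, as metafor says, while a core added for the non-limiting stage contributes nothing, as PeteH says. How much area each kind of core actually costs is outside the model.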