Apple iPhone 4S: Thoroughly Reviewed

by Anand Lal Shimpi & Brian Klug on October 31, 2011 7:45 PM EST - Posted in

- Smartphones

- Apple

- Mobile

- iPhone

- iPhone 4S

Display

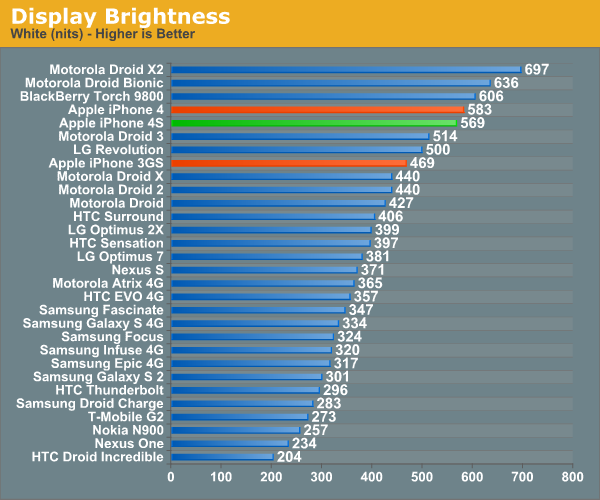

Though many expected Apple to redesign everything around a 4" display, the display on the 4S is, at least superficially, identical. The 4S has a display of the same size and resolution as the 4: a 3.54" IPS panel at 960x640. We’ve been over this a few times already in the context of the iPhone 4 and the CDMA iPhone 4, but it bears going over again.
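Pixel density follows directly from resolution and diagonal size. A minimal sketch, assuming square pixels and using the 3.54" diagonal quoted above; the 4" case is only a hypothetical comparison at the same resolution:

```python
import math

def pixels_per_inch(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Return pixel density, assuming square pixels."""
    # Length of the panel diagonal measured in pixels (Pythagoras)
    diagonal_px = math.hypot(width_px, height_px)
    return diagonal_px / diagonal_in

print(f"{pixels_per_inch(960, 640, 3.54):.0f} ppi")  # 326 ppi
# Hypothetical: the same 960x640 stretched over a 4" diagonal
print(f"{pixels_per_inch(960, 640, 4.0):.0f} ppi")   # 288 ppi
```

The first result lines up with the 326 ppi figure Apple quotes for the retina display.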

In retrospect, moving up to 4" would’ve gone against Apple’s logical approach to maintaining a DPI-agnostic iOS, and it makes sense to spread the cost of changing display resolution across two generations, which is what we see now. While Android is gradually catching up in the DPI department, OEMs on that side of the fence are engaged in a seemingly endless battle over display size. You have to get into Apple’s head and understand that from their point of view, 3.5" has always been the perfect size; there’s a reason it hasn’t changed at all.
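One way to read "DPI-agnostic": iOS apps lay out in points, and a per-screen scale factor maps points to physical pixels. The 320x480-point size and the 1.0/2.0 scale factors reflect how iOS absorbed the iPhone 4's doubling of resolution; the Python below only models that mapping and is not Apple's API (on device, the factor is exposed through `UIScreen`'s `scale` property).

```python
from typing import NamedTuple

class Size(NamedTuple):
    width: float
    height: float

# Logical layout size shared by the 3.5" iPhones, in points (portrait)
IPHONE_POINTS = Size(320, 480)

def to_pixels(points: Size, scale: float) -> Size:
    """Map a logical size in points to physical pixels for a given scale factor."""
    return Size(points.width * scale, points.height * scale)

print(to_pixels(IPHONE_POINTS, 1.0))  # pre-retina: Size(width=320.0, height=480.0)
print(to_pixels(IPHONE_POINTS, 2.0))  # retina: Size(width=640.0, height=960.0)
```

Because the retina panel exactly doubled each dimension, existing layouts carried over unchanged. A panel with a different aspect ratio would change the point size itself, which a scale factor alone cannot hide from apps.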

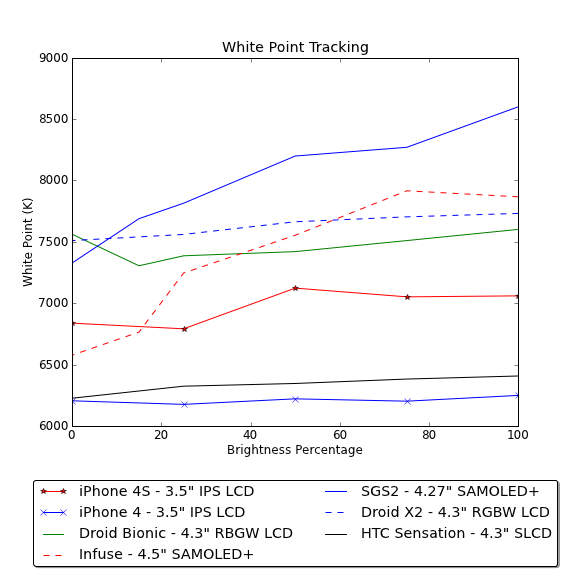

I’ve been through a few iPhone 4 units myself, and alongside the CDMA iPhone 4, have seen the white point of the retina display gradually shift over time. While I don’t have that original device anymore, even now the 4S seems to have shifted slightly compared to a very recently manufactured 4 I had on hand, and appears to have a different color temperature. We’ve been measuring brightness and white point on smartphone displays at a variety of brightness settings, and the 4S isn’t spared the treatment. I also tossed in my 4 for comparison. The data really speaks for itself.

The first chart shows white point at a number of brightness values set in Settings. You can see that the iPhone 4 and 4S differ and straddle opposite sides of 6500K. I would bet that Apple has some ± tolerance around 6500K for these displays, and the result is what you see here. Thankfully the lines are pretty straight (so white point doesn’t change as you vary brightness), but this unit-to-unit variance is why you see people noting that one display looks warmer or cooler than another. I noted this behavior with the CDMA iPhone 4, and suspect that people still carrying launch GSM/UMTS iPhone 4 devices will perceive the difference more than those who have had their devices swapped.
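A colorimeter reports white point as CIE 1931 xy chromaticity, which is then converted to a correlated color temperature. We don't know which conversion was used for these charts; the sketch below swaps in McCamy's widely used cubic approximation, which is reasonably accurate near the daylight range. The ±300K tolerance is a purely hypothetical stand-in for the tolerance speculated about above.

```python
def mccamy_cct(x: float, y: float) -> float:
    """Approximate correlated color temperature (K) from CIE 1931 xy chromaticity."""
    # McCamy's approximation, referenced to the epicenter (0.3320, 0.1858)
    n = (x - 0.3320) / (0.1858 - y)
    return 449 * n**3 + 3525 * n**2 + 6823.3 * n + 5520.33

def within_tolerance(cct: float, target: float = 6500.0, tol: float = 300.0) -> bool:
    """Hypothetical pass/fail check; the 300 K tolerance is an assumed value."""
    return abs(cct - target) <= tol

d65 = mccamy_cct(0.3127, 0.3290)  # chromaticity of the D65 white point
print(round(d65), within_tolerance(d65))
```

D65 comes out at roughly 6500K. Two panels that both pass such a check can still sit on opposite sides of the target, which is exactly the warmer/cooler split seen between the 4 and 4S.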

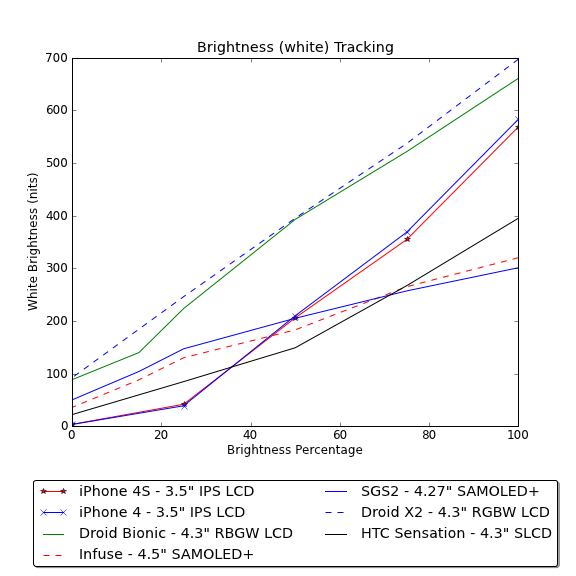

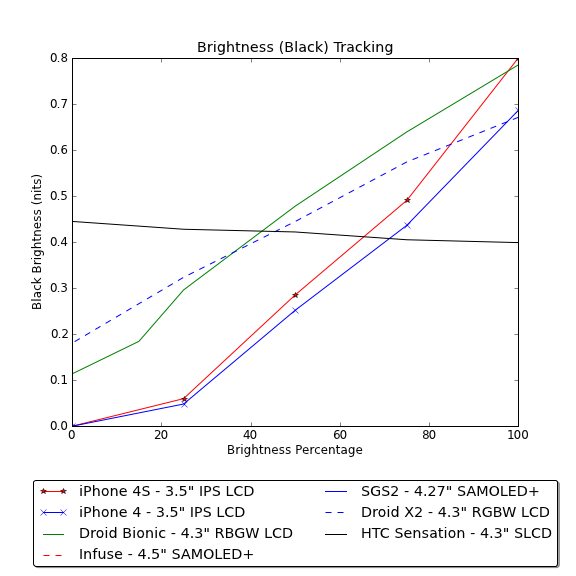

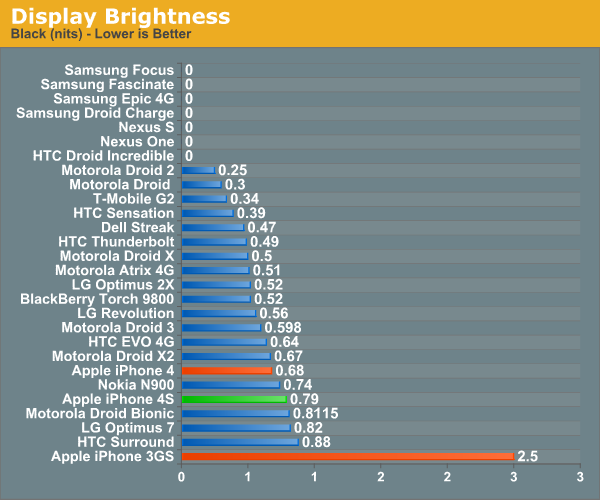

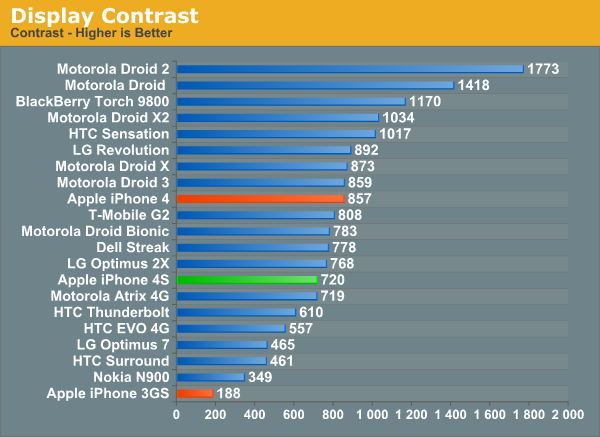

The next two charts show display brightness at various settings for solid black and white on the display.

The 4S and 4 displays follow roughly the same curve; however, there is a definite drop in contrast resulting from higher black levels on the 4S display. I’ve seen a few anecdotal accounts of the 4S display being less contrasty, and again this is the kind of shift that unfortunately happens over time with displays. I’ve updated our iPhone 4 result on the graph with the latest of the several units I’ve been through.

Unfortunately the 4S falls short of the quoted 800:1 contrast ratio, whereas the 4 previously exceeded it comfortably (the earliest 4 we saw measured 951:1). Rumor has it that Apple has approved more panel vendors to make the retina display, and I have no doubt that we’re seeing these changes in performance as a result of multiple sourcing.
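Static contrast is simply full-screen white luminance divided by full-screen black luminance at the same brightness setting, which is why a higher black level pulls the ratio down even when the white curves match. A minimal sketch; the 400-nit white level is an illustrative number, not a measurement from these charts:

```python
def contrast_ratio(white_nits: float, black_nits: float) -> float:
    """Static contrast: full-screen white luminance over full-screen black luminance."""
    return white_nits / black_nits

def max_black_for(target_ratio: float, white_nits: float) -> float:
    """Highest black level (nits) that still meets target_ratio at a given white level."""
    return white_nits / target_ratio

# Illustrative inputs only, not measurements from this review
white = 400.0
print(contrast_ratio(white, 0.5))   # 800.0
print(max_black_for(800.0, white))  # 0.5
```

At a fixed white level, any black level above `white / 800` misses the 800:1 figure.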

199 Comments

doobydoo - Friday, December 2, 2011

It's still absolute nonsense to claim that the iPhone 4S can only use '2x' the power when it has 7x available.

Not only does the iPhone 4S support wireless streaming to TVs, making performance very important, but there are also games ALREADY out which require this kind of GPU in order to run fast on the superior resolution of the iPhone 4S.

Not only that, but you failed to take into account the typical lifecycle of iPhones: this phone has to be capable of performing well for around a year.

The bottom line is that Apple really got one over all Android manufacturers with the GPU in the iPhone 4S - it's the best there is, in any phone, full stop. Trying to turn that into a criticism is outrageous.

PeteH - Tuesday, November 1, 2011

Actually it is about the architecture. How GPU performance scales with size is in large part dictated by the GPU architecture, and Imagination's architecture scales better than the other solutions.

loganin - Tuesday, November 1, 2011

And I showed above that Apple's chip isn't larger than Samsung's.

PeteH - Tuesday, November 1, 2011

But chip size isn't relevant, only GPU size is.

All I'm pointing out is that not all GPU architectures scale equivalently with size.

loganin - Tuesday, November 1, 2011

But you're comparing two different architectures here, not two chips carrying the same architecture, so scalability doesn't really matter. Also, is Samsung's GPU significantly smaller than the A5's?

Now that we've gone back and forth about nothing, you can see the problem with Lucian's argument. It was simply an attempt to make Apple look bad, and technical correctness didn't really matter.

PeteH - Tuesday, November 1, 2011

What I'm saying is that Lucian's assertion, that the A5's GPU is faster because it's bigger, ignores the fact that not all GPU architectures scale the same way with size. A GPU of the same size but with a different architecture would have worse performance because of this.

Put simply, architecture matters. You can't just throw silicon at a performance problem to fix it.

metafor - Tuesday, November 1, 2011

Well, you can. But it might be more efficient not to. At least with GPUs, putting two in there will pretty much double your performance on GPU-limited tasks.

This is true of desktops (SLI) as well as mobile.

Certain architectures are more area-efficient. But the point is, if all you care about is performance and can eat the die-area, you can just shove another GPU in there.

The same can't be said of CPU tasks, for example.
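The GPU/CPU contrast drawn here is usually explained with Amdahl's law, swapped in below as an illustrative model rather than anything the commenter cited. Pixel shading is close to perfectly parallel, so a second GPU roughly doubles throughput, while a CPU task with a serial portion gains much less. The parallel fractions are assumed values, and the model ignores memory bandwidth and other shared limits.

```python
def amdahl_speedup(parallel_fraction: float, units: int) -> float:
    """Amdahl's law: speedup from `units` processors when only part of the work parallelizes."""
    serial = 1.0 - parallel_fraction
    return 1.0 / (serial + parallel_fraction / units)

# Assumed fully parallel GPU workload: a second GPU doubles throughput
print(amdahl_speedup(1.0, 2))  # 2.0
# Assumed half-parallel CPU task: a second core helps far less
print(round(amdahl_speedup(0.5, 2), 3))  # 1.333
```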

PeteH - Tuesday, November 1, 2011

I should have been clearer. You can always throw area at the problem, but the architecture dictates how much area is needed to add the desired performance, even on GPUs.

Compare the GeForce and the SGX architectures. The GeForce provides an equal number of vertex and pixel shader cores, and thus can only achieve its theoretical maximum performance if it gets an even mix of vertex and pixel shader operations. The SGX, on the other hand, provides general-purpose cores that can do either vertex or pixel shader operations.

This means that as the SGX adds cores, its performance scales linearly under all scenarios, while the GeForce (which adds a vertex and a pixel shader core as a pair) gains only half the benefit under some conditions. Put simply, if a GeForce GPU is limited by the number of pixel shader cores available, adding a vertex shader core brings no benefit.

Throwing enough core pairs onto silicon will give you the performance you need, but not as efficiently as general-purpose cores would. Of course a general-purpose core architecture will be bigger, but that's a separate discussion.
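The argument about dedicated versus general-purpose shader cores can be illustrated with a toy frame-time model. It assumes work divides perfectly among units of the same kind and ignores everything else about real GeForce and SGX designs; the pixel-heavy workload numbers are made up.

```python
def split_frame_time(vertex_work: float, pixel_work: float,
                     vertex_units: int, pixel_units: int) -> float:
    """Dedicated vertex and pixel units: the busier pool sets the frame time."""
    return max(vertex_work / vertex_units, pixel_work / pixel_units)

def unified_frame_time(vertex_work: float, pixel_work: float, units: int) -> float:
    """General-purpose units: any unit takes either kind of work, so load balances evenly."""
    return (vertex_work + pixel_work) / units

# Hypothetical pixel-heavy workload: 1 unit of vertex work, 3 units of pixel work
v, p = 1.0, 3.0
print(split_frame_time(v, p, 2, 2))  # 1.5 (pixel units are the bottleneck)
print(split_frame_time(v, p, 3, 2))  # 1.5 (an extra vertex unit adds nothing)
print(unified_frame_time(v, p, 4))   # 1.0 (same unit count, none left idle)
```

With four units either way, the split design leaves vertex units idle while pixel units are saturated, which is the inefficiency described above.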

metafor - Tuesday, November 1, 2011

I think you need to check your math. If you double the number of cores in a GeForce, you'll still gain 2x the relative performance.

Double is a multiplier, not an adder.

If a task was vertex-shader bound before, doubling the number of vertex shaders (which comes with doubling the number of cores) will improve performance by 100%.

Of course, in the case of 543MP2, we're not just talking about doubling computational cores.

It's literally two GPUs (I don't think much is shared, maybe the various caches).

Think SLI but on silicon.

If you put two GeForce GPUs on a single die, the effect will be the same: double the performance for double the area.

Architecture dictates the perf/GPU. That doesn't mean you can't simply double it at any time to get double the performance.

PeteH - Tuesday, November 1, 2011

But I'm not talking about relative performance; I'm talking about performance per unit of area added. When you're bound by one operation, adding a core that supports a different operation is wasted space.

So yes, doubling area always doubles relative performance, but adding 20 square millimeters means different things to the performance of different architectures.
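The two sides of this exchange measure different things, and a toy throughput model can show both. It assumes every shader unit costs the same die area and that work divides perfectly among units of the same kind; the pixel-heavy workload is made up. Doubling every unit doubles throughput for both designs (the relative view), while the same two extra units buy more absolute throughput when they are general-purpose (the per-area view).

```python
def split_throughput(vertex_work: float, pixel_work: float,
                     vertex_units: int, pixel_units: int) -> float:
    """Frames per unit time when vertex and pixel units are separate pools."""
    # The slower pool caps the frame rate
    return min(vertex_units / vertex_work, pixel_units / pixel_work)

def unified_throughput(vertex_work: float, pixel_work: float, units: int) -> float:
    """Frames per unit time when every unit can run either kind of shader."""
    return units / (vertex_work + pixel_work)

v, p = 1.0, 3.0  # hypothetical pixel-heavy workload

# Relative scaling: doubling every unit doubles throughput for both designs
print(split_throughput(v, p, 4, 4) / split_throughput(v, p, 2, 2))  # 2.0
print(unified_throughput(v, p, 8) / unified_throughput(v, p, 4))    # 2.0

# Marginal gain: the same two extra units of area, spent on each design
print(round(split_throughput(v, p, 3, 3) - split_throughput(v, p, 2, 2), 3))  # 0.333
print(unified_throughput(v, p, 6) - unified_throughput(v, p, 4))              # 0.5
```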