Sandy Bridge Memory Scaling: Choosing the Best DDR3

by Jared Bell on July 25, 2011 1:55 AM EST

Memory Scaling with Overclocking

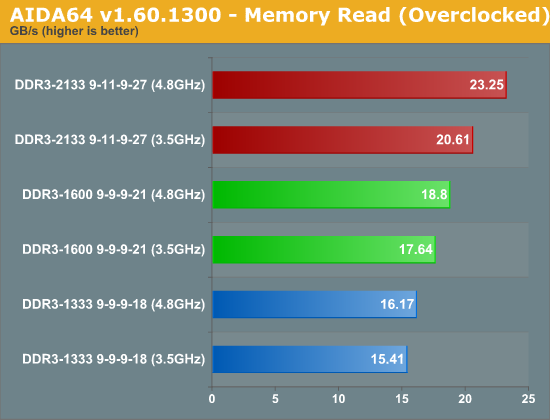

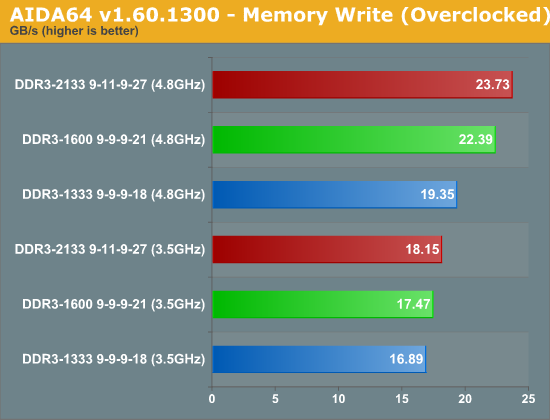

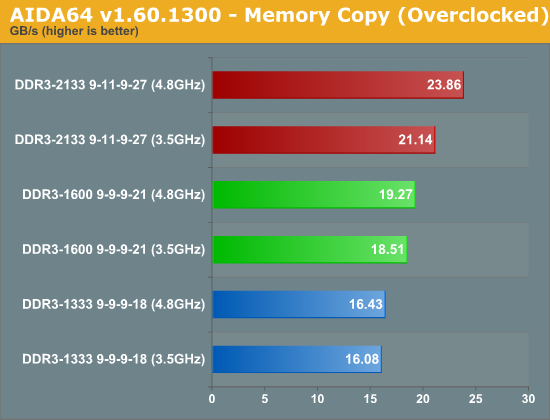

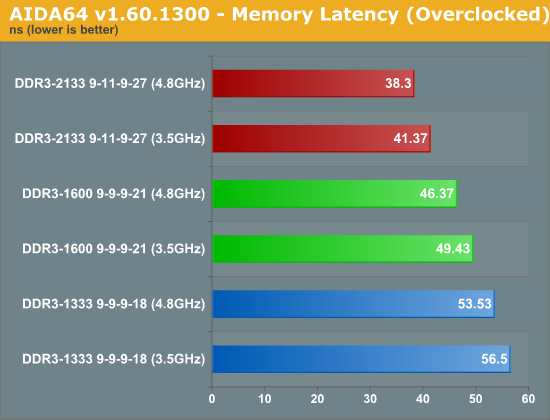

What happens when we increase the CPU clock speed on our Core i7-2600K from the default 3.5GHz to 4.8GHz, and how does that affect memory performance? To find out, I reran the memory bandwidth tests, comparing DDR3-1333 CL9, DDR3-1600 CL9, and DDR3-2133 CL9 at both 3.5GHz and 4.8GHz CPU clock speeds. I also ran the most bandwidth-intensive and the least bandwidth-intensive real-world tests at the overclocked CPU speed to see whether the faster clock made any difference there as well.

The AIDA64 memory benchmark shows that memory bandwidth does scale with CPU clock speed. Going from DDR3-1333 to DDR3-1600 gave a 14% boost on our stock CPU and a 16% boost on our overclocked CPU. Stepping up from DDR3-1333 to DDR3-2133 gave a 33% increase on the stock CPU and a 43% increase on the overclocked CPU. The copy and latency tests showed similar results. More impressive is the write test, which showed a much larger 15% increase from DDR3-1333 to DDR3-1600 on the overclocked CPU compared to 3% on the stock CPU. Going from DDR3-1333 to DDR3-2133 increased write performance by 22% when overclocked compared to 7% at stock. While it's interesting to see how an overclocked CPU affects raw memory bandwidth, I'm much more interested to see how it affects our real-world benchmarks.
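For context on those percentages, it helps to know what the memory itself could deliver on paper. The sketch below is not part of the test suite; it is a minimal calculation that assumes a standard 64-bit DDR3 channel, dual-channel operation, and nominal data rates (DDR3-1333 taken as 1333.33 MT/s). The function names are made up for illustration.

```python
# Theoretical figures for the three kits compared above.
# Assumptions (not from the benchmark data): 64-bit channels, dual-channel
# operation, nominal JEDEC-style data rates. All names are illustrative.

BYTES_PER_TRANSFER = 8  # one 64-bit DDR3 channel moves 8 bytes per transfer
CHANNELS = 2            # assumed dual-channel configuration

# Nominal data rates in mega-transfers per second (MT/s).
KITS = {
    "DDR3-1333": 4000 / 3,   # 1333.33 MT/s
    "DDR3-1600": 1600.0,
    "DDR3-2133": 6400 / 3,   # 2133.33 MT/s
}


def peak_bandwidth_gbs(data_rate_mts, channels=CHANNELS):
    """Theoretical peak bandwidth in GB/s (1 GB = 10**9 bytes)."""
    return data_rate_mts * 1e6 * BYTES_PER_TRANSFER * channels / 1e9


def cas_latency_ns(data_rate_mts, cas_cycles):
    """CAS latency in nanoseconds; the memory clock is half the data rate."""
    clock_mhz = data_rate_mts / 2
    return cas_cycles / clock_mhz * 1000


def pct_gain(new, old):
    """Percentage increase of new over old."""
    return (new / old - 1) * 100


def main():
    base = KITS["DDR3-1333"]
    for name, rate in KITS.items():
        print(
            f"{name}: {peak_bandwidth_gbs(rate):.2f} GB/s peak, "
            f"CL9 = {cas_latency_ns(rate, 9):.2f} ns, "
            f"{pct_gain(rate, base):.0f}% over DDR3-1333 on paper"
        )


if __name__ == "__main__":
    main()
```

On paper, DDR3-1600 offers 20% more bandwidth than DDR3-1333 and DDR3-2133 offers 60% more, so the measured 14% to 16% and 33% to 43% gains capture only part of the theoretical headroom, with the overclocked CPU getting closer to it. The same arithmetic shows why CL9 at a higher data rate also means lower absolute latency: the cycle count is unchanged but each cycle is shorter.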

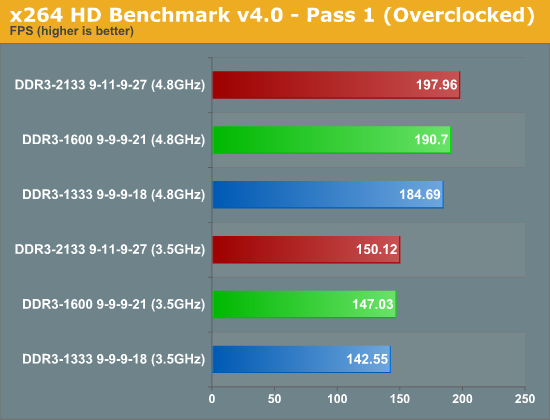

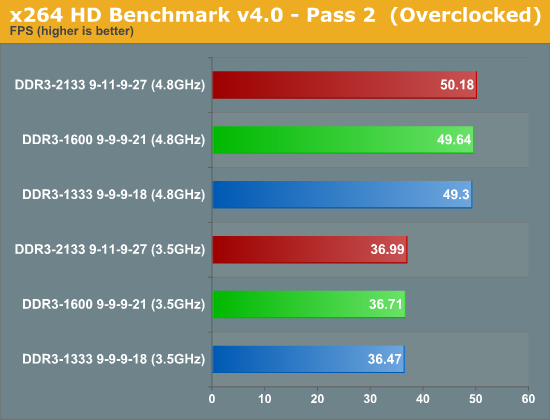

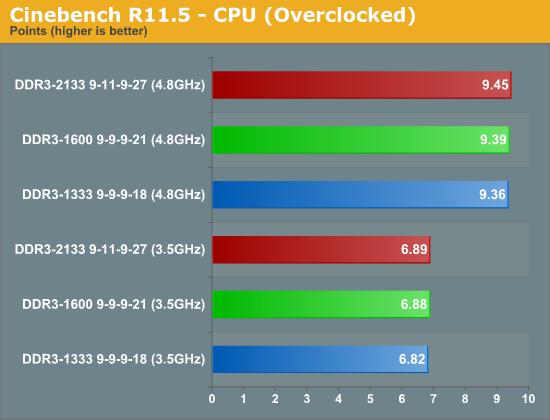

The extra bandwidth gained with the overclocked CPU doesn't exactly translate into much. The first pass of the x264 test reveals a 7% advantage for DDR3-2133 over DDR3-1333 on our overclocked CPU while the stock CPU shows a 5% increase. The increase for DDR3-1600 over DDR3-1333 is 3% for both our overclocked and stock CPUs. Once we move on to the second pass, there's no discernible advantage for faster memory on our overclocked system. The Cinebench test results are every bit as unimpressive with overclocking as at stock: overclocked or not, faster memory makes no real difference (though the faster CPU clock speed definitely helps a lot).
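One rough way to read the x264 first-pass numbers is through Amdahl's law: if a fraction f of the runtime scales with memory bandwidth and the rest does not, a bandwidth speedup of k gives an overall speedup of 1 / ((1 - f) + f / k). The sketch below solves that for f. It is a back-of-the-envelope model, not something measured here, and it assumes the AIDA64 read bandwidth gain is the right value for k; the function name is hypothetical.

```python
# Rough Amdahl's-law estimate of how much of a workload is bandwidth-bound.
# Assumption: the AIDA64 read-bandwidth gain stands in for the memory speedup k.
# This is an illustrative model only, not a measured quantity.


def memory_bound_fraction(app_speedup, bandwidth_speedup):
    """Solve S = 1 / ((1 - f) + f / k) for f, given S and k as ratios."""
    if app_speedup < 1 or bandwidth_speedup <= 1:
        raise ValueError("expected app_speedup >= 1 and bandwidth_speedup > 1")
    return (1 - 1 / app_speedup) / (1 - 1 / bandwidth_speedup)


# (label, x264 first-pass speedup, AIDA64 bandwidth speedup), from the text above.
CASES = [
    ("DDR3-2133 vs 1333, overclocked", 1.07, 1.43),
    ("DDR3-2133 vs 1333, stock", 1.05, 1.33),
    ("DDR3-1600 vs 1333, overclocked", 1.03, 1.16),
    ("DDR3-1600 vs 1333, stock", 1.03, 1.14),
]

if __name__ == "__main__":
    for label, app, bw in CASES:
        print(f"{label}: f = {memory_bound_fraction(app, bw):.2f}")
```

Under those assumptions all four cases land at roughly a fifth of the first-pass runtime being bandwidth-sensitive, which fits the small gains seen above. The second pass and Cinebench, with no measurable gain, would come out at essentially zero.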

76 Comments

mga318 - Monday, July 25, 2011 - link

You mentioned Llano at the end, but in the Llano reviews & tests, memory bandwidth was tested primarily with little reference to latency. I'd be curious as to which is more important with a higher performance IGP like Llano's. Would CAS 7 (or 6) be preferable over 1866 or 2166 speeds with CAS 8 or 9?

DarkUltra - Monday, July 25, 2011 - link

How about testing Valve's particle benchmark or a Source-based game at low resolution with a non-geometry limited 3d card (fermi) and overclocked cpu? Valve did an incredible job with their game engine. They used a combination of fine-grained and coarse threading to max out all the cpu cores. Very few games can do that today, but may in the future.

DarkUltra - Monday, July 25, 2011 - link

Why test with 4GB? RAM is cheap, most people who buy the premium 2600K should pair it with two 4GB modules. I imagine Windows would require 4GB ram and games the same in the future. Just look at all the .net developers out there, .net usually results in incredible memory bloated programs.

dingetje - Monday, July 25, 2011 - link

hehe yeah .net sucks

Atom1 - Monday, July 25, 2011 - link

Most algorithms on the CPU platform are optimized to have their data 99% of the time inside the CPU cache. If you look at SiSoft Sandra, where there is a chart of bandwidth as a function of the block size copied, you can see that CPU cache is 10-50x faster than global memory depending on the level. Linpack here is no exception. The primary reason for the success of linpack is its ability to have data in CPU cache nearly all of the time. Therefore, if you do find an algorithm which can benefit considerably from global memory bandwidth, you can be sure it is a poor job on the programmer's side. I think it is a kind of a challenge to see which operations and applications do take a hit when the main memory is 2x faster or 2x slower. I would be interested to see where the breaking point is, when even well written software starts to take a hit.

DanNeely - Monday, July 25, 2011 - link

That's only true for benchmarks and highly computationally intensive apps (and even there many problem classes can't be packed into the cache or written to stream data into it). In the real world where 99% of software's performance is bound by network IO, HD IO, or user input trying to tune data to maximize the CPU cache is wasted engineering effort. This is why most line of business is written using java or .net, not C++; the finer grained memory control of the latter doesn't benefit anything while the higher level nature of the former allows for significantly faster development.

Rick83 - Monday, July 25, 2011 - link

I think image editing (simple computation on large datasets) and engineering software (numerical simulations) are two types of application that benefit more than average from memory bandwidth, and in the second case, latency.

But, yeah, with CPU caches reaching the tens of Megabytes, Memory bandwidth and latency are getting less important for many problems.

MrSpadge - Wednesday, July 27, 2011 - link

True.. large matrix operations love bandwidth and low latency never hurts. I've seen ~13% speedup on part of my Matlab code going from DDR3-1333 CL9 to DDR3-1600 CL9 on an i7 870!

MrS

Patrick Wolf - Monday, July 25, 2011 - link

You don't test CPU gaming benchmarks at normal settings cause you may become GPU limited so why do it here?

http://www.xbitlabs.com/articles/memory/display/sa...

dsheffie - Monday, July 25, 2011 - link

....uh...Linpack is just LU which in turn is just DGEMM. DGEMM has incredible operand reuse (O(sqrt(cache size))).