Intel Z68 Chipset & Smart Response Technology (SSD Caching) Review

by Anand Lal Shimpi on May 11, 2011 2:34 AM EST

AnandTech Storage Bench 2011

With the hand-timed real-world tests out of the way, I wanted to do a better job of summarizing the performance benefit of Intel's SRT using our Storage Bench 2011 suite. Remember that the first time any data is accessed it won't be in the cache, and even afterwards not every operation will be served from the cache. Data can also be evicted from the cache as other demands compete for space. As a result, overall performance looks more like a doubling of standalone HDD performance than the multi-fold increase we see from moving entirely to an SSD.
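The two caveats above (a first access always misses, and cached data can be pushed out) can be illustrated with a toy model. This is a minimal sketch assuming a plain least-recently-used (LRU) block cache; Intel does not document SRT's actual insertion and eviction policy here, so it is not SRT's algorithm, and `simulate_lru_cache` is a hypothetical helper.

```python
from collections import OrderedDict


def simulate_lru_cache(accesses, capacity_blocks):
    """Count (hits, misses) for a toy LRU block cache.

    Illustration only: assumes simple LRU replacement, which is NOT
    claimed to be how Intel SRT decides what to cache or evict.
    """
    if capacity_blocks < 1:
        raise ValueError("capacity_blocks must be at least 1")
    cache = OrderedDict()  # block id -> None, least recently used first
    hits = misses = 0
    for block in accesses:
        if block in cache:
            hits += 1
            cache.move_to_end(block)  # mark as most recently used
        else:
            misses += 1  # first touch (or a previously evicted block) misses
            cache[block] = None
            if len(cache) > capacity_blocks:
                cache.popitem(last=False)  # evict the least recently used block
    return hits, misses


if __name__ == "__main__":
    pattern = [1, 2, 3, 1, 2, 3]
    print(simulate_lru_cache(pattern, 3))  # (3, 3)
    print(simulate_lru_cache(pattern, 2))  # (0, 6)
```

With room for three blocks, the second pass over the pattern hits every time; shrink the cache to two blocks and every access misses, because each block is evicted just before it is needed again. That is the same effect, in miniature, as a working set that outgrows a small cache drive.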

Heavy 2011—Background

Last year we introduced our AnandTech Storage Bench, a suite of benchmarks that took traces of real OS/application usage and played them back in a repeatable manner. I assembled the traces myself out of frustration with the majority of SSD benchmarks available today.

Although the AnandTech Storage Bench tests did a good job of characterizing SSD performance, they weren't stressful enough. All of the tests performed less than 10GB of reads/writes, and typically only 4GB of that was writes. That's not even enough to exceed the spare area on most SSDs. Most canned SSD benchmarks don't even come close to writing a single gigabyte of data, but that doesn't mean that simply writing 4GB is acceptable.

Originally I kept the benchmarks short enough that they wouldn't be a burden to run (~30 minutes) but long enough that they were representative of what a power user might do with their system.

Not too long ago I tweeted that I had created what I referred to as the Mother of All SSD Benchmarks (MOASB). Rather than only writing 4GB of data to the drive, this benchmark writes 106.32GB. It's the load you'd put on a drive after nearly two weeks of constant usage. And it takes a *long* time to run.

First, some details:

1) The MOASB, officially called AnandTech Storage Bench 2011—Heavy Workload, mainly focuses on the times when your I/O activity is the highest. There is a lot of downloading and application installing that happens during the course of this test. My thinking was that it's during application installs, file copies, downloading and multitasking with all of this that you can really notice performance differences between drives.

2) I tried to cover as many bases as possible with the software I incorporated into this test. There's a lot of photo editing in Photoshop, HTML editing in Dreamweaver, web browsing, game playing/level loading (StarCraft II & WoW are both a part of the test) as well as general use stuff (application installing, virus scanning). I included a large amount of email downloading, document creation and editing as well. To top it all off I even use Visual Studio 2008 to build Chromium during the test.

The test has 2,168,893 read operations and 1,783,447 write operations. The IO breakdown is as follows:

AnandTech Storage Bench 2011 Heavy Workload: IO Breakdown

| IO Size | % of Total |
|---|---|
| 4KB | 28% |
| 16KB | 10% |
| 32KB | 10% |
| 64KB | 4% |

Only 42% of all operations are sequential; the rest range from pseudo-random to fully random (with most falling in the pseudo-random category). Average queue depth is 4.625 IOs, with 59% of operations taking place at a queue depth of 1.
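The operation counts and the IO size table can be sanity-checked with a little arithmetic. This sketch uses only figures quoted above; one thing it makes visible is that the four listed IO sizes account for 52% of operations, so the remaining 48% presumably fall into sizes the table does not break out.

```python
# Figures quoted in the Heavy Workload description above.
READ_OPS = 2_168_893
WRITE_OPS = 1_783_447
LISTED_IO_SIZES = {"4KB": 0.28, "16KB": 0.10, "32KB": 0.10, "64KB": 0.04}

total_ops = READ_OPS + WRITE_OPS
read_share = READ_OPS / total_ops
write_share = WRITE_OPS / total_ops
listed_share = sum(LISTED_IO_SIZES.values())  # fraction covered by the table

print(f"total operations: {total_ops:,}")  # total operations: 3,952,340
print(f"reads: {read_share:.1%}, writes: {write_share:.1%}")  # reads: 54.9%, writes: 45.1%
print(f"listed IO sizes: {listed_share:.0%}, other sizes: {1 - listed_share:.0%}")  # 52%, 48%
```

By operation count the trace is still read-leaning, so the "unusually write intensive" character of the test shows up in the volume written (over 100GB) rather than in a write majority of operations.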

Many of you have asked for a better way to characterize performance; simply looking at IOPS doesn't say much. As a result I'm going to present Storage Bench 2011 data in a slightly different way. Performance will be represented as average MB/s, with higher numbers being better. At the same time I'll report how long the SSD was busy while running this test. These disk busy graphs show exactly how much time was shaved off by using a faster drive vs. a slower one over the course of the test. Finally, I will also break out performance into reads, writes and combined. I do this to help balance out the fact that this test is unusually write intensive, which can often hide the benefits of a drive with good read performance.
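For a fixed trace, the two presentations are tied together: average data rate is the bytes moved divided by the time the drive was busy, so a higher MB/s means proportionally less busy time for the same workload. A minimal sketch; the helper names are hypothetical, decimal megabytes (10^6 bytes) are an assumption, and the numbers in the usage lines are illustrative rather than results from this review.

```python
MB = 1_000_000  # assumption: decimal megabytes


def average_data_rate_mb_s(bytes_transferred: int, busy_seconds: float) -> float:
    """Average MB/s over the time the drive was busy (idle time excluded)."""
    if busy_seconds <= 0:
        raise ValueError("busy_seconds must be positive")
    return bytes_transferred / MB / busy_seconds


def time_saved_seconds(bytes_transferred: int, slow_mb_s: float, fast_mb_s: float) -> float:
    """Busy time shaved off by the faster drive when both replay the same bytes."""
    if slow_mb_s <= 0 or fast_mb_s <= 0:
        raise ValueError("rates must be positive")
    slow_busy = bytes_transferred / MB / slow_mb_s
    fast_busy = bytes_transferred / MB / fast_mb_s
    return slow_busy - fast_busy


if __name__ == "__main__":
    # Illustrative numbers only: 1,000 MB of IO.
    print(average_data_rate_mb_s(1_000_000_000, 20.0))     # 50.0
    print(time_saved_seconds(1_000_000_000, 50.0, 100.0))  # 10.0
```

The same calculation could be applied to read bytes and read busy time, or write bytes and write busy time, to produce a read/write breakdown.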

There's also a new light workload for 2011. This is a far more reasonable, typical everyday use case benchmark. It includes lots of web browsing, photo editing (but with a greater focus on photo consumption), video playback, as well as some application installs and gaming. This test isn't nearly as write intensive as the MOASB, but it's still multiple times more write intensive than what we were running last year.

As always I don't believe that these two benchmarks alone are enough to characterize the performance of a drive, but hopefully along with the rest of our tests they will help provide a better idea.

The testbed for Storage Bench 2011 has changed as well. We're now using a Sandy Bridge platform with full 6Gbps support for these tests. All of the older tests are still run on our X58 platform.

AnandTech Storage Bench 2011—Heavy Workload

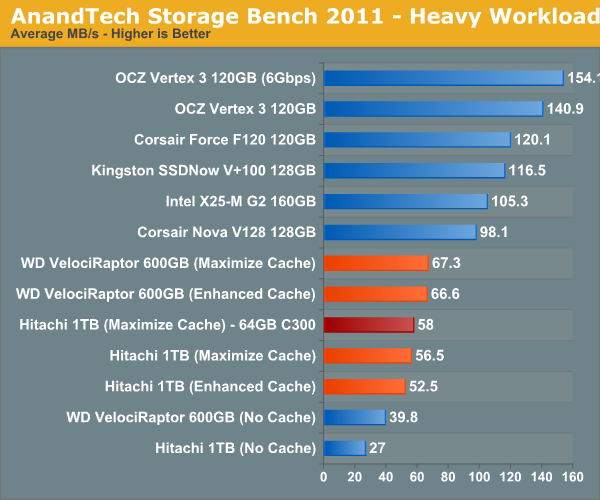

We'll start out by looking at average data rate throughout our new heavy workload test:

For this comparison I used two hard drives: 1) a Hitachi 7200RPM 1TB drive from 2008 and 2) a 600GB Western Digital VelociRaptor. The Hitachi 1TB is a good, large but aging drive, while the 600GB VelociRaptor is a great example of a very high-end spinning disk. With a modest 20GB cache enabled, the 3+ year old Hitachi drive is easily 41% faster than the VelociRaptor. We're still not in dedicated SSD territory, but the improvement is significant.
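"41% faster" here presumably compares average data rates: the cached Hitachi moves data at roughly 1.41 times the VelociRaptor's rate. Because both configurations replay the same trace, that corresponds to about 29% less busy time, not 41%. A minimal sketch; the function names are hypothetical and the inputs are placeholders rather than the charted values.

```python
def percent_faster(new_rate: float, baseline_rate: float) -> float:
    """Percent by which new_rate exceeds baseline_rate."""
    if baseline_rate <= 0:
        raise ValueError("baseline_rate must be positive")
    return (new_rate / baseline_rate - 1.0) * 100.0


def busy_time_reduction_percent(percent_faster_value: float) -> float:
    """Percent less busy time for the same workload at a rate X% higher."""
    speedup = 1.0 + percent_faster_value / 100.0
    if speedup <= 0:
        raise ValueError("percent_faster_value must be greater than -100")
    return (1.0 - 1.0 / speedup) * 100.0


if __name__ == "__main__":
    print(round(percent_faster(141.0, 100.0), 1))       # 41.0
    print(round(busy_time_reduction_percent(41.0), 1))  # 29.1
```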

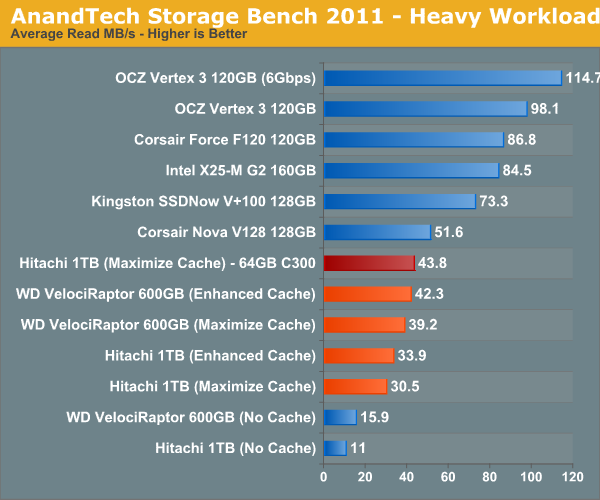

I also tried swapping the cache drive out for a Crucial RealSSD C300 (64GB). Performance went up a bit, but not much. You'll notice that average read speed got the biggest boost from using the C300 as a cache drive, since it has better sequential read performance. Overall I am impressed with Intel's SSD 311; I just wish the drive were a little bigger.

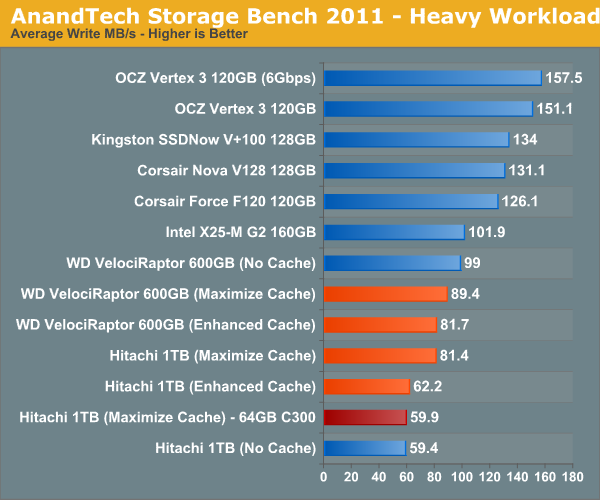

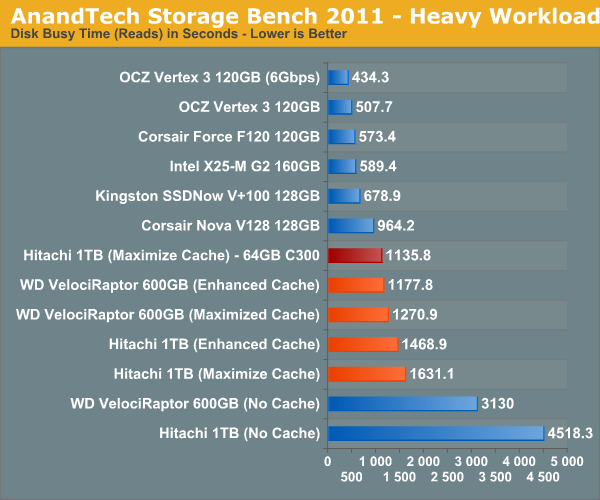

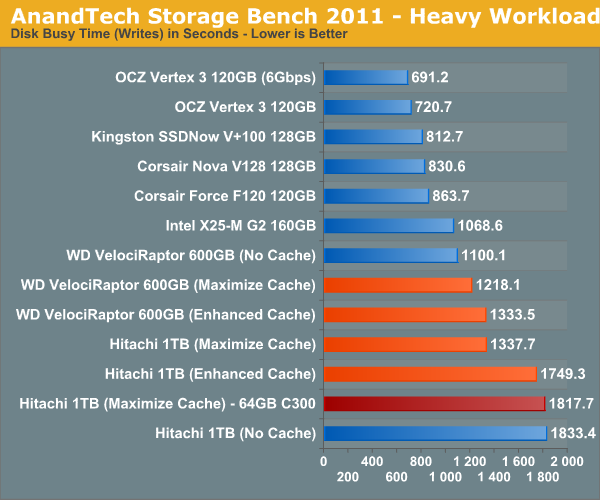

The breakdown of reads vs. writes tells us more of what's going on:

This isn't too unusual: pure write performance is actually better with the cache disabled than with it enabled. The SSD 311 has good write speed for its capacity/channel configuration, but so does the VelociRaptor. Overall performance is still better with the cache enabled, but this is worth keeping in mind if you pair a particularly sluggish SSD with a hard drive that has very good sequential write performance.

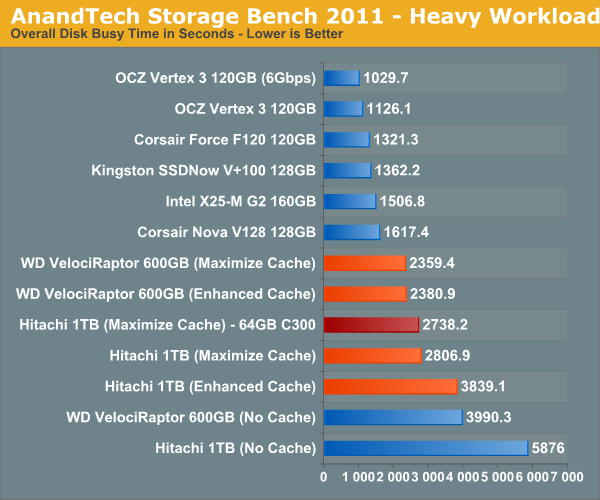

The next three charts represent the same data in a different way. Instead of looking at average data rate, we're looking at how long the disk was busy during the entire test. Note that disk busy time excludes all idle time; it is just how long the SSD was busy doing something:
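The text doesn't spell out how disk busy time is derived from the trace. One plausible approach, sketched here purely as an assumption: treat each IO as a (start, end) interval, merge overlapping intervals so that IOs in flight at the same time (queue depth above 1) are counted once, and skip the gaps between them, which matches the note that idle time is excluded. `disk_busy_time` and its input format are hypothetical.

```python
def disk_busy_time(intervals):
    """Total time covered by at least one outstanding IO.

    intervals: iterable of (start_s, end_s) pairs, one per IO
    (hypothetical trace format). Overlapping IOs are counted once;
    gaps with nothing in flight (idle time) are excluded.
    """
    busy = 0.0
    current_start = current_end = None
    for start, end in sorted(intervals):
        if end < start:
            raise ValueError("interval ends before it starts")
        if current_end is None or start > current_end:
            # Gap (or first IO): close out the previous busy stretch.
            if current_end is not None:
                busy += current_end - current_start
            current_start, current_end = start, end
        else:
            # Overlaps the current busy stretch: extend it if needed.
            current_end = max(current_end, end)
    if current_end is not None:
        busy += current_end - current_start
    return busy


if __name__ == "__main__":
    # Two overlapping IOs (0-2 s, 1-3 s), an idle gap, then one more IO.
    print(disk_busy_time([(0, 2), (1, 3), (10, 11)]))  # 4.0
```

Simply summing per-IO service times would double-count the overlapping stretch, which is why the intervals are merged first.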

106 Comments


KayDat - Wednesday, May 11, 2011

I know this bears zero relevance to Z68... but that CGI girl that Lucid used in their software is downright creepy.

SquattingDog - Wednesday, May 11, 2011

I tend to agree - maybe if she had some hair it would help... lol

RamarC - Wednesday, May 11, 2011

Seems that it would be better to designate one partition to be cached and leave other partitions uncached. With only a 20GB cache SSD, ripping from BD to .MP4 could easily cause cache evictions.

And will this work with a mixed Rapid Storage array? I typically run hard drives in pairs, and mirror (RAID 1) the first 120GB and stripe the remaining space, so I've got a fault-protected 120GB boot device and a 1700GB speedster. In this case, I'd only want the boot device cached.

ganeshts - Wednesday, May 11, 2011

This looks like a valid concern. For HTPCs, there is usually a data partition separate from the boot / program files partition. Usage of the SSD cache for the data partition makes no sense at all.

velis - Wednesday, May 11, 2011

I agree with the validity of this proposal, but must also comment on the (non)sensicality of caching the data partition:

I for one was disappointed when I read that multi-MB writes are not (write) cached. This is the only thing that keeps my RAID-5 storage slow. And a nice 32GB cache would be just the perfect thing for me. That's the largest I ever write to it in a single chunk.

So instead of 100MB/s speeds I'm still stuck with the 40 down to 20MB/s that my RAID provides.

Still - this is not the issue at all. I have no idea why manufacturers always think they know it all. Instead of just providing a nice settings screen where one could set preferences they just hard-code them...

fb - Wednesday, May 11, 2011

SRT is going to be brilliant for Steam installs, as you're restricted to keeping all your Steam apps on one drive. Wish I had a Z68. =)

LittleMic - Wednesday, May 11, 2011

Actually, you can use a Unix trick known as a symbolic link to move the installed game elsewhere.

On Windows XP, you can use Junction.

On Windows Vista and 7, the tool mklink is provided with the OS.

jonup - Wednesday, May 11, 2011

Can you elaborate on this or provide some links? Thanks in advance!

LittleMic - Wednesday, May 11, 2011

Consider c:\program files\steam\...\mygame, which is taking up a lot of space. You can move the directory to d:\mygame, for instance, and then use the command

Vista/7 (you need administrator rights to do so; the path contains a space, so it must be quoted)

mklink /d "c:\program files\steam\...\mygame" d:\mygame

XP (administrator rights required too)

junction "c:\program files\steam\...\mygame" d:\mygame

to create the link.

The trick is that Steam will still find its data in c:\program files\steam\...\mygame, but it will be physically located in d:\mygame.

Junction can be found here:

http://technet.microsoft.com/fr-fr/sysinternals/bb...
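The manual steps described above (move the folder, then leave a link at the old path) can also be scripted. This is a minimal Python 3 sketch with a hypothetical helper name, `relocate_with_symlink`; it creates a directory symbolic link like `mklink /d`, not an NTFS junction like the `junction` tool, and on Windows creating symlinks generally requires administrator rights. The demo at the bottom works in a temporary folder so it does not touch real game files.

```python
import os
import shutil
import tempfile
from pathlib import Path


def relocate_with_symlink(original: Path, new_home: Path) -> None:
    """Move a directory to new_home and leave a directory symlink at original.

    Hypothetical helper mirroring: move the folder, then
    mklink /d <original> <new_home>.
    """
    original = Path(original)
    new_home = Path(new_home)
    if original.is_symlink() or not original.is_dir():
        raise ValueError(f"{original} is not a real directory")
    if new_home.exists():
        raise FileExistsError(f"{new_home} already exists")
    # Physically move the data (copies across volumes, renames within one).
    shutil.move(str(original), str(new_home))
    # os.symlink(target, link_path): the old path now points at the new home.
    os.symlink(new_home, original, target_is_directory=True)


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        game = root / "c" / "mygame"
        game.mkdir(parents=True)
        (game / "data.bin").write_text("level data")
        (root / "d").mkdir()
        relocate_with_symlink(game, root / "d" / "mygame")
        print(game.is_symlink(), (game / "data.bin").read_text())
```

As with the command-line version, anything that opens the old path (Steam, in this case) is transparently redirected to the new location.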

LittleMic - Wednesday, May 11, 2011

Update: see arthur449's suggestion. Steam Mover does this exact operation with a nice GUI.