Westmere-EX: Intel's Flagship Benchmarked

by Johan De Gelas on May 19, 2011 1:30 PM EST. Posted in: IT Computing, Intel, Xeon, Cloud Computing, Westmere-EX

Intel's Best x86 Server CPU

The launch of the Nehalem-EX a year ago was pretty spectacular. For the first time in Intel's history, the high-end Xeon did not have any real weakness. Before the Nehalem-EX, the best Xeons trailed behind the best RISC chips in either RAS, memory bandwidth, or raw processing power. The Nehalem-EX chip was well received in the market. In 2010, Intel's datacenter group reportedly brought in $8.57 billion, an increase of 35% over 2009.

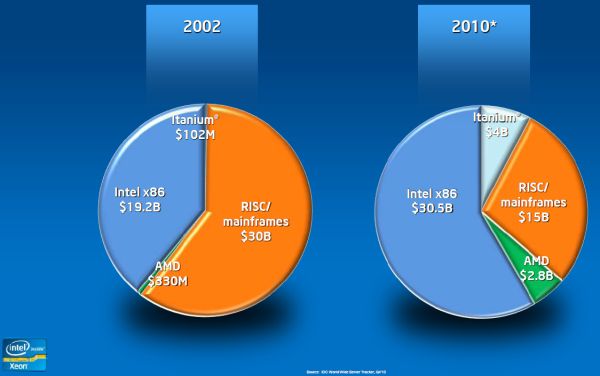

The RISC server vendors have lost a lot of ground to the x86 world. According to IDC's Server Tracker (Q4 2010), the RISC/mainframe market share has halved since 2002, while Intel x86 chips now command almost 60% of the market. Interestingly, AMD grew from a negligible 0.7% to a decent 5.5%.

Only one year later, Intel is upgrading the top Xeon by introducing Westmere-EX. Shrinking Intel's largest Xeon to 32nm allows it to be clocked slightly higher, gain two extra cores, and add 6MB of L3 cache. At the same time the chip is quite a bit smaller, which makes it cheaper to produce. Unfortunately, the customer does not really benefit from that fact, as the top Xeon became more expensive. Anyway, the Nehalem-EX was a popular chip, so it is no surprise that the improved version has persuaded 19 vendors to produce 60 different designs, ranging from two up to 256 sockets.

Of course, this isn't surprising as even mediocre chips like the Intel Xeon 7100 series got a lot of system vendor support, a result of Intel's dominant position in the server market. With their latest chip, Intel promises up to 40% better performance at slightly lower power consumption. Considering that the Westmere-EX is the most expensive x86 CPU, it needs to deliver on these promises, on top of providing rich RAS features.

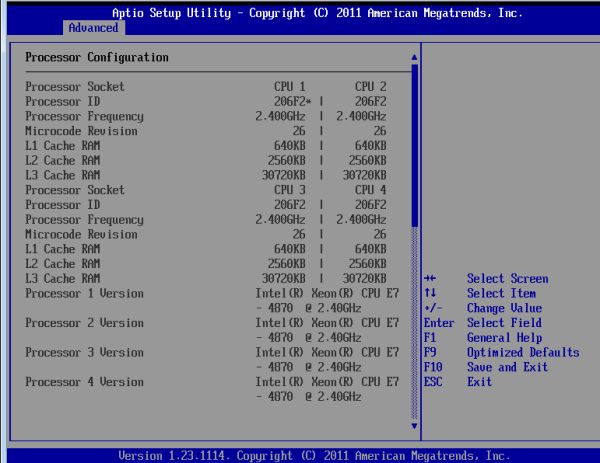

We were able to test Intel's newest QSSC-S4R server, with both "normal" and new "low power" Samsung DIMMs.

Some impressive numbers

The new Xeon can boast some impressive numbers. Thanks to its massive 30MB L3 cache it has even more transistors than the Intel "Tukwila" Itanium: 2.6 billion versus 2 billion transistors. Not that such items really matter without the performance and architecture to back them up, but the numbers ably demonstrate the complexity of these server CPUs.

Processor Size and Technology Comparison

| CPU | Transistor count (million) | Process | Die size (mm²) | Cores |
|---|---|---|---|---|
| Intel Westmere-EX | 2600 | 32 nm | 513 | 10 |
| Intel Nehalem-EX | 2300 | 45 nm | 684 | 8 |
| Intel Dunnington | 1900 | 45 nm | 503 | 6 |
| Intel Nehalem | 731 | 45 nm | 265 | 4 |
| IBM Power 7 | 1200 | 45 nm | 567 | 8 |
| AMD Magny-cours | 1808 (2x 904) | 45 nm | 692 (2x 346) | 12 |
| AMD Shanghai | 705 | 45 nm | 263 | 4 |
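
To put the table in perspective, the transistor counts and die sizes can be turned into a rough density figure. The sketch below is only an illustration using the numbers listed above; the `density` helper and the variable names are our own, and the metric ignores how much of each die is cache versus logic.

```python
# Transistor counts (millions) and die sizes (mm^2) copied from the table above.
chips = {
    "Intel Westmere-EX": (2600, 513),
    "Intel Nehalem-EX": (2300, 684),
    "Intel Dunnington": (1900, 503),
    "Intel Nehalem": (731, 265),
    "IBM Power 7": (1200, 567),
    "AMD Magny-cours": (1808, 692),  # totals for both dies in the package
    "AMD Shanghai": (705, 263),
}


def density(transistors_million, die_mm2):
    """Millions of transistors per square millimetre (illustrative metric)."""
    return transistors_million / die_mm2


densities = {name: density(t, area) for name, (t, area) in chips.items()}

# Fractional die-area reduction going from Nehalem-EX to Westmere-EX.
shrink = 1 - chips["Intel Westmere-EX"][1] / chips["Intel Nehalem-EX"][1]

for name, d in sorted(densities.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:18s} {d:5.2f} M transistors/mm^2")
print(f"Westmere-EX die is {shrink:.0%} smaller than Nehalem-EX")
```

By this measure the 32nm Westmere-EX packs roughly 5.1 million transistors per mm², against about 3.4 for the 45nm Nehalem-EX, on a die that is 25% smaller.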

62 Comments


Casper42 - Thursday, May 19, 2011 - link

HP makes the BL620c G7 Blade server that is a 2P Nehalem EX (soon to offer Westmere EX).

And believe it or not, the massive HP DL980 G7 (8 Proc Nehalem/Westmere EX) is actually running 4 pairs of EX CPUs. HP has a custom ASIC Bridge chip that brings them all together. This design MIGHT actually support running the 2P models as each pair goes through the bridge chip.

Dell makes the R810 and while it's a 4P Server, the memory subsystem actually runs best when it's run as a 2P Server. That would be a great platform for the 2P CPUs as well.

mczak - Friday, May 20, 2011 - link

2P E7 looks like a product for a very, very small niche to me. In terms of pure performance, a 2P Westmere-EP pretty much makes up for the deficit in cores with the higher clock - sure it also has less cache, but for the vast majority of cases it is probably far more cost effective (not to mention at least idle power consumption will be quite a bit lower). Which relegates the 2P E7 to cases where you don't need more performance than 2P Westmere-EP, but depend on some of the extra features (like more memory possible, RAS, whatever) the E7 offers.

DanNeely - Thursday, May 19, 2011 - link

If anyone wants an explanation of what changed between these types of memory, simmtester.com has a decent writeup and illustrations. Basically each LR-DIMM has a private link to the buffer chip, instead of each dimm having a very high speed buffer daisychained to the next dimm on the channel.

http://www.simmtester.com/page/news/showpubnews.as...

jcandle - Saturday, May 21, 2011 - link

I love the link but your comment is a bit misleading.

The main difference isn't the removal of the point-to-point connections but the reversion to a parallel configuration similar to classic RDIMMs. The issues with FBDIMMs stemmed from their absurdly clocked serial bus that required 4x greater operating frequency over the actual DRAM clock.

So in dumb terms... FBDIMM = serial, LRDIMM = parallel

ckryan - Thursday, May 19, 2011 - link

It must be very, very difficult to generate a review for server equipment. Once you get into this class of hardware it seems as though there aren't really any ways to test it unless you actually deploy the server. Anyway, kudos for the effort in trying to quantify something of this caliber.

Shadowmaster625 - Thursday, May 19, 2011 - link

Correct me if I'm wrong, but isn't an Opteron 6174 just $1000? And it is beating the crap out of this "flagship" Intel chip by a factor of 3:1 in performance per dollar, and beats it in performance per watt also? And this is the OLD AMD architecture? This means that Interlagos could pummel Intel by something like 5:1. At what point does any of this start to matter?

You know it only costs $10,000 for a quad Opteron 6174 server with 128GB of RAM?

alpha754293 - Thursday, May 19, 2011 - link

That's only true IF the programs/applications that you're running on it aren't licensed by sockets/processor/core-counts.

How many of those Opteron systems would it take to match the performance? And the cost of the systems? And the cost of the software, if they're licensed on a per core basis?

tech6 - Thursday, May 19, 2011 - link

Software licensing is a part of the overall picture (particularly if you have to deal with Oracle) but the point is well taken that AMD delivers much better bang for the buck than Intel. An analysis of performance/$ would be an interesting addition to this article.

erple2 - Thursday, May 19, 2011 - link

The analysis isn't too hard. If you're licensing things on a per core cost (Hello, Oracle, I'm staring straight at you), then how much does the licensing cost have to be per core before you've made up that 20k difference in price (assuming AMD = 10k, intel = 30k)? Well, it's simple - 20k/8 cores per server more for the AMD = $2500 cost per core. Now, if you factor in that on a per core basis, the intel server is between 50 and 60% faster, things get worse for AMD. Assuming you could buy a server from AMD that was 50% more powerful (via linearly increasing core count), that would be 50% more of a server, but remember each server has 20% more cores. So it's really about 60% more cores. Now you're talking about an approximately 76.8 core server. That's 36 more cores than intel. So what's the licensing cost gotta be before AMD isn't worth it for this performance level? Well, 20k/36 = $555 per core.

OK, fair enough. Maybe things are licensed per socket instead. You still need 50% more sockets to get equivalent performance. So that's 2 more sockets (give or take) for the AMD to equal the intel in performance. Assuming things scale linearly with price, that "server" will cost roughly 15k for the AMD server. Licensing costs now have to be more than 7.5k (15k difference in price between the AMD and intel servers divided by 2 extra sockets) higher per socket to make the intel the "better deal" per performance. Do you know how much an Oracle Suite of products costs? I'll give you a hint. 7.5k isn't that far off the mark.
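
The break-even arithmetic in the comment above can be reproduced with a short sketch. Every figure here is the commenter's own assumption (10k vs. 30k server prices, 48 vs. 40 cores, 60% more AMD cores for equal performance), not measured data, and the `break_even_license_cost` helper is a hypothetical name introduced only for illustration.

```python
# All figures are the commenter's assumptions, not benchmark results.
AMD_SERVER_PRICE = 10_000
INTEL_SERVER_PRICE = 30_000
AMD_CORES = 48     # quad Opteron 6174, 12 cores each
INTEL_CORES = 40   # quad Westmere-EX, 10 cores each


def break_even_license_cost(price_gap, extra_units):
    """License cost per extra core/socket that cancels out the hardware price gap."""
    return price_gap / extra_units


price_gap = INTEL_SERVER_PRICE - AMD_SERVER_PRICE

# Case 1: compare the two boxes as-is (AMD simply has 8 more cores to license).
per_core_same_box = break_even_license_cost(price_gap, AMD_CORES - INTEL_CORES)

# Case 2: scale the AMD core count by 60% to match Intel's performance.
amd_cores_equal_perf = AMD_CORES * 1.6
extra_cores = amd_cores_equal_perf - INTEL_CORES
per_core_equal_perf = break_even_license_cost(price_gap, extra_cores)

# Case 3: per-socket licensing, assuming a 15k AMD system with 2 extra sockets.
per_socket = break_even_license_cost(INTEL_SERVER_PRICE - 15_000, 2)

print(f"Same box:          ${per_core_same_box:,.0f} per core")
print(f"Equal performance: ${per_core_equal_perf:,.0f} per core "
      f"({extra_cores:.1f} extra cores)")
print(f"Per socket:        ${per_socket:,.0f} per socket")
```

Note that the unrounded core difference is 36.8 rather than 36, so the second break-even lands closer to $543 per core than the $555 quoted; the conclusion is the same either way.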

JarredWalton - Thursday, May 19, 2011 - link

There are so many factors in the cost of a server that it's difficult to compare on just price and performance. RAS is a huge one -- the Intel server targets that market far more than the AMD used. Show me two identical servers in all other areas other than CPU type and socket count, and then compare the pricing. For example, here are two similar HP ProLiant setups:

HP ProLiant DL580 G7

http://h10010.www1.hp.com/wwpc/us/en/sm/WF06b/1535...

2 x Xeon E7-4830

64GB RAM

64 DIMM slots

Advanced ECC

Online Spare

Mirrored Memory

Memory Lock Step Mode

DIMM Isolation

Total: $13,679

+$4000 for 2x E7-4830

HP ProLiant DL585 G7

http://h10010.www1.hp.com/wwpc/us/en/sm/WF06b/1535...

4 x Opteron 6174

64GB RAM

32 DIMM slots

Advanced ECC

Online Spare

Total: $14,889

Now I'm sure there are other factors I'm missing, but seriously, those are comparable servers and the Intel setup is only about $3000 more than the AMD equivalent once you add in the two extra CPUs. I'm not quite sure how much better/worse the AMD is relative to the Intel, but when people throw out numbers like "OMG it costs $20K more for the Intel server" by comparing an ultra-high-end Intel setup to a basic low-end AMD setup, it's misguided at best. By my estimates, for relatively equal configurations it's more like $3000 extra for Intel, which is less than a 20% increase in price--and not even factoring in software costs.
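
The comparison above boils down to a few lines of arithmetic. The sketch below uses only the prices quoted in the comment (which may no longer reflect current HP pricing); the variable names are ours.

```python
# Prices as quoted in the comment above.
intel_dl580_base = 13_679     # DL580 G7 with 2 x Xeon E7-4830
intel_cpu_upgrade = 4_000     # two additional E7-4830 CPUs
amd_dl585_total = 14_889      # DL585 G7 with 4 x Opteron 6174

intel_total = intel_dl580_base + intel_cpu_upgrade
premium = intel_total - amd_dl585_total
premium_pct = premium / amd_dl585_total

print(f"Intel 4P total: ${intel_total:,}")
print(f"Intel premium:  ${premium:,} ({premium_pct:.1%} over the AMD config)")
```

With these inputs the Intel configuration comes to $17,679, a premium of $2,790, which is consistent with the "about $3000" and "less than 20%" figures quoted above.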