The OCZ Vertex 3 Review (120GB)

by Anand Lal Shimpi on April 6, 2011 6:32 PM EST

Random Read/Write Speed

The four corners of SSD performance are as follows: random read, random write, sequential read and sequential write speed. Random accesses are generally small in size, while sequential accesses tend to be larger, which gives us the four Iometer tests we use in all of our reviews.

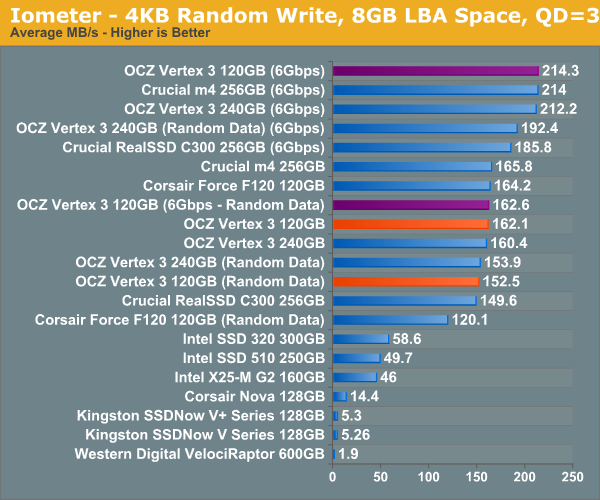

Our first test writes 4KB in a completely random pattern over an 8GB space of the drive to simulate the sort of random access that you'd see on an OS drive (even this is more stressful than a normal desktop user would see). I perform three concurrent IOs and run the test for 3 minutes. The results reported are in average MB/s over the entire time. We use both standard pseudo randomly generated data for each write as well as fully random data to show you both the maximum and minimum performance offered by SandForce based drives in these tests. The average performance of SF drives will likely be somewhere in between the two values for each drive you see in the graphs. For an understanding of why this matters, read our original SandForce article.
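To make the compressible versus incompressible distinction concrete, here is a minimal Python sketch rather than the actual Iometer setup. It uses zlib purely as a stand-in for a compressing controller (it is not SandForce's algorithm), a repeating byte pattern as an assumed example of easily compressed data, and hypothetical helper names. It also shows one way 4KB-aligned random offsets could be picked within an 8GB span, matching the test parameters described above.

```python
import os
import random
import zlib

BLOCK_SIZE = 4 * 1024       # 4KB transfers, as in the random write test
SPAN_BYTES = 8 * 1024**3    # 8GB test region

def random_offsets(count, seed=0):
    """Pick `count` 4KB-aligned byte offsets uniformly within the 8GB span."""
    rng = random.Random(seed)
    blocks_in_span = SPAN_BYTES // BLOCK_SIZE
    return [rng.randrange(blocks_in_span) * BLOCK_SIZE for _ in range(count)]

def compressed_fraction(payload):
    """Compressed size divided by original size (lower means more compressible)."""
    return len(zlib.compress(payload)) / len(payload)

# Assumed payloads for illustration only: a repeating pattern stands in for
# highly compressible data, os.urandom for fully random (incompressible) data.
compressible = (b"AnandTech" * (BLOCK_SIZE // 9 + 1))[:BLOCK_SIZE]
incompressible = os.urandom(BLOCK_SIZE)

if __name__ == "__main__":
    print("sample offsets:", random_offsets(3))
    print(f"repeating pattern compresses to {compressed_fraction(compressible):.2%} of its size")
    print(f"random bytes compress to {compressed_fraction(incompressible):.2%} of their size")
```

A controller that compresses data before writing has far less to write in the first case than in the second, which is why the two data patterns bracket the drive's best and worst case.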

Peak performance on the 120GB Vertex 3 is just as impressive as the 240GB pre-production sample as well as the m4 we just tested. Write incompressible data and you'll see the downside to having fewer active die: the 120GB drive now delivers 84% of the performance of the 240GB drive. In 3Gbps mode the 240 and 120GB drives are identical.

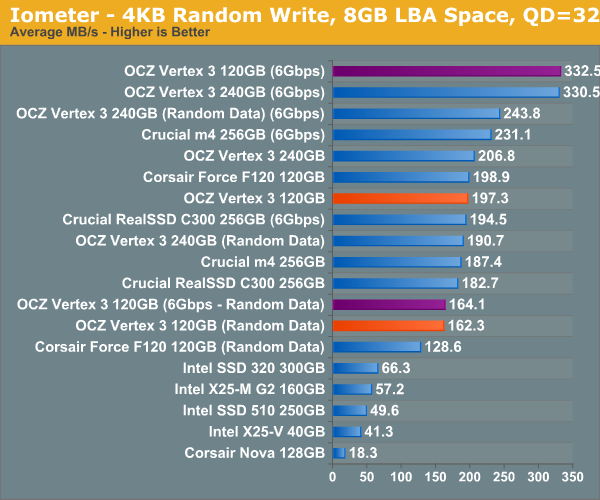

Many of you have asked for random write performance at higher queue depths. What I have below is our 4KB random write test performed at a queue depth of 32 instead of 3. While the vast majority of desktop usage models experience queue depths of 0 - 5, higher depths are possible in heavy I/O (and multi-user) workloads:

At high queue depths the gap between the 120 and 240GB Vertex 3s grows a little bit when we're looking at incompressible data.

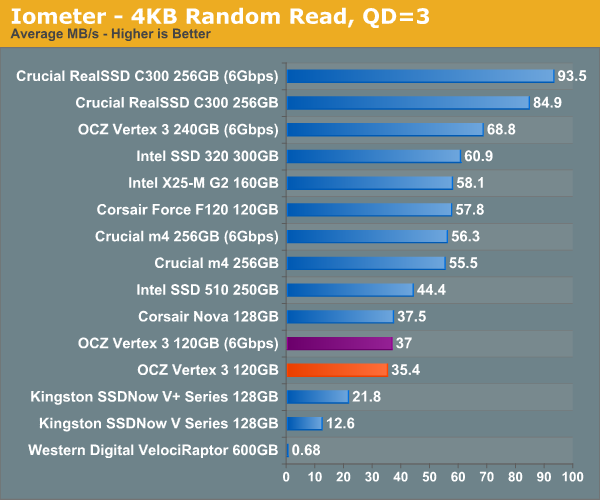

Random read performance is what suffered the most with the transition from 240GB to 120GB. The 120GB Vertex 3 is slower than the 120GB Corsair Force F120 (SF-1200, similar to the Vertex 2) in our random read test. The Vertex 3 is actually about the same speed as the old Indilinx based Nova V128 here. I'm curious to see how this plays out in our real world tests.

Sequential Read/Write Speed

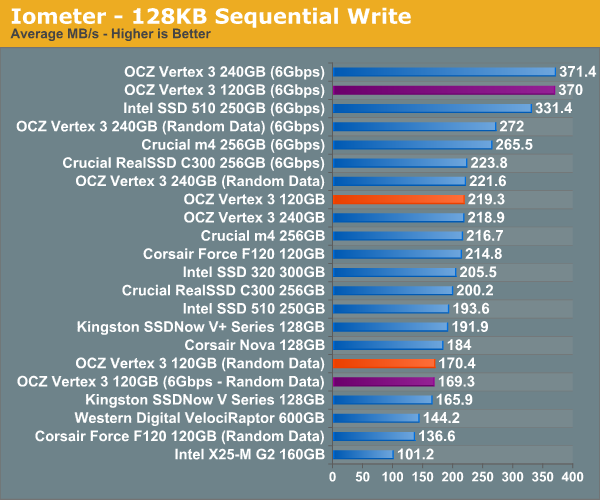

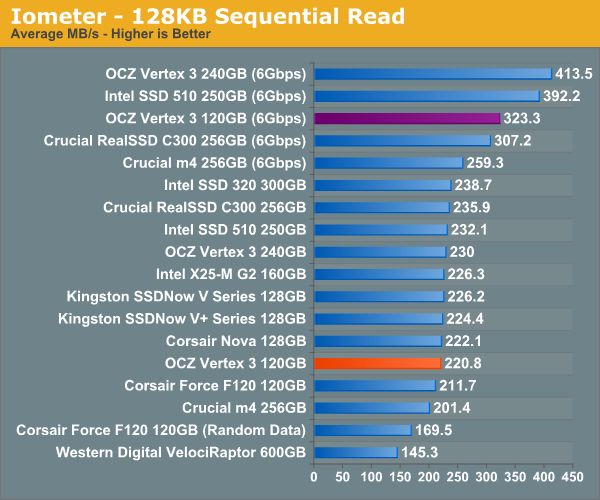

To measure sequential performance I ran a 1 minute long 128KB sequential test over the entire span of the drive at a queue depth of 1. The results reported are in average MB/s over the entire test length.
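As a rough illustration of how an average MB/s figure falls out of a fixed-length run, here is a small Python sketch. The function name and the sample transfer count are hypothetical, not measured results, and the decimal megabyte (1,000,000 bytes) is an assumption about the unit convention; pass a different `bytes_per_mb` if binary units are wanted.

```python
TRANSFER_SIZE = 128 * 1024  # 128KB sequential transfers, as in the test above

def average_mb_per_s(transfers_completed, elapsed_seconds,
                     transfer_size=TRANSFER_SIZE, bytes_per_mb=1_000_000):
    """Average throughput over the whole run: total bytes moved / time / MB size."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    total_bytes = transfers_completed * transfer_size
    return total_bytes / elapsed_seconds / bytes_per_mb

if __name__ == "__main__":
    # Made-up example: 100,000 transfers completed during a 60 second run.
    print(f"{average_mb_per_s(100_000, 60):.2f} MB/s")
```

Because the figure is averaged over the entire run, short bursts or stalls are smoothed out rather than reported separately.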

Highly compressible sequential write speed is identical to the 240GB drive, but use incompressible data and the picture changes dramatically. The 120GB has far fewer NAND die to write to in parallel and in this case manages 76% of the performance of the 240GB drive.

Sequential read speed is also lower than the 240GB drive. Compared to the SF-1200 drives there's still a big improvement as long as you've got a 6Gbps controller.

153 Comments


jjj - Wednesday, April 6, 2011 - link

any chance of a comparison soon for the new gen SSDs running on p67 vs the non native sata 3 controllers out there (the marvell controller on many 1366 and 1155 boards or/and some cheap PCIe sata3 cards) and maybe an AMD system too?

A5 - Wednesday, April 6, 2011 - link

I think they did a comparison in the P67 article. The P67 controller is the fastest, followed by AMD (it's within a few %), and then the 3rd party controllers are a good bit slower.

Movieman420 - Wednesday, April 6, 2011 - link

What more can I say? I've been chomping at the bit over this issue ever since SR broke the story. As a long time OCZ customer (ok...fanboy..lol) I couldn't believe OCZ was behaving like that. The max speed rating using the fastest test available is excusable...like you said, if OCZ had gone the altruistic route then the competition would have taken full advantage in about 1 millisecond. After finding out about the inevitable switch to 25nm I quickly ordered another drive for my existing array from a lesser known vendor that I hoped was still selling older stock. I received the drive and to my dismay it was a 25nm/64Gb piece. Adding this drive to my existing array of 34nm/32Gb drives would have a definite negative effect. Which brings me to my point.

"After a dose of public retribution OCZ agreed to allow end users to swap 25nm Vertex 2s for 34nm drives, they would simply have to pay the difference in cost. OCZ realized that was yet another mistake and eventually allowed the swap for free."

This is only partially true. Replacements were offered based on drives that formatted below IDEMA capacity. If your drive formatted to the correct size, you were not eligible to swap. The only problem is that the 64Gb dies were also used in Vertex 2/Agility 2 drives that feature 28 percent over-provisioning (i.e. 50, 100, 200GB models). In this case the decreased capacity was 'hidden' for lack of a better term. This is where I locked horns with them. The exchange was only offered for the 60'E' and 120'E' drives even tho many others suffered the same performance issue for the same reason. I had to raise a bit of hell before they agreed to replace my 25nm/64Gb 'non-E' drive with a 34nm replacement. At first they would only swap for another 25nm drive and I stated that my issue was with performance NOT die size. They ended up replacing my drive with a 34nm model only because it would have put a hurting on my existing raid array of 34nm drives...they made it clear that this was an exception since I had a raid array that would be negatively affected. So anyone who bought a 28 percent OP drive with 64Gb nand chips was DENIED any sort of exchange unless a raid array was involved. As far as I know, that policy still stands unless Ryan or Alex decides to make good on the exchange for 28 percent OP, non 'E' 64Gb die drives which are internally identical to the 'E' drives just with a different amount of OP set by the firmware. While I may have been 'lucky' if you will because I had an array involved, there are people out there that purchased a high OP model which if anything should be a slightly better performer and instead it's the complete opposite. Charge a premium for the more expensive NAND? Absolutely! Just don't offer a half hearted exchange that doesn't cover all models affected...and not just for the ones whose OP doesn't hide the issue.

CloudFire - Wednesday, April 6, 2011 - link

thanks anand! really glad you put some pressure on OCZ. I hope other companies will follow suit as well. Here's to hoping you'd continue to do the right thing for us consumers in the future! :D

Dennis.Huang - Wednesday, April 6, 2011 - link

Thank you for the review and for your actions on behalf of customers. This was a great review for me as a new person to SSDs. Do you have any thoughts on the performance of the 480GB version of the Vertex 3 and/or do you plan to do a review on that version too?

kensiko - Thursday, April 7, 2011 - link

I saw some numbers on the OCZ forum, I think they came from Ryder, for the 480GB and it performs even better than the 240.

kensiko - Thursday, April 7, 2011 - link

Here:

IO METER (QD=1) 2008 on P67 SATAIII

| Test | 120GB | 240GB | 480GB |
|---|---|---|---|
| 4KB Random READ | 16.31 | 15.58 | 17.77 |
| 4KB Random WRITE | 14.45 | 14.97 | 15.99 |
| 128KB Seq. READ | 190.23 | 255.17 | 355.89 |
| 128KB Seq. WRITE | 345.21 | 342.99 | 313.98 |

bennymankin - Wednesday, April 6, 2011 - link

Please include Vertex 2 120GB, as it is probably one of the most popular drives out there.

Thank you.

kensiko - Thursday, April 7, 2011 - link

The F120 does it, but true it's not the 25nm

Shark321 - Friday, April 8, 2011 - link

I concur. Vertex 2 120 GB should be compared to Vertex 3 120GB. I suspect the differences will be minimal on SATA II. It's basically the same product, with slight controller and firmware changes.