The OCZ Vertex 3 Review (120GB)

by Anand Lal Shimpi on April 6, 2011 6:32 PM EST

TRIM Performance

In our Vertex 3 preview I mentioned a bug/performance condition/funnythingthathappens with SF-1200 based drives. If you write incompressible data to all LBAs on the drive (e.g. fill the drive up with H.264 videos) and then fill the spare area with incompressible data (do it again without TRIMing the drive), you'll actually put your SF-1200 based SSD into a performance condition that it can't TRIM its way out of. Completely TRIM the drive and you'll notice that while compressible writes are nice and speedy, incompressible writes happen at a max of 70 - 80MB/s. In our Vertex 3 Pro preview I mentioned that it seemed as if SandForce had nearly fixed the issue. The worst performance I ever recorded on the 240GB drive after my aforementioned fill procedure was 198MB/s, a pretty healthy level.

The 120GB drive doesn't mask the drop nearly as well. The same process I described above drops performance to the 100 - 130MB/s range. This is better than what we saw with the Vertex 2, but still a valid concern if you plan on storing/manipulating a lot of highly compressed data (e.g. H.264 video) on your SSD.
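SandForce controllers compress data on the fly before it hits NAND, which is why compressible writes stay fast while already-compressed content does not. The sketch below is only an illustration: it uses Python's standard `zlib` as a stand-in (SandForce's actual algorithm is proprietary and not described here) and treats `os.urandom` output as a proxy for H.264 video or JPEGs, which likewise leave almost no redundancy to remove.

```python
import os
import zlib


def compression_ratio(data: bytes) -> float:
    """Compressed size / original size, using zlib as a stand-in compressor."""
    return len(zlib.compress(data, 6)) / len(data)


size = 1 << 20  # 1 MiB sample of each kind of data

# Highly repetitive, text-like data: easy to compress.
phrase = b"The quick brown fox jumps over the lazy dog. "
compressible = (phrase * (size // len(phrase) + 1))[:size]

# Random bytes behave like already-compressed media: nothing left to squeeze.
incompressible = os.urandom(size)

print(f"text-like data: {compression_ratio(compressible):.3f}")
print(f"random data:    {compression_ratio(incompressible):.3f}")
```

The random sample comes out at roughly 1.0 (or slightly above, due to container overhead), meaning the controller has to write every byte to flash, while the repetitive sample shrinks to a tiny fraction of its size.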

The other major change since the preview? The 120GB drive can definitely get into a pretty fragmented state (again only if you pepper it with incompressible data). I filled the drive with incompressible data, ran a 4KB (100% LBA space, QD32) random write test with incompressible data for 20 minutes, and then ran AS-SSD (another incompressible data test) to see how low performance could get:
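The access pattern of the torture step (4KB random writes across 100% of the LBA space, with incompressible payloads) can be sketched as a generator of offset/payload pairs. This is a hypothetical helper, not the tool used in the review: it only builds the pattern, issues no I/O, and does not model the queue depth of 32 (which would mean 32 such requests outstanding at once).

```python
import os
import random

SECTOR = 4096  # 4KB transfer size, matching the torture test


def random_write_pattern(span_bytes: int, count: int, seed: int = 0):
    """Yield (offset, payload) pairs for 4KB-aligned random writes over the full span.

    Hypothetical illustration only: no device is opened and no queue depth is modeled.
    """
    rng = random.Random(seed)
    slots = span_bytes // SECTOR  # number of 4KB-aligned slots covering the whole span
    for _ in range(count):
        offset = rng.randrange(slots) * SECTOR  # any aligned LBA, uniformly at random
        yield offset, os.urandom(SECTOR)  # incompressible payload


# Example: a few requests over a 120GB (decimal) address space.
for off, buf in random_write_pattern(120 * 10**9, 3):
    print(off, len(buf))
```

Because every write lands at a random aligned location and carries random data, the controller can neither compress the payload nor keep related data together, which is what drives the drive into the fragmented state measured below.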

OCZ Vertex 3 120GB - Resiliency - AS SSD Sequential Write Speed - 6Gbps

| Drive | Clean | After Torture | After TRIM |
|---|---|---|---|
| OCZ Vertex 3 120GB | 162.1 MB/s | 38.3 MB/s | 101.5 MB/s |

Note that the Vertex 3 does recover pretty well after you write to it sequentially. A second AS-SSD pass shot performance up to 132MB/s. As I mentioned above, after TRIMing the whole drive I saw performance in the 100 - 130MB/s range.
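To put the resiliency numbers in perspective, the short calculation below expresses each measurement from the table and the paragraph above as a fraction of the clean-drive result. The function and variable names are illustrative only.

```python
CLEAN_MBPS = 162.1  # AS-SSD sequential write, clean drive (from the table)


def fraction_of_clean(mbps: float, clean: float = CLEAN_MBPS) -> float:
    """Return a measured write speed as a fraction of clean-drive performance."""
    return mbps / clean


measurements = {
    "After Torture": 38.3,
    "After TRIM": 101.5,
    "Second AS-SSD pass": 132.0,
}

for label, mbps in measurements.items():
    print(f"{label}: {fraction_of_clean(mbps):.1%} of clean")
```

This prints 23.6%, 62.6% and 81.4% of clean performance respectively, so even after a full TRIM the drive only gets back to a bit under two thirds of its fresh sequential write speed with incompressible data.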

This is truly the worst case scenario for any SF based drive. Unless you deal in a lot of truly random data or plan on storing/manipulating a lot of highly compressed files (e.g. compressed JPEGs, H.264 videos, etc.), I wouldn't be too concerned about this worst-case scenario performance. What does bother me, however, is how much lower the 120GB drive's worst case is vs. the 240GB's.

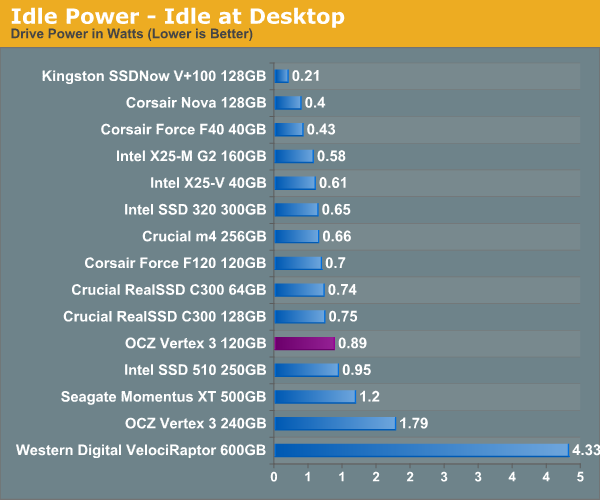

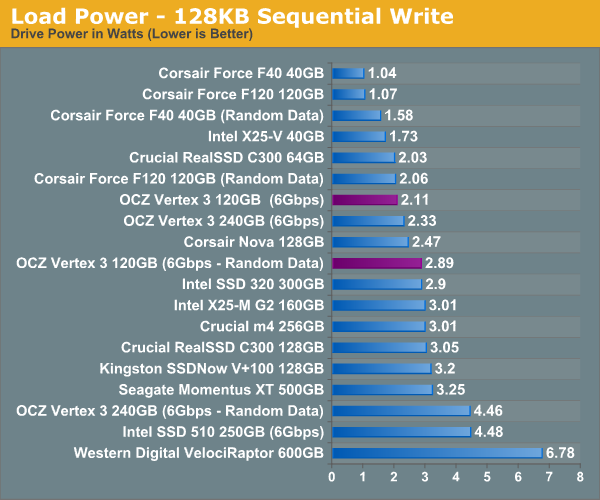

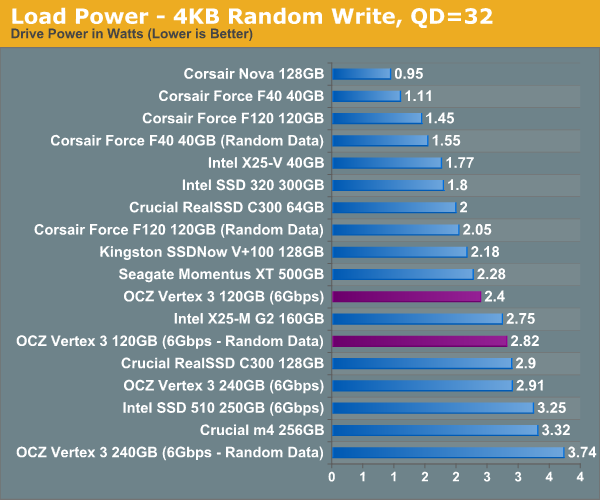

Power Consumption

Unusually high idle power consumption was a bug in the early Vertex 3 firmware; that seems to have been fixed with the latest firmware revision. Overall power consumption seems pretty good for the 120GB drive: it's in line with other current generation SSDs we've seen, although we admittedly haven't tested many similar capacity drives this year yet.

153 Comments


dagamer34 - Wednesday, April 6, 2011

Any idea when these are going to ship out into the wild? I've got a 120GB Vertex 2 in my 2011 MacBook Pro that I'd love to stick into my Windows 7 HTPC so it's more responsive.

Ethaniel - Wednesday, April 6, 2011

I just love how Anand puts OCZ on the grill here. It seems they'll just have to step it up. I was expecting some huge numbers coming from the Vertex 3. So far, meh.

softdrinkviking - Wednesday, April 6, 2011

"OCZ insists that there's no difference between the Spectek stuff and standard Micron 25nm NAND"

Except for the fact that Spectek is 34nm, I am assuming?

There surely must be some significant difference in performance between 25 and 34, right?

softdrinkviking - Wednesday, April 6, 2011

Sorry, I think that wasn't clear. What I mean is that it seems like you are saying the difference in process nodes is purely related to capacity, but isn't there some performance advantage to going lower as well?

softdrinkviking - Wednesday, April 6, 2011

Okay, forget it. I looked back through and found the part where you write about the 25nm being slower. That's weird and backwards. I wonder why it gets slower as it gets smaller, when CPUs are supposedly going to get faster as the process gets smaller?

Are there any semiconductor engineers reading this article who know?

Are the fabs making some obvious choice that trades performance at a reduced node for cost benefits, in an attempt to increase die capacities and lower end-user costs?

lunan - Thursday, April 7, 2011

I think it's because the chips get larger but the IO interface to the controller remains the same (the inner RAID). Instead of addressing 4GB of NAND, one block may now consist of 8GB or 16GB of NAND. In the case of 8 interfaces:

4x8GB = 32GB NAND, but 8x8GB = 64GB NAND and 8x16GB = 128GB NAND

The smaller the shrink, the bigger the NAND, but I think they still have 8 IO interfaces to the controller, hence the time taken also increases with every shrink.

CPUs and GPUs are quite different because they implement different IO controllers. The base architecture actually changes to accommodate a process shrink.

They should change the base architecture with every NAND shrink if they wish to achieve the same throughput, or add a second controller....

I think.... I may not be right >_<

lunan - Thursday, April 7, 2011

For example, the Vertex 3 has 8GB NAND with 16 (8 front and 8 back) connections to the controller. Now imagine if the NAND is 16GB or 32GB and there are still only 16 interfaces with 1 controller? Maybe the CPU approach can be applied to this problem: if you wish to double performance and storage, you go dual core (one CPU core beside the other)....

Again... maybe....

softdrinkviking - Friday, April 8, 2011

Thanks for your reply. When I read it, I didn't realize that those figures were referring to the capacity of the die. As soon as I re-read it, I also had the same reaction about redesigning the controller; it seems the obvious thing to do,

so I can't believe that the controller manufacturers haven't thought of it.

There must be something holding them back, probably $$.

The major SSD players all appear to be trying to pull down the costs of drives to encourage widespread adoption.

Perhaps this is being done at the expense of obvious performance increases?

Ammaross - Thursday, April 7, 2011

I think if you re-reread (yes, twice), you'll note that with the die shrink, the block size was upped from 4K to 8K. This is twice the space to be programmed or erased per write. This is where the speed performance disappears, regardless of the number of dies in the drive.

Anand Lal Shimpi - Wednesday, April 6, 2011

Sorry, I meant Micron 34nm NAND. Corrected :)

Take care,

Anand