OCZ Vertex 3 Preview: Faster and Cheaper than the Vertex 3 Pro

by Anand Lal Shimpi on February 24, 2011 9:02 AM EST

AnandTech Storage Bench 2011: Much Heavier

I didn't expect to have to debut this so soon, but I've been working on updated benchmarks for 2011. Last year we introduced our AnandTech Storage Bench, a suite of benchmarks that took traces of real OS/application usage and played them back in a repeatable manner. I assembled the traces myself out of frustration with the majority of what we have today in terms of SSD benchmarks.

Although the AnandTech Storage Bench tests did a good job of characterizing SSD performance, they weren't stressful enough. All of the tests performed less than 10GB of reads/writes and typically involved only 4GB of writes specifically. That's not even enough to exceed the spare area on most SSDs. Most canned SSD benchmarks don't even come close to writing a single gigabyte of data, but that doesn't mean that simply writing 4GB is acceptable.

Originally I kept the benchmarks short enough that they wouldn't be a burden to run (~30 minutes) but long enough that they were representative of what a power user might do with their system.

Not too long ago I tweeted that I had created what I referred to as the Mother of All SSD Benchmarks (MOASB). Rather than only writing 4GB of data to the drive, this benchmark writes 106.32GB. It's the load you'd put on a drive after nearly two weeks of constant usage. And it takes a *long* time to run.

I'll be sharing the full details of the benchmark in some upcoming SSD articles (again, I wasn't expecting to have to introduce this today, so I'm a bit ill-prepared), but here are some details:

1) The MOASB, officially called AnandTech Storage Bench 2011 - Heavy Workload, mainly focuses on the times when your I/O activity is the highest. There is a lot of downloading and application installing that happens during the course of this test. My thinking was that it's during application installs, file copies, downloading and multitasking with all of this that you can really notice performance differences between drives.

2) I tried to cover as many bases as possible with the software I incorporated into this test. There's a lot of photo editing in Photoshop, HTML editing in Dreamweaver, web browsing, game playing/level loading (Starcraft II & WoW are both a part of the test) as well as general use stuff (application installing, virus scanning). I included a large amount of email downloading, document creation and editing as well. To top it all off I even use Visual Studio 2008 to build Chromium during the test.

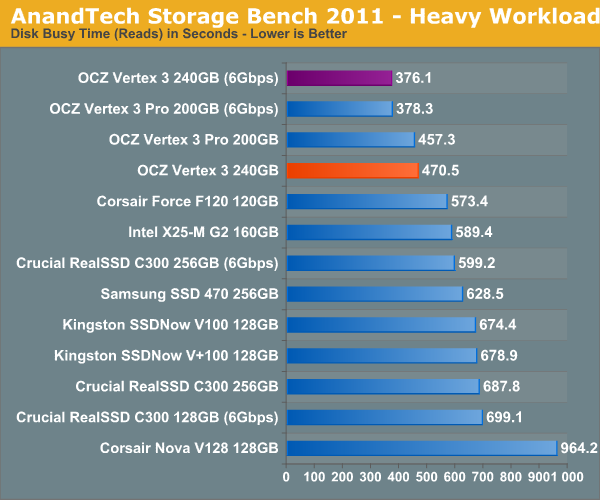

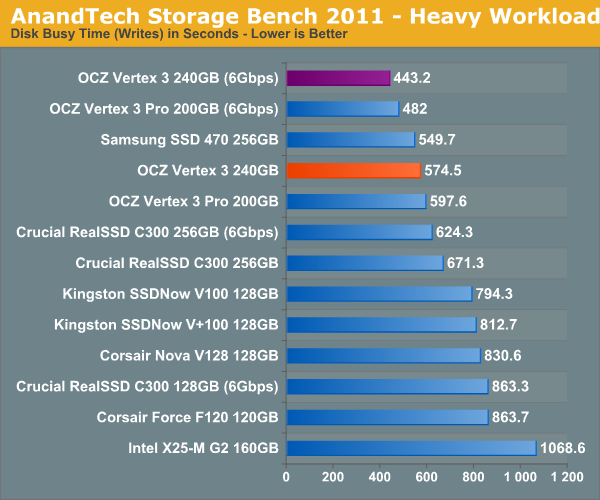

Many of you have asked for a better way to really characterize performance. Simply looking at IOPS doesn't really say much. As a result I'm going to be presenting Storage Bench 2011 data in a slightly different way. We'll have performance represented as Average MB/s, with higher numbers being better. At the same time I'll be reporting how long the SSD was busy while running this test. These disk busy graphs will show you exactly how much time was shaved off by using a faster drive vs. a slower one during the course of this test. Finally, I will also break out performance into reads, writes and combined. The reason I do this is to help balance out the fact that this test is unusually write intensive, which can often hide the benefits of a drive with good read performance.
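As a rough illustration of how these numbers relate, the sketch below computes disk busy time and average MB/s from a list of I/O records, broken out into reads, writes and combined. The `IO` record layout and the function names are hypothetical, and dividing bytes transferred by busy time is an assumption about how the average is taken; the actual Storage Bench 2011 tooling isn't described here.

```python
from dataclasses import dataclass


@dataclass
class IO:
    """One traced request (hypothetical layout, for illustration only)."""
    start_s: float   # time the request was issued, in seconds
    end_s: float     # time the request completed, in seconds
    nbytes: int      # bytes transferred
    is_write: bool   # True for writes, False for reads


def busy_time(ios):
    """Seconds during which at least one request was outstanding.

    Overlapping requests are merged so queued I/O isn't double counted,
    and idle gaps between requests are excluded.
    """
    total = 0.0
    cur_start = cur_end = None
    for io in sorted(ios, key=lambda r: r.start_s):
        if cur_end is None or io.start_s > cur_end:
            # Gap before this request: close out the previous busy interval.
            if cur_end is not None:
                total += cur_end - cur_start
            cur_start, cur_end = io.start_s, io.end_s
        else:
            # Overlaps the current busy interval: extend it if needed.
            cur_end = max(cur_end, io.end_s)
    if cur_end is not None:
        total += cur_end - cur_start
    return total


def average_mb_per_s(ios):
    """Bytes moved divided by busy time (assumed definition), in MB/s."""
    busy = busy_time(ios)
    if busy == 0:
        return 0.0
    return sum(io.nbytes for io in ios) / 1e6 / busy


def summarize(ios):
    """Report busy time and average data rate for reads, writes and combined."""
    groups = {
        "reads": [io for io in ios if not io.is_write],
        "writes": [io for io in ios if io.is_write],
        "combined": list(ios),
    }
    return {
        name: {"busy_s": busy_time(g), "avg_mb_s": average_mb_per_s(g)}
        for name, g in groups.items()
    }
```

Because idle time is dropped, a drive that finishes the same trace with less busy time shows up directly as a higher average data rate under this definition.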

There's also a new light workload for 2011. This is a far more reasonable, typical every day use case benchmark. Lots of web browsing, photo editing (but with a greater focus on photo consumption), video playback as well as some application installs and gaming. This test isn't nearly as write intensive as the MOASB but it's still multiple times more write intensive than what we were running last year.

As always I don't believe that these two benchmarks alone are enough to characterize the performance of a drive, but hopefully along with the rest of our tests they will help provide a better idea.

The testbed for Storage Bench 2011 has changed as well. We're now using a Sandy Bridge platform with full 6Gbps support for these tests. All of the older tests are still run on our X58 platform.

AnandTech Storage Bench 2011 - Heavy Workload

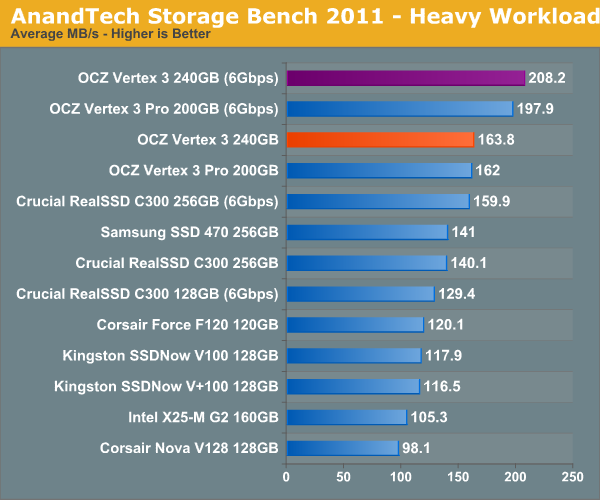

We'll start out by looking at average data rate throughout our new heavy workload test:

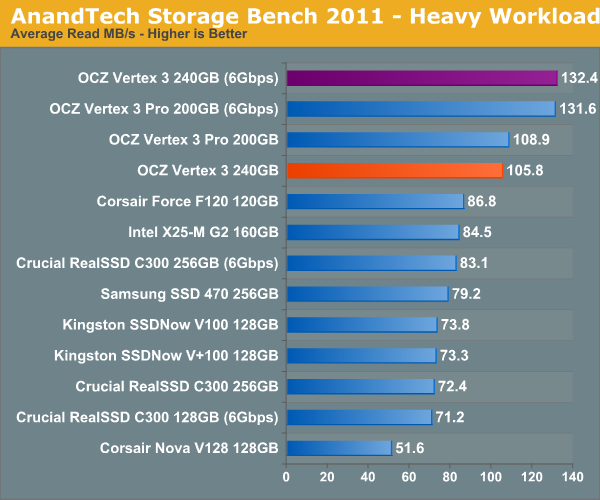

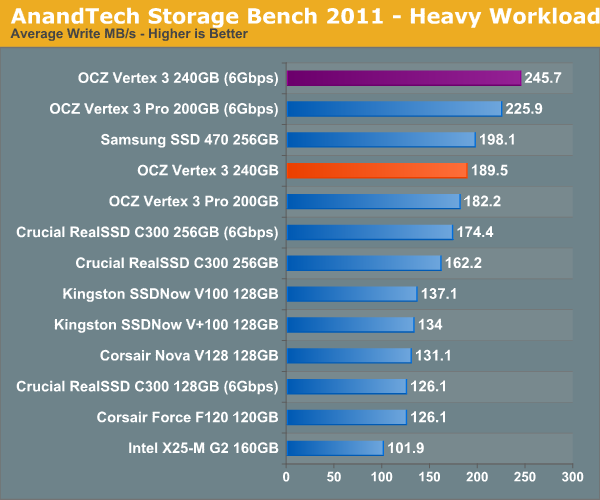

If we break out our performance results into average read and write speed we get a better idea for the Vertex 3's strengths:

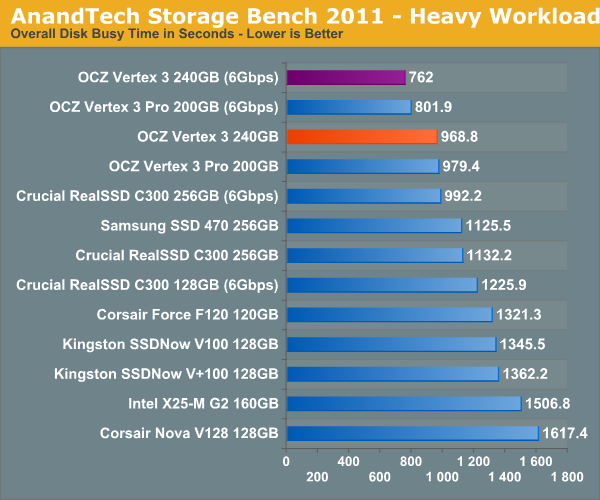

The next three charts just represent the same data, but in a different manner. Instead of looking at average data rate, we're looking at how long the disk was busy during this entire test. Note that disk busy time excludes any and all idles; this is just how long the SSD was busy doing something:

85 Comments

Mr Perfect - Thursday, February 24, 2011 - link

I hate to bring this up here, but this should be addressed and you seem to have a direct line to the OCZ CEO (albeit in sticky notes). Can AT look into the fiasco where OCZ shipped 8 channel 25nm Vertex 2 drives bearing the exact same name and model number as the original 16 channel 34nm drives, and then when customers noticed the difference decided to charge people an upgrade fee to get a 16 channel drive? True, OCZ later relented after a negative backlash and gave people free exchanges for a 16 channel drive, but I can't think of a faster way to burn through customer goodwill than a bait and switch stunt like that. What happened?

http://www.storagereview.com/ssds_shifting_25nm_na...

FunBunny2 - Thursday, February 24, 2011 - link

Yeah. AT *seems* to be easier on OCZ than storagereview. I can't speak for their quality overall as I've only read up on what they had to say about this OCZ Vertex nonsense. I'm not going to abandon AT. Yet, anyway.

semo - Thursday, February 24, 2011 - link

It wasn't always the case. When Anand exposed jmicron's awfulness to the world, OCZ's CEO wasn't happy at all (the surprising thing is Anand actually mentioning this back then).

The two things that surprise me the most are:

1. Why didn't Anandtech as a whole report on 25nm drives? AFAIK OCZ were the first with commercial 25nm drives. Now if a commercial x86 CPU was about to be released in the next 6 months, AT would be all over that story...

2. A lot of time has passed, and there is still no coverage of OCZ's dishonest practices. If a decent site (like AT) had covered these drives early enough, far fewer people would have fallen into OCZ's trap.

Anand did mention in his previous article that he would look into the issue:

I've seen the discussion and based on what I've seen it sounds like very poor decision making on OCZ's behalf. Unfortunately my 25nm drive didn't arrive before I left for MWC. I hope to have it by the time I get back next week and I'll run through the gamut of tests, updating as necessary. I also plan on speaking with OCZ about this. Let me get back to the office and I'll begin working on it.

As far as old Vertex 2 numbers go, I didn't actually use a Vertex 2 here (I don't believe any older numbers snuck in here). The Corsair Force F120 is the SF-1200 representative of choice in this test.

Take care,

Anand

http://www.anandtech.com/show/4159/ocz-vertex-3-pr...

semo - Thursday, February 24, 2011 - link

"Now if a commercial x86 CPU" should have been "Now if a commercial 14nm x86 CPU". Usually new tech in the CPU, GPU and now SoC segments gets covered quite early and thoroughly. Somehow the 25nm Vertex 2 flew under the radar it seems...

Anand Lal Shimpi - Thursday, February 24, 2011 - link

I've been working with OCZ behind the scenes on this. I've been tied up with the reviews you've seen this week (as well as some stuff coming next week) and haven't been able to snag a few 25nm drives for benchmarking. Needless to say I will make sure that the situation is rectified. I've already been speaking with OCZ's CEO on it for the past week :)

Take care,

Anand

lyeoh - Thursday, February 24, 2011 - link

Hi Anand,

Is it possible to do average and max latency measurements on the drive access (read/write) while under various loads (sequential, random, low queue depth, high queue depth)?

I'm thinking that for desktop use once a drive gets really fast, the max latency (and how often it occurs) would affect the user experience more.

A drive with 0.01 millisecond access but 500ms spikes every 5 secs under load might provide a worse user experience than a drive with a constant 0.1 millisecond access even though the former averages at about 80000 accesses per second while the latter only achieves 10000. Of course it does depend on what is delayed for 500ms. If it's just a bulk sequential transfer it might not matter, but if it delays the opening of a directory or small file it might matter.

This might also be important for some server use.
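The arithmetic behind that comparison can be sketched quickly. This assumes the drive services one request at a time and that the 500ms stall falls inside each 5 second window; the function name and parameters are made up for illustration.

```python
def effective_rate(access_ms, stall_ms=0.0, period_s=None):
    """Average accesses per second for a drive that serves one request
    at a time and, optionally, stalls for stall_ms once every period_s.

    Hypothetical model for illustration; real drives overlap requests.
    """
    per_second = 1000.0 / access_ms          # rate while not stalled
    if period_s is None or stall_ms == 0:
        return per_second
    working_s = period_s - stall_ms / 1000.0  # useful time per window
    return per_second * working_s / period_s


# Drive with 0.01ms access but a 500ms spike every 5 seconds.
spiky = effective_rate(0.01, stall_ms=500, period_s=5)

# Drive with a constant 0.1ms access and no spikes.
steady = effective_rate(0.1)

print(f"spiky: {spiky:,.0f}/s, steady: {steady:,.0f}/s")
```

Under those assumptions the spiky drive works out to roughly 90,000 accesses per second against 10,000 for the steady one, so the point stands: the average looks far better even though the worst case is 5,000 times slower.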

jimhsu - Thursday, February 24, 2011 - link

This is needed -- however there are a lot of technical problems when trying to assess max latency. One of those is reproducibility -- if the drive has a latency of 0.01ms, but a max of 250ms, how reliable is that data point? What if something just happens to be writing to the drive while you make that measurement? (This can easily be seen by trying to do 4K reads when doing a sequential write larger than the DRAM cache of the SSD, such as the Intel G2 drives). From my personal observations, website to website reproducibility of maximum latencies, as well as minimum frame rates in reviews, is extremely poor.

Which is unfortunate, because they can impact user experience so heavily. Aside from strict laboratory-controlled conditions and testing with specialized equipment, I find it hard to conjecture how to do this.

semo - Thursday, February 24, 2011 - link

"haven't been able to snag a few 25nm drives"

So OCZ are sending you Vertex drives for testing so early that they don't even have housing and you're struggling to get a hold of drives that have been sold to consumers for the past month or two?

How come you have V3 drives months before their release date and AT does not yet even have a news article on the 25nm V2s?

I'm sorry Anand but this will be the 1st SSD article you've written that I will not be reading. This is in protest to OCZ's handling of this CONSUMER RIGHTS issue. I'm not protesting against AT but OCZ's propaganda which unfortunately is channeled through my No 1 favourite site.

Ryan Petersen, you know where to stick it.

seapeople - Thursday, February 24, 2011 - link

Yeah, I can't imagine why OCZ isn't knocking Anand's door down with review samples of 25nm SSD drives after that big customer snafu with their 25nm drives. I mean, they should be eager to have all the publicity on that issue as possible, right?

And then why on earth would they rather send their newest, fastest SSD drives to a review site right now? They must know that Anand is probably busy reviewing the other SSD drives that are about to come out, so why would they bother him with their new stuff?

beginner99 - Thursday, February 24, 2011 - link

That's why I would just go with Intel. You know what you pay for. No custom firmwares and other funky stuff.