OCZ Vertex 3 Pro Preview: The First SF-2500 SSD

by Anand Lal Shimpi on February 17, 2011 3:01 AM EST

Sequential Read/Write Speed

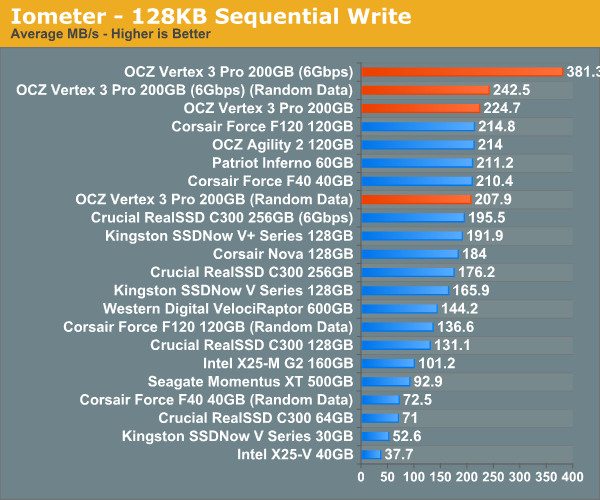

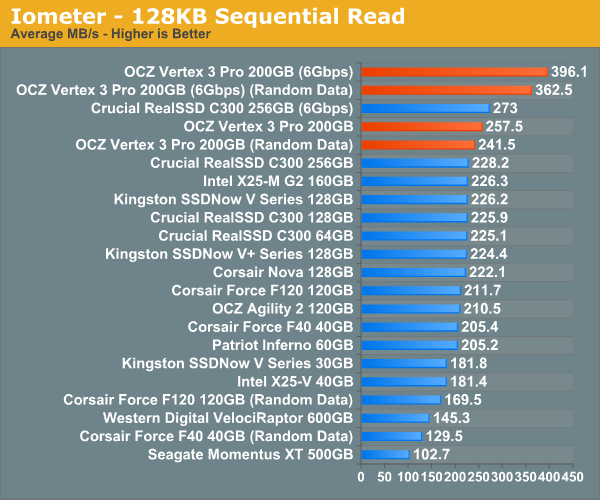

To measure sequential performance I ran a 3-minute, 128KB sequential test over the entire span of the drive at a queue depth of 1. Results are reported as average MB/s over the entire test.
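The test described above boils down to issuing one 128KB write at a time (queue depth 1), sweeping sequentially across the drive, and dividing the bytes written by the elapsed time. The function below, `sequential_write_mbps`, is a hypothetical, Unix-only sketch of that idea (it uses `os.pwrite`). It writes to a file rather than a raw device and does not bypass the OS page cache, so it is not the tool or configuration used for these results. It also assumes MB means 10^6 bytes.

```python
import os
import time

BLOCK_SIZE = 128 * 1024  # 128KB per transfer, as in the test described above


def sequential_write_mbps(path, span_bytes, duration_s, incompressible=True):
    """Hypothetical sketch: issue one 128KB write at a time (queue depth 1),
    sweep sequentially across span_bytes, wrap to the start, return avg MB/s."""
    if span_bytes < BLOCK_SIZE:
        raise ValueError("span must hold at least one 128KB block")
    if incompressible:
        # A small pool of distinct random blocks: incompressible data that is
        # also not one repeated pattern.
        pool = [os.urandom(BLOCK_SIZE) for _ in range(16)]
    else:
        pool = [bytes(BLOCK_SIZE)]  # all zeros: highly compressible
    fd = os.open(path, os.O_WRONLY | os.O_CREAT, 0o644)
    written = 0
    offset = 0
    try:
        start = time.perf_counter()
        deadline = start + duration_s
        while time.perf_counter() < deadline:
            if offset + BLOCK_SIZE > span_bytes:
                offset = 0  # wrap around to the beginning of the span
            block = pool[(written // BLOCK_SIZE) % len(pool)]
            os.pwrite(fd, block, offset)  # synchronous call: one I/O in flight
            offset += BLOCK_SIZE
            written += BLOCK_SIZE
        os.fsync(fd)  # include data still buffered by the OS in the timing
        elapsed = time.perf_counter() - start
    finally:
        os.close(fd)
    return written / elapsed / 1e6  # average throughput in decimal MB/s
```

For a run shaped like the one in the text you would pass the device's full capacity as `span_bytes` and `duration_s=180`. Dedicated benchmarking tools open the raw device and bypass caching, which this sketch deliberately does not attempt.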

This is pretty impressive. The new SF-2500 can write incompressible data sequentially at around the speed the SF-1200 could write highly compressible data. In other words, the Vertex 3 Pro at its slowest is as fast as the Vertex 2 is at its fastest. And that's just at 3Gbps.
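The gap between "compressible" and "incompressible" results exists because SandForce controllers compress data before writing it to NAND, so less compressible data means more NAND writes. SandForce's algorithm is proprietary. The sketch below substitutes zlib only to illustrate the difference between the two kinds of test data. The helper name `compression_ratio` is hypothetical.

```python
import os
import zlib

BLOCK_SIZE = 128 * 1024  # one 128KB transfer


def compression_ratio(data: bytes) -> float:
    """Original size divided by zlib-compressed size (higher = more compressible)."""
    return len(data) / len(zlib.compress(data))


compressible = bytes(BLOCK_SIZE)         # all zeros: a highly compressible pattern
incompressible = os.urandom(BLOCK_SIZE)  # random bytes, like compressed or encrypted data

print(f"zeros:  {compression_ratio(compressible):.1f}x")
print(f"random: {compression_ratio(incompressible):.3f}x")
```

The zero-filled block shrinks by orders of magnitude, while the random block does not shrink at all (zlib adds a few bytes of overhead). Real-world files fall somewhere in between. Already-compressed media and encrypted data behave like the random case.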

The Vertex 3 Pro really shines when paired with a 6Gbps controller. At low queue depths you're looking at 381MB/s writes, from a single drive, with highly compressible data. Write incompressible data and you've still got the fastest SSD on the planet.

Micron is aiming for 260MB/s writes for the C400, which is independent of data type. If Micron can manage 260MB/s in sequential writes that will only give it a minor advantage over the worst case performance of the Vertex 3 Pro, and put it at a significant disadvantage compared to OCZ's best case.

At first glance, SandForce appears to have significantly improved performance in the worst case of incompressible writes. While the old SF-1200 could deliver only 63% of its maximum performance when dealing with incompressible data, the SF-2500 holds on to 92% of it over a 3Gbps SATA interface. Remove the SATA bottleneck, however, and the gap returns to what we're used to: over 6Gbps SATA the SF-2500 manages 63% of its maximum performance when writing incompressible data.
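The percentages above are simply incompressible throughput divided by compressible throughput. The sketch below combines them with the figures quoted earlier to estimate the worst case at 6Gbps and compare it with Micron's C400 target. It assumes the 63% figure is measured against the 381MB/s low-queue-depth compressible result. The helper names are hypothetical.

```python
def retained_fraction(incompressible_mbps, compressible_mbps):
    """Share of best-case (compressible) throughput kept on incompressible data."""
    return incompressible_mbps / compressible_mbps


def implied_incompressible(compressible_mbps, fraction):
    """Worst-case throughput implied by a best case and a retained fraction."""
    return compressible_mbps * fraction


VERTEX3_COMPRESSIBLE_6G = 381.0  # MB/s, 6Gbps, compressible data (from the text)
SF2500_6G_FRACTION = 0.63        # retained over 6Gbps SATA (from the text)
C400_TARGET = 260.0              # MB/s, Micron's stated sequential write goal

worst = implied_incompressible(VERTEX3_COMPRESSIBLE_6G, SF2500_6G_FRACTION)
print(f"implied worst case: {worst:.0f} MB/s")                       # prints "implied worst case: 240 MB/s"
print(f"C400 vs worst case: {C400_TARGET / worst:.2f}x")             # prints "C400 vs worst case: 1.08x"
print(f"best case vs C400: {VERTEX3_COMPRESSIBLE_6G / C400_TARGET:.2f}x")  # prints "best case vs C400: 1.47x"
```

Under that assumption, the C400's 260MB/s would be only about 8% ahead of the Vertex 3 Pro's worst case, but nearly a third slower than its compressible-data result. That matches the "minor advantage" and "significant disadvantage" described earlier.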

Note that the peak 6Gbps sequential write figures jump up to around 500MB/s if you hit the drive with a heavier workload, which we'll see a bit later.

Sequential read performance continues to be dominated by OCZ and SandForce. Over a 3Gbps interface SandForce improved performance by 20-40%, but over a 6Gbps interface the jump is just huge. For incompressible data we're talking about nearly 400MB/s from a single drive. I don't believe you'd even be able to generate the workloads necessary to saturate a RAID-0 of two of these drives on a desktop system.

144 Comments


FCss - Thursday, February 17, 2011

"My personal desktop sees about 7GB of writes per day." Maybe a stupid question, but how do you check the amount of your daily writes? And one more question: if you have a 128GB SSD and you leave, let's say, 40GB unformatted so the user can't fill up the disk, will the controller use this space the same way as if it belonged to the spare area?

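One way to act on the SMART-counter suggestion in the replies is to take two readings of the drive's lifetime-writes counter (for instance from smartmontools' `smartctl -A` output) and divide the difference by the elapsed time. The sketch assumes you have already read the raw values. The function `gb_written_per_day`, the unit table, and the readings are all hypothetical. The attribute name and its scaling vary by vendor: some count 512-byte sectors, others whole GiB.

```python
from datetime import datetime

# Assumption: how the raw lifetime-writes counter is scaled differs by vendor;
# these two scalings are illustrative, not a complete list.
BYTES_PER_UNIT = {
    "lba512": 512,     # counter in 512-byte sectors
    "gib": 1024 ** 3,  # counter in whole GiB
}


def gb_written_per_day(raw_then, time_then, raw_now, time_now, unit="lba512"):
    """Average host writes in decimal GB/day between two SMART readings."""
    days = (time_now - time_then).total_seconds() / 86400
    if days <= 0:
        raise ValueError("second reading must be later than the first")
    delta_bytes = (raw_now - raw_then) * BYTES_PER_UNIT[unit]
    return delta_bytes / 1e9 / days


# Hypothetical readings taken 24 hours apart.
rate = gb_written_per_day(
    1_000_000_000, datetime(2011, 2, 16, 8, 0),
    1_013_671_875, datetime(2011, 2, 17, 8, 0),
)
print(f"{rate:.1f} GB/day")  # prints "7.0 GB/day"
```

Taking the lifetime average instead amounts to using a first reading of zero, timestamped when the drive went into service.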
Quindor - Thursday, February 17, 2011

I use a program called "HDDLED" for this. It shows some easily accessible LEDs on your screen, and if you hover over it, you can see the current and total disk usage since your PC was booted.

FCss - Thursday, February 17, 2011

Thanks, great software.

Breit - Thursday, February 17, 2011

Isn't the total number of bytes written to the drive since manufacture part of the SMART data you can read from your drive? All you have to do then is note down the value when you boot up your PC in the morning and subtract it from the value you read the next day.

Chloiber - Thursday, February 17, 2011

Or you can just take the average.

marraco - Thursday, February 17, 2011

The Vertex 2 takes advantage of unformatted space. OCZ advises leaving 20% of the space unformatted (the stated reason is better garbage collection, but it means the unformatted space does get used).

7Enigma - Thursday, February 17, 2011

Come on, Anand! In your example you have 185GB free on a 256GB drive. I think that is the least likely scenario, and it paints an overly optimistic picture of write life. Everyone knows not to completely fill up their drive, but are you telling me that the vast majority of users are going to have 78% of their drive free at all times? I just don't buy it.

The more common scenario is that a consumer purchases a drive slightly larger than needed (due to how expensive these luxuries still are). So that 256GB drive will probably have only 20-40GB free. Do that, and those 36 days for a single use of the NAND become ~5-8 days (there's no way to move static data around at this capacity level). Factor in write amplification (0.6X to 10X) and you lower the time to hit that 3000X cap to between 4 and 25 years.

Still not a HUGE problem, but much more relevant than saying this drive will last for hundreds of years (not counting NAND lifespan itself).
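The endurance arithmetic debated in this thread follows a simple model: days until the NAND reaches its P/E-cycle limit = (capacity being cycled × rated P/E cycles) ÷ (host writes per day × write amplification). The hypothetical helper `years_to_wear_out` below assumes wear is spread evenly over only the cycled capacity. It ignores static-data relocation, spare area, and NAND variability, so it is a rough illustration, not the article's own calculation. The 256GB, 3000-cycle, 7GB/day, and 0.6X/10X inputs come from the comments. The 30GB figure is an illustrative midpoint of the 20-40GB free-space range.

```python
def years_to_wear_out(cycled_gb, pe_cycles, host_gb_per_day, write_amplification):
    """Simplified estimate: NAND writes spread evenly over cycled_gb."""
    nand_gb_per_day = host_gb_per_day * write_amplification  # writes actually hitting NAND
    days = cycled_gb * pe_cycles / nand_gb_per_day
    return days / 365


# Whole 256GB drive cycled, low write amplification
print(f"{years_to_wear_out(256, 3000, 7, 0.6):.0f} years")  # prints "501 years"
# Only ~30GB free to cycle, worst-case write amplification
print(f"{years_to_wear_out(30, 3000, 7, 10):.1f} years")    # prints "3.5 years"
```

The two calls bracket the disagreement: with the whole drive available for wear leveling and low write amplification, the estimate runs to centuries. With little free space and heavy write amplification, it falls to a few years.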

7Enigma - Thursday, February 17, 2011

Bah, I thought the write amplification was 1.6X. That changes the numbers considerably (enough that the point is moot). I still think the example in the article was not a normal circumstance, but it seems it's still not an issue. <pie to face>

mark53916 - Thursday, February 17, 2011

Encrypted files are not compressible, so you won't get any advantage from the hardware write compression.

7Enigma - Thursday, February 17, 2011

Hi Anand, it looks like one of the numbers in this chart is incorrect. Right now it shows LOWER performance after TRIM than when the drive was completely full. The 230MB/sec value seems to be incorrect.