OCZ Vertex 3 Pro Preview: The First SF-2500 SSD

by Anand Lal Shimpi on February 17, 2011 3:01 AM EST

The Test

Note that I've pulled out our older results for the Kingston V+100. There were a couple of tests with unusually high performance, which I now believe was due to the drive being run with a newer OS/software image than the rest of the older drives. I will be rerunning those benchmarks in the coming week.

I should also note that this is beta hardware running beta firmware. While the beta nature of the drive isn't really visible in any of our tests, I did attempt to use the Vertex 3 Pro as the primary drive in my 15-inch MacBook Pro on my trip to MWC. I did so in hopes of exposing errors and bugs more quickly than normal, and indeed I did. Under OS X on the MBP, with a full image of tons of data and apps, the drive is basically unusable: I get extremely long read and write latencies. I've already informed OCZ of the problem, and I'd expect a solution before we get to final firmware. Oftentimes, actually using these drives is the only way to unmask issues like this.

| Component | Configuration |
|---|---|
| CPU: | Intel Core i7 965 running at 3.2GHz (Turbo & EIST Disabled); Intel Core i7 2600K running at 3.4GHz (Turbo & EIST Disabled), for AT SB 2011 |
| Motherboard: | Intel DX58SO (Intel X58); Intel H67 Motherboard |
| Chipset: | Intel X58 + Marvell SATA 6Gbps PCIe; Intel H67 |
| Chipset Drivers: | Intel 9.1.1.1015 + Intel IMSM 8.9; Intel 9.1.1.1015 + Intel RST 10.2 |
| Memory: | Qimonda DDR3-1333 4 x 1GB (7-7-7-20) |
| Video Card: | eVGA GeForce GTX 285 |
| Video Drivers: | NVIDIA ForceWare 190.38 64-bit |
| Desktop Resolution: | 1920 x 1200 |
| OS: | Windows 7 x64 |

Random Read/Write Speed

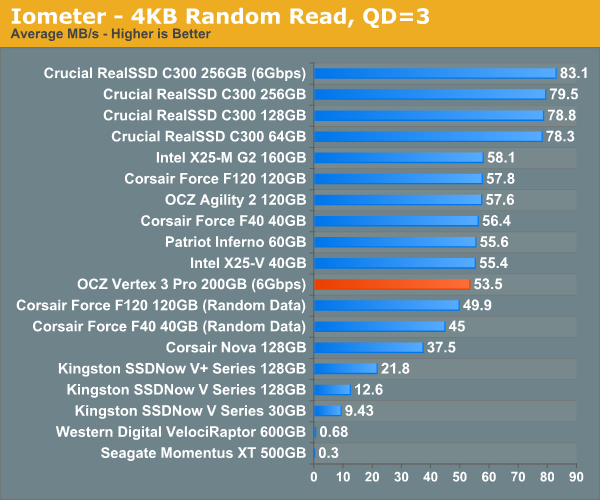

The four corners of SSD performance are random read, random write, sequential read and sequential write speed. Random accesses are generally small, while sequential accesses tend to be larger; hence the four Iometer tests we use in all of our reviews.

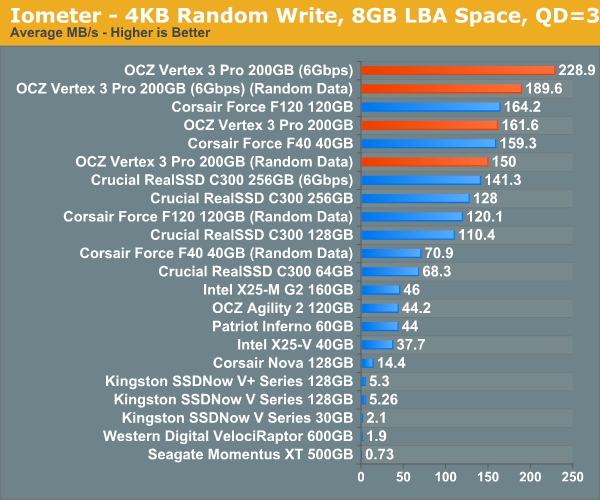

Our first test writes 4KB in a completely random pattern over an 8GB space of the drive to simulate the sort of random access that you'd see on an OS drive (even this is more stressful than what a normal desktop user would see). I perform three concurrent IOs and run the test for 3 minutes. Results are reported as average MB/s over the entire run. We use both standard pseudo-randomly generated data for each write and fully random data to show you both the maximum and minimum performance offered by SandForce-based drives in these tests. The average performance of SF drives will likely fall somewhere between the two values for each drive you see in the graphs. For an understanding of why this matters, read our original SandForce article.
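To make the access pattern and the two data types concrete, here is a minimal Python sketch. It only generates 4KB-aligned offsets across an 8GB span and compares how well a repeating pattern and fully random data compress. It does not issue any I/O and does not model the three outstanding IOs. SandForce's actual compression scheme is not public, so `zlib` is used purely as a stand-in, and modeling the "compressible" case as a short repeating pattern is an assumption. The function names are hypothetical.

```python
import os
import random
import zlib

BLOCK = 4 * 1024          # 4KB transfer size, as in the test described above
SPAN = 8 * 1024 ** 3      # 8GB region of the drive being exercised


def random_offsets(count, seed=0):
    """Yield 4KB-aligned byte offsets spread uniformly over the 8GB span."""
    rng = random.Random(seed)
    blocks_in_span = SPAN // BLOCK
    for _ in range(count):
        yield rng.randrange(blocks_in_span) * BLOCK


def compressed_ratio(buf):
    """Compressed size / original size. zlib stands in for SandForce's undisclosed scheme."""
    return len(zlib.compress(buf)) / len(buf)


# Assumption: the compressible case is a 256-byte pattern repeated out to 4KB.
compressible = bytes(range(256)) * (BLOCK // 256)
# Fully random data, which no compressor can shrink.
incompressible = os.urandom(BLOCK)

if __name__ == "__main__":
    print("sample offsets:", list(random_offsets(5)))
    print("repeating pattern ratio:", round(compressed_ratio(compressible), 3))
    print("random data ratio:", round(compressed_ratio(incompressible), 3))
```

The repeating buffer shrinks to a small fraction of its size, while the random buffer comes out slightly larger than 4KB. That gap is why a compressing controller can look very different depending on which data the benchmark writes.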

Random write performance is much better on the SF-2500, not that it was bad to begin with on the SF-1200. In fact, the closest competitor is the SF-1200; the rest don't stand a chance.

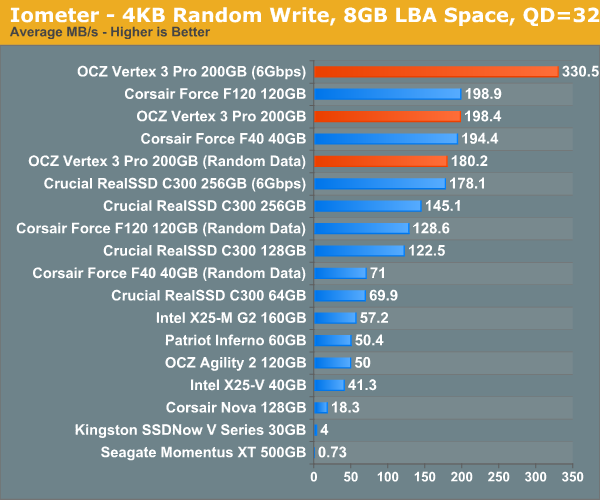

Many of you have asked for random write performance at higher queue depths. Here is our 4KB random write test performed at a queue depth of 32 instead of 3. While the vast majority of desktop usage models experience queue depths of 0-5, higher depths are possible in heavy I/O (and multi-user) workloads.
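A rough way to reason about queue depth is Little's law, a general queueing result rather than anything specific to this review: in steady state, completed IOs per second equal the number of outstanding IOs divided by the average time each IO spends in flight. The Python sketch below applies it to 4KB writes. The latency figures are hypothetical and chosen only to show the arithmetic, and treating 1MB as 2^20 bytes is an assumption (tools differ).

```python
BLOCK_BYTES = 4 * 1024  # 4KB random writes, as in the test above


def iops_from_littles_law(queue_depth, avg_latency_us):
    """Little's law: completions/s = outstanding IOs / average time each IO is in flight."""
    return queue_depth * 1_000_000 / avg_latency_us


def mb_per_s(iops, block_bytes=BLOCK_BYTES):
    """Convert IOPS to MB/s, treating 1MB as 2**20 bytes (an assumption; tools differ)."""
    return iops * block_bytes / 2**20


if __name__ == "__main__":
    # Hypothetical per-IO latencies, chosen only to illustrate the arithmetic.
    for qd, latency_us in [(3, 100), (32, 400)]:
        iops = iops_from_littles_law(qd, latency_us)
        print(f"QD{qd}: {iops:,.0f} IOPS, about {mb_per_s(iops):.1f} MB/s")
```

If per-IO latency rose in proportion to queue depth, deeper queues would buy nothing. A drive shows "performance on the table" at QD32 when latency grows more slowly than the queue, which one common explanation attributes to the controller spreading outstanding requests across more NAND channels in parallel.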

Ramp up the queue depth and there's still tons of performance on the table. At 3Gbps, the Vertex 3 Pro performs no differently from the SF-1200-based Corsair Force; the SF-2500 is made for 6Gbps controllers.
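One factor behind a 3Gbps result like this is the link itself. SATA uses 8b/10b encoding, so every data byte costs 10 bits on the wire, which limits a 3Gbps link to roughly 300MB/s and a 6Gbps link to roughly 600MB/s before protocol overhead. The Python sketch below computes that ceiling and clamps a drive's throughput to it. The 500MB/s drive figure is made up for illustration, and the min() ceiling model is a simplification that ignores command and framing overhead.

```python
def sata_payload_mb_per_s(line_rate_gbps):
    """Upper bound on SATA data bandwidth after 8b/10b encoding.

    8b/10b puts 10 bits on the wire for every 8-bit byte, so each byte costs 10 line bits.
    Command/FIS framing overhead is ignored, so real transfers top out somewhat lower.
    """
    bytes_per_s = line_rate_gbps * 1e9 / 10
    return bytes_per_s / 1e6  # decimal MB/s


def link_limited_mb_per_s(drive_mb_per_s, line_rate_gbps):
    """Simplified ceiling model: a drive can't deliver more than the link carries."""
    return min(drive_mb_per_s, sata_payload_mb_per_s(line_rate_gbps))


if __name__ == "__main__":
    hypothetical_drive = 500  # MB/s the controller could sustain (made-up figure)
    for gbps in (3, 6):
        print(f"{gbps}Gbps link: ceiling {sata_payload_mb_per_s(gbps):.0f} MB/s, "
              f"drive delivers {link_limited_mb_per_s(hypothetical_drive, gbps):.0f} MB/s")
```

Whether the link is actually the binding limit in a given test depends on how much throughput the drive sustains there; the sketch only shows where the ceiling sits on each interface.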

144 Comments


HangFire - Friday, February 18, 2011

As a software engineer, I can tell you that temp files are used instead of in-memory storage because the software was originally written that way, and no bug report concerning them will ever reach high-priority status because it is ranked as a system configuration issue that can be fixed by the user.

In other words, the inertia of "good enough" file-writing code (written when RAM was scarce) will prevent software from being rewritten to make better use of memory. The long backlog of truly important bugs taking precedence ensures that.

You have a good point about ramdisks competing with disk caching. What is optimal depends on your application load, and to some extent your storage subsystem.

cdillon - Thursday, February 17, 2011

The idea of moving the page-file to a RAM disk makes my head hurt. That's just retarded. You'd do better to turn off paging entirely, but that's also of questionable benefit because paging isn't really that hard on your SSD.

Putting the temp directory, along with browser caches and other non-critical, frequently written data, on a RAM disk is not a bad idea as long as you don't overdo it. The only problem with putting the temp directory on a volatile, software-based RAM drive is that any software installation that requires a reboot, and that keeps intermediate installer files in the temp directory expecting them to still be there afterwards, is going to fail.

Qapa - Saturday, February 19, 2011

Hi Anand,

I second this request :)

A few changes though:

- DISABLE page file

--- whether you have an SSD or an HDD, Windows writes to the page file even when only 10% of RAM is in use, so disabling it decreases writes to disk, which does two things: it increases the life of the disk and increases the speed of the system. Possibly both only marginally, but that's what benchmarks would show;

- browser caches

--- this is surely one of the most wasteful sources of disk writes, and it will account for more and more writes as we spend ever more time on the web

- temporary folders

--- as someone else mentioned, you could run into problems if you need an install-reboot-finish_install kind of installation

--- and I agree with the software engineer: if it works, it won't get changed, so programs will write stupid stuff to files just because that was the way they did it at some point in time

I think a 1-2GB RAM disk is more than enough for browser and temp files, assuming a starting RAM size of 4-8GB. And yes, I do believe this improves system performance.

Can you do the benchmarks?

Thanks for the site and all the reviews, and I hope you can add this request as another review.

shawkie - Thursday, February 17, 2011

I notice that the Intel SSD 510 has just started to appear on some retailer websites. It looks like it is SATA 6Gbps and comes in 120GB and 250GB versions. Pricing looks pretty high at this point.

BansheeX - Thursday, February 17, 2011

Color me unexcited. SSDs are fast and reliable enough for people to want them, but the price per GB isn't coming down anywhere near as fast as it has for other technologies. I paid $200 1.5 years ago for an 80GB SSD that goes for $180 today.

chrysrobyn - Friday, February 18, 2011

Maybe 80GB for $200 is good enough for you, but I need twice that capacity, and I'm unwilling to pay more than $200. The next generation of SSDs coming out between now and May is going to come far closer to that price point for me.

seapeople - Friday, February 18, 2011

The point is that 1.5 years ago the OP purchased an SSD for $2.50/GB that offered anywhere from a 2x-30x performance improvement over its predecessor (HDDs), and here we are in 2011 reading a review of a next-generation SSD that uses smaller, cheaper flash with half the available write-cycle life, is going to sell for... $5/GB, and gets a 1.2x-3x performance improvement over its predecessor (first-generation SSDs).

What's next? A solid state drive that reads and writes at 2,000 GB/s and sells for $10,000 for the 1 TB model? Oh, I can't wait for that.

ABR - Saturday, February 19, 2011

I have to agree. Year after year we see more and more mind-boggling performance improvements over regular HDDs, but little or no price drop. Perhaps the materials costs are just insurmountable and the replacement of HDDs won't be happening after all. SSDs will be like SLR digital cameras: premium and professional use only, pricing a previous generation of amateur users out of a market they used to be in.

FunBunny2 - Saturday, February 19, 2011

From what I see, with each drop in NAND feature size, the controller has to get increasingly byzantine, needs more cache, and so on just to maintain performance. Word is that IMFT 25nm includes an ECC engine on die!!!

Aernout - Saturday, February 19, 2011

Maybe we will hear more about hybrid disks like the Seagate Momentus XT in the future; for 'standard' users they can offer a lot. Right now it pairs 4GB of flash with 500GB, but it's 10 months old.

I think lots of people are hoping they will multiply those specs.

I'm thinking of getting one for my laptop, but on the other hand I'm not sure I will use 500GB on my laptop; maybe I should buy a 64GB SSD instead.