OCZ Vertex 3 Pro Preview: The First SF-2500 SSD

by Anand Lal Shimpi on February 17, 2011 3:01 AM EST

AnandTech Storage Bench 2011: Much Heavier

I didn't expect to have to debut this so soon, but I've been working on updated benchmarks for 2011. Last year we introduced our AnandTech Storage Bench, a suite of benchmarks that took traces of real OS/application usage and played them back in a repeatable manner. I assembled the traces myself out of frustration with the majority of what we have today in terms of SSD benchmarks.

Although the AnandTech Storage Bench tests did a good job of characterizing SSD performance, they weren't stressful enough. All of the tests performed less than 10GB of reads/writes and typically involved only 4GB of writes. That's not even enough to exceed the spare area on most SSDs. Most canned SSD benchmarks don't even come close to writing a single gigabyte of data, but that doesn't mean that simply writing 4GB is acceptable.

Originally I kept the benchmarks short enough that they wouldn't be a burden to run (~30 minutes) but long enough that they were representative of what a power user might do with their system.

Not too long ago I tweeted that I had created what I referred to as the Mother of All SSD Benchmarks (MOASB). Rather than only writing 4GB of data to the drive, this benchmark writes 106.32GB. It's the load you'd put on a drive after nearly two weeks of constant usage. And it takes a *long* time to run.

I'll be sharing the full details of the benchmark in some upcoming SSD articles (again, I wasn't expecting to have to introduce this today so I'm a bit ill-prepared), but here are some details:

1) The MOASB, officially called AnandTech Storage Bench 2011 - Heavy Workload, mainly focuses on the times when your I/O activity is the highest. There is a lot of downloading and application installing that happens during the course of this test. My thinking was that it's during application installs, file copies, downloading and multitasking with all of this that you can really notice performance differences between drives.

2) I tried to cover as many bases as possible with the software I incorporated into this test. There's a lot of photo editing in Photoshop, HTML editing in Dreamweaver, web browsing, game playing/level loading (Starcraft II & WoW are both a part of the test) as well as general use stuff (application installing, virus scanning). I included a large amount of email downloading, document creation and editing as well. To top it all off I even use Visual Studio 2008 to build Chromium during the test.

Many of you have asked for a better way to really characterize performance. Simply looking at IOPS doesn't really say much. As a result I'm going to be presenting Storage Bench 2011 data in a slightly different way. We'll have performance represented as Average MB/s, with higher numbers being better. At the same time I'll be reporting how long the SSD was busy while running this test. These disk busy graphs will show you exactly how much time was shaved off by using a faster drive vs. a slower one during the course of this test. Finally, I will also break out performance into reads, writes and combined. The reason I do this is to help balance out the fact that this test is unusually write intensive, which can often hide the benefits of a drive with good read performance.
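The exact formula behind Average MB/s isn't spelled out here, so the following is only a minimal sketch, assuming the figure is total bytes transferred divided by the time the drive spent servicing those requests, computed separately for reads, writes and the combined total. The `IoRecord` type, its fields and the sample trace are hypothetical, and the sketch assumes requests never overlap so per-request service times can simply be added up.

```python
from dataclasses import dataclass

MB = 1_000_000  # decimal megabytes (an assumption for this sketch)


@dataclass
class IoRecord:
    op: str           # "read" or "write"
    nbytes: int       # bytes transferred by this request
    service_s: float  # hypothetical: seconds the drive spent on this request


def average_mb_per_s(records, op=None):
    """Average data rate over the time the drive was busy.

    op=None gives the combined figure; "read" or "write" filters by type.
    Assumes requests never overlap, so service times can be summed.
    """
    selected = [r for r in records if op is None or r.op == op]
    busy = sum(r.service_s for r in selected)
    if busy == 0:
        return 0.0
    return sum(r.nbytes for r in selected) / MB / busy


# Hypothetical three-request trace, for illustration only.
trace = [
    IoRecord("read", 4_000_000, 0.010),
    IoRecord("write", 2_000_000, 0.020),
    IoRecord("read", 1_000_000, 0.010),
]

for label in (None, "read", "write"):
    print(label or "combined", round(average_mb_per_s(trace, label), 1))
```

Breaking the rate out this way keeps a fast reader from being hidden by a write-heavy mix: in the made-up trace above, reads run at 250MB/s while the combined figure is pulled down to 175MB/s by the slower write.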

There's also a new light workload for 2011. This is a far more reasonable benchmark of typical everyday use. Lots of web browsing, photo editing (but with a greater focus on photo consumption), video playback as well as some application installs and gaming. This test isn't nearly as write intensive as the MOASB but it's still multiple times more write intensive than what we were running last year.

As always I don't believe that these two benchmarks alone are enough to characterize the performance of a drive, but hopefully along with the rest of our tests they will help provide a better idea.

The testbed for Storage Bench 2011 has changed as well. We're now using a Sandy Bridge platform with full 6Gbps support for these tests. All of the older tests are still run on our X58 platform.

AnandTech Storage Bench 2011 - Heavy Workload

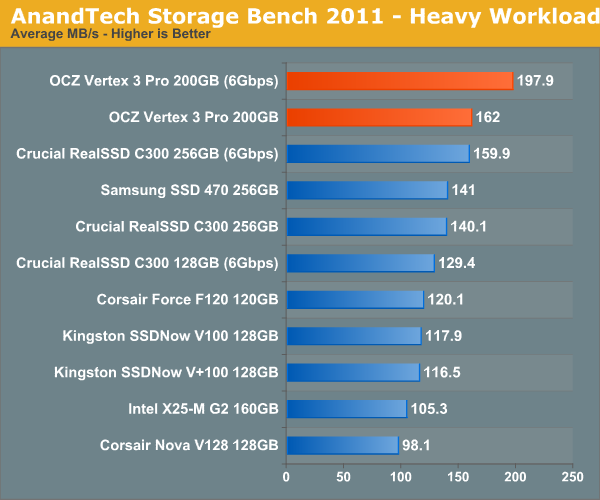

We'll start out by looking at average data rate throughout our new heavy workload test:

The Vertex 3 Pro on a 6Gbps interface is around 24% faster than Crucial's RealSSD C300. Note that the old SF-1200 (Corsair Force F120) can only deliver 60% of the speed of the new SF-2500. Over a 3Gbps interface the Vertex 3 Pro is quick, but only 15% faster than the next fastest 3Gbps drive. In order to get the most out of the SF-2500 you need a 6Gbps interface.
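Relative-speed percentages are easy to read in the wrong direction. A quick worked conversion using only the figures quoted above, with $R$ standing for average data rate:

$$
\begin{aligned}
\frac{R_{\text{SF-1200}}}{R_{\text{SF-2500}}} = 0.60
  &\;\Rightarrow\; \frac{R_{\text{SF-2500}}}{R_{\text{SF-1200}}} = \frac{1}{0.60} \approx 1.67 \quad \text{(about 67\% faster)} \\
\frac{R_{\text{Vertex 3 Pro}}}{R_{\text{C300}}} = 1.24
  &\;\Rightarrow\; \frac{R_{\text{C300}}}{R_{\text{Vertex 3 Pro}}} = \frac{1}{1.24} \approx 0.81
\end{aligned}
$$

In other words, the C300 delivers roughly 81% of the Vertex 3 Pro's average data rate in this test, while the SF-1200 delivers only 60% of it.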

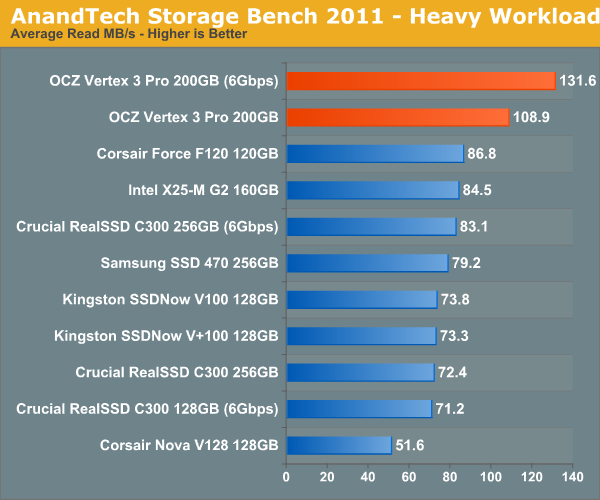

If we break out our performance results into average read and write speed we get a better idea for the Vertex 3 Pro's strengths:

The SF-2500 is significantly faster than its predecessor and all other drives in terms of read performance. Good read speed is important as it influences application launch time as well as overall system responsiveness.

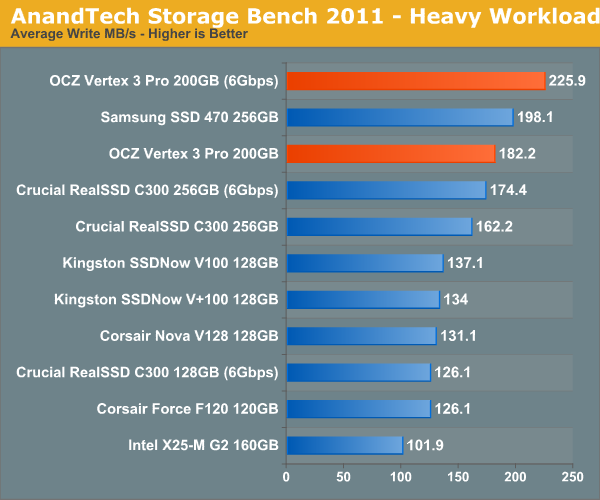

Average write speed is still class-leading, but this benchmark uses a lot of incompressible data. You'll note that the Vertex 3 Pro only averages 225.9MB/s, barely over its worst-case write speed. It's in this test that I'm expecting the new C400 to do better than SandForce.
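SandForce controllers reduce the amount of data they physically write to NAND, which is why incompressible data drags the Vertex 3 Pro toward its worst case. SandForce's actual algorithm is proprietary, so the sketch below uses Python's standard `zlib` purely as a stand-in to show how differently repetitive and random-looking buffers compress. The buffer size and contents are arbitrary choices for illustration.

```python
import os
import zlib


def compressed_ratio(data: bytes) -> float:
    """Compressed size as a fraction of the original (lower = more compressible)."""
    return len(zlib.compress(data, 6)) / len(data)


block = 128 * 1024  # 128KiB buffers, an arbitrary size for this sketch

# Highly repetitive data, like many documents and application files.
text_like = (b"The quick brown fox jumps over the lazy dog. " * 3000)[:block]

# Random bytes stand in for already-compressed media, archives or encrypted data.
random_like = os.urandom(block)

print(f"text-like:   {compressed_ratio(text_like):.3f}")
print(f"random-like: {compressed_ratio(random_like):.3f}")
```

The repetitive buffer shrinks to a tiny fraction of its size, while the random one comes out at essentially 100% (slightly more, due to format overhead). A controller that relies on data reduction has nothing to remove in the second case and has to write every byte.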

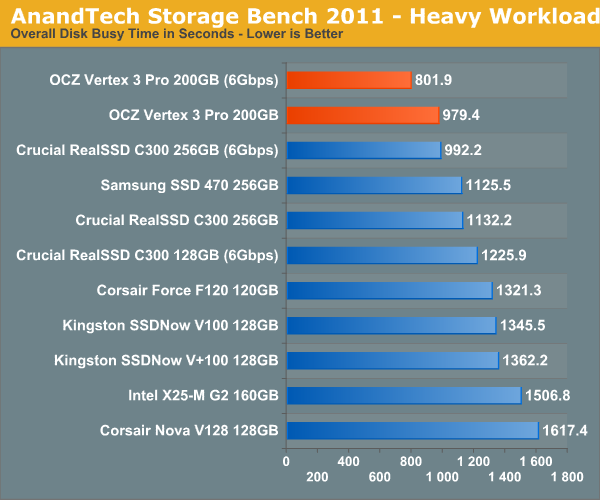

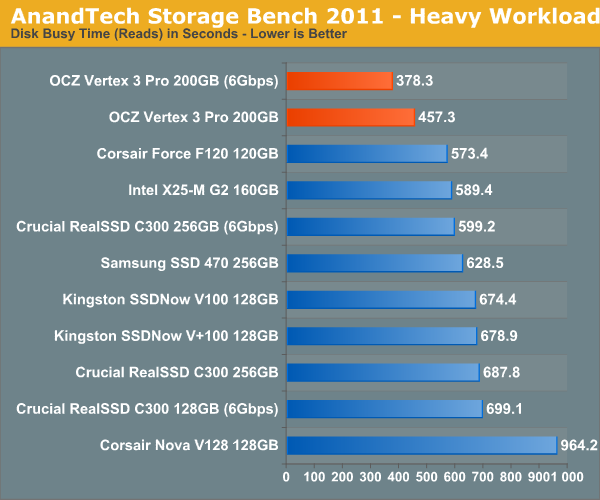

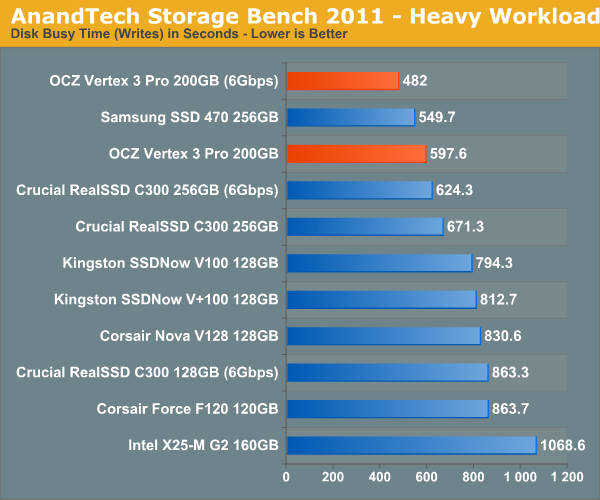

The next three charts just represent the same data, but in a different manner. Instead of looking at average data rate, we're looking at how long the disk was busy for during this entire test. Note that disk busy time excludes any and all idles, this is just how long the SSD was busy doing something:
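With several requests potentially in flight at once (for example with NCQ), simply adding up per-request times would double count the overlap. A minimal sketch, assuming each traced request is logged as a hypothetical `(start, end)` timestamp pair, of how busy time excluding idles could be computed by merging overlapping intervals. This illustrates the metric as described, not the actual Storage Bench tooling.

```python
def busy_time(intervals):
    """Total time at least one request was outstanding.

    intervals: iterable of (start_s, end_s) pairs, one per I/O request.
    Overlapping or touching requests are merged so they aren't double
    counted, and gaps between requests (idle time) are skipped.
    """
    total = 0.0
    cur_start = cur_end = None
    for start, end in sorted(intervals):
        if cur_end is None or start > cur_end:
            # Idle gap before this request: close out the previous busy span.
            if cur_end is not None:
                total += cur_end - cur_start
            cur_start, cur_end = start, end
        else:
            # Overlaps the current busy span: extend it if needed.
            cur_end = max(cur_end, end)
    if cur_end is not None:
        total += cur_end - cur_start
    return total


# Hypothetical timestamps in seconds.
requests = [(0.0, 1.0), (0.5, 2.0), (5.0, 6.0)]
print(busy_time(requests))  # 3.0: busy 0-2 and 5-6; the 2-5 idle gap is excluded
```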

144 Comments


semo - Saturday, February 19, 2011

Thanks for looking into the issue, Anand. Could you also find out whether Revo drives are affected?

I'm surprised that AnandTech did not make any mention of the 25nm drives (it could have warned a lot of people of the shortcomings).

erson - Thursday, February 17, 2011

Anand, on page 3: "In this case 4GB of the 256GB of NAND is reserved for data parity and the remaining 62GB is used for block replacement (either cleaning or bad block replacement)."

I believe that should be 52GB instead of 62GB.

Keep up the good work!

Anand Lal Shimpi - Thursday, February 17, 2011

So there's technically only 186GiB out of 256GiB of NAND available for user consumption. 4GiB is used for RAISE; the remaining 66GiB (the 62GiB is a typo) is kept as spare area.

Take care,

Anand

marraco - Thursday, February 17, 2011

Some data are written once and never deleted; they are read again and again all the time.

Such cells would last much longer than the rest.

I wish to know if the controller is smart enough to move that rarely written data to the most used cells. That would extend the life of those cells and free up the less used cells, which have more life left in them.

Chloiber - Thursday, February 17, 2011

I'm pretty sure every modern controller does that to a certain degree. It's called static and dynamic wear leveling.

philosofa - Thursday, February 17, 2011

Anand, you said that prices for the consumer Vertex 3 drives will probably be above those of the Vertex 2 series. Is that a result of an increase in capacity, or will we see no (near-term) price/size benefits from the move to 25nm NAND?

vhx - Thursday, February 17, 2011

I am curious as to why there is no Vertex/2 comparison?

jonup - Thursday, February 17, 2011

Given the controversy with the currently shipped Vertex 2s, Anand chose to use the F120 (similar if not identical to the Vertex 2).

theagentsmith - Thursday, February 17, 2011

Hello Anand, great article as always, and I hope you're enjoying the nice city of Barcelona.

I've read some articles suggesting creating a RAM disk, easily done on PCs with 6-8GB of memory, and moving all the temporary folders, as well as the page file and browser caches, to it.

They say this could bring better performance as well as reduce the random data written to the SSD, although the latter isn't such a big problem, as you said in the article.

Can you become a mythbuster and tell us if there are tangible improvements or if it just isn't worth it? Can it make the system unstable?

Quindor - Thursday, February 17, 2011

Maybe you missed this in the article, but as stated, even with heavy usage of 7GB of writes per day, the drive will still last you way beyond its warranty period. As such, moving your temp files, browser cache, etc. to a RAM drive won't really bring you much, because your drive is not going to die from them anyway.

Better performance might be a different point. But the reason to buy an SSD is great performance, so why try to enhance it with a RAM drive that will only bring marginal performance gains? Doing so with an HDD might be a different thing altogether.

My idea is that these temp files are temp files, and that if keeping them in memory were so much faster, the applications would do this themselves. Also, leaving more memory free might give Windows disk caching the chance to do exactly what your RAM drive is doing for you.