OCZ Vertex 3 Pro Preview: The First SF-2500 SSD

by Anand Lal Shimpi on February 17, 2011 3:01 AM EST

The Test

Note that I've pulled out our older results for the Kingston V+100. There were a couple of tests that had unusually high performance, which I now believe was due to the drive being run with a newer OS/software image than the rest of the older drives. I will be rerunning those benchmarks in the coming week.

I should also note that this is beta hardware running beta firmware. While the beta nature of the drive isn't really visible in any of our tests, I did attempt to use the Vertex 3 Pro as the primary drive in my 15-inch MacBook Pro on my trip to MWC. I did so with hopes of exposing any errors and bugs quicker than normal, and indeed I did. Under OS X on the MBP with a full image of tons of data/apps, the drive is basically unusable. I get super long read and write latency. I've already informed OCZ of the problem and I'd expect a solution before we get to final firmware. Oftentimes actually using these drives is the only way to unmask issues like this.

| CPU: | Intel Core i7 965 running at 3.2GHz (Turbo & EIST Disabled); Intel Core i7 2600K running at 3.4GHz (Turbo & EIST Disabled) - for AT SB 2011 |

| Motherboard: | Intel DX58SO (Intel X58); Intel H67 Motherboard |

| Chipset: | Intel X58 + Marvell SATA 6Gbps PCIe; Intel H67 |

| Chipset Drivers: | Intel 9.1.1.1015 + Intel IMSM 8.9; Intel 9.1.1.1015 + Intel RST 10.2 |

| Memory: | Qimonda DDR3-1333 4 x 1GB (7-7-7-20) |

| Video Card: | eVGA GeForce GTX 285 |

| Video Drivers: | NVIDIA ForceWare 190.38 64-bit |

| Desktop Resolution: | 1920 x 1200 |

| OS: | Windows 7 x64 |

Random Read/Write Speed

The four corners of SSD performance are as follows: random read, random write, sequential read and sequential write speed. Random accesses are generally small in size, while sequential accesses tend to be larger, hence the four Iometer tests we use in all of our reviews.

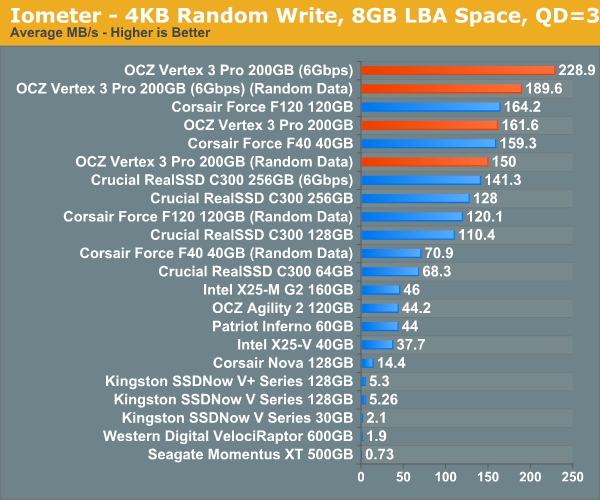

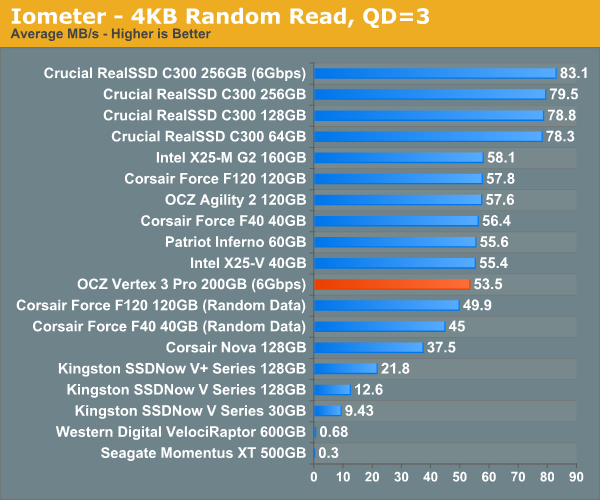

Our first test writes 4KB in a completely random pattern over an 8GB space of the drive to simulate the sort of random access that you'd see on an OS drive (even this is more stressful than a normal desktop user would see). I perform three concurrent IOs and run the test for 3 minutes. The results reported are in average MB/s over the entire time. We use both standard pseudo-randomly generated data for each write as well as fully random data to show you both the maximum and minimum performance offered by SandForce-based drives in these tests. The average performance of SF drives will likely be somewhere in between the two values for each drive you see in the graphs. For an understanding of why this matters, read our original SandForce article.
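To make the difference between the two data patterns concrete, here is a minimal Python sketch that compares how well a repetitive 4KB block and a fully random 4KB block compress. It assumes the gap between the two results comes from the controller's real-time data reduction. Note that `zlib` is only a stand-in for illustration; it is not SandForce's algorithm, and the helper names and the repeating pattern are hypothetical rather than what Iometer actually writes.

```python
import os
import zlib

BLOCK_SIZE = 4 * 1024  # 4KB, the transfer size used in the random write test


def compression_ratio(block: bytes) -> float:
    """Return original size divided by zlib-compressed size."""
    return len(block) / len(zlib.compress(block))


def repeating_block(size: int = BLOCK_SIZE) -> bytes:
    """Highly compressible payload: a short pattern repeated to fill the block.

    The pattern is an arbitrary placeholder, not Iometer's real data.
    """
    pattern = b"anandtech"
    return (pattern * (size // len(pattern) + 1))[:size]


def random_block(size: int = BLOCK_SIZE) -> bytes:
    """Effectively incompressible payload drawn from the OS entropy source."""
    return os.urandom(size)


if __name__ == "__main__":
    print(f"repeating data: {compression_ratio(repeating_block()):.1f}x")
    print(f"random data:    {compression_ratio(random_block()):.2f}x")
```

The repetitive block shrinks to a small fraction of its size, while the random block does not shrink at all. A controller that reduces data before writing it has far less work to do in the first case, which is why the two sets of results bracket the performance you can expect.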

Random write performance is much better on the SF-2500, not that it was bad to begin with on the SF-1200. In fact, the closest competitor is the SF-1200; the rest don't stand a chance.

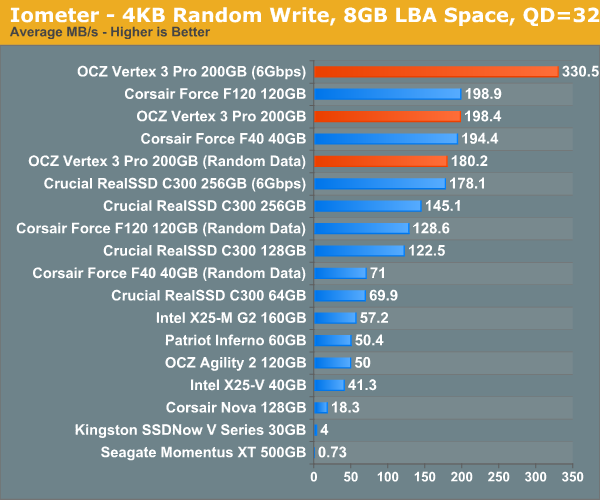

Many of you have asked for random write performance at higher queue depths. What I have below is our 4KB random write test performed at a queue depth of 32 instead of 3. While the vast majority of desktop usage models experience queue depths of 0 - 5, higher depths are possible in heavy I/O (and multi-user) workloads:

Ramp up the queue depth and there's still tons of performance on the table. At 3Gbps the performance of the Vertex 3 Pro is actually no different than the SF-1200-based Corsair Force; the SF-2500 is made for 6Gbps controllers.

144 Comments


semo - Saturday, February 19, 2011 - link

Thanks for looking into the issue Anand. Could you also find out whether Revo drives are affected as well?

I'm surprised that Anandtech did not make any mention of the 25nm drives (it could have warned a lot of people of the shortcomings)

erson - Thursday, February 17, 2011 - link

Anand, on page 3 - "In this case 4GB of the 256GB of NAND is reserved for data parity and the remaining 62GB is used for block replacement (either cleaning or bad block replacement)."

I believe that should be 52GB instead of 62GB.

Keep up the good work!

Anand Lal Shimpi - Thursday, February 17, 2011 - link

So there's technically only 186GiB out of 256GiB of NAND available for user consumption. 4GiB is used for RAISE, the remaining 66GiB (the 62GiB is a typo) is kept as spare area.

Take care,

Anand
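The capacity figures in the reply above can be checked with a few lines of arithmetic. This is only a sketch: the constant and function names are made up for illustration, and the numbers are the ones quoted in the comment.

```python
TOTAL_NAND_GIB = 256  # raw NAND on the drive, as quoted above
USER_GIB = 186        # capacity available for user consumption
RAISE_GIB = 4         # reserved for RAISE data parity


def spare_area_gib(total: int, user: int, parity: int) -> int:
    """Spare area is whatever NAND is left after user capacity and parity."""
    return total - user - parity


if __name__ == "__main__":
    spare = spare_area_gib(TOTAL_NAND_GIB, USER_GIB, RAISE_GIB)
    print(f"spare area: {spare} GiB")  # prints "spare area: 66 GiB"
    print(f"share of raw NAND: {spare / TOTAL_NAND_GIB:.1%}")
```

The result matches the corrected 66GiB figure rather than the 62GB in the article text.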

marraco - Thursday, February 17, 2011 - link

Some data are written once, and never deleted. They are read again and again all the time.

Such cells would last much longer than the rest.

I wish to know if the controller is smart enough to move that rarely written data to the most used cells. That would enlarge the life of those cells, and release the less used cells, whose life will last longer.

Chloiber - Thursday, February 17, 2011 - link

I'm pretty sure every modern Controller does that to a certain degree. It's called static and dynamic wear leveling.

philosofa - Thursday, February 17, 2011 - link

Anand, you said that prices for the consumer Vertex 3 drives will probably be above those of the Vertex 2 series - is that a resultant increase in capacity, or will we see no (near term) price/size benefits from the move to 25nm nand?

vhx - Thursday, February 17, 2011 - link

I am curious as to why there is no Vertex/2 comparison?

jonup - Thursday, February 17, 2011 - link

Given the controversy with the currently shipped Vertex2s Anand chose to use F120 (similar if not identical to the Vertex2).

theagentsmith - Thursday, February 17, 2011 - link

Hello Anand

great article as always and hope you're enjoying the nice city of Barcelona.

I've read some articles suggesting to create a RAM disk, easily done with PCs with 6-8GBs, and move all the temporary folders, as well as page file and browser caches to that.

They say this could bring better performance as well as reduce random data written to the SSD, albeit the last one isn't such a big problem as you said in the article.

Can you become a mythbuster and tell us if there are tangible improvements or if it just isn't worth it? Can it make the system unstable?

Quindor - Thursday, February 17, 2011 - link

Maybe you missed this in the article, but as stated, even with heavy usage of 7GB of writes per day, the drive will still last you way beyond its warranty period. As such, moving your temp files, browser cache, etc. to a RAM drive won't really bring you much, because your drive is not going to die of it anyway.

Better performance might be a different point. But the reason to buy an SSD is for great performance. Why then try to enhance this with a RAM drive that will only bring marginal performance gains? Doing so with a HDD might be a whole different thing altogether.

My idea is that these temp files are temp files, and that if keeping them in memory would be so much faster, the applications would do this themselves. Also, leaving more memory free might give windows disk caching the chance to do exactly the same as your ram drive is doing for you.