OCZ Vertex 3 Pro Preview: The First SF-2500 SSD

by Anand Lal Shimpi on February 17, 2011 3:01 AM EST

The Test

Note that I've pulled out our older results for the Kingston V+100. There were a couple of tests that had unusually high performance, which I now believe was due to the drive being run with a newer OS/software image than the rest of the older drives. I will be rerunning those benchmarks in the coming week.

I should also note that this is beta hardware running beta firmware. While the beta nature of the drive isn't really visible in any of our tests, I did attempt to use the Vertex 3 Pro as the primary drive in my 15-inch MacBook Pro on my trip to MWC. I did so in the hope of exposing any errors and bugs more quickly than normal, and indeed I did. Under OS X on the MBP with a full image of tons of data/apps, the drive is basically unusable. I get super long read and write latency. I've already informed OCZ of the problem and I'd expect a solution before we get to final firmware. Oftentimes, actually using these drives is the only way to unmask issues like this.

| CPU: | Intel Core i7 965 running at 3.2GHz (Turbo & EIST Disabled) / Intel Core i7 2600K running at 3.4GHz (Turbo & EIST Disabled) - for AT SB 2011 |

| Motherboard: | Intel DX58SO (Intel X58) / Intel H67 Motherboard |

| Chipset: | Intel X58 + Marvell SATA 6Gbps PCIe / Intel H67 |

| Chipset Drivers: | Intel 9.1.1.1015 + Intel IMSM 8.9 / Intel 9.1.1.1015 + Intel RST 10.2 |

| Memory: | Qimonda DDR3-1333 4 x 1GB (7-7-7-20) |

| Video Card: | eVGA GeForce GTX 285 |

| Video Drivers: | NVIDIA ForceWare 190.38 64-bit |

| Desktop Resolution: | 1920 x 1200 |

| OS: | Windows 7 x64 |

Random Read/Write Speed

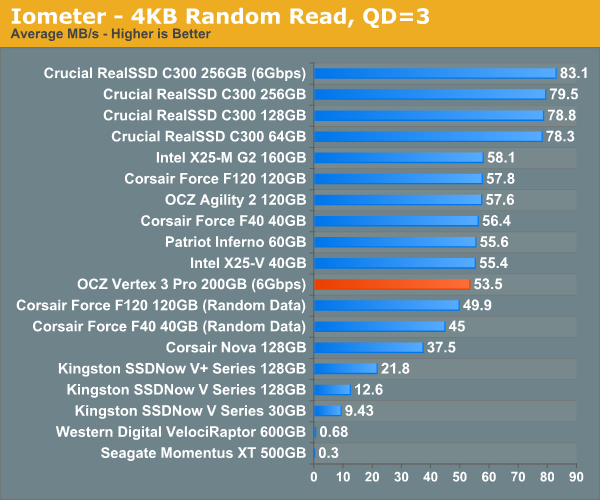

The four corners of SSD performance are as follows: random read, random write, sequential read and sequential write speed. Random accesses are generally small in size, while sequential accesses tend to be larger and thus we have the four Iometer tests we use in all of our reviews.

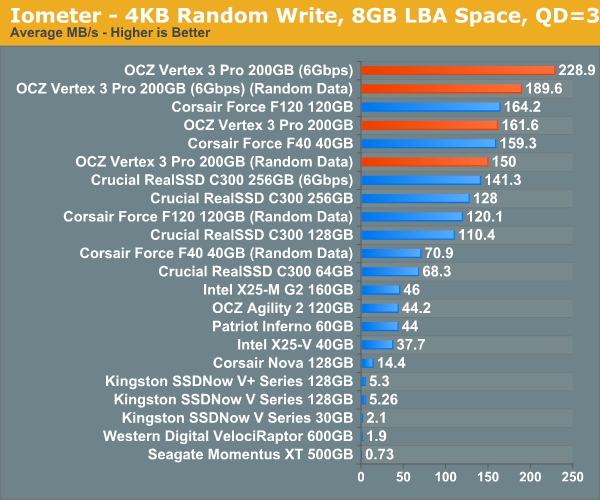

Our first test writes 4KB in a completely random pattern over an 8GB space of the drive to simulate the sort of random access that you'd see on an OS drive (even this is more stressful than a normal desktop user would see). I perform three concurrent IOs and run the test for 3 minutes. The results reported are in average MB/s over the entire time. We use both standard pseudo-randomly generated data for each write as well as fully random data to show you both the maximum and minimum performance offered by SandForce-based drives in these tests. The average performance of SF drives will likely be somewhere in between the two values for each drive you see in the graphs. For an understanding of why this matters, read our original SandForce article.
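To make the parameters of this test concrete, here is a minimal Python sketch of the access pattern and the reporting arithmetic. It is not Iometer and does not touch a drive; the function names, the use of decimal megabytes, and the all-zero buffer standing in for highly compressible data are all assumptions made for illustration.

```python
import os
import random
import zlib

BLOCK_SIZE = 4 * 1024        # 4KB transfers, as described above
SPAN_BYTES = 8 * 1024**3     # 8GB region of the drive
RUN_SECONDS = 3 * 60         # 3 minute run

def random_offsets(count, seed=0):
    """Return `count` 4KB-aligned byte offsets picked uniformly from the span."""
    rng = random.Random(seed)
    blocks = SPAN_BYTES // BLOCK_SIZE
    return [rng.randrange(blocks) * BLOCK_SIZE for _ in range(count)]

def average_mb_per_s(completed_ios, seconds=RUN_SECONDS):
    """Convert completed 4KB IOs into average MB/s (assumes 1MB = 10^6 bytes)."""
    return completed_ios * BLOCK_SIZE / seconds / 1e6

def compressed_ratio(buf):
    """Compressed size divided by original size, using zlib as a rough proxy."""
    return len(zlib.compress(buf)) / len(buf)

# Hypothetical stand-ins for the two kinds of write data:
compressible = b"\x00" * BLOCK_SIZE       # best case for a compressing controller
incompressible = os.urandom(BLOCK_SIZE)   # fully random, worst case

print(random_offsets(3))
print(average_mb_per_s(180_000))          # 1,000 IOs per second sustained
print(compressed_ratio(compressible), compressed_ratio(incompressible))
```

The gap between the two compression ratios is the reason two numbers are reported per SandForce drive: a controller that compresses data has far less to write in the first case than in the second.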

Random write performance is much better on the SF-2500, not that it was bad to begin with on the SF-1200. In fact, the closest competitor is the SF-1200; the rest don't stand a chance.

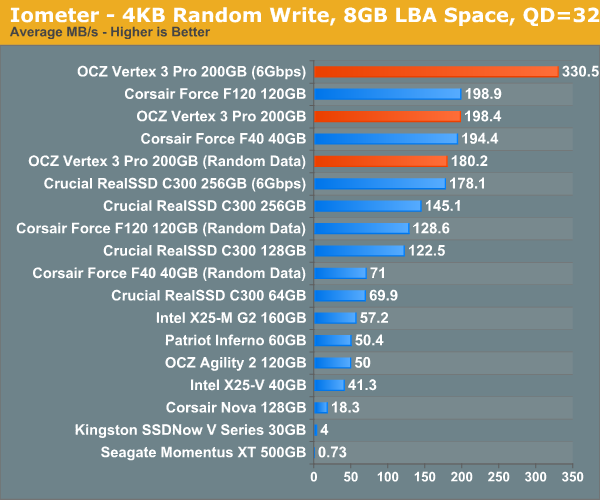

Many of you have asked for random write performance at higher queue depths. What I have below is our 4KB random write test performed at a queue depth of 32 instead of 3. While the vast majority of desktop usage models experience queue depths of 0 - 5, higher depths are possible in heavy I/O (and multi-user) workloads:

Ramp up the queue depth and there's still tons of performance on the table. At 3Gbps the performance of the Vertex 3 Pro is actually no different than the SF-1200-based Corsair Force; the SF-2500 is made for 6Gbps controllers.
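As a rough illustration of why the interface speed matters, the sketch below computes the raw payload ceiling of a SATA link. It relies on the fact that SATA uses 8b/10b encoding, so only 8 of every 10 bits on the wire carry data. The function names are hypothetical, and real throughput lands below these figures because of protocol overhead; the results above do not by themselves show that the link is the only limiting factor.

```python
def sata_payload_mb_per_s(line_rate_gbps):
    """Upper bound on payload bandwidth in MB/s for an 8b/10b-encoded SATA link."""
    line_bits_per_s = line_rate_gbps * 1e9
    payload_bits_per_s = line_bits_per_s * 8 / 10   # 8 data bits per 10 line bits
    return payload_bits_per_s / 8 / 1e6             # bits -> bytes -> MB (10^6 bytes)

def iops_ceiling(line_rate_gbps, transfer_bytes=4096):
    """Largest number of transfers per second the link could carry at that size."""
    return sata_payload_mb_per_s(line_rate_gbps) * 1e6 / transfer_bytes

for rate in (3, 6):
    print(rate, "Gbps:", sata_payload_mb_per_s(rate), "MB/s,",
          round(iops_ceiling(rate)), "4KB transfers/s at most")
```

Under these assumptions a 3Gbps link tops out at 300MB/s and a 6Gbps link at 600MB/s, before any protocol overhead is subtracted.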

144 Comments


Out of Box Experience - Tuesday, February 22, 2011

Thanks for answering my question

and you are right

with over 50% of all PCs still running XP, it would indeed be stupid for the major SSD companies to overlook this important segment of the market

with their new SSDs ready to launch for Windows 7 machines, they should be releasing plug and play replacements for all the XP machines out there any day now..................NOT!

Are they stupid or what??

no conspiracy here folks

just the facts

Kjella - Thursday, February 24, 2011

Fact: Most computers end their life with the same hardware they started with. Only a small DIY market actually upgrades their hard disk and migrates their OS/data. So what if 50% runs XP? 49% of those won't replace their HDD with an SSD anyway. They might get a new machine with an SSD though, and almost all new machines get Windows 7 now.

Cow86 - Thursday, February 17, 2011

Very interesting indeed....good article too. One has to wonder though - looking at what is currently happening with 25 nm NAND in vertex 2 drives, which have lower performance and reliability than their 34 nm brethren ánd are sold at the same price without any indication - how the normal Vertex 3 will fare...Hoping they'll be as good in that regard as the original vertex 2's, and I may well indeed jump on the SSD bandwagon this year :) Been holding off for lower price (and higher performance, if I can get it without a big price hike); I want 160 GB to be able to have all my games and OS on there.

lecaf - Thursday, February 17, 2011

Vertex 3 with 25 NAND will also suffer performance loss. It is not the NAND itself having the issue but the number of chips. You get same capacity with half the chips, so the controller has less opportunity to write in parallel.

This is the same reason why with Crucial's C300 the larger (256) drive is faster than the smaller (128).

Speed will drop for smaller drives but if price goes down this will be counterbalanced by larger capacity faster drives.

The "if" is very questionable of course considering that OCZ replaced NAND on current Vertex2 with no price cut (not even a change in part number; you just discover you get a slower drive after you mount it)

InsaneScientist - Thursday, February 17, 2011

Except that there are already twice as many chips as there are channels (8 channels, 16 NAND chips - see pg 3 of the article), so halving the number of chips simply brings the channel to chip ratio down to 1:1, which is hardly a problem. It's when you have unused channels that things slow down.

lecaf - Thursday, February 17, 2011

1:1 can be a problem... depending who is the bottleneck. If NAND speed saturates the channel bandwidth then I agree there is no issue, but if the channel has available bandwidth, it could use it to feed an extra NAND and speed up things.

But that's theory ... check benchmarks here:

http://www.storagereview.com/ocz_vertex_2_25nm_rev...

Chloiber - Thursday, February 17, 2011

It's possible to use 25nm chips with the same capacity, as OCZ is trying to do right now with the 25nm replacements of the Vertex 2.

Nentor - Thursday, February 17, 2011

Why are they making these flash chips smaller if there are the lower performance and reliability problems? What is wrong with 34nm?

I can understand with cpu there are the benefits of less heat and such, but with the flash chips?

Zshazz - Thursday, February 17, 2011

It's cheaper to produce. Less materials used and higher number of product output.

semo - Thursday, February 17, 2011

OCZ should spend less time sending out drives with no housing and work on correctly marketing and naming their 25nm Vertex 2 drives.

http://forums.anandtech.com/showthread.php?t=21433...

How OCZ can get away with calling a 55GB drive "60GB" and then trying to bamboozle everyone with technicalities and SandForce marketing words and abbreviations is beyond me.

It wasn't too long ago that they were in hot water with their jmicron Core drives, and now they're doing this?