OCZ Vertex 3 Pro Preview: The First SF-2500 SSD

by Anand Lal Shimpi on February 17, 2011 3:01 AM EST

The Performance Degradation Problem

When Intel first released the X25-M, Allyn Malventano discovered a nasty corner case where the drive would no longer be able to run at its full potential. You basically had to hammer on the drive with tons of random writes for at least 20 minutes, but eventually the drive would be stuck at a point of no return. Performance would remain low until you secure erased the drive.

Although it shouldn't appear in real world use, the worry was that over time a similar set of conditions could align, resulting in the X25-M performing slower than it should. Intel, having had much experience with similar types of problems (e.g. FDIV, Pentium III 1.13GHz), immediately began working on a fix and released it a couple of months after launch. The fix was nondestructive, although you saw much better performance if you secure erased your drive first.

SandForce has a similar problem and I have you all and bit-tech to thank for pointing it out. In bit-tech's SandForce SSD reviews they test TRIM functionality by filling a drive with actual data (from a 500GB source including a Windows install, pictures, movies, documents, etc...). The drive is then TRIMed, and performance is measured.

If you look at bit-tech's charts you'll notice that after going through this process, the SandForce drives no longer recover their performance after TRIM. They are stuck in a lower performance state making the drives much slower when writing incompressible data.
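For a sense of what "incompressible" means here, the following is a minimal sketch that compares how well two buffers shrink under a general-purpose compressor. It uses Python's `zlib` purely as a stand-in: SandForce's actual data reduction algorithm is not described in this article, and the buffer contents and the `compression_ratio` helper are illustrative assumptions.

```python
import os
import zlib


def compression_ratio(data: bytes) -> float:
    """Compressed size divided by original size (lower means more compressible)."""
    return len(zlib.compress(data, 6)) / len(data)


SIZE = 1 << 20  # 1 MiB sample buffer

# Random bytes stand in for already-compressed content such as H.264 video.
random_data = os.urandom(SIZE)

# A short repeated pattern stands in for data a controller could easily reduce.
repetitive_data = b"AnandTech " * (SIZE // 10)

print(f"random:     {compression_ratio(random_data):.3f}")
print(f"repetitive: {compression_ratio(repetitive_data):.3f}")
```

The random buffer should come out at essentially its original size, while the repetitive one collapses to a tiny fraction. Data of the first kind is what exposes the problem described here.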

You can actually duplicate the bit-tech results without going through all of that trouble. All you need to do is write incompressible data to all pages of a SandForce drive (user accessible LBAs + spare area), TRIM the drive and then measure performance. You'll get virtually the same results as bit-tech:

| AS-SSD Incompressible Write Speed | Clean Performance | Dirty (All Blocks + Spare Area Filled) | After TRIM |
|---|---|---|---|
| SandForce SF-1200 (120GB) | 131.7 MB/s | 70.3 MB/s | 71 MB/s |

The question is why.

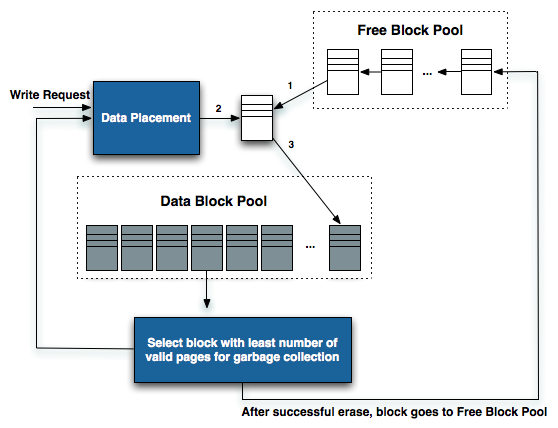

I spoke with SandForce about the issue late last year. To understand the cause we need to remember how SSDs work. When you go to write to an SSD, the controller must first determine where to write. When a drive is completely empty, this decision is pretty easy to make. When a drive is not completely full to the end user but all NAND pages are occupied (e.g. in a very well used state), the controller must first supply a clean/empty block for you to write to.

When you fill a SF drive with incompressible data, you're filling all user addressable LBAs as well as all of the drive's spare area. When the SF controller gets a request to overwrite one of these LBAs the drive has to first clean a block and then write to it. It's the block recycling path that causes the aforementioned problem.

In the SF-1200 SandForce can only clean/recycle blocks at a rate of around 80MB/s. Typically this isn't an issue because you won't be in a situation where you're writing to a completely full drive (all user LBAs + spare area occupied with incompressible data). However if you do create an environment where all blocks have data in them (which can happen over time) and then attempt to write incompressible data, the SF-1200 will be limited by its block recycling path.
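The bottleneck can be pictured with a deliberately simple model. This is a sketch under stated assumptions, not a description of SandForce firmware: it assumes data that fits into already-clean blocks is written at the clean speed, and everything after that is capped by the recycling rate. The function name, the pool sizes and the 6600 MB transfer size are hypothetical; the 131.7 MB/s and ~80 MB/s figures come from the text.

```python
def average_write_speed(total_mb: float, clean_pool_mb: float,
                        clean_speed: float, recycle_speed: float) -> float:
    """Average MB/s for a sequential write of total_mb of incompressible data.

    Data that fits in already-clean blocks is written at clean_speed. The
    remainder has to wait for blocks to be recycled, so it moves at the
    slower of the two rates.
    """
    fast_mb = min(total_mb, clean_pool_mb)
    slow_mb = total_mb - fast_mb
    seconds = fast_mb / clean_speed + slow_mb / min(clean_speed, recycle_speed)
    return total_mb / seconds


SF1200_CLEAN = 131.7    # MB/s, clean AS-SSD result quoted in this article
SF1200_RECYCLE = 80.0   # MB/s, approximate recycling rate quoted in this article
TRANSFER_MB = 6600.0    # hypothetical transfer size

for pool_mb in (TRANSFER_MB, TRANSFER_MB / 2, 0.0):
    speed = average_write_speed(TRANSFER_MB, pool_mb, SF1200_CLEAN, SF1200_RECYCLE)
    print(f"clean pool {pool_mb:6.0f} MB -> {speed:6.1f} MB/s")
```

With no clean blocks left, the model settles at the recycling rate. The measured dirty figure is somewhat lower still, so treat the model as an upper bound on the fully dirty case rather than a prediction.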

So why doesn't TRIMing the entire drive restore performance?

Remember what TRIM does. The TRIM command simply tells the controller what LBAs are no longer needed by the OS. It doesn't physically remove data from the SSD, it just tells the controller that it can remove the aforementioned data at its own convenience and in accordance with its own algorithms.

The best drives clean dirty blocks as late as possible without impacting performance. Aggressive garbage collection only increases write amplification and wear on the NAND, and as we've already established, SandForce doesn't really garbage collect aggressively. Pair a conservative garbage collection/block recycling algorithm with an attempt to write tons of incompressible data to an already full drive and you'll back yourself into a corner where the SF-1200 continues to be bottlenecked by the block recycling path. The only way to restore performance at this point is to secure erase the drive.
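The difference between "TRIMed" and "clean" is easy to lose, so here is a toy model of it. Everything in it is an illustrative assumption: real drives erase whole blocks rather than single pages, remap LBAs to physical pages, and schedule recycling with algorithms that are not public. The `ToyDrive` class and its methods are hypothetical names, not any real API.

```python
from enum import Enum


class PageState(Enum):
    ERASED = "erased"    # ready to accept a write
    VALID = "valid"      # holds data the OS still wants
    INVALID = "invalid"  # holds stale data; must be recycled before reuse


class ToyDrive:
    """Toy flash model with a 1:1 LBA-to-page mapping (a simplification)."""

    def __init__(self, num_pages: int) -> None:
        self.pages = [PageState.ERASED] * num_pages

    def write(self, lba: int) -> None:
        self.pages[lba] = PageState.VALID

    def trim(self, lba: int) -> None:
        # TRIM only marks the data as no longer needed; nothing is erased yet.
        if self.pages[lba] is PageState.VALID:
            self.pages[lba] = PageState.INVALID

    def recycle(self, max_pages: int) -> int:
        """Erase up to max_pages invalid pages and return how many were reclaimed."""
        reclaimed = 0
        for index, state in enumerate(self.pages):
            if reclaimed == max_pages:
                break
            if state is PageState.INVALID:
                self.pages[index] = PageState.ERASED
                reclaimed += 1
        return reclaimed

    def count(self, state: PageState) -> int:
        return self.pages.count(state)


drive = ToyDrive(8)
for lba in range(8):
    drive.write(lba)   # fill every page
for lba in range(8):
    drive.trim(lba)    # TRIM the whole drive

print("erased pages right after TRIM:", drive.count(PageState.ERASED))
drive.recycle(max_pages=3)  # a conservative controller reclaims only a little at a time
print("erased pages after a small recycling pass:", drive.count(PageState.ERASED))
```

Right after the TRIM no page is actually ready for a write. Pages only become writable as the recycling pass gets to them, which is why a fully TRIMed drive can still be limited by how fast it recycles.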

This is a real world performance issue on SF-1200 drives. Over time you'll find that when you go to copy a highly compressed file (e.g. H.264 video), your performance will drop to around 80MB/s. However, the rest of your performance will remain as high as always. This issue only impacts data that can't be further compressed/deduped by the SF controller. While SandForce has attempted to alleviate it in the SF-1200, I haven't seen any real improvements with the latest firmware updates. If you're using your SSD primarily to copy and store highly compressed files, you'll want to consider another drive.

Luckily for SandForce, the SF-2500 controller alleviates the problem. Here I'm running the same test as above: filling all blocks of the Vertex 3 Pro with incompressible data and then measuring sequential write speed. There's a performance drop, but it's nowhere near as significant as what we saw with the SF-1200:

| AS-SSD Incompressible Write Speed | Clean Performance | Dirty (All Blocks + Spare Area Filled) | After TRIM |
|---|---|---|---|
| SandForce SF-1200 (120GB) | 131.7 MB/s | 70.3 MB/s | 71 MB/s |
| SandForce SF-2500 (200GB) | 229.5 MB/s | 230.0 MB/s | 198.2 MB/s |

It looks like SandForce has increased the speed of its block recycling engine among other things, resulting in a much more respectable worst case scenario of ~200MB/s.

Verifying the Fix

I was concerned that perhaps SandForce simply optimized for the manner in which AS-SSD and Iometer write incompressible data. In order to verify the results I took a 6.6GB 720p H.264 movie and copied it from an Intel X25-M G2 SSD to one of two SF drives. The first was a SF-1200 based Corsair Force F120, and the second was an OCZ Vertex 3 Pro (SF-2500).

I measured both clean performance as well as performance after I'd filled all blocks on the drive. The results are below:

| 6.6GB 720p H.264 File Copy (X25-M G2 Source to Destination) | Clean Performance | Dirty (All Blocks + Spare Area Filled) | After TRIM |
|---|---|---|---|
| SandForce SF-1200 (120GB) | 138.6 MB/s | 78.5 MB/s | 81.7 MB/s |
| SandForce SF-2500 (200GB) | 157.5 MB/s | 158.2 MB/s | 157.8 MB/s |

As expected the SF-1200 drive drops from 138MB/s down to 81MB/s. The drive is bottlenecked by its block recycling path and performance never goes up beyond 81MB/s.

The SF-2000 however doesn't drop in performance. Brand new performance is at 157MB/s and post-torture it's still at 157MB/s. What's interesting however is that the incompressible file copy performance here is lower than what Iometer and AS-SSD would have you believe. Iometer warns that even its fully random data pattern can be defeated by drives with good data deduplication algorithms. Unless there's another bottleneck at work here, it looks like the SF-2000 is still reducing the data that Iometer is writing to the drive. The AS-SSD comparison actually makes a bit more sense since AS-SSD runs at a queue depth of 32 and this simple file copy is mostly at a queue depth of 1. Higher queue depths will make better use of parallel NAND channels and result in better performance.
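To put the file copy numbers in proportion, the short sketch below works out how much of its clean speed each drive keeps in the dirty and post-TRIM states. The figures are copied from the file copy table above; the dictionary layout and the `retained_percent` helper are just illustrative choices.

```python
# MB/s figures from the 6.6GB 720p H.264 file copy table.
results = {
    "SandForce SF-1200 (120GB)": {"clean": 138.6, "dirty": 78.5, "after_trim": 81.7},
    "SandForce SF-2500 (200GB)": {"clean": 157.5, "dirty": 158.2, "after_trim": 157.8},
}


def retained_percent(clean: float, later: float) -> float:
    """Share of clean throughput still available in a later state, in percent."""
    return 100.0 * later / clean


for drive, speeds in results.items():
    dirty = retained_percent(speeds["clean"], speeds["dirty"])
    trimmed = retained_percent(speeds["clean"], speeds["after_trim"])
    print(f"{drive}: dirty {dirty:.1f}% of clean, after TRIM {trimmed:.1f}% of clean")
```

By this measure the SF-1200 keeps a bit under 60% of its clean file copy speed even after TRIM, while the SF-2500 stays at essentially 100%.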

144 Comments


sheh - Thursday, February 17, 2011 - link

Why's data retention down from 10 years to 1 year as the rewrite limit is approached?

Does this mean after half the rewrites the retention is down to 5 years?

What happens after that year, random errors?

Is there drive logic (or standard software) to "refresh" a drive?

AnnihilatorX - Saturday, February 19, 2011 - link

Think about how a Flash cell works. There is a thick silicon dioxide barrier separating the floating gate from the transistor. The reason they have a limited write cycle is because the silicon dioxide layer is eroded when high voltages are required to pump electrons to the floating gate.

As the SiO2 is damaged, it is easier for the electrons in the floating gate to leak; eventually, when sufficient charge has leaked, the data is lost (flipped from 1 to 0).

bam-bam - Thursday, February 17, 2011 - link

Thanks for the great preview! Can’t wait to get a couple of these new SSD’s soon.

I’ll add them to an even more anxiously-awaited high-end SATA-III RAID Controller (Adaptec 6805) which is due out in March 2011. I’ll run them in RAID-0 and then see how they compare to my current setup:

Two (2) Corsair P256 SSD's attached to an Adaptec 5805 controller in RAID-0 with the most current Windows 7 64-bit drivers. I’m still getting great numbers with these drives, almost a year into heavy, daily use. The proof is in the pudding:

http://img24.imageshack.us/img24/6361/2172011atto....

(1500+ MB/s read speeds ain’t too bad for SATA-II based SSD’s, right?)

With my never-ending and completely insatiable need-for-speed, I can’t wait to see what these new SATA-III drives with the new Sand-Force controller and a (good-quality) RAID card will achieve!

Quindor - Friday, February 18, 2011 - link

Eeehrmm..... Please re-evaluate what you have written above and how to perform benchmarks.

I too own an Adaptec 5805 and it has 512MB of cache memory. So, if you run ATTO with a size of 256MB, this fits inside the memory cache. You should see performance of around 1600MB/sec from the memory cache; this is in no way related to what your storage subsystem can or cannot do. A single disk connected to it but just using cache will give you exactly the same values.

Please rerun your tests set to 2GB and you will get real-world results of what the storage behind the card can do.

Actually, I'm a bit surprised that your writes don't get the same values? Maybe you don't have your write cache set to write back mode? This will improve performance even more, but consider using a UPS or a battery backup cache module before doing so. Same thing goes for allowing disk cache or not. Not sure if these settings will affect your SSD's though.

Please, analyze your results if they are even possible before believing them. Each port can do around 300MB/sec, so 2x300MB/sec =/= 1500MB/sec that should have been your first clue. ;)

mscommerce - Thursday, February 17, 2011 - link

Super comprehensible and easy to digest. I think it's one of your best, Anand. Well done!

semo - Friday, February 18, 2011 - link

"if you don't have a good 6Gbps interface (think Intel 6-series or AMD 8-series) then you probably should wait and upgrade your motherboard first"

"Whenever you Sandy Bridge owners get replacement motherboards, this may be the SSD you'll want to pair with them"

So I gather AMD haven't been able to fix their SATA III performance issues. Was it ever discovered what the problem is?

HangFire - Friday, February 18, 2011 - link

The wording is confusing, but I took that to mean you're OK with Intel 6 or AMD 8.

Unfortunately, we may never know, as Anand rarely reads past page 4 or 5 of the comments.

I am getting expected performance from my C300 + 890GX.

HangFire - Friday, February 18, 2011 - link

OK here's the conclusion from 3/25/2010 SSD/Sata III article:

"We have to give AMD credit here. Its platform group has clearly done the right thing. By switching to PCIe 2.0 completely and enabling 6Gbps SATA today, its platforms won’t be a bottleneck for any early adopters of fast SSDs. For Intel these issues don't go away until 2011 with the 6-series chipsets (Cougar Point) which will at least enable 6Gbps SATA. "

So, I think he is associating "good 6Gbps interface" with 6&8 series, not "don't have" with 6&8.

semo - Friday, February 18, 2011 - link

Ok I think I get it thanks HangFire. I remember that there was an article on Anandtech that tested SSDs on AMD's chipsets and the results weren't as good as Intel's. I've been waiting ever since for a follow up article but AMD stuff doesn't get much attention these days.

BanditWorks - Friday, February 18, 2011 - link

So if MLC NAND mortality rate ("endurance") dropped from 10,000 cycles down to 5,000 with the transition to 34nm manufacturing tech., does that mean that the SLC NAND mortality rate of 100,000 cycles went down to ~ 50,000?

Sorry if this seems like a stupid question. *_*