OCZ's Fastest SSD, The IBIS and HSDL Interface Reviewed

by Anand Lal Shimpi on September 29, 2010 12:01 AM EST

Take virtually any modern-day SSD and measure how long it takes to launch a single application. You’ll usually notice a big advantage over a hard drive, but you’ll rarely find a difference between two different SSDs. Present-day desktop usage models can’t stress the performance that high-end SSDs are able to deliver. What really differentiates one drive from another is performance in heavy multitasking scenarios or in short bursts of heavy IO. Eventually this will change as the SSD install base grows and developers use the additional IO performance to enable new applications.

In the enterprise market, however, the workload is already there. The faster the SSD, the more users you can throw at a single server or SAN. There are effectively no limits to the IO performance needed in the high-end workstation and server markets.

These markets are used to throwing tens, if not hundreds, of physical disks at a problem. Even our upcoming server upgrade uses no fewer than fifty-two SSDs across our entire network, and we’re small beans in the grand scheme of things.

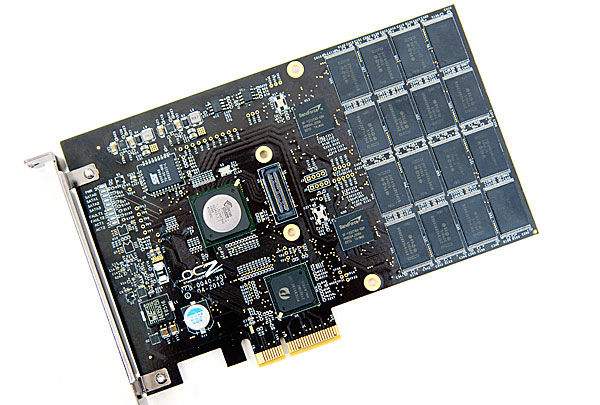

The appetite for performance is so great that many enterprise customers are finding the limits of SATA unacceptable. While we’re transitioning to 6Gbps SATA/SAS, for many enterprise workloads that’s not enough. Answering the call, many manufacturers have designed PCIe-based SSDs that do away with SATA as the final drive interface. The designs range from a bunch of SATA-based devices paired with a PCIe RAID controller on a single card to native PCIe controllers.

The OCZ RevoDrive, two SF-1200 controllers in RAID on a PCIe card

OCZ has been toying with this market for a while. The zDrive took four Indilinx controllers and put them behind a RAID controller on a PCIe card. The more recent RevoDrive took two SandForce controllers and did the same. The RevoDrive 2 doubles the controller count to four.
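Both designs hide several flash controllers behind one RAID controller, so the host sees a single volume. The article doesn’t name the RAID level; assuming a plain RAID 0 stripe with a hypothetical 64KB stripe size, a logical sector maps to a controller roughly like this (a sketch, not OCZ’s firmware logic):

```python
# Minimal RAID 0 striping sketch. The controller count and stripe size are
# hypothetical parameters, not confirmed values for the zDrive, RevoDrive or IBIS.
SECTOR_BYTES = 512


def locate(lba: int, n_controllers: int, stripe_sectors: int) -> tuple[int, int]:
    """Map a logical sector to (controller index, sector offset on that controller)."""
    stripe_index, offset = divmod(lba, stripe_sectors)
    controller = stripe_index % n_controllers     # stripes rotate across controllers
    local_stripe = stripe_index // n_controllers  # each controller packs its stripes
    return controller, local_stripe * stripe_sectors + offset


if __name__ == "__main__":
    stripe = 64 * 1024 // SECTOR_BYTES  # hypothetical 64KB stripe = 128 sectors
    for lba in (0, 127, 128, 256, 384):
        print(lba, "->", locate(lba, n_controllers=2, stripe_sectors=stripe))
```

With two controllers, sectors 0 to 127 land on controller 0 and sectors 128 to 255 on controller 1. A large sequential transfer therefore keeps every controller busy at once, which is where the throughput scaling comes from, while a request smaller than one stripe touches only a single controller.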

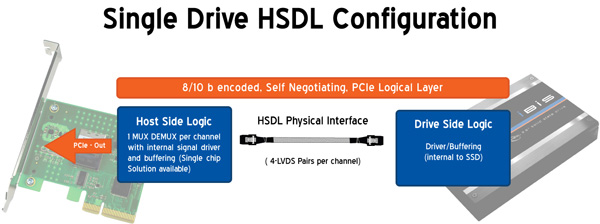

Earlier this year OCZ announced its intention to bring a new high-speed SSD interface to market. Frustrated with the slow progress of SATA interface speeds, OCZ wanted to introduce an interface that would allow greater performance scaling today. Dubbed the High Speed Data Link (HSDL), OCZ’s new interface delivers 2 to 4GB/s (that’s right, gigabytes) of aggregate bandwidth to a single SSD. It’s an absolutely absurd amount of bandwidth, definitely more than a single controller can feed today, which is why the first SSD to support it will be a multi-controller device with internal RAID.

Instead of relying on a SATA controller on your motherboard, HSDL SSDs feature a 4-lane PCIe SATA controller on the drive itself. HSDL is essentially a PCIe cabling standard that uses a standard SAS cable to carry four PCIe lanes between an SSD and your motherboard. On the system side you’ll just need a dumb card with a bit of logic to receive the cable and fan the signals out to a PCIe slot.
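The 2 to 4GB/s figure lines up with four PCIe lanes counted in both directions. The article doesn’t say which PCIe generation HSDL runs at, so this back-of-the-envelope sketch assumes PCIe 1.x (2.5GT/s) and PCIe 2.0 (5GT/s) signaling with 8b/10b encoding and ignores packet overhead:

```python
# Back-of-the-envelope bandwidth for a PCIe x4 link like the one HSDL carries.
# Assumption: PCIe 1.x/2.0 signaling with 8b/10b line coding (10 bits on the
# wire per data byte); protocol overhead (headers, flow control) is ignored.
GT_PER_S = {"PCIe 1.x": 2.5, "PCIe 2.0": 5.0}  # gigatransfers/s per lane


def lane_mb_per_s(gt_per_s: float) -> float:
    """Usable MB/s per lane, per direction, after 8b/10b encoding."""
    return gt_per_s * 1000 / 10  # 1 GT/s = 1000 Mbit/s raw; 10 wire bits per byte


def link_gb_per_s(gt_per_s: float, lanes: int = 4, both_directions: bool = True) -> float:
    """GB/s for a link of `lanes` lanes, optionally summed over both directions."""
    per_direction = lane_mb_per_s(gt_per_s) * lanes / 1000
    return per_direction * (2 if both_directions else 1)


if __name__ == "__main__":
    for gen, rate in GT_PER_S.items():
        one_way = link_gb_per_s(rate, both_directions=False)
        print(f"{gen} x4: {one_way:.1f} GB/s each way, {link_gb_per_s(rate):.1f} GB/s aggregate")
```

Under these assumptions an x4 link yields 2GB/s aggregate at PCIe 1.x rates and 4GB/s at PCIe 2.0 rates, which suggests the quoted range is a bidirectional total; any single transfer direction sees half of it.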

The first SSD to use HSDL is the OCZ IBIS. The spiritual successor to the Colossus, the IBIS incorporates four SandForce SF-1200 controllers in a single 3.5” chassis. The four controllers sit behind an internal Silicon Image 3124 RAID controller. This is the same controller used in the RevoDrive; it is natively a PCI-X controller and was picked to save cost. The 1GB/s of bandwidth you get from the PCI-X controller is routed to a Pericom PCIe x4 switch. The four PCIe lanes stemming from the switch are sent over the HSDL cable to the receiving card on the motherboard, where a chip on the card grabs the signal and passes it through to the PCIe bus. Minus the cable, this is basically a RevoDrive inside an aluminum housing. It’s an inelegant solution that works, but the real appeal would come from controller manufacturers and vendors designing native PCIe-to-HSDL controllers.
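Because every hop in the IBIS data path is in series, sustained throughput is capped by the slowest one. In this minimal sketch only the ~1GB/s PCI-X figure comes from the review; the ~280MB/s per SF-1200 and the assumption that the HSDL link runs at PCIe 2.0 x4 rates are illustrative guesses:

```python
# Series data path: sustained throughput can't exceed the slowest stage.
# Only the ~1GB/s PCI-X figure comes from the review; the other numbers are
# illustrative assumptions.
def bottleneck(stages: dict[str, float]) -> tuple[str, float]:
    """Return (name, MB/s) of the slowest stage in a series pipeline."""
    name = min(stages, key=lambda s: stages[s])
    return name, stages[name]


ibis_path = {
    "4x SF-1200 (assumed ~280MB/s each)": 4 * 280.0,
    "SiI 3124 on PCI-X (per the review)": 1000.0,
    "PCIe x4 over HSDL (assumed PCIe 2.0, one direction)": 2000.0,
}

if __name__ == "__main__":
    print("IBIS as built:", bottleneck(ibis_path))
    # Hypothetical native PCIe-to-HSDL design: drop the PCI-X hop entirely.
    native = {k: v for k, v in ibis_path.items() if "PCI-X" not in k}
    print("Native PCIe:", bottleneck(native))
```

Under these assumptions the PCI-X hop sets the 1GB/s ceiling, and removing it moves the limit to the flash controllers themselves, which is why a native PCIe-to-HSDL controller would be the more interesting design.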

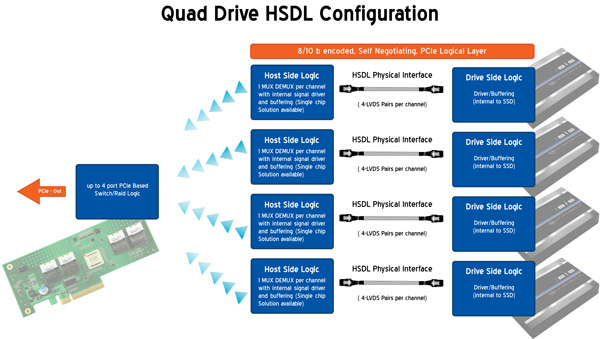

OCZ is also bringing to market a 4-port HSDL card with an onboard RAID controller ($69 MSRP). You’ll be able to RAID four IBIS drives together on a PCIe x16 card for an absolutely ridiculous amount of bandwidth. Ultimately, however, the attainable bandwidth depends on the controller and design used on the 4-port card. I’m still trying to get my hands on one to find out for myself.
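With four IBIS drives striped on the 4-port card, the array’s ceiling is the smaller of the drives’ combined bandwidth and whatever the card’s RAID controller and host link can pass through. The card’s real limit is untested, so it appears below as a hypothetical parameter; the ~1GB/s per drive reflects the IBIS’s internal PCI-X link:

```python
# Upper bound for a striped array of drives behind one RAID card.
# The card limits below are hypothetical; the real figure depends on the
# controller and design used on OCZ's 4-port card.
def array_ceiling_mb_s(drives: int, per_drive_mb_s: float, card_limit_mb_s: float) -> float:
    """No more than the drives can supply, and no more than the card can pass through."""
    return min(drives * per_drive_mb_s, card_limit_mb_s)


if __name__ == "__main__":
    per_drive = 1000.0  # ~1GB/s per IBIS, set by its internal PCI-X link
    for card_limit in (2000.0, 4000.0, 8000.0):  # hypothetical card ceilings
        print(f"card limit {card_limit:.0f}MB/s -> array ceiling "
              f"{array_ceiling_mb_s(4, per_drive, card_limit):.0f}MB/s")
```

A card that can move more than 4GB/s would leave the drives as the bottleneck; anything slower makes the card itself the limit.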

74 Comments


Johnsy - Wednesday, September 29, 2010

I would like to echo the comments made by disappointed1, particularly with regard to OCZ's attempt to introduce a proprietary standard when a cabling spec for PCIe already exists. It's all well and good having intimate relationships with representatives of companies whose products you review, but having read this (and a couple of other) articles, I do find myself wondering who the real beneficiary is...

63jax - Wednesday, September 29, 2010

Although I am amazed by those numbers, you should put the ioDrive there as a standard.

iwodo - Wednesday, September 29, 2010

I recently posted on the AnandTech forum about SSDs, "When we hit the laws of diminishing returns": http://forums.anandtech.com/showthread.php?t=21068...

Less than 10 days later, Anand seems to have answered every question we discussed in the thread, from connection port to software usage.

The review pretty much proves my point. After the current generation of SandForce SSDs, we are already hitting the law of diminishing returns. A SATA 6Gbps SSD, or even a quad-SandForce SSD like the IBIS, won't give us any perceptible speed improvement in 90% of our day-to-day usage.

Until software or the OS takes advantage of the massive IO that SSDs offer, current SandForce SSDs are the best investment in terms of upgrades.

iwodo - Wednesday, September 29, 2010

I forgot to mention: with next-gen SSDs hitting 550MB/s and even slightly more IOPS, there is absolutely NO NEED for HSDL in the consumer space. While SATA is only half duplex, benchmarks show no evidence that this limitation causes any latency problems.

davepermen - Thursday, September 30, 2010

Indeed. The next-gen Intel SSD on SATA 3 will most likely deliver the same as this SSD, but without all the proprietary crap. Sure, the numbers will be lower, but actual performance will most likely be the same, much cheaper, and very flexible (just RAID them if you want, or JBOD them, or whatever). This stuff is bullshit for customers. It sounds like some geek created a funky setup to combine its SSDs for great performance, and that's it.

Oh, other than that, I bet the latency will be higher on these OCZ drives just because of all the indirection. And latency is the number one thing that makes you feel the difference between SSDs.

In short, that product is absolutely useless crap.

So far, I'm still happy with my Intel Gen1 and Gen2. I'll wait a bit to find a new device that gives me a really noticeable difference and doesn't take away any of the flexibility I have right now with my simple 1-SATA-drive setups.

Anand and OCZ, always a strange combination :)

viewwin - Wednesday, September 29, 2010

I wonder what Intel thinks about a new competing cable design?

davepermen - Thursday, September 30, 2010

I bet they don't even know, not that they care. Their SSDs will deliver much more for the customer: an easy, standards-based connection that exists in ANY current system, RAIDability, TRIM, and most likely about the same performance experience as this device, but at a much, much lower cost.

tech6 - Wednesday, September 29, 2010

Since this is really just a cable-attached SSD card, I don't see the need for yet another protocol/connection standard. The concept of RAID upon RAID also seems somewhat redundant. I am also unclear as to what market this is aimed at. The price excludes the mass desktop market, yet it also isn't aimed at the enterprise data center; that leaves only workstation power users, who are not a large market. Given the small target audience, motherboard makers will most likely not invest their resources in supporting HSDL on their motherboards.

Stuka87 - Wednesday, September 29, 2010

It's a very interesting concept, and the performance is of course incredible. But like you mentioned, I just can't see it being worth the money at this point. It is simpler than building your own RAID, as you just plug it in and are done with it. But if motherboard makers get on board and the interface gains some traction, I could certainly see it taking over from SAS/SATA as the interface of choice in the future. I think OCZ is smart to offer it as a free and open standard. Offering a new standard for free has worked very well for other companies in the past, especially small ones.

nirolf - Wednesday, September 29, 2010

<<Note that peak low queue-depth write speed dropped from ~233MB/s down to 120MB/s. Now here’s performance after the drive has been left idle for half an hour:>>

Isn't this a problem in a server environment? Maybe some servers never get half an hour of idle time.