OCZ's Fastest SSD, The IBIS and HSDL Interface Reviewed

by Anand Lal Shimpi on September 29, 2010 12:01 AM EST

Take virtually any modern day SSD and measure how long it takes to launch a single application. You’ll usually notice a big advantage over a hard drive, but you’ll rarely find a difference between two different SSDs. Present day desktop usage models aren’t able to stress the performance high end SSDs are able to deliver. What differentiates one drive from another is really performance in heavy multitasking scenarios or short bursts of heavy IO. Eventually this will change as the SSD install base increases and developers can use the additional IO performance to enable new applications.

In the enterprise market however, the workload is already there. The faster the SSD, the more users you can throw at a single server or SAN. There are effectively no limits to the IO performance needed in the high end workstation and server markets.

These markets are used to throwing tens if not hundreds of physical disks at a problem. Even our upcoming server upgrade uses no less than fifty two SSDs across our entire network, and we’re small beans in the grand scheme of things.

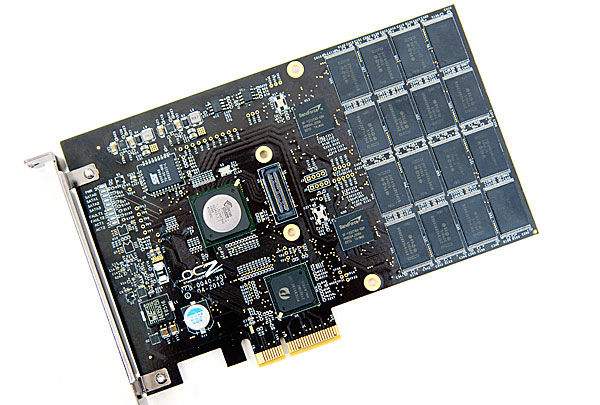

The appetite for performance is so great that many enterprise customers are finding the limits of SATA unacceptable. While we’re transitioning to 6Gbps SATA/SAS, for many enterprise workloads that’s not enough. Answering the call many manufacturers have designed PCIe based SSDs that do away with SATA as a final drive interface. The designs can be as simple as a bunch of SATA based devices paired with a PCIe RAID controller on a single card, to native PCIe controllers.

The OCZ RevoDrive, two SF-1200 controllers in RAID on a PCIe card

OCZ has been toying in this market for a while. The zDrive took four Indilinx controllers and put them behind a RAID controller on a PCIe card. The more recent RevoDrive took two SandForce controllers and did the same. The RevoDrive 2 doubles the controller count to four.

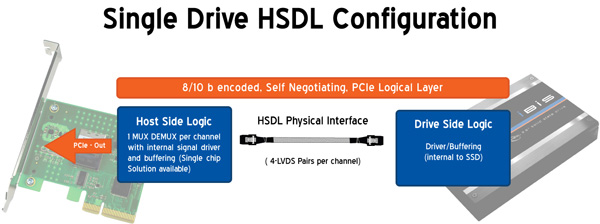

Earlier this year OCZ announced its intention to bring a new high speed SSD interface to the market. Frustrated with the slow progress of SATA interface speeds, OCZ wanted to introduce an interface that would allow greater performance scaling today. Dubbed the High Speed Data Link (HSDL), OCZ’s new interface delivers 2 - 4GB/s (that’s right, gigabytes) of aggregate bandwidth to a single SSD. It’s an absolutely absurd amount of bandwidth, definitely more than a single controller can feed today - which is why the first SSD to support it will be a multi-controller device with internal RAID.
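As a rough check on where a 2 - 4GB/s figure can come from, the sketch below works out the raw bandwidth of a four-lane PCIe link. The per-lane signaling rates (2.5GT/s for PCIe 1.x, 5GT/s for PCIe 2.0) and the 8b/10b encoding are assumptions taken from the PCIe specifications rather than from OCZ, the function name is made up for illustration, and real-world throughput will be lower once protocol overhead is counted.

```python
# Rough PCIe link bandwidth arithmetic (an illustration, not OCZ's published math).
# Assumptions: PCIe 1.x signals at 2.5 GT/s per lane, PCIe 2.0 at 5 GT/s,
# and both use 8b/10b encoding (8 data bits for every 10 bits on the wire).

GT_PER_S = {"1.x": 2.5, "2.0": 5.0}  # giga-transfers per second, per lane


def pcie_bandwidth_mb_s(gen: str, lanes: int) -> tuple:
    """Return (per-direction, aggregate) bandwidth in MB/s before protocol overhead."""
    bits_per_s = GT_PER_S[gen] * 1e9 * 8 / 10   # strip the 8b/10b encoding overhead
    per_lane_mb_s = bits_per_s / 8 / 1e6        # bits -> bytes -> MB
    per_direction = per_lane_mb_s * lanes
    return per_direction, per_direction * 2     # PCIe links are full duplex


for gen in ("1.x", "2.0"):
    one_way, both_ways = pcie_bandwidth_mb_s(gen, lanes=4)
    print(f"PCIe {gen} x4: {one_way:.0f} MB/s each way, {both_ways:.0f} MB/s aggregate")
```

Under these assumptions an x4 link works out to 2000MB/s aggregate on PCIe 1.x and 4000MB/s on PCIe 2.0, which lines up with the 2 - 4GB/s range quoted above.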

Instead of relying on a SATA controller on your motherboard, HSDL SSDs feature a 4-lane PCIe SATA controller on the drive itself. HSDL is essentially a PCIe cable standard that uses a standard SAS cable to carry four PCIe lanes between an SSD and your motherboard. On the system side you’ll just need a dumb card with some amount of logic to grab the cable and fan the signals out to a PCIe slot.

The first SSD to use HSDL is the OCZ IBIS. As the spiritual successor to the Colossus, the IBIS incorporates four SandForce SF-1200 controllers in a single 3.5” chassis. The four controllers sit behind an internal Silicon Image 3124 RAID controller. This is the same controller used in the RevoDrive which is natively a PCI-X controller, picked to save cost. The 1GB/s of bandwidth you get from the PCI-X controller is routed to a Pericom PCIe x4 switch. The four PCIe lanes stemming from the switch are sent over the HSDL cable to the receiving card on the motherboard. The signal is then grabbed by a chip on the card and passed through to the PCIe bus. Minus the cable, this is basically a RevoDrive inside an aluminum housing. It's a not-very-elegant solution that works, but the real appeal would be controller manufacturers and vendors designing native PCIe-to-HSDL controllers.
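To see where a chain like this tops out, here is a minimal sketch that treats each hop as a pipe and takes the narrowest one. The 1GB/s PCI-X figure comes from the paragraph above; the 300MB/s per SATA 3Gbps port and 250MB/s per PCIe 1.x lane are nominal values assumed for illustration, and the stage labels and function name are hypothetical.

```python
# Back-of-the-envelope bottleneck estimate for the IBIS data path.
# Assumptions: 300 MB/s nominal per SATA 3Gbps port and 250 MB/s per PCIe 1.x
# lane (per direction). The 1 GB/s PCI-X figure is the one quoted in the article.

SATA_3G_MB_S = 300        # assumed ceiling per SF-1200 behind the RAID controller
PCIE_1X_LANE_MB_S = 250   # assumed per-lane, per-direction PCIe 1.x rate

stages = {
    "4x SF-1200 over SATA": 4 * SATA_3G_MB_S,
    "SiI 3124 on PCI-X": 1000,
    "PCIe x4 over HSDL cable": 4 * PCIE_1X_LANE_MB_S,
}


def bottleneck(path: dict) -> tuple:
    """Return (stage name, MB/s) for the slowest stage in the path."""
    name = min(path, key=path.get)
    return name, path[name]


name, mb_s = bottleneck(stages)
print(f"Ceiling: about {mb_s} MB/s, limited by {name}")
```

With these nominal numbers the four drives could in theory supply more than the PCI-X and PCIe x4 stages can carry, so the ceiling for transfers in one direction sits at roughly 1GB/s regardless of how fast the flash is.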

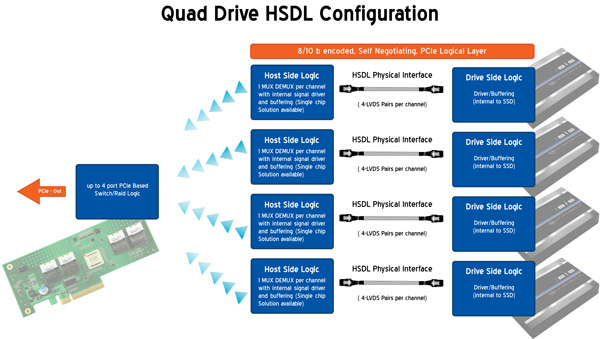

OCZ is also bringing to market a 4-port HSDL card with a RAID controller on board ($69 MSRP). You’ll be able to RAID four IBIS drives together on a PCIe x16 card for an absolutely ridiculous amount of bandwidth. The attainable bandwidth ultimately boils down to the controller and design used on the 4-port card, however. I'm still trying to get my hands on one to find out for myself.

74 Comments

Ushio01 - Wednesday, September 29, 2010 - link

My mistake.

Kevin G - Wednesday, September 29, 2010 - link

I have had a feeling that SSD's would quickly become limited by the SATA specification. Much of what OCZ is doing here isn't bad in principle. Though I have plenty of questions about it.

Can the drive controller fall back to SAS when plugged into a motherboard's SAS port? (Reading the article, I suspect a very strong 'no' answer.) Can the HSDL spec and drive RAID controller be adapted to support this?

What booting limitations exist? Is a RAID driver needed for the controller inside the IBIS drive?

Are the individual SSD controllers connected to the drive's internal RAID controller seen by the operating system or BIOS? (I'd guess 'no' but not explicitly stated.)

Is the RAID controller on the drive seen as a PCI-E device?

Is the single port host card seen by the system's operating system or is it entirely passive? Is the four HSDL port card seen differently?

Does the SAS cabling handle PCI-E 3.0 signaling?

Is OCZ working on a native HSDL controller that'll convert PCI-E to ONFI? Would such a chip be seen as a regular old IDE device for easy OS installation and support for legacy systems? Would such a chip be able to support TRIM?

disappointed1 - Wednesday, September 29, 2010 - link

I've read Anandtech for years and I had to register and comment for the first time on what a poor article this is - the worst on an otherwise impressive site.

"Answering the call many manufacturers have designed PCIe based SSDs that do away with SATA as a final drive interface. The designs can be as simple as a bunch of SATA based devices paired with a PCIe RAID controller on a single card, to native PCIe controllers."

-And IBIS is not one of them - if there is a bunch of NAND behind a SATA RAID controller, then the final drive interface is STILL SATA.

"Dubbed the High Speed Data Link (HSDL), OCZ’s new interface delivers 2 - 4GB/s (that’s right, gigabytes) of bi-directional bandwidth to a single SSD."

-WRONG, HSDL pipes 4 PCIe 1.1 lanes to a PCIe to PCI-X bridge chip (as per the RevoDrive), connected to a SiI 3124 PCI-X RAID controller, out to 4 RAID'ed SF-1200 drives. And PCIe x4 is 1000MB/s bi-directional, or 2000MB/s aggregate - not that it matters, since the IBIS SSDs aren't going to see that much bandwidth anyway - only the regular old 300MB/s any other SATA300 drive would see. This is nothing new; it's a RevoDrive on a cable. We can pull this out of the article, but you're covering it up with as much OCZ marketing as possible.

Worse, it's all connected through a proprietary interface instead of the PCI Express External Cabling, spec'd February 2007 (http://www.pcisig.com/specifications/pciexpress/pc...

By your own admission, you have a black box that is more expensive and slower than a native PCIe x4 2.0, 4 drive RAID-0. You can't upgrade the number of drives or the drive capacity, you can't part it out to sell, it's bigger than 4x2.5" drives, AND you don't get TRIM - the only advantage to using a single, monolithic drive. It's built around a proprietary interface that could (hopefully) be discontinued after this product.

This should have been a negative review from the start instead of a glorified OCZ press release. I hope you return to objectivity and fix this article.

Oh, and how exactly will the x16 card provide "an absolutely ridiculous amount of bandwidth" in any meaningful way if it's a PCIe x16 to 4x PCIe x4 switch? You'd have to RAID the 4 IBIS drives in software and you're still stuck with all the problems above.

Anand Lal Shimpi - Wednesday, September 29, 2010 - link

I appreciate you taking the time to comment. I never want to bring my objectivity into question but I will be the first to admit that I'm not perfect. Perhaps I can shed more light on my perspective and see if that helps clear things up.

A standalone IBIS drive isn't really all that impressive. You're very right, it's basically a RevoDrive in a chassis (I should've mentioned this from the start, I will make it more clear in the article). But you have to separate IBIS from the idea behind HSDL.

As I wrote in the article the exciting thing isn't the IBIS itself (you're better off rolling your own with four SF-1200 SSDs and your own RAID controller). It's HSDL that I'm more curious about.

I believe the reason to propose HSDL vs. PCIe External Cabling has to do with the interface requirements for firmware RAID should you decide to put several HSDL drives on a single controller, however it's not something that I'm 100% on. From my perspective, if OCZ keeps the standard open (and free) it's a non-issue. If someone else comes along and promotes the same thing via PCIe that will work as well.

This thing only has room to grow if controller companies and/or motherboard manufacturers introduce support for it. If the 4-port card turns into a PCIe x16 to 4x PCIe x4 switch then the whole setup is pointless. As I mentioned in the article, there's little chance for success here but that doesn't mean I believe it's a good idea to discourage the company from pursuing it.

Take care,

Anand

Anand Lal Shimpi - Wednesday, September 29, 2010 - link

I've clarified the above points in the review, hopefully this helps :)

Take care,

Anand

disappointed1 - Thursday, September 30, 2010 - link

Thanks for the clarification Anand.

Guspaz - Wednesday, September 29, 2010 - link

But why is HSDL interesting in the first place? Maybe I'm missing some use case, but what advantage does HSDL present over just skipping the cable and plugging the SSD directly into the PCIe slot?

I might see the concept of four drives plugged into a 16x controller, each getting 4x bandwidth, but then the question is "Why not just put four times the controllers on a single 16x card."

There's so much abstraction here... We've got flash -> sandforce controller -> sata -> raid controller -> pci-x -> pci-e -> HSDL -> pci-e. That's a lot of steps! It's not like there aren't PCIe RAID controllers out there... If they don't have any choice but to stick to SATA (and I'd point out that other manufacturers, like Fusion-IO decided to just go native PCIe) due to SSD controller availability, why not just use PCIe RAID controller and shorten the path to flash -> sandforce controller -> raid controller -> pci-e?

This just seems so convoluted, while other products like the ioDrive just bypass all this.

bman212121 - Wednesday, September 29, 2010 - link

What doesn't make sense for this setup is how it would be beneficial over just using a hardware RAID card like the Areca 1880. Even with a 4 port HSDL card you would need to tie the drives together somehow. (software raid? Drive pooling?) Then you have to think about redundancy. Doing raid 5 with 4 Ibis drives you'd lose an entire drive. Take the cards out of the drives, plug them all into one Areca card and you would have redundancy, RAID over all drives + hotswap, and drive caching. (RAM on the Areca card would still be faster than 4 ibis)

Not seeing the selling point of this technology at all.
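The RAID 5 point in the comment above (four drives, one drive's worth of capacity given up) can be sketched as follows. The 240GB per-drive capacity is a placeholder chosen for illustration, not an actual IBIS configuration, and the function name is hypothetical.

```python
# Usable capacity for RAID 0 vs RAID 5 (illustrative only).
# DRIVE_GB is a placeholder value, not an actual IBIS capacity.

DRIVE_GB = 240  # hypothetical per-drive capacity


def usable_gb(level: int, drives: int, drive_gb: int) -> int:
    """Return usable capacity in GB for a RAID 0 or RAID 5 array."""
    if level == 0:
        return drives * drive_gb            # striping only, no redundancy
    if level == 5:
        if drives < 3:
            raise ValueError("RAID 5 needs at least three drives")
        return (drives - 1) * drive_gb      # one drive's worth goes to parity
    raise ValueError(f"unsupported RAID level: {level}")


for level in (0, 5):
    print(f"RAID {level} across 4 drives: {usable_gb(level, 4, DRIVE_GB)} GB usable")
```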

rbarone69 - Thursday, September 30, 2010 - link

In our cabinets filled with blade servers and 'pizza box' servers we have limited space and a nightmare of cables. We cannot afford to use 3.5" boxes of chips and we don't want to manage an extra bundle of cables. Having a small single 'extension cord' allows us to store storage in another area. So having this kind of bandwidth dense external cable does help.

Cabling is a HUGE problem for me... Having fewer cables is better so building the controllers on the drives themselves where we can simply plug them into a port is attractive. Our SANs do exactly this over copper using iSCSI. Unfortunately these drives aren't SANs with redundant controllers, power supplies etc...

Software raid systems forced to use the CPU for parity calcs etc... YUK Unless somehow they can be linked and RAID supported between the drives automagically?

For supercomputing systems that can deal with drive failures better, I can see this drive being the perfect fit.

disappointed1 - Wednesday, September 29, 2010 - link

Can the drive controller fall back to SAS when plugged into a motherboard's SAS port? (Reading the article, I suspect a very strong 'no' answer.) Can the HSDL spec and drive RAID controller be adapted to support this?

-No, SAS is a completely different protocol and IBIS houses a SATA controller.

-HSDL is just an interface to transport PCIe lanes externally, so you could, in theory, connect it to an SAS controller.

What booting limitations exist? Is a RAID driver needed for the controller inside the IBIS drive?

-Yes, you need a RAID driver for the SiI 3124.

Are the individual SSD controllers connected to the drive's internal RAID controller seen by the operating system or BIOS? (I'd guess 'no' but not explicitly stated.)

-Neither, they only see the SiI 3124 SATA RAID controller.

Is the RAID controller on the drive seen as a PCI-E device?

-Probably.

Is the single port host card seen by the system's operating system or is entirely passive?

-Passive, the card just pipes the PCIe lanes to the SATA controller.

Is the four HSDL port card seen differently?

-Vaporware at this point - I would guess it is a PCIe x16 to four PCIe x4 switch.

Does the SAS cabling handle PCI-E 3.0 signaling?

-The current card and half-meter cable are PCIe 1.1 only at this point. The x16 card is likely a PCIe 2.0 switch to four PCIe x4 HSDLs. PCIe 3.0 will share essentially the same electrical characteristics as PCIe 2.0, but it doesn't matter since you'd need a new card with a PCIe 3.0 switch and a new IBIS with a PCIe 3.0 SATA controller anyway.

Is OCZ working on a native HSDL controller that'll convert PCI-E to ONFI? Would such a chip be seen as a regular old IDE device for easy OS installation and support for legacy systems? Would such a chip be able to support TRIM?

That's something I'd love to see and it would "do away with SATA as a final drive interface", but I expect it to come from Intel and not somebody reboxing old tech (OCZ).