OCZ's Fastest SSD, The IBIS and HSDL Interface Reviewed

by Anand Lal Shimpi on September 29, 2010 12:01 AM EST

Take virtually any modern day SSD and measure how long it takes to launch a single application. You’ll usually notice a big advantage over a hard drive, but you’ll rarely find a difference between two different SSDs. Present day desktop usage models aren’t able to stress the performance high end SSDs are able to deliver. What differentiates one drive from another is really performance in heavy multitasking scenarios or short bursts of heavy IO. Eventually this will change as the SSD install base increases and developers can use the additional IO performance to enable new applications.

In the enterprise market however, the workload is already there. The faster the SSD, the more users you can throw at a single server or SAN. There are effectively no limits to the IO performance needed in the high end workstation and server markets.

These markets are used to throwing tens if not hundreds of physical disks at a problem. Even our upcoming server upgrade uses no less than fifty two SSDs across our entire network, and we’re small beans in the grand scheme of things.

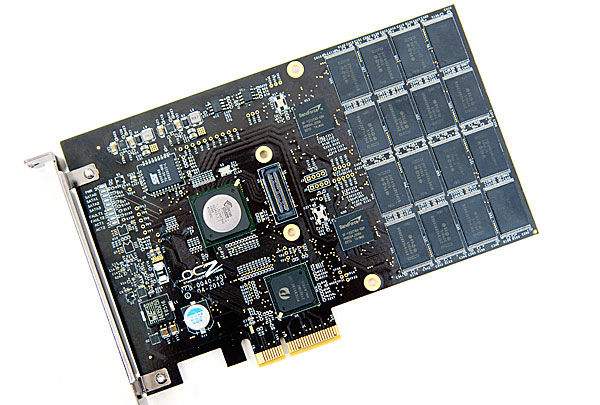

The appetite for performance is so great that many enterprise customers are finding the limits of SATA unacceptable. While we’re transitioning to 6Gbps SATA/SAS, for many enterprise workloads that’s not enough. Answering the call, many manufacturers have designed PCIe based SSDs that do away with SATA as a final drive interface. The designs range from a bunch of SATA based devices paired with a PCIe RAID controller on a single card to native PCIe controllers.

The OCZ RevoDrive, two SF-1200 controllers in RAID on a PCIe card

OCZ has been toying in this market for a while. The zDrive took four Indilinx controllers and put them behind a RAID controller on a PCIe card. The more recent RevoDrive took two SandForce controllers and did the same. The RevoDrive 2 doubles the controller count to four.

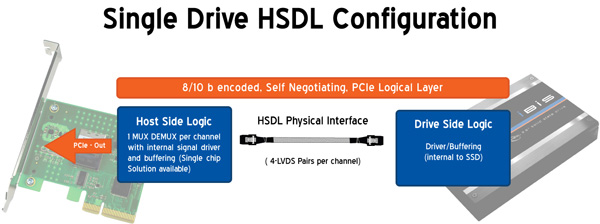

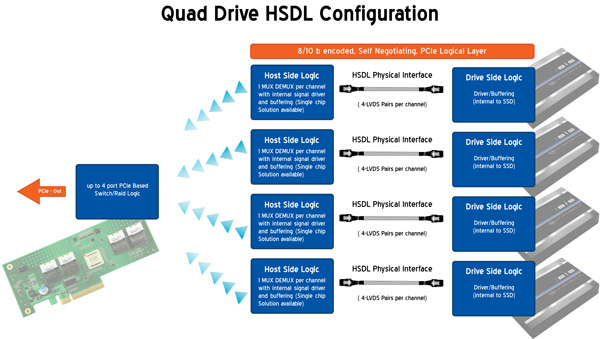

Earlier this year OCZ announced its intention to bring a new high speed SSD interface to the market. Frustrated with the slow progress of SATA interface speeds, OCZ wanted to introduce an interface that would allow greater performance scaling today. Dubbed the High Speed Data Link (HSDL), OCZ’s new interface delivers 2 - 4GB/s (that’s right, gigabytes) of aggregate bandwidth to a single SSD. It’s an absolutely absurd amount of bandwidth, definitely more than a single controller can feed today - which is why the first SSD to support it will be a multi-controller device with internal RAID.

Instead of relying on a SATA controller on your motherboard, HSDL SSDs feature a 4-lane PCIe SATA controller on the drive itself. HSDL is essentially a PCIe cable standard that uses a standard SAS cable to carry four PCIe lanes between an SSD and your motherboard. On the system side you’ll just need a dumb card with some amount of logic to grab the cable and fan the signals out to a PCIe slot.

The first SSD to use HSDL is the OCZ IBIS. As the spiritual successor to the Colossus, the IBIS incorporates four SandForce SF-1200 controllers in a single 3.5” chassis. The four controllers sit behind an internal Silicon Image 3124 RAID controller. This is the same controller used in the RevoDrive; it is natively a PCI-X controller, picked to save cost. The 1GB/s of bandwidth you get from the PCI-X controller is routed to a Pericom PCIe x4 switch. The four PCIe lanes stemming from the switch are sent over the HSDL cable to the receiving card on the motherboard. The signal is then grabbed by a chip on the card and passed through to the PCIe bus. Minus the cable, this is basically a RevoDrive inside an aluminum housing. It's a not-very-elegant solution that works, but the real appeal would be controller manufacturers and vendors designing native PCIe-to-HSDL controllers.
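
To put rough numbers on that chain, here is a back-of-the-envelope sketch in Python. The 1GB/s PCI-X figure and the four PCIe lanes come from the description above. The per-lane PCIe rates (250MB/s for Gen1, 500MB/s for Gen2, per direction), the 64-bit/133MHz PCI-X configuration, and the idea that each SandForce controller sits on its own 3Gbps SATA port are my assumptions, and the function names are purely illustrative. These are theoretical peaks, not measured throughput.

```python
# Theoretical peak bandwidth of each hop in the IBIS data path, in MB/s.
# All figures are decimal megabytes per second and ignore protocol overhead.

# Assumption: each SF-1200 uses a 3Gbps SATA port; 8b/10b encoding leaves 80%.
SATA_3G_MBPS = 3000 * 8 / 10 / 8  # 300 MB/s per port


def pcix_mbps(width_bits=64, clock_mhz=133.33):
    """Peak PCI-X bandwidth (assumed 64-bit bus at 133MHz)."""
    return width_bits * clock_mhz / 8


def pcie_mbps(lanes, gen):
    """Peak PCIe bandwidth per direction for Gen1 or Gen2 lanes."""
    per_lane = {1: 250.0, 2: 500.0}[gen]
    return lanes * per_lane


def bottleneck(stages):
    """Return (name, MB/s) of the slowest hop in a dict of stages."""
    name = min(stages, key=stages.get)
    return name, stages[name]


def ibis_path(pcie_gen):
    """Hops between the flash controllers and the host PCIe slot."""
    return {
        "4x SATA to SF-1200": 4 * SATA_3G_MBPS,
        "PCI-X bridge": pcix_mbps(),
        "PCIe x4 over HSDL": pcie_mbps(4, pcie_gen),
    }


if __name__ == "__main__":
    # The PCIe generation of the link is an assumption, so show both.
    for gen in (1, 2):
        name, mbps = bottleneck(ibis_path(gen))
        print(f"PCIe Gen{gen}: slowest hop is {name} at about {mbps:.0f} MB/s")
```

Under these assumptions, Gen2 lanes leave the PCI-X bridge as the narrowest hop at roughly 1GB/s, while Gen1 lanes make the x4 link itself about as narrow. Either way the cable is not the limit in this first design, which is why native PCIe-to-HSDL controllers are the more interesting prospect.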

OCZ is also bringing to market a 4-port HSDL card with a RAID controller on board ($69 MSRP). You’ll be able to RAID four IBIS drives together on a PCIe x16 card for an absolutely ridiculous amount of bandwidth. The attainable bandwidth ultimately boils down to the controller and design used on the 4-port card, however. I'm still trying to get my hands on one to find out for myself.

74 Comments


clovis501 - Wednesday, September 29, 2010 - link

If this innovation eventually makes its way down to personal computers, it could simplify board design by allowing us to do away with the ever-changing SATA standard. A PCI bus for all drives, and so much bandwidth that any bus-level bottleneck would be a thing of the past. One Bus to rule them all!

LancerVI - Wednesday, September 29, 2010 - link

One bus to rule them all! That's a great point. One can hope. That would be great!

AstroGuardian - Wednesday, September 29, 2010 - link

I want AT to show me how long it will take to install Windows 7 from a fast USB stick and install all the biggest and most IO hungry apps. Then I want to see how long it will take to start them all @ the same time, having been put in the Start-up folder. Then I want to see how well 5 virtual machines would work doing some synthetic benchmarks (each one @ the same time) under Windows 7. Then I will have a clear view of how fast these SSD monsters are.

Minion4Hire - Wednesday, September 29, 2010 - link

Well aren't you demanding... =p

Perisphetic - Thursday, September 30, 2010 - link

...or the past.

Well it's probably deja vu, sounds like it.

This is exactly what the MCA (Micro Channel Architecture) bus did back in the day. My IBM PS/2 35 SX's hard drive connected directly to this bus, which was also the way the plug-in cards connected...

jonup - Wednesday, September 29, 2010 - link

"Even our upcoming server upgrade uses no less than fifty two SSDs across our entire network, and we’re small beans in the grand scheme of things."

That's why the prices of SSDs stay so high. The demand in the server market is way too high. Manufacturers do not need to fight for the mainstream consumer.

mckirkus - Wednesday, September 29, 2010 - link

I hate to feed trolls but this one is so easy to refute...

The uptake of SSDs in the enterprise ultimately makes them cheaper/faster for consumers. If demand increases so does production. Also enterprise users buy different drives, the tech from those fancy beasts typically ends up in consumer products.

The analogy is that big car manufacturers have race teams for bleeding edge tech. If Anand bought a bunch of track ready Ferraris it wouldn't make your Toyota Yaris more expensive.

Flash production will ramp up to meet demand. Econ 101.

jonup - Wednesday, September 29, 2010 - link

Except that the supply of NAND Flash is limited, while the demand for Ferraris does affect the demand for Yarises. Further, advances in technology do not have anything to do with the shortage of Flash. Still further, the supply of Flash is very inelastic due to the high cost of entry and possibly limited supply of raw materials (read silicon).

p.s. Do yourself a favor, do not teach me economics.

jonup - Wednesday, September 29, 2010 - link

Sorry for the bump, but in my original message I simply expressed my surprise. I was not aware of the fact that SSDs are so widely available/used on the commercial side.

Ushio01 - Wednesday, September 29, 2010 - link

I believe the random write and read graphs have been mixed up.