Quad Xeon 7500, the Best Virtualized Datacenter Building Block?

by Johan De Gelas on August 10, 2010 5:10 PM EST- Posted in

- IT Computing

Stress Testing the High End

Our previous vApus Mark I gave an idea on how well systems perform when running several virtualized “heavy duty applications”: complex network bandwidth gobbling web servers, large OLAP databases, and write intensive OLTP databases. Our benchmark was mostly based on vApus, a software client that fires off requests as if real users were stressing the server. Several client machines run with a vApus “slave” instance and a “master” vApus instance manages them (for example: start tests in sync) and collects the end results.

The first version of vApus had several limitations: it could simulate a maximum of about 1500 users per client (a limit of 32-bit Windows based software) and the number of clients that could be kept in sync was also limited. In the meantime, the core count of the servers that we test has been increasing at an almost ridiculous pace. When the first lines of vApus were written (at the end of 2006), octal core servers were considered the high-end. Only four years later we are now looking at 64-thread and 48-core monsters. Our ambitious way of benchmarking (simulating real-world users, not scripting benchmarks) resulted in scalability problems.

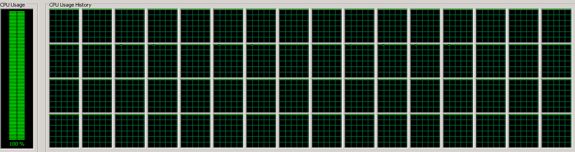

The lead developer of vApus, Dieter Vandroemme, decided to take all the lessons learned from 2.5 years of vApus development and apply them to a new vApus, built from scratch. Based on a new .NET 4.0 and 64-bit Windows foundation, and after spending a lot of time on software tuning, Dieter came up with a new vApus Client that was capable of producing 10,000 threads in about 3.5 seconds; up to 15,000 threads can be active on one client. If you know that every simulated user needs one thread, you’ll understand why this is very cool: we can now test extremely strong servers with only one humble client. A Core i7-750 (2.66GHz) needs only 20% CPU load to sustain 15,000 “users” sending off SQL statements to the server. Our mighty 64-thread, 32-core quad Xeon X7560 at 2.26GHz was brought to its knees, as you can see below.

We were excited to see this happen: finally we tamed the beast with 64 threads. Yes, you can easily stress out a server with HPC benchmarks such as Linpack or SpecFP, but measuring the potential of a server using popular business software is no easy feat. We had to deal with severe thread contention at the client side for example. With several vApus instances, we are now ready to test the strongest servers including those coming out in the next few years. We are even able to stress test complete clusters of modern servers with just a few clients.
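The one-thread-per-simulated-user model described above can be illustrated with a minimal Python sketch. This is not vApus code (vApus is a .NET application); `run_load_test`, `simulated_user`, and `fake_request` are hypothetical names, and the "request" is just a short sleep standing in for a SQL statement. The shared lock around the result list is itself a small example of the kind of client-side contention point a real load generator has to minimize.

```python
import threading
import time


def run_load_test(user_count, requests_per_user, send_request):
    """Start one thread per simulated user and collect per-request latencies."""
    # All users wait at the barrier so that they start sending at the same time.
    start_gate = threading.Barrier(user_count)
    latencies = []
    latencies_lock = threading.Lock()  # shared state: a potential contention point

    def simulated_user(user_id):
        start_gate.wait()
        for _ in range(requests_per_user):
            started = time.perf_counter()
            send_request(user_id)
            elapsed = time.perf_counter() - started
            with latencies_lock:
                latencies.append(elapsed)

    threads = [
        threading.Thread(target=simulated_user, args=(user_id,))
        for user_id in range(user_count)
    ]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return latencies


def fake_request(user_id):
    """Hypothetical stand-in for sending one SQL statement to the server."""
    time.sleep(0.001)


if __name__ == "__main__":
    results = run_load_test(user_count=50, requests_per_user=4, send_request=fake_request)
    print(len(results), "requests, mean latency", sum(results) / len(results))
```

Python threads are used here only to show the structure; they say nothing about the thread counts or CPU load figures quoted in the article.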

vApus' ultimate goal is not to stress servers to their maximum; we use it mostly for measuring response time at a given workload and to test stability of applications. But of course, we could not resist the chance to use it as a benchmark too. It was time to build a new benchmark, and vApus Mark II was born.

51 Comments


Ratman6161 - Wednesday, August 11, 2010 - link

Many products are licensed on a per CPU basis. For Microsoft anyway, what they actually count is the number of sockets. For example SQL Server Enterprise retails for $25K per CPU. So an old 4 socket system with single cores would be 4 x $25K = $100K. A quad socket system with quad core CPUs would be a total of 16 cores but the pricing would still be 4 sockets x $25K = $100K. It used to be that Oracle had a complex formula for figuring this but I think they have now also gone to the simpler method of just counting sockets (though their enterprise edition is $47.5K).

If you are using VMware, they also charge per socket (last I knew) so two dual socket systems would cost the same as a single 4 socket system. Thing is though you need to have at least two boxes in order to enable the high availability (i.e. automatic failover) functionality.
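The per-socket arithmetic in this comment can be written out as a short Python sketch. The $25K price is the figure quoted in the comment, not a verified list price, and the function names are hypothetical.

```python
SQL_SERVER_ENTERPRISE_PER_SOCKET = 25_000  # USD, figure quoted in the comment


def license_cost(sockets, price_per_socket):
    """Per-socket licensing: the core count does not enter the price."""
    return sockets * price_per_socket


def cost_per_core(sockets, cores_per_socket, price_per_socket):
    """Effective license cost per core for a given socket configuration."""
    total_cores = sockets * cores_per_socket
    return license_cost(sockets, price_per_socket) / total_cores


# Old 4-socket server with single-core CPUs vs. 4-socket server with quad-core CPUs.
single_core_total = license_cost(4, SQL_SERVER_ENTERPRISE_PER_SOCKET)
quad_core_total = license_cost(4, SQL_SERVER_ENTERPRISE_PER_SOCKET)

# Two dual-socket boxes carry the same socket count as one quad-socket box.
two_dual_socket_total = 2 * license_cost(2, SQL_SERVER_ENTERPRISE_PER_SOCKET)

if __name__ == "__main__":
    print(single_core_total, quad_core_total, two_dual_socket_total)
    print(cost_per_core(4, 1, SQL_SERVER_ENTERPRISE_PER_SOCKET))
    print(cost_per_core(4, 4, SQL_SERVER_ENTERPRISE_PER_SOCKET))
```

Under these assumptions the total stays the same while the cost per core falls as cores per socket rise, which is why per-socket licensing favors high core counts.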

Stuka87 - Wednesday, August 11, 2010 - link

For VMware they have a few pricing structures. You can be charged per physical socket, or you can get an unlimited socket license (which is what we have, running on seven R910's). You just need to figure out if you really need the top tier license.

semo - Tuesday, August 10, 2010 - link

"Did I mention that there is more than 72GHz of computing power in there?"

Is this ebay?

Devo2007 - Tuesday, August 10, 2010 - link

I was going to comment on the same thing.

1) A dual core 2GHz CPU does not equal "4GHz of computing power" - unless somehow you were achieving an exact doubling of performance (which is extremely rare if it exists at all).

2) Even if there was a workload that did show a full doubling of performance, performance isn't measured in MHz & GHz. A dual-core 2GHz Intel processor does not perform the same as a 2GHz AMD CPU.

More proof that the quality of content on AT is dropping. :(

mino - Wednesday, August 11, 2010 - link

You seem to know very little about the (40yrs old!) virtualization market.

It flourishes from *commoditising* processing power.

While clearly meant as a joke, that statement of Johan's is much closer to the truth than most market "research" reports on x86.
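As a rough illustration of the accounting behind the quoted "72GHz" line: hypervisors such as ESX express CPU capacity and reservations in clock cycles (MHz/GHz), so a host's pool is cores times clock speed. A minimal Python sketch, assuming the 4-socket, 8-core-per-socket, 2.26GHz Xeon X7560 configuration from the article; the function names are hypothetical and this is capacity bookkeeping, not a performance measurement.

```python
def cpu_pool_ghz(sockets, cores_per_socket, clock_ghz):
    """Aggregate CPU capacity of a host, counted as cores x clock speed."""
    return sockets * cores_per_socket * clock_ghz


def single_thread_ceiling_ghz(clock_ghz):
    """One single-threaded process can use at most one core's clock."""
    return clock_ghz


quad_x7560_pool = cpu_pool_ghz(sockets=4, cores_per_socket=8, clock_ghz=2.26)
two_core_pool = cpu_pool_ghz(sockets=1, cores_per_socket=2, clock_ghz=2.26)

if __name__ == "__main__":
    print(f"Quad X7560 pool: {quad_x7560_pool:.2f} GHz")
    print(f"Two-core pool:   {two_core_pool:.2f} GHz")
    print(f"Per-thread cap:  {single_thread_ceiling_ghz(2.26):.2f} GHz")
```

The pooled figure is useful for dividing capacity among many VMs; it does not mean any single thread runs faster than one core's clock.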

JohanAnandtech - Wednesday, August 11, 2010 - link

Exactly. ESX resource management lets you reserve CPU power in GHz. So for ESX, two 2.26 GHz cores are indeed a 4.5 GHz resource.

duploxxx - Thursday, August 12, 2010 - link

Sure, you can count resources together as much as you want... virtually. But in the end a single process can still only use the maximum GHz that a single CPU can offer, although a faster CPU can finish the request sooner. That is exactly why Nehalem and gulf still hold up against the huge core count of Magny-Cours.

maeveth - Tuesday, August 10, 2010 - link

So I have nothing at all against AnandTech's recent articles on virtualization; however, so far all of them have only looked at virtualization from a compute density point of view.

I am currently the administrator of a VMware environment used for development work and I run into I/O bottlenecks FAR before I ever run into a compute bottleneck. In fact computational power is pretty much the LAST bottleneck I run into. My environment currently holds just short of 300 VMs, OS varies. We peak at approximately 10-12K IOPS.

From my experience you always have to look at potential performance in a virtual environment from a much broader perspective. Every bottleneck affects others in subtle ways. For example, if you have a memory bottleneck, either host or guest based, you will further impact your I/O subsystem, though you should aim to not have to swap. In my opinion your storage backend is the single most important factor when determining large-scale-out performance in a virtualized environment.

My environment has never once run into a CPU bottleneck. I use IBM x3650/x3650M2 with Dual Quad Xeons. The M2s use X5570s specifically.

While I agree having impressive magnitudes of "GHz" in your environment is kinda fun, it hardly says anything about how that environment will perform in the real world. Granted, it is all highly subject to workload patterns.

I also want to make it clear that I understand that testing on such a scale is extremely cost prohibitive. As such I am sure AnandTech, Johan specifically, is doing the best he can with what resources he is given. I just wanted to throw my knowledge out there.

@ELC

Yes, software licensing is a huge factor when purchasing ESX servers. ESX is licensed per socket. It's a balancing act that depends on your work load however. A top end ESX license costs about $5500/year per socket.

mino - Wednesday, August 11, 2010 - link

However, IMO storage performance analysis is pretty much beyond AT's budget ballpark by an order of magnitude (or two).

There is a reason this space is so happily "virtualized" by storage vendors AND customers to a "simple" IOPS number.

It is a science on its own. Often closer to black (empiric) magic than deterministic rules ...

Johan,

on the other hand, nothing prevents you from mentioning this sad fact:

Except for edge cases, a good virtualization solution is built from the ground up with

1. SLA's

2. storage solution

3. licensing considerations

4. everything else (like processing architecture) dictated by the previous

JohanAnandtech - Wednesday, August 11, 2010 - link

I can only agree of course: in most cases the storage solution is the main bottleneck. However, this is also a result of the fact that most storage solutions out there are not exactly speed demons. Many storage solutions out there consist of overengineered (and overpriced) software running on outdated hardware. But things are changing quickly now. HP for example seems to recognize that a storage solution is very similar to a server running specialized software. There is more: with a bit of luck, Hitachi and Intel will bring some real competition to the table (currently STEC has almost a monopoly on enterprise SSDs). So your number 2 is going to tumble down :-).