Fermi Goes Mobile: AVADirect's Clevo W880CU with GTX 480M

by Dustin Sklavos on July 7, 2010 11:45 PM EST

Mobile Gaming Showdown

So we know that as far as 3DMark is concerned, NVIDIA's GeForce GTX 480M is the fastest mobile GPU available. But how does it fare in actual gaming situations?

Well, we keep saying it's the fastest mobile GPU available, and that's probably because it's the fastest mobile GPU available. How much faster? That's kind of a problem.

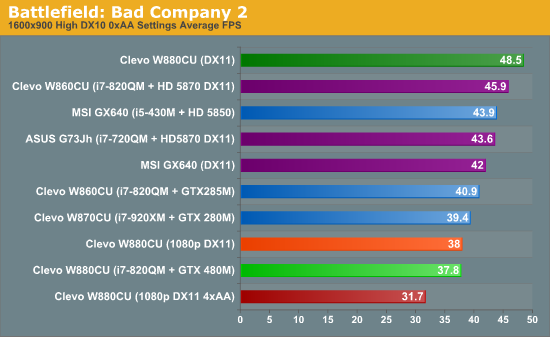

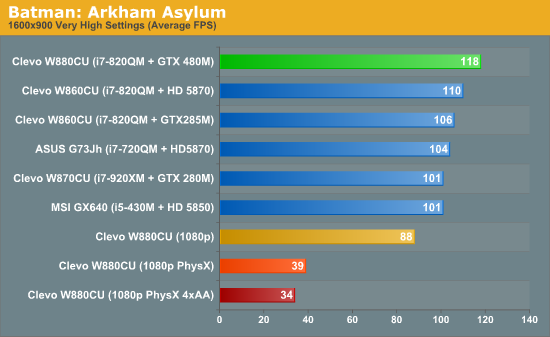

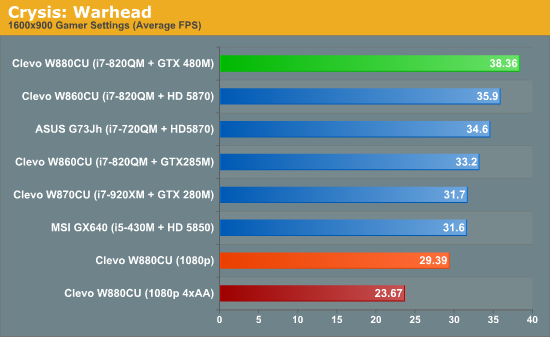

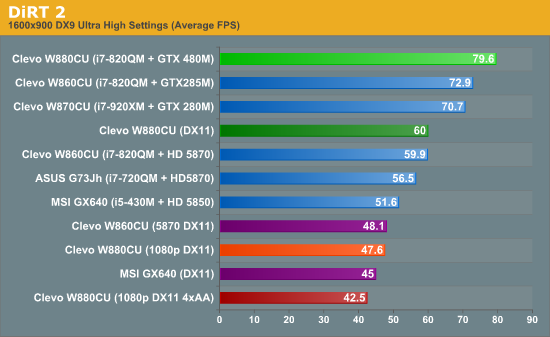

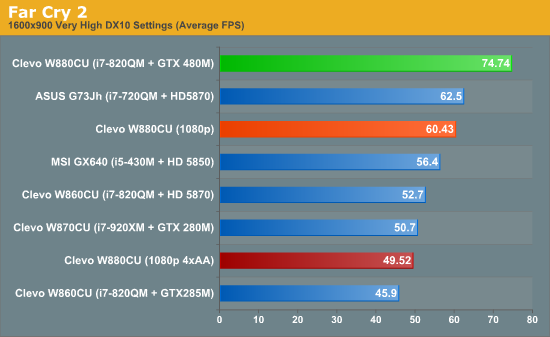

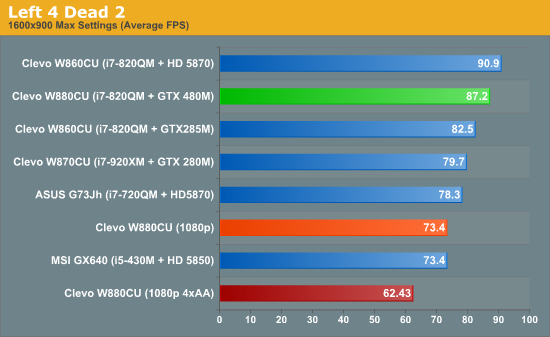

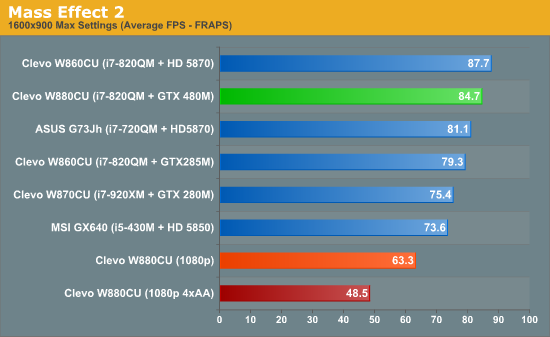

While the 480M takes the lead in most of the games we tested—it downright tears past the competition in Far Cry 2 and DiRT 2—in Mass Effect 2 and Left 4 Dead 2 it was actually unable to best the Mobility Radeon HD 5870. It's only when 4xAA is applied at 1080p that the 480M is able to eke out a win against the 5870 in those titles (we only showed the 4xAA results for the 480M, but you can see the other results in our W860CU review), but the margin of victory is a small one. Of course, Mass Effect 2 doesn't need ultra high frame rates and Left 4 Dead 2 (like all Source engine games) has favored ATI hardware.

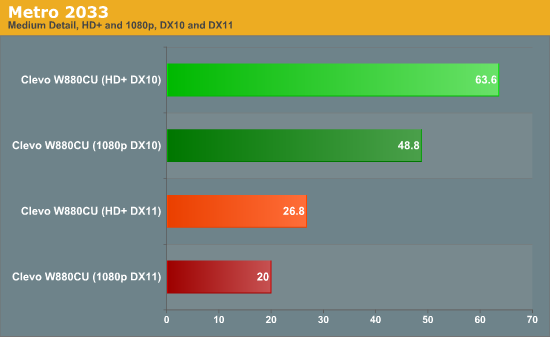

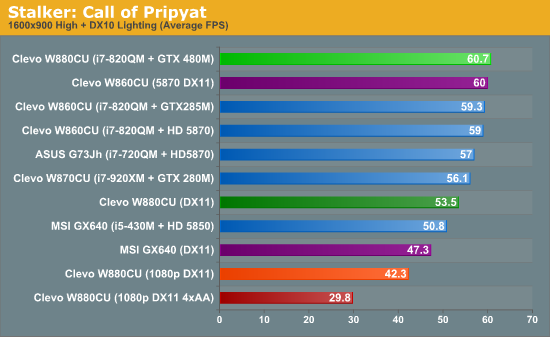

Ultimately, that seems to be the pattern here. The wins the 480M produces are oftentimes with the 5870 nipping at its heels; even compared to the 14-in-dog-years GTX 285M it only offers a moderate improvement in gaming performance. What we essentially have are baby steps between top-end GPUs, particularly when we're running DX10 games at reasonable settings. DX11 titles may be more favorable; DiRT 2 gives the 480M a 25% lead while STALKER is a dead heat; early indications are that Metro 2033 also favors NVIDIA, though we lack 5870 hardware to run those tests. You can see that DX11 mode is punishing in Metro, regardless. Our look at the desktop GTX 480 suggests that NVIDIA has more potent tessellation hardware. Will it ultimately matter, or will game developers target a lower class of hardware to appeal to a wider installation base? We'll have to wait for more DX11 titles to come out before we can say for certain.

NVIDIA provided additional results in their reviewers' guide, which show the 480M leading the 5870 by closer to 30% on average. However, some of those are synthetic tests and often the scores aren't high enough to qualify as playable (e.g. Unigine at High with Normal tessellation scored 23.1 FPS compared to 17.3 on the 5870). Obviously, the benefit of the GTX 480M varies by game and by settings within that game. At a minimum, we feel games need to run at 30FPS to qualify as handling a resolution/setting combination effectively, and in many such situations the 480M only represents a moderate improvement over the previous 285M and the competing 5870. Is it faster? Yes. Is it a revolution? Unless the future DX11 games change things, we'd say no.
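To make the arithmetic behind that Unigine example concrete, here is a minimal sketch in Python. The function names are our own for illustration, and the only inputs are the two frame rates quoted above plus the 30FPS threshold we use as a playability floor.

```python
def percent_lead(fps_a: float, fps_b: float) -> float:
    """Return how far fps_a leads fps_b, as a percentage of fps_b."""
    return (fps_a - fps_b) / fps_b * 100.0


def is_playable(fps: float, threshold: float = 30.0) -> bool:
    """Apply the 30FPS floor described in the text (threshold is adjustable)."""
    return fps >= threshold


# Unigine at High with Normal tessellation, figures quoted in the text.
unigine_480m = 23.1
unigine_5870 = 17.3

lead = percent_lead(unigine_480m, unigine_5870)
print(f"480M lead over 5870: {lead:.1f}%")
print(f"480M playable: {is_playable(unigine_480m)}")
print(f"5870 playable: {is_playable(unigine_5870)}")
```

The lead works out to roughly a third, yet neither result clears the 30FPS floor, which is why a large percentage gap in a test like this does not translate into a practical advantage.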

46 Comments

james.jwb - Thursday, July 8, 2010 - link

Forgot that I use magnification for this site. It's definitely the main cause of the huge performance hit, ouch! (dual-core, pretty fast machine really).

I think it would be a lot easier if the space now used for the carousel became something static along the lines of Engadget's chunk for "top stories". It's nice to have something there to point out important reviews/news -- I wouldn't want to see the idea completely gone, it's just that a carousel is so December 2009 :-)

Spoelie - Thursday, July 8, 2010 - link

While it seems generally true that power keeps increasing from generation to generation (3870, 4870, 5870), wasn't the big drop from the HD2900 series conveniently left out to make that statement stick?

It's not really that power always increases; there's a ceiling which was reached a few generations ago, and the only thing you can say is that the latest generations are generally closer to that ceiling than most of the ones before them. What the desktop GTX480 pulls is about the most we will ever see in a desktop, barring some serious cooling/housing/power redesigns.

bennyg - Thursday, July 8, 2010 - link

2900 was the P4 of the gfx card world regarding power/performance. It was only released because ATi had to have something, anything, in the marketplace. If ATi had as much cash in the bank as did Intel, they would have cancelled the 2900 like Intel did Larrabee.

Thankfully the 2900 went on from its prematurity to underpin Radeons 3, 4 and 5. Whereas Prescott was just brute force attempting to beat thermodynamics. Ask Tejas what won :)

JarredWalton - Thursday, July 8, 2010 - link

That's why I said "generally trending up". When the HD 2900 came out, I'm pretty sure most people had never even considered such a thing as a 1200W PSU. My system from that era has a very large for its time 700W PSU for example. The point of the paragraph is that while desktops have a lot of room for power expansion, there's a pretty set wall on notebooks right now. Not that I really want a 350W power brick.... :)

7Enigma - Thursday, July 8, 2010 - link

Thank you for the article as many of us (from an interest standpoint and not necessarily from a buyer's standpoint) were waiting for the 480M in the wild.

My major complaint with the article is that this is essentially a GPU review. Sure it's in a laptop since this is a notebook, but the only thing discussed here was the difference between GPUs.

With that being the case, why are there no POWER CONSUMPTION numbers when gaming? It's been stated for almost every AVA laptop that these are glorified portable desktop computers with batteries that are essentially used only for moving from one outlet to the next.

I think the biggest potential pitfall for the new 480M, with performance only marginally better than the 5870 (disgusts me to even write that name due to the neutered design), is how much more power it is drawing from the wall during these gaming scenarios.

Going along with power usage would be fan noise, of which I see nothing mentioned in the review. Having that much more juice needed under load should surely increase the fan noise compared to the 5870....right?

These are two very quick measurements that could be done to beef up the substance of an otherwise good review.

7Enigma - Friday, July 9, 2010 - link

Really no one else agrees? Guess it's just me then.....

JarredWalton - Friday, July 9, 2010 - link

We're working to get Dustin a power meter. Noise testing requires a bit more hardware so probably not going to have that for the time being unfortunately. I brought this up with Anand, though, and when he gets his meter Dustin can respond (and/or update the article text).

7Enigma - Monday, July 12, 2010 - link

Thanks Jarred!

For all the other laptop types I don't think it matters but for these glorified UPS-systems it would be an important factor when purchasing.

Thanks again for taking the time to respond.

therealnickdanger - Thursday, July 8, 2010 - link

"Presently the 480M isn't supported in CS5; in fact the only NVIDIA hardware supported by the Mercury Playback Engine are the GeForce GTX 285 and several of NVIDIA's expensive workstation-class cards."

I did the following with my 1GB 9800GT and it's an incredible boost. Multiple HD streams with effects without pausing.

http://forums.adobe.com/thread/632143

I figured out how to activate CUDA acceleration without a GTX 285 or Quadro... I'm pretty sure it should work with other 200 GPUs. Note that I'm using 2 monitors and there's an extra tweak to play with CUDA seamlessly with 2 monitors.

Here are the steps:

Step 1. Go to the Premiere CS5 installation folder.

Step 2. Find the file "GPUSniffer.exe" and run it in a command prompt (cmd.exe). You should see something like this:

----------------------------------------------------

Device: 00000000001D4208 has video RAM(MB): 896

Device: 00000000001D4208 has video RAM(MB): 896

Vendor string: NVIDIA Corporation

Renderer string: GeForce GTX 295/PCI/SSE2

Version string: 3.0.0

OpenGL version as determined by Extensionator...

OpenGL Version 2.0

Supports shaders!

Supports BGRA -> BGRA Shader

Supports VUYA Shader -> BGRA

Supports UYVY/YUYV ->BGRA Shader

Supports YUV 4:2:0 -> BGRA Shader

Testing for CUDA support...

Found 2 devices supporting CUDA.

CUDA Device # 0 properties -

CUDA device details:

Name: GeForce GTX 295 Compute capability: 1.3

Total Video Memory: 877MB

CUDA Device # 1 properties -

CUDA device details:

Name: GeForce GTX 295 Compute capability: 1.3

Total Video Memory: 877MB

CUDA Device # 0 not choosen because it did not match the named list of cards

Completed shader test!

Internal return value: 7

------------------------------------------------------------

If you look at the last line it says the CUDA device is not chosen because it's not in the named list of cards. That's fine. Let's add it.

Step 3. Find the file "cuda_supported_cards.txt", edit it, and add your card (take the name from the line: CUDA device details: Name: GeForce GTX 295 Compute capability: 1.3).

So in my case the name to add is: GeForce GTX 295

Step 4. Save that file and we're almost ready.

Step 5. Go to your NVIDIA driver control panel (I'm using the latest, 197.45). Under "Manage 3D Settings", click "Add", browse to your Premiere CS5 install directory, and select the executable file: "Adobe Premiere Pro.exe"

Step 6. In the field "multi-display/mixed-GPU acceleration" switch from "multiple display performance mode" to "compatibility performance mode"

Step 7. That's it. Boot Premiere and go to your project setting / general and activate CUDA
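Steps 2 to 4 can be sketched in Python, as a rough illustration rather than a tested tool. It assumes you have already captured the GPUSniffer.exe output as text, and that "cuda_supported_cards.txt" holds one card name per line; that file layout is an assumption, as are the function names, which are made up for this example.

```python
import re
from pathlib import Path
from typing import List

# Matches lines such as "Name: GeForce GTX 295 Compute capability: 1.3".
NAME_PATTERN = re.compile(r"Name:\s*(.+?)\s+Compute capability:")

# A few lines copied from the GPUSniffer output shown above.
SAMPLE_OUTPUT = """\
Found 2 devices supporting CUDA.
CUDA Device # 0 properties -
CUDA device details:
Name: GeForce GTX 295 Compute capability: 1.3
Total Video Memory: 877MB
CUDA Device # 1 properties -
CUDA device details:
Name: GeForce GTX 295 Compute capability: 1.3
Total Video Memory: 877MB
"""


def cuda_device_names(sniffer_output: str) -> List[str]:
    """Return the unique CUDA device names found in the text, in order."""
    names: List[str] = []
    for match in NAME_PATTERN.finditer(sniffer_output):
        name = match.group(1).strip()
        if name not in names:
            names.append(name)
    return names


def add_cards(list_path: Path, names: List[str]) -> List[str]:
    """Append names missing from the card list (assumed one name per line).

    Returns the names that were actually added.
    """
    existing: List[str] = []
    if list_path.exists():
        existing = [
            line.strip()
            for line in list_path.read_text().splitlines()
            if line.strip()
        ]
    added = [name for name in names if name not in existing]
    if added:
        list_path.write_text("\n".join(existing + added) + "\n")
    return added


print(cuda_device_names(SAMPLE_OUTPUT))
```

To apply it, you would pass the path of your own "cuda_supported_cards.txt" to `add_cards`; back the file up first, since the script rewrites it.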

therealnickdanger - Thursday, July 8, 2010 - link

Sorry, I should have said for ANY CUDA card with 786MB RAM or more. It's quite remarkable.