Intel Kills Larrabee GPU, Will Not Bring a Discrete Graphics Product to Market

by Ryan Smith on May 25, 2010 2:15 PM EST

Bill Kircos, Intel’s Director of Product & Technology PR, just posted a blog on Intel’s site entitled “An Update on our Graphics-Related Programs”. In the blog Bill addresses future plans for what he calls Intel’s three visual computing efforts:

The first is the aforementioned processor graphics. Second, for our smaller Intel Atom processor and System on Chip efforts, and third, a many-core, programmable Intel architecture and first product both of which we referred to as Larrabee for graphics and other workloads.

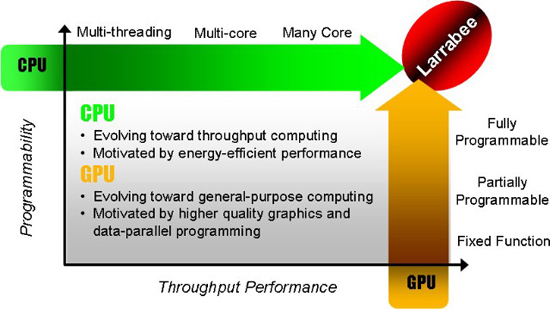

There’s a ton of information in the vague but deliberately worded blog post, including a clear stance on Larrabee as a discrete GPU: “We will not bring a discrete graphics product to market, at least in the short-term.” Kircos goes on to say that Intel will increase funding for integrated graphics, as well as pursue Larrabee-based HPC opportunities, effectively validating both AMD and NVIDIA’s strategies. As different as Larrabee appeared when it first arrived, Intel appears to be going with the flow after today’s announcement.

My analysis of the post as well as some digging I’ve done follows.

Intel Embraces Fusion, Long Live the IGP

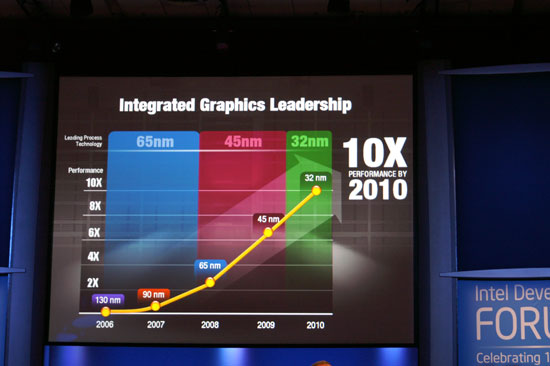

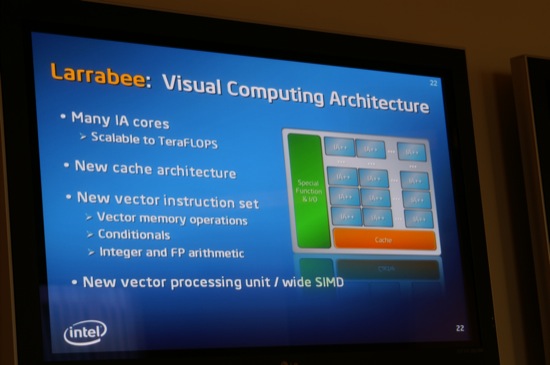

Two and a half years ago Intel put up this slide that indicated the company was willing to take 3D graphics more seriously:

By 2010, on a 32nm process, Intel’s integrated graphics would be at roughly 10x the performance of what it was in 2006. Sandy Bridge was supposed to be out in Q4 2010, but we’ll see it shipping in Q1 2011. It’ll offer a significant boost in integrated graphics performance. I’ve heard it may finally be as fast as the GPU in the Xbox 360.

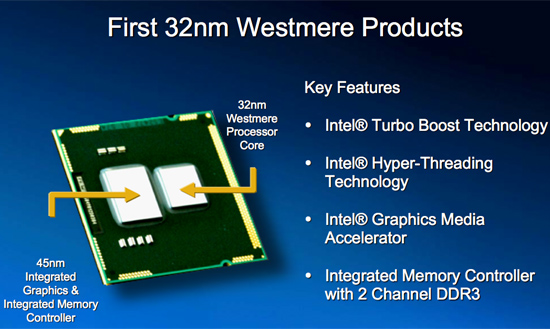

Intel made huge improvements to its integrated graphics with Arrandale/Clarkdale. This wasn’t an accident; the company is taking graphics much more seriously. The first point in Bill’s memo clarifies this:

Our top priority continues to be around delivering an outstanding processor that addresses every day, general purpose computer needs and provides leadership visual computing experiences via processor graphics. We are further boosting funding and employee expertise here, and continue to champion the rapid shift to mobile wireless computing and HD video – we are laser-focused on these areas.

The statement is troublingly silent on the gaming market. A laser focus on mobile wireless computing and HD video sounds a lot like an extension of what Intel integrated graphics does today, and not what we hope it will do tomorrow. Intel does have a fairly aggressive roadmap for integrated graphics performance, so perhaps omitting the word gaming was an intentional way to downplay, for now, the importance of the market that its competitors play in.

The current future of Intel graphics

The second point is this:

We are also executing on a business opportunity derived from the Larrabee program and Intel research in many-core chips. This server product line expansion is optimized for a broader range of highly parallel workloads in segments such as high performance computing. Intel VP Kirk Skaugen will provide an update on this next week at ISC 2010 in Germany.

In a single line Intel completely validates NVIDIA’s Tesla strategy. Larrabee will go after the HPC space much like NVIDIA has been doing with Fermi and previous Tesla products. Leveraging x86 can be a huge advantage in HPC. If both Intel and NVIDIA see so much potential in HPC for parallel architectures, there must be some high dollar amounts at stake.

| NVIDIA Tesla | Seismic | Supercomputing | Universities | Defence | Finance |
| --- | --- | --- | --- | --- | --- |
| GPU TAM | $300M | $200M | $150M | $250M | $230M |
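To put a single number on those "high dollar amounts," the per-segment TAM figures in the table above can simply be added up. The following is a minimal sketch in Python; the variable names are made up for illustration, and it assumes the five segments do not overlap, which the source table does not state.

```python
# Per-segment GPU TAM figures from the NVIDIA Tesla table, in millions of USD.
# Assumption: segments are treated as non-overlapping so they can be summed.
tesla_tam_musd = {
    "Seismic": 300,
    "Supercomputing": 200,
    "Universities": 150,
    "Defence": 250,
    "Finance": 230,
}

# Total addressable market across all listed segments.
total_tam_musd = sum(tesla_tam_musd.values())

# Each segment's share of the total, as a fraction between 0 and 1.
segment_share = {
    name: value / total_tam_musd for name, value in tesla_tam_musd.items()
}

print(f"Total TAM: ${total_tam_musd}M")  # Total TAM: $1130M
for name, share in segment_share.items():
    print(f"{name}: {share:.1%}")
```

Under that assumption the listed segments add up to roughly $1.1 billion, with no single segment accounting for much more than a quarter of the total.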

The third point is the one that drives the nail in the coffin of the Larrabee GPU:

We will not bring a discrete graphics product to market, at least in the short-term. As we said in December, we missed some key product milestones. Upon further assessment, and as mentioned above, we are focused on processor graphics, and we believe media/HD video and mobile computing are the most important areas to focus on moving forward.

Intel wasn’t able to make Larrabee performance competitive in DirectX and OpenGL applications, so we won’t be getting a discrete GPU based on Larrabee anytime soon. Instead, Intel will be dedicating its resources to improving its integrated graphics. We should see a nearly 2x improvement in Intel integrated graphics performance with Sandy Bridge, and greater than 2x improvement once more with Ivy Bridge in 2012.
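As a rough back-of-the-envelope sketch, those two generational gains compound multiplicatively. The snippet below treats both steps as exactly 2x, which is an assumption: the figures above are "nearly 2x" for Sandy Bridge and "greater than 2x" for Ivy Bridge, so the result is only a ballpark. The function name and the baseline of 1.0 are illustrative.

```python
from functools import reduce
from operator import mul


def cumulative_gain(generation_gains):
    """Multiply per-generation speedups into one overall speedup."""
    return reduce(mul, generation_gains, 1.0)


# Assumed round numbers, relative to today's integrated graphics (baseline 1.0).
sandy_bridge_gain = 2.0  # stated as "nearly 2x", so slightly optimistic
ivy_bridge_gain = 2.0    # stated as "greater than 2x", so slightly pessimistic

overall = cumulative_gain([sandy_bridge_gain, ivy_bridge_gain])
print(f"Estimated gain by Ivy Bridge: ~{overall:.0f}x")  # ~4x
```

In other words, if both estimates hold, Ivy Bridge integrated graphics would land at roughly four times the performance of the current generation.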

All isn’t lost though. The Larrabee ISA, specifically the VPU extensions, will surface in future CPUs and integrated graphics parts. And Intel will continue to toy with the idea of using Larrabee in various forms, including a discrete GPU. However, the primary focus has shifted from producing a discrete GPU to compete with AMD and NVIDIA, to integrated graphics and a Larrabee for HPC workloads. Intel is effectively stating that it sees a potential future where discrete graphics isn’t a sustainable business and that integrated graphics will become good enough for most of what we want to do, even from a gaming perspective. In Intel’s eyes, discrete graphics would only serve the needs of a small niche if we reach this future where integrated graphics is good enough.

Much like the integration of cache controllers and FPUs into the CPU, Intel expects the GPU to take the same path. The days of discrete coprocessors have always been numbered. One benefit of a tightly coupled CPU-GPU is the bandwidth present between the two, an advantage used by game consoles for years.

This does conflict (somewhat) with AMD’s strategy of a functional Holodeck in 6 years, but that’s why Intel put the “at least in the short-term” qualifier on their statement. I believe Intel plans on making integrated graphics, over the next 5 years, good enough for nearly all 3D gaming. I’m not sure AMD’s Fusion strategy is much different.

For years Intel made a business case for delivering cheap, hardly accelerated 3D graphics on aging process technologies. Intel has apparently recognized the importance of the GPU and is changing direction. Intel will commit more resources (both in development and actual transistor budget) to the graphics portion of its CPUs going forward. Sandy Bridge will be the beginning; the ramp from there will probably mimic what we saw ATI and NVIDIA do with their GPUs over the years, with a constant doubling of transistor count. Intel has purposefully limited the GPU transistor budget in the past. From what I’ve heard, that limit is now gone. It will start with Sandy Bridge, but I don’t think we’ll be impressed until 2013.

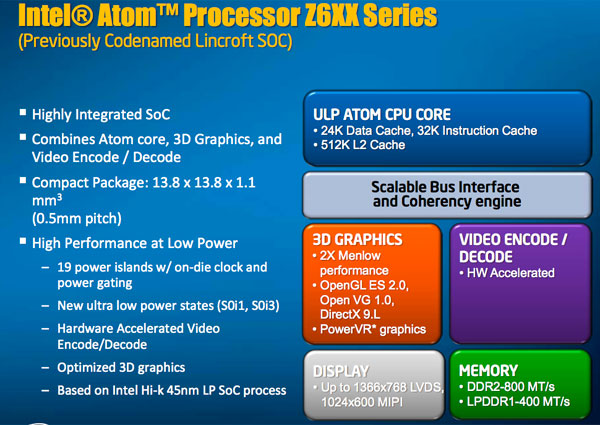

What About Atom & Moorestown?

Anything can happen, but by specifically calling out the Atom segment I get the impression that Intel is trying to build its own low power GPU core for use in SoCs. Currently the IP is licensed from Imagination Technologies, a company Intel holds a 16% stake in, but eventually Intel may build its own integrated graphics core here.

Previous Intel graphics cores haven’t been efficient enough to scale down to the smartphone SoC level. I get the impression that Intel has plans (if it is not doing so already) to create its own Atom-like GPU team to work on extremely low power graphics cores. This would ultimately eliminate the need for licensing 3rd party graphics IP in Intel’s SoCs. Planning and succeeding are two different things so only time will tell if Imagination has a long term future at Intel. The next 3 years are pretty much guaranteed to be full of Imagination graphics, at least in the Intel smartphone/SoC products.

Final Words

Intel cancelled plans for a discrete Larrabee graphics card because it could not produce one that was competitive with existing GPUs from AMD and NVIDIA in current games. Why Intel lacked the foresight to stop before getting to this point is tough to say. The company may have been too optimistic or genuinely lacked experience in building discrete GPUs, something it hadn’t done in more than a decade. Maybe it truly was Pat Gelsinger's baby.

This also validates AMD and NVIDIA’s strategy and their public responses to Larrabee. Those two often said that the most efficient approach to 3D graphics was not through x86 cores but through their own specialized but programmable hardware. The x86 tax would effectively always put Larrabee at a disadvantage. When running Larrabee native code this would be less of an issue, but DX and OpenGL performance is another situation entirely. Intel executed too poorly, while NVIDIA and most definitely AMD executed too well. Intel couldn’t put out a competitive Larrabee quickly enough, and it fell too far behind.

A few years ago Intel attempted to enter the ARM SoC race with an ARM based chip of its own: XScale. Intel admitted defeat and sold off XScale, stating that it was too late to the market. Intel has since focused on the future of the SoC market with Moorestown. Rather than compete in a maturing market, Intel is now attempting to get a foot in the door on the next evolution of that market: high performance SoCs.

I believe this may be what Intel is trying with its graphics strategy. Seeing little hope for a profitable run at discrete graphics, Intel is now turning its eye to unexplored territory: the hybrid CPU-GPU. Focusing its efforts there, if successful, would be far easier and far more profitable than struggling to compete in the discrete GPU space.

The same goes for using Larrabee in the HPC space. NVIDIA is the most successful GPU company in HPC and even its traction has been limited. It’s still early enough that Intel could show up with Larrabee and take a big slice of the pie.

Clearly AMD sees value in the integrated graphics strategy as it spent over $5 billion acquiring ATI in order to bring Fusion to market. Next year we’ll see the beginnings of that merger come to fruition. Not only does Intel’s announcement today validate NVIDIA’s HPC strategy, but it also validates AMD’s acquisition of ATI. While Larrabee as a discrete GPU cast a shadow of confusion over the future of the graphics market, Intel focusing on integrated graphics and HPC is much more harmonious with AMD and NVIDIA’s roadmaps. We used to not know who had the right approach; now we have one less approach to worry about.

Whether Intel is committed enough to integrated graphics remains to be seen. NVIDIA has no current integrated graphics strategy (unless it can work out a DMI/QPI license with Intel). AMD’s strategy is very similar to what Intel is proposing today, and has been for some time, but AMD at least has far more mature driver and ISV compatibility teams behind its graphics cores. Intel has a lot of catching up to do in this department.

I’m also unsure what AMD and Intel see as the future of discrete graphics. Based on today’s trajectory you wouldn’t have high hopes for discrete graphics, but as today’s announcement shows: anything can change. Plus, I doubt the Holodeck will run optimally on an IGP.

55 Comments


iwodo - Thursday, May 27, 2010

Xbox 360 GPU is really outdated compared to today's PC graphics. The reason you see better graphics for Xbox 360 is because:

1. Xbox 360 runs a lower resolution.

2. It has much better optimized drivers and system software for gaming

3. Embedded memory for much higher bandwidth. You get zero cost AA on Xbox 360.

4. Intel Graphics Drivers support is POOR

5. No games were ever designed or optimized for Intel Graphics.

The last two points could easily cost it 50% of its maximum potential. So while the Xbox 360 GPU is technically slightly more than twice as fast as the i3 GPU, it can be 4 times as fast due to the software difference.

Zak - Thursday, May 27, 2010

Defence sounds like a process of de-fencing, i.e. removing a fence. I think you had "Defense" in mind, no?

jamescox - Saturday, May 29, 2010

Sorry if this has already been posted, but I don't have time right now to read the whole thread.

How would you go to multiple chips (multiple sockets) with the gpu integrated into the cpu? Multi-socket boards in their current form factor are large and expensive. I have no doubt that Intel and AMD will integrate gpus into their cpus. It would be a waste to have 8 cpu cores be "standard" at 22 nm, so the mainstream will have a few cpu like cores, and an integrated gpu on a single board.

If you want to use the same chip across the board though, and use multiple chips/sockets for higher performance markets, then IMO, it makes more sense to change the system architecture to effectively integrate the cpu in with the gpu, and move the system memory out farther in the memory hierarchy. Just put the cpu-gpu on a graphics type board, with high-speed memory, and page in and out of the main system memory on the system board. This is a much better form factor for multiple processors rather than a large, expensive 4 socket board, for example. This also assumes that the software is capable of spreading the workload across multiple chips, which should get easier, or at least more efficient as you do more and more processing per pixel.

Other form factors are possible with different packaging options. We could have some memory chips in the same package, or even stacked on top of other chips. If you are going to use a lot of chips for just processing, then it may make sense to have a large amount of embedded memory in the chipset on the mainboard.

The massive single chip gpus that nvidia is still focusing on do not make too much sense, IMO. It would be better to target the "sweet spot" for the process tech, and use multiple chips for higher performance. Memory is still the limiting factor though, since it is too expensive to connect memory to multiple gpus on the same board. Would be better to share the memory some how, or package memory in with each chip. With paging the directly connected memory in and out of system memory, you should be able to make better use of the local memory to reduce duplication. Right now, most data is duplicated several places in system memory and graphics memory.

I didn't expect larrabee to do that well, since I expected it to take significantly more hardware to reach performance comparable to nvidia and amd offerings. Intel has the capacity to throw a lot more hardware at the problem, so I have to wonder if it is a software or power issue. If they have to throw a lot more hardware at the problem, then that would also mean a lot more power. Intel can't market a card that consumes 500 watts or something even if it has similar performance.

AnnonymousCoward - Sunday, May 30, 2010

"Intel is effectively stating that...integrated graphics will become good enough...even from a gaming perspective."

That's a bold conclusion, and I disagree. The quote said Intel missed milestones, and upon assessment, they decided to focus on IGP, HD video, and mobile. I don't see that as saying IGP will be good enough for gaming, but rather that Intel can't compete in discrete right now.

_PC_ - Sunday, May 30, 2010

@ AnnonymousCoward

1+