NVIDIA’s GeForce GTX 480 and GTX 470: 6 Months Late, Was It Worth the Wait?

by Ryan Smith on March 26, 2010 7:00 PM EST - Posted in GPUs

Tessellation & PhysX

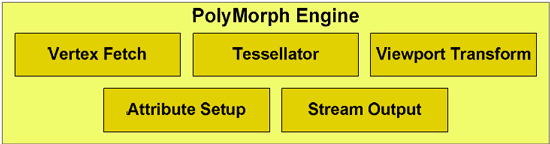

We’ll kick off our in-depth look at the performance of the GTX400 series with Tessellation and PhysX. These are two of the biggest features that NVIDIA is pushing with the GTX400 series, with tessellation in particular being the major beneficiary of NVIDIA’s PolyMorph Engine strategy.

As we covered in our GF100 Recap, NVIDIA seeks to separate themselves from AMD in spite of the rigid feature set imposed by DirectX 11. Tessellation is one of the ways they intend to do that, as the DirectX 11 standard leaves them plenty of freedom with respect to tessellation performance. To accomplish this goal, NVIDIA needs significantly better tessellation performance, which has led to them having 14/15/16 tessellators by way of having that many PolyMorph Engines. With enough tessellation performance NVIDIA can create an obvious image quality improvement compared to AMD, all the while requiring very little on the part of developers to take advantage of this.

All things considered, NVIDIA’s claim of having superior tessellation performance is one of the easiest claims to buy, but all the same we’ve gone ahead and attempted to confirm it.

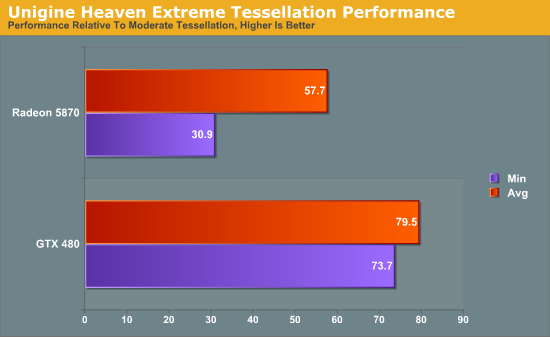

Our first tessellation test is the Unigine Heaven 2.0 benchmark, which was released just a few days ago. Version 2.0 added support for multiple levels of tessellation (with 1.0 having earned a reputation for using extreme levels of tessellation), which allows us to look at tessellation performance by varying tessellation levels. If the GTX 480’s tessellation capabilities are several times faster than the Radeon 5870’s as NVIDIA claims, then it should better handle the increased tessellation levels.

Since Heaven is largely a synthetic benchmark at the moment (the DX11 engine isn’t currently used in any games), we’ll be focusing on the performance of each card relative to itself, in keeping with our editorial policy of avoiding synthetic GPU tests when possible.

Heaven: Moderate & Extreme Tessellation

Heaven has 4 tessellation levels: off, moderate, normal, extreme. For our test we’re using the moderate and extreme modes, comparing the performance of extreme as a percentage of moderate performance.

Starting with averages, the GTX 480 keeps 79% of its performance moving from moderate to extreme. On the Radeon 5870 however, the performance drop-off is much more severe, losing 42% of its performance to bring it down to 58%.

The minimum framerates are even more telling. The GTX 480 minimum framerates drop by 26% when switching to extreme tessellation. The Radeon 5870 is much worse off here, bringing in minimum framerates 69% lower when using extreme tessellation. From these numbers it’s readily apparent that the GTX 480 is much more capable of dealing with very high tessellation levels than the Radeon 5870 is.
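The relative figures above follow from simple arithmetic. As a minimal sketch (the function names are ours and purely illustrative; only the percentages come from the text), this is how "extreme as a percentage of moderate" is computed, and how the quoted minimum-framerate drops translate into retained performance:

```python
def relative_performance(fps_extreme: float, fps_moderate: float) -> float:
    """Extreme-mode framerate expressed as a percentage of moderate-mode framerate."""
    if fps_moderate <= 0:
        raise ValueError("moderate framerate must be positive")
    return 100.0 * fps_extreme / fps_moderate


def retained_from_drop(drop_percent: float) -> float:
    """Convert a percentage drop into the percentage of performance retained."""
    return 100.0 - drop_percent


# Percentages quoted in the text; no raw framerates are assumed here.
average_retained = {"GTX 480": 79.0, "Radeon 5870": 58.0}
minimum_drop = {"GTX 480": 26.0, "Radeon 5870": 69.0}

summary = {
    card: {
        "average_retained": average_retained[card],
        "minimum_retained": retained_from_drop(minimum_drop[card]),
    }
    for card in average_retained
}

for card, row in summary.items():
    print(f"{card}: keeps {row['average_retained']:.0f}% of its average "
          f"and {row['minimum_retained']:.0f}% of its minimum framerate")
```

Expressed this way, the minimum framerate comparison is between 74% retained on the GTX 480 and 31% retained on the Radeon 5870.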

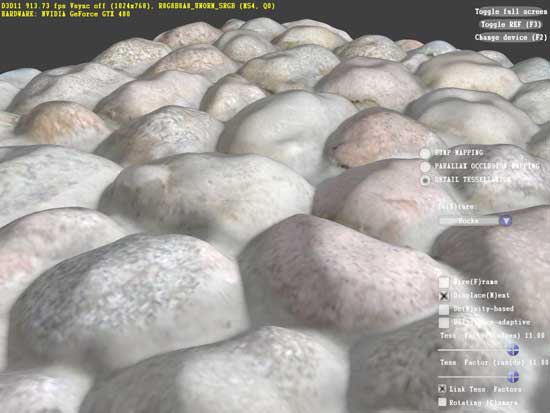

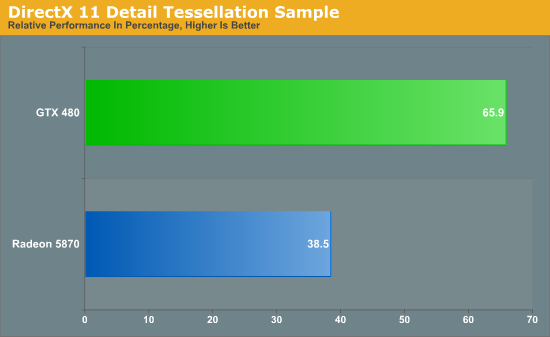

Our second tessellation test is similar in nature, this time taken from one of Microsoft’s DX11 sample programs: Detail Tessellation. Detail Tessellation is a simple scene where tessellation plus displacement mapping is used to turn a flat rock texture into a simulated field of rocks, with tessellation creating the geometry. Here we measure the average framerate at different tessellation factors (7 and 11) and compare the framerate at the higher tessellation factor to the lower tessellation factor.

Looking at just the averages (the framerate is rather solid) we see that the GTX 480 retains 65% of its performance moving from factor 7 to factor 11. The Radeon 5870 on the other hand only retains 38% of its performance. Just as we saw in Unigine, the GTX 480 takes a much lighter performance hit from higher tessellation factors than the Radeon 5870 does, driving home the point that the GTX 480 has a much more powerful tessellator.

With the results of these tests, there’s no reason to doubt NVIDIA’s claims about GF100’s tessellation abilities. All the data we have points to GF100/GTX 480 being much more powerful than the Radeon 5000 series when it comes to tessellation.

But with that said, NVIDIA having a more powerful tessellator doesn’t mean much on its own. Tessellation is wholly dependent on game developers to make use of it and to empower users to adjust the tessellation levels. Currently every DX11 game using tessellation uses a fixed amount of it, so NVIDIA’s extra tessellation abilities are going unused. This doesn’t mean that tessellation will always be used like this, but it means things have to change, and counting on change is a risky thing.

NVIDIA’s superior tessellation abilities will require that developers offer a variable degree of tessellation in order to fully utilize their tessellation hardware, and that means NVIDIA needs to convince developers to do the extra work to implement this. At this point there’s no way for us to tell how things will go: NVIDIA’s superior tessellation abilities could be the next big thing that separates them from AMD like they’re shooting for, or they could be the next DirectX 10.1, held back by weaker hardware. Without some better sense of direction on the future use of tessellation, we can’t make any recommendations based on NVIDIA’s greater tessellation performance.

Moving on we have PhysX, NVIDIA’s in-house physics simulation middleware. After picking up PhysX and its developer AGEIA in 2008, NVIDIA re-implemented PhysX hardware acceleration as a CUDA application, allowing their GPUs to run physics simulations in hardware. NVIDIA has been pushing it on developers and consumers alike with limited success, and PhysX only finally had a breakthrough title last year with the critically acclaimed Batman: Arkham Asylum.

With Fermi’s greatly enhanced compute abilities, NVIDIA is now pushing the idea that PhysX performance will be much better on Fermi cards, allowing developers to use additional and more complex physics actions than ever before. In particular, with the ability to use concurrent kernels and the ability to do fast context switching, PhysX should have less overhead on Fermi hardware than it did on GT200/G80 hardware.

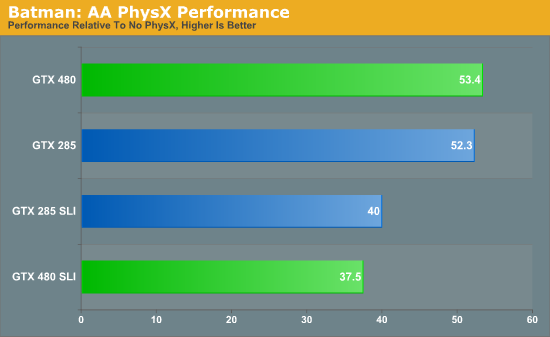

To put this idea to the test, we will be using the Batman: Arkham Asylum benchmark to measure PhysX performance. If PhysX has less overhead on Fermi hardware then the framerate hit on the GTX 480 from enabling PhysX effects should be lower than the framerate hit on the GTX 285. For this test we are running at 2560x1600, comparing performance between PhysX being disabled and when it’s set on High.

If PhysX has less overhead on Fermi hardware, Batman is not the game to show it. On both the GTX 480 and the GTX 285, the performance hit on a percentage basis for enabling PhysX is roughly 47%. The GTX 480 may be faster overall, but it takes the same heavy performance hit for enabling PhysX. The SLI cards fare even worse here: the performance hit for enabling PhysX is 60% on both the GTX 480 SLI and the GTX 285 SLI.

PhysX unquestionably has the same amount of overhead on the GTX 480 as it does on the GTX 285. If PhysX is going to take up less overhead, then from what we can gather it either will be a benefit limited to PhysX 3, or will require future PhysX 2.x updates that have yet to be delivered.
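To put those percentage hits in perspective, they can be restated in terms of frame time. The sketch below is illustrative only: the helper name is ours, and it assumes the quoted hit applies to the average framerate, so frame time scales as the inverse of the framerate that remains.

```python
def frame_time_multiplier(hit_percent: float) -> float:
    """Factor by which average frame time grows for a given framerate hit.

    A hit of h% leaves (1 - h/100) of the original framerate, and frame
    time is the reciprocal of framerate.
    """
    if not 0.0 <= hit_percent < 100.0:
        raise ValueError("hit must be in the range [0, 100)")
    return 1.0 / (1.0 - hit_percent / 100.0)


# Hits quoted in the text for enabling PhysX on High.
single_card = frame_time_multiplier(47.0)  # GTX 480 and GTX 285
sli = frame_time_multiplier(60.0)          # GTX 480 SLI and GTX 285 SLI

print(f"Single card: each frame takes about {single_card:.2f}x as long")
print(f"SLI: each frame takes about {sli:.2f}x as long")
```

Under that assumption, a 47% hit means each frame takes nearly twice as long to render, and a 60% hit means two and a half times as long, regardless of how fast the card was to begin with.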

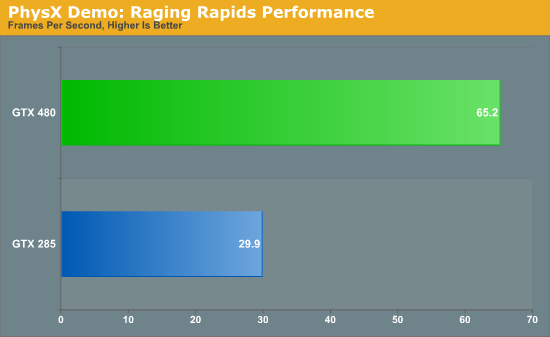

Our second PhysX test is a more generalized look at PhysX performance. Here we’re using NVIDIA’s Raging Rapids tech demo to measure PhysX performance. Raging Rapids is a water simulation demonstration that uses PhysX to simulate waves, waterfalls, and more. Here we are measuring the framerate in the demo’s benchmark mode.

Overall the Raging Rapids benchmark gives us mixed results. Out of all of the benchmarks we have run on the GTX 480, this is one of the larger performance jumps over the GTX 285. On the other hand, once we compensate for the GTX 480’s additional shaders, we end up with a result only around 10% faster than a strict doubling in performance. This is a sign of good scaling, but it isn’t a sign that the GTX 480 is significantly faster than the GTX 285 due to more efficient use of compute resources. Just having all of this extra compute power is certainly going to make a difference overall, but on an architectural level the GTX 480 doesn’t look to be significantly faster at PhysX than the GTX 285 on a per-clock/per-shader basis.
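The shader compensation described above amounts to dividing the raw speedup by the ratio of shader counts. The sketch below makes some assumptions explicit: the function name is ours, the 2.2x raw speedup is inferred from the "around 10% faster than a strict doubling" statement rather than taken from measured framerates, and clock speed differences between the two cards are ignored.

```python
GTX_480_SHADERS = 480  # CUDA cores on the GTX 480
GTX_285_SHADERS = 240  # stream processors on the GTX 285


def per_shader_speedup(raw_speedup: float, new_shaders: int, old_shaders: int) -> float:
    """Normalize a raw speedup by the increase in shader count."""
    if new_shaders <= 0 or old_shaders <= 0:
        raise ValueError("shader counts must be positive")
    return raw_speedup / (new_shaders / old_shaders)


# Assumption: roughly 2.2x raw, i.e. about 10% beyond a strict doubling.
raw_speedup = 2.2
normalized = per_shader_speedup(raw_speedup, GTX_480_SHADERS, GTX_285_SHADERS)

print(f"Per-shader speedup: about {normalized:.2f}x")
```

A normalized value close to 1.0 indicates that most of the gain comes from having twice as many shaders rather than from each shader doing more work.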

196 Comments

Ryan Smith - Saturday, March 27, 2010 - link

Yes, we did. We were running really close to the limits of our 850W Corsair unit. We measured the 480SLI at 900W, which after some power efficiency math comes out to around 750-800W actual load. At that load there's less extra space than we'd like to have.

Just to add to that, we had originally been looking for a larger PSU after talking about PSU requirements with an NVIDIA partner, as the timing of this required we secure a new PSU before the cards arrived. So Antec had already shipped us their 1200W PSU before we could test the 850W, and we're glad since we would have been cutting it so close.

bigboxes - Sunday, March 28, 2010 - link

Appreciate the reply.

GullLars - Saturday, March 27, 2010 - link

OK, so 480 generally beats 5870, and 470 generally beats 5850, but at higher prices, temperatures, wattage, and noise levels. What about 5970?

As far as i can tell, the 5970 beat or came even with 480 in all tests, draws less power, runs cooler, and makes less noise. The price isn't that much more either.

It seems more fair to me to compare 480 with 5970 as both are the fastest single-card (as in PCIe slot) sollutions and are close in price and wattage.

I would also like to see what framerate FPS games come in at with gamer settings (1680x1050 and 1920x1200 resolutions), and if average is higher than game cutoff or tickrate, what is the minimum FPS, and how much can you bump eyecandy before avg drops below cutoff/tickrate or minimum drops below acceptable (30).

The reason for gamers sacraficing visuals to get high FPS can be summarized to game flow and latency. If FPS is below game tickrate, you get latency. For many games the tickrate is around 100 (100 updates in the game engine pr second). At 100 FPS you have 10ms latency between frames, if it drops to 50 you have 20 ms, and at 25 you have 40 ms. Lower than 25-30 FPS will obviously also result in virtually unplayable performance since aiming will becoming hard, so added latency from FPS below this becomes moot. If you are playing multiplayer games, this is added to the network latency. As most gamers know, latency below 30ms is generally desired, and above 50ms starts to matter, and above 100ms is very noticable. If you are on a bad connection (or have a bad connection to the server), 20-30ms added latency starts to matter even if it isn't visually notable.

bigboxes - Saturday, March 27, 2010 - link

Anyone else getting that message? I finally had to turn off the 'attack site' option in FF. It wasn't doing this last night. It's not doing it all over AT, just on the GTX 480 article.

GullLars - Saturday, March 27, 2010 - link

Here too, it listed among others googleanalytics.com as a hostile site.

It was probebly because NVidia wasn't happy with the review XD

(just joking ofc)

chrisinedmonton - Saturday, March 27, 2010 - link

Great article. Here's a small suggestion; temperature graphs should be normalised to room temperature rather than to 0C.

GourdFreeMan - Sunday, March 28, 2010 - link

I agree. Temperature graphs should either be normalized to the ambient environment or absolute zero; any other choice of basis is completely arbitrary.

Ahmed0 - Saturday, March 27, 2010 - link

Uh oh, my browser just got a heartwarming warning when I clicked on this article, the warning said that it might infect my computer badly and that I should definitely run home faster than my legs can carry.

So, whats up with that?

Lifted - Saturday, March 27, 2010 - link

I just got that too. Had to disable the feature in Firefox.

NJoy - Saturday, March 27, 2010 - link

well, Charlie was semi-accurate, but quite right =))

What a hot chick... I mean, literately hot. Way too hot