NVIDIA’s GeForce GTX 480 and GTX 470: 6 Months Late, Was It Worth the Wait?

by Ryan Smith on March 26, 2010 7:00 PM EST. Posted in GPUs

The GF100 Recap

NVIDIA first unveiled its GF100 (then called Fermi) architecture last September. If you've read our Fermi and GF100 architecture articles, you can skip this part. Otherwise, here's a quick refresher on how this clock ticks.

To start with the basics: NVIDIA’s GeForce GTX 480 and 470 are based on the GF100 chip, the gaming version of what was originally introduced last September as Fermi. GF100 goes into GeForce cards and Fermi goes into Tesla cards, but fundamentally the two chips are the same.

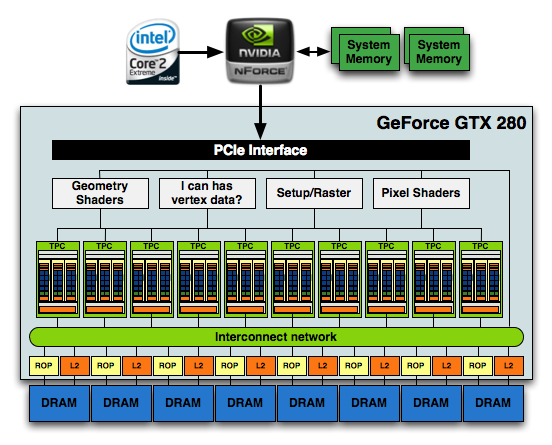

At a high level, GF100 just looks like a bigger GT200; however, a lot has changed. It starts at the front end. Prior to GF100, NVIDIA had a large unified front end that handled all thread scheduling for the chip, setup, rasterization and z-culling. Here’s the diagram we made for GT200 showing that:

NVIDIA's GT200

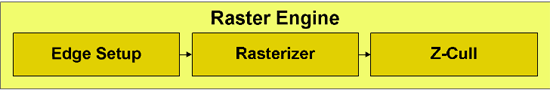

The grey boxes up top were shared by all of the compute clusters in the chip below. In GF100, the majority of that unified front end is chopped up and moved further down the pipeline. With the exception of the thread scheduling engine, everything else decreases in size, increases in quantity and moves down closer to the execution hardware. It makes sense. The larger these chips get, the harder it is to have big unified blocks feeding everything.

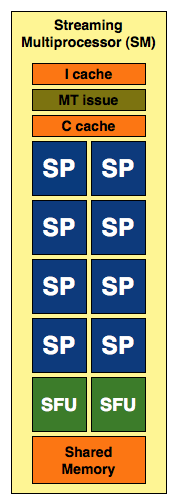

In the old days NVIDIA took a bunch of cores, gave them a cache, some shared memory and a couple of special function units and called the whole construct a Streaming Multiprocessor (SM). The GT200 took three of these SMs, added texture units and an L1 texture cache (as well as some scheduling hardware) and called it a Texture/Processor Cluster. The old GeForce GTX 280 had 10 of these TPCs and that’s what made up the execution engine of the GPU.

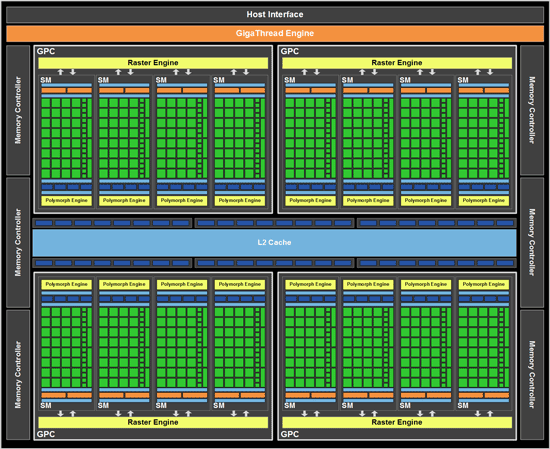

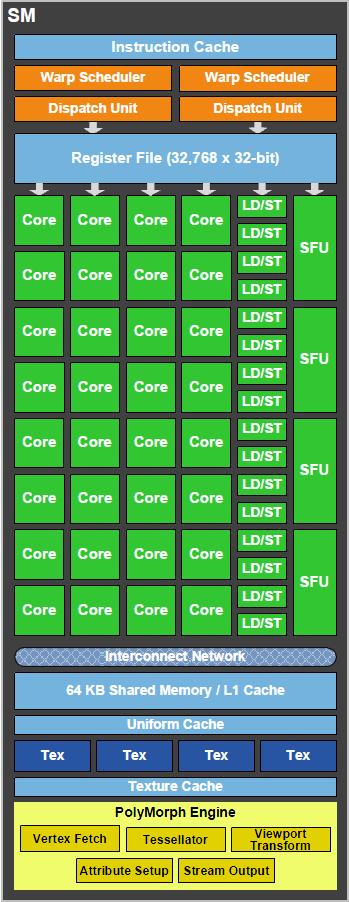

NVIDIA's GF100


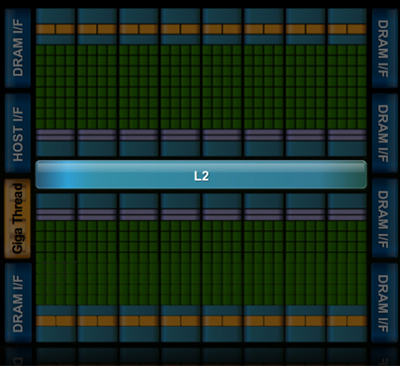

With GF100, the TPC is gone. It’s now a Graphics Processing Cluster (GPC) and is made up of much larger SMs. Each SM now has 32 cores and there are four SMs per GPC. Each GPC gets its own raster engine, instead of the entire chip sharing a larger front end. There are four GPCs on a GF100 (however no GF100 shipping today has all SMs enabled in order to improve yield).

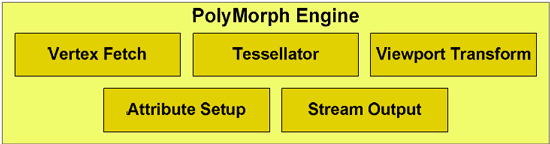

Each SM also has what NVIDIA is calling a PolyMorph engine. This engine is responsible for all geometry execution and hardware tessellation, something NVIDIA expects to be well used in DX11 and future games. Between NV30 (GeForce FX 5800) and GT200 (GeForce GTX 280), the geometry performance of NVIDIA’s hardware only increased roughly 3x. Meanwhile, the shader performance of their cards increased by over 150x. GF100 has 8x the geometry performance of GT200, and NVIDIA tells us this is something they have measured in their labs. This is where NVIDIA hopes to have the advantage over AMD, assuming game developers do scale up geometry and tessellation use as much as NVIDIA is counting on.

NVIDIA also clocks the chip much differently than before. In the GT200 days we had a core clock, a shader clock and a memory clock. The core clock is almost completely out of the picture now. Only the ROPs and L2 cache operate on a separate clock domain. Everything else runs at a derivative of the shader clock. The execution hardware runs at the full shader clock speed, while the texture units, PolyMorph and Raster engines all run at 1/2 shader clock speed.
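As a rough illustration of the clocking scheme just described, the sketch below derives the dependent clocks from a single shader clock. The 1400 MHz input is a placeholder chosen for round numbers, not a specification quoted in this article, and the function name is ours. The ROPs and L2 cache are left out because they sit in their own clock domain.

```python
def derived_clocks(shader_mhz):
    """Clocks that follow the shader clock on GF100, per the description above."""
    return {
        "cores": shader_mhz,                  # execution hardware: full shader clock
        "texture_units": shader_mhz / 2,      # half shader clock
        "polymorph_engines": shader_mhz / 2,  # half shader clock
        "raster_engines": shader_mhz / 2,     # half shader clock
    }


clocks = derived_clocks(1400.0)  # hypothetical shader clock in MHz
for unit, mhz in clocks.items():
    print(f"{unit}: {mhz:.0f} MHz")
```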

Cores and Memory

While we’re looking at GF100 today through gaming colored glasses, NVIDIA is also trying to build an army of GPU compute cards. In serving that master, the GF100’s architecture also differs tremendously from its predecessors.

All of the processing done at the core level is now to IEEE spec. That’s IEEE-754 2008 for floating point math (same as RV870/5870) and full 32-bit for integers. In the past, 32-bit integer multiplies had to be emulated because the hardware could only do 24-bit integer muls. That silliness is now gone. Fused Multiply Add is also included. The goal was to avoid doing any cheesy tricks to implement math. Everything should be industry standards compliant and give you the results that you’d expect. Double precision floating point (FP64) performance is improved tremendously. Peak 64-bit FP execution rate is now 1/2 of the 32-bit FP rate; it used to be 1/8 (AMD's is 1/5).
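To give a feel for what emulating a 32-bit multiply on a 24-bit multiplier involves, here is a minimal sketch of one textbook decomposition: split each operand into 16-bit halves so every partial product fits the narrow multiplier. The helper names are ours, and this is not necessarily the instruction sequence NVIDIA's compiler emitted on G80/GT200.

```python
MASK24 = 0xFFFFFF
MASK32 = 0xFFFFFFFF


def mul24(a, b):
    """Model of a 24-bit multiplier: uses the low 24 bits of each operand
    and returns the low 32 bits of the product."""
    return ((a & MASK24) * (b & MASK24)) & MASK32


def mul32_emulated(a, b):
    """Low 32 bits of a * b, built from three 16x16 partial products."""
    a_lo, a_hi = a & 0xFFFF, (a >> 16) & 0xFFFF
    b_lo, b_hi = b & 0xFFFF, (b >> 16) & 0xFFFF
    low = mul24(a_lo, b_lo)                                   # contributes at bit 0
    cross = (mul24(a_hi, b_lo) + mul24(a_lo, b_hi)) & MASK32  # contributes at bit 16
    # The a_hi * b_hi term only affects bits 32 and up, so it is dropped.
    return (low + (cross << 16)) & MASK32


print(hex(mul32_emulated(0x12345678, 0x9ABCDEF0)))
```

Even in this simplified form, one multiply turns into three multiplies plus shifts and adds, which is the overhead native 32-bit integer hardware removes.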

GT200 SM

In addition to the cores, each SM has a Special Function Unit (SFU) used for transcendental math and interpolation. In GT200 this SFU had two pipelines; in GF100 it has four. While NVIDIA increased general math horsepower by 4x per SM, SFU resources only doubled. The infamous missing MUL has been pulled out of the SFU, so we shouldn’t have to quote peak single and dual-issue arithmetic rates any longer for NVIDIA GPUs.

GF100 SM

NVIDIA’s GT200 had a 16KB shared memory in each SM. This didn’t function as a cache; it was software managed memory. GF100 increases the size to 64KB, and part of it can now operate as a real L1 cache. In order to maintain compatibility with CUDA applications written for G80/GT200, the 64KB can be configured as 16/48 or 48/16 shared memory/L1 cache. GT200 did have a 12KB L1 texture cache, but that was mostly useless for CUDA applications. That cache still remains intact for graphics operations. All four GPCs share a large 768KB L2 cache.

Each SM has four texture units, each capable of 1 texture address and 4 texture sample ops. We have more texture sampling units but fewer texture addressing units in GF100 vs. GT200. All texture hardware runs at 1/2 shader clock and not core clock.

| NVIDIA Architecture Comparison | G80 | G92 | GT200 | GF100 | GF100 Full* |
|---|---|---|---|---|---|
| Streaming Processors per TPC/GPC | 16 | 16 | 24 | 128 | 128 |
| Texture Address Units per TPC/GPC | 4 | 8 | 8 | 16 | 16 |
| Texture Filtering Units per TPC/GPC | 8 | 8 | 8 | 64 | 64 |
| Total SPs | 128 | 128 | 240 | 480 | 512 |
| Total Texture Address Units | 32 | 64 | 80 | 60 | 64 |
| Total Texture Filtering Units | 64 | 64 | 80 | 240 | 256 |
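The GF100 totals in the table follow directly from the per-SM figures given in the text (32 cores and four texture units per SM, each texture unit doing 1 address and 4 sample ops). The short sketch below reproduces them; the 15-SM configuration is inferred from 480 total SPs divided by 32 cores per SM, and the function name is ours.

```python
CORES_PER_SM = 32
TEX_UNITS_PER_SM = 4       # each handles 1 texture address op
SAMPLES_PER_TEX_UNIT = 4   # and 4 texture sample (filtering) ops


def gf100_totals(active_sms):
    """Chip-wide unit counts for a GF100 with the given number of enabled SMs."""
    tex_units = active_sms * TEX_UNITS_PER_SM
    return {
        "stream_processors": active_sms * CORES_PER_SM,
        "texture_address_units": tex_units,
        "texture_filtering_units": tex_units * SAMPLES_PER_TEX_UNIT,
    }


print("15 SMs:", gf100_totals(15))  # shipping configuration implied by 480 SPs
print("16 SMs:", gf100_totals(16))  # full chip
```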

Last but not least, this brings us to the ROPs. The ROPs have been reorganized: there are now 48 of them in 6 partitions of 8, with a 64-bit memory channel serving each partition. The ROPs now share the L2 cache with the rest of GF100, while under GT200 they had their own L2 cache. Each ROP can do 1 regular 32-bit pixel per clock, 1 FP16 pixel over 2 clocks, or 1 FP32 pixel over 4 clocks, giving the GF100 the ability to retire 48 regular pixels per clock. The ROPs are clocked together with the L2 cache.
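The ROP numbers above can be worked through in a few lines. This sketch covers a fully enabled GF100 and uses only the figures from the paragraph; the variable and function names are ours.

```python
PARTITIONS = 6
ROPS_PER_PARTITION = 8
CHANNEL_BITS = 64  # one memory channel per ROP partition

# Clocks a single ROP needs to retire one pixel of each format.
CLOCKS_PER_PIXEL = {"32-bit": 1, "FP16": 2, "FP32": 4}

rops = PARTITIONS * ROPS_PER_PARTITION
bus_width_bits = PARTITIONS * CHANNEL_BITS


def pixels_per_clock(fmt):
    """Peak pixels retired per ROP clock across the whole chip."""
    return rops // CLOCKS_PER_PIXEL[fmt]


print("ROPs:", rops)
print("Aggregate memory bus width:", bus_width_bits, "bits")
for fmt in CLOCKS_PER_PIXEL:
    print(fmt, "pixels per clock:", pixels_per_clock(fmt))
```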

Threads and Scheduling

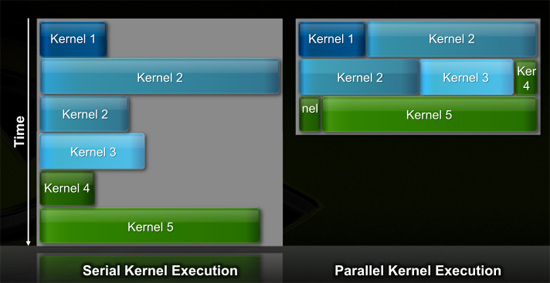

While NVIDIA’s G80 didn’t start out as a compute chip, GF100/Fermi were clearly built with general purpose compute in mind from the start. Previous architectures required that all SMs in the chip worked on the same kernel (function/program/loop) at the same time. If the kernel wasn’t wide enough to occupy all execution hardware, that hardware went idle, and efficiency dropped as a result. Remember these chips are only powerful when they’re operating near 100% utilization.

In this generation the scheduler can execute threads from multiple kernels in parallel, which allowed NVIDIA to scale the number of cores in the chip without decreasing efficiency.
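A toy model makes the efficiency argument concrete. It assumes a 16-SM chip, kernels that each take one equal-length time step, and a "width" equal to the number of SMs a kernel can fill. All of these are simplifications of ours, not a description of NVIDIA's actual scheduler.

```python
TOTAL_SMS = 16


def utilization_one_kernel_at_a_time(kernel_widths):
    """Each kernel runs alone for one step; SMs beyond its width sit idle."""
    busy = sum(min(width, TOTAL_SMS) for width in kernel_widths)
    return busy / (TOTAL_SMS * len(kernel_widths))


def utilization_concurrent(kernel_widths):
    """All kernels share the chip in a single step, limited by the SM count."""
    return min(sum(kernel_widths), TOTAL_SMS) / TOTAL_SMS


widths = [4, 4, 8]  # three narrow kernels, hypothetical sizes
print("one kernel at a time:", utilization_one_kernel_at_a_time(widths))
print("concurrent kernels:  ", utilization_concurrent(widths))
```

With these made-up widths, running the kernels one after another keeps only a third of the SMs busy on average, while running them side by side fills the chip.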

GT200 (left) vs. GF100 (right)

With a more compute leaning focus, GF100 also improves switch time between GPU and CUDA mode by a factor of 10x. It’s now fast enough to switch back and forth between modes multiple times within a single frame, which should allow for more elaborate GPU accelerated physics.

NVIDIA’s GT200 was a thread monster. The chip supported over 30,000 threads in flight. With GF100, NVIDIA scaled that number down to roughly 24K as it found that the chips weren’t thread bound but rather memory bound. In order to accommodate the larger shared memory per SM, max thread count went down.

| | GF100 | GT200 | G80 |
|---|---|---|---|
| Max Threads in Flight | 24576 | 30720 | 12288 |
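Dividing those totals by the number of SMs gives the per-SM thread limit for each chip. In the sketch below, the GF100 and GT200 SM counts come from this article (4 GPCs of 4 SMs, and 10 TPCs of 3 SMs); the G80 count of 16 SMs is our assumption and is not stated here.

```python
# name: (max threads in flight from the table, number of SMs)
CHIPS = {
    "GF100": (24576, 4 * 4),   # 4 GPCs x 4 SMs (full chip)
    "GT200": (30720, 10 * 3),  # 10 TPCs x 3 SMs
    "G80": (12288, 16),        # assumed SM count, not given in the article
}


def threads_per_sm(name):
    """Maximum resident threads per SM implied by the chip-wide total."""
    total_threads, sm_count = CHIPS[name]
    return total_threads // sm_count


for name in CHIPS:
    print(name, threads_per_sm(name))
```

Under these assumptions, the chip-wide total fell from GT200 to GF100 because GF100 has fewer, larger SMs, even though each SM holds more threads.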

NVIDIA groups 32 threads into a unit called a warp (taken from the weaving term "warp," which refers to a group of parallel threads). In GT200 and G80, half of a warp was issued to an SM every clock cycle. In other words, it takes two clocks to issue a full 32 threads to a single SM.

In previous architectures, the SM dispatch logic was closely coupled to the execution hardware. If you sent threads to the SFU, the entire SM couldn't issue new instructions until those instructions were done executing. If the only execution units in use were in your SFUs, the vast majority of your SM in GT200/G80 went unused. That's terrible for efficiency.

Fermi fixes this. There are two independent dispatch units at the front end of each SM in Fermi. These units are completely decoupled from the rest of the SM. Each dispatch unit can select and issue half of a warp every clock cycle. The threads can be from different warps in order to optimize the chance of finding independent operations.

There's a full crossbar between the dispatch units and the execution hardware in the SM. Each unit can dispatch threads to any group of units within the SM (with some limitations).

The inflexibility of NVIDIA's threading architecture is that every thread in the warp must be executing the same instruction at the same time. If they are, then you get full utilization of your resources. If they aren't, then some units go idle.

A single SM can execute:

| GF100 | FP32 | FP64 | INT | SFU | LD/ST |
|---|---|---|---|---|---|
| Ops per clock | 32 | 16 | 32 | 4 | 16 |

If you're executing FP64 instructions the entire SM can only run at 16 ops per clock. You can't dual issue FP64 and SFU operations.
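One way to read the ops-per-clock table is to ask how many clocks of a unit's peak throughput a full 32-thread warp consumes. The sketch below does only that division; it ignores instruction latency and the dispatch restrictions mentioned above, so treat it as a back-of-the-envelope figure rather than a timing model.

```python
WARP_SIZE = 32

# Peak per-SM rates from the table above.
OPS_PER_CLOCK = {"FP32": 32, "FP64": 16, "INT": 32, "SFU": 4, "LD/ST": 16}


def clocks_per_warp(unit):
    """Clocks of peak throughput needed to push one warp through a unit type."""
    return WARP_SIZE // OPS_PER_CLOCK[unit]


for unit in OPS_PER_CLOCK:
    print(f"{unit}: {clocks_per_warp(unit)} clock(s) per warp")
```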

The good news is that the SFU doesn't tie up the entire SM anymore. One dispatch unit can send 16 threads to the array of cores, while another can send 16 threads to the SFU. After two clocks, the dispatchers are free to send another pair of half-warps out again. As I mentioned before, in GT200/G80 the entire SM was tied up for a full 8 cycles after an SFU issue.

The flexibility is nice, or rather, the inflexibility of GT200/G80 was horrible for efficiency and Fermi fixes that.

196 Comments


Ryan Smith - Saturday, March 27, 2010 - link

Yes, we did. We were running really close to the limits of our 850W Corsair unit. We measured the 480SLI at 900W, which after some power efficiency math comes out to around 750-800W actual load. At that load there's less extra space than we'd like to have.

Just to add to that, we had originally been looking for a larger PSU after talking about PSU requirements with an NVIDIA partner, as the timing of this required we secure a new PSU before the cards arrived. So Antec had already shipped us their 1200W PSU before we could test the 850W, and we're glad since we would have been cutting it so close.

bigboxes - Sunday, March 28, 2010 - link

Appreciate the reply.

GullLars - Saturday, March 27, 2010 - link

OK, so 480 generally beats 5870, and 470 generally beats 5850, but at higher prices, temperatures, wattage, and noise levels. What about 5970?

As far as I can tell, the 5970 beat or came even with 480 in all tests, draws less power, runs cooler, and makes less noise. The price isn't that much more either.

It seems more fair to me to compare 480 with 5970 as both are the fastest single-card (as in PCIe slot) solutions and are close in price and wattage.

I would also like to see what framerate FPS games come in at with gamer settings (1680x1050 and 1920x1200 resolutions), and if average is higher than game cutoff or tickrate, what is the minimum FPS, and how much can you bump eyecandy before avg drops below cutoff/tickrate or minimum drops below acceptable (30).

The reason for gamers sacrificing visuals to get high FPS can be summarized as game flow and latency. If FPS is below game tickrate, you get latency. For many games the tickrate is around 100 (100 updates in the game engine per second). At 100 FPS you have 10 ms latency between frames, if it drops to 50 you have 20 ms, and at 25 you have 40 ms. Lower than 25-30 FPS will obviously also result in virtually unplayable performance since aiming will become hard, so added latency from FPS below this becomes moot. If you are playing multiplayer games, this is added to the network latency. As most gamers know, latency below 30 ms is generally desired, above 50 ms starts to matter, and above 100 ms is very noticeable. If you are on a bad connection (or have a bad connection to the server), 20-30 ms added latency starts to matter even if it isn't visually notable.

bigboxes - Saturday, March 27, 2010 - link

Anyone else getting that message? I finally had to turn off the 'attack site' option in FF. It wasn't doing this last night. It's not doing it all over AT, just on the GTX 480 article.

GullLars - Saturday, March 27, 2010 - link

Here too, it listed among others googleanalytics.com as a hostile site.

It was probably because NVidia wasn't happy with the review XD

(just joking ofc)

chrisinedmonton - Saturday, March 27, 2010 - link

Great article. Here's a small suggestion; temperature graphs should be normalised to room temperature rather than to 0C.

GourdFreeMan - Sunday, March 28, 2010 - link

I agree. Temperature graphs should either be normalized to the ambient environment or absolute zero; any other choice of basis is completely arbitrary.

Ahmed0 - Saturday, March 27, 2010 - link

Uh oh, my browser just got a heartwarming warning when I clicked on this article, the warning said that it might infect my computer badly and that I should definitely run home faster than my legs can carry.

So, what's up with that?

Lifted - Saturday, March 27, 2010 - link

I just got that too. Had to disable the feature in Firefox.

NJoy - Saturday, March 27, 2010 - link

well, Charlie was semi-accurate, but quite right =))

What a hot chick... I mean, literally hot. Way too hot