NVIDIA’s GeForce GTX 480 and GTX 470: 6 Months Late, Was It Worth the Wait?

by Ryan Smith on March 26, 2010 7:00 PM EST

Posted in: GPUs

Temperature, Power, & Noise: Hot and Loud, but Not in the Good Way

For all of the gaming and compute performance data we have seen so far, we’ve only seen half of the story. With a 500mm²+ die and a TDP over 200W, there’s a second story to be told about the power, temperature, and noise characteristics of the GTX 400 series.

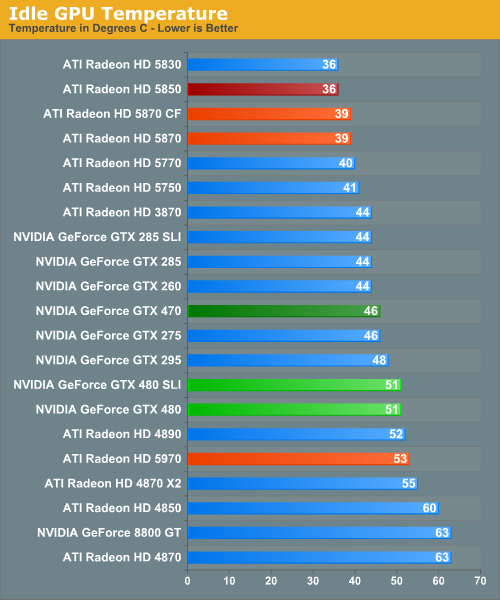

Starting with idle temperatures, we can quickly see some distinct groupings among our cards. The top of the chart is occupied solely by AMD’s Radeon 5000 series, whose small die and low idle power usage let these cards idle at very cool temperatures. It’s not until half-way down the chart that we find our first GTX 400 card, with the 470 at 46C. Truth be told, we were expecting something a bit better out of it, given that its 33W idle is only a few watts over the 5870 and that it has a fairly large cooler to work with. Farther down the chart is the GTX 480, which is in the over-50 club at 51C idle. This is where NVIDIA has to pay the piper on their die size – even the amazingly low idle clockspeeds of 50MHz core, 101MHz shader, and 67.5MHz RAM aren’t enough to drop it any further.

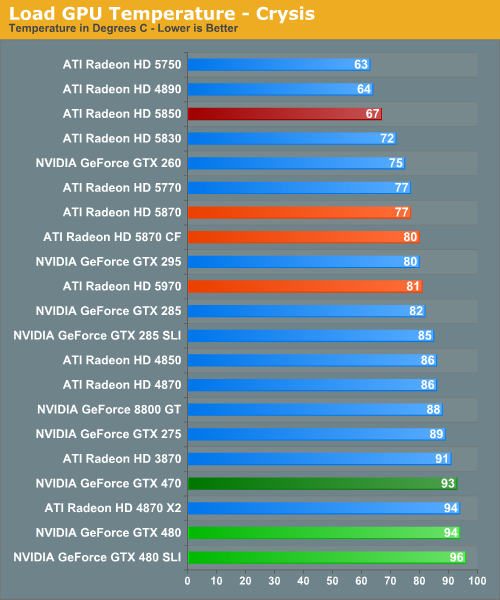

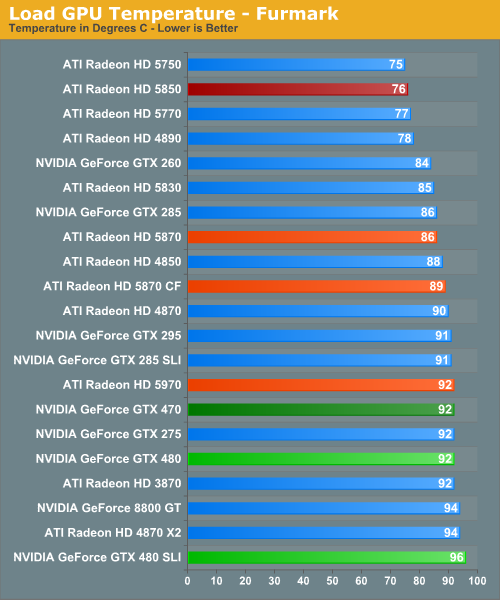

For our load temperatures, we have gone ahead and added Crysis to our temperature testing so that we can see both the worst-case temperatures of FurMark and a more normal gameplay temperature.

At this point the GTX 400 series is in a pretty exclusive club of hot cards – under Crysis the only other single-GPU card above 90C is the 3870, and the GTX 480 SLI is the hottest of any configuration we have tested. Even the dual-GPU cards don’t get quite this hot. It’s also interesting that the spread among card temperatures is much larger here than under FurMark, which only makes the GTX 400 series stand out more.

While we’re on the subject of temperatures, we should note that NVIDIA has changed the fan ramp-up behavior from the GTX 200 series. Rather than reacting immediately, the GTX 400 series fans have a ramp-up delay of a few seconds when responding to high temperatures, meaning you’ll actually see those cards get hotter than our sustained temperatures. This won’t have any significant impact on the card, but if you’re like us your eyes will pop out of your head at least once when you see a GTX 480 hitting 98C on FurMark.

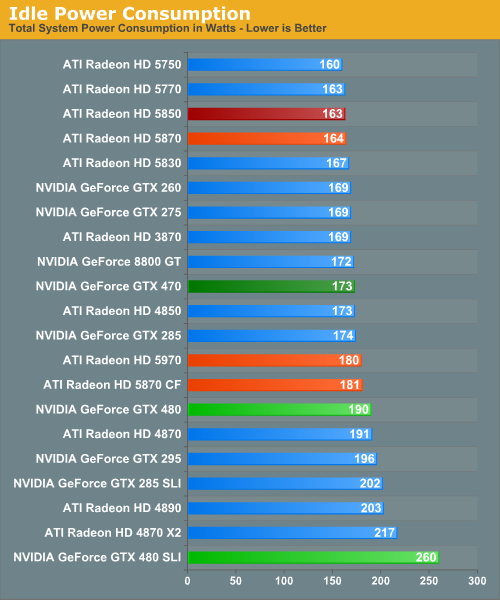

Up next is power consumption. As we’ve already discussed, the GTX 480 and GTX 470 have an idle power consumption of 47W and 33W respectively, putting them out of the running for the least power hungry of the high-end cards. Furthermore the 1200W PSU we switched to for this review has driven up our idle power load a bit, which serves to suppress some of the differences in idle power draw between cards.

With that said, the GTX 400 series either does decently or poorly, depending on your point of view. The GTX 480 is below our poorly-idling Radeon 4000 series cards, but well above the 5000 series. Meanwhile the GTX 470 is in the middle of the pack, sharing space with most of the GTX 200 series. The lone outlier here is the GTX 480 SLI. AMD’s power saving mode for Crossfire cards means that the GTX 480 SLI is all alone at a total power draw of 260W when idle.
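To put the card-level idle figures in perspective, here is a rough back-of-the-envelope sketch in Python of what 47W and 33W add up to over a year. It assumes, purely for illustration, a card that sits at idle 24 hours a day. The helper name `annual_idle_kwh` and the always-on duty cycle are our assumptions rather than anything we measured, and draw at the wall would be somewhat higher once PSU losses are counted.

```python
# Illustrative only: assumes the card idles around the clock, every day.
HOURS_PER_YEAR = 24 * 365


def annual_idle_kwh(idle_watts: float, hours: float = HOURS_PER_YEAR) -> float:
    """Energy in kWh used by a constant load of `idle_watts` over `hours` hours."""
    return idle_watts * hours / 1000.0


# Card-level idle power figures quoted in this review.
idle_watts = {"GTX 480": 47.0, "GTX 470": 33.0}

for card, watts in idle_watts.items():
    print(f"{card}: {annual_idle_kwh(watts):.1f} kWh/year at idle")

# Extra energy the GTX 480 uses over the GTX 470 under the same assumption.
gap_kwh = annual_idle_kwh(idle_watts["GTX 480"] - idle_watts["GTX 470"])
print(f"Difference: {gap_kwh:.1f} kWh/year")
```

A system that spends most of its day powered off or under load would land well away from these numbers, so treat the result as an upper bound on the idle contribution only.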

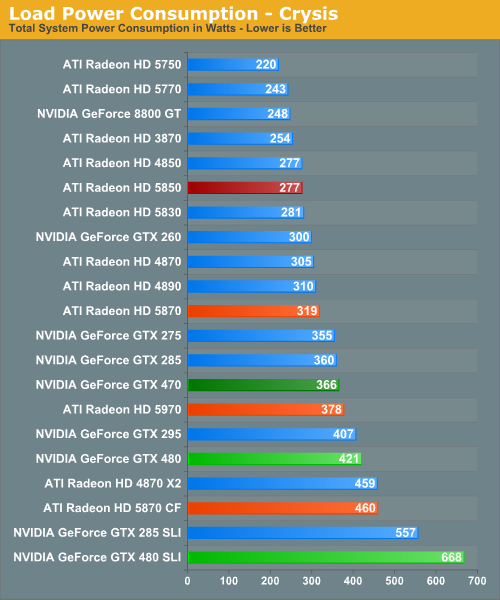

For load power we have Crysis and FurMark, the results of which are quite interesting. Under Crysis not only is the GTX 480 SLI the most demanding card setup as we would expect, but the GTX 480 itself isn’t too far behind. As a single-GPU card it pulls in more power than either the GTX 295 or the Radeon 5970, both of which are dual-GPU cards. Farther up the chart is the GTX 470, which is the second most power-hungry of our single-GPU cards.

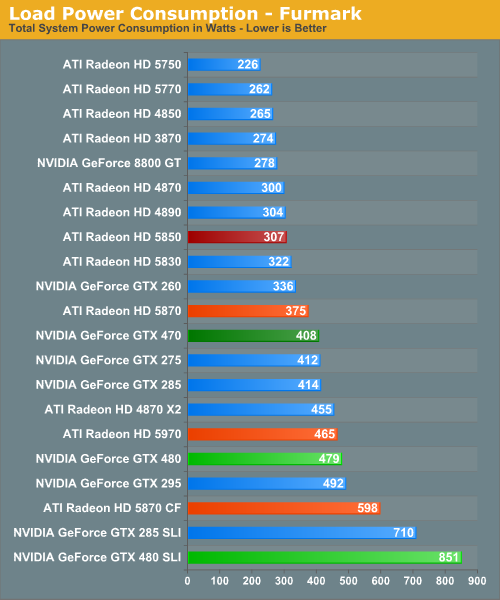

Under FurMark our results change ever so slightly. The GTX 480 manages to get under the GTX 295, while the GTX 470 falls in the middle of the GTX 200 series pack. A special mention goes out to the GTX 480 SLI here, which at 851W under load is the greatest power draw we have ever seen for a pair of GPUs.

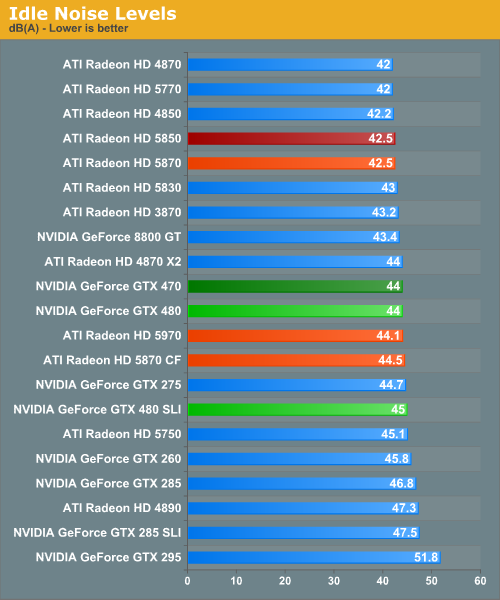

Idle noise doesn’t contain any particular surprises since virtually every card can reduce its fan speed to near-silent levels and still stay cool enough. The GTX 400 series is within a few dB of our noise floor here.

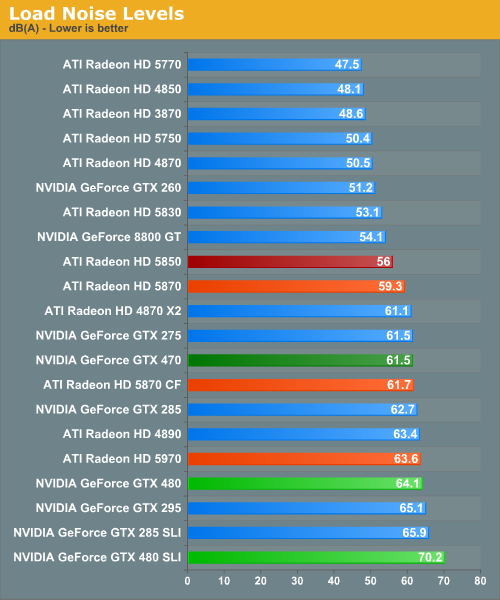

Hot, power hungry things are often loud things, and there are no disappointments here. At 70dB the GTX 480 SLI is the loudest card configuration we have ever tested, while at 64.1dB the GTX 480 is the loudest single-GPU card, beating out even our unreasonably loud 4890. Meanwhile the GTX 470 is in the middle of the pack at 61.5dB, coming in amidst some of our louder single-GPU cards and our dual-GPU cards.
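Because decibels are logarithmic, a gap of a few dB is larger than it looks. The short Python sketch below converts the differences between the readings above into sound power and sound pressure ratios using the standard decibel definitions. The function names are ours, and the sketch says nothing about perceived loudness, which does not scale linearly with either ratio and depends on measurement distance and weighting.

```python
def power_ratio(db_a: float, db_b: float) -> float:
    """Ratio of sound power between two levels given in dB (10 * log10 scale)."""
    return 10 ** ((db_a - db_b) / 10)


def pressure_ratio(db_a: float, db_b: float) -> float:
    """Ratio of sound pressure between two levels given in dB (20 * log10 scale)."""
    return 10 ** ((db_a - db_b) / 20)


# Load noise readings quoted in this review, in dB.
readings = {"GTX 480 SLI": 70.0, "GTX 480": 64.1, "GTX 470": 61.5}

pairs = [("GTX 480", "GTX 470"), ("GTX 480 SLI", "GTX 480")]
for louder, quieter in pairs:
    a, b = readings[louder], readings[quieter]
    print(
        f"{louder} vs {quieter}: {a - b:.1f} dB, "
        f"power x{power_ratio(a, b):.2f}, pressure x{pressure_ratio(a, b):.2f}"
    )
```

As a sanity check on the scale, a 10 dB gap corresponds to ten times the sound power, and a 20 dB gap to ten times the sound pressure.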

Finally, with this data in hand we went to NVIDIA to ask about the longevity of their cards at these temperatures, as seeing the GTX 480 hitting 94C sustained in a game left us worried. In response NVIDIA told us that they have done significant testing of the cards at high temperatures to validate their longevity, and their models predict a lifetime of years even at temperatures approaching 105C (the throttle point for GF100). Furthermore, as they note, they have shipped other cards that run roughly this hot, such as the GTX 295, and those cards have held up just fine.

At this point we don’t have any reason to doubt NVIDIA’s word on this matter, but with that said this wouldn’t discourage us from taking the appropriate precautions. Heat does impact longevity to some degree – we would strongly consider getting a lifetime warranty for the GTX 480 to hedge our bets.

196 Comments


Ryan Smith - Wednesday, March 31, 2010

My master copies are labeled the same, but after looking at the pictures I agree with you; something must have gotten switched. I'll go flip things. Thanks.

Wesgoood - Wednesday, March 31, 2010

Correction, Nvidia retained their crown on AnandTech, even though some resolutions even on here favored ATI (mostly the higher ones). On Tom's Hardware the 5870 pretty much beat the GTX 480 from 1900x1200 to 2560x1600, not every time in 1900 but pretty much every single time in 2560.

That ... is where the crown is, in the best of the best situations, not ... OMG it beat it in 1680 ... THAT HAS TO BE THE BEST!

Plus the power hungry state of this card is just appalling. Nvidia have shown they can't compete with proper technology, rather having to just cram everything they can onto a chip and pray it works right.

Whereas ATI's GPU is designed perfectly to where they have plenty of room to almost double the size of the 5870.

efeman - Wednesday, March 31, 2010

I copied this over from a comment I made on a blog post.

I've been with nVidia for the past decade. My brother built his desktop way back when with the Ti 4200, I bought a prefab with a 5950 ultra, my last budget build had an 8600 GTS in it, and I upgraded to the GTX 275 last year. I am in no way a fanboy, nVidia just has treated me very well. If I had made that last decision a few months later after the price hike, it would've definitely been the HD 4890; almost identical performance for ballpark $100 less.

I recently built a new high-end rig (Core i7 and all), but I waited out on dropping the money on a 5800 series card. I knew nVidia's new cards were on the way, and I was excited and willing to wait it out; I expected a lot out of them.

Now that they're out in the open, I have to say I'm a little shaken. In many cases, the performance of the cards is not where I would've hoped it would be (the general consensus seems to be 5-10% increase in performance over their ATI counterparts; I see that failing in many cases, however). It seems like the effort that nVidia put into the cards gave them lots of potential, but most of it is wasted.

"The future of PC gaming" is right in the title of this post, and that's what these cards have been built for. Nvidia has a strong lead over ATI in compute and tessellation performance now, that's obvious; however, that will only prove useful if and when developers decide to put the extra effort into taking advantage of those technologies. Nvidia is gambling right now; it has already given ATI a half-year lead on the DX11 market, and it's pushing cards that won't be fully utilized until who-knows-when (there's no telling when these technologies will be more widely integrated into the gaming market). What will it do in the meantime? ATI is already on its way to producing its 5000-series refresh; and this time it knows the competition's performance.

I was hoping for the GTX 400s to do the same thing that the GTX 200s did: give nVidia back the high-end performance throne. ATI is not only competitive with its counterparts, but it still has the 5970 for the enthusiast performance crown (don't forget Eyefinity!). I think nVidia made a mistake in putting so much focus into compute and tessellation performance; it would've been smarter to produce cards with similar die sizes (crappy wafer yields, anyone?), faster raw performance with tessellation/compute as a secondary objective, and more competitive pricing. It wouldn't have been a bad option to create a separate chip for the Tesla cards, one that focused on the compute performance while the GeForce cards focused on the rest.

I still have faith. Maybe nVidia will work wonders with the drivers and produce the performance we were waiting for. Maybe it has something awesome brewing deep within its labs. Or maybe my fears will embody themselves, and nVidia is crossing its fingers and hoping for its tessellation/compute performance to give it the market share later on. If so, ATI will provide me with my pair of cards.

That was quite the rant; I wasn't planning on writing that much when I decided to comment on Drew Henry's (nVidia GM) blog post. I suppose I'm passionate about this sort of thing, and I really hope nVidia doesn't lose me after all this time.

Kevinmbaron - Wednesday, March 31, 2010

The fact that this card comes out a year and a half after the GTX 295 makes me sick. Add to that the fact that the GTX 295 actually is faster than the GTX 480 in a few benchmarks and very close in others, and it's like a bad dream for Nvidia. Forget if they can beat AMD, they can't even beat themselves. They could have done a die shrink on the GTX 295, added some more shaders and doubled the memory and had that card out a year ago and it would have crushed anything on the market. Instead they risked it all on a hare-brained new card. I am a GTX 295 owner. Apparently my card is an all-around better card, being that it doesn't lag in some games like the 480 does. I guess I will stick with my old GTX 295 for another year. Maybe then there might be a card worth buying. Even the ATI 5970 doesn't have enough juice to justify a new purchase from me. This should be considered horrible news for Nvidia. They should be ashamed of themselves and the CEO should be asked to step down.

ol1bit - Thursday, April 1, 2010

I just snagged a 5870 gen 2 I think (XFX) from NewEgg.

They have been hard to find in stock, and they are out again.

I think many were waiting to see if the GF100 was a cruel joke or not. I am sorry for Nvidia, but love the competition. I hope Nvidia will survive.

I'll bet they are burning the midnight oil for gen 2 of the GF100.

bala_gamer - Friday, April 2, 2010

Did you guys receive the GTX480 earlier than other reviewers? There were 17 cards tested on 3 drivers and I am assuming tests were done multiple times per game to get an average. Installing, reinstalling drivers, etc. 10.3 Catalyst drivers came out the week of March 18.

Do you guys have multiple computers benchmarking at the same time? I just cannot imagine how the tests were all done within the time frame.

Ryan Smith - Sunday, April 4, 2010

Our cards arrived on Friday the 19th, and in reality we didn't start real benchmarking until Saturday. So all of that was done in roughly a 5 day span. In true AnandTech tradition, there wasn't much sleep to be had that week. ;-)

mrbig1225 - Tuesday, April 6, 2010

I felt compelled to say a few things about nvidia’s Fermi (480/470 GTX). I like to always start out by saying… let’s take the fanboyism out of the equation and look at the facts. I am a huge nvidia fan, however they dropped the ball big time. They are selling people on ONE aspect of DX11 (tessellation) and that’s really the only thing their cards do well, but it’s not an efficient design. What people aren’t looking at is that their tessellation is done by the polymorph engine, which ties directly into the cuda cores, meaning the more cuda cores occupied by shader processing… etc. the less tessellation performance and vice versa = fewer frames per sec. As you noticed, we see tons of tessellation benchmarks that show the gtx 480 is substantially faster at tessellation; I agree when the conditions suit that type of architecture (and there isn’t a lot of other things going on). We know that the gf100 (480/470gtx) is a computing beast, but I don’t believe that will equate to overall gaming performance.

The facts are this gpu is huge (3 billion+ transistors), creates a boat load of heat, sucks up more power than any of the latest dual gpu cards (295gtx, 5970), came to market 6 months late and is only faster than its single gpu competition by 10-15%, and some of us are happy? Oh that’s right, it will be faster in the future when dx11 is relevant… I don’t think so for a few reasons but I’ll name two. If you look at the current crop of dx11 games, the benchmarks and actual dx11 game benchmarks (shaders and tessellation… etc.) show something completely different. I think if tessellation was nvidia’s trump card in games then basically the 5800 series would be beaten substantially in any dx11 title with tessellation turned on… we aren’t seeing that (we are seeing the opposite in some circumstances), and I don’t think we will. I also am fully aware that tessellation is scalable, but that brings me to another point.

I know many of you will say that it is only in extreme tessellation environments that we really start to see nvidia’s card take off. Well, if you agree with that statement then you will see that nvidia has another issue. The 1st is the way they implement tessellation in their cards (not very scalable imo). The 2nd is that video card industry sales are not made up of high end gpus, but the cheaper mainstream ones. Since nvidia’s polymorph engine is tied directly to its shaders… u kinda see where this is going: basically, less powerful cards will be bottlenecked by their lack of shaders for tessellation and vice versa. Developers want to make money, and the way they make money is selling lots of games. For example, crysis was a big game, however it didn’t break any sales records… truth of the matter is most people’s systems couldn’t run crysis. Now you look at valve software, and a lot of their titles sell well because of how friendly they are to mainstream gpus (not the only thing but it does help). The hardware has to be there to support a large # of game sales, meaning that if the majority of parts cannot do extreme levels of tessellation then you will find few games that implement it.

Food for thought… can anyone show me a dx11 title where the gtx480 handily beats the 5870 by the same amount that it does in the heaven benchmark, or even close to that? I think, as a few of you have said, it will come down to which games work better with which architecture.. some will benefit nvidia (Farcry2.. good example), others Ati (Stalker)… I think that is what we are seeing now. IMO

P.S. I think also why people are pissed is because this card was stated to be 60% faster than the 5870. As u can see it’s not!!

houkouonchi - Thursday, April 8, 2010

Why the hell are the screenshots showing off the AA results in a lossy JPEG format instead of PNG like pretty much anything else?

dzmcm - Monday, April 12, 2010

I'm not familiar with Battleforge firsthand, but I understood it uses HD Ambient Occlusion, which is a variation of Screen Space Ambient Occlusion that includes normal maps. And since its inception in Crysis, SSAO has stood for Screen Space AO. So why is it called Self Shadow AO in this article?

Bit-tech refers to Stalker:CoP's SSAO as "Soft Shadow." That I'm willing to dismiss. But I think they're wrong.

Am I falling behind with my jargon, or are you guys not bothering to keep up?