One thing that sets MSI’s G210 card apart from the others is that it comes packed with more than the bare minimum usually found with cards at this price level. In terms of included hardware you don’t get anything besides the card, the manual, the brackets, and the software, but it’s the software that makes the difference here.

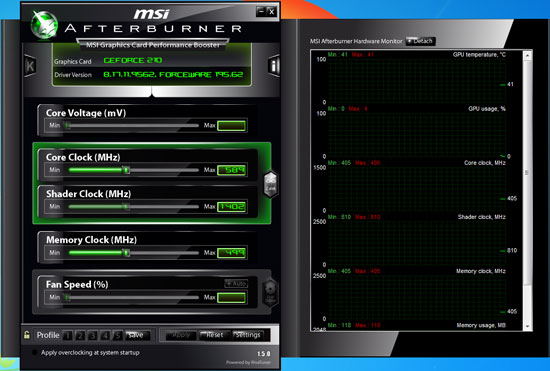

MSI offers several software utilities for all of their cards, and the cornerstone of this is their Afterburner software. In a nutshell Afterburner is the distilled child of the long-favored RivaTuner utility, using RivaTuner’s technology to offer a straightforward video card overclocking and monitoring tool. We’ll be looking at Afterburner and other overclocking utilities in-depth later this month, but we wanted to take a quick look at it today.

As a RivaTuner descendant, Afterburner offers overclocking of the core, shader, and memory clocks, along with the usual suite of clock and temperature monitoring. Furthermore despite being an MSI utility, it works on a generic level with all NVIDIA and AMD GPUs that Afterburner supports. As a trump card specifically for MSI’s cards, it’s also capable of doing voltage tweaking on most of their cards. In the case of the G210 however, this feature is not supported (which would be a bad idea anyhow since it’s passively cooled).

Finally, we took a look at the G210’s usability in an HTPC setting. With the same VP4 decoder and 8-channel LPCM audio capabilities as on the rest of NVIDIA’s 40nm G(T) 200 series, the G210 has the potential to be a solid HTPC card on paper. As with the other low-profile cards we’ve been looking at this month, we ran it through the Cheese Slices HD deinterlacing test, which as we’ve seen can quickly expose any flaws or limitations in a card’s video decoding and post-processing capabilities.

Unfortunately the G210 did extremely poorly here. In our testing the G210 would consistently drop frames when trying to run the Cheese Slices test, leading to it only processing around 2 out of every 3 frames. NVIDIA doesn’t offer any deinterlacing settings beyond enabling/disabling Inverse Telecine support, so the deinterlacing method used here is whatever the card/drivers support, which looks to be an attempt at Vector Adaptive deinterlacing.
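To put the dropped frames in perspective, here is a rough back-of-the-envelope sketch in Python. It assumes a target of 60 deinterlaced frames per second, which is our illustrative assumption and not a figure stated for the test; the `effective_rate` helper is likewise hypothetical.

```python
from fractions import Fraction


def effective_rate(target_fps: Fraction, processed: int, out_of: int) -> Fraction:
    """Frame rate actually shown when only `processed` of every `out_of` frames are handled."""
    return target_fps * Fraction(processed, out_of)


# Assumption: 60 deinterlaced frames per second are expected (not stated in the review).
target = Fraction(60)

# From the review: roughly 2 out of every 3 frames are processed.
shown = effective_rate(target, 2, 3)
dropped_share = 1 - Fraction(2, 3)

print(f"{float(shown):.0f} fps shown, {float(dropped_share):.0%} of frames dropped")
```

Under that assumption, a third of the frames never reach the screen, which is more than enough to be visible as stutter.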

Image comparison: GeForce 210 vs. GeForce GT 220

The quality is reminiscent of VA deinterlacing, however it’s not as clean as what we’ve seen on the GT 220. More to the point, the G210 clearly doesn’t have the processing power to do this, but it’s unable to fall back to a lesser mode. Cheese Slices isn’t a fair test by any means, but it does mean something when a card can’t gracefully fail the test. Once we throw deinterlacing out of the equation however the G210 has no problem playing back progressively encoded MPEG-2 and H.264 material. It looks to only be seriously limited when deinterlacing, which means the G210 is only at a serious disadvantage with interlaced material such as live television.

24 Comments

mindless1 - Thursday, February 18, 2010

Can you just aim for lowest cost possible with integrated video? Of course, but as always some features come at additional cost and in this case it's motherboard features you stand to gain.

The advantage is being able to use whatever motherboard you want to, instead of being limited by the feature sets of those with integrated video, boards that are typically economized low-end offerings.

JarredWalton - Tuesday, February 16, 2010

If you read some of my laptop reviews (http://www.anandtech.com/mobile/showdoc.aspx?i=373...), you'll see that even a slow GPU like a G210M is around 60% faster than GeForce 9400M, which is a huge step up from GMA 4500MHD (same as X4500, except for lower clocks). HD 3200/4200 are also slower than 9400M, so really we're looking at $30 to get full HD video support (outside of 1080i deinterlacing)... plus Flash HD acceleration that works without problems in my experience. Yes, you'll be stuck at 1366x768 and low detail settings in many games, but at least you can run the games... something you can't guarantee on most IGP solutions.

LtGoonRush - Wednesday, February 17, 2010

We're talking about desktop cards though, and as the tests above show this card simply isn't fast enough for HTPC or gaming usage. The point I'm making is that if the IGP doesn't meet your needs, then it's extremely doubtful that a low-end card will. There's a performance cliff below the R5670/GT 240 GDDR5, and it makes more sense for the performance of IGP solutions to grow up into that space than it does to buy an incredibly cut down discrete GPU. Not that I'm expecting competitive gaming performance from an IGP of course, but R5450-level results (in terms of HTPC and overall functionality) are achievable.

Ryan Smith - Wednesday, February 17, 2010

Even though the G210 isn't perfect for HTPC use, it's still better than IGPs. I don't think any IGP can score 100 on the HD HQV v1 test - the G210 (and all other discrete GPUs) can.

shiggz - Wednesday, February 17, 2010

Recently bought a 220 for 55$ to replace an old 3470 for my HTPC. MPCHC uses dxva for decoding but shaders for picture cleanup. "sharpen complex 2" greatly improves video appearance on a 1080p x264 video but was too much for a 3470. My new 220 handles it with about 30-70% gpu usage depending on the scene according to evga precision. The 220 was the perfect sweet spot of performance/power/cost. My HTPC and 42" TV used to pour out the heat in summer leaving TV viewing unpleasant.

Had a 65nm dual/8800gt thus a 200watt loaded htpc and 2x 150watt light bulbs plus 200w for the LCD tv. That was a constant 700Watts. Switch the two bulbs to new lower power 30w=150 cut the HTPC to a 45nm cpu and 40nm gpu making htpc 100w leaving me 160w+200w from tv for 360W a big improvement over 700watt. Next year I'll get an LCD backlight and I should be able to get all of that down to about 150-200w for total setup that 3 years ago was 700w.

shiggz - Thursday, February 18, 2010

Meant to say "LED Backlit LCD." As their power consumption is impressive.

milli - Tuesday, February 16, 2010

Don't you think it's about time you stop using Furmark for power consumption testing? Both nVidia and Ati products throttle when they detect something like Furmark. The problem is that you don't know how much each product throttles so the results become inconsistent.

Measuring the average power consumption during actual gaming seems more realistic to me (not just the peak). 3DMark06 Canyon Flight is a good candidate. It generates higher power usage than most games but still doesn't cause unrealistically high load on the shader domain like Furmark.

7Enigma - Wednesday, February 17, 2010

I completely agree with the post above. The load power consumption chart is meaningless in this context. Feel free to keep it in if you want, but please add in a more "real-world" test, be it a demanding game or something like futuremark which, while not quite "real-world", is definitely going to get the companies to run at their peak (after all they are trying for the highest score in that instance, not trying to limit power consumption).

When I read something like, "On paper the G210 should be slightly more power hungry and slightly warmer than the Radeon HD 5450 we tested last week. In practice it does better than that here, although we’ll note that some of this likely comes down to throttling NVIDIA’s drivers do when they see FurMark and OCCT", I immediately ask myself why did you choose to report this data if you know it's possibly not accurate?

What I really think a site as respected and large as Anandtech needs is proprietary benchmarks that NEVER get released to the general public nor the companies that make these parts. In a similar fashion to your new way of testing server equipment with the custom benchmark, I'd love to see you guys come up with a rigorous video test that ATI/NVIDIA can't "tailor" their products towards by recognizing a program and adjusting settings to gain every possible edge.

Please consider it Anand and company, I'm sure we would all be greatly appreciative!

iamezza - Friday, February 19, 2010

+1

GiantPandaMan - Tuesday, February 16, 2010

So is it pretty much set that Global Foundries will be the next major builder of AMD and nVidia GPU's? Even when AMD owned GF outright they never used them for GPU's, right? What's changed other than TSMC under-delivering on 40nm for a half year?