NVIDIA's Fermi: Architected for Tesla, 3 Billion Transistors in 2010

by Anand Lal Shimpi on September 30, 2009 12:00 AM EST - Posted in GPUs

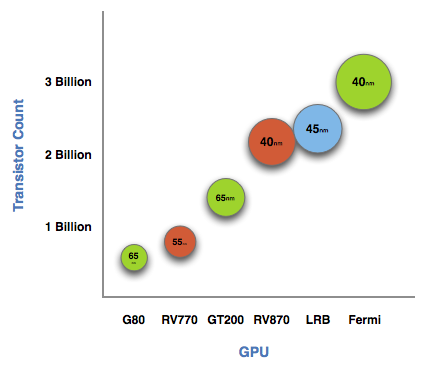

The graph below is one of transistor count, not die size. Inevitably, on the same manufacturing process, a significantly higher transistor count translates into a larger die size. But for the purposes of this article, all I need to show you is a representation of transistor count.

See that big circle on the right? That's Fermi. NVIDIA's next-generation architecture.

NVIDIA astonished us with GT200 tipping the scales at 1.4 billion transistors. Fermi is more than twice that at 3 billion. And literally, that's what Fermi is - more than twice a GT200.

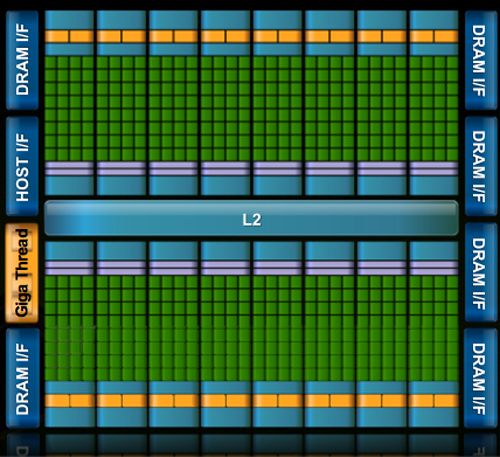

At a high level the specs are simple. Fermi has a 384-bit GDDR5 memory interface and 512 cores. That's more than twice the processing power of GT200 but, just like RV870 (Cypress), it's not twice the memory bandwidth.

The architecture goes much further than that, but NVIDIA believes that AMD has shown its cards (literally) and is very confident that Fermi will be faster. The questions are at what price and when.

The price is a valid concern. Fermi is a 40nm GPU just like RV870 but it has a 40% higher transistor count. Both are built at TSMC, so you can expect that Fermi will cost NVIDIA more to make than ATI's Radeon HD 5870.

The timing is just as valid a concern, because while Fermi currently exists on paper, it's not a product yet. Fermi is late. Clock speeds, configurations and price points have yet to be finalized. NVIDIA just recently got working chips back and it's going to be at least two months before I see the first samples. Widespread availability won't be until at least Q1 2010.

I asked two people at NVIDIA why Fermi is late: NVIDIA's VP of Product Marketing, Ujesh Desai, and NVIDIA's VP of GPU Engineering, Jonah Alben. Ujesh responded: because designing GPUs this big is "fucking hard".

Jonah elaborated, as I will attempt to do here today.

415 Comments


Voo - Saturday, October 3, 2009 - link

You may have overlooked it, but there was an edit by an administrator to one of his posts which did exactly what you want ;)

james jwb - Sunday, October 4, 2009 - link

that's good to hear :)

Hxx - Friday, October 2, 2009 - link

By the looks of it, Nvidia doesn't have much going on for this year. If they lose the DX11 boat against ATI then I will pity their stockholders. About the only thing that makes those green cards attractive is their Physics spiel. Now if ATI would hurry up and do something with that Havoc, then dark days will await Nvidia. One way or the other, it's a win-win for the consumer. I just wish their AMD division would fare just as well against Intel.

Zool - Friday, October 2, 2009 - link

I don't want to be too pessimistic but availability in Q1 2010 is lame late. Windows 7 will come out soon so people will surely want to upgrade to DX11 by Christmas. Also the OEM market, which is actually the most profitable. Dell, HP and others will have Windows 7 systems and they will of course need DX11 cards by Christmas (AMD will hopefully have all models out by that time). Then of course DX11 games that will come out in the future can be optimized for Radeon 5K now, while for GT300 we don't even know the graphics specs and the only working silicon doesn't even resemble a card.

Very bad timing for nvidia this time that will give amd a huge advantage.

Zool - Friday, October 2, 2009 - link

Actually this could happen if you merge a super GPGPU Tesla card and a GPU and want to sell it as one (because designing GPUs this big is "fucking hard"). Average people (maybe 95% of all) don't even know what a megabyte or a bit is, let alone GPGPU. They will want to buy a graphics card, not a CUDA card. If AMD and Microsoft do heavy DX11 PR then even the rest of Nvidia's GPUs won't sell.

PorscheRacer - Friday, October 2, 2009 - link

As with anything hardware, you need the killer software to have consumers want it. DX11 is out now, so we have Windows 7 (which most people are taking a liking to, even gamers) and you have a few upcoming games that people look to be interested in. For GPGPU and all that, well... What do we have as a seriously awesome application that consumers want and feel they need to go out and buy a GPU for? Some do that for F@H and the like, and a few for transcoding video, but what else is there? Until we see that, it's going to be hard to convince consumers to buy that GPU. As it is, most feel IGP is good enough for them...

PorscheRacer - Friday, October 2, 2009 - link

Actually, thinking about this... Maybe if they were able to put a small portion of this into IGP, and include some good software with it, maybe the average consumer could see the benefits easier and quicker and be inclined to go for that step up to a dedicated GPU?

RXR - Friday, October 2, 2009 - link

DocSilicon, you are one funny as hell mental patient to be! I really hope you don't get banned. You just made reading the comments a whole lot more fun. Plus, it's win win. You get to satisfy your need to go completely postal at everyone, and we get a funny sideshow.

- Friday, October 2, 2009 - link

Great words but nothing behind them! Fermi is Nvidia's Prescott, or should I say much like the last Voodoo chip that never really appeared on the market? Too many transistors are not good ...

ioannis - Friday, October 2, 2009 - link

Although the Star Trek TNG reference is ok, 'Nexus' should have been accompanied by a Blade Runner reference instead, Nexus-6 :)