IDF 2009 - World's First Larrabee Demo, More Clarkdale, Gulftown

by Anand Lal Shimpi on September 22, 2009 12:00 AM EST. Posted in Trade Shows

Sean Maloney is getting his speaking practice in and he was back during the afternoon for the second set of keynotes at IDF. This one is a bit more interesting.

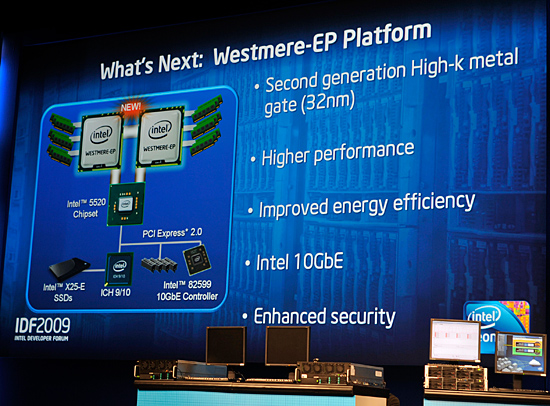

Sean started by tackling Pat Gelsinger's old playground: Servers. Nehalem-EX (8-core Nehalem) was the primary topic of discussion, but he also demonstrated the new 32nm Westmere-EP processors due out next year.

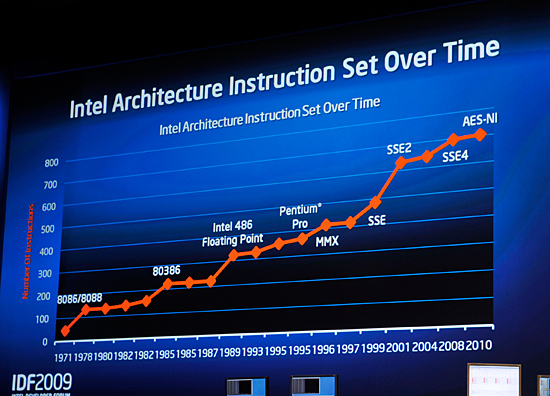

Westmere-EP is the dual-socket workstation/server version of Westmere (aka 32nm Xeon). The feature-set is nearly identical to Westmere on the desktop, so you get full AES acceleration for improved encrypt/decrypt performance. This is particularly useful for enterprise applications that do a lot of encryption/decryption.

The AES-NI instructions get added to x86 with Westmere. The x86 ISA will be over 700 instructions at that point.
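For a rough sense of how software might check for that support: on Linux, CPUs that expose AES-NI list an `aes` flag in `/proc/cpuinfo`. Here's a minimal sketch that parses cpuinfo-style text; the function name `has_aes_ni` and the shortened sample strings are hypothetical and exist only for illustration.

```python
def has_aes_ni(cpuinfo_text: str) -> bool:
    """Return True if any 'flags' line in cpuinfo-style text lists 'aes'."""
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        # Only look at 'flags' lines; compare whole tokens so that a
        # different flag containing the letters 'aes' does not match.
        if sep and key.strip() == "flags" and "aes" in value.split():
            return True
    return False


# Hypothetical, heavily shortened samples (real flag lists are much longer).
SAMPLE_WITH_AES = "processor\t: 0\nflags\t\t: fpu sse2 aes pclmulqdq\n"
SAMPLE_WITHOUT_AES = "processor\t: 0\nflags\t\t: fpu sse2 ssse3\n"

if __name__ == "__main__":
    print("with AES-NI:   ", has_aes_ni(SAMPLE_WITH_AES))
    print("without AES-NI:", has_aes_ni(SAMPLE_WITHOUT_AES))
```

On a real Linux machine you'd pass in the contents of `/proc/cpuinfo` instead of the sample strings.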

The other major change to the Xeon platform is networking support. The Westmere-EP platforms will ship with Intel's 82599 10GbE controller.

Power consumption should be lower on Westmere and you can expect slightly higher clock speeds as well. If Apple sticks to its tradition, you can expect Westmere-EP to be in the next-generation Mac Pro.

What if you built a Core i7 using 1000nm transistors?

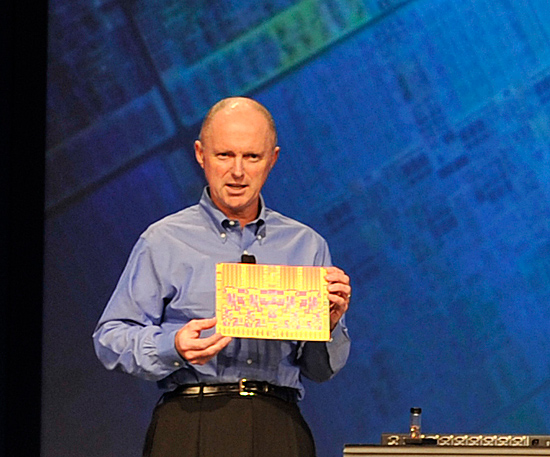

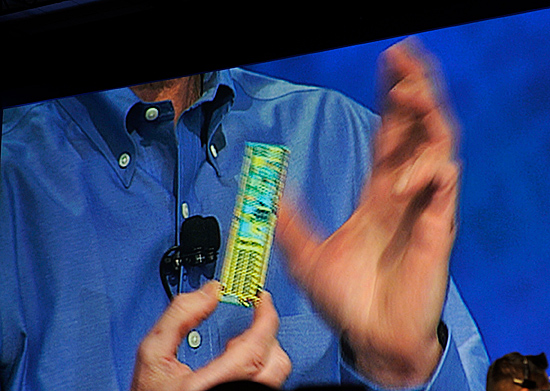

Intel has been on an integration rampage lately. Bloomfield integrated the memory controller and Lynnfield brought the PCIe controller on-die. Sean held up an example of what would happen if Intel had stopped reducing transistor size back in the 386 days.

Here's an image of what the Core i7 die would look like built using ~1000nm transistors instead of 45nm:

That's what a single Core i7 would look like on the 386's manufacturing technology

Assuming it could actually be built, the power consumption would be over 1000W with clock speeds at less than 100MHz.
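To put a rough number on the size, here's a back-of-the-envelope sketch. It assumes die area scales with the square of the feature size, which is an idealization, and it assumes a 45nm Core i7 die of about 263 mm²; that figure is my assumption for illustration and was not quoted in the keynote.

```python
import math


def scaled_die_area_mm2(area_mm2: float, from_nm: float, to_nm: float) -> float:
    """Scale a die area, assuming every linear dimension scales with the node."""
    linear_ratio = to_nm / from_nm
    return area_mm2 * linear_ratio ** 2


# Assumption for illustration only: approximate 45nm Core i7 die area.
ASSUMED_CORE_I7_AREA_MM2 = 263.0

area = scaled_die_area_mm2(ASSUMED_CORE_I7_AREA_MM2, from_nm=45, to_nm=1000)
side_cm = math.sqrt(area) / 10  # edge length in cm if the die were square

if __name__ == "__main__":
    print(f"{area:,.0f} mm^2, roughly {side_cm:.0f} cm on a side if square")
```

Under those assumptions the die grows by a factor of about 490 in area, which lines up with the oversized prop Sean held up on stage.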

Next he held up an Atom processor built on the same process:

And that's what Sean Maloney shaking an Atom built on the 386's manufacturing process would look like

Power consumption is a bit more reasonable at 65W, but that's just for the chip. You could drive a few modern day laptops off of the same power.

Useful comparisons? Not really. Interesting? Sure. Next.

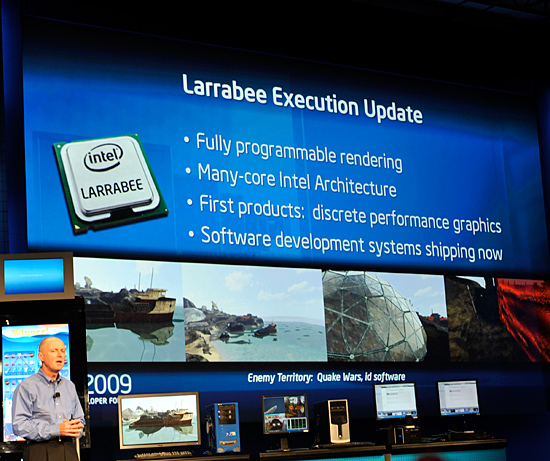

Larrabee Demo

Larrabee is of course at IDF, but its presence is very muted. The chip is scheduled for a release in the middle of next year as a discrete GPU.

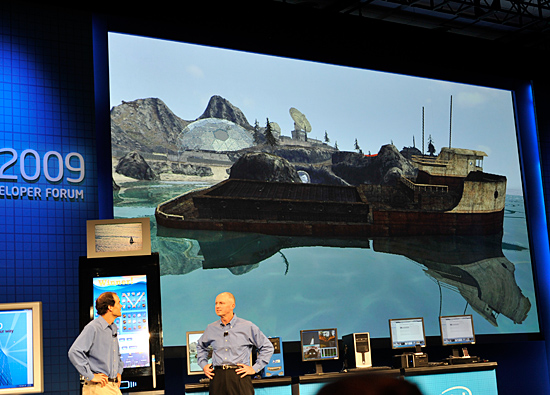

Bill Mark, a Senior Research Scientist at Intel, ran a raytracing demo using content from Enemy Territory Quake Wars on a Gulftown system (32nm 6-core) with Larrabee.

The demo was nothing more than proof of functionality, but Sean Maloney did officially confirm that Larrabee's architecture would eventually be integrated into a desktop CPU.

Larrabee rendered that image above using raytracing, it's not running anywhere near full performance

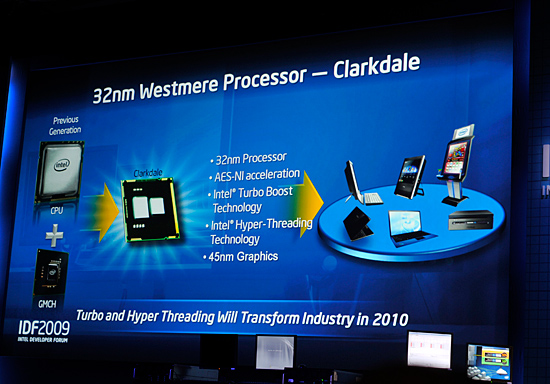

Clarkdale: Dual Core Nehalem

Clarkdale is the desktop dual-core Nehalem due out by the end of this year with widespread availability in Q1 2010.

Clarkdale will be the ideal upgrade for existing dual-core users as it adds Hyper-Threading and aggressive turbo modes. There's also on-package 45nm Intel graphics, which I've heard described as finally being "good enough" graphics from Intel.

Two cores but four threads, that's Clarkdale

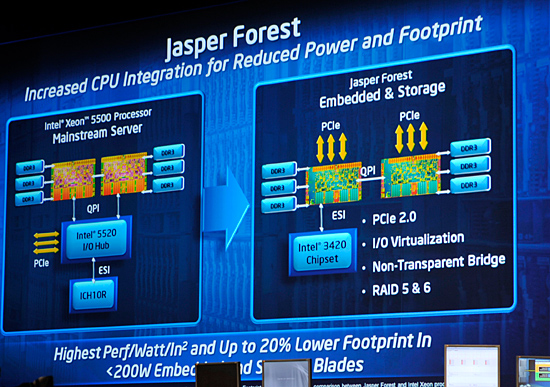

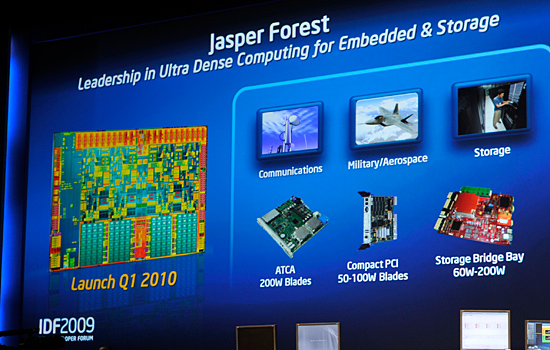

Jasper Forest

Take Nehalem with three DDR3 memory channels, add PCIe 2.0 and RAID acceleration and you've got Jasper Forest. Due out in Q1 2010, this is a very specific implementation of Nehalem for embedded and storage servers.

Nehalem was architected to be very modular, and Jasper Forest is just another example of that. Long term, you can expect Nehalem cores (or a similar derivative) to work alongside Larrabee cores, which is what many believe Haswell will end up looking like.

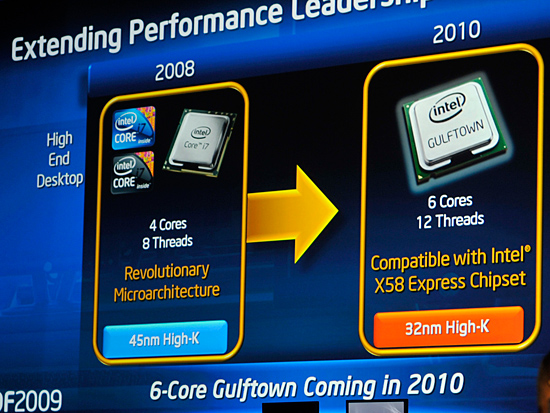

Gulftown: Six Cores for X58

I've mentioned Gulftown a couple of times already, but Intel re-affirmed support for the chip that's due out next year.

Built on the 32nm Westmere architecture, Gulftown brings all of the Westmere goodness and puts six cores on a single die.

Compatibility is going to be the big story with Gulftown: it will work in all existing X58 motherboards with nothing more than a BIOS update.

47 Comments

TinyTeeth - Wednesday, September 23, 2009 - link

lol, how exactly is Intel at a disadvantage to AMD? The only reason AMD still exists is because Intel doesn't want the anti-trust hassle they would have if they dominated the market completely.

TA152H - Wednesday, September 23, 2009 - link

Do you understand the word 'context'?

x86 is a lousy instruction set. For Intel to base a GPU on it, when their competitors are using instruction sets better suited for the task, puts them at a disadvantage.

It's why Atom can't compete in a lot of markets. The x86 instruction set is hideous, and you can't really execute it well directly. The original Pentium actually did, and they say this is based on the Pentium, so they're apparently not de-coupling it.

Where compatibility is king, you'd have no choice, but where it's not very important, you've got to be wondering why Intel went down this road. It's definitely going to put them at a disadvantage against ATI and NVIDIA. I don't know how the compatibility is going to offset that. I guess we'll see.

ggathagan - Wednesday, September 30, 2009 - link

I think they went down this road because it's the one they know.

They're a CPU company, so they're trying to make a GPU with that filter in place.

I agree that it's dumb, but once we moved into the realm of programmable GPU's it was inevitable that they would take this approach.

Intel has a long history of trying to steer all processes toward the CPU (MMX, SSE, SSE2, etc.).

They've always been jealous of their "territory".

Their overall goal remains integrating everything into the CPU.

NVIDIA and ATI are taking the opposite approach and trying to steer functions that have traditionally been CPU-based toward the GPU, to take advantage of that parallel processing.

They're at an advantage because all they have to do is offload processes that are currently tying up CPU time to their GPU's and they can call it a win.

Since they're able to hand-pick the processes that can take the most advantage of the GPU's approach to processing, they have a much easier time of it.

Like I said, Intel *is* dumb for taking this approach, but it's right in line with their philosophy.

If they can produce a somewhat decent level of performance in Larrabee, that ends up being a proof-of-concept that the CPU can replace a GPU.

james jwb - Wednesday, September 23, 2009 - link

Larrabee is a failure? Don't you mean "larrabee is brain-damaged?"

Still, you do make me laugh with your posts... :)

Spathi - Tuesday, September 22, 2009 - link

I love playing cards with people like you.

mczak - Tuesday, September 22, 2009 - link

This picture is pretty interesting, looks exactly the same as lynnfield to me. It would mean lynnfield in fact also has 3 memory controllers on die, but 1 disabled (something I speculated already about, since if you compare lynnfield and bloomfield die shots the memory controller looks exactly the same). Plus an unused QPI link.

vailr - Tuesday, September 22, 2009 - link

Any info on future chipsets ?

And: any chance Intel will be offering a revised 32nm socket775 CPU ?

I'm doubting they will, but thought I'd ask anyway.

Or: even just a revised chipset for either socket775 or for socket1156, that includes onboard USB 3.0 & SATA 3.0 (6 Gb SATA) controller.

And: did they specifically state whether there'd be a future multi-core 32nm socket1156 (non-IGP) CPU usable with the current P55 motherboards?

Also: any info on possible UEFI implementation by Apple, that might then allow using unmodified "generic" PC video cards in (future) Mac Pro's ?

http://www.uefi.org/about/

ilkhan - Tuesday, September 22, 2009 - link

"Larrabee rendered that image above using raytracing, it's not running anywhere near full performance"

Does that mean larrabee isn't running at its peak to render the scene or that the game is running at low FPS?

Spathi - Tuesday, September 22, 2009 - link

They might mean it is running at 1000MHz instead of 2000 and/or 24 cores instead of 48.

Raytracing requires a lot more performance than rasterization. An Intel staff member on a blog years ago said the Larrabee was not intended to be a raytracing GPU, it is just that it can perform pretty well as one. Other GPU's would get about 1-2 fps or something unless they cheat, then they may get 5 fps. 99% of games will still use rasterization for a long while as it is faster.

I am getting one slow or not, bugs or not ;oP. Intel tend to be pretty good at removing most hardware bugs you would ever see though. The rumor is most of the bugs are ironed out now.

jigglywiggly - Tuesday, September 22, 2009 - link

I want 6 cores + 6 threads NOW.